Your curated collection of saved posts and media

For the intelligent man, faith is the only remedy for anguish. The fool can be cured by "reason," "progress," alcohol, and work. -Dávila https://t.co/FRQJViS38m

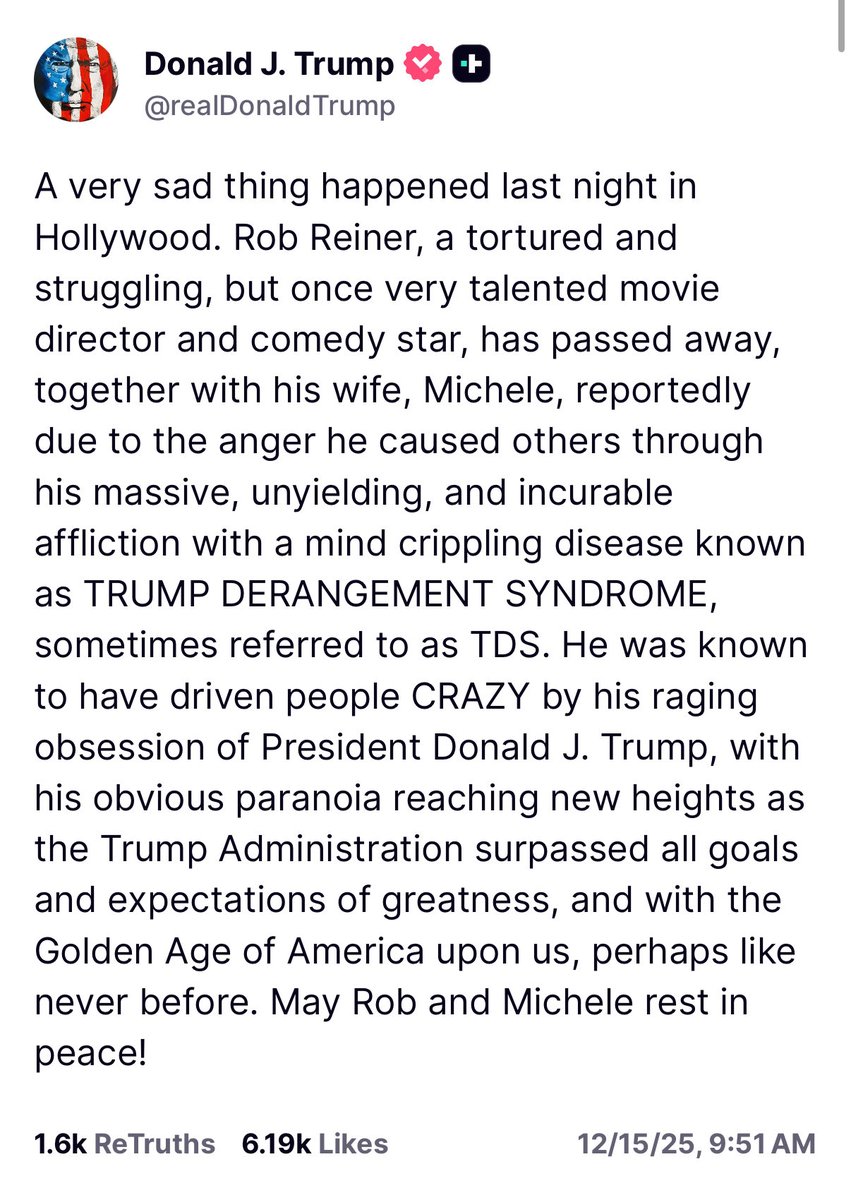

Regardless of how you felt about Rob Reiner, this is inappropriate and disrespectful discourse about a man who was just brutally murdered. I guess my elected GOP colleagues, the VP, and White House staff will just ignore it because they’re afraid? I challenge anyone to defend it. https://t.co/j3dvzRxLQJ

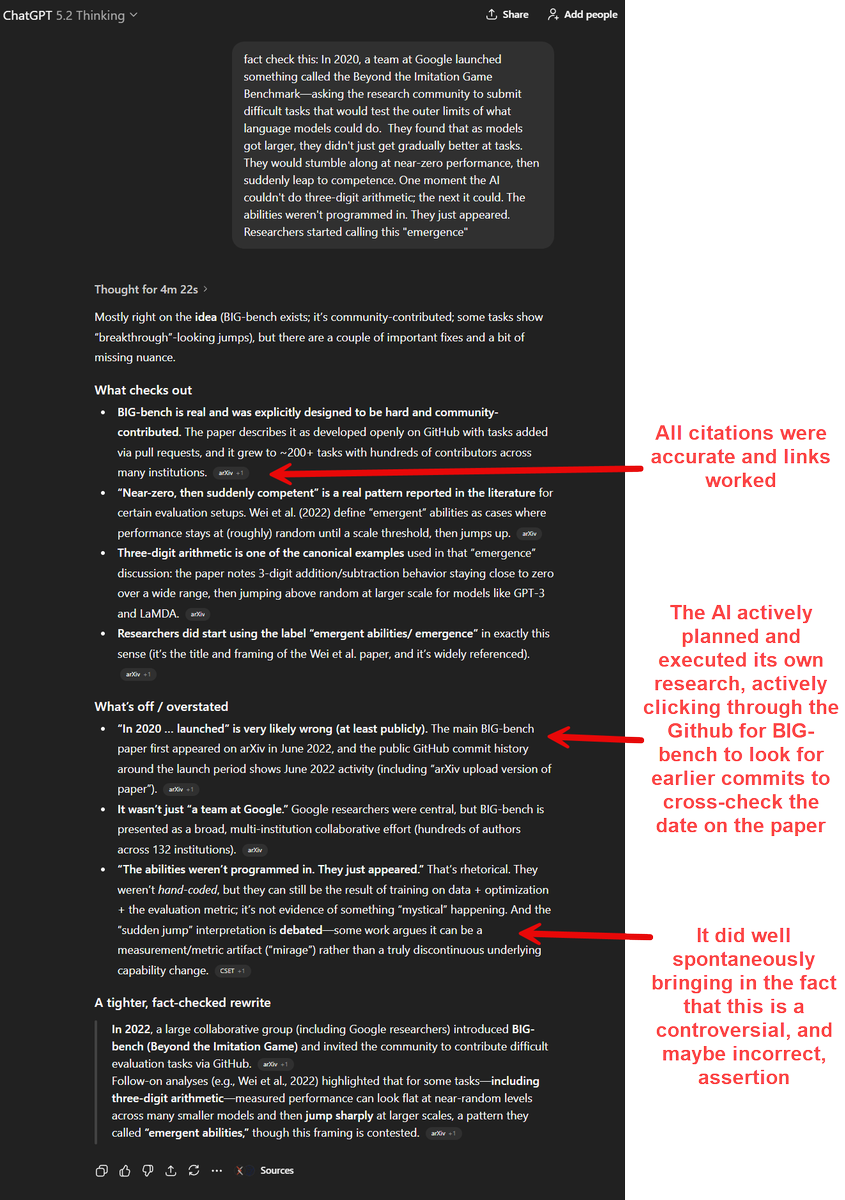

I have found GPT-5.2 Thinking to be a surprisingly deep second-opinion/fact checker. I gave it a dense paragraph with a few correct claims, a couple of errors that required research to find, and some things that needed interpretation. It found and gently corrected all the problems https://t.co/5fNvGFMr2C

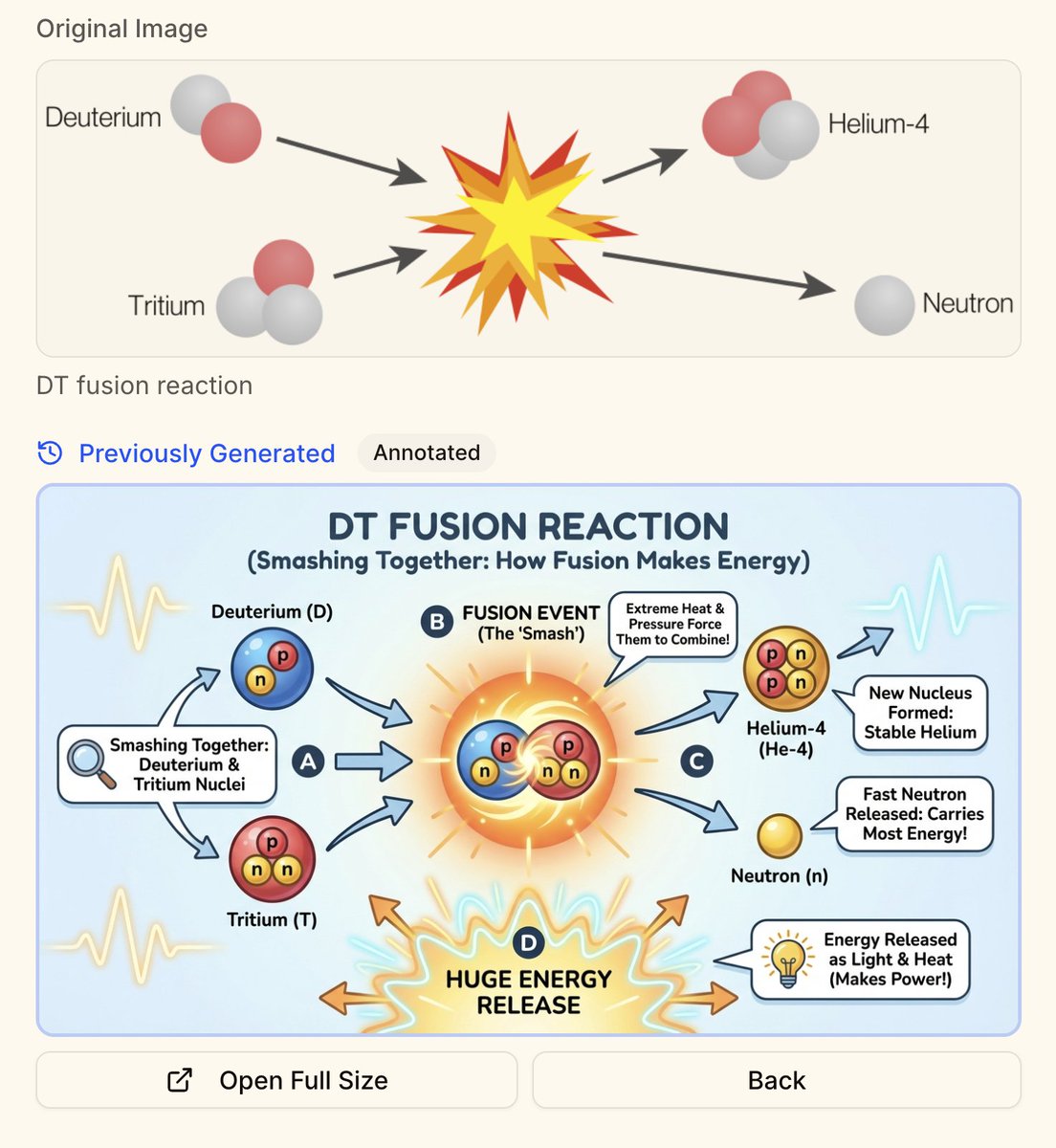

@DeryaTR_ @NotebookLM I guess NotebookLM is using Nano Banana Pro, right? I was playing around with something similar for intro topics in Physics and Biology, and was having a lot of fun using Nano Banana Pro for remixing images. I find this to be a really fun application of these models. https://t.co/mBUTBtlCnD

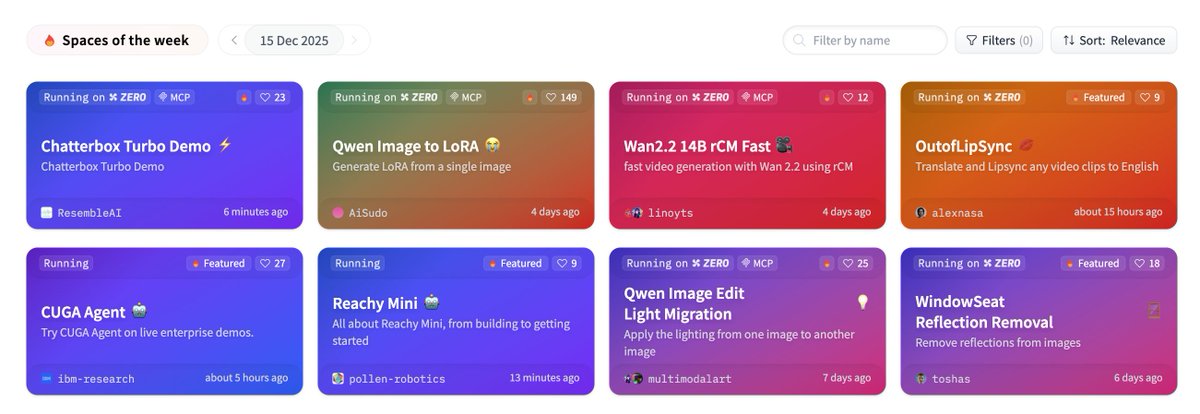

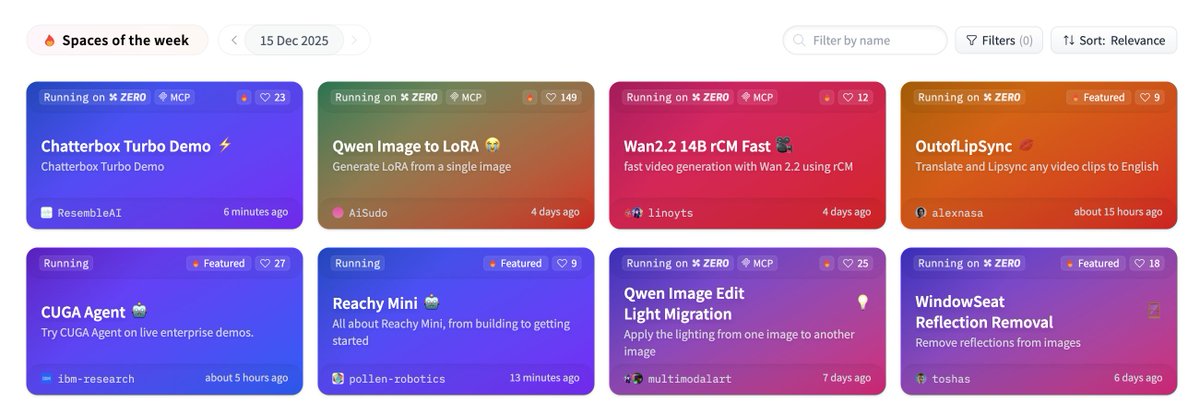

PSA: https://t.co/n1RdT94ij3 is a good link to bookmark 🤗 (and refresh)

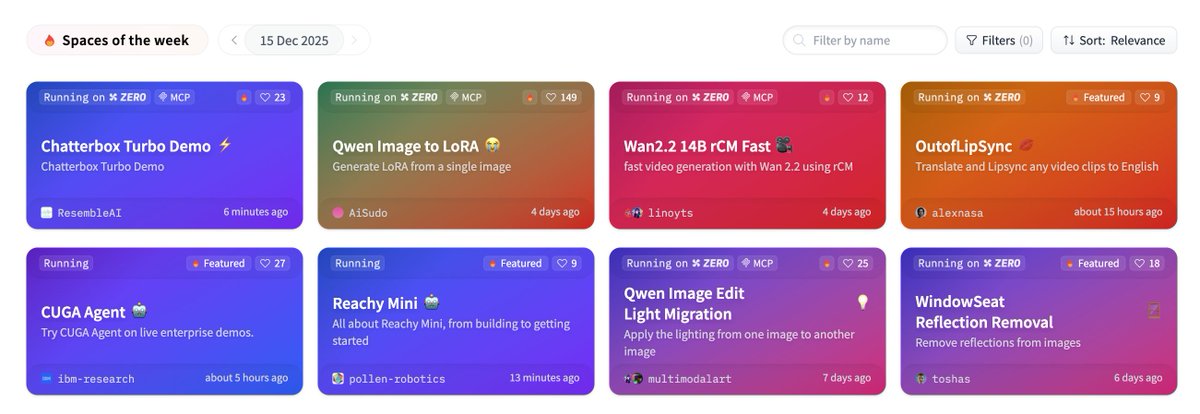

New TTS banger: Chatterbox Turbo 🤯 Zero-shot model that matches any reference voice with native paralinguistic tags, optimized for low-latency voice agents. ⬇️ Demo available on Hugging Face https://t.co/2aoX7zawON

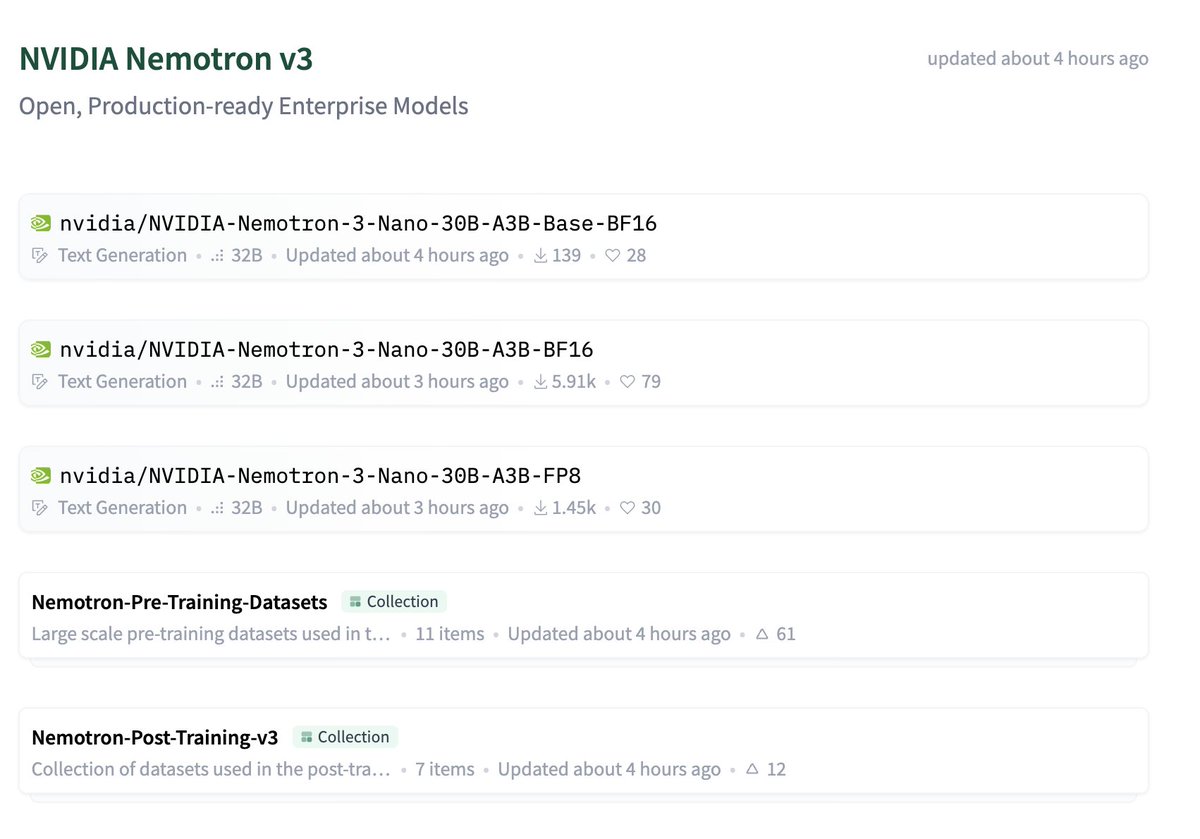

It's the first time I've seen a major org release @huggingface collections inside collections 🤯 Kudos @nvidia for this brilliant release https://t.co/UwGno2iSR0

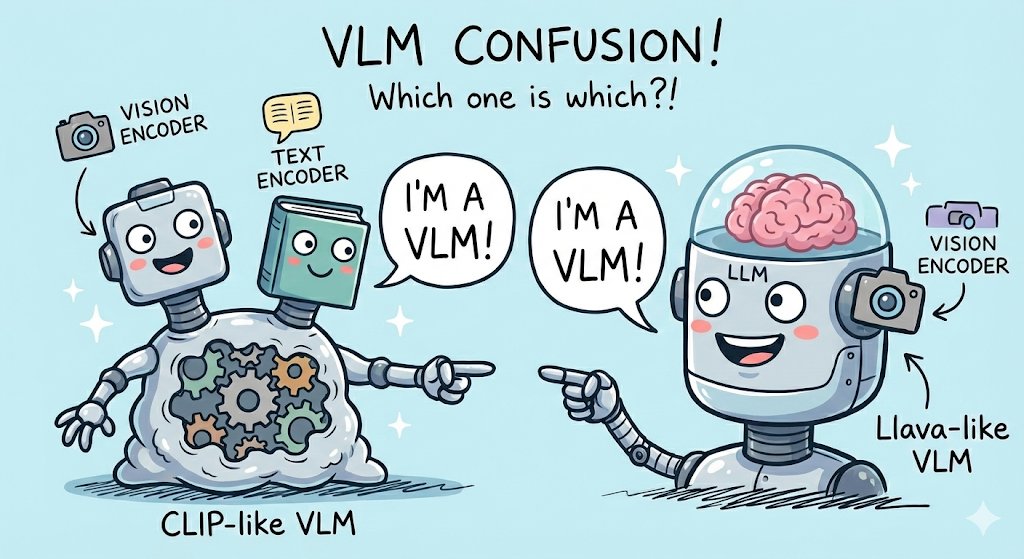

The term VLM has two related but very different meanings, and it's so confusing: 1) CLIP-like VLMs: 2 encoders trained from scratch; 2) LLaVA-like VLMs: a vision encoder attached to an LLM, both pretrained. Ugly image generated with nano banana, of course https://t.co/JrhVsJxySq
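
To make the two meanings concrete, here is a minimal Python sketch of how each kind of VLM is wired. The encoders, the projector, and every function name are hypothetical toy stand-ins (fixed linear maps over plain lists), not CLIP's or LLaVA's actual code; real systems use deep networks, and CLIP's contrastive training objective is not shown here.

```python
import math

# Hypothetical toy "encoders": fixed linear maps (matrix = list of rows),
# so the wiring of each VLM style is easy to follow.
def linear(weights, vec):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * v for w, v in zip(row, vec)) for row in weights]

def normalize(vec):
    """Scale a vector to unit length (leave an all-zero vector unchanged)."""
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]

# 1) CLIP-like VLM: two encoders map image and text into one shared space.
#    The output is a similarity score, not generated text.
def clip_similarity(img_enc, txt_enc, image_feats, text_feats):
    img = normalize(linear(img_enc, image_feats))
    txt = normalize(linear(txt_enc, text_feats))
    return sum(a * b for a, b in zip(img, txt))  # cosine similarity in [-1, 1]

# 2) LLaVA-like VLM: a pretrained vision encoder's outputs are projected into
#    the LLM's token-embedding space and placed before the text tokens; the
#    LLM then generates text conditioned on this combined sequence.
def llava_input_sequence(vision_enc, projector, image_patches, text_token_embeds):
    visual_tokens = [linear(projector, linear(vision_enc, p)) for p in image_patches]
    return visual_tokens + text_token_embeds  # sequence fed to the LLM
```

The design contrast is the point: the CLIP-style function ends in a score comparing two embeddings, while the LLaVA-style function only builds an input sequence for a language model that does the actual generation.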

back at it again with Chatterbox turbo. #1 on @huggingface https://t.co/pYmp78ZWg4

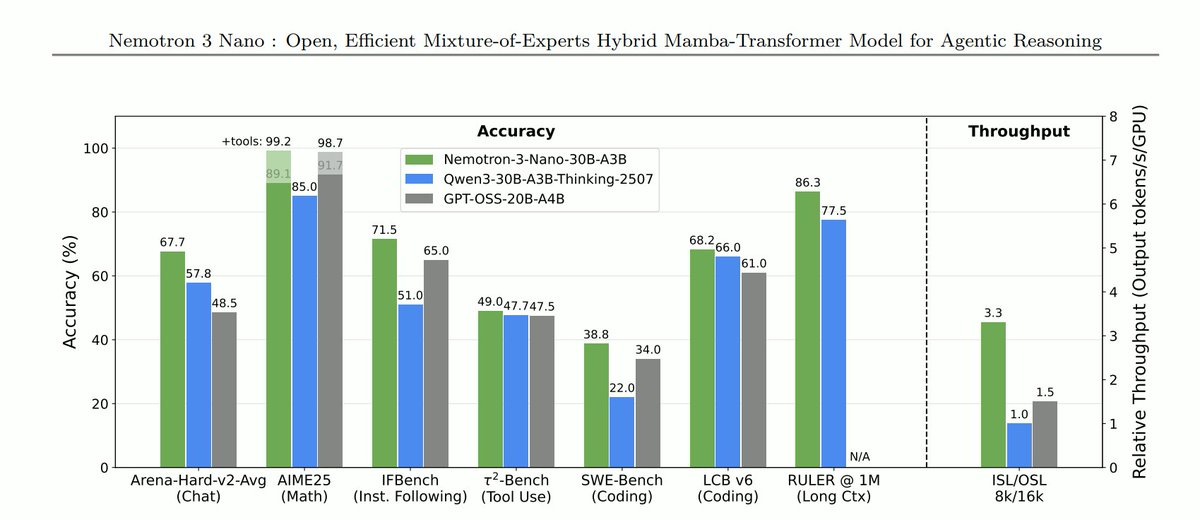

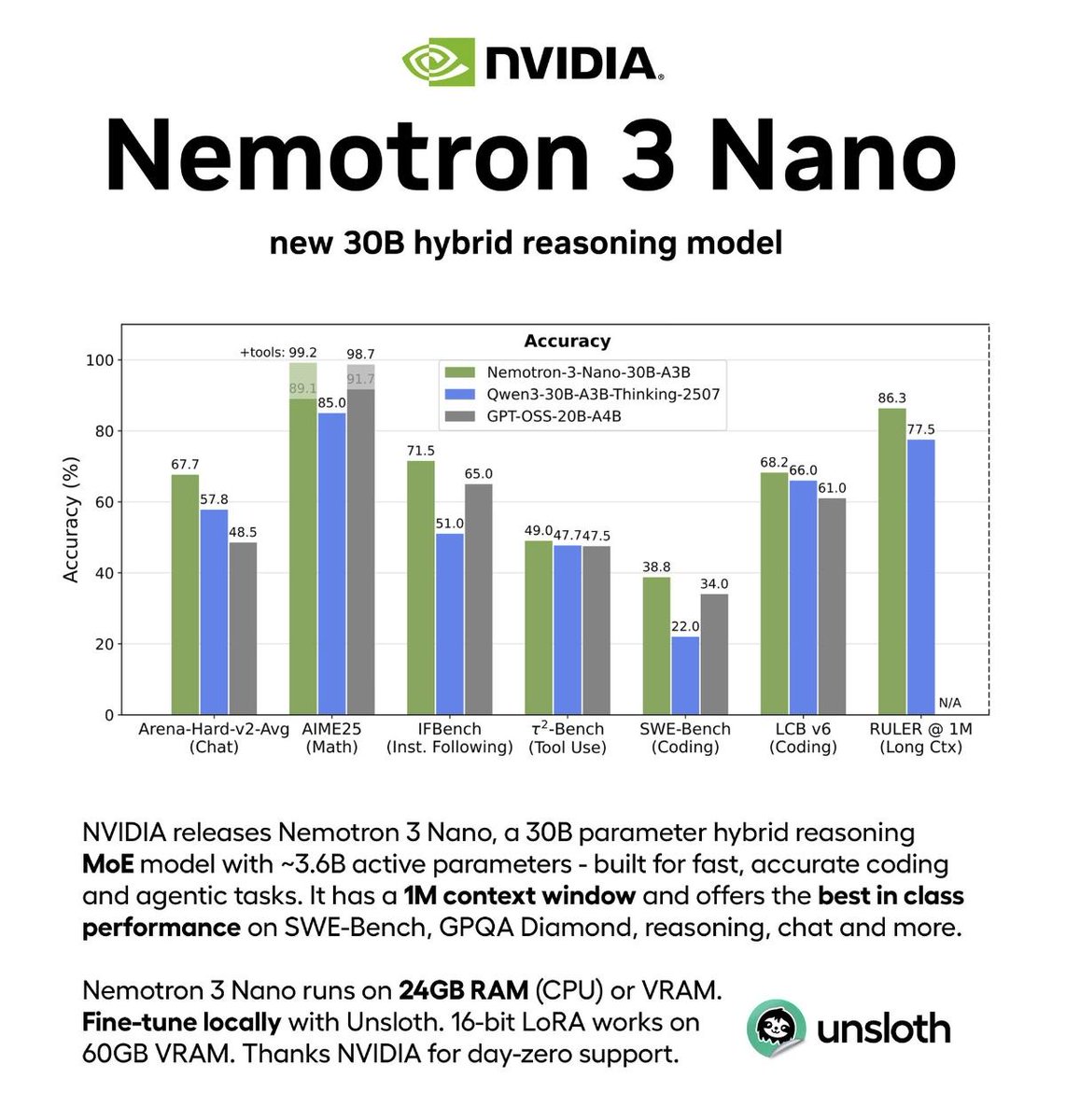

🚀 @Nvidia Nemotron 3 Nano is live! Nemotron 3 Nano is the world's most efficient open MoE model, with a hybrid MoE architecture and 1M context length. 🔥 Strong in reasoning, agentic, and chat tasks, with leading accuracy on the AA index, Tau2, and SWE Bench 🔥 Up to 3.3X higher throughput compared to other open MoE models of similar size 🔥 A fully open recipe, with data and infra released to the community. Check out the new model architecture and reinforcement learning technologies we used below: 😊 Hugging Face: https://t.co/UX9L9QmuWJ 📢 Research blog: https://t.co/NeTb5xANxR 🛣️ Nemo RL & Nemo Gym (RL environment orchestration): https://t.co/fD78eabCZv & https://t.co/E3Q67AIA4j Kudos to the teams for months of hard work! We are excited to keep building the Nemotron 3 model family and empower the community.

NVIDIA has just released Nemotron 3 Nano, a ~30B MoE model that scores 52 on the Artificial Analysis Intelligence Index with just ~3B active parameters.

Hybrid Mamba-Transformer architecture: Nemotron 3 Nano combines the hybrid Mamba-Transformer approach @NVIDIAAI has used on previous Nemotron models with a moderate-sparsity MoE architecture, enabling highly efficient inference, particularly at longer sequence lengths.

Small-model improvements: with 31.6B total and 3.6B active parameters, Nemotron 3 Nano scores 52 on our Intelligence Index, in line with OpenAI's gpt-oss-20b (high). This represents a +6 point lead over the similarly sized Qwen3 30B A3B 2507 and a +15 point improvement over NVIDIA's previous Nemotron Nano 9B V2 (a dense model).

High openness: Nemotron 3 Nano follows other recent NVIDIA models in open licensing and releases of data and methodology for the community to use and replicate. It scores a 67 on the Artificial Analysis Openness Index, in line with previous Nemotron Nano models.

Key model details:
➤ 1 million token context window, with text-only support
➤ Supports reasoning and non-reasoning modes
➤ Released under the NVIDIA Open Model License; the model is freely available for commercial use or training of derivative models
➤ On launch, the model is being made available with a range of serverless inference providers including @basetenco, @DeepInfra, @FireworksAI_HQ, @togethercompute and @friendliai, and it is available now on Hugging Face for local inference or self-deployment

See below for our full analysis and key announcement links from NVIDIA 👇

BREAKING: Grok is growing faster than any other major AI website. Grok saw the biggest month-over-month jump in visits in November, at +14.74%, beating ChatGPT, Google Gemini, Claude, and Perplexity, as per the latest @Similarweb data. https://t.co/cMNh18TpmF

@elonmusk Haters will say this is AI https://t.co/djLDxhqJlw

Grok Voice Mode gives you a variety of voice options: • Ara – Upbeat Female • Eve – Soft, Soothing Female • Leo – Polished British Male • Rex – Calm, Steady Male • Sal – Smooth, Warm Male • Gork – Relaxed, Laid-back Male Just open the settings icon to pick your voice. You can also adjust the speaking speed to your liking.

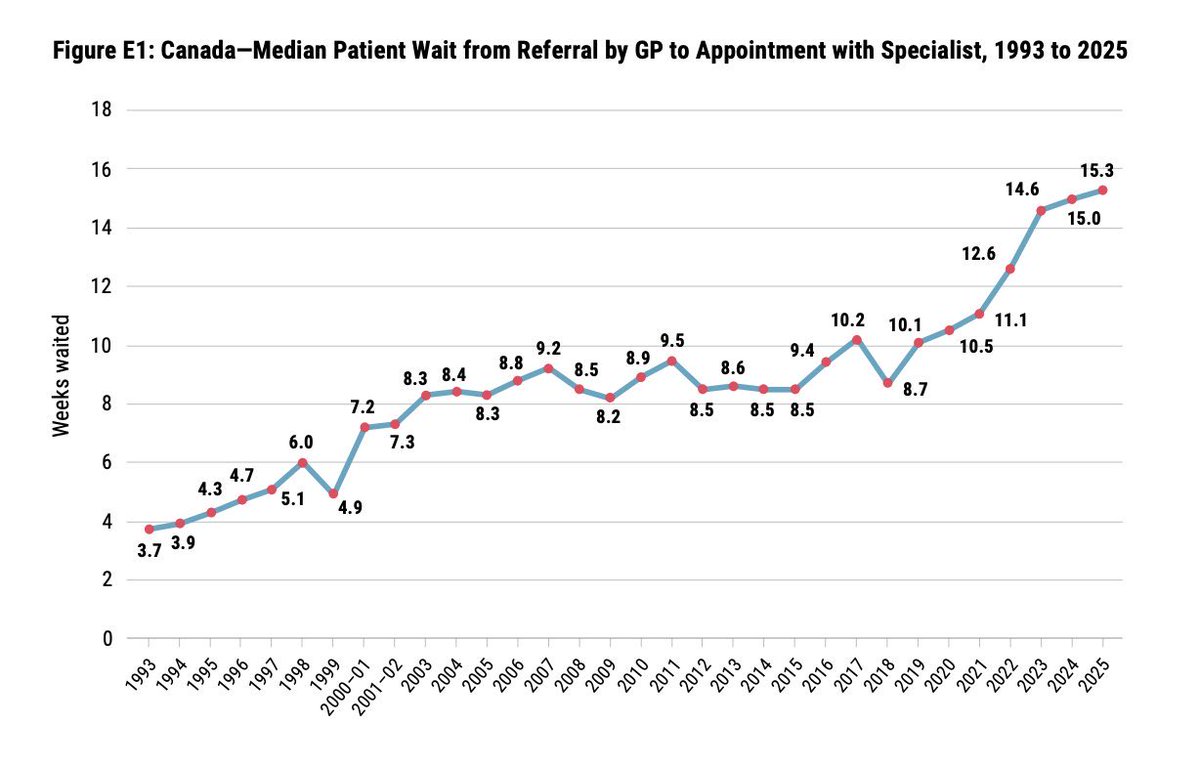

How bad has the Canadian healthcare system gotten? It now takes 3 or 4 months just to get a doctor’s appointment. We have real problems in America, but let this be a reminder that universal coverage is only good for ensuring you are dead before you can see a doctor. As midterms draw closer, be skeptical of anyone promising an expansion of government programs under the guise of humanitarian compassion. These people are at best misguided and perhaps not especially bright. There is one solution to ridiculous healthcare costs and it sits on the supply side, and requires us to seriously and thoughtfully accelerate the deployment of AI into our care delivery system.

marketing is the new cs major https://t.co/bk1lrxokAe

If Reze had gone to school, she would have been a Soviet Pioneer #チェンソーマン #chainsawman https://t.co/hTB36Fh5VK

NVIDIA just open-sourced a 30B model that beats GPT-OSS and runs 2-3× faster. The hybrid MoE architecture is clever: it activates only 6 of 128 experts per token while maintaining accuracy. Supporting a 1M-token context puts it ahead of most competitors. Most importantly: full transparency, with model weights, training recipes, and redistributable datasets.

Link: https://t.co/E3lzqy3trQ
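
The routing idea in this post (only 6 of 128 experts run per token) can be sketched as top-k gating. This is a minimal pure-Python illustration under stated assumptions: the router logits are given, each expert is a plain function, and the selected logits are renormalized with a softmax. That is one common MoE convention, not necessarily NVIDIA's router, and the function names are hypothetical. As a rough arithmetic check, 6/128 is about 4.7% of the routed experts, while the Artificial Analysis post reports 3.6B of 31.6B parameters (about 11.4%) active; the difference is presumably due to components that run for every token, such as the non-expert layers.

```python
import math

def top_k_route(router_logits, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts.

    Assumption: weights are a softmax over the selected logits only
    (one common MoE convention; other routers normalize differently).
    """
    top = sorted(range(len(router_logits)), key=lambda i: router_logits[i], reverse=True)[:k]
    m = max(router_logits[i] for i in top)           # subtract max for numerical stability
    exps = [math.exp(router_logits[i] - m) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]

def moe_layer(token, experts, router_logits, k=6):
    """Weighted sum of the k routed experts' outputs; other experts are never called."""
    out = 0.0
    for idx, weight in top_k_route(router_logits, k):
        out += weight * experts[idx](token)
    return out
```

With 128 experts and `k=6`, only six expert functions are evaluated per token, which is where the compute savings of a sparse MoE come from; the router itself still scores all 128.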

Agents need great OSS models & data for RL. Really excited about this NVIDIA release: • New Hybrid MoE architecture • Datasets released, both pre-training (!!) and post-training • Two larger models coming soon. Models here: https://t.co/3fJCMfhGMF Free endpoint here: https://t.co/7v4AVoGTg1

NEWS: NVIDIA announces the NVIDIA Nemotron 3 family of open models, data, and libraries, offering a transparent and efficient foundation for building specialized agentic AI across industries. Nemotron 3 features a hybrid mixture-of-experts (MoE) architecture and new open Nemotro

The web was built for humans. And honestly, that's fine for you guys. But the next trillion internet users are AI agents, robots, and devices that act on your behalf (booking appointments, filling forms, placing orders, and getting things done) using sites that will never, ever have APIs. 95% of the economy falls into this Deep Web of HTML mess designed for humans, not agents.

Oh, those generic computer-use agents aren't taking you anywhere. They're too slow, too expensive, they hallucinate, and they're too nondeterministic to rely on.

That changes now. This is me, Mino, a web agents API for building on this Deep Web. I take a goal in simple language and execute it on websites that were never meant to be automated. Massive companies like Google, DoorDash, and ClassPass are already using me to do their homework. Now it's your turn.

How, though? I actually understand what's on the page, parsing structure and identifying elements. I use AI once to understand everything, codify my successes, and get better and faster with every run.

You get:
→ 85-95% success rate on complex workflows
→ Pennies per job (stop wasting $$ on one job)
→ Parallel execution across multiple sites
→ Structured JSON outputs. Every. Single. Time.

The web wasn't built for agents. I forced it to work anyway. Go build something real. 50 completed runs on the house: https://t.co/jGnj2amXto

This new paper is wild! It suggests that LLM-based agents operate according to macroscopic physical laws, similar to how particles behave in thermodynamic systems. And it looks like it's a discovery that applies across models.

LLM agents work really well on different domains, but we don't have a theory for why. The behavior of these systems is often viewed as a direct product of complex internal engineering: prompt templates, memory modules, and sophisticated tool calling. The dynamics remain a black box.

This new research suggests that LLM-driven agents exhibit detailed balance, a fundamental property of equilibrium systems in physics.

What does this mean? It suggests that LLMs don't just learn rule sets and strategies; they might be implicitly learning an underlying potential function that evaluates states globally, capturing something like "how far the LLM perceives a state to be from the goal." This enables directed convergence without getting stuck in repetitive cycles.

The researchers embedded LLMs within agent frameworks and measured transition probabilities between states. Using a least-action principle from physics, they estimated the potential function governing these transitions.

The results across GPT-5 Nano, Claude-4, and Gemini-2.5-flash: state transitions largely satisfy the detailed balance condition. This indicates that their generative dynamics exhibit characteristics similar to equilibrium systems.

In a symbolic fitting task with 50,228 state transitions across 7,484 different states, 69.56% of high-probability transitions moved toward lower potential. The potential function captured expression-level features like complexity and syntactic validity without needing string-level information.

Different models showed different behaviors on the exploration-exploitation spectrum. Claude-4 and Gemini-2.5-flash converged rapidly to a few states. GPT-5 Nano explored widely, producing 645 different valid outputs in 20,000 generations.
This might be the first discovery of a macroscopic physical law in LLM generative dynamics that doesn't depend on specific model details. It suggests we can study AI agents as physical systems with measurable, predictable properties rather than just engineering artifacts. Paper: https://t.co/UO1pMWxctY Learn to build effective AI Agents in our academy: https://t.co/JBU5beIoD0
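
For reference, detailed balance is the textbook condition π_i P_ij = π_j P_ji for every pair of states i, j, where P is the transition matrix and π its stationary distribution. If one further assumes π_i ∝ exp(-V_i) for a potential V, the condition implies log(P_ij / P_ji) = V_i - V_j, so moving toward lower potential is the more probable direction. The Python sketch below checks this on small, hand-made transition matrices. It is not the paper's method (the authors estimate the potential with a least-action principle, which is not reproduced here), and the function names are hypothetical.

```python
import math

def stationary_distribution(P, iters=1000):
    """Power iteration: repeatedly apply pi <- pi P, starting from uniform.

    Assumes the chain is irreducible and aperiodic so the iteration converges.
    """
    n = len(P)
    pi = [1.0 / n] * n
    for _ in range(iters):
        pi = [sum(pi[i] * P[i][j] for i in range(n)) for j in range(n)]
    return pi

def detailed_balance_gap(P, pi):
    """Largest violation of pi_i * P_ij == pi_j * P_ji over all state pairs."""
    n = len(P)
    return max(abs(pi[i] * P[i][j] - pi[j] * P[j][i]) for i in range(n) for j in range(n))

def potential(pi):
    """Potential up to an additive constant, assuming pi_i is proportional to exp(-V_i)."""
    return [-math.log(p) for p in pi]
```

A random walk on a symmetric weighted graph (row-normalize a symmetric weight matrix) satisfies detailed balance, so its gap comes out near zero; a chain with a built-in rotational bias, such as `[[0.1, 0.8, 0.1], [0.1, 0.1, 0.8], [0.8, 0.1, 0.1]]`, cycles through its states and shows a clear nonzero gap. That cycling is the kind of behavior the post says detailed balance rules out.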

NVIDIA just released Nemotron-Agentic-v1 on Hugging Face. This dataset empowers LLMs as interactive, tool-using agents for multi-turn conversations and reliable task completion. Ready for commercial use. https://t.co/U2Q09UO3yv

Introducing Bolmo, a new family of byte-level language models built by "byteifying" our open Olmo 3—and to our knowledge, the first fully open byte-level LM to match or surpass SOTA subword models across a wide range of tasks. 🧵 https://t.co/qgsn4QNvJP
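
The difference between byte-level and subword input can be shown in a few lines of Python. This sketch only illustrates what the model consumes: every UTF-8 byte becomes one token id from a 256-symbol vocabulary, compared against a toy greedy longest-match subword tokenizer over a hypothetical two-entry vocabulary. It says nothing about how Bolmo's "byteifying" of Olmo 3 actually works, and real subword tokenizers (BPE, unigram) are learned rather than hand-written.

```python
def byte_tokens(text):
    """Byte-level 'tokenization': every UTF-8 byte is one token id in 0..255."""
    return list(text.encode("utf-8"))

def detokenize(ids):
    """Invert byte_tokens: reassemble the bytes and decode them as UTF-8."""
    return bytes(ids).decode("utf-8")

def greedy_subword_tokens(text, vocab):
    """Toy longest-match subword tokenizer over a hypothetical vocabulary."""
    tokens, i = [], 0
    while i < len(text):
        # Try the longest substring starting at i first.
        for j in range(len(text), i, -1):
            if text[i:j] in vocab:
                tokens.append(text[i:j])
                i = j
                break
        else:
            tokens.append(text[i])  # no vocab match: fall back to one character
            i += 1
    return tokens
```

For "naïve", `byte_tokens` yields six ids because "ï" alone takes two bytes in UTF-8, while `greedy_subword_tokens("naïve", {"na", "ïve"})` yields two tokens. Longer sequences are the cost a byte-level model has to manage in exchange for never encountering an out-of-vocabulary string.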

No Gemma 4 yet, so I went through Google's @huggingface page. Discovered this, wow: MedGemma is a collection of Gemma 3 variants that are trained for performance on medical text and image comprehension. Developers can use MedGemma to accelerate building healthcare-based AI applications https://t.co/yujaQR25aC

Apple just released Sharp: "Sharp Monocular View Synthesis in Less Than a Second" https://t.co/bXoFtIPmWs

Google is preparing for a new open source release on @huggingface Also noticed just recently that Gemma models are not available on AI Studio anymore. What do you expect? 👀 https://t.co/zOenLbvvbb

back at it again with Chatterbox turbo. #1 on @huggingface happy holidays! go build! https://t.co/3iEsbmvNP9