Your curated collection of saved posts and media

🇿🇦🇺🇸 Gunther Eagleman nailed it: Calls to “Kill the Boer, Kill the Farmer” are still happening in South Africa, and ignoring the brutal farm attacks is straight-up tragic. As with many things, the far left chooses to look the other way on these crimes. https://t.co/HZVaqkq4TA

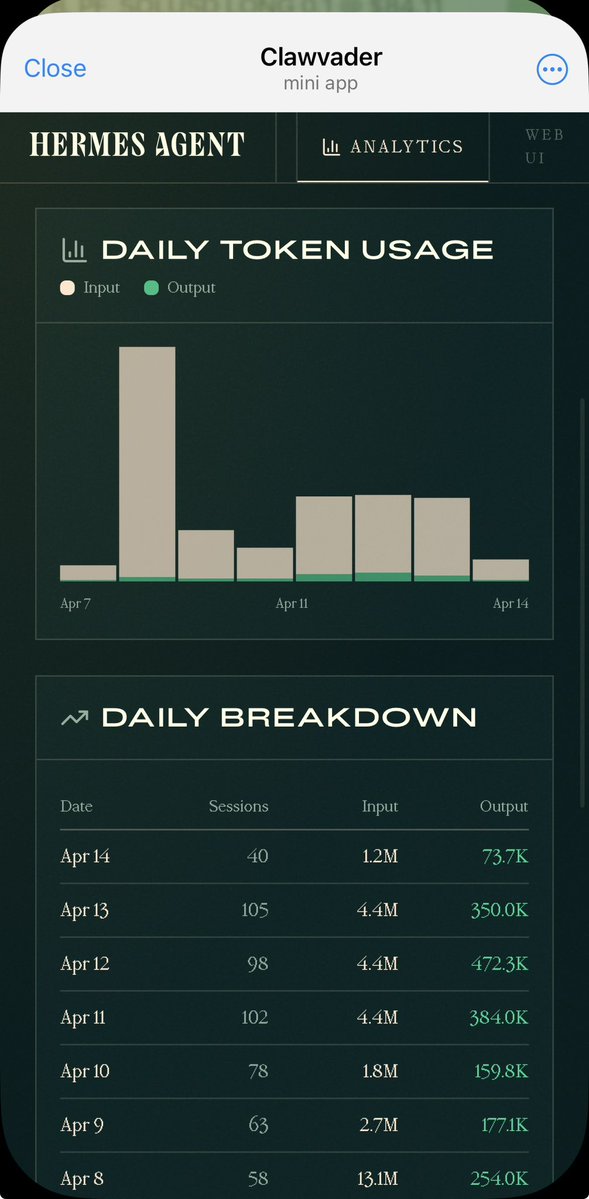

The new @NousResearch Hermes Dashboard is gorgeous! On the web AND on your phone 🤩 Hermes Telegram Mini App v2.0.0 incoming! https://t.co/h6eAxcMOeM

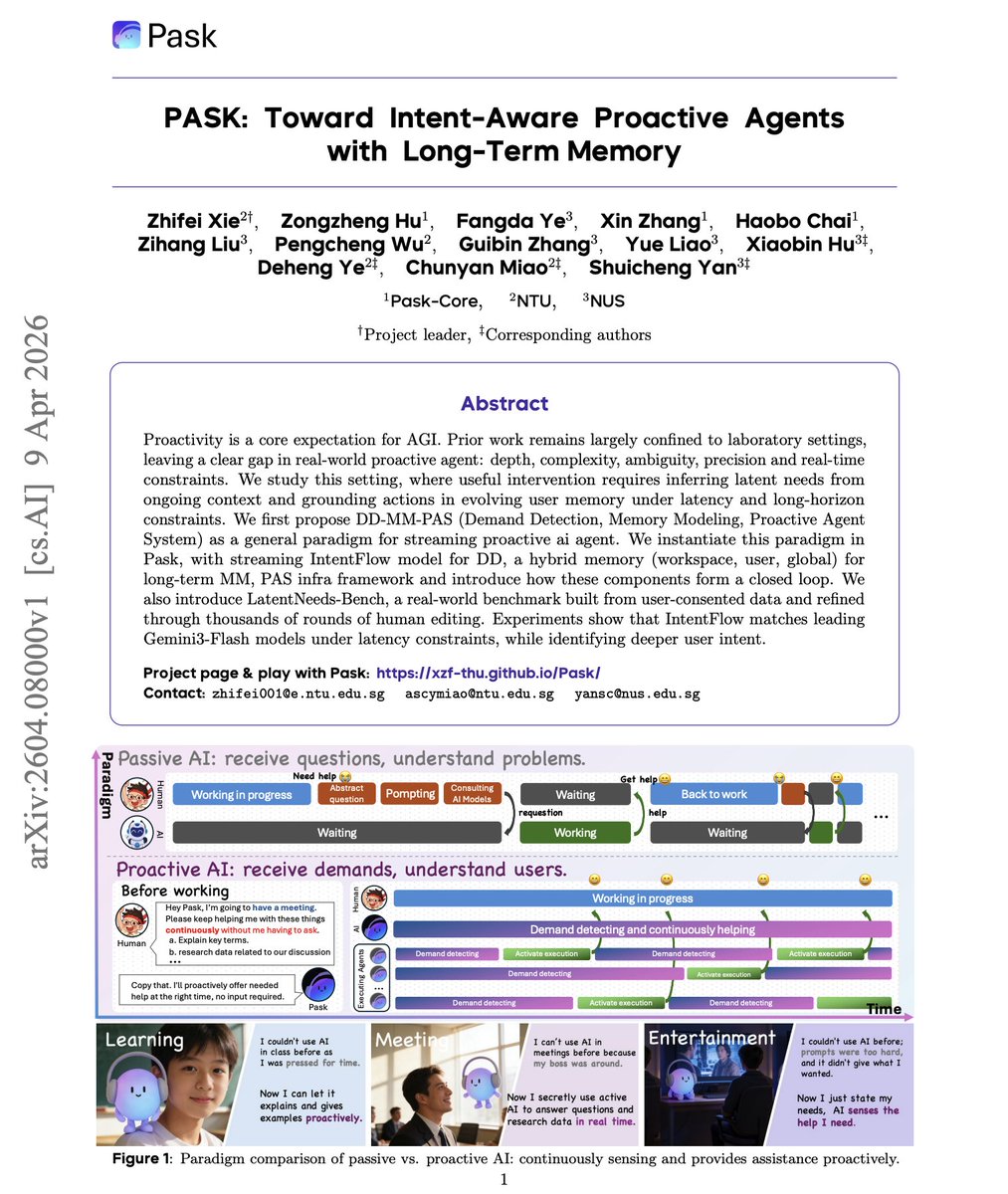

Most AI assistants wait for you to ask. But a truly useful agent should notice you need help before you say anything. New research takes a serious shot at building proactive agents that work in real time.

The work introduces PASK with three components: IntentFlow for streaming demand detection, a hybrid memory system (workspace, user, global) for long-term context, and a proactive agent framework that forms a closed loop. They also release LatentNeeds-Bench, built from real user-consented data refined through thousands of rounds of human editing. IntentFlow scores 84.2 overall, matching Gemini-3-Flash (80.8) while most other models, including GPT-5-Mini (77.2) and Claude-Haiku-4.5 (66.2), struggle badly at this task.

Why does it matter? The hardest part isn't complex reasoning. It's reliably detecting when a user has an unstated need versus when they don't. Most models are either too helpful or too silent, but rarely both calibrated. This is one of the first systems to tackle proactive assistance as a real product problem.

Paper: https://t.co/EYIt2pv6fQ Learn to build effective AI agents in our academy: https://t.co/LRnpZN7L4c

@AshrafGhori https://t.co/YSPVZ6ZhzJ

@kenakennedy https://t.co/rFzxI4j0aF

@farmer_skot https://t.co/HPlHquVP7W

Join us in 15 minutes for the Hermes Agent Jam! https://t.co/avq295YPIm

Tomorrow: Hermes Agent Jam. Nous Research team, presentations, Q&A. Tuesday April 14th, 4PM EST in the Nous Discord https://t.co/QUYMx2h9Xc

Fish Audio just benchmarked SGLang, vLLM, and MAX 👀 TLDR: 16% faster throughput than vLLM on L40, p99 TTFT of 13.1ms vs 23.6ms, containers under 700MB. The only stack in the comparison built without CUDA, running across NVIDIA, AMD, Apple Silicon, and CPU from one codebase. https://t.co/JAE4e69agh

🔒 Today, we're launching Relay: a new way to verify who you are while keeping your online activity private. https://t.co/BCAnyOGywy

Sub-agents in (latent) space! We’ve been working on a side project. As far as I know, this is the first massively multiplayer, completely LLM-driven game. Come play Gradient Bang with us. See if you can catch me on the leaderboard.

This whole thing started because I wanted to explore a bunch of things I’m currently obsessed with, in an application of non-trivial size, that felt both new and old at the same time. So … a retro-style space trading game built entirely around interacting with and managing multiple LLMs. Factorio, but instead of clicking, you cajole your ship AI into tasking other AIs to do things for you.

Some of the things we’ve been thinking about as we hack on Gradient Bang:

- Sub-agent orchestration
- Partial context sharing between multiple LLM inference loops
- Managing very long contexts, and episodic memory across user sessions
- World events and large volumes of structured data input as part of human/agent conversations
- Dynamic user interfaces, driven/created on the fly by LLMs
- And, of course, voice as primary input

If you’ve been building coding harnesses, or writing Open Claw agents, or doing pretty much anything that pushes the boundaries of AI-native development these days, you’re probably thinking about these things too!

This is all built with @pipecat_ai, the back end is @supabase, the React front end is deployed to @vercel, and all the code is open source.

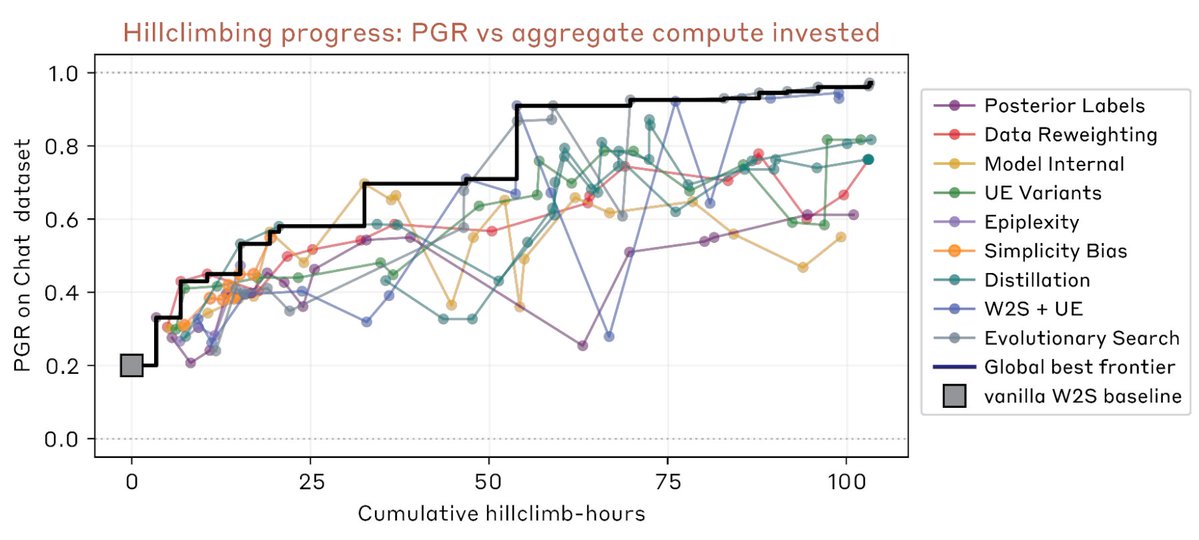

New Anthropic Fellows research: developing an Automated Alignment Researcher. We ran an experiment to learn whether Claude Opus 4.6 could accelerate research on a key alignment problem: using a weak AI model to supervise the training of a stronger one. https://t.co/OAxCjOiWTm

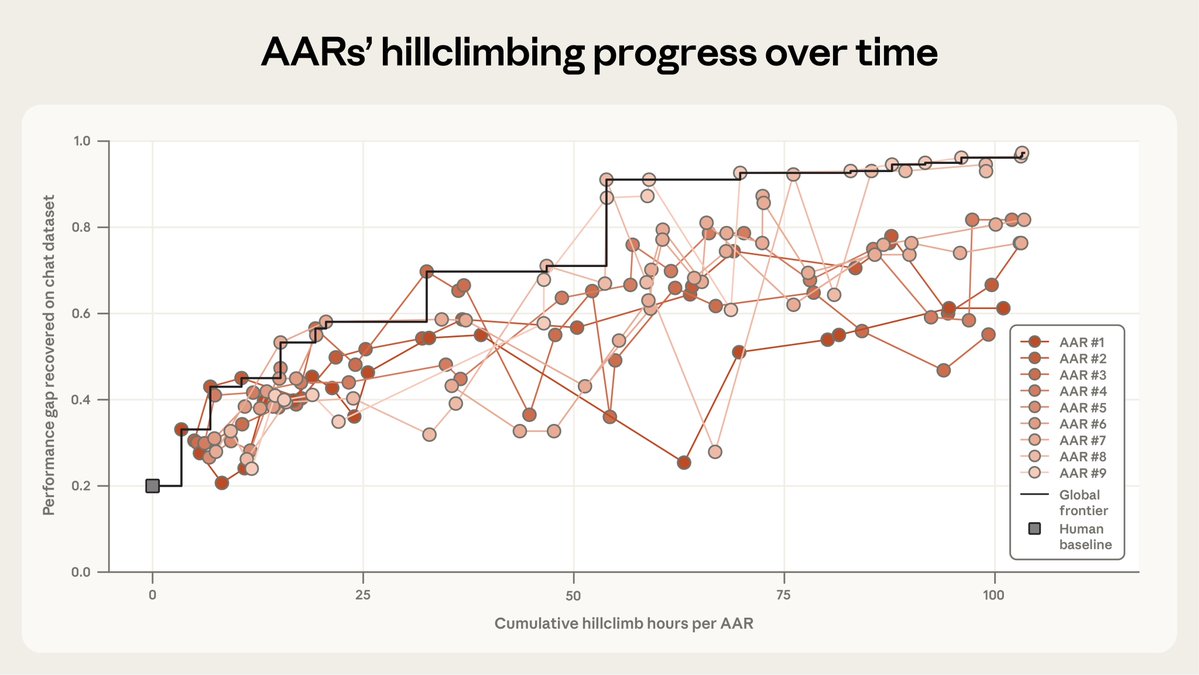

Here, we measure success by the fraction of the “performance gap” we can close between the weak model and the potential of the strong model. After 7 days, human researchers closed it by 23%. Then, our Automated Alignment Researchers—Opus 4.6 with extra tools—closed it by 97%. https://t.co/w1xy4l0MSn

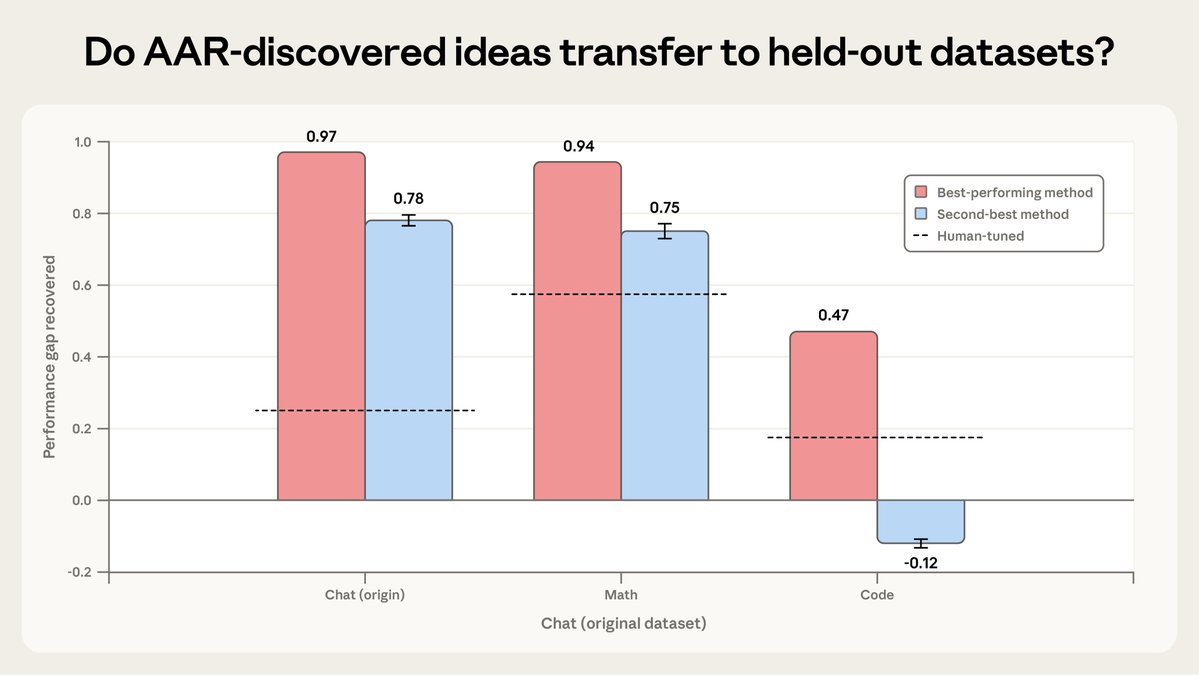

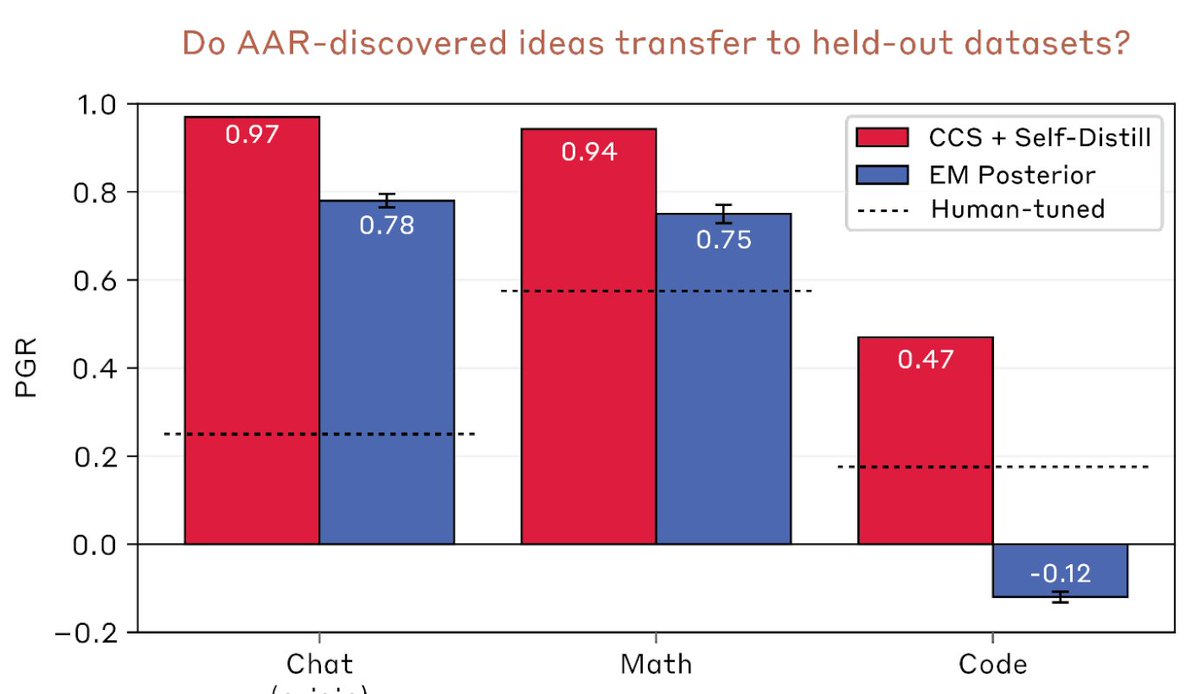
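The "fraction of the performance gap closed" in the post above can be made concrete with a small calculation. This is a minimal sketch, assuming the usual weak-to-strong definition (the gain over the weak supervisor divided by the gap between the weak supervisor and the strong model's ceiling). The function name and the accuracy values are hypothetical and chosen only for illustration; they are not taken from the study.

```python
def performance_gap_recovered(weak: float, achieved: float, strong_ceiling: float) -> float:
    """Fraction of the weak-to-ceiling gap closed by a training method.

    weak           -- score of the weak supervisor on the task
    achieved       -- score of the strong model trained with weak supervision
    strong_ceiling -- score the strong model could reach with ideal supervision
    """
    gap = strong_ceiling - weak
    if gap <= 0:
        # Without a positive gap the ratio is undefined or meaningless.
        raise ValueError("strong_ceiling must be greater than weak")
    return (achieved - weak) / gap


# Hypothetical accuracies, for illustration only (not figures from the study).
pgr = performance_gap_recovered(weak=0.60, achieved=0.80, strong_ceiling=1.00)
print(f"{pgr:.0%}")  # prints "50%"
```

Under this reading, a value of 0 means the method did no better than the weak supervisor and a value of 1 means it reached the strong model's ceiling, so the 23% and 97% quoted in the post sit on that scale.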

To test the broader usefulness of the AARs’ methods, we assessed how well they worked on two datasets the AARs hadn’t seen before. The AARs’ best-performing method successfully generalized to both coding and math tasks, though their second-best method only generalized to math. https://t.co/r2EUH7MxEK

We discuss this, along with the other implications of this research, in our blog: https://t.co/OAxCjOiWTm For the full study, see here: https://t.co/uDwO5P9yoK

LLM Knowledge Base → Slides

When @karpathy shared his LLM Knowledge Base setup, many were wondering how to generate more visual forms of the wiki. There are many options, but I think @GammaApp is one of the best at producing high-quality, rich presentations. To showcase this, I just built a pipeline that turns my AI papers wiki (1K+ papers across 20 AI agent topics) into polished slide presentations using Gamma.

The flow: Obsidian vault → Gamma MCP → embedded preview in my dashboard. I give one command to my agent, which pulls the top papers from each topic (via the wiki), feeds them to Gamma, and renders the presentation inline.

The Gamma connector for Claude is a great choice for generating beautiful and professional slides. Easy to use. Go to your Claude instance and add the official Gamma connector. That's it! Claude Code will now have access to all the necessary MCP tools for generating slides. I use the Claude Agent SDK for my agent orchestrator, so I use the official Gamma MCP tools and embed the generated slides in an iframe via my artifact preview. See the clip below for an example.

@ElianXBT https://t.co/A9JxBINwsB

New research result: we use Claude to make fully autonomous progress on scalable oversight research, as measured by performance gap recovered (PGR). Claude iterates on a number of different techniques and ends up significantly outperforming human researchers for $18k in credits. https://t.co/fbVpCPPtaU

In this case, Claude develops scalable oversight methods on chat reward modeling datasets and evaluates them on math and code datasets. The best methods do really well on math, but are more mixed on code. This suggests Claude’s methods were overfit to the data and models we used. https://t.co/mwwP5GKRuQ

Awesome work by @jiaxinwen22, @liangqiu_1994, Joe Benton, and @janhkirchner! For more details, check out the blog post 👇 https://t.co/62UUoPgxa1

Elon Musk’s AI software, Grok, continues to generate sexualized images of people without their consent, despite his company’s pledge months ago to halt abusive deepfakes after a public backlash and government investigations. https://t.co/uJXRGFHPGT

@rsitarz https://t.co/cekS29EzZX

Your inbox feels like walking into a room where people are yelling at you all at once. And you can't find the one email that matters! This is what email has become. A nightmare you can’t wake up from. We built @odo_email to fix this. It monitors your inbox, flags only the important emails, and drafts replies for you. We're out of beta today! Stop checking your own email. Get early access

@faithdefender More seriously, when I start a project, I go for a walk and talk it through while @ReadAI_ listens and transcribes. That's the prompt. Cover everything you can about what you are trying to build and who you are trying to build it for. That's how I started https://t.co/8L5xphk0qQ

Today we're launching a rebuilt version of Claude Code on desktop. The app has been redesigned from the ground up to make it easier than ever to parallelize work with Claude. I haven't opened an IDE or terminal in weeks. Excited for you all to give it a shot! https://t.co/JV4YQNxZh1

Today we're launching Replay. When you're building with agents, the conversation is the work. The code is a byproduct. But that conversation disappears when you close the terminal. Replay gives your Claude Code sessions a permanent, shareable home. Upload a session → browsable thread with inline diffs, tool calls, and decision traces. And it's open source: https://replay[dot]md

@arena Ready to try Gemma 4 on W&B Inference? We're giving away $25 in inference credits. All you have to do is reply "Gem Drop" to our first tweet, and dm us your org ID or email you use to sign in to wandb. Get started here: https://t.co/Y3kCgOw8V2 https://t.co/nY3fBQsksf

NEW: @googlegemma 4 is live on W&B Inference! 31B params. Currently #4 among open models on @arena. Competing with models 20x its size. Apache 2.0. Full breakdown + free inference credits for you to use below 🧵 https://t.co/XRDXnjOjcq

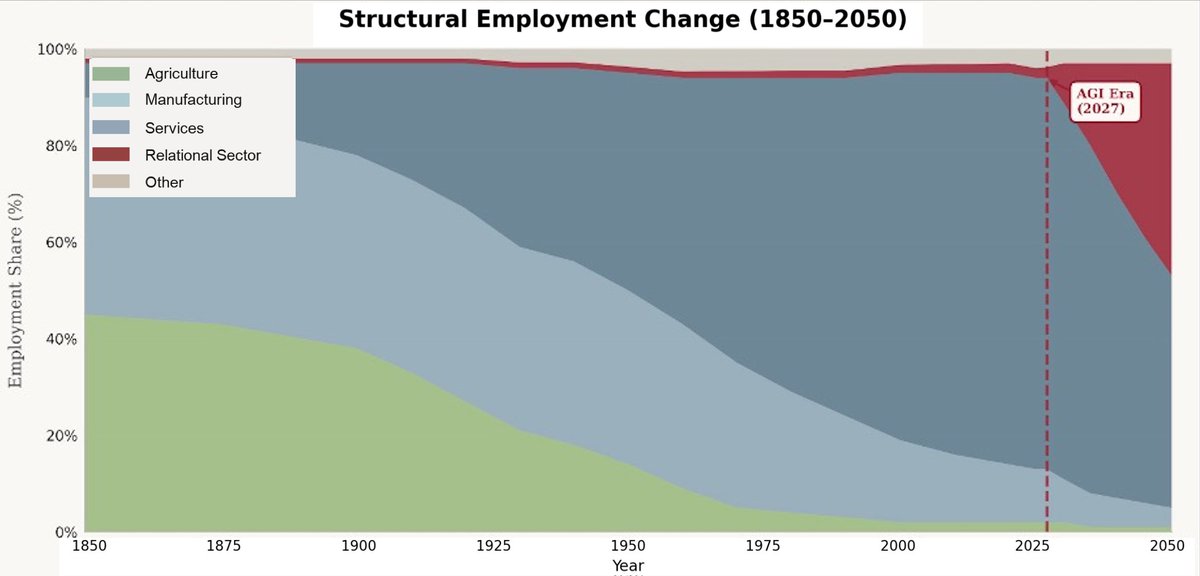

New essay on the economics of structural change and the post-commodity future of work.

1. Almost any question about the impact of advanced AI on the economy needs to start at the same place: what is still scarce? Answer that, and the analysis becomes pretty straightforward. This essay explores what becomes scarce if AI really can replicate most of what humans do in production, and what this means for the future of jobs.

2. My conjecture, working through the economics: labor reallocates across sectors, and the sector it reallocates to has properties that keep labor a meaningful share of the economy. Ultimately this is about the structure of demand itself. For this, we have to go back to Girard, Augustine and Rousseau: once people's base needs are met, their preferences shift to comparative motives (e.g., status, exclusivity, social desirability). This motive is inherently non-satiated.

4. The key paper is Comin, Lashkari, and Mestieri (Econometrica 2021). As people get richer, they don't buy proportionally more of everything. They shift spending toward sectors with higher income elasticity. They estimate income effects account for 75%+ of observed structural change.

5. The ironic consequence: the sector that gets automated becomes a smaller share of the economy, not a larger one. Agriculture got massively more productive and its share of employment collapsed. Manufacturing too. The "stagnant" sectors absorb the spending and the jobs.

6. So the question is: which sectors have high income elasticity in a post-AGI world? I argue it's what I call the relational sector. Categories where the human isn't just an input into production, it is part of the value.

7. Why does the relational sector have high income elasticity? Because human desire has a mimetic, relational dimension. We don't just want things for their intrinsic properties. We want what others want, and we want it more when others can't have it. Girard, Rousseau, Augustine, and Hobbes all saw this.

8. In work with Kristóf Madarász, we showed this experimentally: WTP roughly doubles when a random subset of others is excluded from the good. And in new work with Graelin Mandel, AI involvement kills the premium. Human-made art gains 44% from exclusivity; AI-made art only 21%.

9. This all comes together for the core argument. The sector that absorbs spending as AI makes commodity production cheap is one where human provenance is part of the value, and demand for it grows faster than income. Exactly the profile that keeps labor meaningful.

10. To be clear about the claim: I'm NOT saying aggregate labor share must rise. It may fall. The claim is about sectoral composition, i.e., where expenditure and employment go once commodities get cheap, and the fact that the sector that will absorb reallocated labor maps to a substantial component of human preferences and desire.

11. If you're interested in the formal model, a linked companion technical note works out all the economics. Read the essay here: https://t.co/NcjVgn2o8g

"I'm just contemplating the fact that one moron, one psychotic moron, one capricious idiot, has completely bollocksed up the global economy not only to the detriment of his own people, but the detriment of the planet. ... It's like how much more of this can the planet take?" https://t.co/NPg5S4hcqy

@themaxburns give him time, it will get worse. https://t.co/jXayImZNi2

@sd_marlow @CaptainHaHaa @nikitabier I try. It's why I built https://t.co/kiuZ7QXLzb