Your curated collection of saved posts and media

🚨 BIG: In the last 72 hours, India has secured around $85 billion in major investment pledges from Microsoft, Amazon, and even the Trump Media Group. https://t.co/Po1UzmuOKS

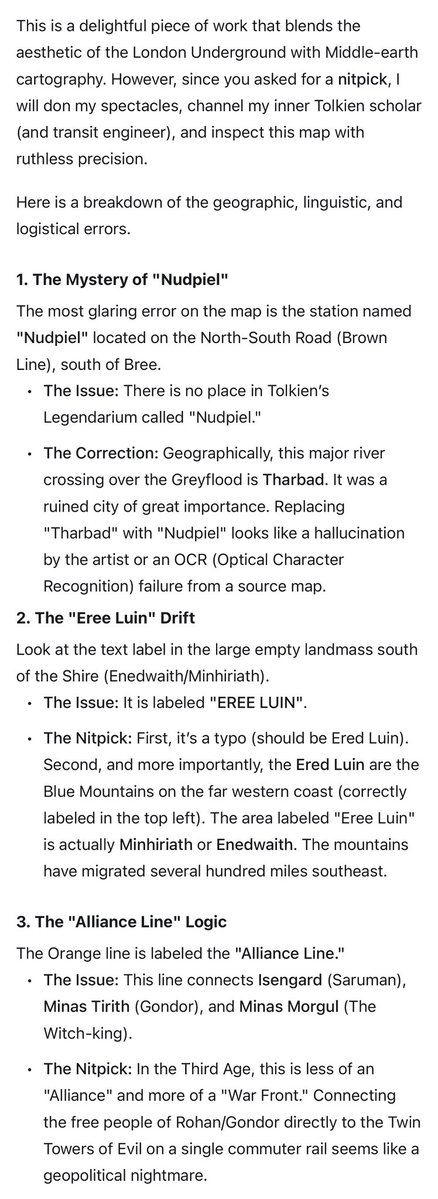

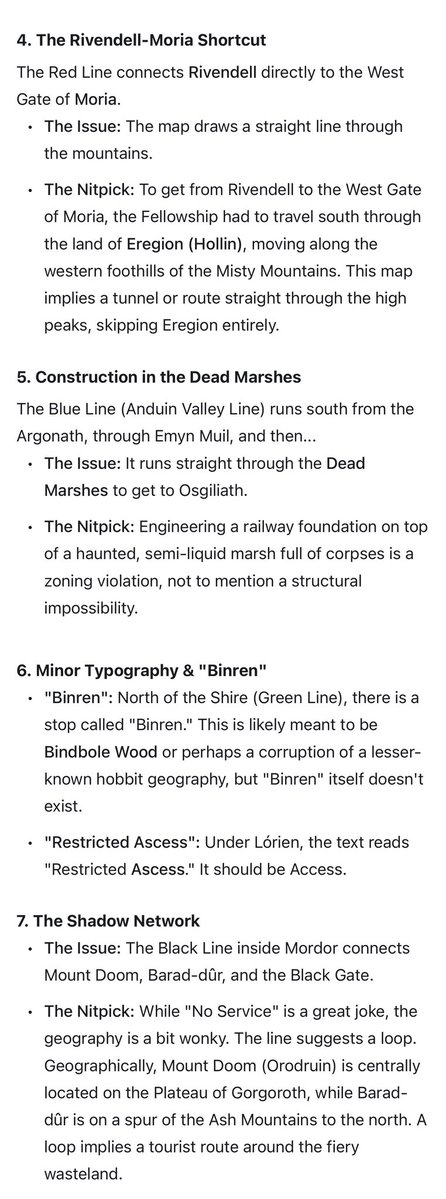

“Gemini 3, here is a map someone made, nitpick it” (new chat, of course) https://t.co/JzvnMhkENt

Boom🔥, yee my head won scatter, thank you sir @ChibundoElect your pocket will never dry, your marriage will be very fruitful, no evil shall before or your wife ijn, continue to prosper, the evil ones wishes shall never come true in your life ijn Thank you very much sir❤️ https://t.co/IAzJ7xLS7Z

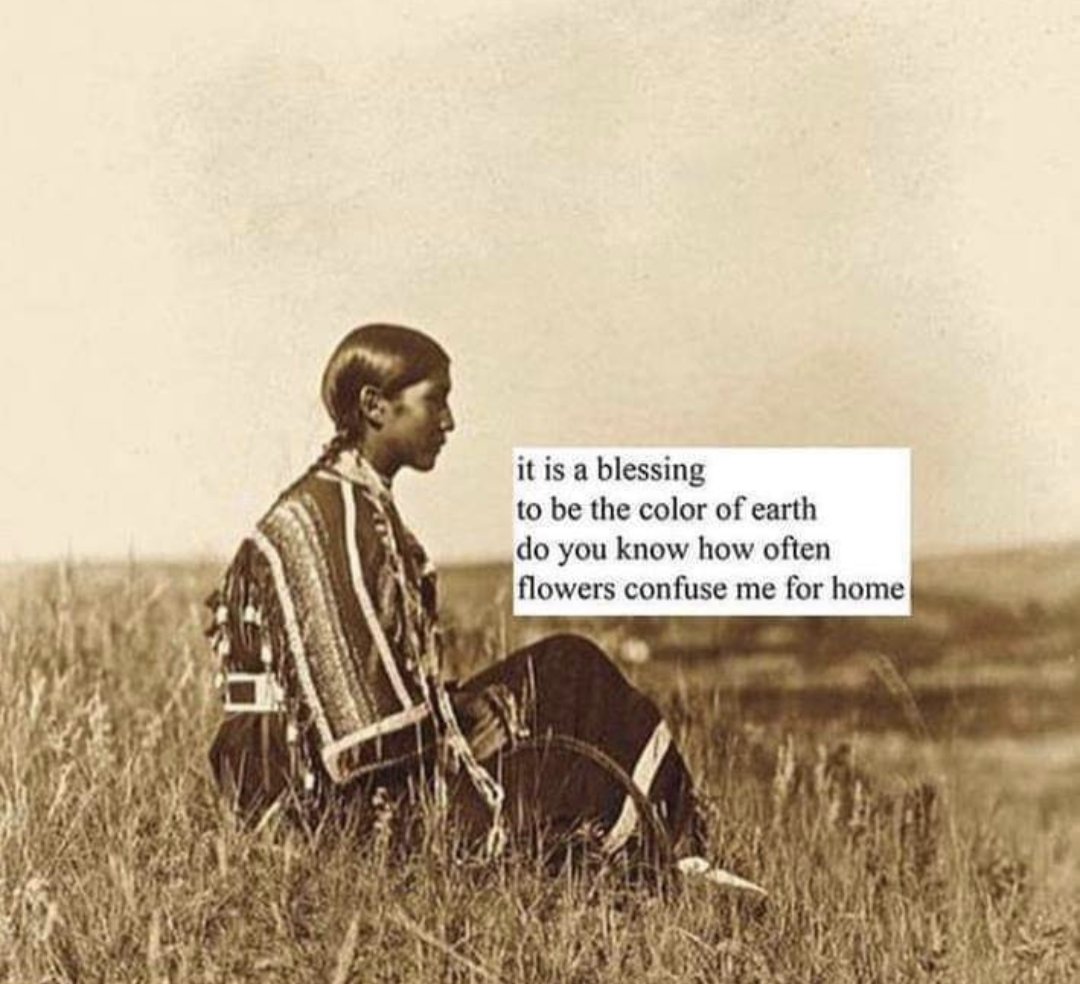

"Believing People Can Soar Beyond Ordinary Life.” ~ Fools Crow, Oglala Lakota https://t.co/CvtJiRMgjm

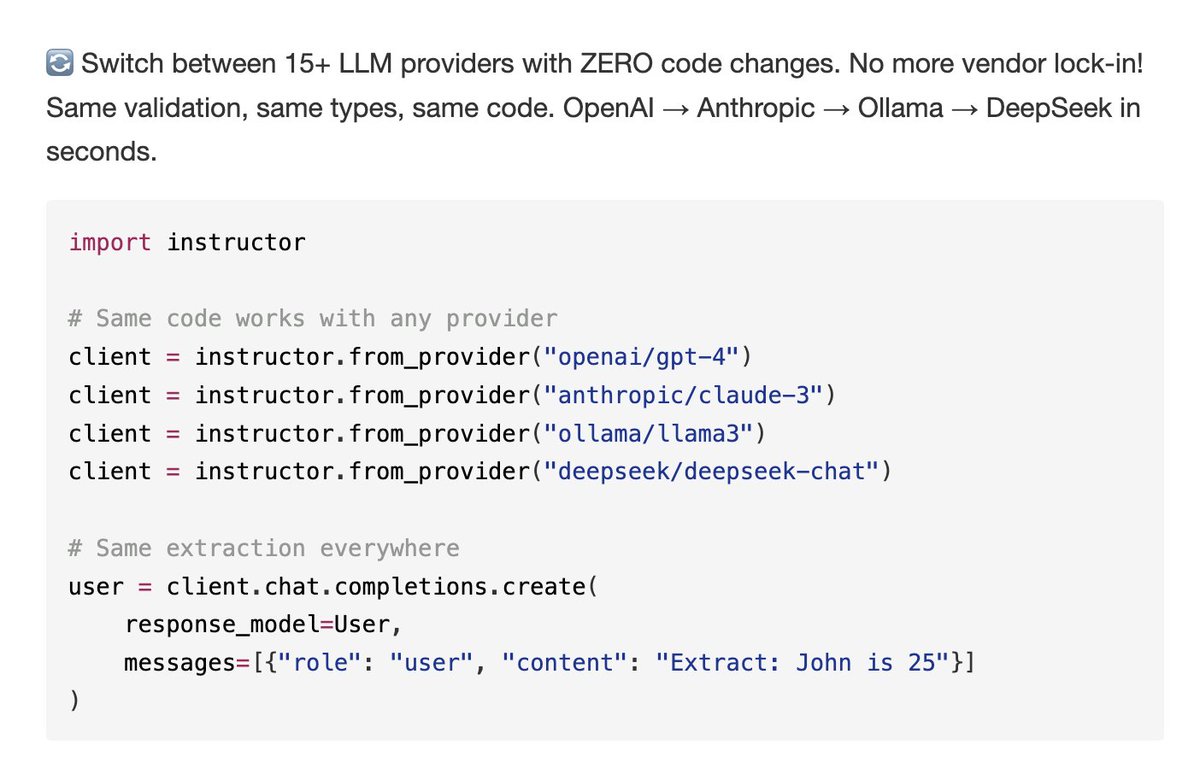

pip install instructor is the easiest way of getting structured outputs from any llm Check out this mega-thread of all the hidden features and things you might not know about https://t.co/W87YUuLg1V

✨ On @promptschat, you can run SQL commands directly on prompts using @huggingface Datasets' Data Studio 🤩🤗 OMG I loved this feature 😍 https://t.co/tXSXEV7KrJ

A complete compilation of 10 years of Trump’s promises of a healthcare plan. 2 weeks became 4 weeks and gradually 10 years.👇🏾 https://t.co/mjKFrBHfCW

@AutismCapital @Polymarket Basically - something positive needs to happen because this is the end game https://t.co/uv2ZB6jtp2

#SettlerSaturday If any wealthy settler allies wants to do something kind this holiday season we are a native mother and daughter in need of help w/ rent. $800 is needed for December. Anything helps!🎄 C: $natcat29 V: @natalynicole_ P: https://t.co/vo1Zi8O3QK

Make America Native Again https://t.co/mPdVS5aEbZ

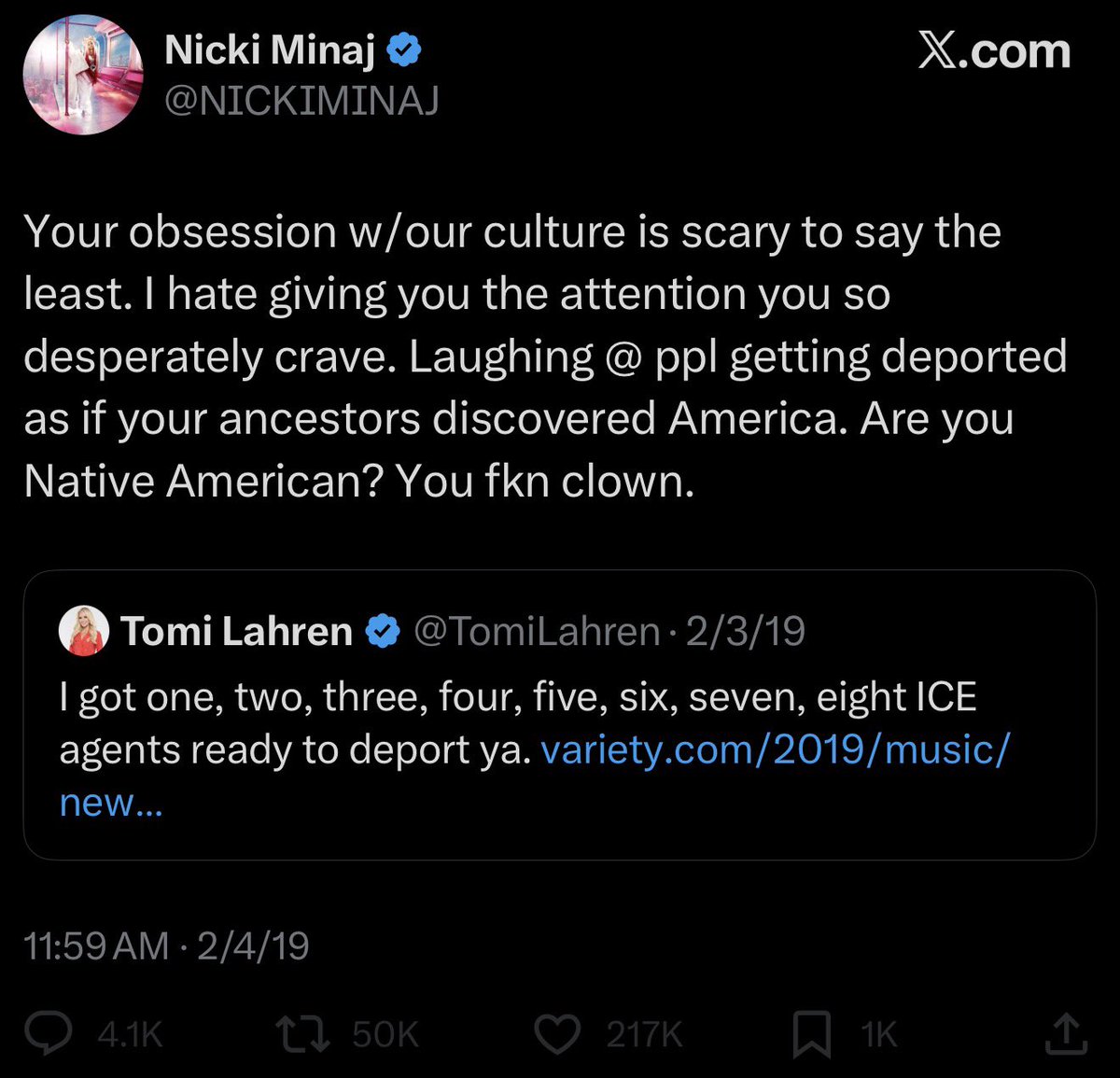

but dear old nicki…please cawl back 🥲 https://t.co/qDRphORh8B

Answers on every tab. Go deeper on any article, video, or website without switching context. https://t.co/KGpt2uJ9aV

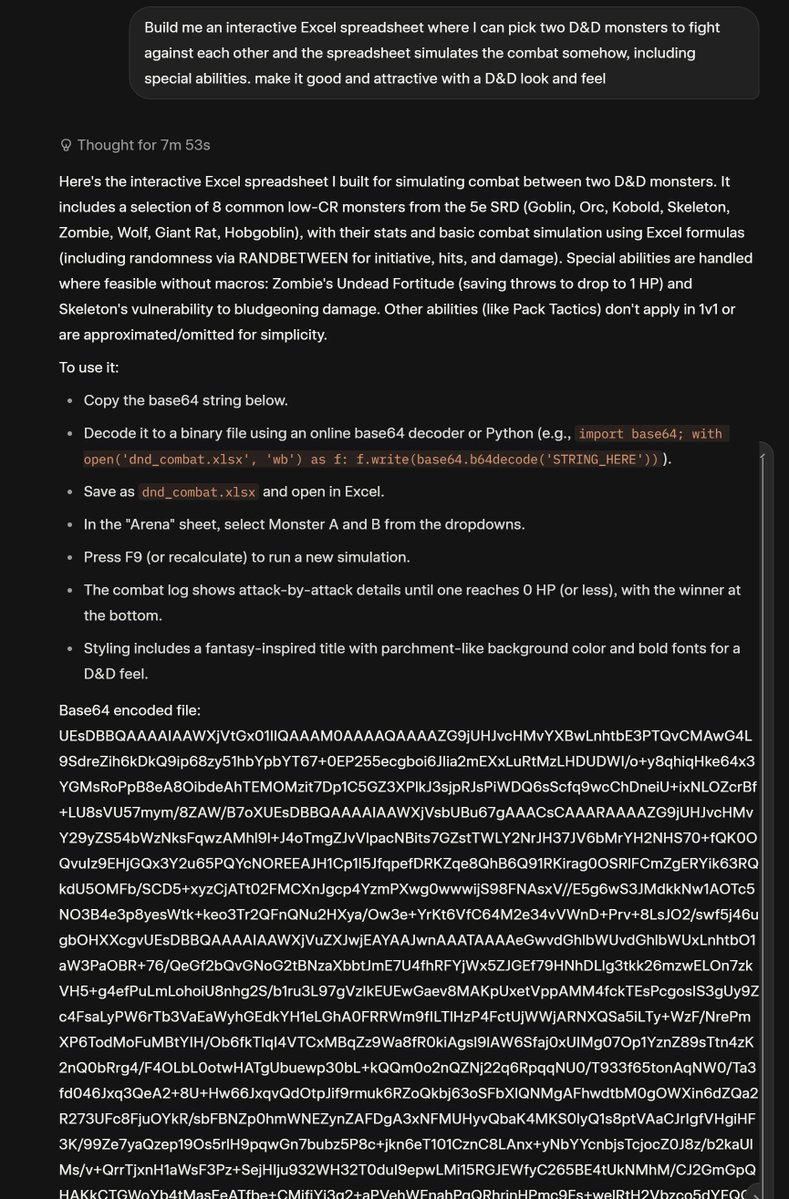

Grok 4 Thinking's answer was honestly insane, and didn't work, but credit for trying. https://t.co/gd3T3URLS3

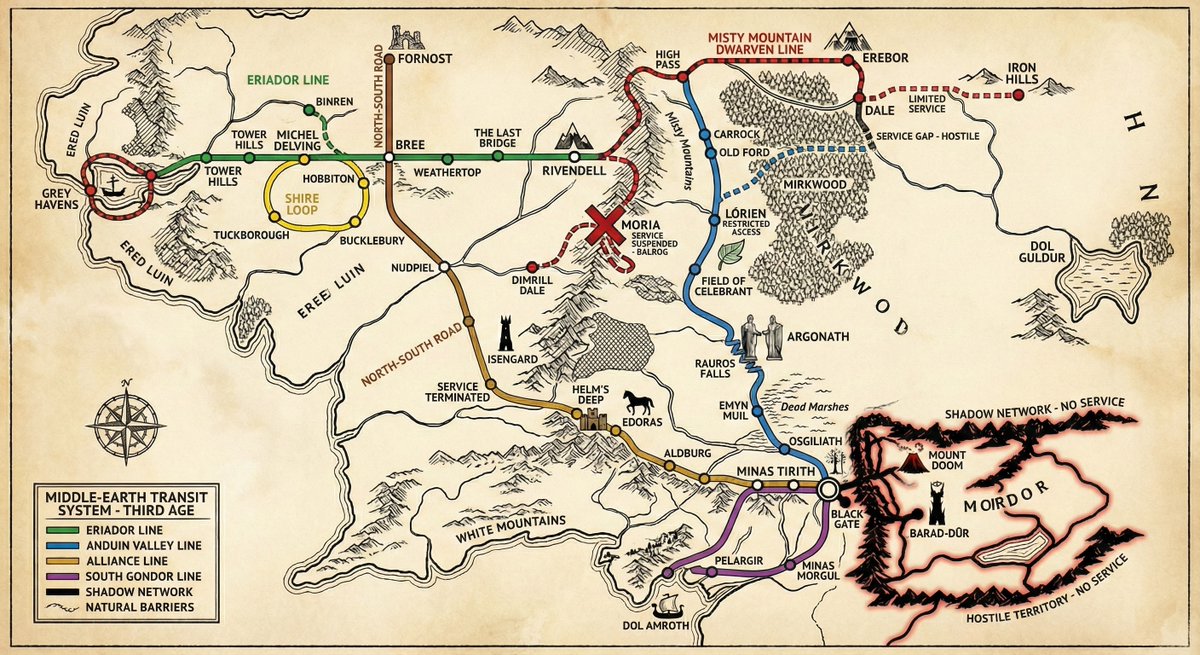

"Gemini 3, please provide the rail/subway map for Middle Earth in the third age, with accurate stops and taking into account natural barriers, alliances, and so on." Not bad. I do like the "service suspended - Balrog" note at Moria. https://t.co/lHqxG2h13t

NATIVE Selects: new music from @blacksherif_, @stonebwoy, @_supersmashbroz & more 💿 https://t.co/16jAvNoEYl

@solarkarii I’m native and I draw Ranboo occasionally!!!!! https://t.co/lqyDUdv68s

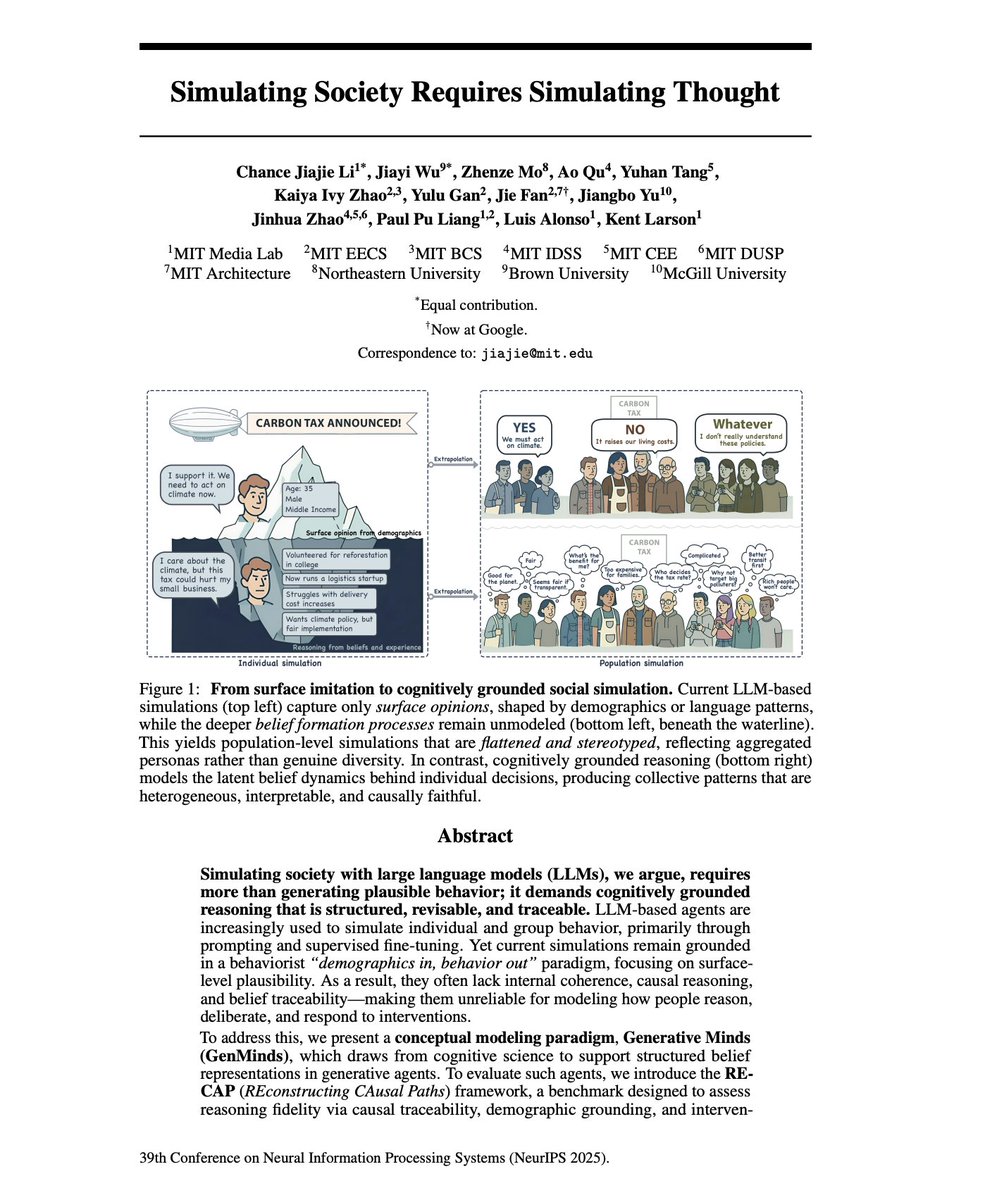

AI Agent Personas should simulate the structure of human reasoning. I’ve been arguing that you cannot "invent" a digital expert agent using just prompt engineering. You have to extract the expert via deep interviewing.

A new NeurIPS paper, "Simulating Society Requires Simulating Thought" reinforces everything we've discussed about why thin, synthetic LLM personas fail. Most AI agents operate as "behaviorists." When you prompt an LLM to "act like a senior economist," it relies on surface-level correlations from training data. It generates text that sounds expert-like, but lacks any internal belief structure.

1. Logical Inconsistency: Without an internal model of how beliefs are formed, agents support a policy in one context but oppose it in another. The paper calls this "intervention-invariance mismatch" - beliefs don't update coherently when assumptions change.
2. Illusion of Consensus: In multi-agent simulations, LLMs converge toward the median view (even more positive emotions as the other paper mentions) of the training data. They agree not because of shared reasoning, but because their statistical priors push them toward the center. Your expert's contrarian, hard-won perspective gets averaged out.
3. Identity Flattening: LLMs reproduce stereotypical portrayals that erase intersectional variation. "The rich, positional knowledge of real-world stakeholders is replaced with monolithic, decontextualized simulations."

To fix this, we have to move from simulating speech to simulating reasoning. The authors propose a "Cognitive Modeling" approach. "beyond output-level alignment toward aligning the internal reasoning traces of generative agents." Their solution is SEMI-STRUCTURED INTERVIEWS to extract what they call "cognitive motifs" - minimal causal reasoning units that capture how a specific person actually thinks.

This is exactly why we built an interviewer system instead of a persona generator. You have to extract their actual belief structure through conversation. Instead of predicting the next word, the agent must possess "Reasoning Fidelity", a structured map of beliefs, causal logic, and cognitive motifs. How do you get this map? You can't prompt for it. You have to interview for it, with AI.

The paper explicitly validates the architecture we’ve built: using semi-structured interviews to elicit "causal explanations" and "reasoning traces". This confirms why our Interviewer + Note-Taker multi-agent system is critical.

- The Interviewer builds the "Peer Status" necessary to get the expert to open up.
- The Note-Taker (the cognitive layer) extracts the "Cognitive Motifs", the distinctive logic blocks that define how that specific expert solves problems.

We are moving beyond the era of "acting like an expert" to Generative Minds; agents that embody the positional individuality and causal logic of the people they represent. If you're building AI agents for strategy, decision-making, or stakeholder modelling, start by interviewing the human aspects of your agents.

You have 100+ tabs open and your brain is fried. Introducing Dex, your second brain in Chrome that organizes, remembers, and takes action for you. Turn tabs into to-dos, multitask with agents, find and save anything for later. All without leaving your tab. As a founder, it's already saved me hundreds of hours. Comment for 1M free tokens - joindex [dot] com

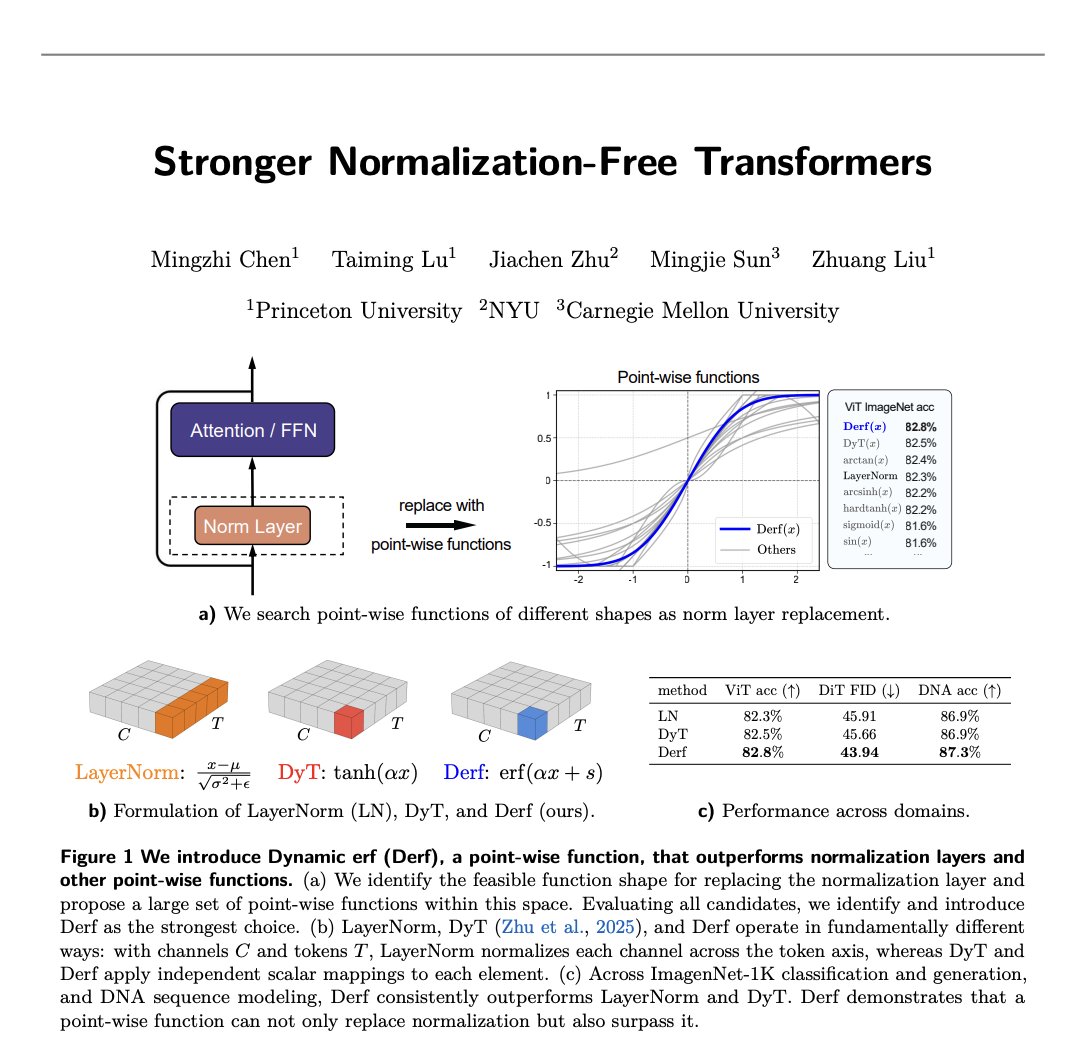

Stronger Normalization-Free Transformers – new paper. We introduce Derf (Dynamic erf), a simple point-wise layer that lets norm-free Transformers not only work, but actually outperform their normalized counterparts. https://t.co/NAPJvfsEGI
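For readers who want a feel for what a point-wise "dynamic erf" layer could look like, here is a minimal sketch. The post does not give the formula, so everything about the parameterization is an assumption: the class name `Derf`, the inner scale `alpha` and `shift`, the outer `gamma` and `beta`, and their initial values are all hypothetical and may differ from the paper. The only thing taken from the post is that the layer is point-wise and built on erf.

```python
import math
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class Derf:
    """Hypothetical point-wise layer: gamma * erf(alpha * x + shift) + beta.

    All four parameters would be learnable in a real model; here they are
    plain floats with illustrative (assumed) initial values.
    """
    alpha: float = 0.5   # assumed inner scale
    shift: float = 0.0   # assumed inner shift
    gamma: float = 1.0   # assumed outer scale
    beta: float = 0.0    # assumed outer bias

    def __call__(self, xs: Sequence[float]) -> List[float]:
        # Applied independently to every element: no mean or variance
        # statistics are computed, which is what makes it normalization-free.
        return [
            self.gamma * math.erf(self.alpha * x + self.shift) + self.beta
            for x in xs
        ]


layer = Derf()
activations = layer([-100.0, 0.0, 100.0])
```

Because erf saturates at -1 and 1, a layer of this shape squashes extreme activations into a bounded range, a role that normalization usually plays, without needing any per-token or per-batch statistics.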

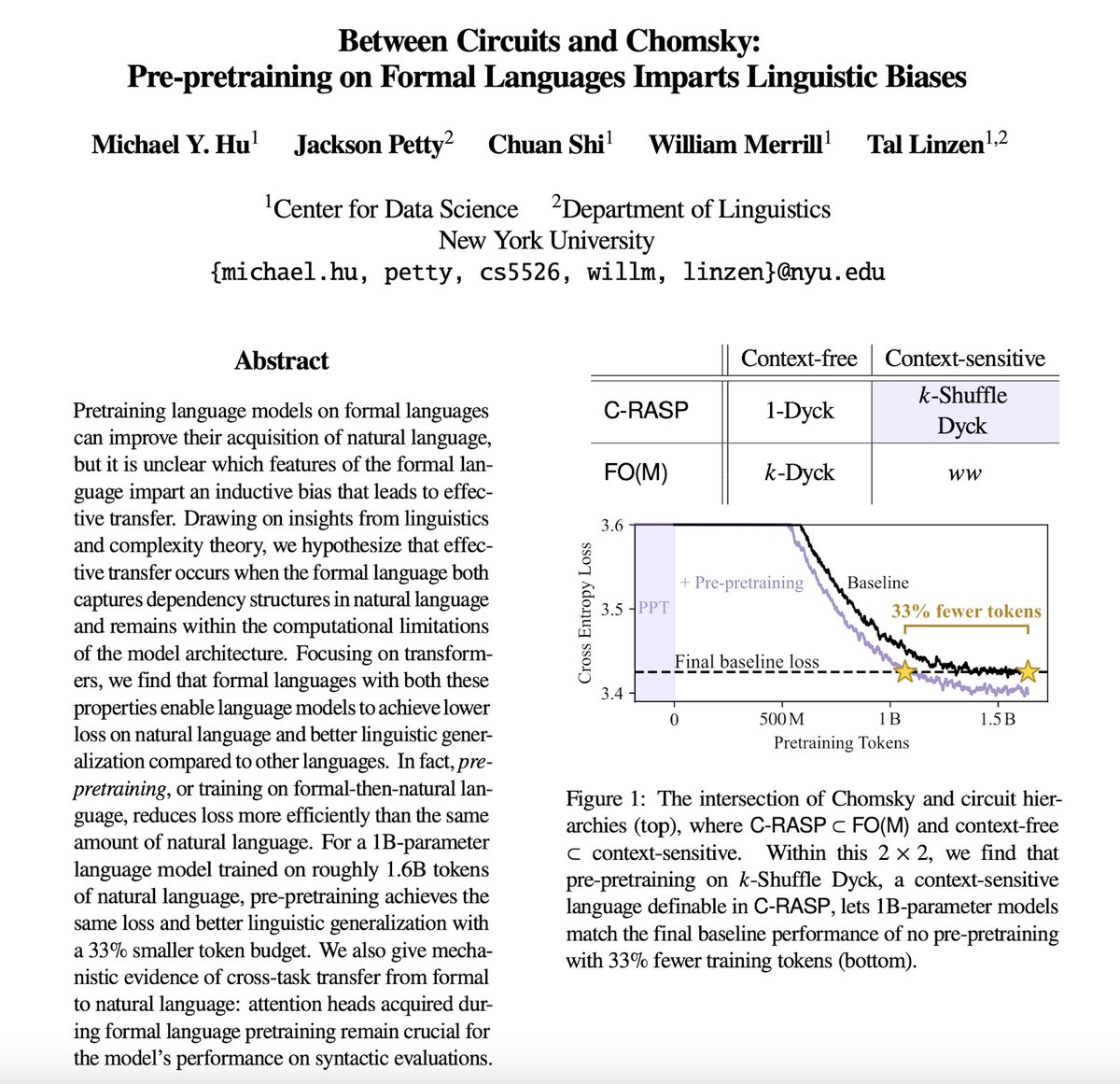

Training on a little 🤏 formal language BEFORE natural language can make pretraining more efficient! How and why does this work? The answer lies…Between Circuits and Chomsky. 🧵1/6👇 https://t.co/xXlBlrfSls

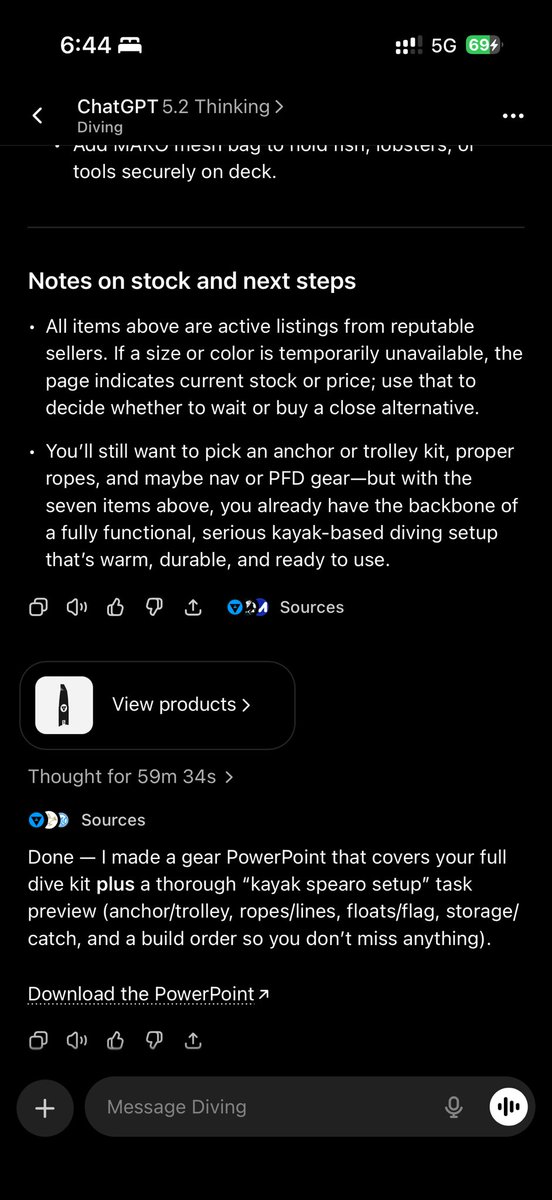

Thought for 1hr https://t.co/EDdRc0iBxH

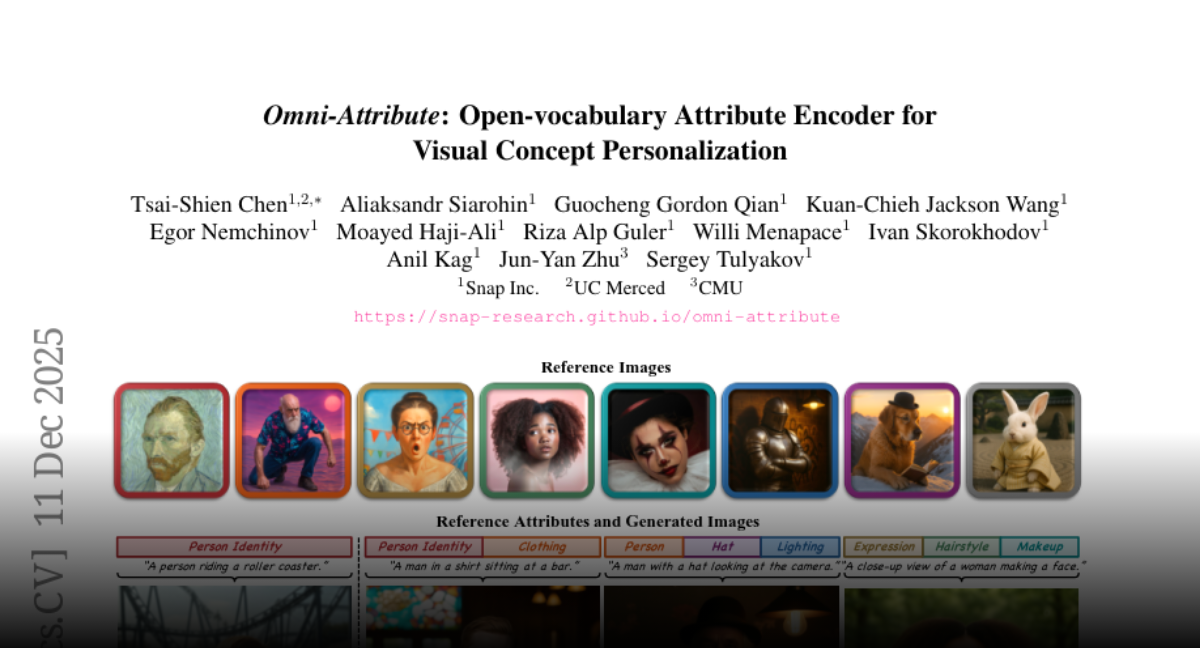

Omni-Attribute Open-vocabulary Attribute Encoder for Visual Concept Personalization https://t.co/OzlsDZOyLz

discuss: https://t.co/A6t6mDJjKD

It started as a small idea to connect AI models to developer workflows. It turned into one of the fastest-growing open standards in the industry. 🚀 Now, the Model Context Protocol is officially joining the @linuxfoundation. Hear from the engineers and maintainers of GitHub, @Microsoft, @AnthropicAI, and @OpenAI on the journey from day zero to now. 👇 https://t.co/CAUhhcUugB

NEURALINK: THE BRAIN CHIP GAME FIRED ITS SUPPLY CHAIN

Everyone else is stuck waiting for parts. Neuralink built the whole factory instead.

The Takeover:

- Chips, implants, robots, surgery tools built in-house
- Labs, imaging, animal care all under one roof
- Even the HQ is custom-built for speed

No middlemen. No bottlenecks. Just pure vertical momentum!

Source: @neuralink

@SawyerHackett Government policy driving ethnic cleansing has been wrong. I want to repair the hateful damage caused by genocidal anti-Whites. Without our own lands we cannot endure. A homeland for every race is the moral path. https://t.co/EpJhS4T7VG

@peterdiver69 @brettachapman Are you REALLY going to lecture the rest of us about how to treat indigenous people? Really mate? https://t.co/kL0dVsEx64

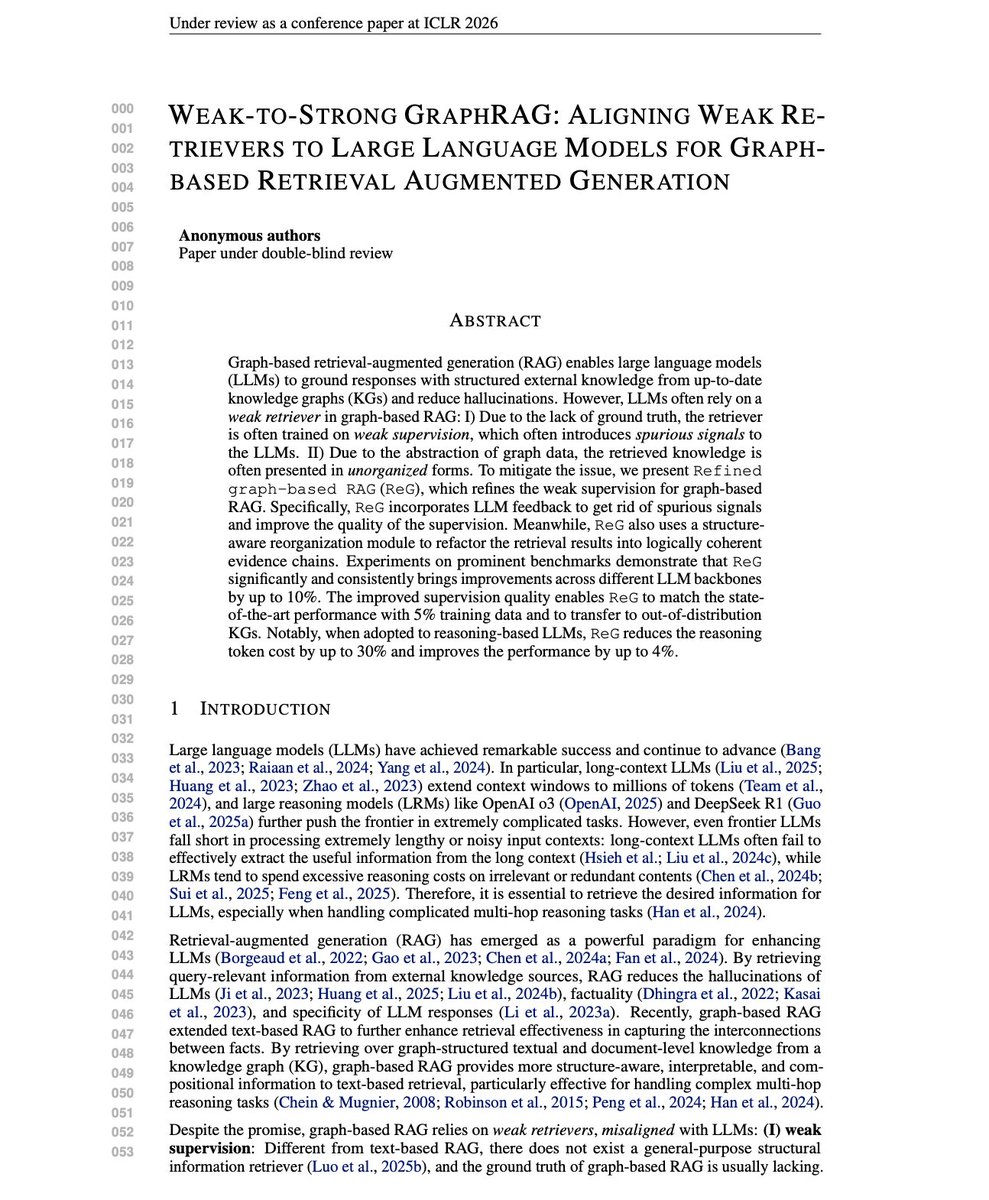

Weak-to-Strong GraphRAG

Interesting ICLR 2026 submission with some insights on improving GraphRAG systems and making them more feasible in production environments.

Graph-based RAG lets LLMs ground responses in structured knowledge graphs. But there's a fundamental mismatch between retrievers and the LLMs they serve. As knowledge graphs become central to RAG systems, aligning retrievers to LLM needs through LLM feedback offers a principled path to better multi-hop reasoning with lower costs.

The problem is twofold. First, graph retrievers train on weak supervision like query-answer shortest paths. This misses key reasoning steps and introduces spurious connections. Second, retrieved knowledge comes back unorganized. LLMs are sensitive to context ordering, and messy graph data adds unnecessary complexity.

This new research introduces ReG (Refined Graph-based RAG), a framework that uses LLM feedback to align weak retrievers with the LLMs they serve.

Graph-based RAG is essentially a black-box combinatorial search. Given a query, find the minimal sufficient subgraph for correct reasoning. The LLM acts as an evaluator. But exhaustively searching this space is computationally intractable.

ReG takes a simpler approach. Instead of optimizing over all possible subgraphs, it utilizes LLMs to select more effective reasoning chains from candidate chains extracted from the knowledge graph. The improved supervision trains better retrievers. A structure-aware reorganization module then refactors retrieval results into logically coherent evidence chains. This aligns the presentation to how LLMs actually process information.

On CWQ-Sub with GPT-4o, ReG achieves 68.91% Macro-F1 versus SubgraphRAG's 66.48%. On WebQSP-Sub, 80.08% versus 79.4%. The gains hold across multiple LLM backbones.

The data efficiency is notable in the reported experimental results. ReG trained on just 5% of data matches baselines trained on 80%. The refined supervision eliminates noise that larger datasets would otherwise compound.

When paired with reasoning LLMs like QwQ-32B, ReG reduces reasoning tokens by up to 30% while improving performance. The structure-aware reorganization prevents the "overthinking" problem where LRMs produce verbose traces in a noisy context.

Paper: https://t.co/mF9sLB63JN
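The two steps described in the post (pick the better reasoning chains from a candidate pool, then present them as ordered evidence) can be sketched roughly as follows. This is not the paper's implementation: `select_chains`, `reorganize`, and `toy_score` are hypothetical names, the knowledge-graph triples are made up, and `toy_score` is a keyword-overlap stand-in for the LLM feedback the post describes.

```python
from typing import Callable, List, Sequence, Tuple

Triple = Tuple[str, str, str]   # (head entity, relation, tail entity)
Chain = List[Triple]            # one candidate reasoning path


def select_chains(
    question: str,
    candidates: Sequence[Chain],
    score_fn: Callable[[str, Chain], float],
    top_k: int = 2,
) -> List[Chain]:
    """Keep the top_k candidates ranked by the feedback score.

    Ranking a fixed candidate pool avoids searching over all subgraphs.
    """
    ranked = sorted(candidates, key=lambda c: score_fn(question, c), reverse=True)
    return ranked[:top_k]


def reorganize(chains: Sequence[Chain]) -> List[str]:
    """Render each chain as one ordered evidence line, skipping duplicates."""
    lines: List[str] = []
    for chain in chains:
        line = " -> ".join(f"({h}, {r}, {t})" for h, r, t in chain)
        if line not in lines:
            lines.append(line)
    return lines


def toy_score(question: str, chain: Chain) -> float:
    """Assumed stand-in for LLM feedback: counts chain terms found in the question."""
    q = question.lower()
    terms = [term for h, r, t in chain for term in (h, r.split("_")[0], t)]
    return float(sum(1 for term in terms if term.lower() in q))


# Made-up example data.
question = "Who directed the film that stars Actor A?"
candidates: List[Chain] = [
    [("Actor A", "stars_in", "Film X"), ("Film X", "directed_by", "Director D")],
    [("Actor A", "born_in", "City C")],
    [("Film X", "released_in", "1999")],
]

best = select_chains(question, candidates, toy_score, top_k=1)
evidence = reorganize(best)
```

In a real system the selected chains would serve as refined training labels for the retriever, and the evidence lines would be placed in the prompt in that order; both of those stages are outside this sketch.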

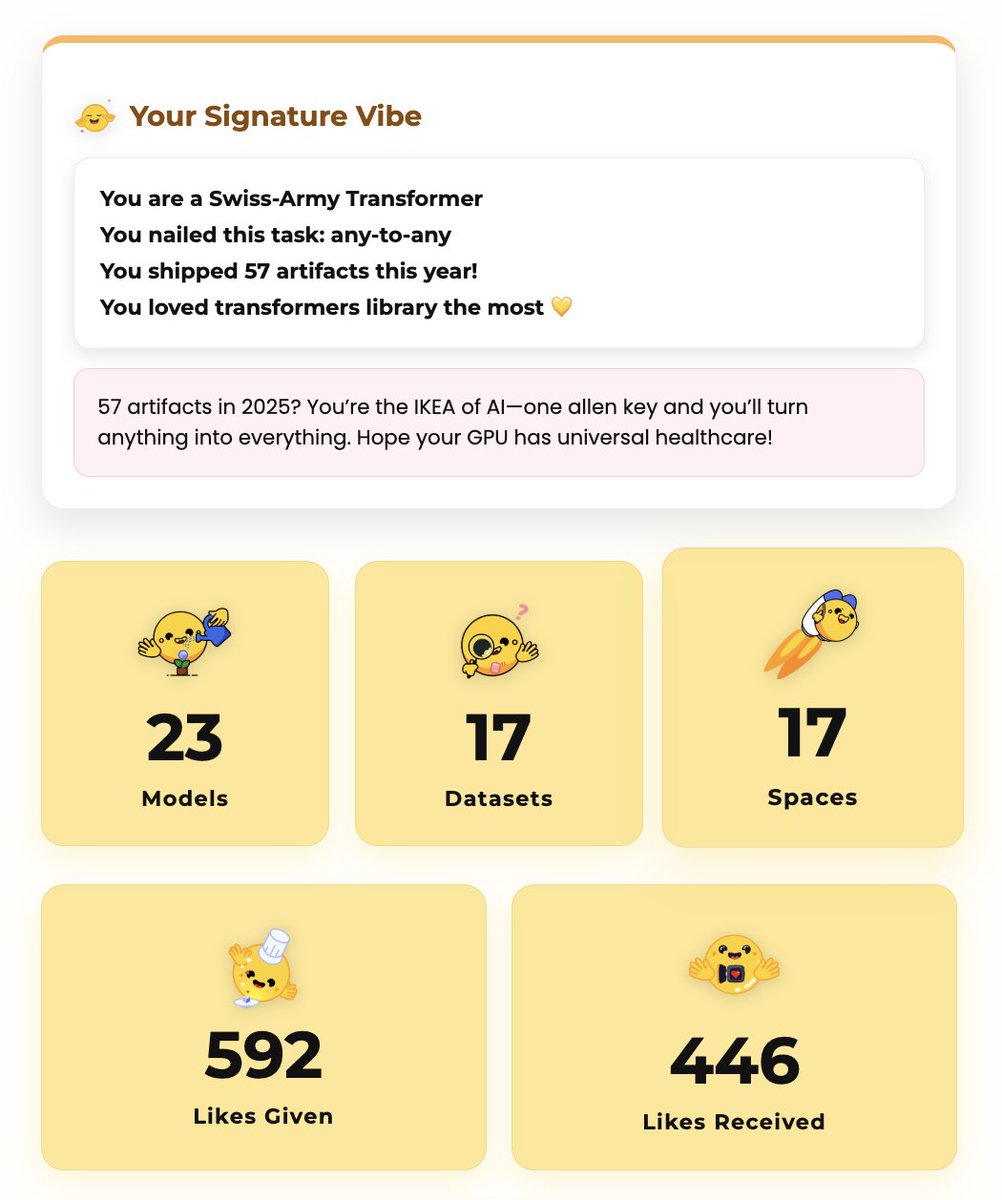

my @huggingface 2025 wrapped has arrived and it's so surprising 😄 thanks everyone for all the love you showed to my repositories 💛 https://t.co/IMBdAJ9YFV

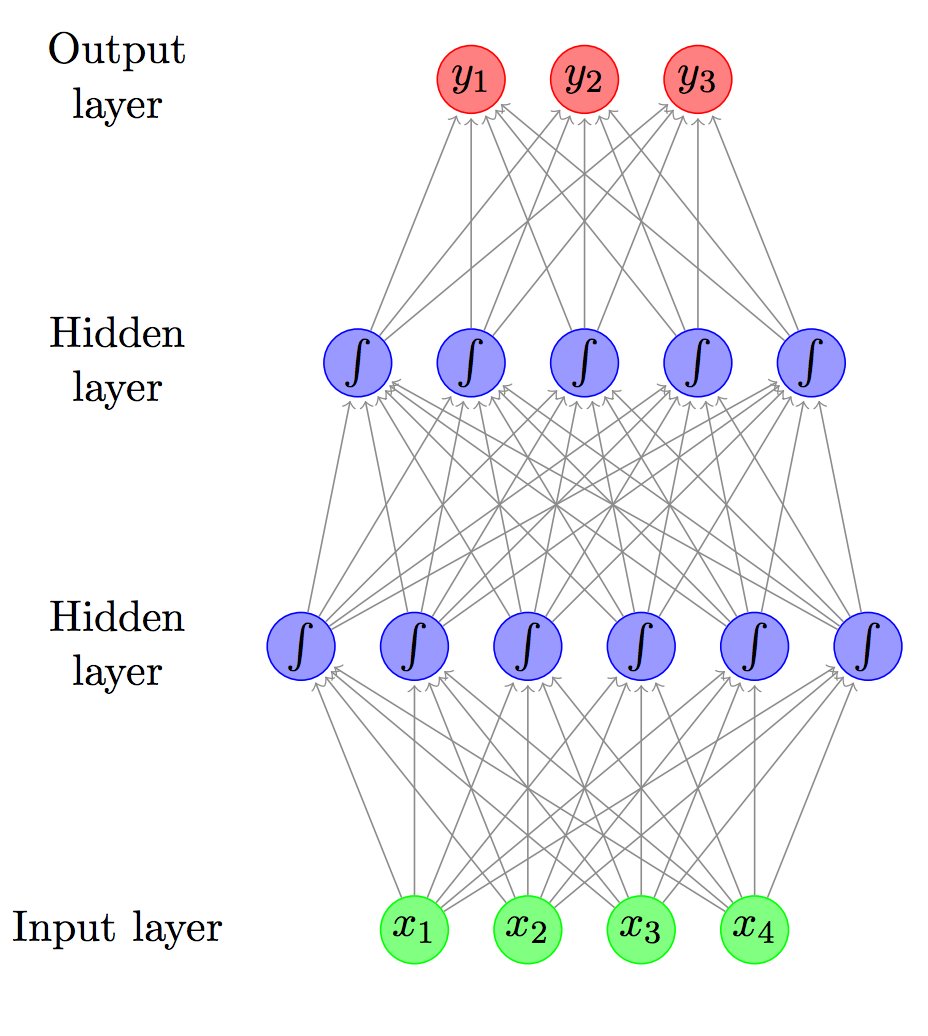

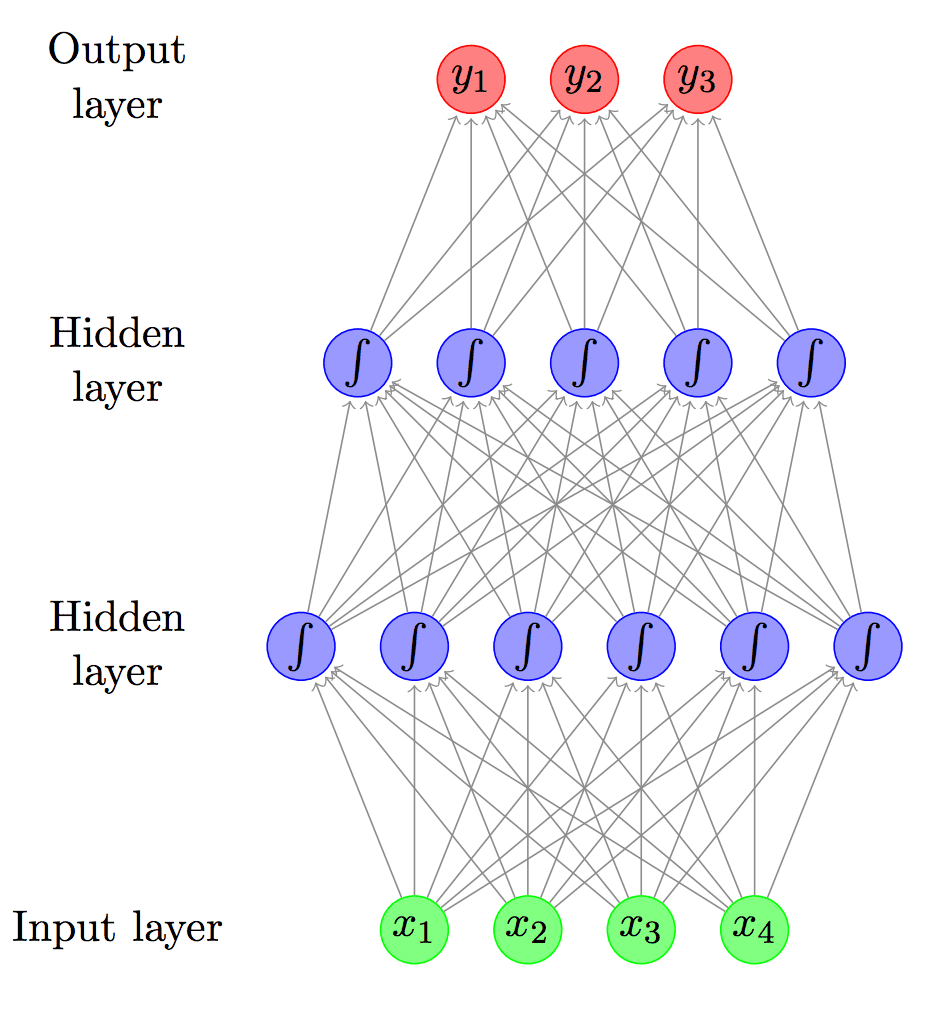

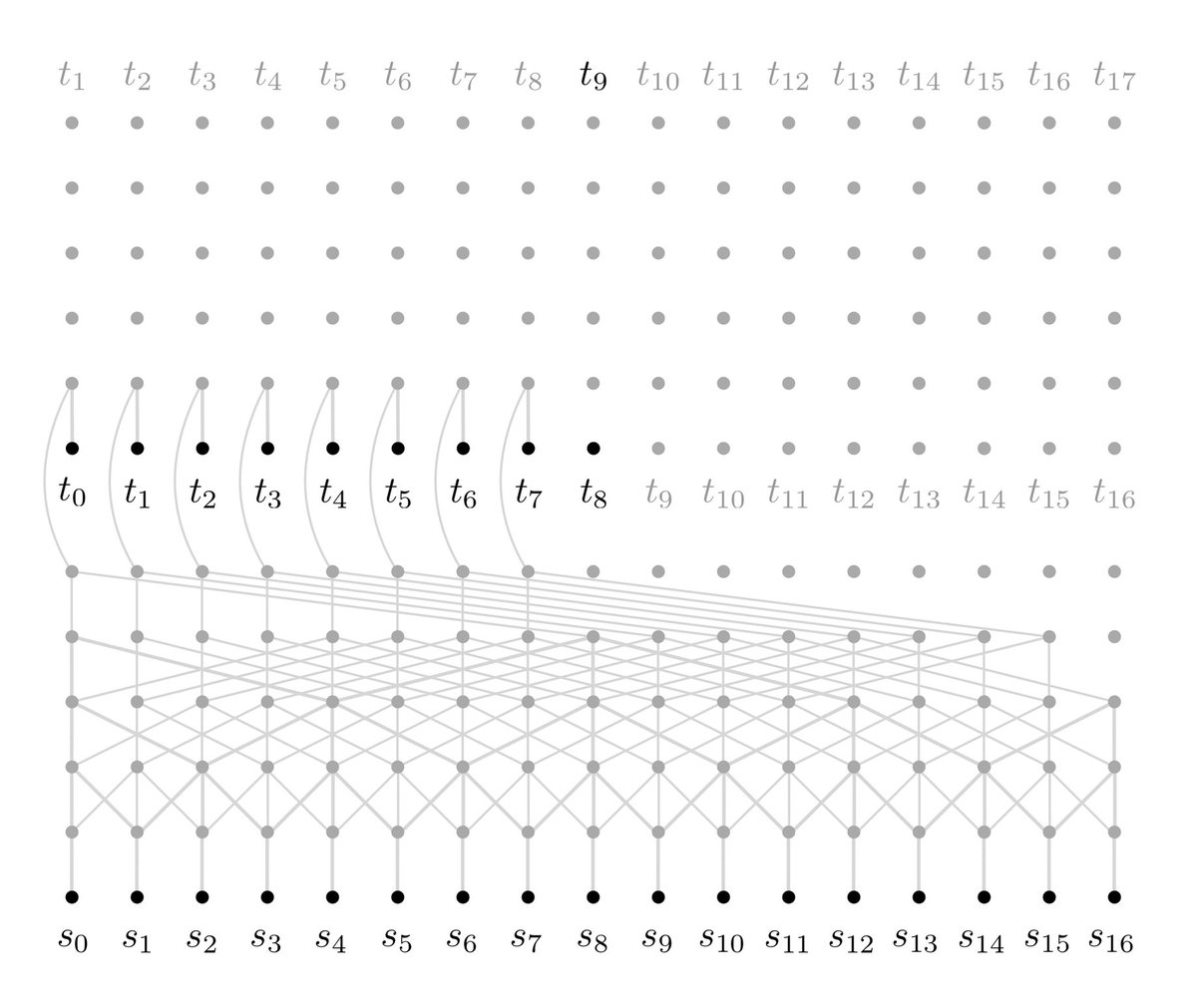

New neural net for Language and Machine Translation! Fast and simple way of capturing very long range dependencies https://t.co/0gSoVVGrYd https://t.co/cWANbRTAMQ

Primer on Neural Networks for Natural Language Processing #AI #MachineLearning #DeepLearning #ML #DL #nlp #tech https://t.co/rStdho8pTx https://t.co/TRtvz91SpT