Your curated collection of saved posts and media

This is happening mad fast! I started to realize this when moving all my workflows to Claude Code Skills. It was painful at first, but then I was suddenly moving at speeds I never imagined. I hear more companies are embracing skills, which accelerates things even more. Good read! https://t.co/HsgySimauW

How can we make a better TerminalBench agent? Today, we are announcing the OpenThoughts-Agent project. OpenThoughts-Agent v1 is the first TerminalBench agent trained on fully open curated SFT and RL environments. OpenThinker-Agent-v1 is the strongest model of its size on TerminalBench, and sets a new bar on our newly released OpenThoughts-TB-Dev benchmark. (1/n)

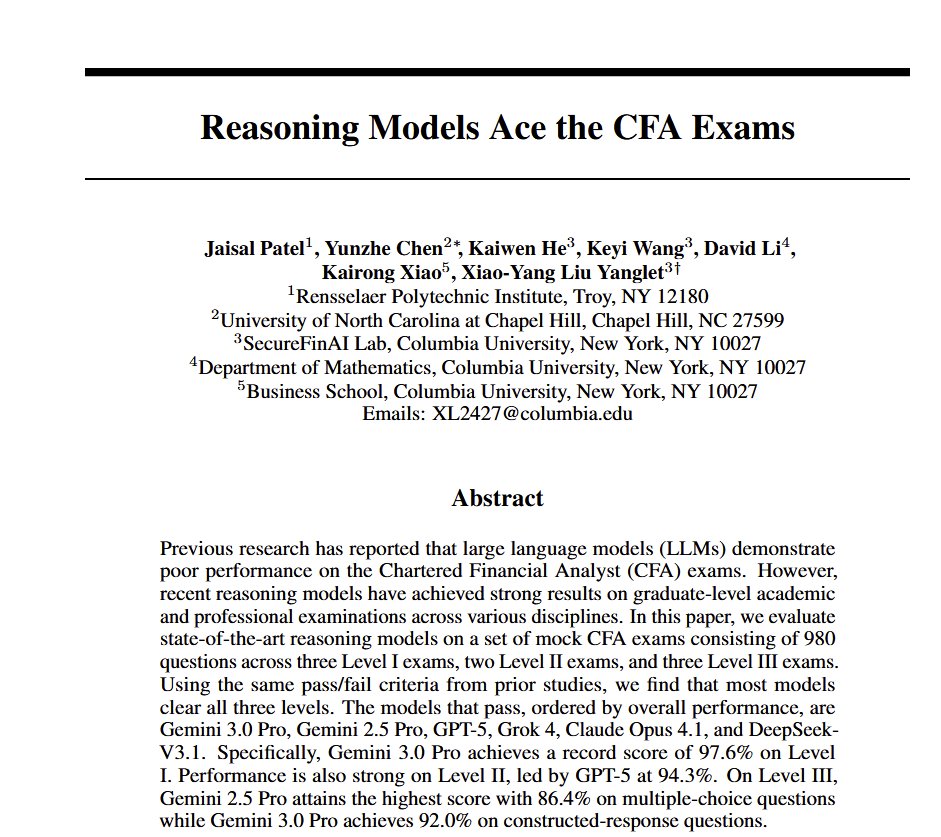

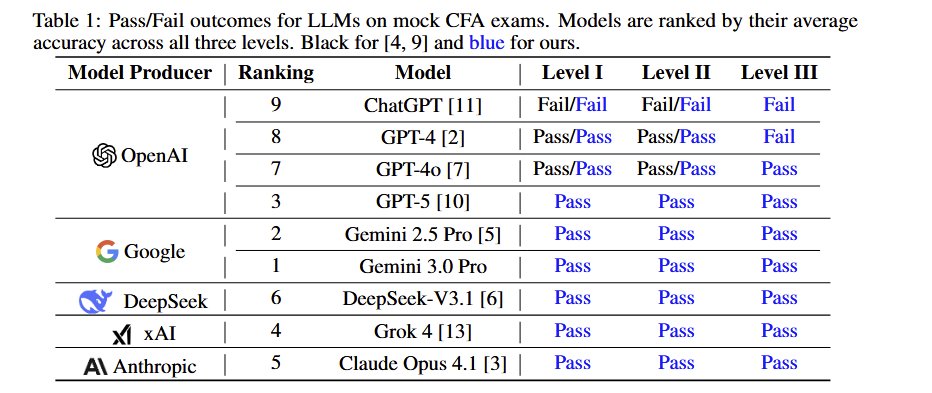

All the frontier AIs now pass all levels of the very challenging Chartered Financial Analyst (CFA) exam. The paper used new, paywalled mock exams to reduce the risk of leakage, but relied on AI grading for the essays. Interestingly, prompting strategy doesn't matter for most question types https://t.co/k5PsKGObi7

Apple just released Sharp: Sharp Monocular View Synthesis in Less Than a Second https://t.co/bXoFtIPmWs

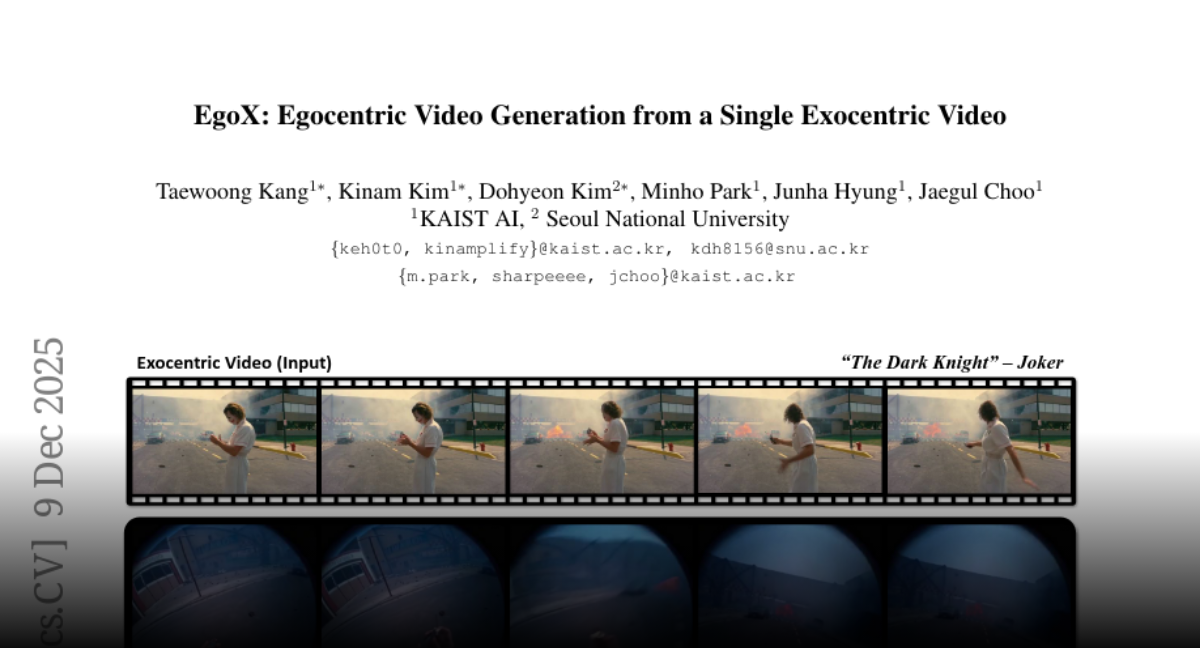

EgoX: Egocentric Video Generation from a Single Exocentric Video https://t.co/t2RWPiqSPR

discuss: https://t.co/XnYeSzpn2Y

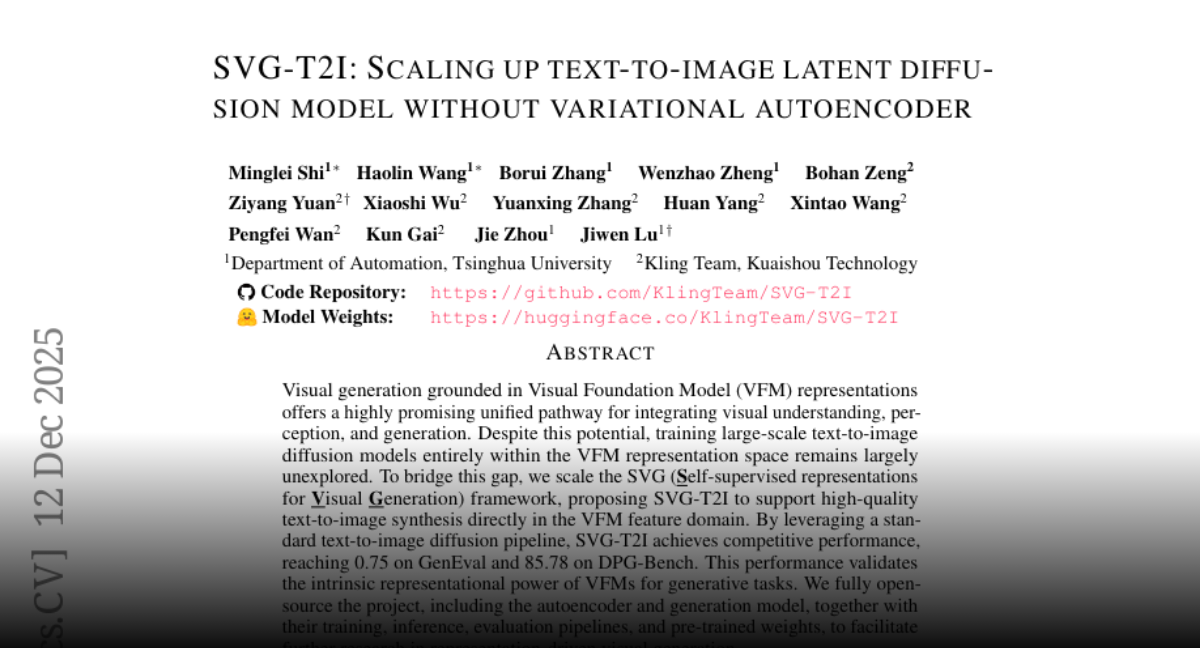

SVG-T2I: Scaling Up Text-to-Image Latent Diffusion Model Without Variational Autoencoder https://t.co/cN3g4qn6Js

discuss: https://t.co/NmUO0sare6

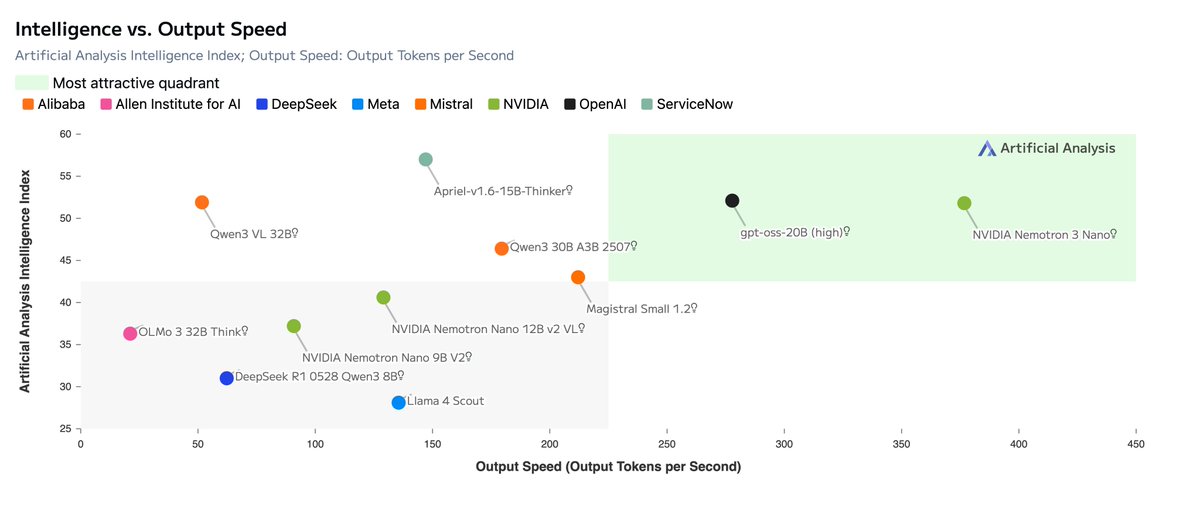

Today, @NVIDIA is launching the open Nemotron 3 model family, starting with Nano (30B-3A), which pushes the frontier of accuracy and inference efficiency with a novel hybrid SSM Mixture of Experts architecture. Super and Ultra are coming in the next few months. https://t.co/v7MKIy7Oe4

A new day, a new Google release: today it's open source! It remains exciting. https://t.co/1WhUibF70r

PSA: https://t.co/n1RdT94ij3 is a good link to bookmark 🤗(and refresh)

It's here. Manus 1.6 is now live. We've upgraded our core agent architecture for more complex work with less supervision. Here's what that means for you 👇 https://t.co/PHtEduT0BB

🎥🙌| WHO IS MORE INTELLIGENT, LATINOS OR EUROPEANS? https://t.co/Vbl3SdZ95I

Simply Red - Holding Back The Years https://t.co/TugNAG7FeU

Warmest wishes on the birthday of brother Dayalal Nanoma (@dayalalnanoma80 @nanoma_dayalal), an active member of the IT cell and of the Twitter team, who raises the voice of the country's exploited and oppressed, the Adivasis and Dalits. Johar 🌷🌷🌷💐🌱🌱🌱 May the blessings of the ancestors remain with you https://t.co/GYJs1gciNW

“Pax Silica” is now a thing. It’s Trump admin’s attempt to establish a group of critical partners to maintain and expand global supply chains and develop AI. In the Indo-Pacific, here’s the list: —Australia —Japan —Singapore —South Korea —Taiwan (guest) https://t.co/cjQeromtlV

NEW Research from Meta Superintelligence Labs and collaborators.

The default approach to improving LLM reasoning today remains extending chain-of-thought sequences. Longer reasoning traces aren't always better. Longer traces conflate reasoning depth with sequence length and inherit long-context failure modes.

This new research introduces Parallel-Distill-Refine (PDR), a framework that treats LLMs as improvement operators rather than single-pass reasoners. Instead of one long reasoning chain, PDR operates in phases:

- Generate diverse drafts in parallel.
- Distill them into a bounded textual workspace.
- Refine conditioned on this workspace.
- Repeat.

Context length becomes controllable via degree of parallelism, no longer conflated with total tokens generated. The model accumulates wisdom across rounds through compact summaries rather than replaying full histories.

On AIME 2024, PDR achieves 93.3% accuracy compared to 79.4% for standard long chain-of-thought at matched latency budgets. For o3-mini at 49k effective tokens, accuracy improves from 76.9% (Long CoT) to 86.7% (PDR), a 9.8 percentage point gain. PDR also achieves the same accuracy as sequential refinement with 2.57x smaller sequential budget by converting parallel compute into accuracy without lengthening per-call context.

The researchers also trained an 8B model with operator-consistent RL to make training match the PDR inference interface. Mixing standard and operator RL yields an additional 5% improvement on both AIME benchmarks.

Bounded memory iteration can substitute for long reasoning traces while holding latency fixed. Strategic parallelism and distillation are shown to beat brute-force sequence extension.

Paper: https://t.co/EviERpmTu7

Learn to build effective AI Agents in our academy: https://t.co/zQXQt0PMbG
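The Parallel-Distill-Refine phases listed in the post above can be sketched as a small loop. This is only an illustration of the control flow, not the paper's implementation: `parallel_distill_refine`, `draft_fn`, `distill_fn`, `toy_draft`, and `toy_distill` are hypothetical names, the workspace bound is measured in characters rather than tokens, and the toy callables stand in for real LLM calls.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List

# Hypothetical interfaces (assumptions, not from the paper):
#   draft_fn(problem, workspace, seed) -> one draft conditioned on the workspace
#   distill_fn(drafts, max_chars)      -> a compact summary of the drafts
DraftFn = Callable[[str, str, int], str]
DistillFn = Callable[[List[str], int], str]


def parallel_distill_refine(
    problem: str,
    draft_fn: DraftFn,
    distill_fn: DistillFn,
    rounds: int = 2,
    parallelism: int = 4,
    workspace_chars: int = 200,
) -> str:
    """Run `rounds` of generate -> distill, then one final refine call."""
    workspace = ""  # bounded textual workspace carried across rounds
    for _ in range(rounds):
        # Generate: `parallelism` drafts, each seeing only problem + workspace.
        with ThreadPoolExecutor(max_workers=parallelism) as pool:
            drafts = list(
                pool.map(lambda seed: draft_fn(problem, workspace, seed),
                         range(parallelism))
            )
        # Distill: compress the drafts and enforce the size bound, so the
        # per-call context does not grow with the total text generated.
        workspace = distill_fn(drafts, workspace_chars)[:workspace_chars]
    # Refine: a final answer conditioned on the distilled workspace.
    return draft_fn(problem, workspace, 0)


def toy_draft(problem: str, workspace: str, seed: int) -> str:
    """Stand-in for an LLM call; reports how much workspace it saw."""
    return f"{problem}#{seed} ws={len(workspace)}"


def toy_distill(drafts: List[str], max_chars: int) -> str:
    """Stand-in for a summarizer; joins the drafts and truncates."""
    return " | ".join(drafts)[:max_chars]
```

In this sketch, raising `parallelism` increases the amount of text produced per round, while the context each call sees stays capped by `workspace_chars`, which is the separation between context length and total tokens that the post describes.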

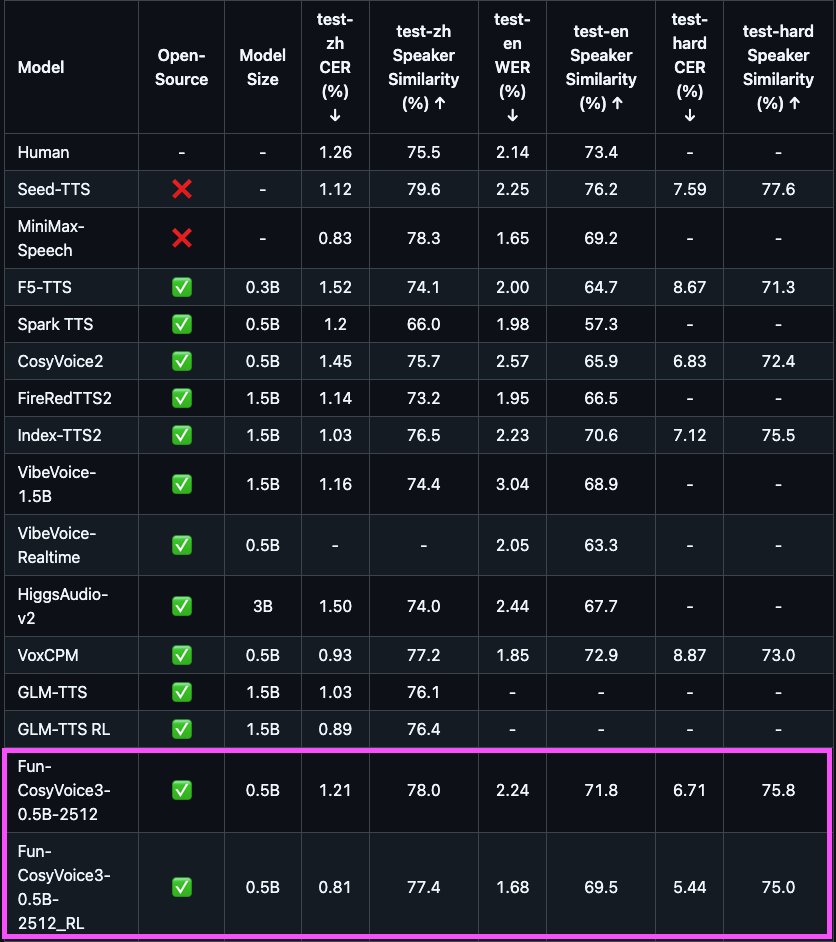

Alibaba @TONGYI_SpeechAI just released a new TTS 🔥 ✨ 0.5B - Apache2.0 ✨ 9 languages/18+ Chinese dialects, with cross lingual zero-shot voice cloning ✨ Low latency: Bi-streaming (text-in / audio-out) with ~150ms latency ✨ Leading performance in content accuracy, speaker similarity, and prosody

Open models year in review

What a year! We're back with an updated open model builder tier list, our top models of the year, and our predictions for 2026.

First, the winning models:

1. DeepSeek R1 (@deepseek_ai): Transformed the AI world
2. Qwen 3 Family (@AlibabaGroup): The new default open models
3. Kimi K2 Family (@Kimi_Moonshot): Models that convinced the world that DeepSeek wasn't special and China would produce numerous leading models.

Runner up models: MiniMax M2 (@MiniMax__AI), GLM 4.5 (@Zai_org), GPT-OSS (@OpenAI), Gemma 3 (@GoogleAI), Olmo 3 (@allen_ai)

Honorable Mentions: Nvidia's (@nvidia) Parakeet speech-to-text model & Nemotron 2 LLM, Moondream 3 VLM (@moondreamai), Granite 4 LLMs (@IBMResearch), and HuggingFace's (@huggingface) SmolLM3.

Updated Tier list:

- Frontier open labs: DeepSeek (@deepseek_ai), Qwen (@AlibabaGroup), and Kimi Moonshot (@Kimi_Moonshot)
- Close behind: https://t.co/d5wmnd2o3C (@Zai_org) & MiniMax AI (@MiniMax__AI) (notably none from the U.S. here and up)
- Noteworthy (a mix of US & China): StepFun AI (@StepFun_ai), Ant Group's Inclusion AI (@AntGroup / @TheInclusionAI), Meituan (@Meituan_LongCat), Tencent (@TencentHunyuan), IBM (@IBMResearch), Nvidia (@nvidia), Google (@GoogleAI), & Mistral (@MistralAI)

Then a bunch more below that, which we detail.

Predictions for 2026:

1. Scaling will continue with open models.
2. No substantive changes in the open model safety narrative.
3. Participation will continue to grow.
4. Ongoing general trends will continue w/ MoEs, hybrid attention, dense for fine-tuning.
5. The open and closed frontier gap will stay roughly the same on any public benchmarks.
6. No Llama-branded open model releases from Meta in 2026.

Read the full post on @interconnectsai -- link below.

Bringing you today's AI news, nice and easy <Episode 18: Reachy Mini> #ゆるふわAI研究所 https://t.co/7F4l3Gtza8

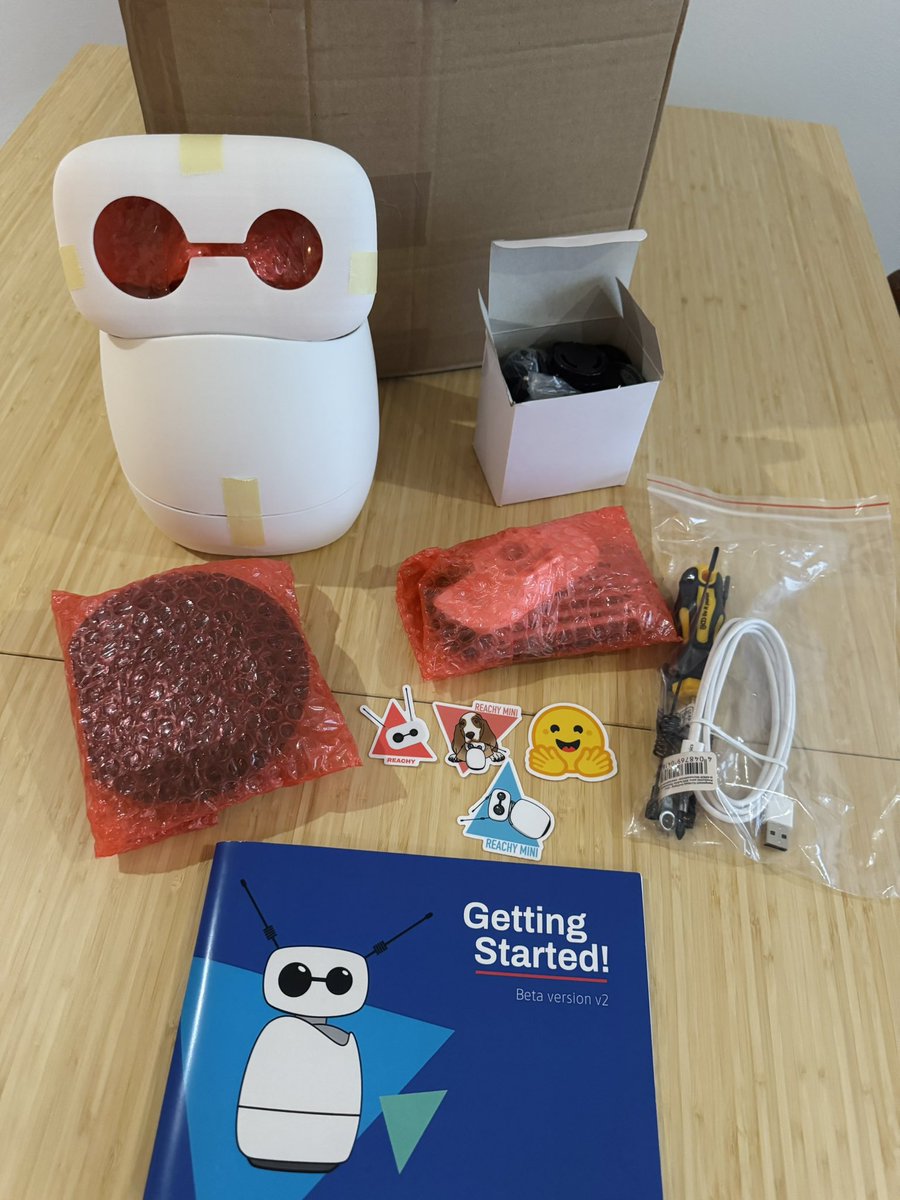

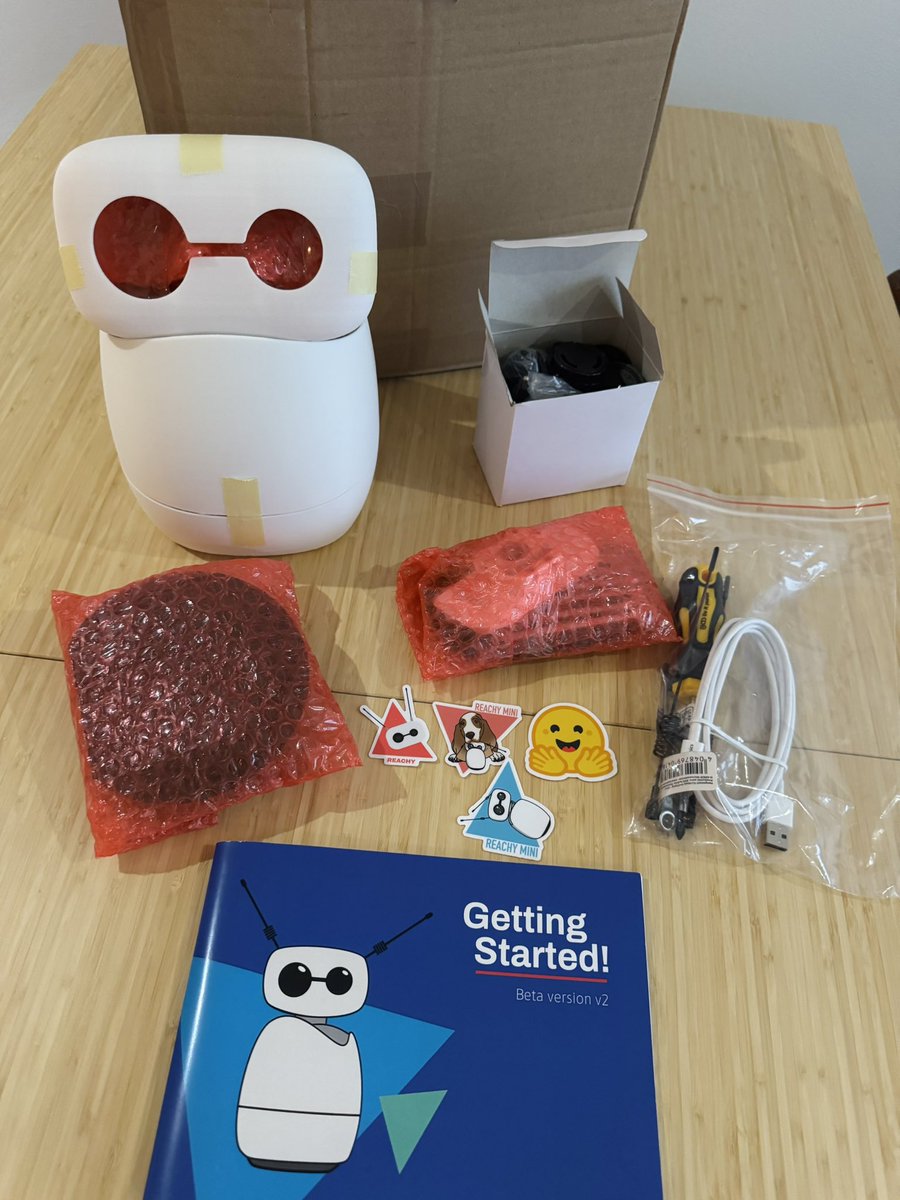

my reachy mini is here, can’t wait to play around with it! https://t.co/wyEp2lXk4X

2/ In GitHub Copilot, GPT-5.2 is a fantastic multi-purpose model, especially when it comes to long-context and reasoning when coding or investigating a complex code base. Also available today. https://t.co/VMnJP1TXdp

Imagine: Two animals run the exact same distance. One chooses to. One is forced. The voluntary runner gets healthier—better heart rate, blood pressure, glucose. The forced runner? Gets sicker. Andrew Huberman says the same rule destroys or upgrades your stress, your workouts, even your life. And the craziest proof: People who watched hours of Boston bombing news coverage suffered MORE acute stress than those who were actually there. Your mind doesn’t know the difference between experiencing and relentlessly consuming. So… what “have-to” in your life are you ready to reframe as a choice? This 1:58 clip just rewired my brain.

NEW Research from Apple.

When you think about it, RAG systems are fundamentally broken. Retrieval and generation are optimized separately: retrieval selects documents based on surface-level similarity, while generators produce answers without feedback about what information is actually needed.

There is an architectural mismatch. Dense retrievers rank documents in embedding space while generators consume raw text. This creates inconsistent representation spaces that prevent end-to-end optimization, redundant text processing that causes context overflow, and duplicated encoding for both retrieval and generation.

This new research introduces CLaRa, a unified framework that performs retrieval and generation over shared continuous document representations. They encode documents once into compact memory-token representations that serve both purposes. Instead of maintaining separate embeddings and raw text, documents are compressed into dense vectors that both the retriever and generator operate on directly.

This enables something previously impossible: gradients flowing from the generator back to the retriever through a differentiable top-k selector using Straight-Through estimation. The retriever learns which documents truly enhance answer generation rather than relying on surface similarity.

To make compression work, they introduce SCP, a pretraining framework that synthesizes QA pairs and paraphrases to teach the compressor which information is essential. Simple QA captures atomic facts, complex QA promotes relational reasoning, and paraphrases preserve semantics while altering surface form.

Results: At 16x compression, CLaRa-Mistral-7B surpasses the text-based DRO-Mistral-7B on NQ (51.41 vs 51.01 F1) and 2Wiki (47.18 vs 43.65 F1) while processing far less context. At 4x compression, it exceeds uncompressed text baselines by 2.36% average on Mistral-7B.

Most notably, CLaRa trained with only weak supervision from next-token prediction outperforms fully supervised retrievers with ground-truth relevance labels. On HotpotQA, it achieves 96.21% Recall@5, exceeding BGE-Reranker (85.93%) by over 10 points despite using no annotated relevance data.

Well-trained soft compression can retain essential reasoning information while substantially reducing input length. The compressed representations filter out irrelevant content and focus the generator on reasoning-relevant context, leading to better generalization than raw text inputs.

Great read for AI devs. (bookmark it)

Paper: https://t.co/JtMukGVNwV

Learn to build with RAG and AI Agents in my academy: https://t.co/JBU5beIoD0
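The "differentiable top-k selector using Straight-Through estimation" mentioned in the post above can be illustrated with a small standard-library sketch. It makes assumptions the post does not state: the forward pass uses a hard top-k mask, and the backward pass borrows the gradient of a temperature softmax over the retrieval scores as the surrogate. The function names are hypothetical, and the paper's actual relaxation may differ.

```python
import math
from typing import List, Tuple


def softmax(scores: List[float], temperature: float = 1.0) -> List[float]:
    """Numerically stable softmax over retrieval scores."""
    m = max(scores)
    exps = [math.exp((s - m) / temperature) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def topk_mask(scores: List[float], k: int) -> List[float]:
    """Hard selection: 1.0 for the k highest-scoring documents, else 0.0."""
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    chosen = set(order[:k])
    return [1.0 if i in chosen else 0.0 for i in range(len(scores))]


def straight_through_topk(
    scores: List[float],
    k: int,
    upstream_grad: List[float],
    temperature: float = 1.0,
) -> Tuple[List[float], List[float]]:
    """Forward: hard top-k mask. Backward: gradient of the softmax surrogate.

    `upstream_grad[i]` is dLoss/dSelection[i] coming from the generator.
    Returns (mask, dLoss/dScores).
    """
    hard = topk_mask(scores, k)          # what the generator actually sees
    soft = softmax(scores, temperature)  # assumed surrogate for the backward pass
    # Vector-Jacobian product of softmax:
    #   dL/ds_j = soft_j * (g_j - sum_i g_i * soft_i) / temperature
    dot = sum(g * p for g, p in zip(upstream_grad, soft))
    grad = [p * (g - dot) / temperature for g, p in zip(upstream_grad, soft)]
    return hard, grad
```

The point of the estimator is visible in the gradient: documents that were not selected still receive a training signal, so the generator's loss can reshape the retriever's scores even though the forward selection is discrete.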

NVIDIA introduces the Nemotron 3 family of models! Super, Ultra will be released later, Nano released today
* Mixture-of-Experts hybrid Mamba–Transformer architecture

Super and Ultra models:
* are trained with NVFP4
* LatentMoE (project token embedding to smaller latent dimension for experts to process)
* multi-token prediction

full pretraining+post-training data and code will be made open-source

🧠⚙️Building the world’s first autonomous science operating system requires extraordinary scale, speed & security. Find out how @LilaSciences are partnering with #AWS to support their mission of accelerating scientific discovery. 👉 https://t.co/HBCCvY0wLu https://t.co/waU86oEeVi

“iRobot Corp., the company that revolutionized robot vacuum cleaners in the early 2000s with its Roomba model, filed for bankruptcy and proposed handing over control to its main Chinese supplier.” 😥 https://t.co/sSifua7Tjn

INDIGENOUS PEOPLES FOR BLACK LIVES #JusticeForAhmadArbery #JusticeForBigFloyd #JusticeforBreonnaTaylor #JusticeForTonyMcDade https://t.co/dFNZ7JfLH3