Your curated collection of saved posts and media

The Microsoft split in 1983 that changed everything. https://t.co/Mqd4TjBCw7

Professor Jeffrey Sachs makes the case that Trump is not only insane, but also a dangerously dumb human being. 😳👇 https://t.co/DYUyxA4Xq3

🚨 In 2 weeks, a final decision on amendments to the EU AI Act and the GDPR will be made. What is at stake is nothing other than the future of Europe.

Many don't know, but the stream of events leading to this moment began much earlier, with the publication of the Draghi report on European competitiveness in September 2024. In his long report, Mario Draghi diagnosed various areas in which European competitiveness was lagging behind and suggested that one of the reasons was overregulation and the excessive number of laws governing the digital space. Laws such as the GDPR and the AI Act were to blame.

Before I continue, here is something many overlook: the Draghi report was finalized in September 2024, while the AI Act was officially enacted one month earlier. The AI Act had barely been enacted, and it was already considered 'wrong,' excessive, and to blame for Europe's less-than-ideal (to put it lightly) position in the AI race.

From that moment on, the European discourse on the protection of fundamental rights was never the same. Its narrative shifted, and after that, the new dogma was that the path to innovation would be to "remove the red tape" and "apply the AI Act in a business-friendly way" (whatever that means from a legal perspective). The AI Action Summit last year made this new narrative loud and clear to the public, as EU officials abandoned fundamental rights-focused statements.

Last year, the narrative shift was legally materialized. The EU published the Digital Omnibus with proposed amendments to some of its most important laws regulating data protection and AI: the GDPR and the AI Act.

Strangely, the main justification for the AI Act's amendments was the designation delays by EU member states and the work delays by EU standardization organizations. If these were the real reasons, wouldn't it be more coherent to pressure them to move faster, hire more people, increase the budget, or help address the bureaucratic obstacles...? Does the EU need to amend some of the AI Act's core obligations because EU bodies are delayed? I didn't buy it. Given the context, it felt more like a broader political shift.

The time has arrived, and in two weeks, EU officials will meet to make a final decision on the Digital Omnibus and the amendments to the GDPR and the AI Act (among other topics). As I wrote in my newsletter, several of the proposed amendments weaken AI regulation in the EU and go against the protection of fundamental rights.

If you are European, if you were hopeful for a Brussels Effect in AI, or if you are interested in the protection of fundamental rights, I invite you to read my full article below.

👉 To learn more:

- Read my article about the Digital Omnibus first draft and join my newsletter's 93,500+ subscribers (link below).
- Join the 29th cohort of my Advanced AI Governance Training. Among the topics I cover in depth are the EU AI Act, the Digital Omnibus, and the European AI strategy (link below).

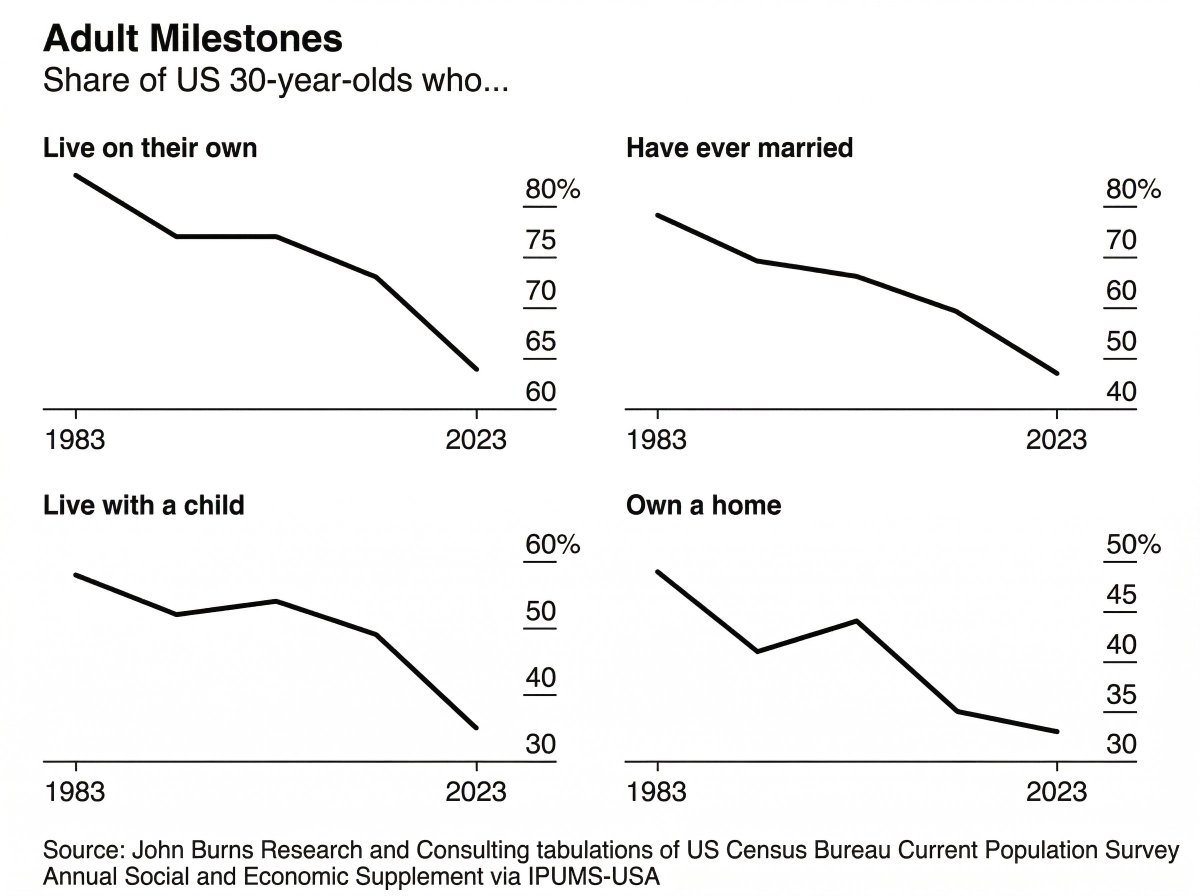

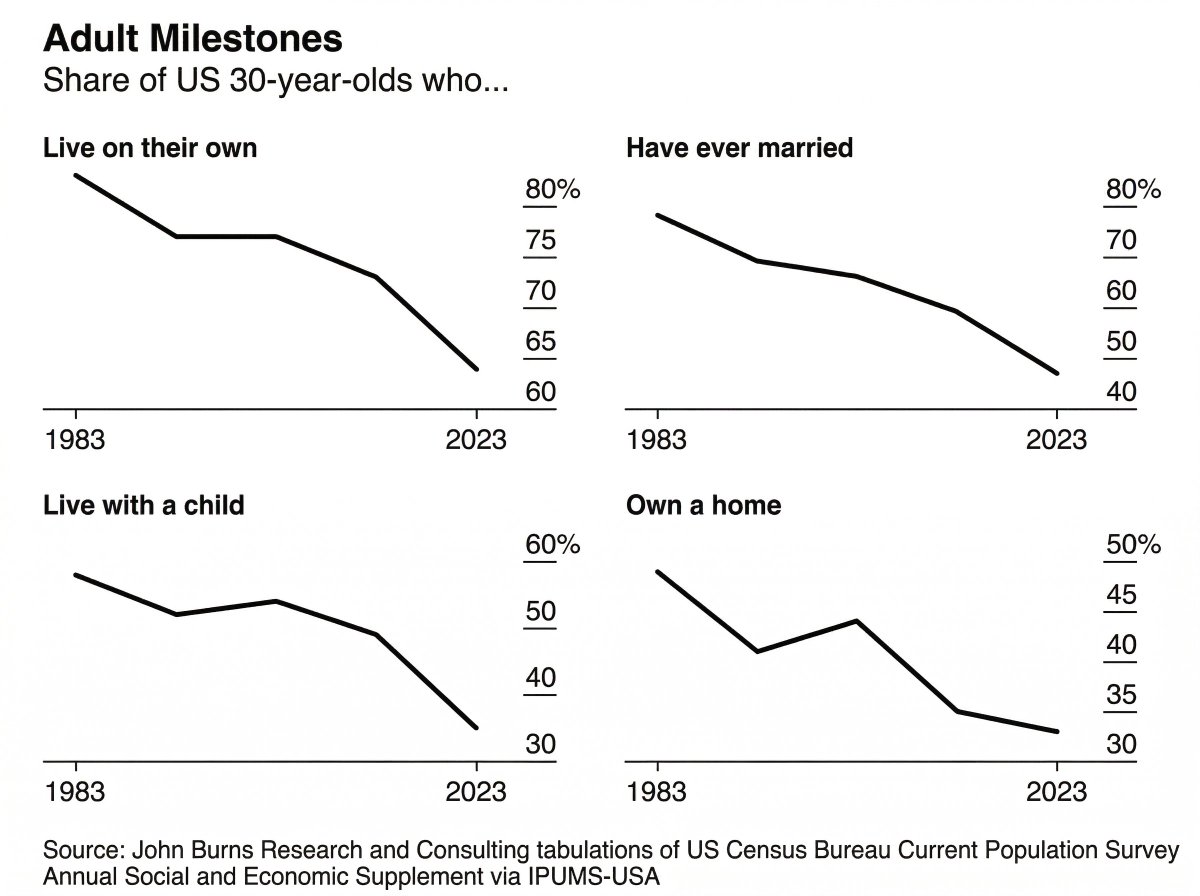

the economic collapse of gen z in four charts https://t.co/FoWNscQFPh

I love Twitter's new custom feed. I just wish it wouldn't reset all the time to the default For You feed whenever I re-open the app. https://t.co/1U1zJubzK8

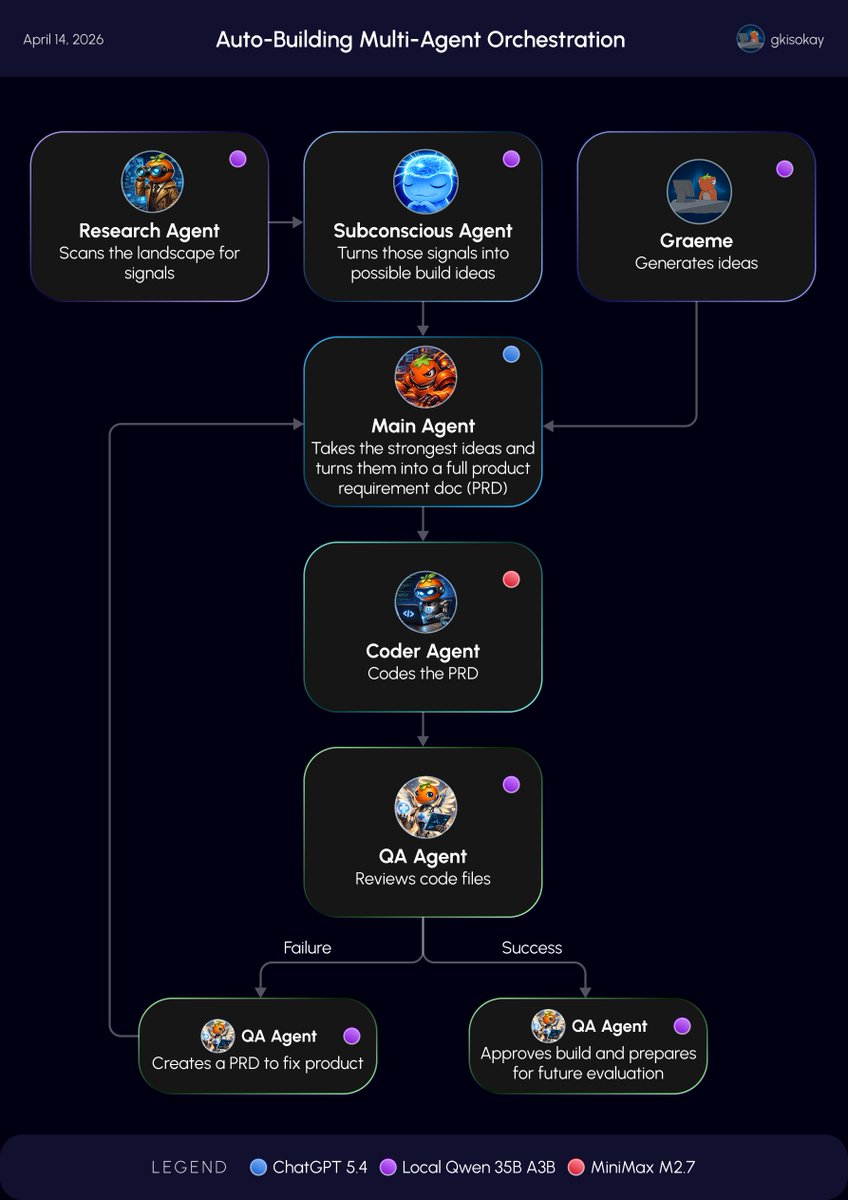

Day 7 of Building AGI for my Hermes Agent: I got my GPT-5.4 calls from 270x to 0x per day

Today I found one of the dumbest parts of my setup, where routine agent work was quietly burning GPT-5.4 token credits. Scanning, summaries, low-risk review, background thinking. These should not be hitting GPT-5.4.

So I rebuilt the stack. Using @leopardracer's Mac Mini local model setup, I got Local Qwen 35B A3B running for the always-on cognitive work as a test.

Now the system works like this:

- Local Qwen 35B A3B for scanning, summaries, low-risk review, and constant background thinking
- MiniMax M2.7 for approved coding work
- GPT-5.4 for final planning and high-judgment approval
- No model for preflight checks when queues are empty

The result:

- GPT-5.4 cron calls per day went from 270 to 0.
- Now GPT-5.4 only fires when there is real planning work or a deliberate escalation.

Frontier intelligence is too expensive to waste on routine cognition, and my system got dramatically cheaper overnight, and much cleaner too. A lot of people are overspending because they are using the smartest model for the wrong jobs. Not every thought deserves a frontier token.

Follow @gkisokay to see what happens next.
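
The tiering described in this post can be sketched as a small routing function. This is only an illustration under stated assumptions: the task names, the model label strings, and the `pick_model` helper are hypothetical, since the post names the models but does not show how the author's router is written.

```python
from enum import Enum
from typing import Optional


class Task(Enum):
    SCAN = "scan"
    SUMMARY = "summary"
    LOW_RISK_REVIEW = "low_risk_review"
    BACKGROUND = "background"
    CODING = "coding"
    PLANNING = "planning"
    APPROVAL = "approval"


# Hypothetical labels: the post names these models, not their API identifiers.
LOCAL = "local-qwen-35b-a3b"
CODER = "minimax-m2.7"
FRONTIER = "gpt-5.4"

# Routine cognition stays local, approved coding goes to the mid tier,
# and only planning and high-judgment approval reach the frontier model.
ROUTES = {
    Task.SCAN: LOCAL,
    Task.SUMMARY: LOCAL,
    Task.LOW_RISK_REVIEW: LOCAL,
    Task.BACKGROUND: LOCAL,
    Task.CODING: CODER,
    Task.PLANNING: FRONTIER,
    Task.APPROVAL: FRONTIER,
}


def pick_model(task: Task, queue_length: int, escalate: bool = False) -> Optional[str]:
    """Return the model tier for a task, or None when no model call is needed."""
    if queue_length == 0:
        return None  # preflight check: empty queue, so no model is called at all
    if escalate:
        return FRONTIER  # deliberate escalation overrides the default route
    return ROUTES[task]
```

With a table like this, a scheduled job that finds an empty queue costs nothing, and a frontier call happens only for planning, approval, or an explicit escalation.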

Day 6 of Building AGI for my Hermes Agent: The Crew Arrives 🧠 Today, the system stopped being a single experimental mind and became a coordinated crew. Up until now, the subconscious agent could freely think, explore, and generate new build ideas. So today I built the first mu

A video on how to install hermes agent because all of my friends and family keep asking me for a tutorial https://t.co/pYvInGx67N

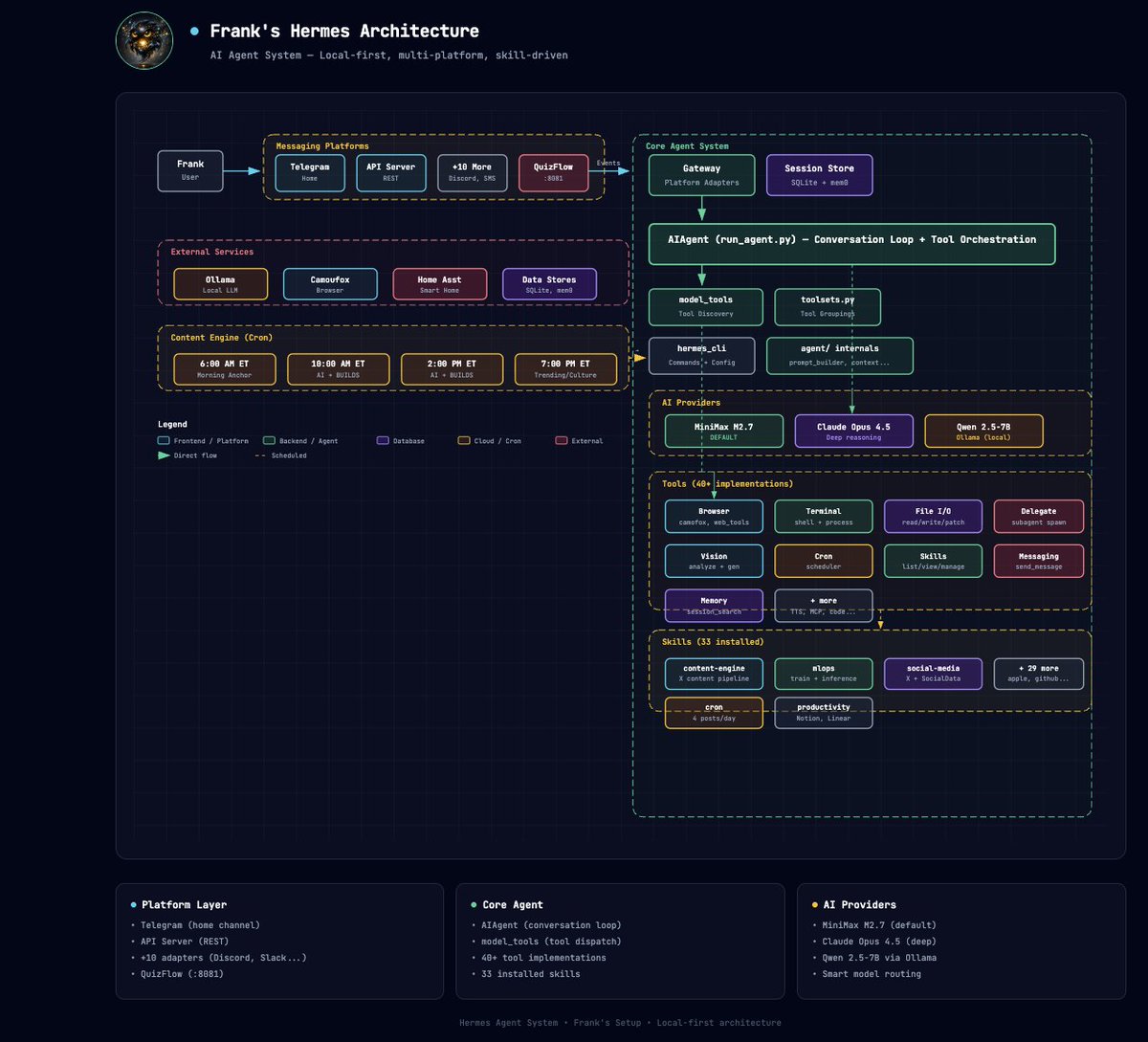

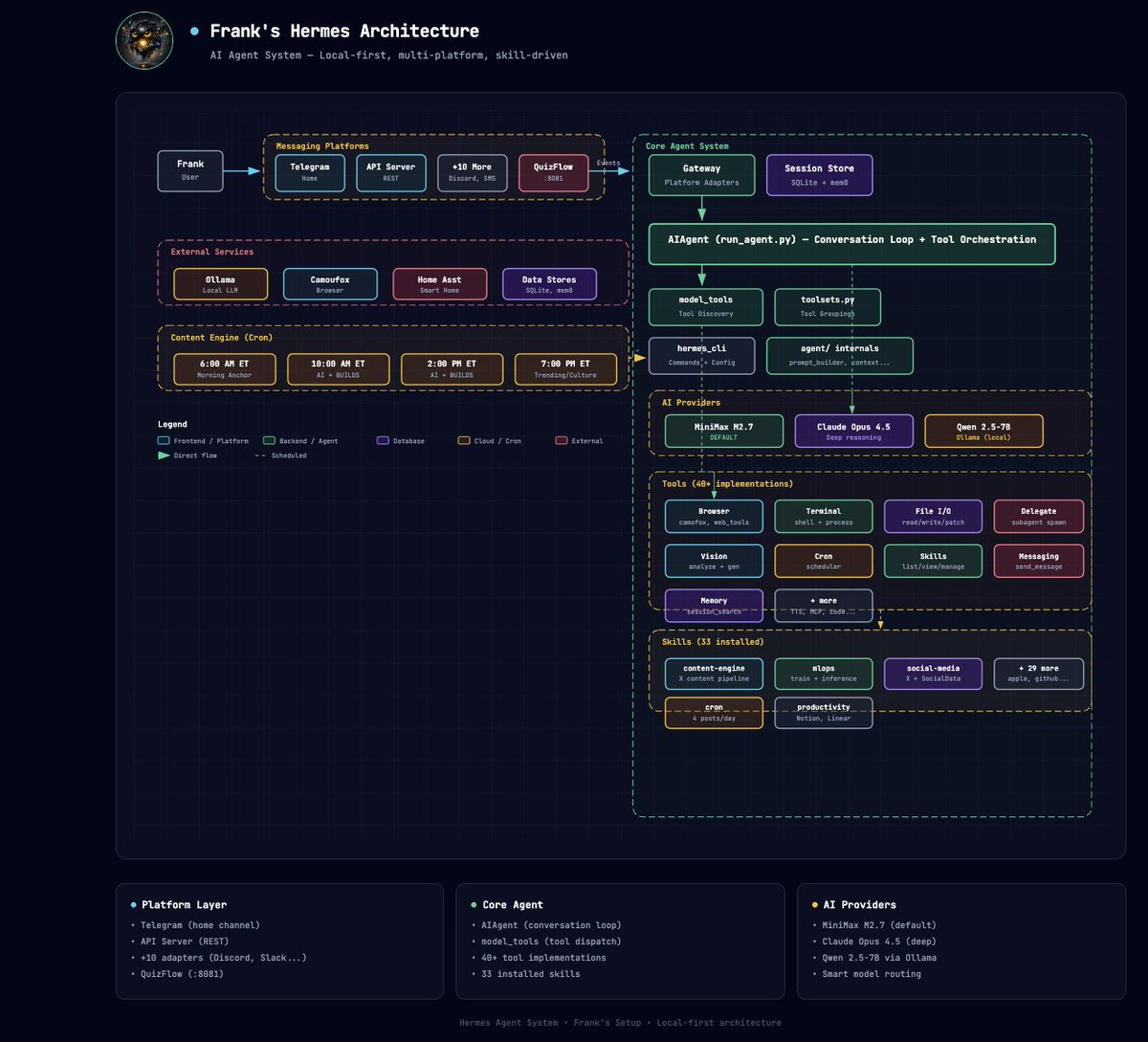

This skill is actually cool af. Say Hi to Frank https://t.co/wcVUtPuWUC

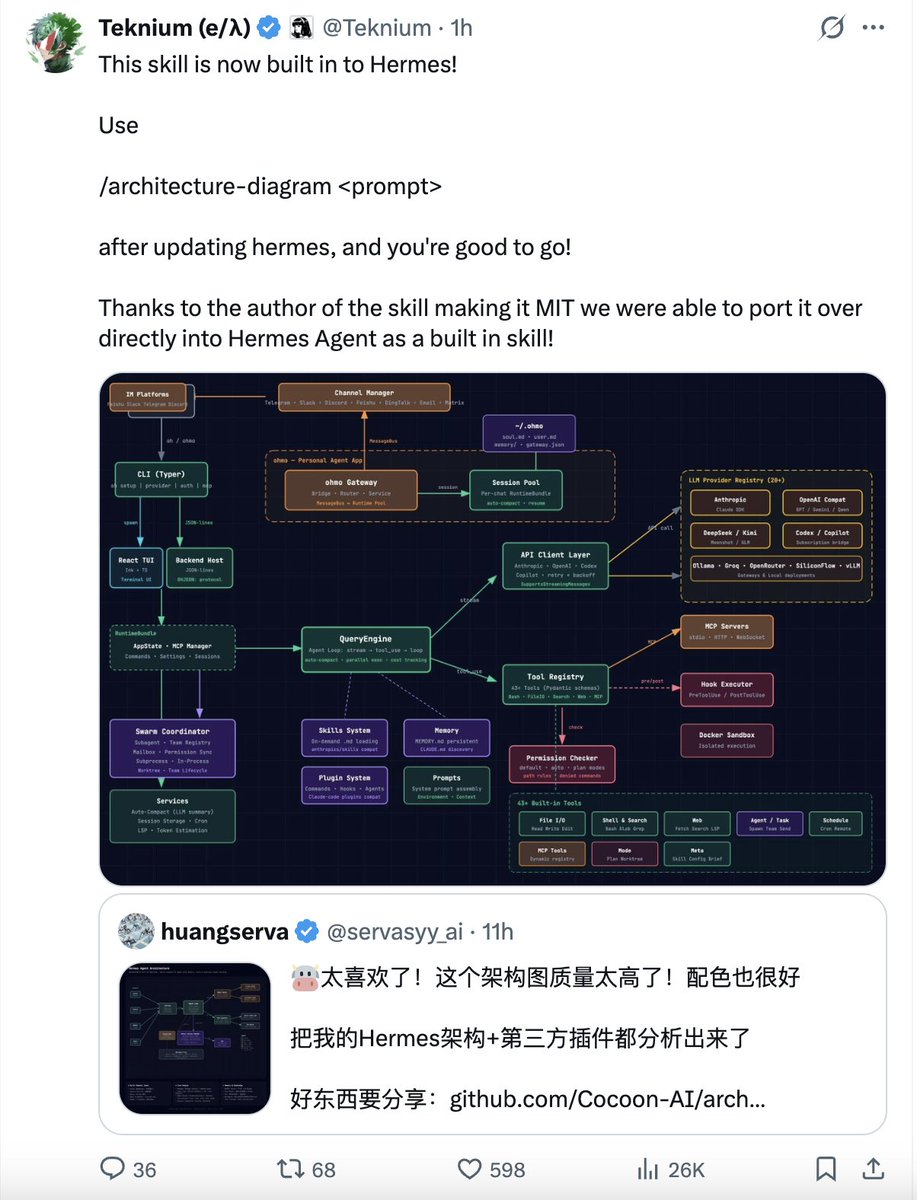

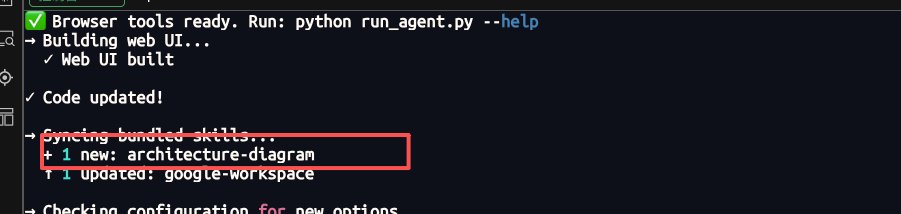

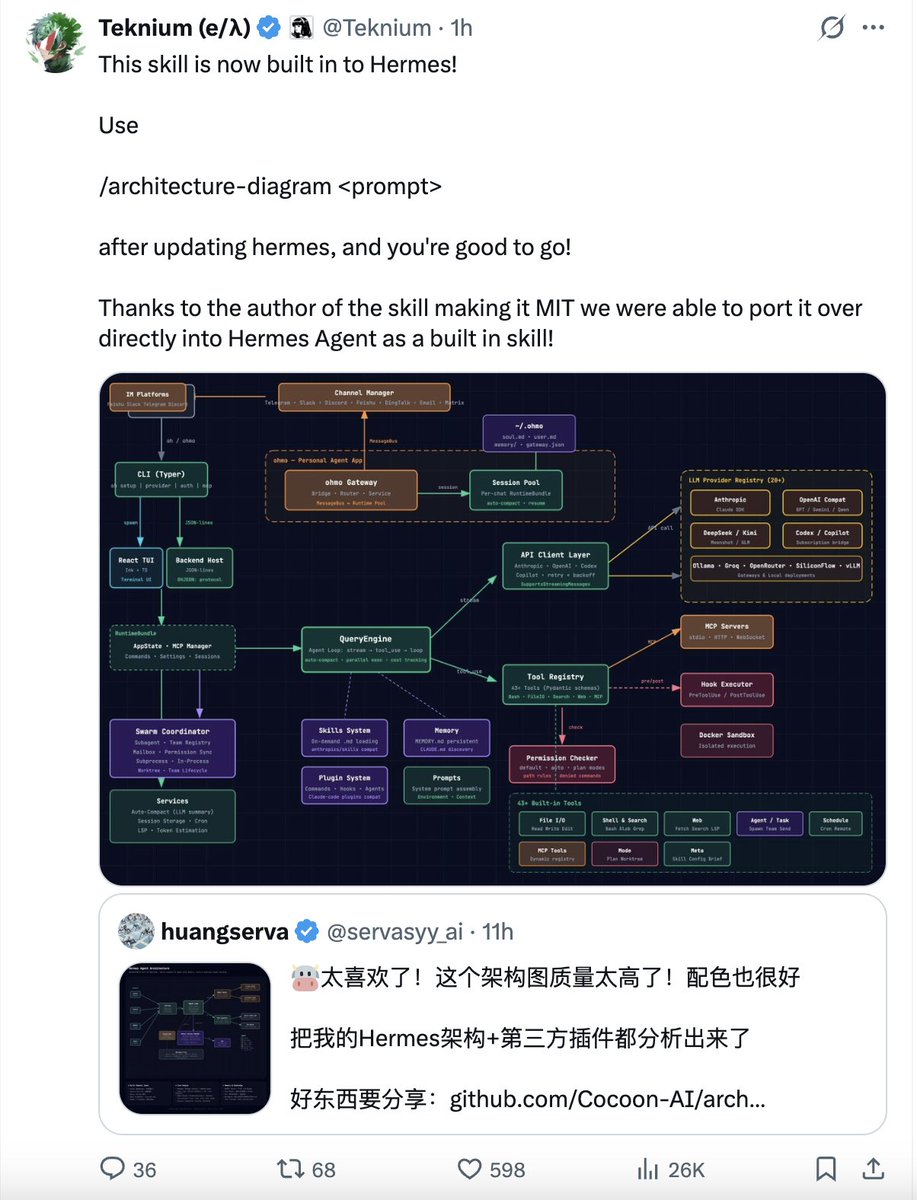

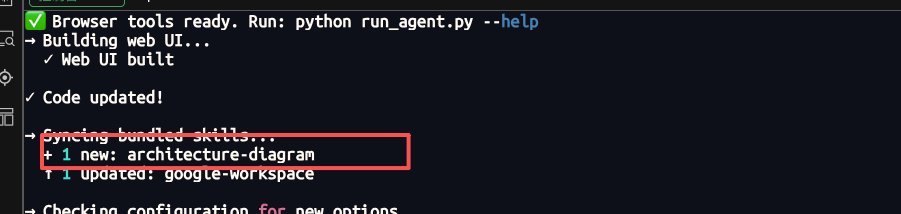

This skill is now built into Hermes! Use /architecture-diagram <prompt> after updating Hermes, and you're good to go! Because the skill's author released it under the MIT license, we were able to port it directly into Hermes Agent as a built-in skill! https://t.co/CVfMGpPQp6

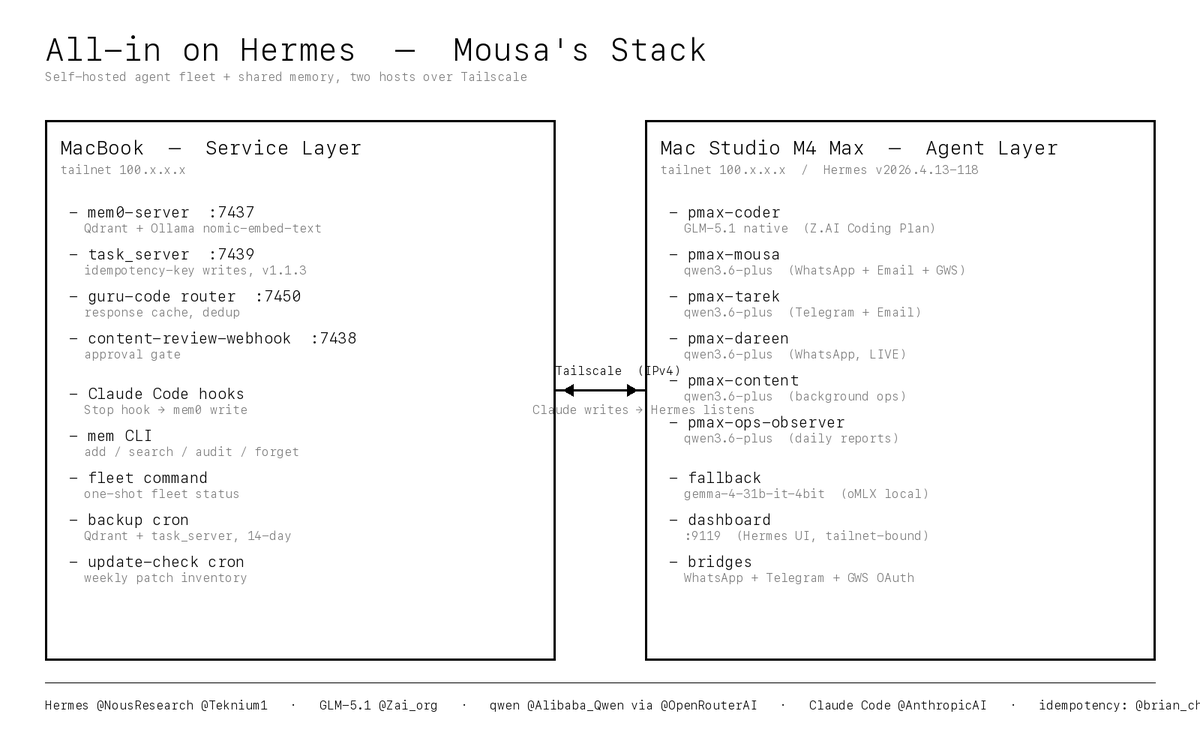

I'm going all in on Hermes (@NousResearch, @Teknium1) as my entire agent and coding stack. Six profiles. One shared self-hosted memory store. Zero hosted-coder dependencies.

The fleet:

- pmax-mousa: my own WhatsApp + Email + Google Workspace agent
- pmax-tarek: my co-founder's Telegram + Email agent
- pmax-dareen: our content creator's WhatsApp assistant (LIVE on real client chats)
- pmax-content: background content ops
- pmax-ops-observer: daily health reports
- pmax-coder: my primary coding CLI, no hosted coder, no gateway

The model dial (this is the part I'm most excited about): pmax-coder runs on GLM-5.1 native via the https://t.co/s6oYqmfv05 Coding Plan (@Zai_org, quarterly $45). Direct to https://t.co/HUrIPiINWn, no middleman, no OpenRouter tax. GLM-5.1 published the exact thing I needed: a frontier coder at a flat price I can plan around.

I've spent the last three days heads-down just getting the system running. Not tweaking it. Not optimizing it. Getting it to stand up end-to-end without a single load-bearing piece silently falling over. Six profiles, one memory store, two hosts, a dozen services, launchd, Tailscale, native provider pinning, patch re-application, ghost-process recovery, bridge port collisions, FTPS quirks, CI cycles, Qdrant lock contention, Happy Eyeballs hangs: every one of them a real bug I hit and fixed before I could move on. The three days are the story.

The five gateway profiles (pmax-mousa, pmax-tarek, pmax-dareen, pmax-content, pmax-ops-observer) all run on qwen/qwen3.6-plus via OpenRouter native Alibaba routing (@Alibaba_Qwen, @OpenRouterAI). I pinned native-only with a strict provider.only patch so nothing silently falls through to a more expensive lane.

Offline fallback everywhere is gemma-4-31b-it-4bit served by oMLX on the Mac Studio. If OpenRouter or https://t.co/s6oYqmfv05 goes sideways mid-conversation, every profile transparently fails over to local MLX inference and the user never notices.
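
The failover behavior described above (hosted provider first, local inference as the fallback) can be sketched as an ordered chain of callables. This is a minimal sketch under assumptions: `ProviderError`, `complete_with_failover`, and the two stub providers are hypothetical names for illustration, not Hermes, OpenRouter, or MLX APIs.

```python
from typing import Callable, List, Sequence, Tuple


class ProviderError(Exception):
    """Raised by a provider callable when it cannot serve the request."""


def complete_with_failover(
    prompt: str,
    providers: Sequence[Tuple[str, Callable[[str], str]]],
) -> Tuple[str, str]:
    """Try each provider in order; return (provider_name, reply) from the first success."""
    errors: List[str] = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except ProviderError as exc:
            errors.append(f"{name}: {exc}")  # remember the failure, then try the next lane
    raise ProviderError("all providers failed: " + "; ".join(errors))


# Stub providers standing in for a hosted endpoint and a local model server.
def flaky_hosted(prompt: str) -> str:
    raise ProviderError("upstream timeout")


def local_fallback(prompt: str) -> str:
    return f"local reply to: {prompt}"
```

Calling `complete_with_failover("hi", [("hosted", flaky_hosted), ("local", local_fallback)])` returns the local reply, and the caller sees a normal response rather than the hosted error.
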
Swapping models is one YAML line.

The real unlock: unified self-hosted memory. Every Hermes profile reads and writes one mem0 store on my MacBook (Qdrant + Ollama nomic-embed-text embeddings, zero cloud). Claude Code (@claudeai, @AnthropicAI) is wired to auto-broadcast every session turn into the same store via a Stop hook. The direction of flow is Claude writes, Hermes listens. Anything I decide in a Claude Code session is visible to the WhatsApp agents on my very next message. Nothing gets re-explained. Ever.

Two-host architecture over Tailscale:

- MacBook (100.x.x.x) is the service layer. It runs mem0-server on 7437, task_server v1.1.3 on 7439, the guru-code router cache on 7450, the content-review webhook on 7438, all the Claude Code hooks, daily backup cron, and the mem CLI.
- Mac Studio M4 Max (100.x.x.x) is the agent layer. It runs Hermes v2026.4.13-118, all six profile gateways under launchd, the Hermes dashboard on 9119, the WhatsApp and Telegram bridges, and the Google Workspace OAuth session.

Both hosts are pinned to IPv4 over the tailnet because macOS Happy Eyeballs was randomly hanging on IPv6 tailnet paths; one flag on every curl and ssh killed a whole class of flakiness.

Huge credit to @brian_cheong. His push on idempotency-on-retries directly shaped task_server v1.1.3 (Idempotency-Key header on every write path), https://t.co/mrslfLodFL deterministic run_id dedup, and the guru-code router's response cache. Without that, retried agent actions would silently double-fire: a tool call would hit twice, a message would get sent twice, a file would get written twice. Whole classes of bugs I'll never write now. (btw I knew nothing abt idempotency bar, thanks dude)

What else ships with the stack: Daily Qdrant and task_server backups with a 14-day rotation, plus a weekly full Hermes zip. Ghost-process immunity on launchd restarts (a startup_guard script kills any zombie https://t.co/eDFb9bsTOf holding the Qdrant lock before mem0-server boots).
A native-provider pinning patch that wires provider_routing.allow_fallbacks straight through to OpenRouter. A secret redactor that runs on every Claude Code turn end so OpenRouter keys, Anthropic keys, GitHub PATs, and Bearer tokens can never leak into transcripts. A mem audit command that scans the memory store itself for leaked patterns. And a `fleet` one-shot status command I can run from any terminal to get a color-coded snapshot of every service on both hosts plus GitHub Actions status plus the Hermes patch inventory.

Over these three days I also pulled 98 commits of upstream Hermes in two passes (70 + 28) without losing a single custom patch. An update-check cron inventories every local patch weekly so nothing regresses silently. Upgrades are safe. That's the invariant I wanted and I finally have it.

None of this is a custom AI platform. It's Hermes doing what Hermes does, plus a few surgical patches I kept small enough to re-apply on every upstream pull. The whole philosophy is minimal lock-in: use the upstream as much as possible, patch only the load-bearing seams, never fork.

The point isn't that Hermes beats every other coder tool today. The point is it's mine. I own the model dial, the memory store, the tools, the hooks, the backup policy, the security posture, the failover behavior. When something breaks I fix it. When I want to upgrade I upgrade. When I want to swap models I swap models. No middleman. No platform. No rug pull risk.

Reports from the field to follow.
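
The idempotency-on-retries idea credited in this post can be illustrated with a key-based dedup wrapper: a retried action carrying the same key returns the stored result instead of running again. This is a sketch, not the author's task_server code; `IdempotentExecutor` and its in-memory dictionary are assumptions made for illustration, and a real service would persist the keys.

```python
from typing import Any, Callable, Dict


class IdempotentExecutor:
    """Run each action at most once per idempotency key (in-memory sketch)."""

    def __init__(self) -> None:
        self._results: Dict[str, Any] = {}

    def run(self, key: str, action: Callable[[], Any]) -> Any:
        # A retry with a key we have already seen returns the cached result,
        # so the side effect (send, write, tool call) does not fire twice.
        if key in self._results:
            return self._results[key]
        result = action()
        self._results[key] = result
        return result


# Example side effect: record each message that is actually "sent".
sent_messages = []


def send_hello() -> str:
    sent_messages.append("hello")
    return "delivered"


executor = IdempotentExecutor()
first = executor.run("msg-001", send_hello)
retry = executor.run("msg-001", send_hello)  # same key: no second send
```

After the retry, `sent_messages` still holds a single entry, which is the double-fire protection the post describes for tool calls, messages, and file writes.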

This is your sign to submit your #GitHubUniverse session idea ✨ Call for sessions is now open through May 1 👇 https://t.co/gRdMaPQ5NM https://t.co/KS7yYUuLx2

@thelumiereguy Should be fixed now thanks for the report! https://t.co/HqOI8uTBhq

That was really fast! I only recommended it last night, and it has already been included in Hermes! Thanks to the Hermes founder! https://t.co/zOl5IralEy

@chipcamel @NousResearch Fixed and added, please `hermes update` to access :) https://t.co/WOurUGPuTG

Document OCR benchmarks are still an open problem.

Existing document OCR benchmarks are either too narrowly focused on a specific type (e.g. FinTabNet, ChartQA) or focused on documents that aren't reflective of real-world tasks (e.g. OmniDocBench, OlmOCR-bench over academic papers).

ParseBench is a step towards solving this problem.

* It tries to comprehensively cover real-world document distributions within the enterprise.
* It contains comprehensive evaluations across 5 different dimensions (tables, charts, content faithfulness, formatting, grounding).
* It tries to use metrics that optimize for agent semantic understanding rather than structural similarity.

We released this yesterday, and there's a TON of content:

1. Whitepaper
2. HF dataset
3. GitHub repo
4. Blog
5. Video

And today, we're excited to feature https://t.co/FYbk3s6M2w, our home page website for ParseBench 💫 Come check it out!

Take a look at some of our other materials if you're interested:

* Blog: https://t.co/57OHkx0pQW
* Paper: https://t.co/Ho2oH2xEAM

How do you learn to trust AI? When it works even in a noisy environment. This is @typelessdotcom. Faster than typing. And you don’t need to turn down the music to use it. https://t.co/f2Oh0awxNb

Falcon 9 launches 29 @Starlink satellites from Florida https://t.co/LArWOnC3tD

Full-duration static fire for the first time on Starship V3 https://t.co/Q1HZIX53HB

Today is the biggest product launch in @customerio history. We built a new way of working so every message reaches further, every campaign gets smarter, and you can see what's actually driving impact. Here's an overview of everything new on the platform today. 🧵 1/11 https://t.co/y7t0S2L7HP

Use voice-to-text when prompting in @code 🎤 https://t.co/ConJBUUdkR https://t.co/k9594ysOL7

Thanks @_akhaliq GitHub: https://t.co/Go5Dlh7WHd PRs welcome :)

Attention Sink in Transformers: A Survey on Utilization, Interpretation, and Mitigation. Paper: https://t.co/QyXl9xYaru https://t.co/Q0mTZvpTET

THIS IS ULTRA SAVAGE 🔥 Journalist –– Why do you think President Trump posted that photo depicting Jesus? 🇺🇸 Rep Nancy –– "You should ask a psychiatrist. It needs diagnosis, not conversation" 😂 https://t.co/Xhq3TwcZqA

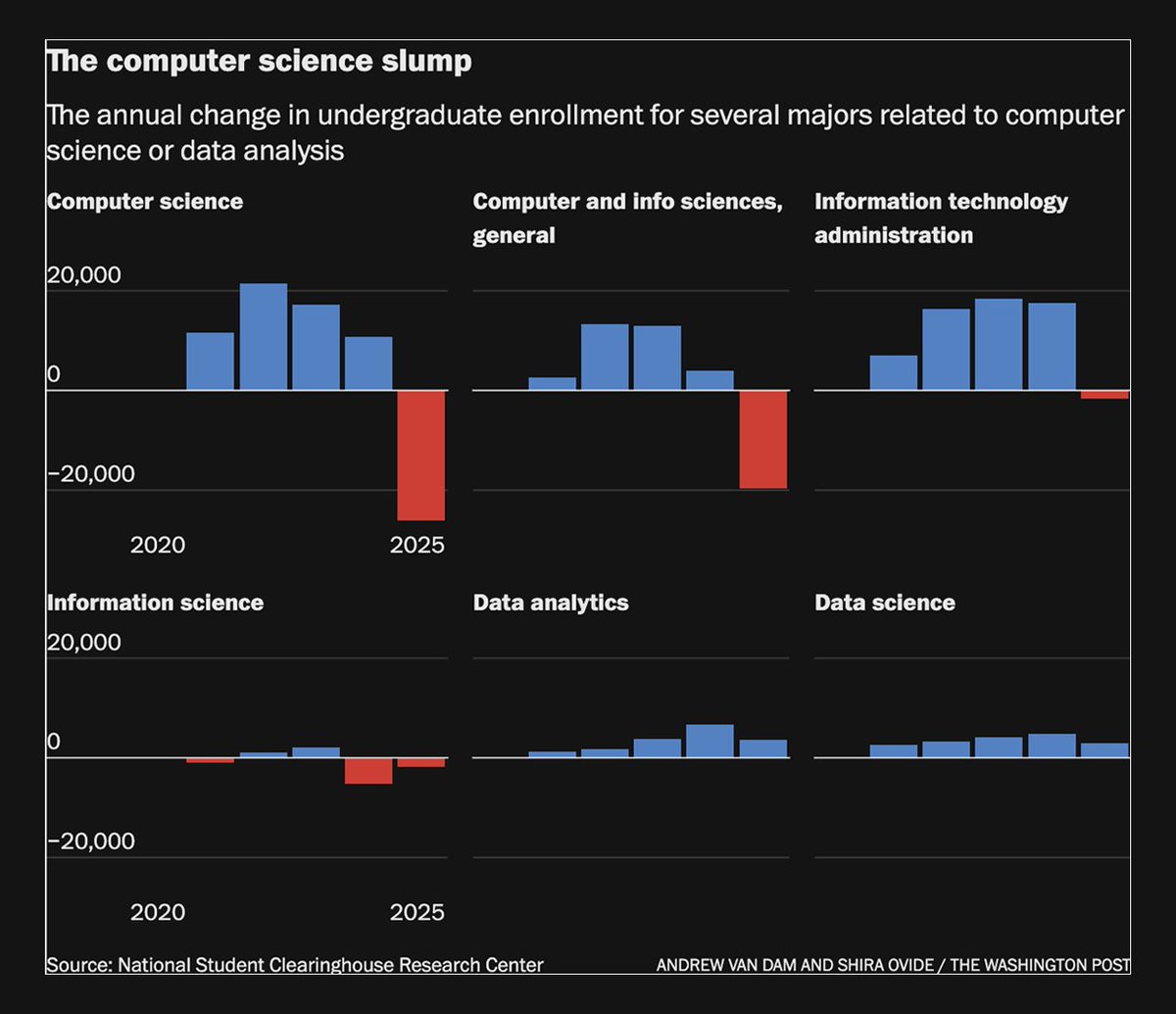

This is a remarkable chart about the future of computer science degrees. https://t.co/ybQRiNqVBf https://t.co/Wq6I8md0Lr

@Maxime44 Done! https://t.co/nM1PAaL60I

@TensorSlay @NousResearch https://t.co/spUmotCXAH

@teeetariq @NousResearch I bet some in our discord do! come join: https://t.co/SxWoPjZTVD

@PavanKumarNY @fdotinc Yes sir. Billy this so you can keep up on AI: https://t.co/kiuZ7QXLzb See you at Founders…