@Mosescreates

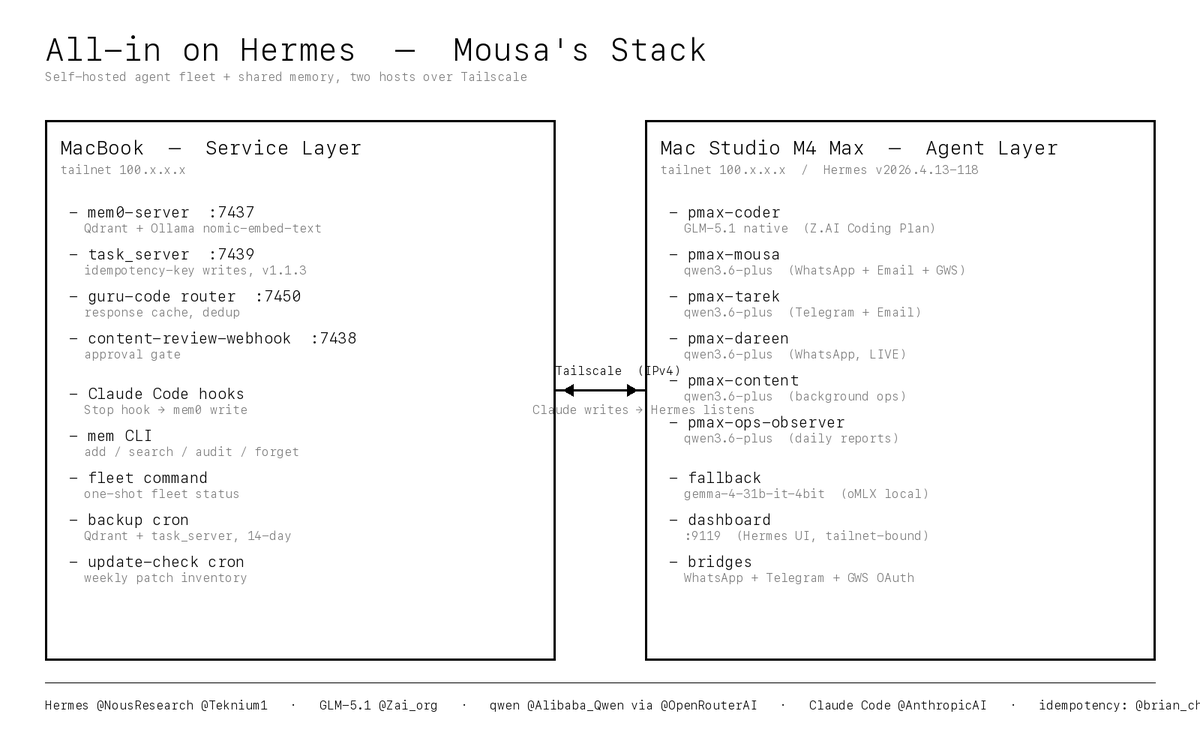

I'm going all in on Hermes (@NousResearch, @Teknium1) as my entire agent and coding stack. Six profiles. One shared self-hosted memory store. Zero hosted-coder dependencies. The fleet: - pmax-mousa — my own WhatsApp + Email + Google Workspace agent - pmax-tarek — my co-founder's Telegram + Email agent - pmax-dareen — our content creator's WhatsApp assistant (LIVE on real client chats) - pmax-content — background content ops - pmax-ops-observer — daily health reports - pmax-coder — my primary coding CLI, no hosted coder, no gateway The model dial — this is the part I'm most excited about: pmax-coder runs on GLM-5.1 native via the https://t.co/s6oYqmfv05 Coding Plan (@Zai_org, quarterly $45). Direct to https://t.co/HUrIPiINWn, no middleman, no OpenRouter tax. GLM-5.1 published the exact thing I needed — a frontier coder at a flat price I can plan around. I've spent the last three days heads-down just getting the system running. Not tweaking it. Not optimizing it. Getting it to stand up end-to-end without a single load-bearing piece silently falling over. Six profiles, one memory store, two hosts, a dozen services, launchd, Tailscale, native provider pinning, patch re-application, ghost-process recovery, bridge port collisions, FTPS quirks, CI cycles, Qdrant lock contention, Happy Eyeballs hangs — every one of them a real bug I hit and fixed before I could move on. The three days are the story. The five gateway profiles (pmax-mousa, pmax-tarek, pmax-dareen, pmax-content, pmax-ops-observer) all run on qwen/qwen3.6-plus via OpenRouter native Alibaba routing (@Alibaba_Qwen, @OpenRouterAI). I pinned native-only with a strict provider.only patch so nothing silently falls through to a more expensive lane. Offline fallback everywhere is gemma-4-31b-it-4bit served by oMLX on the Mac Studio. If OpenRouter or https://t.co/s6oYqmfv05 goes sideways mid-conversation, every profile transparently fails over to local MLX inference and the user never notices. Swapping models is one YAML line. The real unlock: unified self-hosted memory. Every Hermes profile reads and writes one mem0 store on my MacBook (Qdrant + Ollama nomic-embed-text embeddings, zero cloud). Claude Code (@claudeai, @AnthropicAI) is wired to auto-broadcast every session turn into the same store via a Stop hook. The direction of flow is Claude writes, Hermes listens. Anything I decide in a Claude Code session is visible to the WhatsApp agents on my very next message. Nothing gets re-explained. Ever. Two-host architecture over Tailscale: MacBook (100.x.x.x) is the service layer. It runs mem0-server on 7437, task_server v1.1.3 on 7439, the guru-code router cache on 7450, the content-review webhook on 7438, all the Claude Code hooks, daily backup cron, and the mem CLI. Mac Studio M4 Max (100.x.x.x) is the agent layer. It runs Hermes v2026.4.13-118, all six profile gateways under launchd, the Hermes dashboard on 9119, the WhatsApp and Telegram bridges, and the Google Workspace OAuth session. Both hosts are pinned to IPv4 over the tailnet because macOS Happy Eyeballs was randomly hanging on IPv6 tailnet paths — one flag on every curl and ssh killed a whole class of flakiness. Huge credit to @brian_cheong — his push on idempotency-on-retries directly shaped task_server v1.1.3 (Idempotency-Key header on every write path), https://t.co/mrslfLodFL deterministic run_id dedup, and the guru-code router's response cache. Without that, retried agent actions would silently double-fire — a tool call would hit twice, a message would get sent twice, a file would get written twice. Whole classes of bugs I'll never write now. (btw I knew nothing abt idempotency bar — thanks dude) What else ships with the stack: Daily Qdrant and task_server backups with a 14-day rotation, plus a weekly full Hermes zip. Ghost-process immunity on launchd restarts (a startup_guard script kills any zombie https://t.co/eDFb9bsTOf holding the Qdrant lock before mem0-server boots). A native-provider pinning patch that wires provider_routing.allow_fallbacks straight through to OpenRouter. A secret redactor that runs on every Claude Code turn end so OpenRouter keys, Anthropic keys, GitHub PATs, and Bearer tokens can never leak into transcripts. A mem audit command that scans the memory store itself for leaked patterns. And a `fleet` one-shot status command I can run from any terminal to get a color-coded snapshot of every service on both hosts plus GitHub Actions status plus the Hermes patch inventory. Over these three days I also pulled 98 commits of upstream Hermes in two passes (70 + 28) without losing a single custom patch. An update-check cron inventories every local patch weekly so nothing regresses silently. Upgrades are safe. That's the invariant I wanted and I finally have it. None of this is a custom AI platform. It's Hermes doing what Hermes does, plus a few surgical patches I kept small enough to re-apply on every upstream pull. The whole philosophy is minimal lock-in: use the upstream as much as possible, patch only the load-bearing seams, never fork. The point isn't that Hermes beats every other coder tool today. The point is it's mine. I own the model dial, the memory store, the tools, the hooks, the backup policy, the security posture, the failover behavior. When something breaks I fix it. When I want to upgrade I upgrade. When I want to swap models I swap models. No middleman. No platform. No rug pull risk. Reports from the field to follow.