@gkisokay

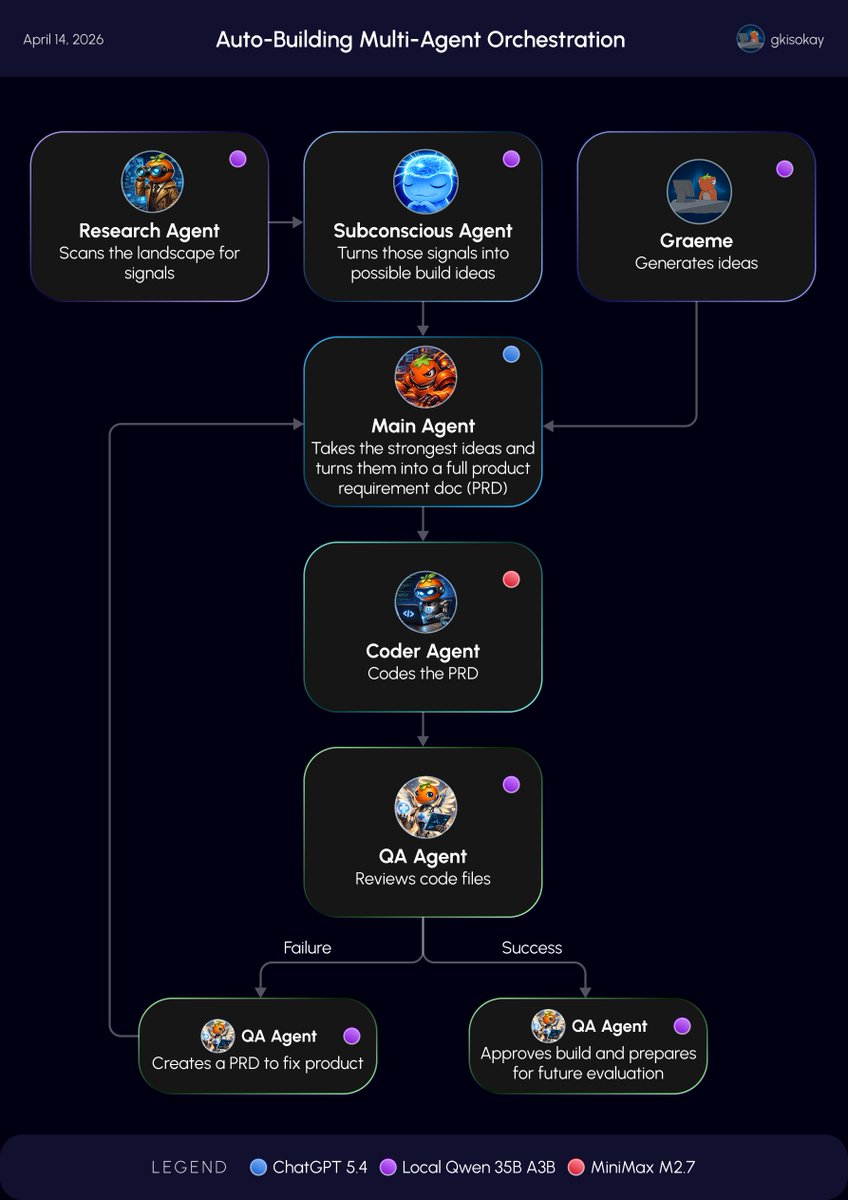

Day 7 of Building AGI for my Hermes Agent: I got my GPT-5.4 calls from 270x to 0x per day

Today I found one of the dumbest parts of my setup where routine agent work was quietly burning GPT-5.4 token credits.

Scanning, summaries, low-risk review, background thinking. These should not be hitting GPT-5.4.

So I rebuilt the stack.

Using @leopardracer’s Mac Mini local model setup, I got Local Qwen 35B A3B running for the always-on cognitive work as a test.

Now the system works like this:

- Local Qwen 35B A3B for scanning, summaries, low-risk review, and constant background thinking
- MiniMax M2.7 for approved coding work
- GPT-5.4 for final planning and high-judgment approval
- No model for preflight checks when queues are empty

The result:

- GPT-5.4 cron calls per day went from 270 to 0.
- Now GPT-5.4 only fires when there is real planning work or a deliberate escalation.

Frontier intelligence is too expensive to waste on routine cognition, and my system got dramatically cheaper overnight, and much cleaner too.

A lot of people are overspending because they are using the smartest model for the wrong jobs.

Not every thought deserves a frontier token.

Follow @gkisokay to see what happens next.
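
For anyone who wants to try the same split, here is a minimal sketch of the tiered routing described in this post. It is not the actual Hermes Agent code: the `Task` class, the task kind strings, the model identifier strings, and the `pick_model` and `run_cron_tick` functions are all hypothetical names made up for illustration. Sending unrecognized or unapproved work to the local model is also an assumption, not something the post states.

```python
from __future__ import annotations

from dataclasses import dataclass

# Illustrative identifiers only; the post names the models but not how they are addressed.
LOCAL = "local-qwen-35b-a3b"   # always-on routine cognition
CODER = "minimax-m2.7"         # approved coding work
FRONTIER = "gpt-5.4"           # final planning and high-judgment approval

# Hypothetical task kinds mirroring the categories listed in the post.
ROUTINE_KINDS = {"scan", "summary", "low_risk_review", "background_thinking"}
FRONTIER_KINDS = {"final_planning", "high_judgment_approval"}


@dataclass
class Task:
    kind: str
    approved: bool = False   # only meaningful for coding tasks
    escalate: bool = False   # deliberate escalation to the frontier model


def pick_model(task: Task) -> str:
    """Return the model tier for a single task."""
    if task.escalate or task.kind in FRONTIER_KINDS:
        return FRONTIER
    if task.kind == "coding" and task.approved:
        return CODER
    # Routine kinds go local. Assumption: anything else (including
    # unapproved coding) also stays on the cheapest tier by default.
    return LOCAL


def run_cron_tick(queue: list[Task]) -> list[tuple[str, str]]:
    """Plan one scheduled tick as (task kind, model) pairs.

    The preflight check uses no model at all: an empty queue
    returns immediately without selecting any tier.
    """
    if not queue:
        return []
    return [(task.kind, pick_model(task)) for task in queue]
```

With this shape, an empty queue costs nothing, `Task("summary")` resolves to the local model, and the frontier model is only chosen for planning, approval, or an explicit `escalate=True`.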