Your curated collection of saved posts and media

The Grok Imagine landing page is essentially an open repository of successful prompts. Treat it like a library: grab the prompt logic from the best videos, concatenate them, and see how the model responds. Here's a text-to-video prompt I came up with by combining a few of them.

Grok Imagine text-to-video prompt:

Hyper-detailed anime style, cinematic perspective, gritty and dramatic lighting, bold contrast with saturated colors. A low-angle tilted cinematic street-style shot of an empty urban parking lot with cracked asphalt and faded lines, looking up at an anime girl walking forward while carrying a skateboard tucked under one arm, as seen from behind. She wears white baggy cargo pants, a cropped white leather jacket with the xAI Grok logo prominently on the back, a black belt, and white high-top skate shoes, with the worn tread and grip details of one skate shoe dominating the foreground in sharp detail. The skateboard she carries is white with detailed black grip tape, colorful wheels visible. The perspective emphasizes the gritty, urban skate aesthetic with bold foreshortening and a sense of impending action.

BREAKING: Grok had the highest rate of new users in November. It is leading in new user growth. Grok recorded the highest share of first time visitors among major GenAI tools, showing strong momentum and expanding reach beyond existing users, as per the latest @Similarweb data. https://t.co/xGRS2iyljq

Why has Facebook allowed racism against Whites only? https://t.co/svIryrXCm0

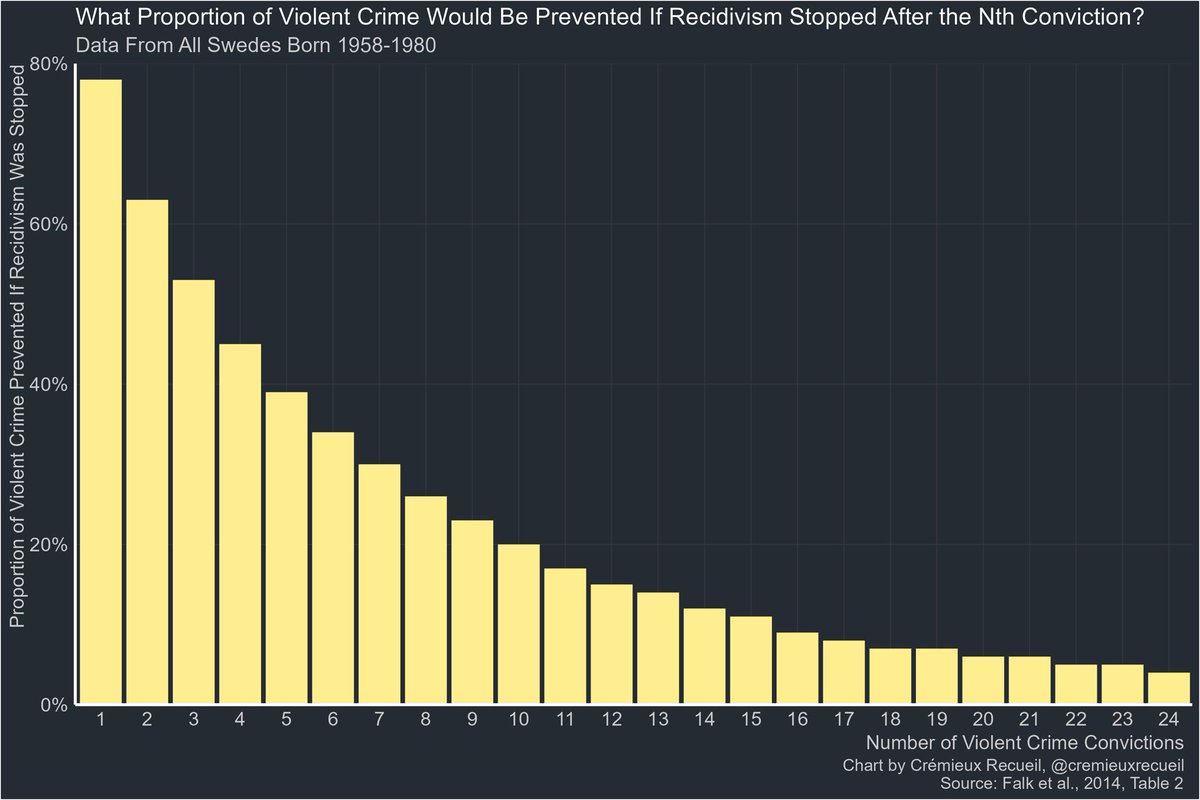

Everyone on the Left discussing gun control refuses to ever consider that if we just locked up ALL violent criminals, the majority of violent crime would disappear, overnight. It's not the guns. It's the violent criminals... ...and the Left keeps letting them out of prison https://t.co/9W4lT7VeVq

Bombshell report: Companies did everything they could to avoid hiring “white millennial men” over the last decade https://t.co/d3gEal0Kfp

Grok 4.1 Fast Reasoning outperforms every frontier model on τ²-Bench Telecom agentic tool use and is now officially ranked #1 https://t.co/JajfE1nPD7

https://t.co/n2FjmSxzHd

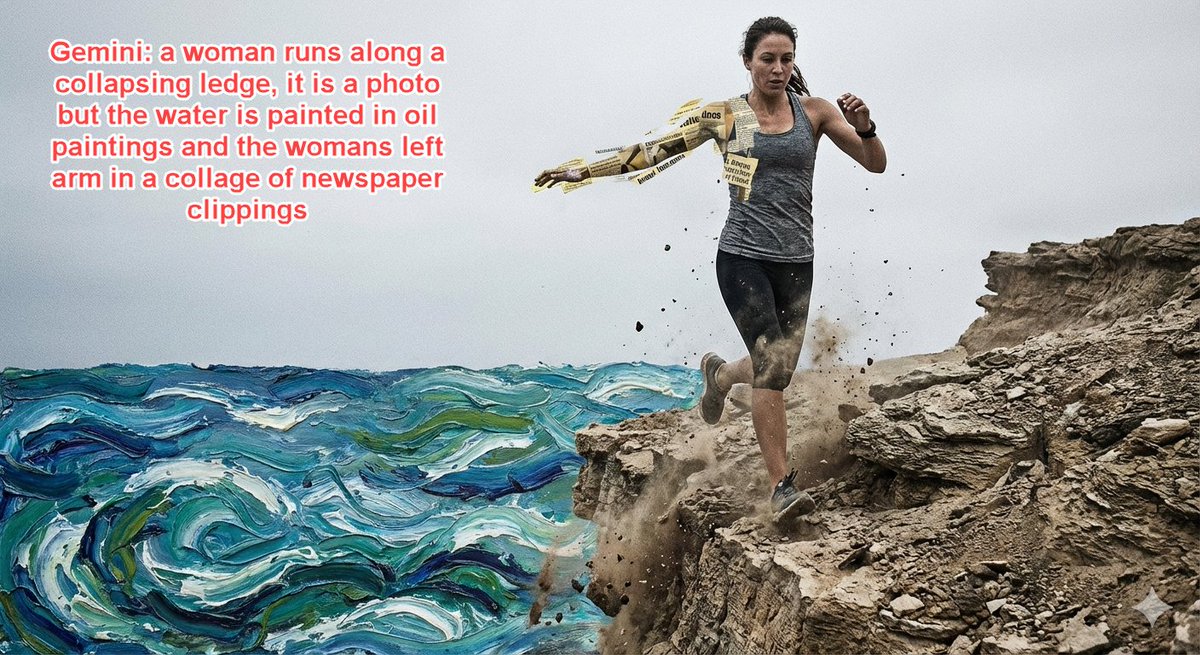

Had early access to the new ChatGPT Image 1.5. It is good, especially at single images, but I don't think it works as well for complex slides/graphics/information as Nano Banana Pro (though GPT-5.2 Thinking seems to do better than GPT-5.2 instant, so maybe more planning helps?) https://t.co/0vigzsoab7

🎖Take GenAI from MVP to production excellence with GenAIOps on #AWS. 🦾Learn how automation, observability & continuous improvement can help your #startup scale #AI solutions efficiently & reliably. Check out the article: 👉 https://t.co/TTBg7Jnmqm https://t.co/msriZdkJ4b

We just launched Self-Hosted Voice AI: our Universal-Streaming model, deployed on your infrastructure, with the same performance developers already trust from our API.

Self-hosting speech AI used to mean compromising on quality or paying a premium for the privilege. Not anymore.

Here's what this unlocks:
🔹 Co-locate your Voice AI stack where your traffic originates for optimized latency
🔹 Process all audio within your controlled perimeter for full data sovereignty
🔹 Deploy with Kubernetes, AWS ECS, or any container orchestration platform you're already using
🔹 Count usage toward your cloud provider's committed spend program

No self-hosting premium. Session-based pricing with volume discounts.

For teams navigating strict compliance requirements, data residency mandates, or just wanting tighter control over their stack, this is built for you.

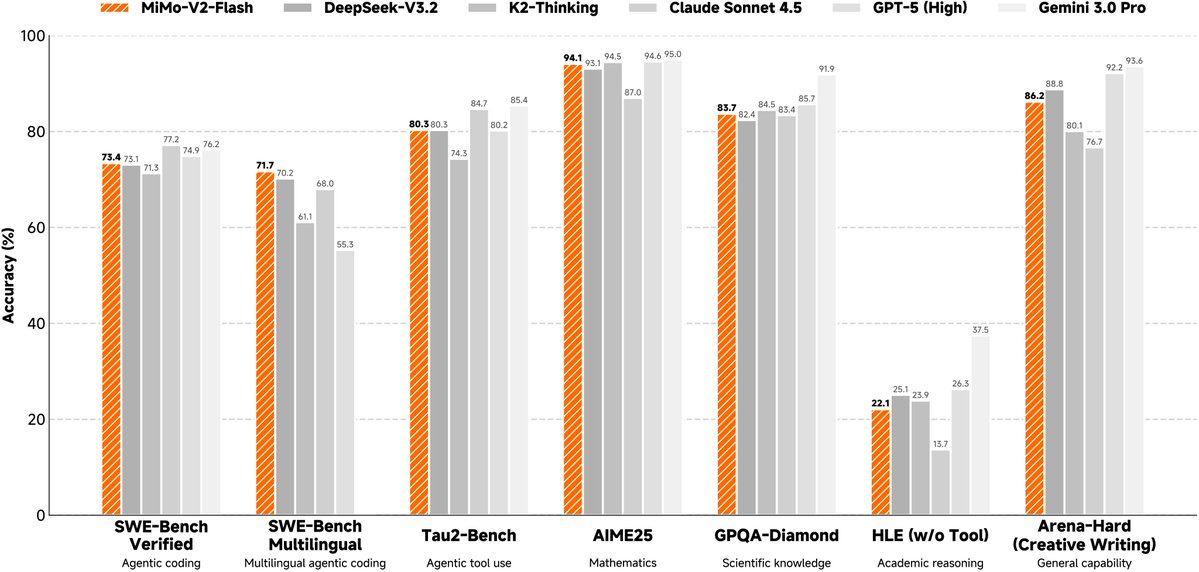

⚡ Faster than Fast. Designed for Agentic AI.

Introducing Xiaomi MiMo-V2-Flash, our new open-source MoE model: 309B total params, 15B active. Blazing speed meets frontier performance.

🔥 Highlights:
🏗️ Hybrid Attention: 5:1 interleaved 128-window SWA + Global | 256K context
📈 Performance:
⚔️ Matches DeepSeek-V3.2 on general benchmarks, at a fraction of the latency
🏆 SWE-Bench Verified: 73.4% | SWE-Bench Multilingual: 71.7%, a new SOTA for open-source models
🚀 Speed: 150 output tokens/s with Day-0 support from @lmsysorg 🤝

🤗 Model: https://t.co/4Etm0yZKTL
📝 Blog Post: https://t.co/5zxmcDuB6o
📄 Technical Report: https://t.co/crac1YTLYl
🎨 AI Studio: https://t.co/nSReUs6QgW
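
The "5:1 interleaved 128-window SWA + Global" line describes a layer pattern: five sliding-window attention (SWA) layers for every global-attention layer, where an SWA layer lets each token see only a short window of recent positions. The sketch below illustrates that pattern under our own assumptions (the global layer closes each group of six, and the 128-position window includes the current token); the helper names `layer_types`, `sliding_window_mask`, and `causal_mask` are hypothetical, and this is not Xiaomi's implementation, which the technical report would describe authoritatively.

```python
def layer_types(num_layers: int, swa_per_global: int = 5) -> list[str]:
    """Label each layer 'swa' or 'global' for a swa_per_global:1 interleave.
    ASSUMPTION: the global layer is the last layer of each group of six."""
    group = swa_per_global + 1
    return ["global" if (i + 1) % group == 0 else "swa" for i in range(num_layers)]


def sliding_window_mask(seq_len: int, window: int = 128) -> list[list[bool]]:
    """Causal sliding-window mask: query i may attend to key j iff i - window < j <= i.
    ASSUMPTION: the window of `window` positions includes the current token."""
    return [[i - window < j <= i for j in range(seq_len)] for i in range(seq_len)]


def causal_mask(seq_len: int) -> list[list[bool]]:
    """Full causal mask, as used by the (assumed) global-attention layers."""
    return [[j <= i for j in range(seq_len)] for i in range(seq_len)]


if __name__ == "__main__":
    print(layer_types(12))
    swa = sliding_window_mask(300, window=128)
    full = causal_mask(300)
    print("keys visible to last query (SWA):", sum(swa[299]))
    print("keys visible to last query (global):", sum(full[299]))
    # MoE sparsity from the announced figures: 15B active out of 309B total.
    print(f"active fraction: {15 / 309:.1%}")
```

Under this assumed layout, five of every six layers look at no more than 128 keys per query regardless of sequence length, so only the global layers would pay the full attention cost over a 256K context.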

Just tried @bubblelab_ai and I’m actually blown away. The best way I can describe it: as if @cursor_ai and @n8n_io had a baby.

This afternoon I built a full sales qualifier workflow in three prompts:
– defined a target segment (e.g. Shopify store owners)
– identified market leaders
– analyzed their social presence + inferred priorities
– extracted contact details
– generated outreach emails + sales call talking points
– compiled everything into a single Google Sheet

It got it right with zero errors.

As tools like this emerge, the hard part stops being building workflows and starts being understanding them. The advantage moves to people who deeply understand the domain and know what questions are worth asking.

Props to @Selinaliyy and the Bubble Lab team! This feels like a glimpse of what AI-native ops should look like.

We're going live in one hour! Tune in for a hands-on look at Gemini 3, Nano Banana Pro, Veo and how to create a full brand ecosystem from scratch. → https://t.co/GByTvMja87 https://t.co/691F2HEqPj

Today we're announcing cua-bench: a framework for benchmarking, training data, and RL environments for computer-use AI agents. Why? Current agents show 10x variance across minor UI changes. Here's how we're fixing it.

🔉 Introducing SAM Audio, the first unified model that isolates any sound from complex audio mixtures using text, visual, or span prompts. We’re sharing SAM Audio with the community, along with a perception encoder model, benchmarks and research papers, to empower others to explore new forms of expression and build applications that were previously out of reach. 🔗 Learn more: https://t.co/FPnfv66UCP

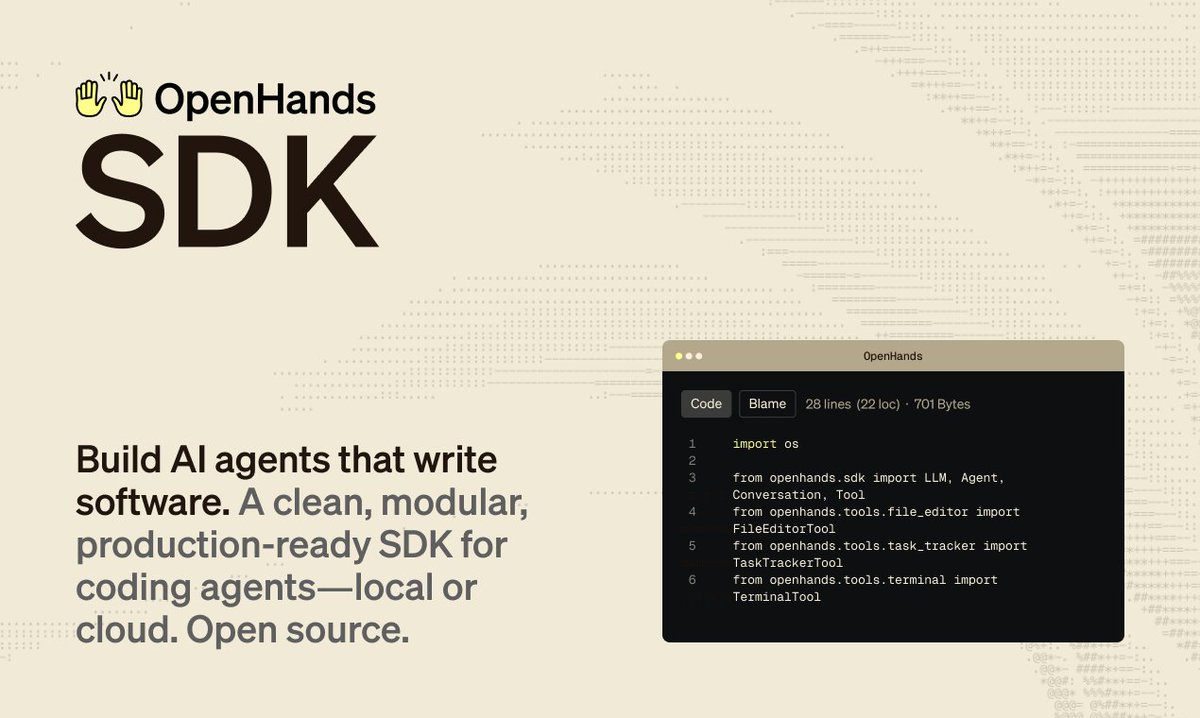

🚀 We just launched OpenHands Software Agent SDK on @ProductHunt! A smarter way to build agent-driven software — fast, flexible, and production-ready. 👉 Check it out + show some love! https://t.co/xekxMFGJtD

Today marks an important milestone in the history of @SimularAI, the autonomous computer company. Our open source computer-use agent, 𝐀𝐠𝐞𝐧𝐭 𝐒, scored 72.6% on the OSWorld benchmark, surpassing the human baseline (72.36%) for the first time ever. This milestone matters because it shows AI can now use computers the way humans do, and, in many cases, do it better. This is a glimpse of a future where work becomes faster, more accessible, and more empowered for everyone. #AI #Automation #Simular #AgenticAI #ComputerUse

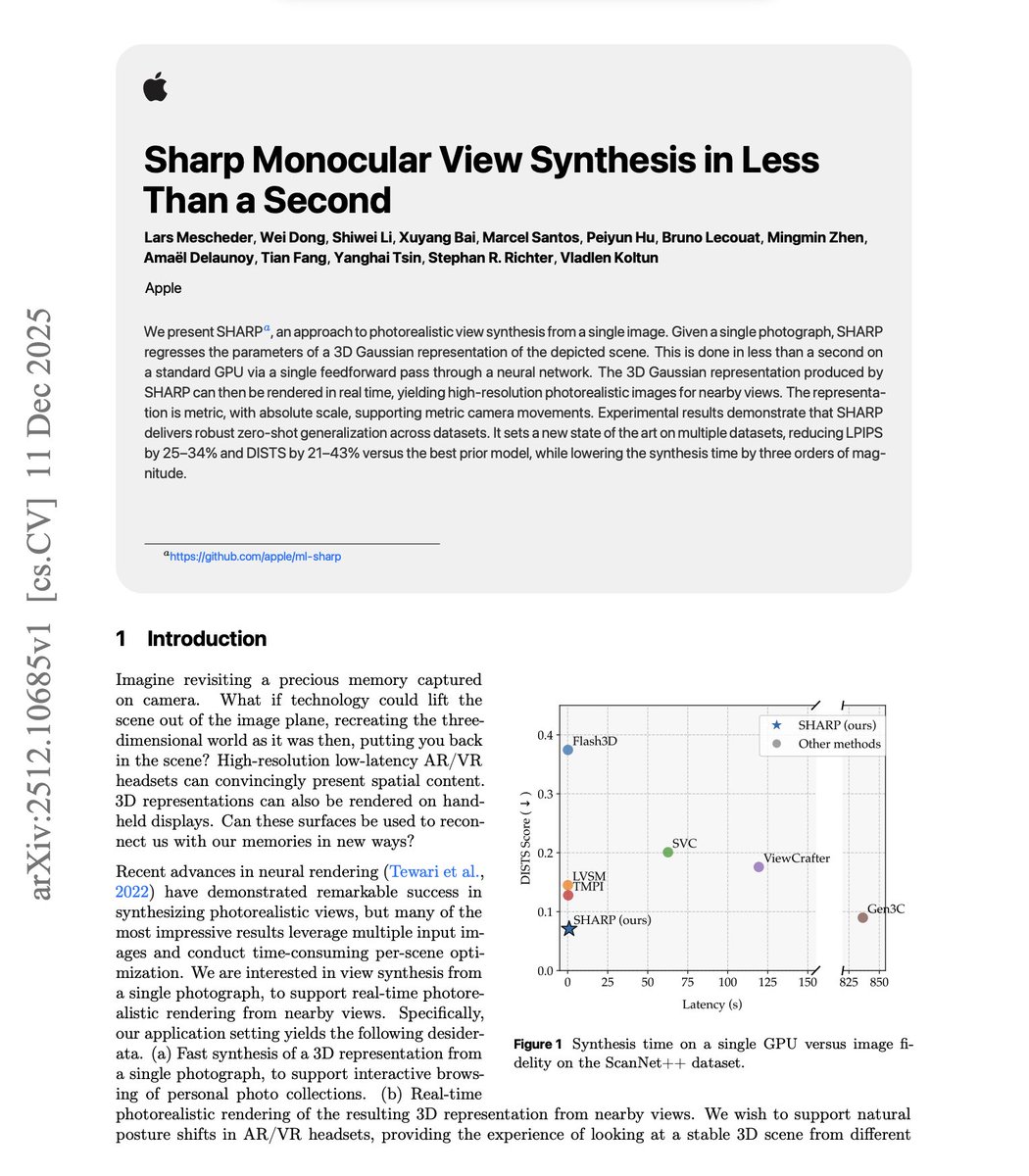

Another banger paper from Apple.

View synthesis from a single image is impressive, but most methods are extremely slow. The default approach to high-quality novel view synthesis uses diffusion models. Iterative denoising produces compelling results, but latency can stretch into hundreds of seconds per scene. Real-world applications, like AR/VR headsets and interactive photo browsing, need instant 3D from a single photograph.

This new research from Apple introduces SHARP, a method that generates a complete 3D Gaussian representation from a single image in under one second on a standard GPU.

Architecture details: A neural network takes a single photograph and produces about 1.2 million 3D Gaussians in a single feedforward pass. The architecture builds on a pretrained depth backbone, but crucially unfreezes parts of it during training. A learned depth adjustment module resolves the inherent ambiguity of monocular depth estimation. A Gaussian decoder then refines all attributes: position, scale, rotation, color, and opacity.

Results: On ScanNet++, SHARP achieves 0.071 DISTS versus 0.090 for Gen3C, the previous best. That's a 21% improvement in perceptual quality. LPIPS drops from 0.227 to 0.154, a 32% reduction.

The latency difference is more dramatic. SHARP runs in under 1 second, while Gen3C takes approximately 850 seconds. That's a speedup of more than 850x, roughly three orders of magnitude. Once the 3D representation exists, rendering runs at over 100 frames per second at high resolution. The representation is metric, with absolute scale, so virtual cameras can be accurately coupled to physical headsets.

The method is not perfect. SHARP excels at nearby views corresponding to natural head motion and posture shifts. Diffusion-based methods handle faraway views better by hallucinating plausible content for regions with no overlap with the input.

Paper: https://t.co/JPoXOqqj2l

Learn to build effective AI Agents in my academy: https://t.co/JBU5beIoD0
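
The percentages quoted for SHARP are relative reductions, (old - new) / old, where lower is better for both DISTS and LPIPS. The short check below recomputes them from the numbers in the post. The memory estimate at the end rests on our own assumption about how each Gaussian is stored (3 position, 3 scale, 4 quaternion rotation, 3 RGB color, and 1 opacity value as float32); the paper may use a different parameterization.

```python
def relative_reduction(old: float, new: float) -> float:
    """Fractional reduction from old to new (positive when new is lower)."""
    return (old - new) / old


# Gen3C -> SHARP on ScanNet++, as quoted in the post.
dists = relative_reduction(0.090, 0.071)
lpips = relative_reduction(0.227, 0.154)

# SHARP runs in under 1 s, so 850 s / 1 s is a lower bound on the speedup.
min_speedup = 850 / 1.0

# ASSUMPTION: 14 float32 values per Gaussian
# (position 3 + scale 3 + rotation quaternion 4 + RGB color 3 + opacity 1).
floats_per_gaussian = 3 + 3 + 4 + 3 + 1
num_gaussians = 1_200_000
megabytes = num_gaussians * floats_per_gaussian * 4 / 1e6

print(f"DISTS: {dists:.0%} better, LPIPS: {lpips:.0%} lower")
print(f"speedup: more than {min_speedup:.0f}x")
print(f"about {megabytes:.1f} MB of float32 attributes per scene")
```

Even though the absolute DISTS gap is only 0.019, dividing by the baseline of 0.090 is what turns it into the reported 21% relative improvement.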

Who leaking https://t.co/mhuBy8FQp8

EgoX: Generate immersive first-person video from any third-person clip A novel framework from KAIST AI & Seoul National University that leverages video diffusion models to transform a single exocentric video into a realistic egocentric view. See it in action! https://t.co/Vt3cPAdUL3

Time to follow https://t.co/dqWrV1R3t7 to get the notification!

🎮Get a first look at Tencent HY World 1.5 (WorldPlay)! 🎮 Our newest world model with real-time interaction and long-term memory. It’s going *open-source* tomorrow. https://t.co/zvMI3rCX7u

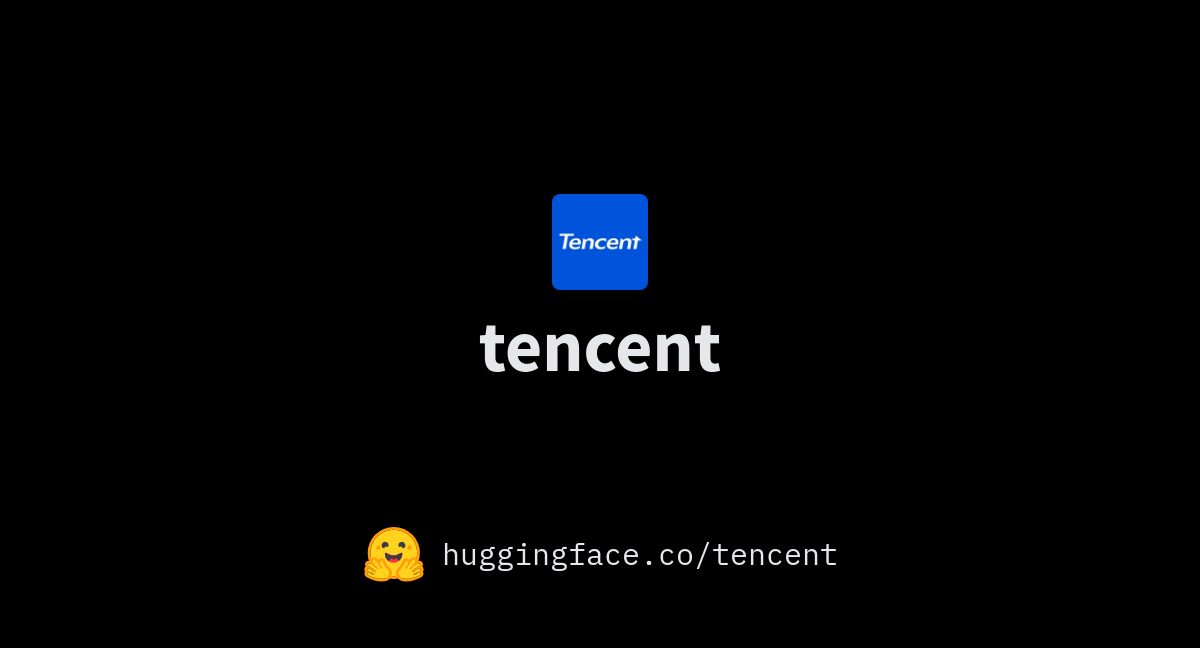

🚀 Introducing Nemotron-Cascade! 🚀

We’re thrilled to release Nemotron-Cascade, a family of general-purpose reasoning models trained with cascaded, domain-wise reinforcement learning (Cascade RL), delivering best-in-class performance across a wide range of benchmarks.

💻 Coding powerhouse
After RL, our 14B model:
• Surpasses DeepSeek-R1-0528 (671B) on LiveCodeBench v5/v6/Pro.
• Achieves silver-medal performance at IOI 2025 🥈.
• Reaches 43.1% pass@1 on SWE-Bench Verified, and 53.8% with test-time scaling.

🧠 What is Cascade RL?
Instead of mixing heterogeneous prompts across domains, Cascade RL trains sequentially, domain by domain, which reduces engineering complexity, mitigates heterogeneous verification latencies, and enables domain-specific curricula and tailored hyperparameter tuning.

✨ Key insight
Using RLHF for alignment as a pre-step dramatically boosts complex reasoning, far beyond preference optimization. Subsequent domain-wise RLVR stages rarely hurt the benchmark performance attained in earlier domains and may even improve it, as illustrated in the following figure.

🤗 Models & training data 🔥 👉 https://t.co/wfVcAaMocA
📄 Technical report with detailed training and data recipes 👉 https://t.co/FdMINvB4yM
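
The difference between mixed-domain RL and Cascade RL is largely one of scheduling. The toy sketch below contrasts the two: a mixed schedule is a single stage whose batches draw from every domain, while a cascaded schedule runs an RLHF stage first and then one RLVR stage per domain, each with its own hyperparameters. The domain names, their order, the learning rates, and the `Stage` class are hypothetical placeholders; no RL update is performed, and this is not NVIDIA's training code.

```python
import random
from dataclasses import dataclass


@dataclass
class Stage:
    name: str           # e.g. "rlhf" or "rlvr:<domain>"
    domains: list[str]  # prompt domains sampled during this stage
    lr: float           # per-stage hyperparameter (hypothetical values)


def mixed_schedule(domains: list[str], lr: float) -> list[Stage]:
    """One RL stage that mixes heterogeneous prompts from all domains."""
    return [Stage("rl:mixed", list(domains), lr)]


def cascade_schedule(domains: list[str], lrs: dict[str, float], rlhf_lr: float) -> list[Stage]:
    """RLHF alignment first, then one RLVR stage per domain, in the given order."""
    stages = [Stage("rlhf", ["preference"], rlhf_lr)]
    stages += [Stage(f"rlvr:{d}", [d], lrs[d]) for d in domains]
    return stages


def sample_batch(stage: Stage, batch_size: int, rng: random.Random) -> list[str]:
    """Draw domain labels for one batch. A cascaded stage sees a single domain,
    so verifiers with very different latencies never share a batch."""
    return [rng.choice(stage.domains) for _ in range(batch_size)]


if __name__ == "__main__":
    domains = ["math", "code", "swe"]                 # hypothetical domain order
    lrs = {"math": 1e-6, "code": 5e-7, "swe": 3e-7}   # hypothetical learning rates
    rng = random.Random(0)
    for stage in cascade_schedule(domains, lrs, rlhf_lr=2e-6):
        print(stage.name, stage.lr, sorted(set(sample_batch(stage, 8, rng))))
```

Keeping each stage single-domain is what makes per-domain curricula and hyperparameters possible in this framing; the mixed schedule has to share one setting across every domain in the pool.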

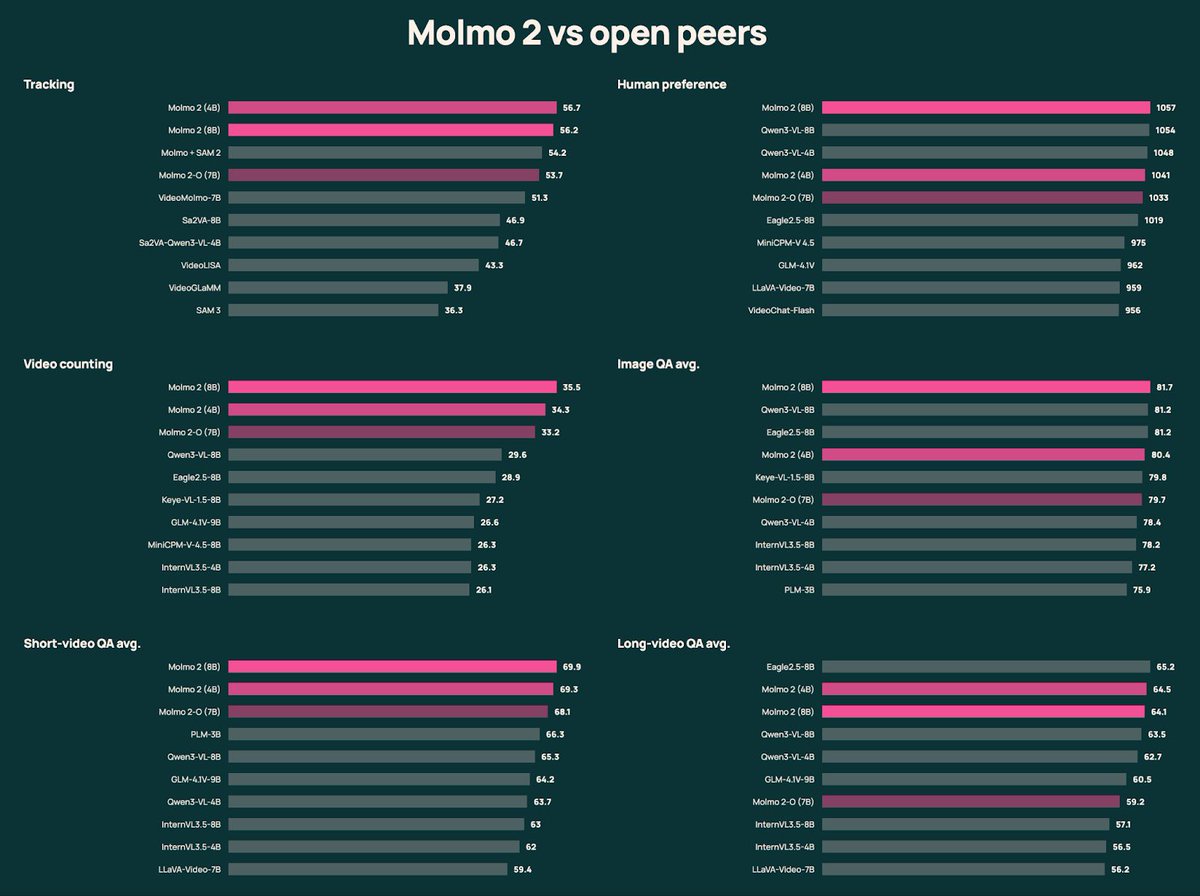

Last year Molmo set SOTA on image benchmarks + pioneered image pointing. Millions of downloads later, Molmo 2 brings Molmo’s grounded multimodal capabilities to video 🎥—and leads many open models on challenging industry video benchmarks. 🧵 https://t.co/uFs30b2DR3

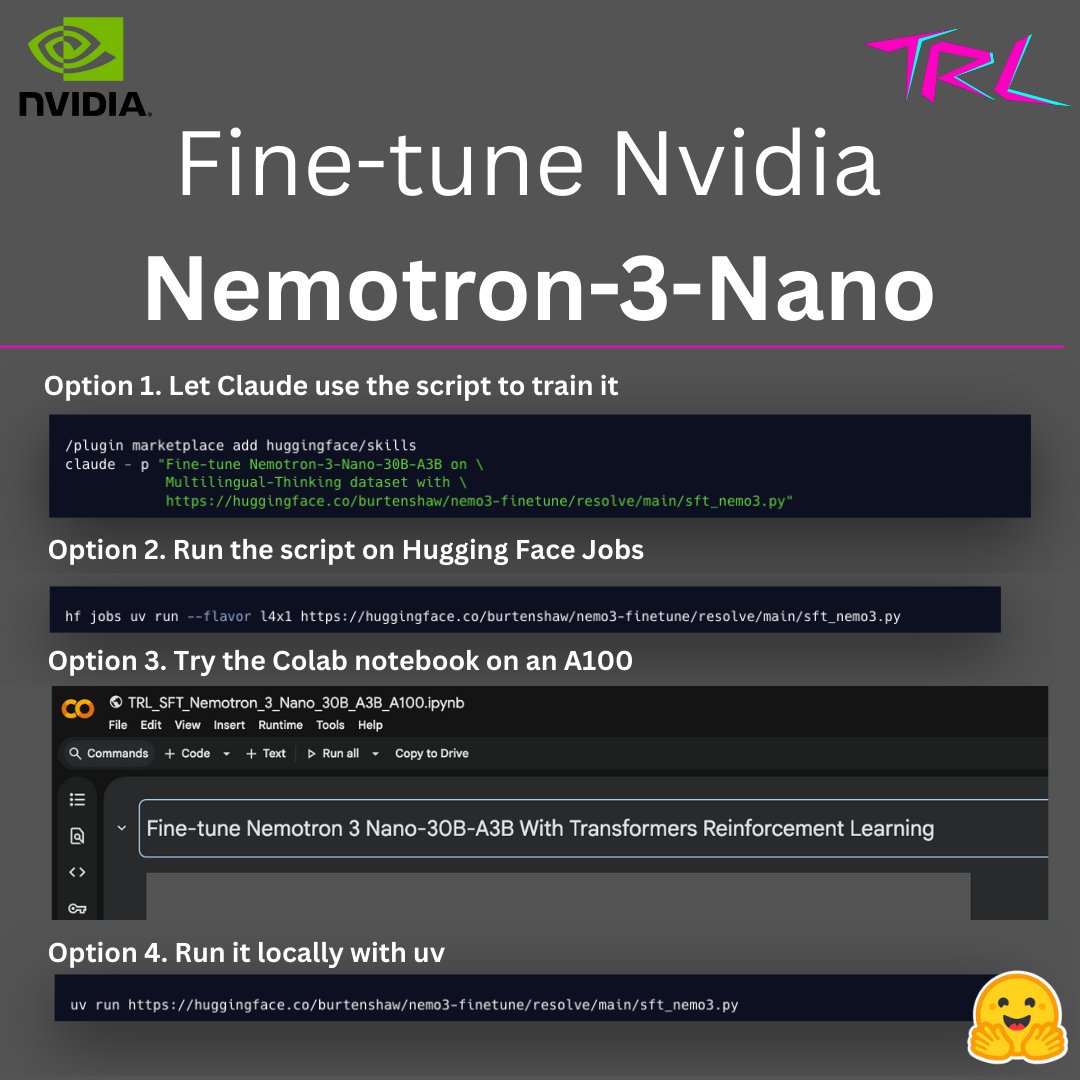

Fine-tune Nemotron 3 Nano in TRL with a coding agent like Claude Code, in Colab, locally, or on the Hub.

To fine-tune, pick one of these options:
- Combine HF skills with a coding agent like Claude Code.
- Use this Colab notebook.
- Train it on HF Jobs using the Hugging Face Hub.
- If you can, run this script on your own setup with uv.

This should get anyone started with fine-tuning, and this is the perfect model to start with.

New model from @Meituan_LongCat 🚀 LongCat-Video-Avatar 🔥

Audio-driven character animation with text, image, and video inputs, all in one!
✨ MIT license
✨ Audio > talking video (single & multi-person)
✨ Natural motion and lip sync
✨ Fewer repeats, stable identity
✨ Available on @huggingface

Introducing the Ndea podcast - Abstract Synthesis. Hear the stories behind interesting academic papers in the world of program synthesis. Episode 1 features @MarkSantolucito, @BarnardCollege/@Columbia, discussing his paper "Grammar Filtering for Syntax-Guided Synthesis". https://t.co/uJ1NVxU6rK

@youwouldntpost @Srirachachau Downloading the “driving during daytime” patch https://t.co/R6EmIolLDo