@XiaomiMiMo

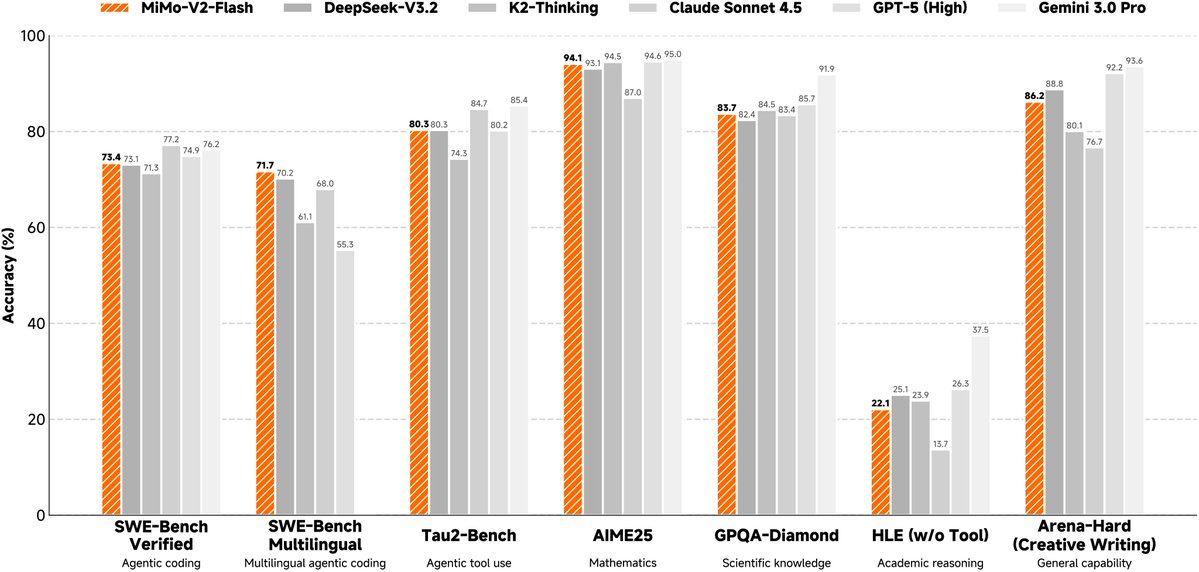

⚡ Faster than Fast. Designed for Agentic AI.

Introducing Xiaomi MiMo-V2-Flash, our new open-source MoE model: 309B total params, 15B active. Blazing speed meets frontier performance.

🔥 Highlights:

🏗️ Hybrid Attention: 5:1 interleaved 128-window SWA + Global | 256K context

📈 Performance:

⚔️ Matches DeepSeek-V3.2 on general benchmarks, at a fraction of the latency

🏆 SWE-Bench Verified: 73.4% | SWE-Bench Multilingual: 71.7% (new SOTA for open-source models)

🚀 Speed: 150 output tokens/s with Day-0 support from @lmsysorg 🤝

🤗 Model: https://t.co/4Etm0yZKTL

📝 Blog Post: https://t.co/5zxmcDuB6o

📄 Technical Report: https://t.co/crac1YTLYl

🎨 AI Studio: https://t.co/nSReUs6QgW
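For readers unfamiliar with the "5:1 interleaved 128-window SWA + Global" shorthand, here is a minimal sketch of what such a layout could look like. It is not taken from the MiMo-V2-Flash code. The function names, the ordering (five sliding-window layers followed by one global layer), and the 12-layer example are illustrative assumptions. Only the 5:1 ratio, the 128-token window, and the 256K context come from the post.

```python
from typing import List


def layer_schedule(num_layers: int, swa_per_global: int = 5) -> List[str]:
    """Label each layer 'swa' or 'global'.

    Assumption: each block is `swa_per_global` sliding-window layers
    followed by one global-attention layer. The real ordering may differ.
    """
    period = swa_per_global + 1
    return ["global" if (i + 1) % period == 0 else "swa" for i in range(num_layers)]


def causal_window_mask(seq_len: int, window: int) -> List[List[bool]]:
    """mask[q][k] is True when query position q may attend to key position k.

    A causal sliding window lets each token see itself and the
    `window - 1` tokens immediately before it.
    """
    return [[0 <= q - k < window for k in range(seq_len)] for q in range(seq_len)]


def kv_cache_positions(num_layers: int, context_len: int,
                       window: int = 128, swa_per_global: int = 5) -> int:
    """Count cached key/value positions summed over layers.

    Sliding-window layers only need the last `window` positions;
    global layers need the whole context.
    """
    total = 0
    for kind in layer_schedule(num_layers, swa_per_global):
        total += context_len if kind == "global" else min(window, context_len)
    return total


if __name__ == "__main__":
    layers = 12                # hypothetical depth, not the model's real layer count
    context = 256 * 1024       # assuming "256K" means 262,144 tokens
    hybrid = kv_cache_positions(layers, context)
    full = layers * context    # every layer using global attention
    print(layer_schedule(layers))
    print(f"hybrid/full KV positions: {hybrid / full:.3f}")
```

Under these assumptions, only one layer in six keeps the full context in its KV cache, which is one plausible reason a hybrid design can cut long-context memory and latency. The technical report linked above is the authoritative source for the actual configuration.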