@omarsar0

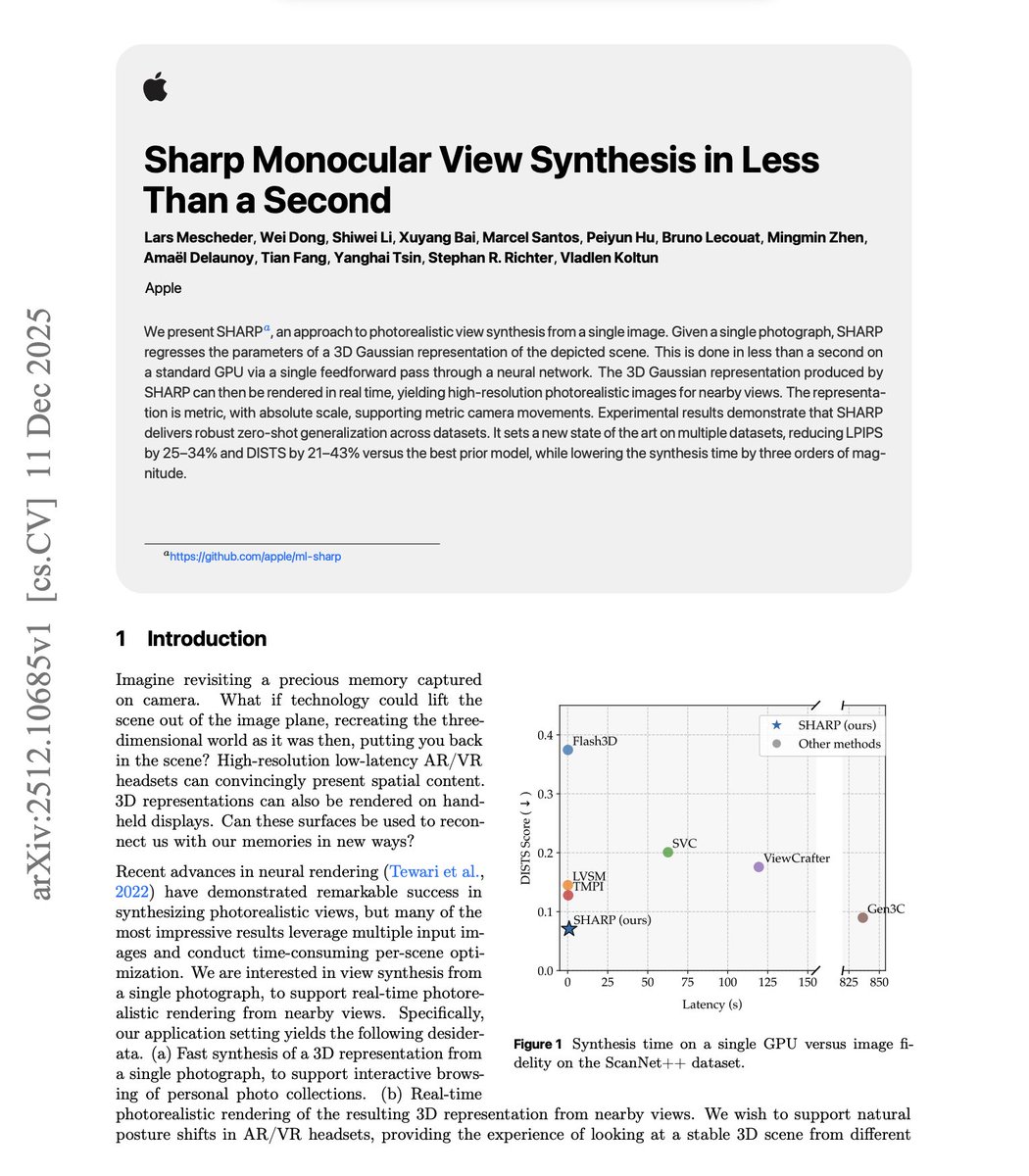

Another banger paper from Apple.

View synthesis from a single image is impressive, but most methods are extremely slow.

The default approach to high-quality novel view synthesis uses diffusion models. Iterative denoising produces compelling results, but latency can stretch into hundreds of seconds per scene.

Real-world applications, such as AR/VR headsets and interactive photo browsing, need instant 3D from a single photograph.

This new research from Apple introduces SHARP, a method that generates a complete 3D Gaussian representation from a single image in under one second on a standard GPU.

Architecture details:

A neural network takes a single photograph and produces about 1.2 million 3D Gaussians in a single feedforward pass. The architecture builds on a pretrained depth backbone, but crucially unfreezes parts of it during training. A learned depth adjustment module resolves the inherent ambiguity of monocular depth estimation. A Gaussian decoder then refines all attributes: position, scale, rotation, color, and opacity.

Results:

On ScanNet++, SHARP achieves 0.071 DISTS versus 0.090 for Gen3C, the previous best. That's a 21% improvement in perceptual quality. LPIPS drops from 0.227 to 0.154, a 32% reduction.

The latency difference is more dramatic. SHARP runs in under 1 second. Gen3C takes approximately 850 seconds. That's roughly a 1000x speedup.

Once the 3D representation exists, rendering runs at over 100 frames per second at high resolution. The representation is metric with an absolute scale, so virtual cameras can be accurately coupled to physical headsets.

The method is not perfect. SHARP excels at nearby views corresponding to natural head motion and posture shifts. Diffusion-based methods handle faraway views better by hallucinating plausible content for regions with no overlap with the input.

Paper: https://t.co/JPoXOqqj2l
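
For anyone who wants to sanity-check the percentages quoted above, here is a minimal Python sketch. It only uses the numbers in this post. The helper name `relative_reduction` is made up for illustration, and the 1.0 s SHARP latency is an assumed upper bound, since the post only says "under 1 second".

```python
def relative_reduction(baseline: float, new: float) -> float:
    """Fractional drop from baseline to new, for lower-is-better metrics."""
    return (baseline - new) / baseline


# Metric values as quoted in the post (ScanNet++, Gen3C vs SHARP).
dists_drop = relative_reduction(0.090, 0.071)
lpips_drop = relative_reduction(0.227, 0.154)

# Assumption: 1.0 s is used as an upper bound on SHARP's latency,
# so this ratio is a lower bound on the speedup.
speedup_lower_bound = 850.0 / 1.0

print(f"DISTS reduction: {dists_drop:.1%}")
print(f"LPIPS reduction: {lpips_drop:.1%}")
print(f"Speedup: at least {speedup_lower_bound:.0f}x")
```

With a 1-second upper bound the speedup is at least 850x. The "roughly 1000x" figure holds if the actual SHARP runtime is around 0.85 seconds or lower.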