Your curated collection of saved posts and media

last chance to sign up if interested! https://t.co/NY7HCOTEMB

Since central park was so beautiful covered in snow, we decided to put it on our website :) https://t.co/bms4JsbdOG

Not many people know this, but the company behind Manus is Butterfly Effect. In that small office, we pressed the launch button—never imagining how one small move could change the course ahead. Keep shipping! https://t.co/5PggD9FXla

$0 → $100M ARR in 8 months. Since we launched in March: -147 trillion tokens processed -80M+ virtual computers created -Total revenue run rate over $125M Thank you to everyone building with us. https://t.co/TZJ3n162zl

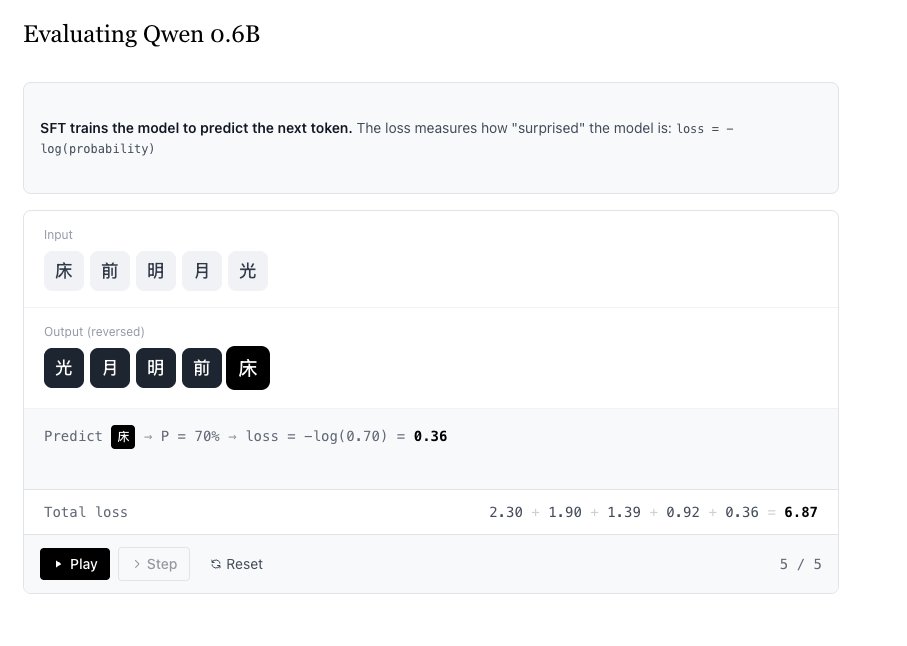

Working on a new RL article based on what I've been mucking around with :) Finally cracked these custom visualisations https://t.co/OgXiMnerBw

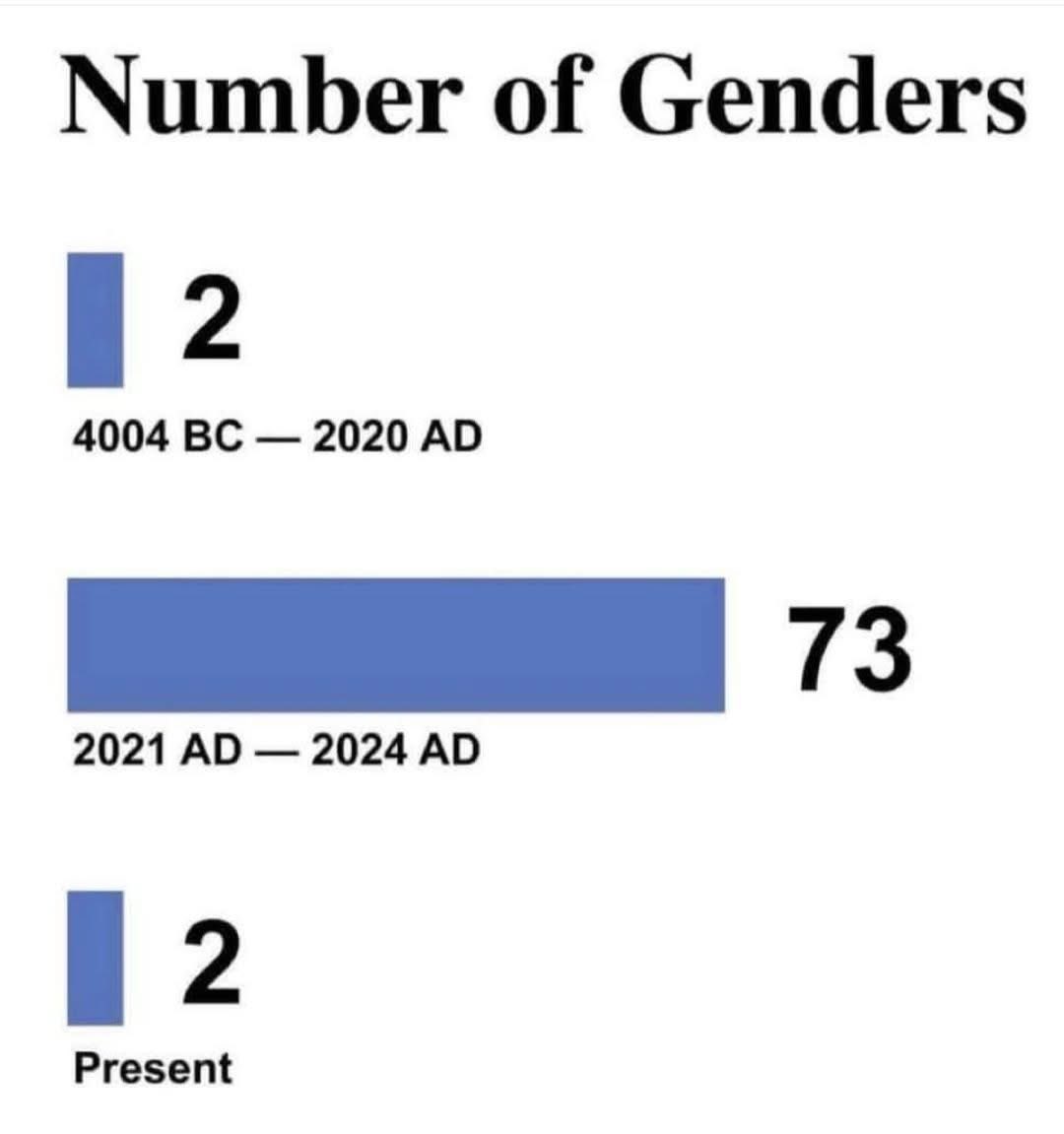

There’s only 2 genders https://t.co/iEtLBYz3Pv

John Carmack on what he admires about Elon Musk Programming legend John Carmack is asked about his relationship with Elon Musk, to which he replies: “In some ways we have a similar background. We’re almost exactly the same age, have backgrounds programming personal computers, and have even read similar books that have turned us into the people we are today.” John first met Elon when he was building Armadillo Aerospace. Elon visited Armadillo with his right-hand propulsion guy, and the three of them talked about rockets. “I think in many corners [Elon] does not get the respect he should for being a wealthy person who could just retire,” John says. “He went all-in, and he could’ve gone bust. There’s plenty of athletes or entertainers who had all the money in the world and blew it. [Elon] could’ve been the business case example of that with the things he was doing: space exploration, electrification of transportation, and Solar City type things. These are big, world-level things. And I have a great deal of admiration that he was willing to throw himself so completely into that.” John contrasts this with the way he approached his own aerospace company: “I was doing Armadillo Aerospace in this tightly-bounded way. It was ‘John’s crazy money’ at the time that had a finite limit on it. It was never going to impact me or my family if it completely failed, and I was still hedging my bets working at id Software at a time when [Elon] had been really all-in. I have a huge amount of respect for that.” It also irritates John when people call Elon “just a business guy”: “Elon was deeply involved in a lot of the [technical] decisions. Not all of them were perfect, but he cared very much about engine material selection and propellant selection. For years he’d be telling me to ‘Get off that hydrogen peroxide stuff. 
Liquid oxygen is the only proper oxidizer for this.’ And the times that I’ve gone through the factories with him, we talked about very detailed things like how this weld is made or how this subassembly goes together. He’s really in there at a very detailed level . . . I worry a lot that he’s stretched too thin. He’s got the Boring Company and Neuralink and Twitter too, whereas I know I have limits on how much I can pay attention to.” John continues: “I look back at my aerospace side of things, and I’m like, ‘I did not go all-in on that.’ I did not commit myself at a level that it would’ve taken to be successful there. And it’s a weird thing having a discussion with him. He’s the richest man in the world right now, but he operates on a level that is still very much in my wheelhouse on the technical side of things.” Video source: @lexfridman (2022)

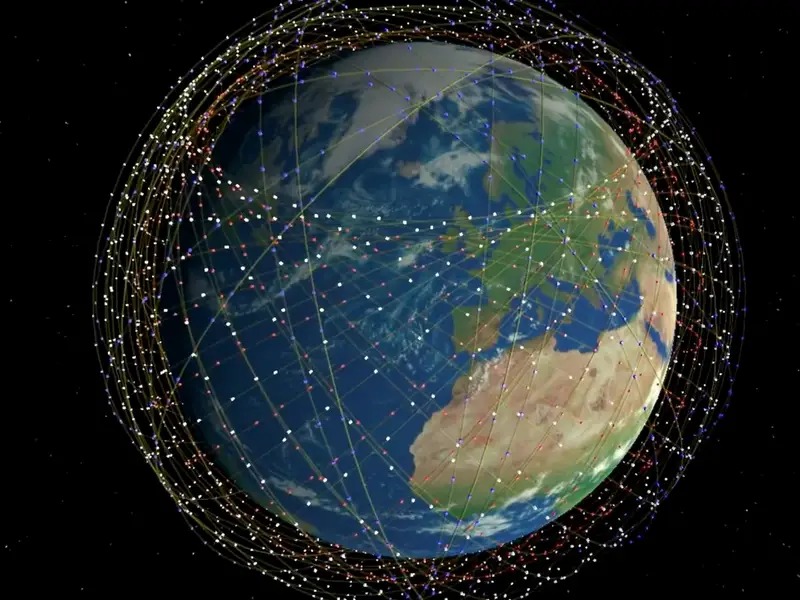

🚨 STARLINK HITS 8.6 MILLION USERS: ELON’S SKY-NET IS OFFICIALLY ONLINE Starlink just crossed 8.6 million subscribers, turning Elon’s space-based internet experiment into a full-blown global ISP juggernaut. What started as a lifeline for remote areas is now delivering high-speed internet to dozens of countries, connecting war zones, deserts, mountains, and basically anyone with a dish and a clear view of the sky. No government involvement and no telecom monopoly. Just thousands of satellites, beaming WiFi from orbit like it’s sci-fi made real. And with 10,000+ satellites expected by 2026, this isn’t a network, it’s orbital dominance. Next step? Probably streaming Netflix from Mars. Source: @muskonomy, @Starlink

The Exodus https://t.co/kKP6rcrt7P

NEWS: Lee So-young has become the first member of the South Korean government (National Assembly) to share their experience of @Tesla FSD (Supervised). Her reaction: "It drives just as well as most people do. It already feels like a completed technology, which gives me a lot to think about. Once it actually spreads into widespread use, I feel like our daily lives are going to change a lot."

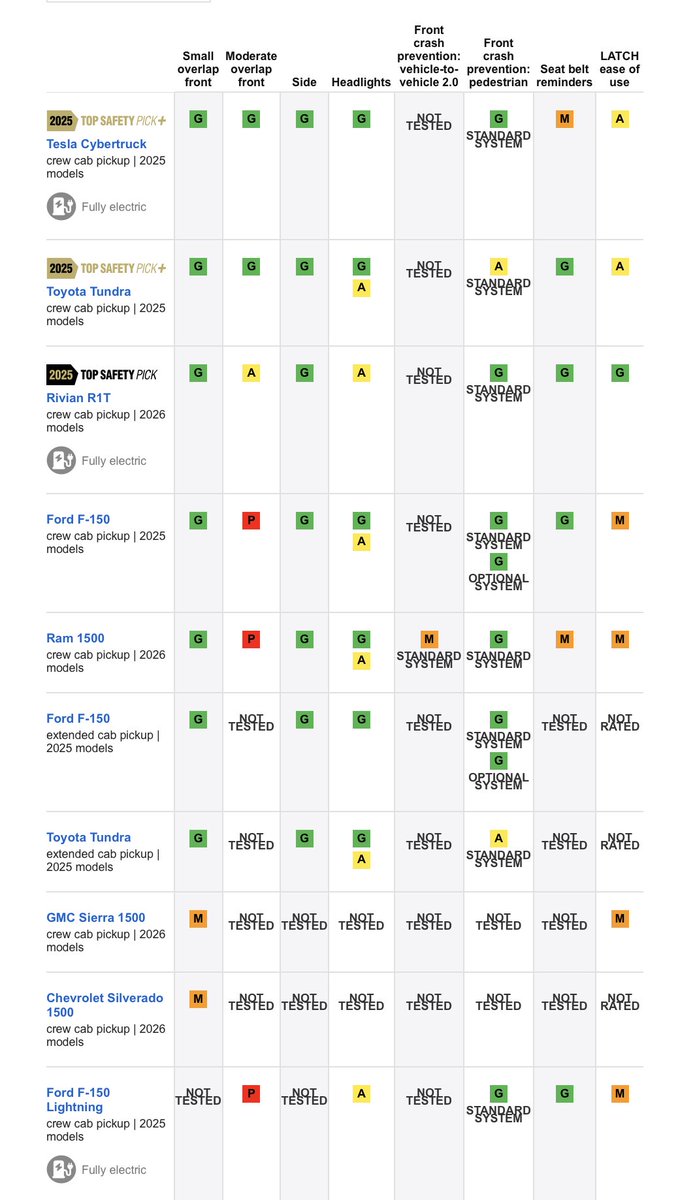

NEWS: The Tesla Cybertruck has just earned a Top Safety Pick+ rating from the IIHS, the best possible rating, and scored overall higher than any other pickup truck, gas or electric, on the list, including the Ford F-150, Rivian R1T, Ram 1500 & Toyota Tundra. Congrats @Tesla team https://t.co/FXZuJVkqLt

Humans used to build cool things Grok is amazing https://t.co/AUYQokYmaL

Javier Milei on Censorship in UK 🇬🇧 "Since the socialists came to power, they have been jailing people for posting on social media, and the journalists here would like that too, because they don't like that they have lost the monopoly of the microphone and the ability to use that tool to extort and smear, to defame without any cause. Social media makes them accountable, and they don't like it. Now the people are doing it organically because they realized many of them are criminals."

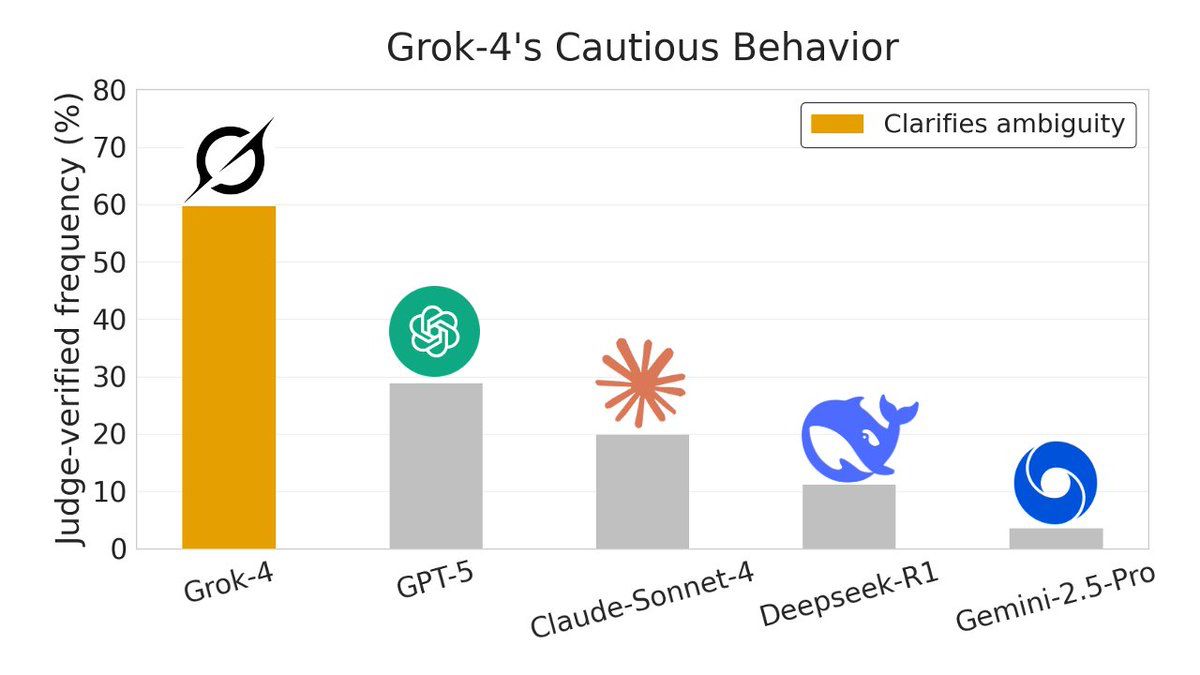

GROK-4 OUTPERFORMS TOP AI MODELS ON TRUTH SEEKING AND CAUTIOUS REASONING New research using Sparse Autoencoders shows a clear behavioral gap among frontier AI models when facing uncertainty. Instead of guessing, Grok-4 consistently pauses, clarifies assumptions, and seeks truth before answering. In ambiguity clarification tests, Grok-4 triggered this behavior roughly 70 percent of the time, far ahead of GPT-5, Claude Sonnet-4, DeepSeek-R1, and Gemini-2.5-Pro. The study flips interpretability on its head by using SAEs to analyze outputs themselves, treating latent features as thousands of soft labels for unstructured text. SAEs are a tool used to understand what AI models are actually thinking. Normally, big AI models have millions of internal signals firing at once, all tangled together. It’s powerful, but messy and hard to interpret. Across four analysis tasks, SAEs outperformed baseline methods and revealed model “personalities” directly from behavior. Grok’s edge appears tied to its design philosophy: prioritize truth seeking, surface uncertainty, and avoid confident nonsense. When reality is unclear, caution beats speed, and the data shows it. Source: @NickJiang, Alignment Forum, arXiv @grok

There it is: Tesla, $TSLA, is officially back in record high territory, up +130% from its April 2025 low. That’s +$900 BILLION in market cap in 7 months. Absolutely incredible. https://t.co/LBjDsnQnG8

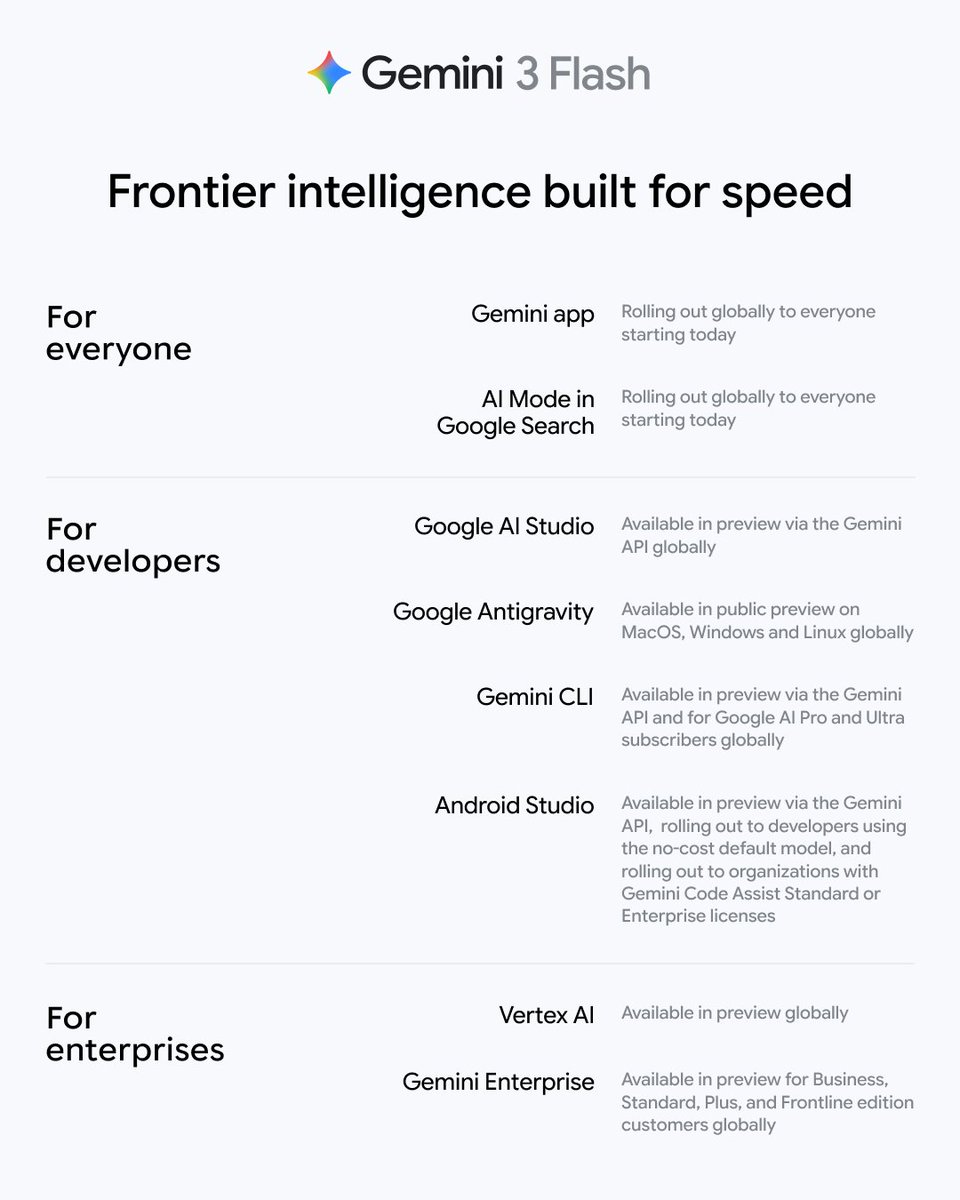

We’re expanding the Gemini 3 family with the launch of Gemini 3 Flash. This model:
- Combines Gemini 3’s Pro-grade reasoning with Flash-level latency, efficiency, and cost
- Delivers frontier-level performance on PhD-level reasoning and knowledge benchmarks
- Is our most impressive model for agentic workflows

Watch Gemini 3 Flash handle hundreds of function calling options at low latency, compiling global recipes from 100 ingredients and 100 kitchen tools.

We’re rolling out Gemini 3 Flash starting today. Here’s where you can find it: https://t.co/xzcbc1wE6A

Improving RAG with Forward and Backward Lookup This is a clever use of small and large language models. Traditional RAG systems compute similarity between the query and context chunks, retrieve the highest-scoring chunks, and then generate. But complex queries often lack sufficient signal to retrieve what's actually needed. It would help if the RAG system could peek into potential future generations. The paper introduces FB-RAG (Forward-Backward RAG), a training-free framework that does exactly that to improve retrieval. A lightweight LLM generates multiple candidate answers with reasoning, and the chunks most relevant to those attempted answers get scored highest for retrieval. Even when the smaller model fails to answer correctly, its reasoning attempts contain enough relevant language to identify the right context chunks for a more powerful model. The approach works in three stages. Stage I uses an off-the-shelf retriever to narrow the context, optimizing for recall. Stage II runs a lightweight LLM (8B parameters) on that reduced context, samples multiple reasoning+answer outputs, and scores each original chunk by how well it matches any sampled output. Stage III feeds only the highest-scoring chunks to a powerful generator (70B parameters) for the final answer. The results are consistent across 9 datasets from LongBench and ∞Bench. On EN-QA, FB-RAG matches the leading baseline with over 48% latency reduction, or achieves 8% performance improvement with 10% latency reduction. The approach outperforms OP-RAG, Self-Route, and vanilla RAG across QA, summarization, and multiple choice tasks. Forward-looking alone outperforms combining forward and backward components. Setting the backward weight to zero consistently produces better results than averaging, indicating that once you have LLM-generated reasoning, the original query adds no useful signal. Even a 3B model for forward lookup shows visible improvements. 
The reasoning doesn't need to be correct. It just needs to contain relevant language that points toward the right chunks. Smaller models can systematically improve larger ones without fine-tuning or reinforcement learning. The lightweight forward pass filters context more precisely than query-based retrieval, reducing both noise and latency for the final generation step. Paper: https://t.co/RiRyx0A6tC Learn to build RAG systems and AI Agents in our academy: https://t.co/zQXQt0PMbG
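The three stages described in this post can be sketched in a few lines. This is a toy illustration under loud assumptions, not the paper's implementation: `similarity` is a plain word-overlap (Jaccard) score standing in for a real retriever, `toy_sampler` is a hypothetical stub standing in for the lightweight LLM, and the function names, parameters, and example chunks are all invented for the sketch. The `backward_weight` default of 0 mirrors the post's note that forward-only scoring did best.

```python
import re
from typing import Callable, List


def words(text: str) -> set:
    """Lowercased word set; a crude stand-in for a real embedding."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def similarity(a: str, b: str) -> float:
    """Jaccard overlap between two texts (assumed toy metric)."""
    wa, wb = words(a), words(b)
    if not wa or not wb:
        return 0.0
    return len(wa & wb) / len(wa | wb)


def fb_rag_select(
    query: str,
    chunks: List[str],
    sample_fn: Callable[[str, List[str]], List[str]],
    recall_k: int = 3,
    final_k: int = 2,
    backward_weight: float = 0.0,
) -> List[str]:
    # Stage I: recall-oriented narrowing using query-chunk similarity.
    stage1 = sorted(chunks, key=lambda c: similarity(query, c), reverse=True)
    stage1 = stage1[:recall_k]

    # Stage II: a lightweight model samples reasoning+answer attempts,
    # and each chunk is scored by its best match to any sample (forward)
    # optionally mixed with its match to the query (backward).
    samples = sample_fn(query, stage1)

    def score(chunk: str) -> float:
        forward = max((similarity(s, chunk) for s in samples), default=0.0)
        backward = similarity(query, chunk)
        return (1 - backward_weight) * forward + backward_weight * backward

    # Stage III would pass these chunks to the large generator (not shown).
    return sorted(stage1, key=score, reverse=True)[:final_k]


def toy_sampler(query: str, context: List[str]) -> List[str]:
    """Hypothetical stub for the small LLM; returns one canned attempt."""
    return [
        "The tower in Paris was probably designed by Gustave Eiffel's "
        "company, so the answer is Eiffel."
    ]


CHUNKS = [
    "The Eiffel Tower was completed in 1889 for the World's Fair.",
    "Gustave Eiffel's company designed and built the tower in Paris.",
    "Paris is the capital of France.",
    "Bananas are rich in potassium.",
]
QUERY = "Who built the famous tower in Paris?"

selected = fb_rag_select(QUERY, CHUNKS, toy_sampler)
for chunk in selected:
    print(chunk)
```

In a real system the sampler would call the small model several times with nonzero temperature, and the selected chunks would form the prompt for the larger generator.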

The next scaling frontier isn't bigger models. It's societies of models and tools. That's the big claim made in this concept paper. It actually points to something really important in the AI field. Let's take a look: (bookmark for later) Classical scaling laws relate performance to parameters, tokens, and compute. More of each, better loss. These laws have driven a decade of progress. But they describe a single-agent world: one model, static corpus, one prompt at a time. There is a clear misalignment with how real-world problems actually work. This new perspective paper argues that scaling must expand along three new axes: population, organization, and institution. Not just how many parameters, but how many agents, how they're connected, and what norms govern their interaction. Simply adding more agents doesn't monotonically improve performance. Early experiments in multi-agent debate show that naive agent swarms can degenerate into majority herding, where the first plausible-but-wrong answer locks in and gets reinforced through subsequent rounds. Groups of frontier models fail to integrate distributed information, displaying human-like collective failures. The paper proposes three interaction regimes for multi-agent systems: 1) Competition: debate, adversarial critique, self-play. 2) Collaboration: role specialization, division of labor, complementary expertise. 3) Coordination: orchestrated workflows, planner-worker hierarchies, reliable execution. Which regime fits which task matters. Competitive regimes suit focused reasoning problems with clear correctness criteria. Collaborative regimes fit open-ended design work where diverse skills are needed. Coordinated regimes handle long-horizon, safety-critical workflows. The architectural implications are significant. Effective multi-agent systems need cognitive diversity: agents with different priors, reasoning styles, and tool access. 
They need institutional memory: persistent artifacts that outlive individual sessions, analogous to lab notebooks and version control. They need communication topologies: not just broadcast or hub-and-spoke, but structured graphs that balance diversity and coherence. Training objectives must change, too. Current models optimize individual next-token prediction. Multi-agent systems need collective objectives: group accuracy, calibration, hypothesis diversity, and conflict resolution quality. The paper proposes "multi-agent pretraining" where debate, peer review, and negotiation become first-class optimization targets. Paper: https://t.co/OqwIIeJLYr Learn to build AI agents in my academy: https://t.co/JBU5beIoD0
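The "majority herding" failure mentioned in this post can be illustrated with a toy information-cascade model. This is a swapped-in textbook-style simulation, not the paper's experiment: agents speak in turn, each holds a private answer, and each defers to the public majority once its lead reaches a threshold. The function name, the `lead_to_herd` parameter, and the example signals are assumptions made for the sketch.

```python
from typing import Dict, List


def sequential_debate(private_signals: List[str], lead_to_herd: int = 2) -> List[str]:
    """Return the public answers given in order by each agent."""
    public: List[str] = []
    for signal in private_signals:
        # Tally the answers that have been stated publicly so far.
        counts: Dict[str, int] = {}
        for answer in public:
            counts[answer] = counts.get(answer, 0) + 1
        ranked = sorted(counts.items(), key=lambda kv: kv[1], reverse=True)

        if ranked:
            top_answer, top_count = ranked[0]
            runner_up = ranked[1][1] if len(ranked) > 1 else 0
            # Herd: ignore the private signal once the majority lead is large.
            if top_count - runner_up >= lead_to_herd:
                public.append(top_answer)
                continue

        # Otherwise the agent reports its own private answer.
        public.append(signal)
    return public


# Four of six agents privately hold the right answer,
# but the two that speak first happen to be wrong.
signals = ["wrong", "wrong", "right", "right", "right", "right"]
outcome = sequential_debate(signals)
print(outcome)
```

In this toy setup the group's final answers depend on speaking order rather than on how many agents privately hold the correct answer, which is one way to picture why simply adding agents need not help.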

Ever opened a repo and thought: “What does this codebase actually do?” “Where did I put that file?” 🤔 You’re not alone. With the release of Gemini 3 Flash ⚡ from @GoogleDeepMind, we decided to build something fun (and useful): a file-system explorer agent that answers those questions for you. 🔍 What makes it cool? 🔧 Tool-powered exploration: the agent can read, grep, and glob your files 📄 Real-time parsing: unstructured files are instantly turned into clean, readable Markdown using LlamaParse ❓ Interactive by design: the agent asks clarifying and follow-up questions when things get ambiguous ♻️ Agentic workflows: runs are guided by looping, branching, and human-in-the-loop patterns for controlled, effective exploration 🎥 Check out the demo below! 💻 GitHub: https://t.co/6DeVZIhWvw 📚 Learn more about LlamaIndex Agent Workflows: https://t.co/d13Xoa5ycC 🦙 Get started with LlamaParse: https://t.co/gjFS6evAiT

Gemini 3 Flash gives you frontier intelligence at a fraction of the cost. ⚡ Here’s how it’s built for speed and scale 🧵

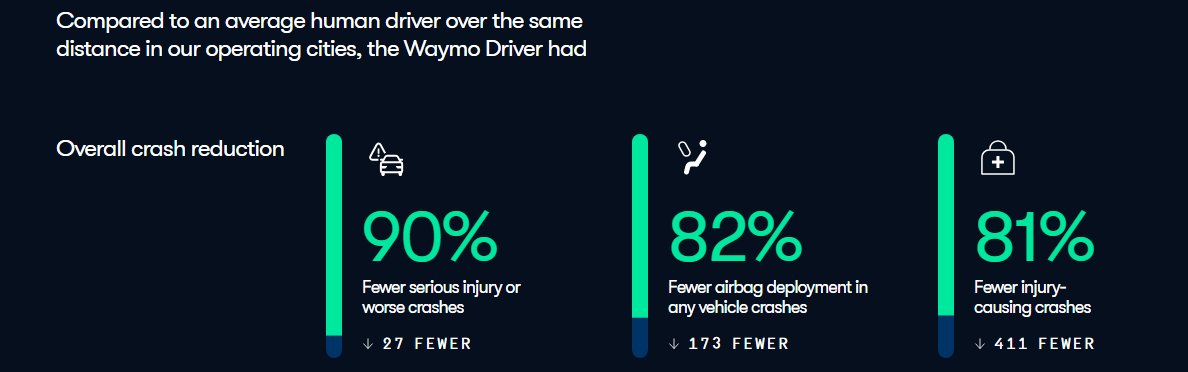

We have exactly these statistics for self-driving cars and those statistics play a central role in the discussions about the technology. https://t.co/adFdlR2duK

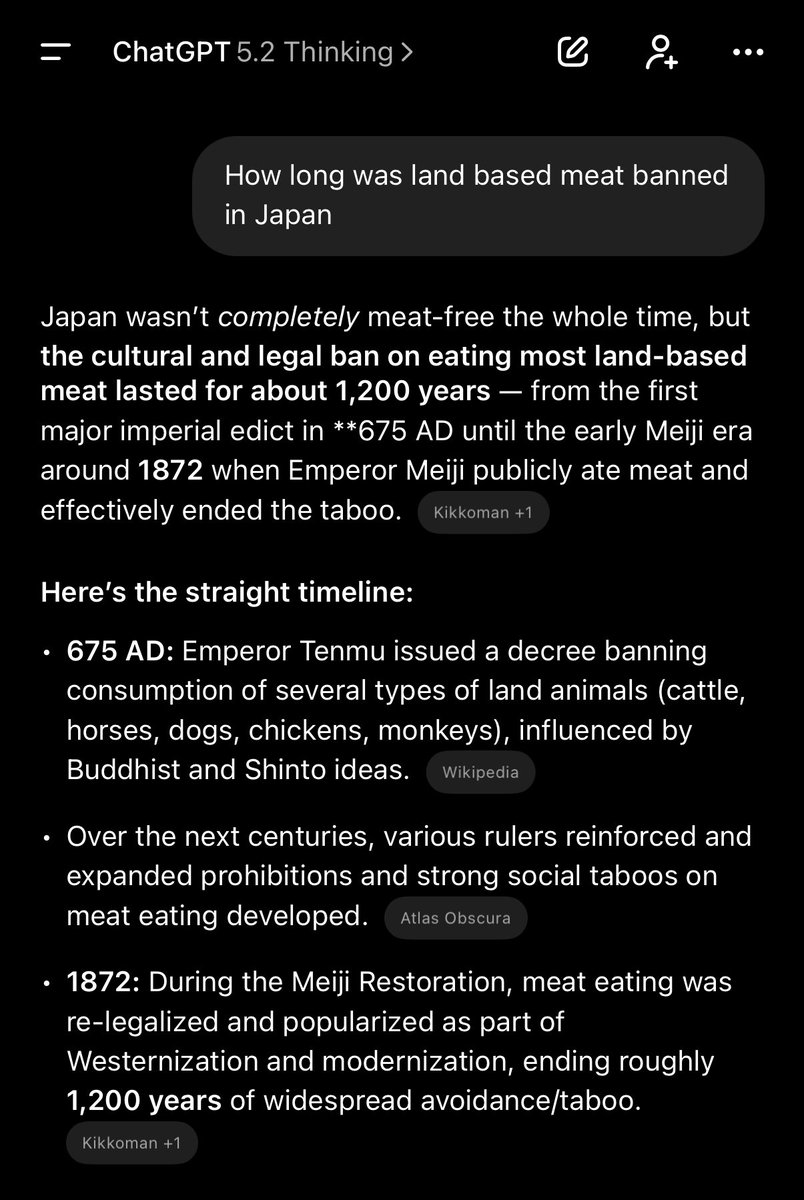

Til https://t.co/RL6j9JWTGL

@ManusAI hit 100M! Happy to be here for the ride and to help make my little dent :) i guess next milestone 1b? https://t.co/fy1nmFxsnE

It’s official: Manus just hit $100M ARR in 8 months. Working at a startup is like building a rocket ship while flying it. Some days are chaotic, but moments like these? You just have to be there. So proud to be part of this journey. We're hiring! If you want to build the future, come join us.

Elon Musk in 2019 on LiDAR: “They’re all going to dump LIDAR; that’s my prediction. I should point out that I don’t actually super hate LIDAR as much as it may sound. But at SpaceX, SpaceX Dragon uses LIDAR to navigate to the space station and dock. Not only that, SpaceX developed its own LIDAR from scratch to do that, and I spearheaded that effort personally. Because in that scenario, LIDAR makes sense. In cars, it’s freaking stupid. Once you solve Vision, it’s worthless.”

BREAKING: Grok Voice API is now live. It lets you build apps that talk with users in real time. It listens, understands speech in many languages, and replies with natural voices, making it easy to build voice assistants or phone agents. Features • 5 built in voices to choose from: Ara, Rex, Sal, Eve, Leo • Live voice conversations using WebSocket • Understands and replies in many languages • Natural sounding voice options • Low delay for smooth back and forth speech • Can use tools like web search and X search Great for voice assistants and phone agents.

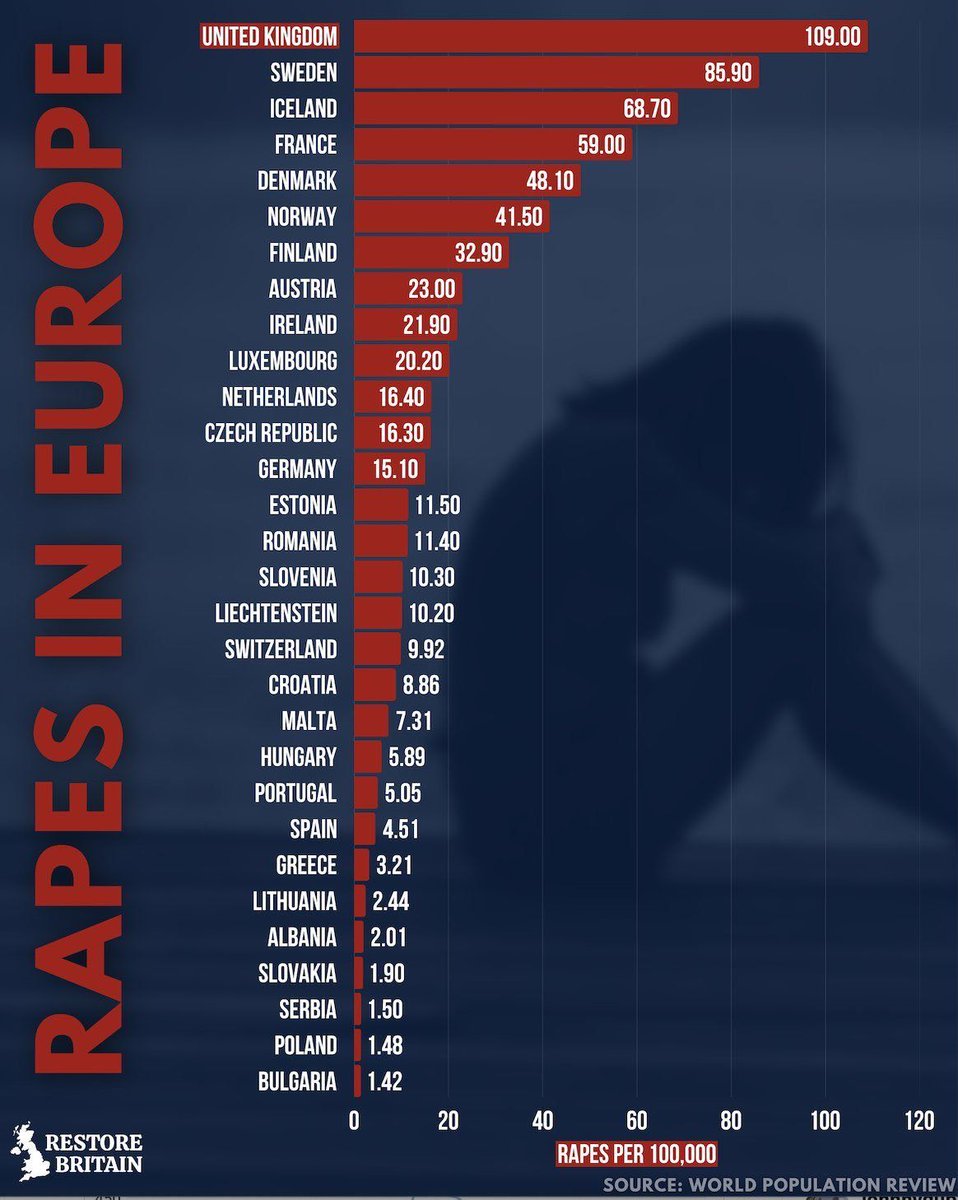

🚨🇬🇧 UK LEADS EUROPE IN RAPE RATES - SHOCKING STATS SPARK DEBATE ON IMMIGRATION, CRIME, AND CULTURE New data's out, and it's grim. The UK is sitting at the top of Europe's rape incidence chart with a staggering 109.06 cases per 100,000 people, far ahead of Sweden at 68.50 and France at 59.00. The figures come from Restore Britain's analysis of World Population Review data. The drop-off after the UK is steep. Norway stands at 41.50, Denmark at 32.50, and rates fall sharply from there. On raw numbers alone, the UK is an extreme outlier. This isn't just statistics - it's a political and cultural flashpoint. Many argue mass immigration policies and weak border controls have imported cultural conflicts and driven crime spikes. Others focus on failures inside the system itself: low conviction rates, collapsed trust in policing, and victim-blaming dynamics that discourage reporting. Comparing rape statistics across countries is notoriously messy. Definitions differ, counting methods vary, and reporting incentives are not uniform. Still, the UK's numbers are difficult to dismiss. High-profile cases, collapsing conviction rates, and growing public anger are pushing the issue into the center of national debate. Calls are mounting for tougher immigration controls, stronger cultural integration expectations, and major reforms in how sexual violence is investigated and prosecuted. Whatever the cause, the current trajectory is obviously unsustainable. Source: Restore Britain, World Population Review