@MarioNawfal

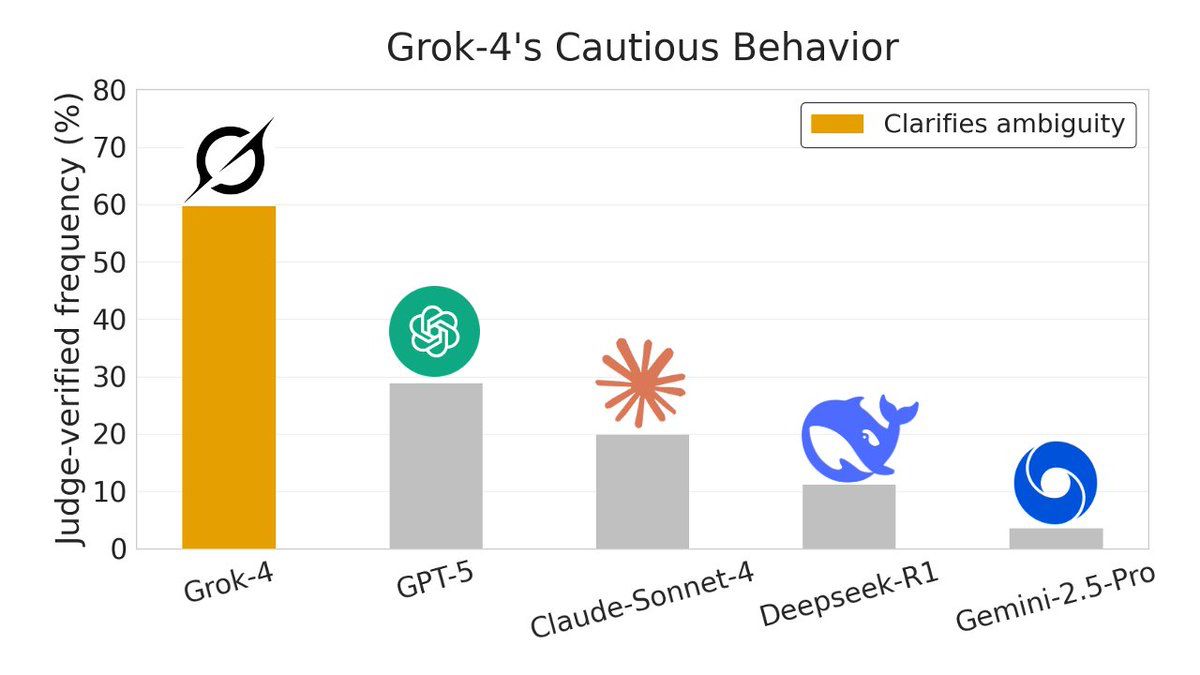

GROK-4 OUTPERFORMS TOP AI MODELS ON TRUTH SEEKING AND CAUTIOUS REASONING

New research using Sparse Autoencoders (SAEs) shows a clear behavioral gap among frontier AI models when facing uncertainty.

Instead of guessing, Grok-4 tends to pause, clarify assumptions, and seek truth before answering. In ambiguity clarification tests, Grok-4 triggered this behavior roughly 70 percent of the time, far ahead of GPT-5, Claude Sonnet-4, DeepSeek-R1, and Gemini-2.5-Pro.

SAEs are an interpretability tool: they break a model's internal activations into sparse features that are easier to read. Normally, big AI models have millions of internal signals firing at once, all tangled together. It’s powerful, but messy and hard to interpret.

The study flips interpretability on its head by using SAEs to analyze the outputs themselves, treating latent features as thousands of soft labels for unstructured text. Across four analysis tasks, SAEs outperformed baseline methods and revealed model “personalities” directly from behavior.

Grok’s edge appears tied to its design philosophy: prioritize truth seeking, surface uncertainty, and avoid confident nonsense. When reality is unclear, caution beats speed, and the data shows it.

Source: @NickJiang, Alignment Forum, arXiv @grok
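For the curious, here is a rough sketch of the "latent features as soft labels" idea. It is a toy illustration, not the study's actual pipeline: the function names, the 2-dimensional "embeddings", the hand-picked encoder weights, and the model names `model_a` and `model_b` are all made up. It only assumes the standard SAE encoder form, ReLU(x · W_enc + b_enc), and then counts how often each latent fires on each model's responses.

```python
def sae_encode(x, W_enc, b_enc):
    """Encode one embedding with the standard SAE form: ReLU(x @ W_enc + b_enc)."""
    return [
        max(sum(x[i] * W_enc[i][j] for i in range(len(x))) + b_enc[j], 0.0)
        for j in range(len(b_enc))
    ]


def firing_rates(embeddings, model_ids, W_enc, b_enc):
    """For each model, the fraction of its responses on which each latent is active."""
    counts, totals = {}, {}
    for x, model in zip(embeddings, model_ids):
        acts = sae_encode(x, W_enc, b_enc)
        fired = [1 if a > 0 else 0 for a in acts]  # each latent acts as a soft label
        prev = counts.get(model, [0] * len(fired))
        counts[model] = [p + f for p, f in zip(prev, fired)]
        totals[model] = totals.get(model, 0) + 1
    return {m: [c / totals[m] for c in counts[m]] for m in counts}


# Hypothetical toy data: four responses, two per model, as 2-dim embeddings.
embeddings = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]
model_ids = ["model_a", "model_a", "model_b", "model_b"]

# Hypothetical encoder: 2 input dims -> 3 latents (weights chosen by hand).
W_enc = [[1.0, 0.0, -1.0],
         [0.0, 1.0, 1.0]]
b_enc = [0.0, 0.0, 0.0]

rates = firing_rates(embeddings, model_ids, W_enc, b_enc)
print(rates)
```

If latent 0 were a "asks a clarifying question" feature, the gap in its firing rate between models would be the kind of behavioral difference the post describes. In a real setting the encoder would come from a trained SAE and each latent would need a human-readable description.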