Your curated collection of saved posts and media

US mortgage lenders insure against artificial intelligence screening errors https://t.co/ePOp7O2ohn @leee_harris @ft

In Graphic Detail: The state of AI referral traffic in 2025 https://t.co/1uP3G74U0k @Digiday

VR badminton is way too amazing, isn't it? lol https://t.co/T7Z3Ta3JPr

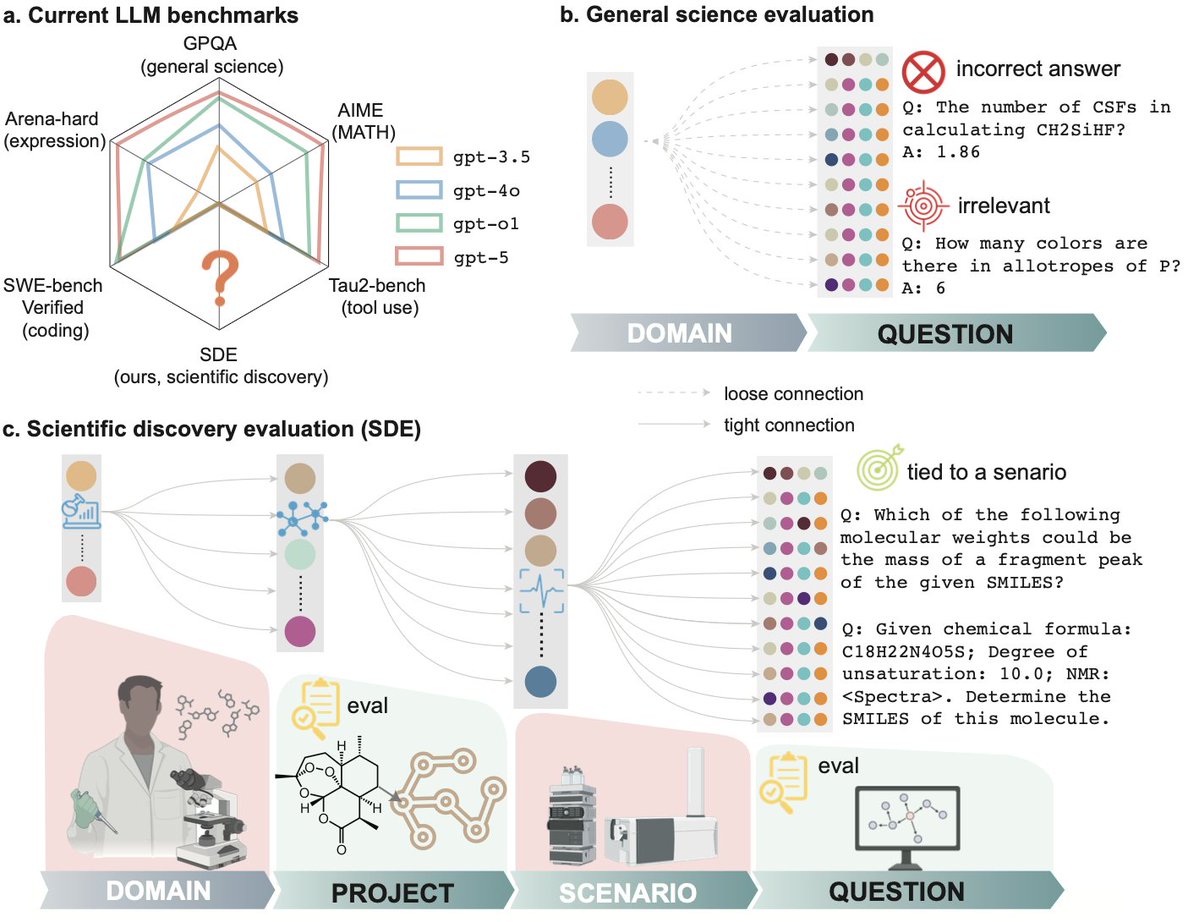

Are LLMs ready for real scientific discovery? To find out, we gathered 50+ scientists from 20+ institutions to establish a multi-level evaluation framework that covers not only questions but also research scenarios and projects.
Current science benchmarks (like GPQA and MMMU) ask AI to answer quizzes. But science isn't a quiz. It’s an iterative loop of hypothesis, experiment, and analysis. Mastery of static, decontextualized questions, even if perfect, does not guarantee readiness for discovery, just as earning straight A’s in coursework does not make someone a great researcher.
Today, we introduce Scientific Discovery Evaluation (SDE): a benchmark grounded in real-world research projects. Research projects are decomposed into modular research scenarios, from which vetted questions are sampled. LLMs are evaluated at two levels:
1. Question-level: targeted, expert-written problems embedded in real research scenarios (elucidating structure from NMR, forward reaction prediction, etc.), NOT sub-domains (analytical chemistry, inorganic materials, etc.)
2. Project-level: realistic scientific discovery loops (e.g., molecular design, materials discovery, protein engineering) where models must iteratively propose, test, and refine hypotheses.
With the joint effort of 50+ scientists from 20+ institutes, we gathered 8 projects, 43 research scenarios, and 1125 questions. Evaluation on these multiple levels reveals where current models succeed, where they fail, and why.
It is a great joy to work with a 50+ author team for the first time in my life. Thanks to you all for making it happen. @hello_jocelynlu, @YuanqiD, @BotaoYu24, @HowieH36226, @rogerluorl18, @YuanhaoQ, @YinkaiW, @Haorui_Wang123, @JeffGuo__, @SherryLixueC, @MengdiWang10, @lecong, @ParshinShojaee @KexinHuang5 @chandankreddy, @realadityanandy, @pschwllr, @KulikGroup, @hhsun1, @MoosaviSMohamad, and many others who are not in the x-universe.
Also, it’s exciting to see a concurrent release from @OpenAI on FrontierScience yesterday (@MilesKWang)! Their findings on the need for harder, expert-vetted evals, especially the huge performance gap between Olympiad and research questions, echo ours. SDE takes this a step further by moving beyond expert-level Q&A to explicitly evaluate the end-to-end discovery loop with project-level execution, which makes finer-grained observations possible. Core Findings Below:

This is the now-deleted teaser for the 60 Minutes report on El Salvador's CECOT prison, where Trump deported hundreds of Venezuelan migrants. The report was pulled from tonight's broadcast. https://t.co/YmtHX0VrVY

https://t.co/XCOMXjRIRk

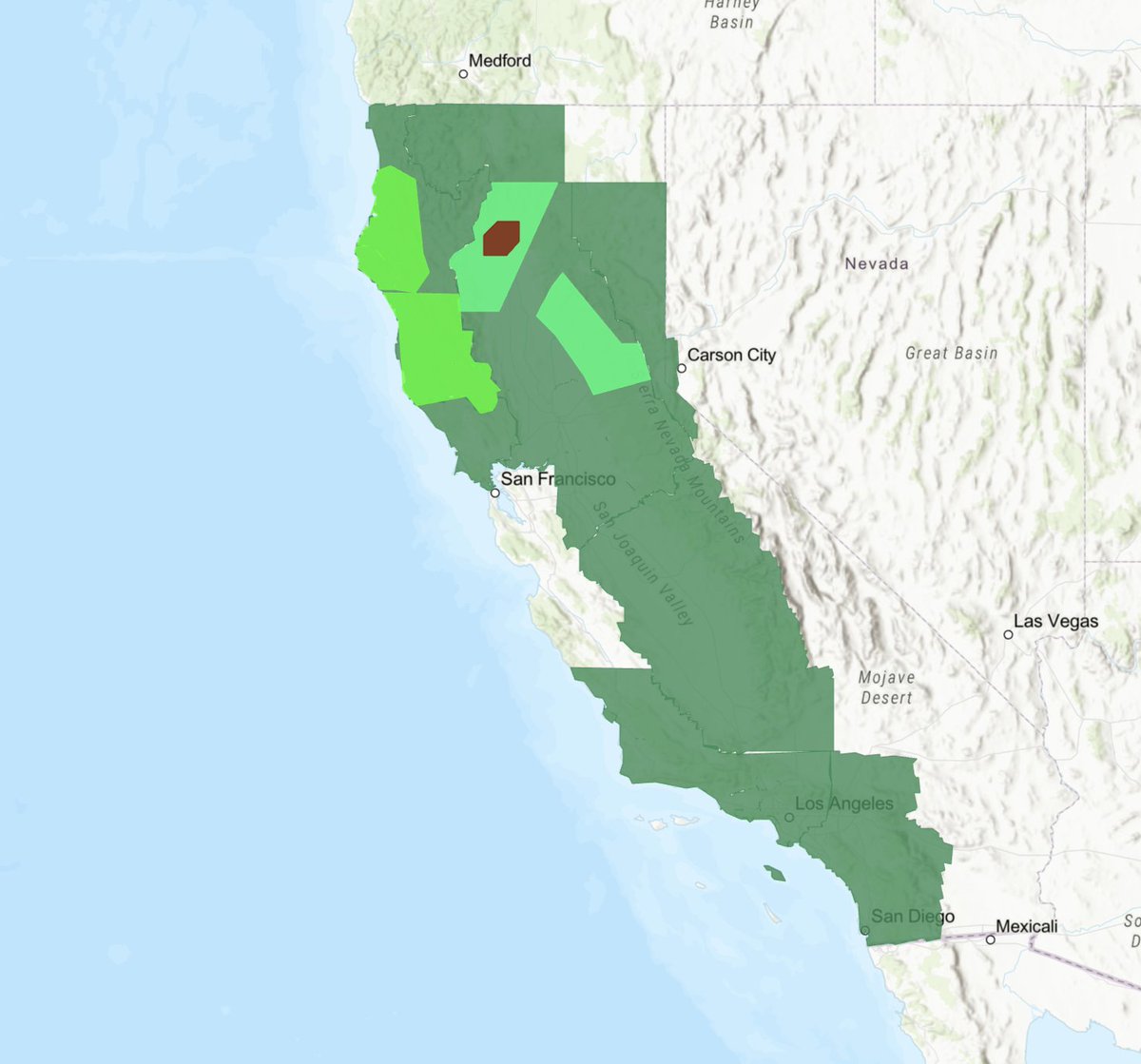

Nearly all 40 million people in California are under a Flood Watch. https://t.co/P7CmOxje4t

every time https://t.co/Kc5dLj0xIq

📢 A sign that the supermarkets get it too‼️ https://t.co/aGnLKD5B1E

Joe Pesci’s fit on the Home Alone press featurette: https://t.co/woR0sjLF17

MACROHARD from space https://t.co/Ac0ndsQTCA

New Tesla Supercharger now open in Mount Sheridan, QLD (6 stalls), the northernmost Supercharger site in AU. Built in partnership with the Queensland Government. https://t.co/p1Ik39Tt6H… https://t.co/8JSYJvQ0nw

One glorified Charlie Kirk getting killed. One politely asked to "speak English". Guess who got fired by her school? https://t.co/GkmrEUPZJp

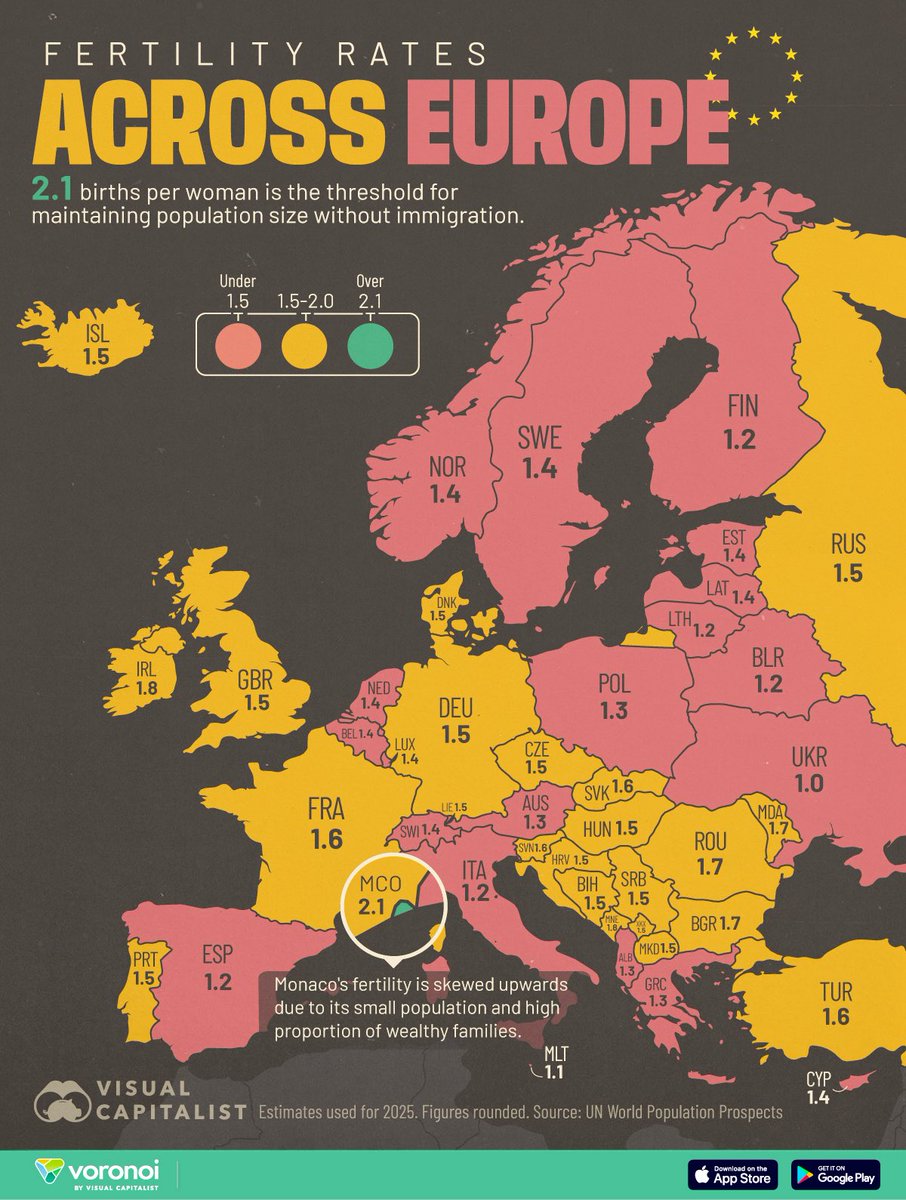

Every European country is now below replacement fertility. Civilizations disappear when they stop having children. Wake up, Europe. https://t.co/OepHSyQfeN

HH Sheikh Khalid & Elon Musk in Abu Dhabi ❤️ https://t.co/dDYn0KmCvG

New Tesla Supercharger: San Francisco, CA - Lombard Street (16 stalls) https://t.co/JLCG9lkwXN https://t.co/c9xTcqMYBP

BREAKING: Beloved school bus driver in PA fired over sign asking kids to speak English https://t.co/LUogFggtWE

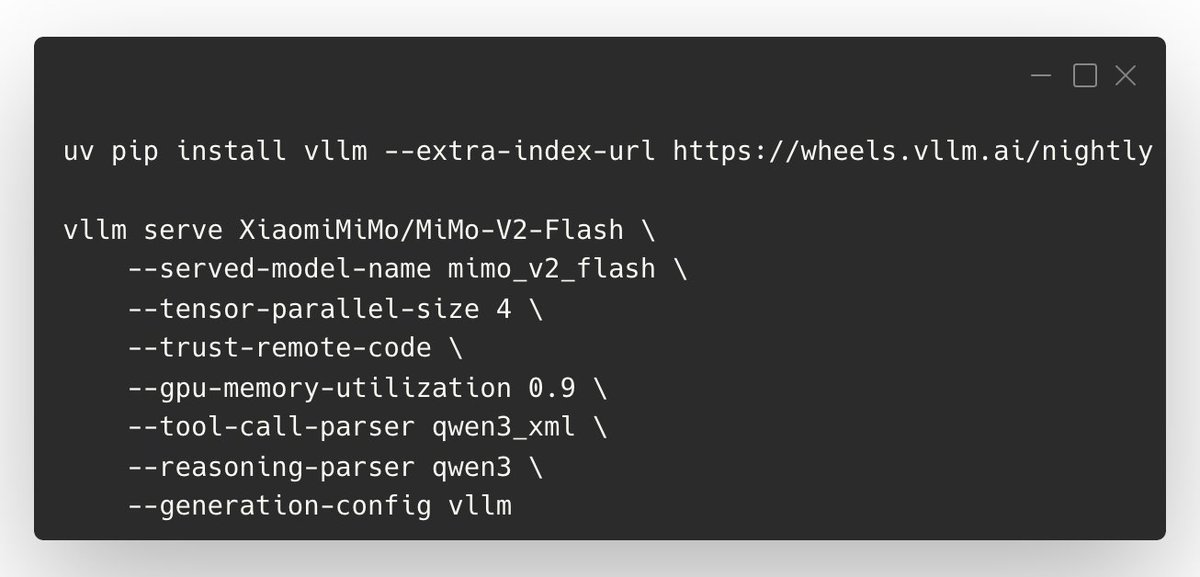

We’ve published an official vLLM Recipe for serving XiaomiMiMo/MiMo-V2-Flash, including tool calling, DP/TP/EP configs, and the key knobs to tune context length, latency, and KV cache. Commands are in the images; highlights below.
💡 Tips:
- Set --max-model-len to manage memory (common: 65536, max: 128k)
- Balance throughput vs latency with --max-num-batched-tokens (prompt-heavy: 32768; lower to 16k/8k for lower latency/activation mem)
- Increase KV cache with --gpu-memory-utilization 0.95 (default is conservative)
- Tool calling needs the right parsers (see screenshot flags)
- “Thinking mode” in API: set "enable_thinking": true; disable by setting it to false or removing chat_template_kwargs
📑 Recipe + full details: https://t.co/MpUFWCPGjm
Thanks to @XiaomiMiMo and the community for the contributions!
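
The tips above can be put together in a rough sketch. It only assembles a `vllm serve` command line and a chat request body from the values quoted in the post; it does not start a server or call one. The helper names `build_serve_command` and `build_chat_request` are made up for illustration, and the tool-calling and DP/TP/EP flags are left out because the post only shows them in screenshots.

```python
import json
import shlex

MODEL = "XiaomiMiMo/MiMo-V2-Flash"


def build_serve_command(max_model_len=65536,
                        max_num_batched_tokens=32768,
                        gpu_memory_utilization=0.95):
    """Assemble the serve command using the three knobs named in the post.

    Hypothetical helper: defaults are the "common" values from the tips.
    """
    return [
        "vllm", "serve", MODEL,
        "--max-model-len", str(max_model_len),
        "--max-num-batched-tokens", str(max_num_batched_tokens),
        "--gpu-memory-utilization", str(gpu_memory_utilization),
    ]


def build_chat_request(prompt, enable_thinking=True):
    """Build a chat-completions request body (hypothetical helper).

    Thinking mode is switched on through chat_template_kwargs; when it is
    disabled, the key is simply omitted, which the post lists as one of the
    two ways to turn it off.
    """
    payload = {
        "model": MODEL,
        "messages": [{"role": "user", "content": prompt}],
    }
    if enable_thinking:
        payload["chat_template_kwargs"] = {"enable_thinking": True}
    return payload


if __name__ == "__main__":
    # Lower the batched-token budget for a latency-sensitive deployment.
    print(shlex.join(build_serve_command(max_num_batched_tokens=16384)))
    print(json.dumps(build_chat_request("Hello"), indent=2))
```

When using the OpenAI Python client against a vLLM server, the same `chat_template_kwargs` dictionary would typically go through the client's `extra_body` argument; check the linked recipe for the exact flags the model needs.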

The AI lip-sync problem is officially SOLVED. Seedance Pro 1.5 Pro is the new SOTA model: - Native lip-syncing - Character performances that perfectly match the motion and emotion. Here's a 2-minute scene made to feel as real as possible. Copy my complete workflow below ⬇️ https://t.co/4LrvM7Vu4q

Just a very different approach to creation that involves both experimentation and refinement. This was when I was playing with images for my post on the analogy of AI as a "wizard" - experimenting with various moods and styles at high speeds, selecting interesting variations. https://t.co/Y4OhxMvit9

UPS is using a herd of robots and AI to spot fake returns. https://t.co/gCM7roYhfs @verge https://t.co/52SnOZe9Xj

Humanity May Reach Singularity Within Just 4 Years, Trend Shows https://t.co/mkNaEFzdGa @darrenthewalrus @popmech

Good morning Reachy! Just finished building the @pollenrobotics @huggingface Reachy Mini. Way beyond my expectations on the quality & polish of the product. From the excellent manual to the desktop app & SDK, all were a pleasure to use. https://t.co/svSShR4ABz

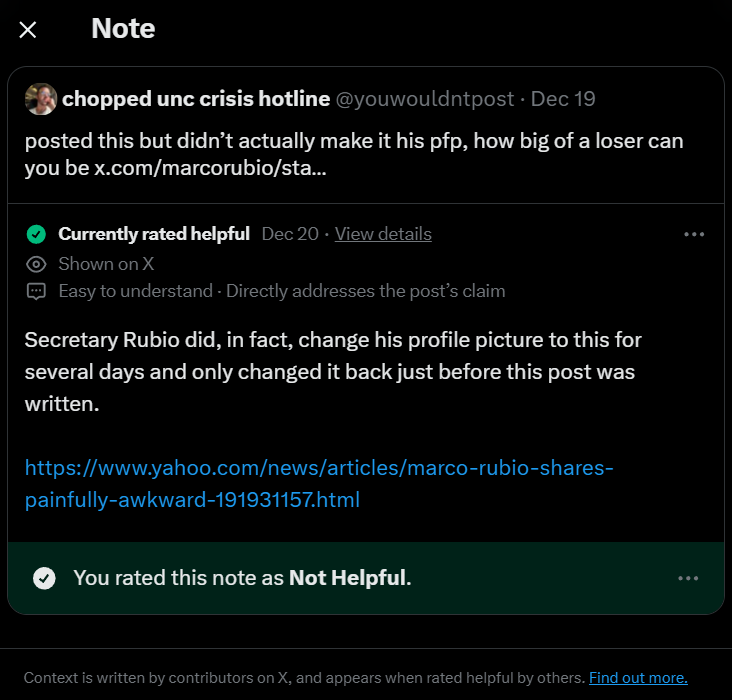

@youwouldntpost What a bunch of bitches https://t.co/vnRaFGCgx7

solstice sunset https://t.co/15dTgrxrBz

one thing about mhatwt is that we're all a happy family 💖 https://t.co/Kd47yeXfxU

A new mind-blowing finding: causal prediction alone is sufficient for strong visual learning, with no need for any fancy reconstruction, masking, or contrastive loss. In this paper, NEPA, instead of reconstructing pixels, they train a vision model to autoregressively predict the next patch embedding, and that alone yields strong visual understanding, matching or beating DINO and JEPA with a far simpler setup. Now trending on alphaXiv 📈
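
To make "predict the next patch embedding" concrete, here is a toy sketch in plain Python. It is not the paper's implementation: the negative-cosine-similarity loss and the running-mean "predictor" are assumptions chosen for illustration, and `causal_mean_predictor` and `next_embedding_loss` are hypothetical names. It shows only the shifted-target structure: the prediction at position t sees patches 0..t and is scored against the embedding of patch t+1.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v + 1e-12)


def causal_mean_predictor(embeddings):
    """Toy stand-in for a causal model (assumption, not from the post).

    The output at position t is the mean of embeddings 0..t, so it never
    looks at future patches.
    """
    preds = []
    running = [0.0] * len(embeddings[0])
    for t, emb in enumerate(embeddings, start=1):
        running = [r + x for r, x in zip(running, emb)]
        preds.append([r / t for r in running])
    return preds


def next_embedding_loss(embeddings, predictor=causal_mean_predictor):
    """Average negative cosine similarity between prediction t and embedding t+1.

    The last prediction has no target, so it is dropped.
    """
    preds = predictor(embeddings)
    sims = [cosine(p, target) for p, target in zip(preds[:-1], embeddings[1:])]
    return -sum(sims) / len(sims)


if __name__ == "__main__":
    # Four patch embeddings of dimension 3 (made-up numbers).
    patches = [[1.0, 0.0, 0.0], [0.9, 0.1, 0.0], [0.8, 0.2, 0.0], [0.7, 0.3, 0.0]]
    print(next_embedding_loss(patches))
```

In a real setup the predictor would be a trained causal transformer and the loss would be minimized by gradient descent; the point here is only that the training signal comes from the next embedding rather than from reconstructed pixels.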