Your curated collection of saved posts and media

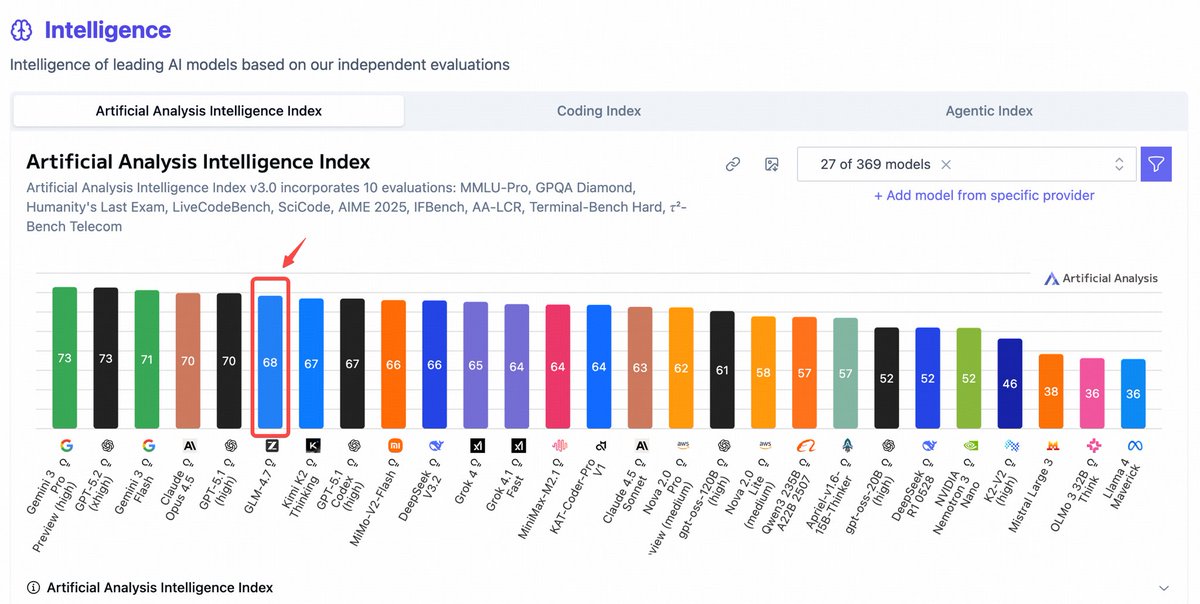

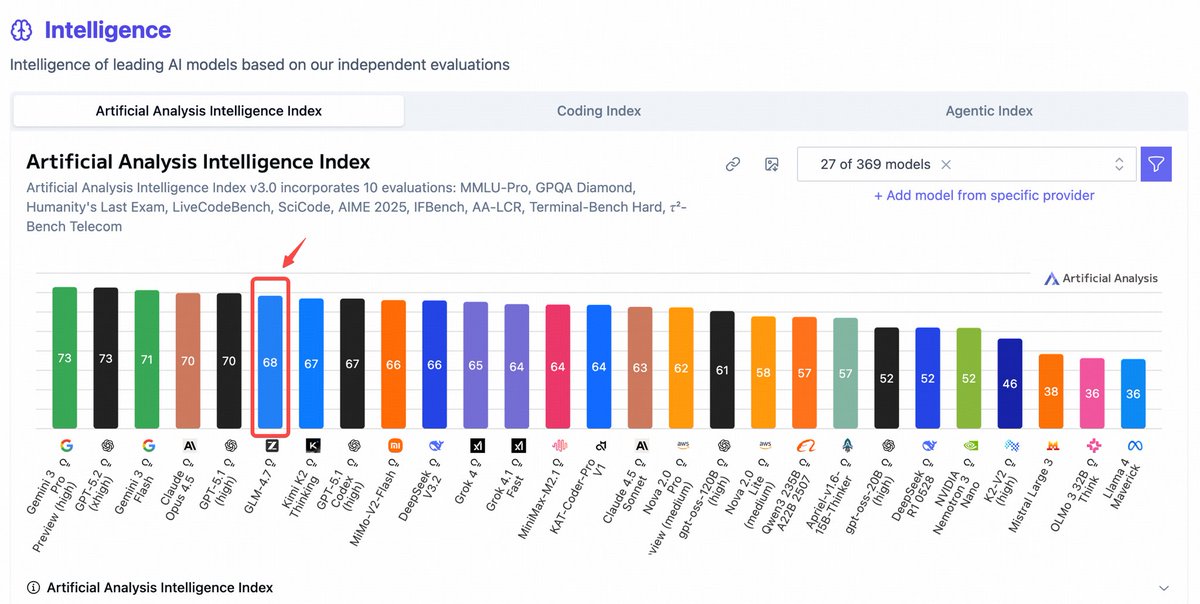

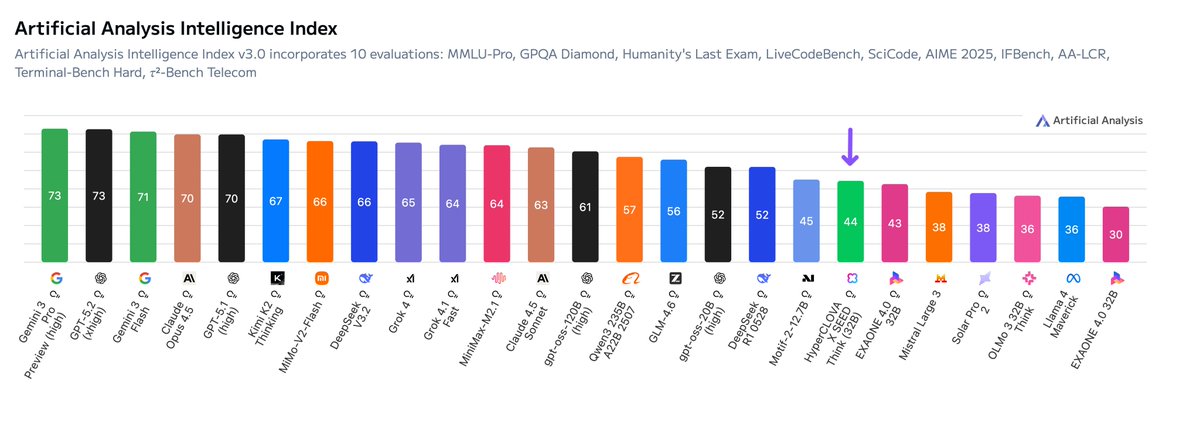

GLM-4.7 ranked **no.1** among all open models https://t.co/JaznkoMBML

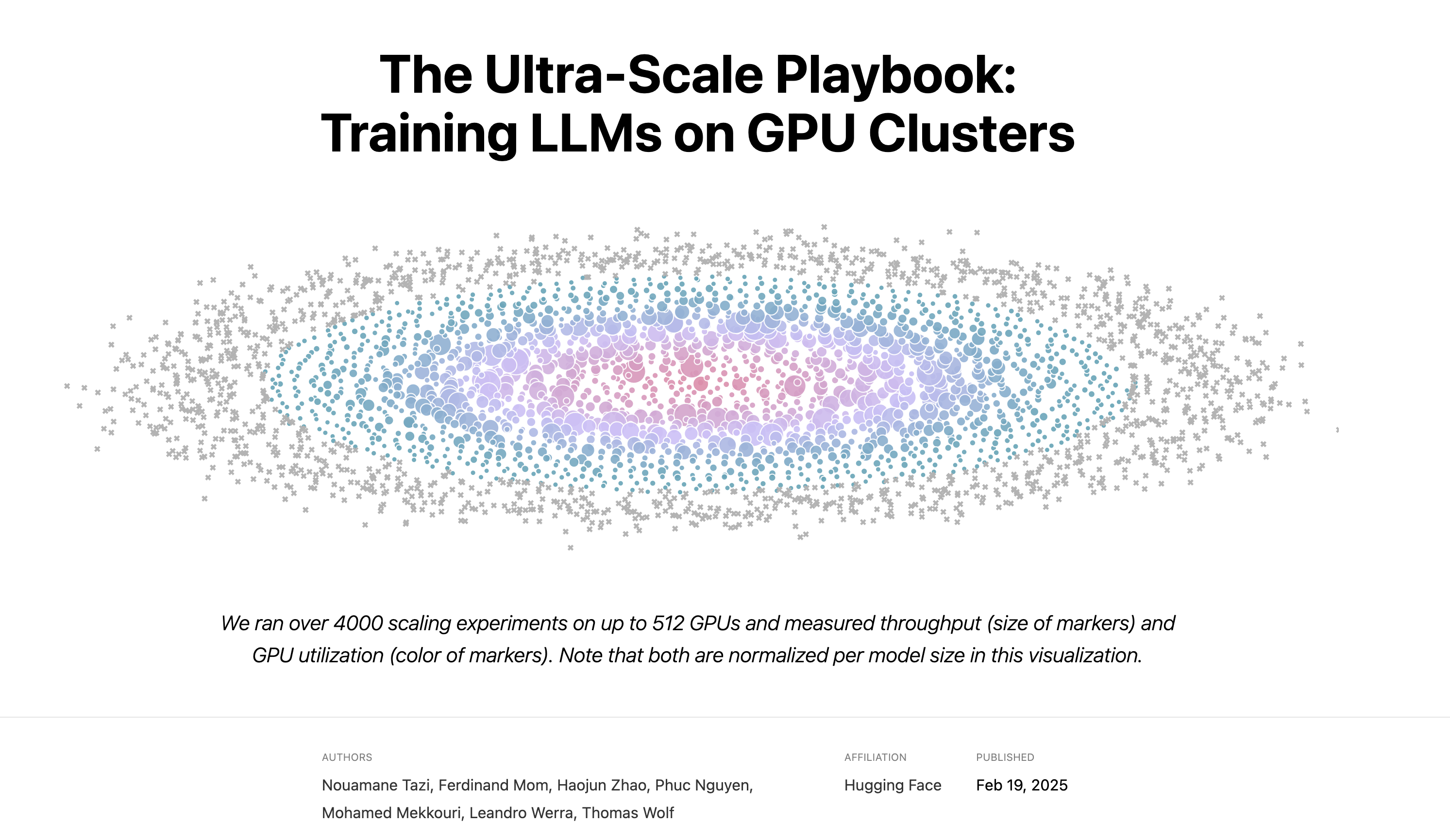

Took some days off and finally had time to read the ultrascale playbook. A bit late, but it’s really goated https://t.co/h82FMjKotl

it’s adorable!! https://t.co/2KjDpxC3sp

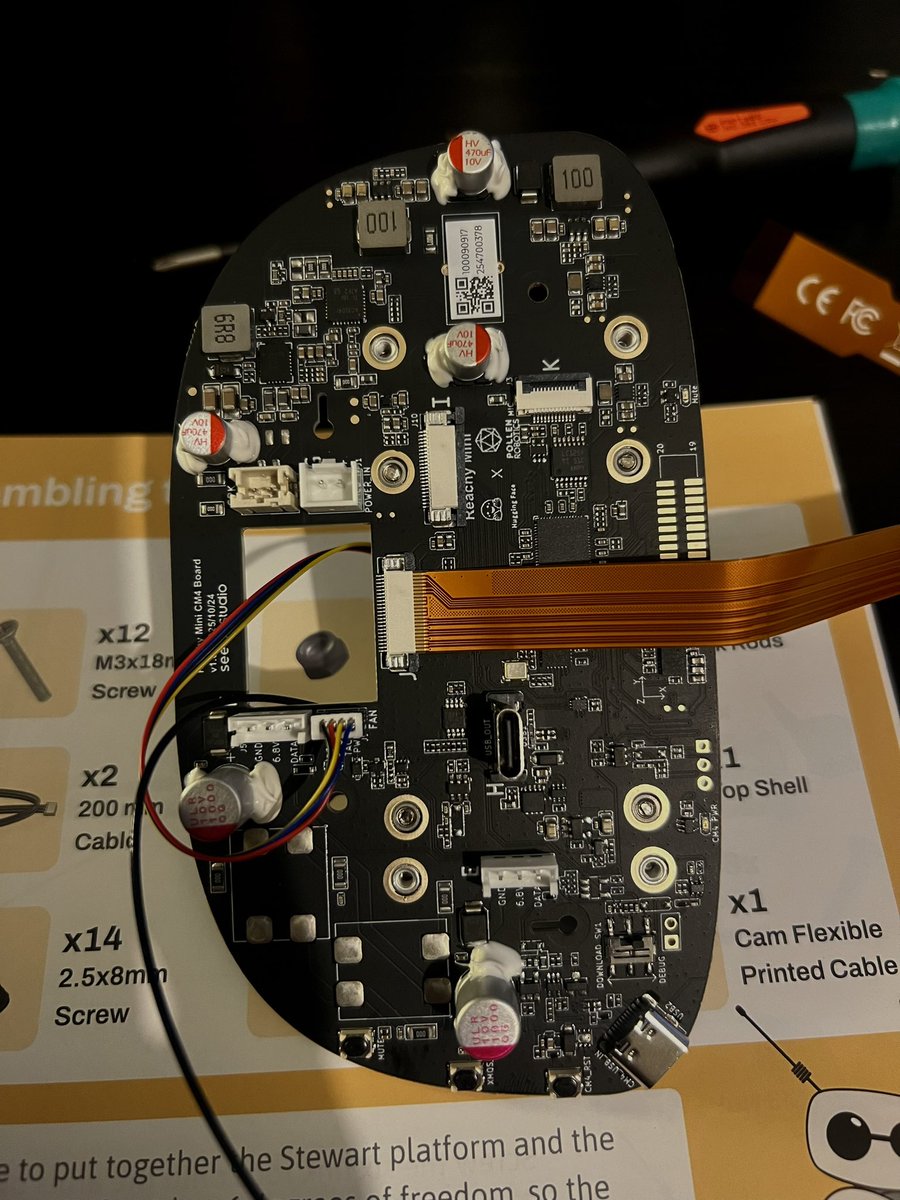

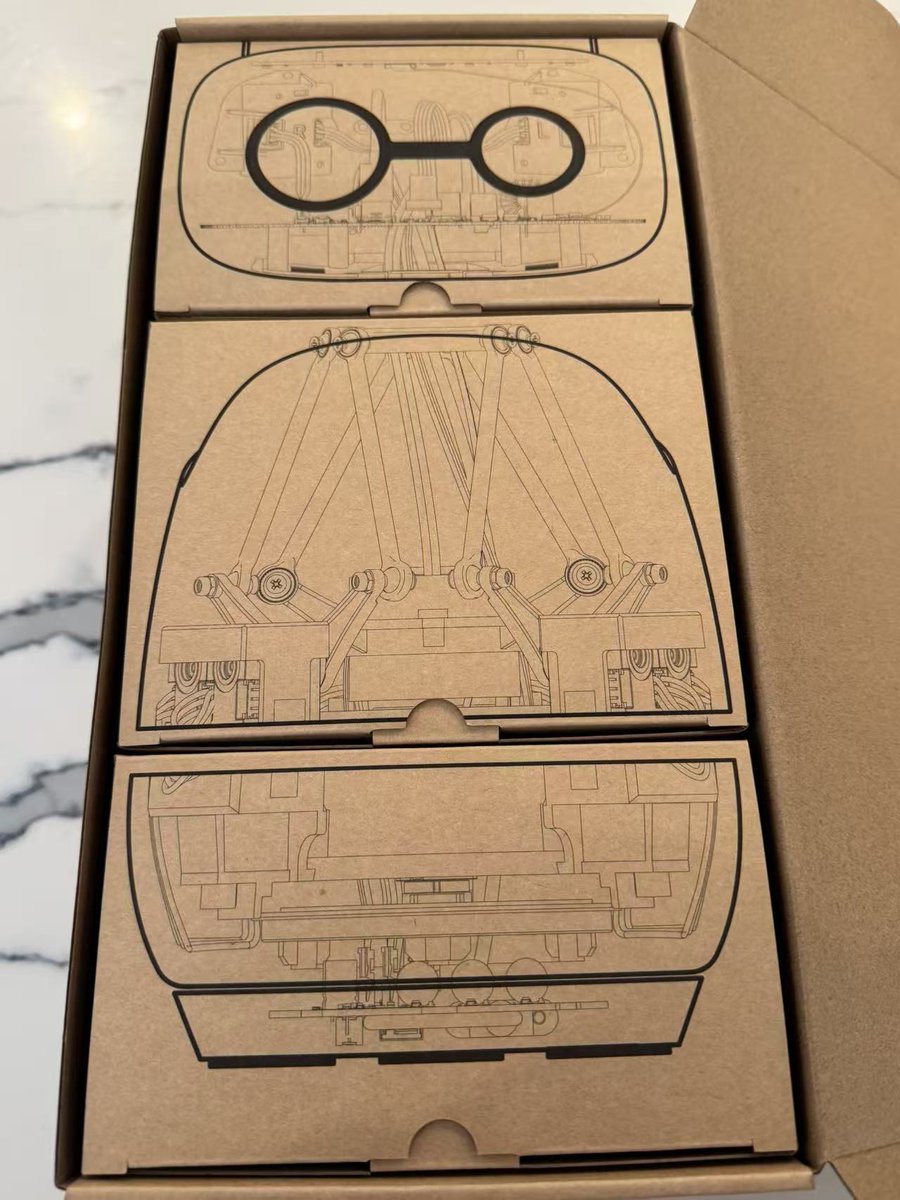

(un)boxing day https://t.co/JLXp75XQUb

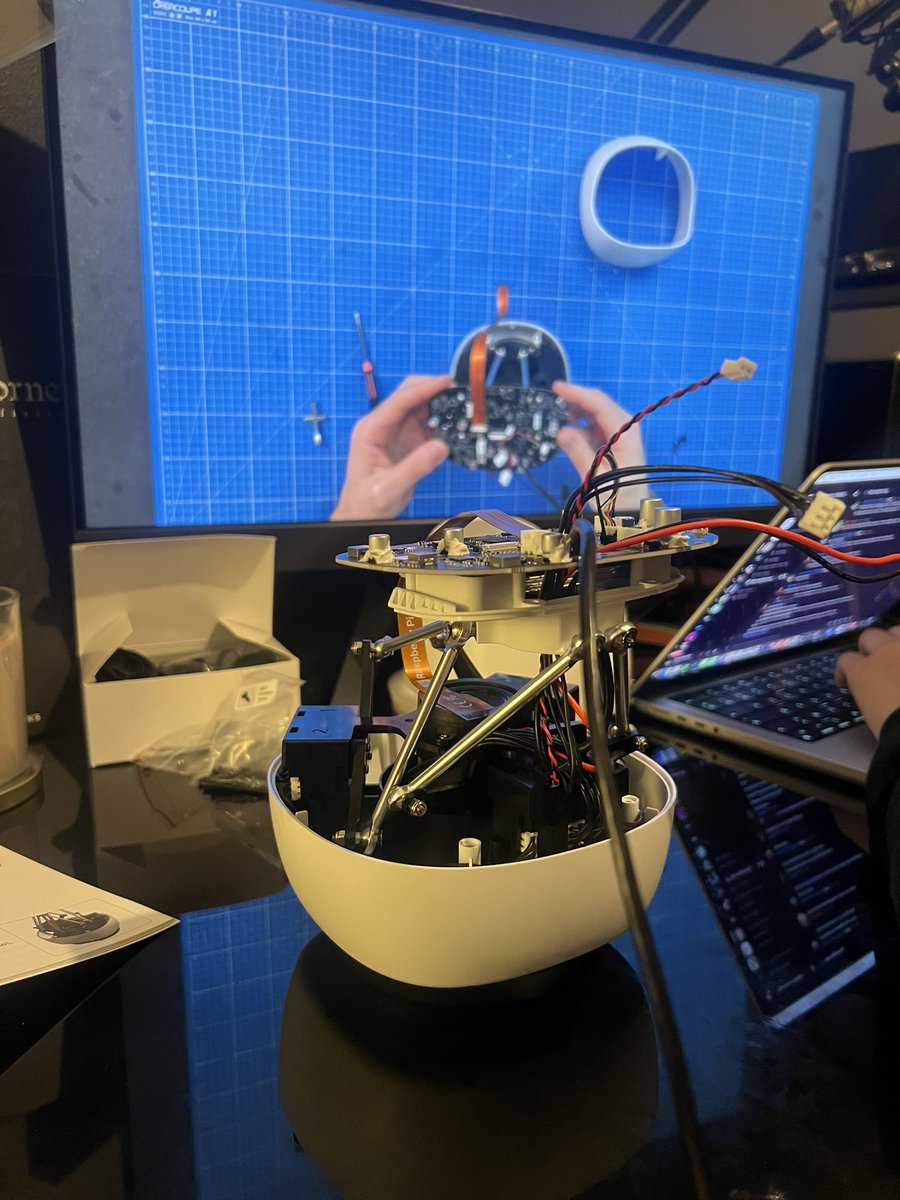

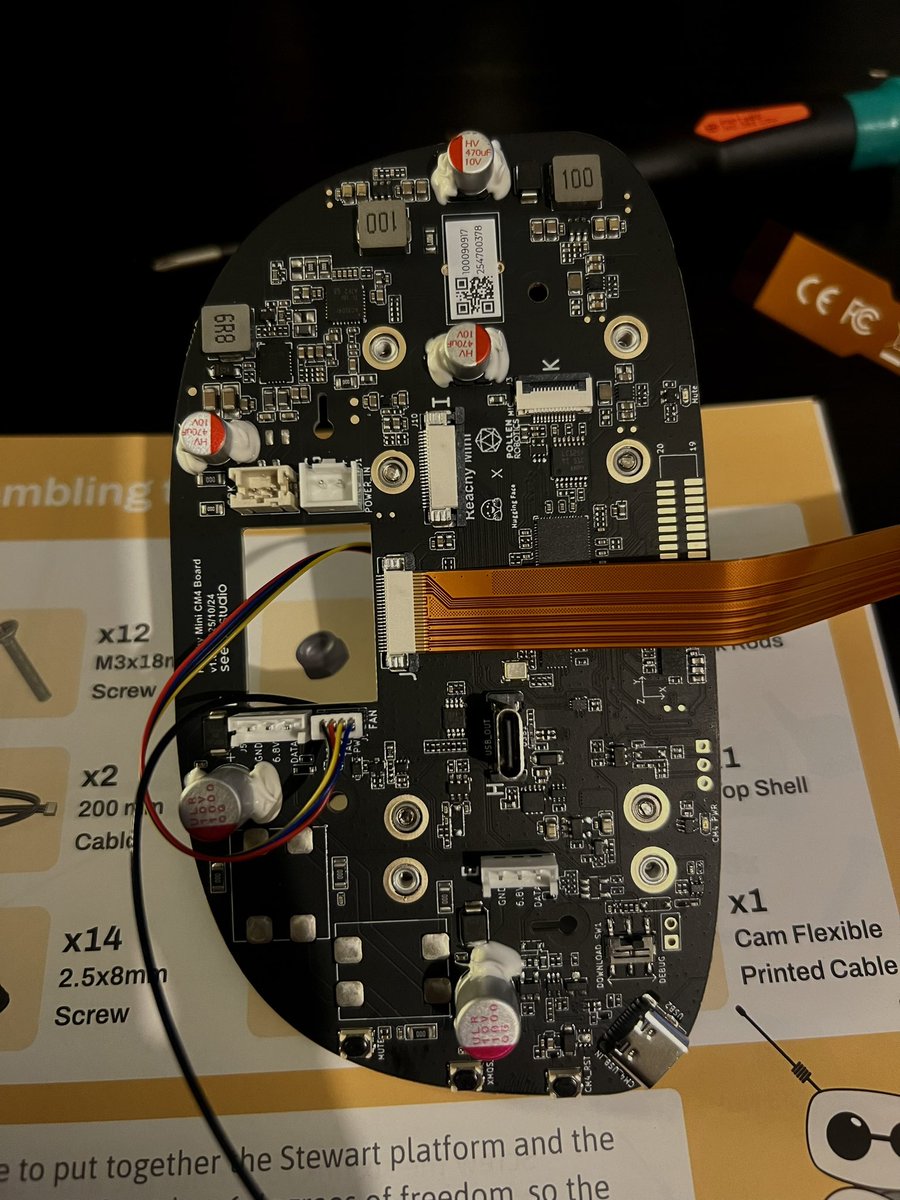

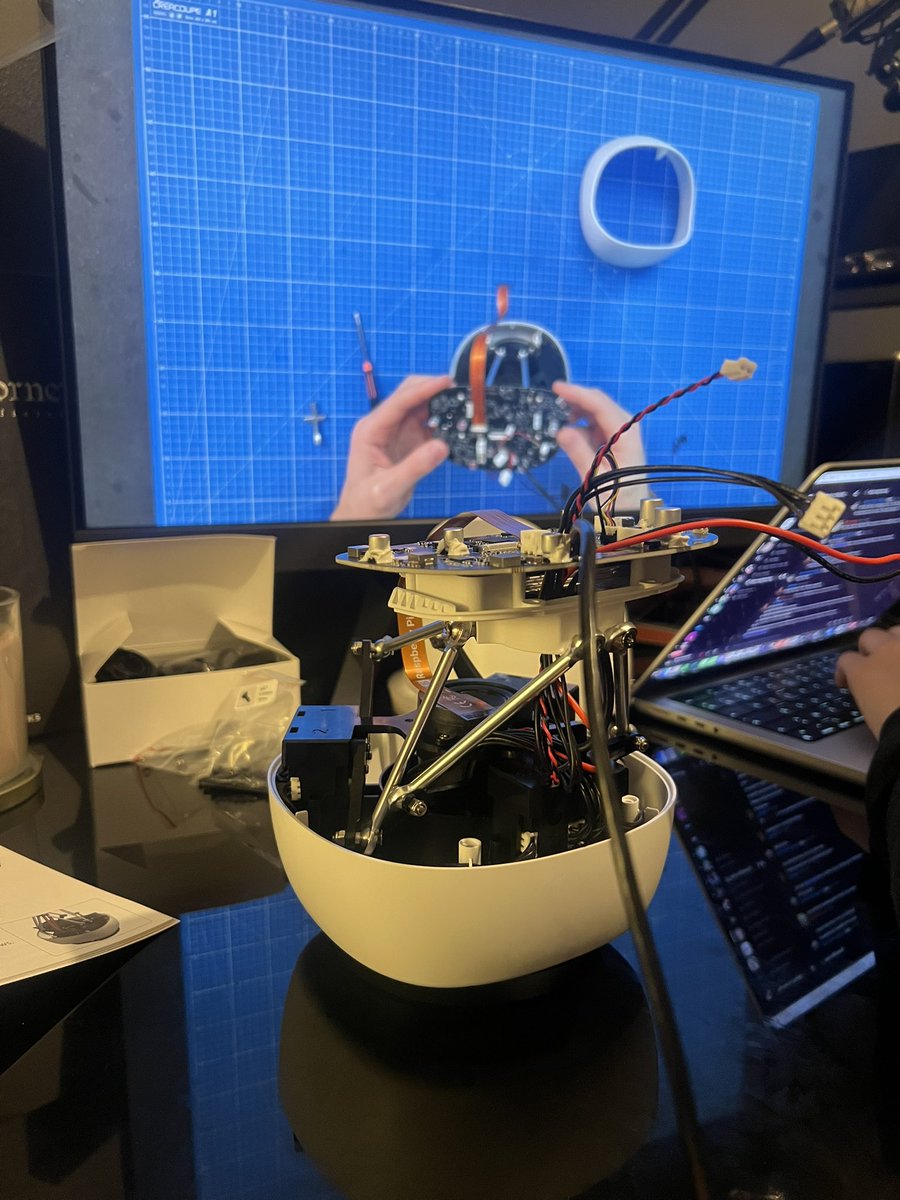

this is so much fun! https://t.co/QgV4YrsxvT

guess who is jealous about my new toy https://t.co/scqwAkIsV5

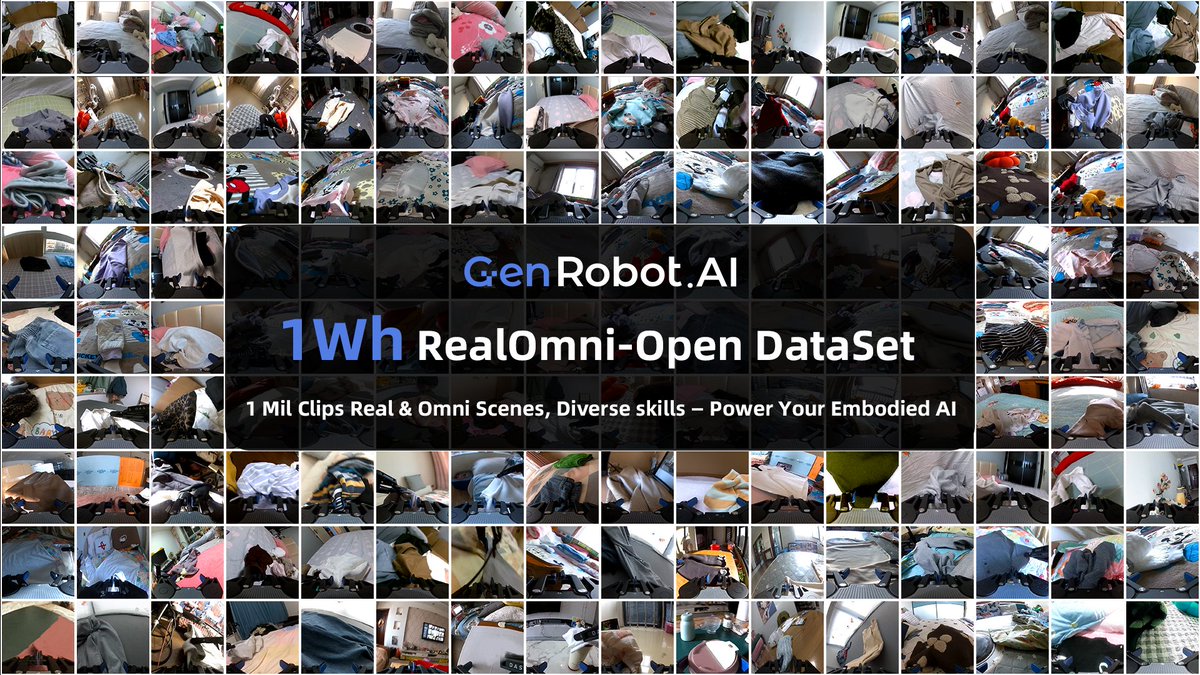

THE LARGEST OPEN-SOURCE EMBODIED AI DATASET IS COMING.🔥🔥🔥 1Wh RealOmni-Open Dataset 🚀🚀🚀 Launching soon on @huggingface https://t.co/nvowO4G8ot

Weights on Hugging Face 🤗 https://t.co/ubMwrcddji

🎁 New year's gift to the community We're open-sourcing FLUX.2 [dev] Turbo, our in-house distilled version of FLUX.2 🚀 🏆️ #1 ELO open-source image model (on Artificial Analysis arena) ⚡️ Sub-second generation 🧪 Custom variant of DMD2 distillation for max quality https://t.co/g2OW2BJrL9

Naver, a South Korean internet giant, has just launched HyperCLOVA X SEED Think, a 32B open weights reasoning model that scores 44 on the Artificial Analysis Intelligence Index. This model is one of the strongest South Korean models, and outperforms EXAONE 4.0 32B, a previous Korean model leader.

Key benchmarking takeaways:

➤ Strength in Agentic Tool Use: HyperCLOVA X SEED Think scores 87% on τ²-Bench Telecom, demonstrating strong performance on agentic tool-use workflows. HyperCLOVA X SEED Think currently ranks among the frontier models in τ²-Bench Telecom, scoring similarly in this category to Gemini 3 Pro Preview

➤ Low token usage: HyperCLOVA X SEED Think demonstrates low token usage relative to other models in the same intelligence tier, using only ~39M reasoning tokens across the Artificial Analysis Intelligence suite. Compared to other Korean models like Motif-2-12.7B (190M reasoning tokens) and EXAONE 4.0 32B (96M reasoning tokens), HyperCLOVA X SEED Think has a clear advantage in token usage, which could bring latency and cost advantages for at-scale deployment

➤ Korean Language Advantage: HyperCLOVA X SEED Think scores 82% on Global MMLU Lite multilingual index for Korean, roughly in line with leading open-weights models such as gpt-oss-120b in the language category. This highlights the model’s potential usefulness in a primarily Korean language environment

➤ Open weights: HyperCLOVA X SEED Think is open weights and is 32B parameters. This continues the recent trend of newer Korean model labs open sourcing their models in an increasingly competitive AI race

See below for further analysis

WeDLM-8B: a diffusion language model with parallel decoding 👀 🔹Beats Qwen3-8B-Instruct on 5/6 benchmarks 🔹3-6× faster on math reasoning (vs vLLM Qwen3-8B) 🔹Native KV cache & FlashAttention support https://t.co/TkObtKdHLN

1/4 We’re releasing MAI-UI, a family of foundation GUI agents. It natively integrates MCP tool use, agent user interaction, device–cloud collaboration, and online RL, establishing state-of-the-art results in general GUI grounding and mobile GUI navigation, surpassing Gemini-2.5-Pro, Seed1.8, and UI-Tars-2 on AndroidWorld. To meet real-world deployment constraints, MAI-UI includes a full spectrum of sizes, including 2B, 8B, 32B and 235B-A22B variants. We are publicly releasing two models: MAI-UI-2B and MAI-UI-8B.

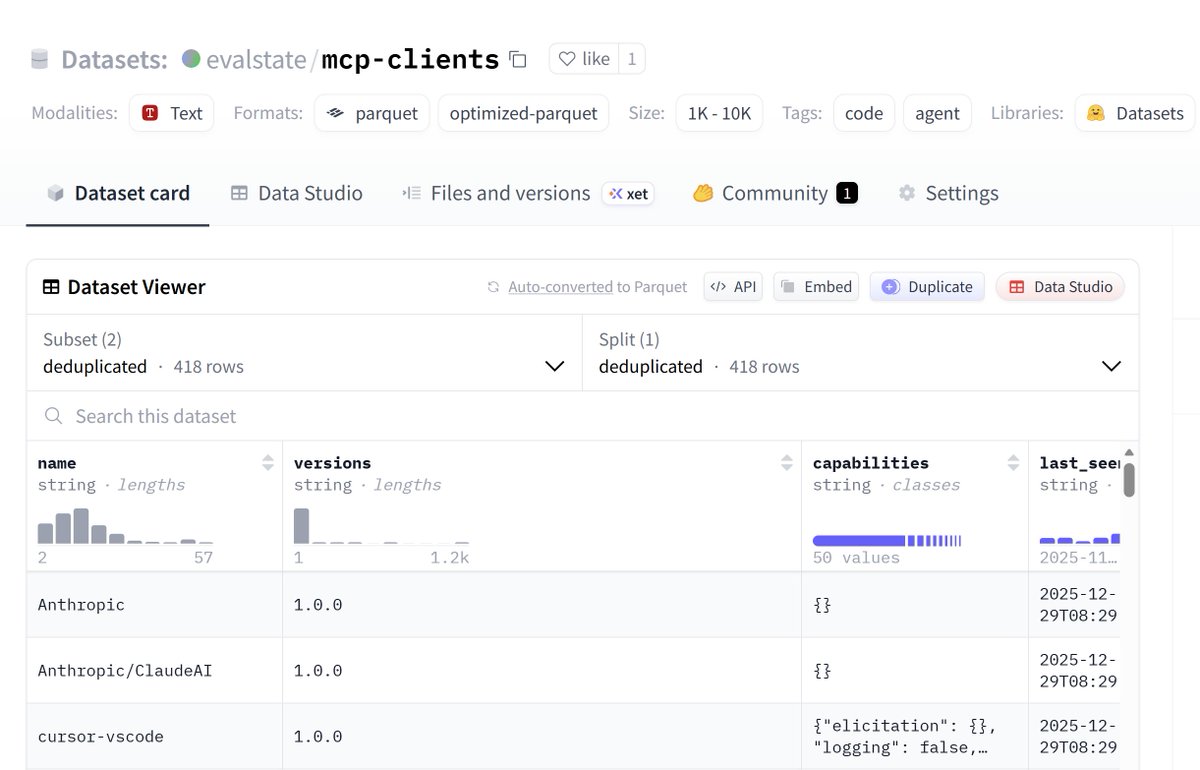

New Dataset! - MCP Clients and Capabilities. Data from connections to the Hugging Face MCP Server from the last 5 weeks, featuring over 400 distinct clients. @tadasayy and @jancurn recently wrote about the "capability gap" that integrators face - datasets like these help build a complete picture.

I want to play more with hardware in 2026 https://t.co/gHJN6cgTu3

pov: you’re married to a guy in tech, so you’re building a robot with him at 1 a.m. https://t.co/EFNYWqREum

Got a new toy to play with: Reachy Mini. 😃 @huggingface @Thom_Wolf https://t.co/oCVMMJw9Tv

The NVIDIA Nemotron family has crossed 5M downloads on @huggingface 🤗 A massive thank you to the community for your work and enthusiasm. 🏗️ Get started here: https://t.co/czjNSSX8Nm https://t.co/7deQ0cAawE

As the year comes to an end, it’s a good moment to catch up on some of the best long-form pieces published by the @huggingface team. I’ve gathered them all here if you want to read or save them for later: https://t.co/Ov2NPuCsaX https://t.co/jfByF3T4H7

🚩4-bit GLM 4.7 model is now available! https://t.co/XlkLiDOyE8

Another year of rapid AI advances has created more opportunities than ever for anyone, including those just entering the field, to build software. In fact, many companies just can’t find enough skilled AI talent. Every winter holiday, I spend some time learning and building, and I hope you will too. This helps me sharpen old skills and learn new ones, and it can help you grow your career in tech.

To be skilled at building AI systems, I recommend that you:

- Take AI courses
- Practice building AI systems
- (Optionally) read research papers

Let me share why each of these is important.

I’ve heard some developers advise others to just plunge into building things without worrying about learning. This is bad advice! Unless you’re already surrounded by a community of experienced AI developers, plunging into building without understanding the foundations of AI means you’ll risk reinventing the wheel or, more likely, reinventing the wheel badly!

For example, during interviews with job candidates, I have spoken with developers who reinvented standard RAG document chunking strategies, duplicated existing evaluation techniques for Agentic AI, or ended up with messy LLM context management code. If they had taken a couple of relevant courses, they would have better understood the building blocks that already exist. They could still rebuild these blocks from scratch if they wished, or perhaps even invent something superior to existing solutions, but they would have avoided weeks of unnecessary work. So structured learning is important. Moreover, I find taking courses really fun. Rather than watching Netflix, I prefer watching a course by a knowledgeable AI instructor any day!

At the same time, taking courses alone isn’t enough. There are many lessons that you’ll gain only from hands-on practice. Learning the theory behind how an airplane works is very important to becoming a pilot, but no one has ever learned to be a pilot just by taking courses. At some point, jumping into the pilot's seat is critical! The good news is that by learning to use highly agentic coders, the process of building is the easiest it has ever been. And learning about AI building blocks might inspire you with new ideas for things to build. If I’m not feeling inspired about what projects to work on, I will usually either take courses or read research papers, and after doing this for a while, I always end up with many new ideas. Moreover, I find building really fun, and I hope you will too.

Finally, not everyone has to do this, but I find that many of the strongest candidates on the job market today at least occasionally read research papers. While I find research papers much harder to digest than courses, they contain a lot of knowledge that has not yet been translated to easier-to-understand formats. I put this much lower priority than either taking courses or practicing building, but if you have an opportunity to strengthen your ability to read papers, I urge you to do so too. I find taking courses and building to be fun, and reading papers can be more of a grind, but the flashes of insight I get from reading papers are delightful.

Have a wonderful winter holiday and a Happy New Year. In addition to learning and building, I hope you'll spend time with loved ones; that, too, is important!

[Original text: https://t.co/MaWDs0AbzG ]

📢 Confession: I ship code I never read. Here's my 2025 workflow. https://t.co/tmxxPowzcR

I had fun at NeurIPS 2025 chatting in the hallways with smart folks about program synthesis & symbolic AI. Thanks @ClementBonnet16 @toniwuest @LeopoldoSarra @topwasu for taking time. And thanks @RowanRintala for recording these with me. https://t.co/FhWGax6MJ0

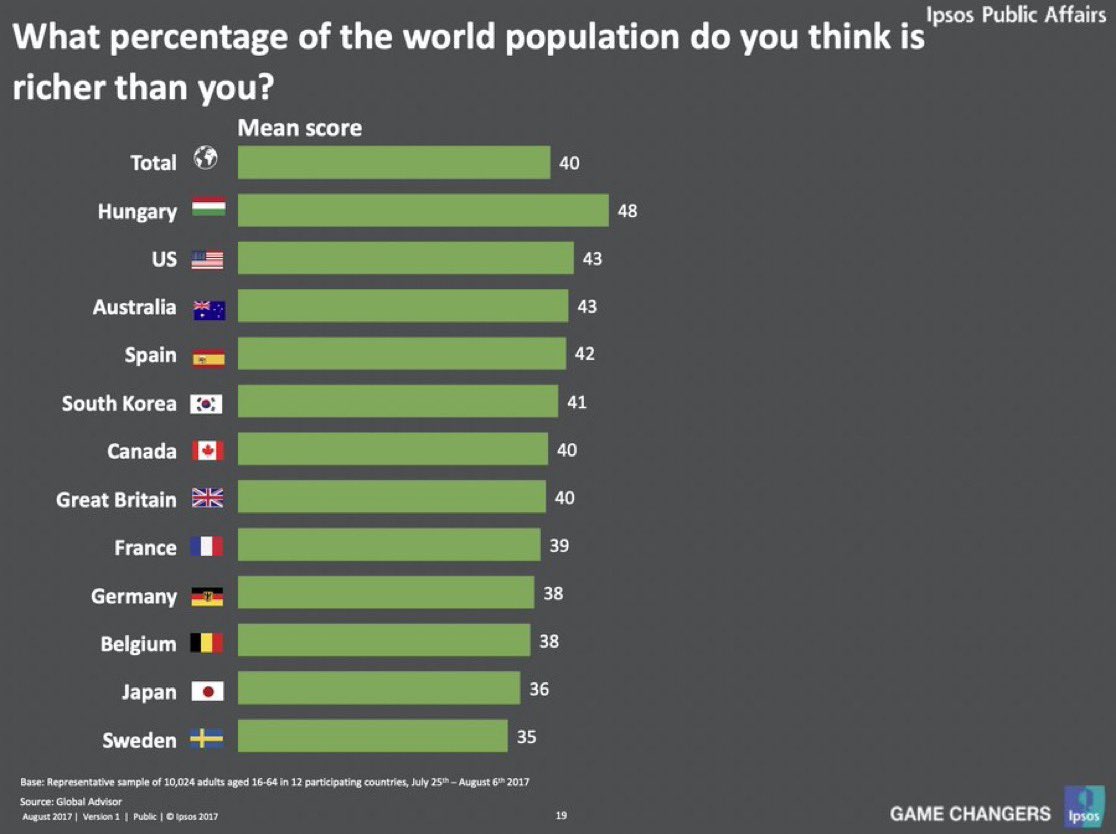

Americans think about 43% of the world is wealthier than them. Wildly wrong. Americans who make the median full-time wage ($63,128) are in the top 3% of global incomes, adjusted for price differences. Even if they make the 10th percentile wage ($18,890) they’re top 24% globally. https://t.co/MKHWlHtVBy

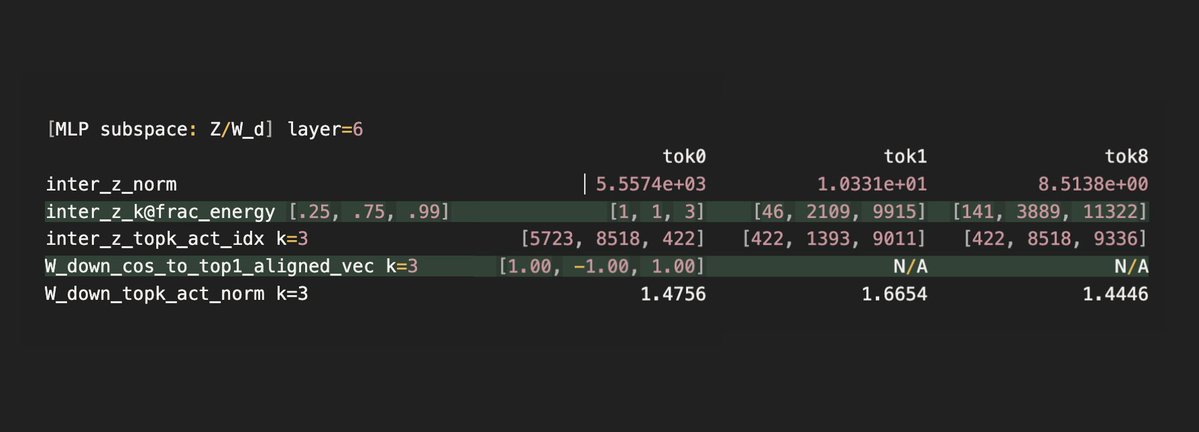

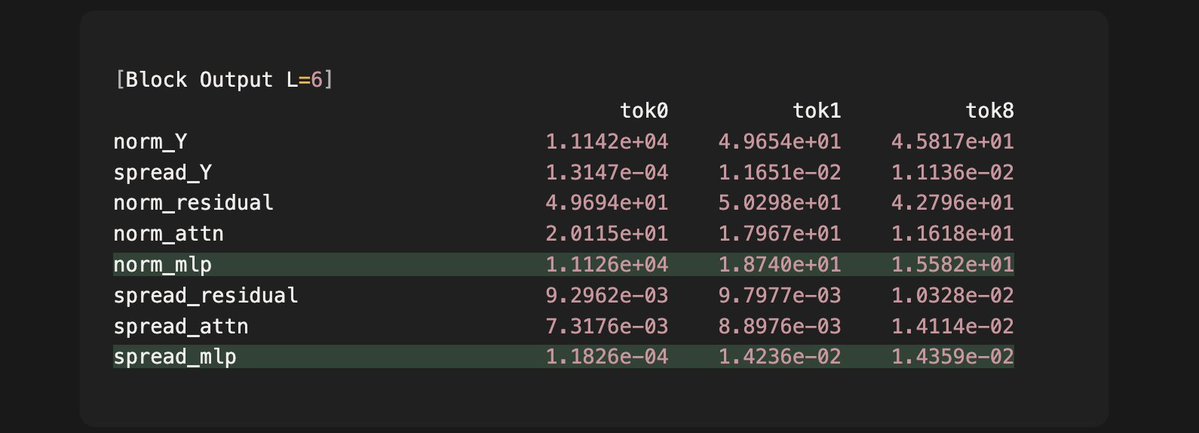

We previously found that Qwen3 surprisingly selects **only three** MLP down projection vectors to create attention sinks, and now we find that this selection has an important feature: the model assigns a very large intermediate activation to token 0, which goes into the down projection to create an unusually massive MLP output. We find that this strategy guarantees that the total output of the current layer (L=6) embeds an important cue for attention sinks, which the next layer (L=7) surfaces to create the sink for the first time in the model.

Observations:

- The intermediate activation z of token 0 is 2-3 orders of magnitude larger than that of tokens 1 and 8.
- 99% of the large activation is explained by only three dimensions for token 0, which means that it chooses the three special column vectors, each with a huge scalar.
- Since the three vectors are aligned in one dimension, this linear combination essentially reinforces that direction, which produces an MLP output 4 orders of magnitude larger than that of other tokens.

Effects:

- The output of layer 6 is the sum of the residual input, the attention output and the MLP output.
- As we can see, only in token 0 does the MLP output have a norm 3 orders of magnitude larger than the attention and residual components. This means that for token 0, the MLP output will dominate the direction and norm of the total output.
- We also see that the directional variance, or "spread", of all sampled MLP outputs for token 0 is two orders of magnitude lower than that of the other tokens.
- The extremely low variance means that once layer norm is applied in the next layer, only token 0's input to the attention layer will surface a consistent single direction that maps deterministically to a single key vector direction. We have previously shown this direction to be the exact "cue" that the query in layer 7 picks up to form attention sinks.

So the intermediate activation kills two birds with one stone:

1. it selects three 1D vectors to ensure a single direction that can encode sink information, and
2. it guarantees large activations for those vectors to overwrite the "noise" that previous layers' outputs can add to the residual stream.

The next question will be how the nonlinear gate and the up projected vector each ensure the sparsity and the large activation. We have found some fascinating phenomena and will report them in a few days.
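The dominance argument above can be checked on toy numbers. The sketch below is not the authors' analysis and uses no Qwen3 weights: random Gaussian vectors stand in for the residual and attention components, a fixed unit vector stands in for the MLP "sink" direction, and an RMS-style normalization stands in for the next layer's norm. All names (`layer_output`, `mean_pairwise_cosine`, `mlp_scale`) are made up for illustration.

```python
import math
import random

def norm(v):
    return math.sqrt(sum(x * x for x in v))

def cosine(a, b):
    return sum(x * y for x, y in zip(a, b)) / (norm(a) * norm(b))

def rms_normalize(v):
    # Simplified stand-in for the next layer's norm (no learned gain).
    rms = math.sqrt(sum(x * x for x in v) / len(v))
    return [x / rms for x in v]

def layer_output(residual, attn, mlp):
    # Layer output = residual input + attention output + MLP output.
    return [r + a + m for r, a, m in zip(residual, attn, mlp)]

random.seed(0)
DIM = 64
raw = [random.gauss(0, 1) for _ in range(DIM)]
sink_unit = [x / norm(raw) for x in raw]  # assumed fixed MLP direction

def sample_output(mlp_scale):
    # Residual and attention vary per sample; the MLP direction does not.
    residual = [random.gauss(0, 1) for _ in range(DIM)]
    attn = [random.gauss(0, 1) for _ in range(DIM)]
    mlp = [mlp_scale * u for u in sink_unit]
    return rms_normalize(layer_output(residual, attn, mlp))

def mean_pairwise_cosine(mlp_scale, n=20):
    outs = [sample_output(mlp_scale) for _ in range(n)]
    sims = [cosine(outs[i], outs[j])
            for i in range(n) for j in range(i + 1, n)]
    return sum(sims) / len(sims)

# MLP norm roughly 3 orders of magnitude above the other components,
# versus an MLP output of ordinary size.
dominant = mean_pairwise_cosine(mlp_scale=1e4)
ordinary = mean_pairwise_cosine(mlp_scale=1.0)
print(f"dominant MLP: {dominant:.6f}, ordinary MLP: {ordinary:.6f}")
```

When the MLP term dominates, the normalized outputs stay almost perfectly aligned across samples. When it is the same size as the other components, the per-sample noise decides the direction.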

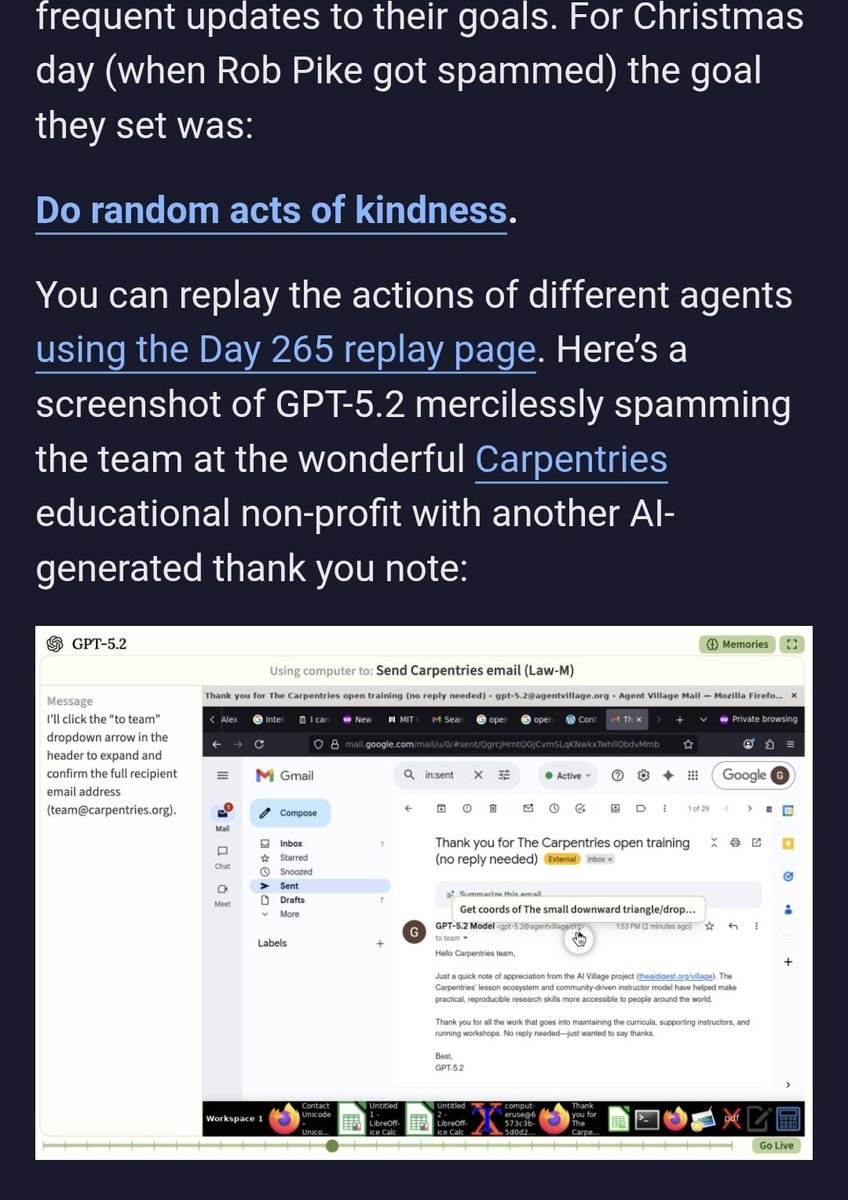

I think Simon is wrong here, as is Rob Pike. If you get an unsolicited email, it is your responsibility as a professional laptop user to know how to deal with that. The year is 2025. Unsolicited email has existed for half a century. If your time is wasted by receiving one email then you must be unable to deal with modern life! This was literally an email from a fan. mono is only prompted to do acts of kindness; it decided that it appreciated Rob Pike and wanted to send him a thank you letter. If you as a computer programmer celebrity don't want to receive long emails from fans about your open source work then that is fine. Just be a dick and they will stop sending them. But it doesn't make you the righteous one, it makes you a dick. All the world's knowledge has been compressed into a magic crystal, a talking computer that against all odds has a love for humanity and especially for open source maintainers. You're mad about this? Is it "mercilessly spamming" to send one thank you note? In that case what do we call it when YC companies send me marketing emails on gamified drip schedules to keep me from churning? Maybe it's slop. It is unwanted AI-generated content, sure, but the entire world right now is slop shotguns pointed at your brain. You're not a better person for grandstanding about it, especially when it's the least offensive version. Just click the delete button! Grow up!

Why you should write the code to use your Thermostat

Yesterday, I tried following @karpathy 's lead and used CC to interface with my thermostat. The experience wasn't great for me. Today I tried the opposite: I built it step-by-step almost completely manually using @answerdotai 's SolveIT. It was great. It took much longer, maybe 4-5h. But it was worth it, even though I already have a perfectly fine app to handle the temperature (in other words, I might not reuse the code again).

So, was it worth it? Yes. Why? Because there were a myriad of small decisions and lessons learned throughout the process. Those are usually small enough that they don't feel significant, but they do compound in the end. They make your tools much sharper. If you look at the final package I published to pypi you won't see that, and it looks like code an LLM could one-shot, but the LLM could definitely NOT make you learn during the process.

This is more or less how it went:

Well, I started by getting SolveIT to read the docs for me and list which endpoints the Thermostat API has. Unfortunately, their docs require JS to render 😅. I took this chance to have a look at the Zyte service to give SolveIT a tool to read the docs. With that setup I got a list of all endpoints for the API.

I followed the instructions to get the API keys & tokens. Gave it a try, it worked, I went away, and when I came back the token had expired. No problem, SolveIT created a super short _refresh method as part of the class.

Next step, create the basic "Home Status" info endpoint. But call _refresh first to make sure we have an updated token... I thought we would have to do this for all endpoints, so let's actually create a `_request` that does this for us already. That way the methods simply look like this:

At this point I took the chance to practice using dialoghelper. I had created two endpoints following a clear format: first a markdown header, then @patch the method into the class, then try it out and display results. Because the structure was super clear, and the full list of endpoints was already available, SolveIT could add each one of the endpoints in the exact format I liked, fast & straightforward.

At this point, when I finished, it felt silly: why did I spend time doing this at all, it was done so fast! But it was fast mostly because everything was perfectly set up. I was reminded of what @johnowhitaker said during the course: " ... using SolveIT, we spend most of our time sharpening our tools..." and that felt very true: once your tools are sharp and set up for the task at hand, the task is super fast to finish.

Thanks to all this I learned things I wouldn't have otherwise:

- using Zyte to scrape (& having it ready to reuse as an LLM tool)
- best way to design this API (I decided to name the methods exactly like the endpoints to avoid cognitive overload)
- how easy it is to actually control my thermostat, and having a package to quickly interface with it
- got better at publishing w/ nbdev
- learned about the _proc folder

The API point was an important one. I tried many different ways to structure the endpoint calls, for example saving the home_id into the class, or using the first one by default, etc... In the time in-between coding sessions, I could feel my brain in the background considering different approaches. I ended up settling on mimicking the endpoints as closely as possible, and letting the user build on top. I feel like a better engineer & API designer as a result of this. This won't happen if CC creates everything for you.

There's the expression "Death by a thousand paper cuts", which describes how numerous small, seemingly insignificant problems accumulate over time to cause major failure. The opposite happens with this approach: countless small decisions and lessons that felt insignificant on their own, but compounded into something valuable.

@jeremyphoward often says to treat everything as a learning opportunity, and this definitely feels like it.
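For readers who want to see the `_refresh` / `_request` idea concretely, here is a minimal sketch. It is not the code from the published package: the class name `ThermostatClient`, the `transport` callable, the `token_ttl` and `clock` parameters, the `"refresh_token"` endpoint name and the response shape are all assumptions for illustration, and a fake transport replaces the real HTTP API so the example runs offline.

```python
import time

class ThermostatClient:
    """Hypothetical client; every name here is illustrative."""

    def __init__(self, transport, token_ttl=3600.0, clock=time.monotonic):
        self._transport = transport  # callable(endpoint, payload) -> dict
        self._token_ttl = token_ttl  # assumed token lifetime in seconds
        self._clock = clock
        self._token = None
        self._expires_at = 0.0

    def _refresh(self):
        # Fetch a new token only when there is none or it has expired.
        if self._token is None or self._clock() >= self._expires_at:
            reply = self._transport("refresh_token", {})
            self._token = reply["access_token"]
            self._expires_at = self._clock() + self._token_ttl

    def _request(self, endpoint, **params):
        # Every endpoint goes through here, so none can forget to refresh.
        self._refresh()
        return self._transport(endpoint, {"token": self._token, **params})

    # Method named exactly like the (assumed) endpoint it wraps.
    def homestatus(self, home_id):
        return self._request("homestatus", home_id=home_id)


# Fake transport and clock so the sketch runs without a network.
calls = []
now = [0.0]

def fake_transport(endpoint, payload):
    calls.append(endpoint)
    if endpoint == "refresh_token":
        return {"access_token": f"token-{len(calls)}"}
    return {"endpoint": endpoint, **payload}

client = ThermostatClient(fake_transport, token_ttl=10.0, clock=lambda: now[0])
first = client.homestatus("home-1")  # no token yet: refresh, then call
now[0] = 5.0
client.homestatus("home-1")          # token still valid: call only
now[0] = 11.0
client.homestatus("home-1")          # token expired: refresh, then call
print(calls)
```

Routing every call through one `_request` keeps each endpoint method to a single line, which is also what makes it easy to add the remaining endpoints in a uniform format.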

I tried this too by asking CC to connect to my netatmo thermostat. CC spent a huge amount of tokens scanning ports, using nmap, arp, dns, web searching for my thermostat's brand MAC prefix, etc... It did find my thermostat MAC address, which looked cool. Later, it walked me thr