Your curated collection of saved posts and media

Tesla Semi just won over the toughest crowd in transportation: actual truckers. Drivers who tested the pilot models say it's a game changer. The cab puts you dead center so there's no right-side blind spot, plus screens show everything around the truck. It goes 500 miles on a charge while competitors barely hit 225. Charges to 60% in 30 minutes, which is 4x faster than other electric trucks. Costs under $300k, about $100k cheaper than rival EVs. California trucking companies just ordered over 1,000 Semis. That's double the number of electric big rigs currently operating in all of Southern California. The automatic transmission is easier on drivers' bodies compared to wrestling a 13-gear diesel all day. Less maintenance too since there are fewer moving parts. Tesla is expected to ship 5,000 to 15,000 Semis this year from the Nevada Gigafactory before ramping to 50,000 annually. @elonmusk might've actually cracked the trucking code. Source: WSJ

🇺🇸 The Tesla Semi is an 80,000-lb electric truck that runs almost silently and costs much less to operate than diesel rigs. Same heavy loads, but without the diesel bill… It's the future of trucking. https://t.co/A8JWaO1QKU

Falcon 9 landing confirmed https://t.co/KYrUYuWJii

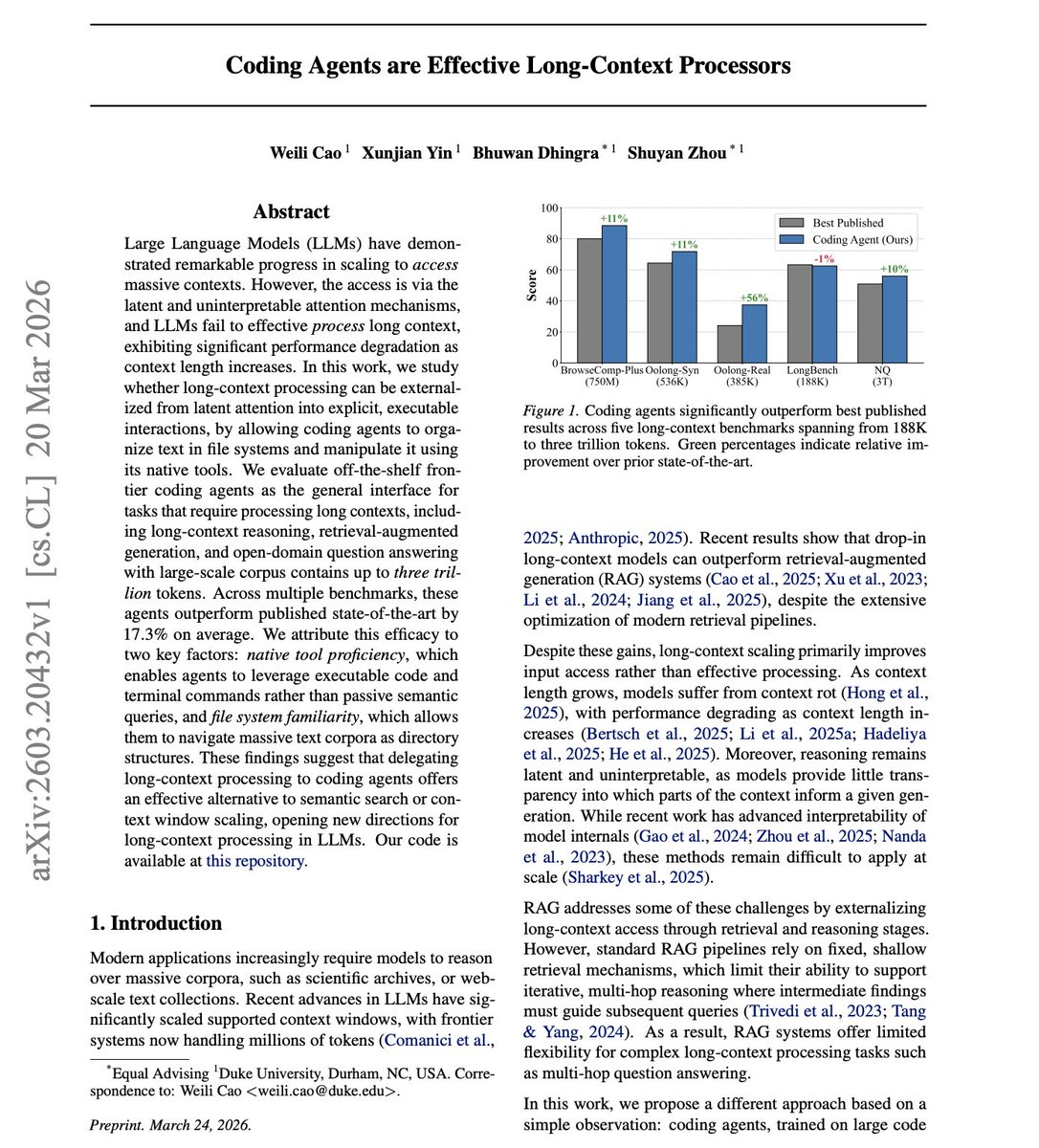

// Coding Agents are Effective Long-Context Processors //
We are just touching the surface of what's possible with coding agents. LLMs struggle with long contexts, even the ones that support massive context windows. It turns out coding agents already know how to solve this; you just need to reframe the problem. This work places massive text corpora into directory structures and lets off-the-shelf coding agents (Codex, Claude Code) navigate them with terminal commands and Python scripts. This is great, as you are not feeding massive text directly into a model’s context window or relying on semantic retrieval.
Results:
- On BrowseComp-Plus (750M tokens), this approach scores 88.5% vs 80% best published.
- On Oolong-Real (385K tokens), 33.7% vs 24.1%, a 56% relative improvement.
- GPT-5 full-context baseline only manages 20% on BrowseComp-Plus.
Works up to 3 trillion tokens. Instead of scaling context windows or building retrieval pipelines, coding agents that already know how to navigate file systems can process virtually unlimited context. The agents autonomously develop task-specific strategies: writing scripts, iterative query refinement, and programmatic aggregation.
Paper: https://t.co/kiCFtlixMf Learn to build effective AI agents in our academy: https://t.co/LRnpZN7L4c
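
The directory-based idea in the post above can be illustrated with a minimal sketch. This is not the paper's implementation: the file layout, the helper names `write_corpus` and `grep_corpus`, and the sample documents are all hypothetical. It only shows the kind of script an agent might write to search a corpus stored as files, reading one file at a time instead of loading everything into a context window.

```python
import re
import tempfile
from pathlib import Path


def write_corpus(root: Path, docs: dict[str, str]) -> None:
    """Store each document as its own file under root (hypothetical layout)."""
    for name, text in docs.items():
        path = root / name
        path.parent.mkdir(parents=True, exist_ok=True)
        path.write_text(text, encoding="utf-8")


def grep_corpus(root: Path, pattern: str, context_chars: int = 30) -> list[tuple[str, str]]:
    """Return (relative path, snippet) for every match, scanning file by file."""
    regex = re.compile(pattern, re.IGNORECASE)
    hits = []
    for path in sorted(root.rglob("*.txt")):
        text = path.read_text(encoding="utf-8")
        for match in regex.finditer(text):
            start = max(0, match.start() - context_chars)
            end = min(len(text), match.end() + context_chars)
            hits.append((path.relative_to(root).as_posix(), text[start:end]))
    return hits


def demo() -> list[tuple[str, str]]:
    """Build a tiny throwaway corpus and search it (sample data is made up)."""
    docs = {
        "space/launch.txt": "The booster landed on the drone ship after stage separation.",
        "trucks/range.txt": "The electric truck completed its route on a single charge.",
        "space/orbit.txt": "The payload reached orbit about nine minutes after liftoff.",
    }
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        write_corpus(root, docs)
        return grep_corpus(root, r"drone ship")


if __name__ == "__main__":
    for rel_path, snippet in demo():
        print(rel_path, "|", snippet)
```

Only the matching snippets would go back to the model, so the amount of text it has to read depends on the query rather than on the size of the corpus.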

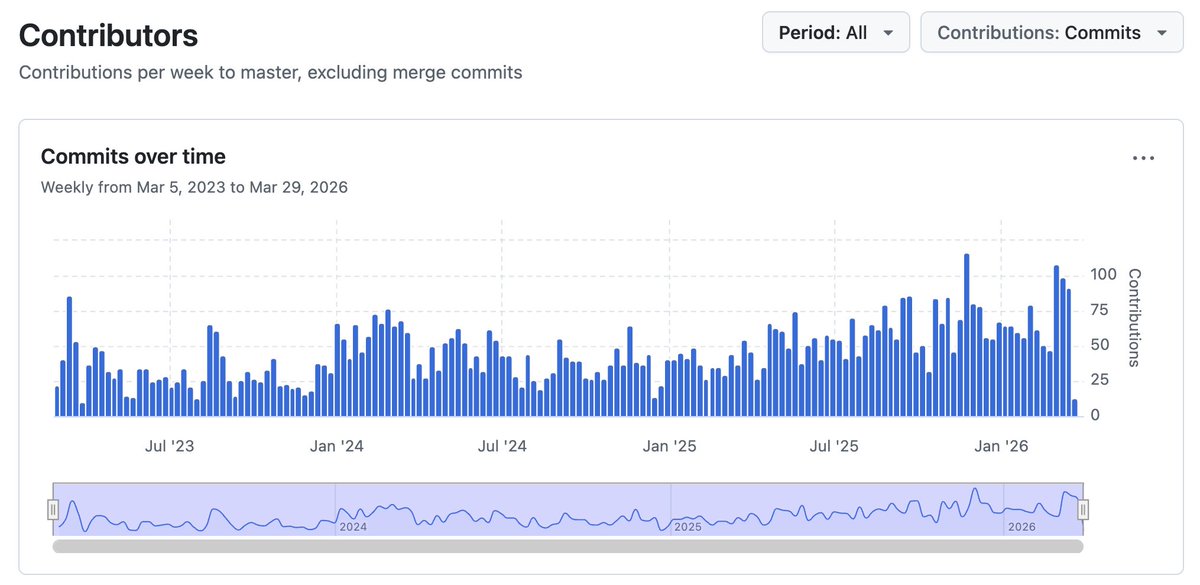

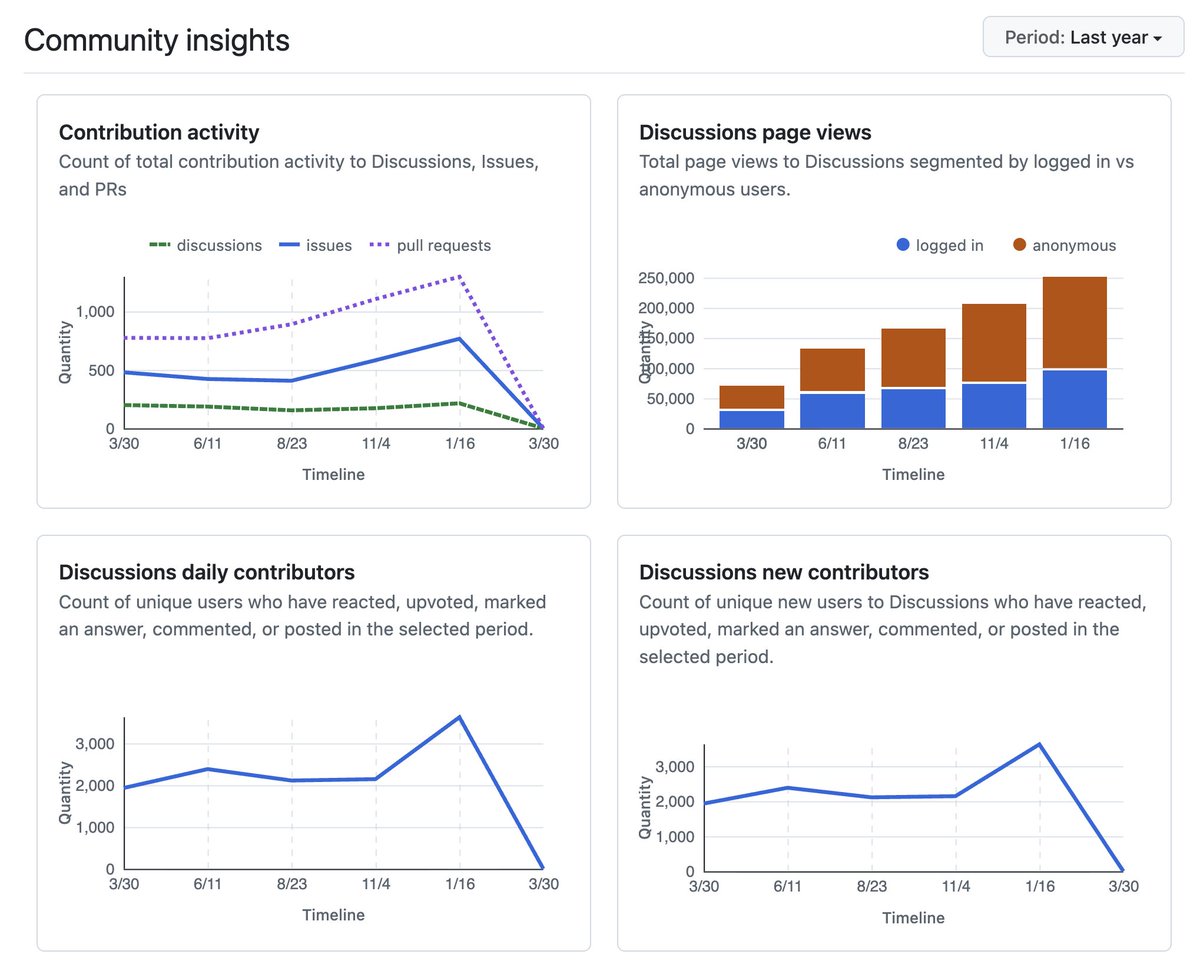

llama.cpp at 100k stars
Now that 90% of the code worldwide is being written by AI agents, I predict that within 3-6 months, 90% of all AI agents will be running locally with llama.cpp 😄
Jokes aside, I am going to use this small milestone as an opportunity to reflect a bit on the project and the state of AI from the perspective of local applications. There is a lot to say and discuss and yet it feels less and less important to try to make a point. Opinions about the viability of local LLMs are strongly polarized, details are overlooked, the scientific approach is lacking. Arguments are predominantly based on vibes and hype waves. One thing is clear though - local LLMs are used more and more. I expect this trend to continue and likely 2026 will end up being one of the most important years for the local AI movement.
I admit that I didn't expect the agentic era to come so quickly to the local LLM space. One year ago, the available models were too computationally expensive for doing long-context tasks. There wasn't an obvious path towards meaningful agentic applications. The memory and compute requirements were huge. Last summer, with the release of gpt-oss, things started to change. It was the first time we saw a glimpse of tool calling that actually works well within the resource constraints of our daily devices. Later in the year, even better models were released and by now, useful local agentic workflows are a reality.
Comparing local vs hosted capabilities at a given moment in time is pointless. To try to put things into perspective:
- We don't need frontier intelligence to automate searches and sending emails
- We don't need trillion-parameter models to be able to summarize articles or technical documents
- We don't need massive GPU data centers to control our home appliances or turn the lights off in the garage
I believe that there is a certain level of intelligence we as humans can comprehend and meaningfully utilize to improve our working process. Beyond that level, access to more intelligence becomes unnecessary at best and counterproductive at worst. I also believe that that level of useful artificial intelligence is completely within reach locally and it has always been just a matter of implementing the right software stack to bring it to the end user. With llama.cpp, I am confident that we continue to be on the right track of building that software stack!
The llama.cpp project is going stronger than ever. With more than 1500 contributors, the project keeps growing steadily. From a technical point of view, I think that llama.cpp + ggml is the only solution that actually makes sense. That is, the software stack must run efficiently on every possible device, hardware and operating system. The technology is too important to be vendor-locked. It has to be developed in the open, by the community, together with the independent hardware vendors. This is the only right way to build something that will truly make a difference in the long run.
I won't try to convince you about what is currently and will be possible with local AI. We will just continue to build as usual. I am confident that after the smoke clears and we look objectively at what we have built together, the benefits will be obvious to everyone.
Big shoutout to all llama.cpp maintainers. I feel extremely lucky to be able to work together with so many talented contributors. Every day I learn something new and I feel there is so much more cool stuff that we are going to build. Also, I am really thankful that the project continues to have reliable partners to support it! Cheers!

AI is reshaping how companies approach experimental ad budgets. As platforms roll out new AI-driven ad products and traditional channels become saturated, marketers are rethinking where and how they test. Budgets are being adjusted toward areas like generative search, ChatGPT-style ads and new formats, with a stronger focus on reaching untapped audiences. The shift is not just about efficiency. It is about reallocating spend toward experimentation, changing KPIs and moving from guaranteed performance to discovering new sources of growth. https://t.co/19YHYvJvay @Digiday

That's it. That's the best picture from Saturday's No Kings protests in the USA. The literal Statue of Liberty being detained by police. It doesn't get much more poetic than this. https://t.co/KOoDCKFu52

Talking about tracing things back https://t.co/6CPjlgfajc

Every LLM from any lab today traces back to this guy, who was the only person at OpenAI pushing for pretraining transformer language models. He built GPT-1. Only after that did others see the potential. He invented it, and almost none of the so-called AI experts even know his name. ht

NEW research from CMU. (bookmark this one) The biggest unlock in coding agents is understanding strategies for how to run them asynchronously. Simply giving a single agent more iterations helps, but does not scale well. And multi-agent research shows that coordination > compute. A new paper from CMU proves this with a practical multi-agent system. CAID (Centralized Asynchronous Isolated Delegation) borrows proven human SWE practices: a manager builds a dependency graph, delegates tasks to engineer agents who work in isolated git worktrees, execute concurrently, self-verify with tests, and integrate via git merge. CAID improves accuracy over single-agent baselines by 26.7% absolute on paper reproduction tasks (PaperBench) and 14.3% on the Python library development tasks (Commit0). The key insight is that isolation plus explicit integration beats both single-agent scaling and naive multi-agent approaches. For long-horizon software engineering tasks, multi-agent coordination using git-native primitives should be the default strategy, not a fallback. Paper: https://t.co/cRAbG7SrR5 Learn to build effective AI agents in our academy: https://t.co/1e8RZKs4uX
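
The delegation pattern described in the post above (a dependency graph, concurrent workers, integration as each task finishes) can be sketched roughly as follows. This is not CAID's code: `run_task` and `delegate` are hypothetical names, the worker is a stand-in for an engineer agent in its own git worktree, and appending to a list stands in for the `git merge` integration step.

```python
from concurrent.futures import FIRST_COMPLETED, ThreadPoolExecutor, wait
from graphlib import TopologicalSorter


def run_task(name: str) -> str:
    """Stand-in for an engineer agent working and self-testing in isolation."""
    return f"{name}: tests passed"


def delegate(graph: dict[str, set[str]], max_workers: int = 4) -> list[str]:
    """Run tasks concurrently, starting each only after its dependencies finish.

    `graph` maps each task to the set of tasks it depends on.
    Returns the order in which finished tasks were integrated.
    """
    sorter = TopologicalSorter(graph)
    sorter.prepare()  # raises graphlib.CycleError if the graph has a cycle
    merged: list[str] = []
    pending = {}  # future -> task name
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        while sorter.is_active():
            # Hand out every task whose dependencies are already integrated.
            for task in sorter.get_ready():
                pending[pool.submit(run_task, task)] = task
            # Block until at least one worker finishes.
            finished, _ = wait(pending, return_when=FIRST_COMPLETED)
            for future in finished:
                task = pending.pop(future)
                future.result()       # re-raise any worker failure
                merged.append(task)   # stand-in for the integration (merge) step
                sorter.done(task)     # unblocks tasks that depended on this one
    return merged


if __name__ == "__main__":
    # Hypothetical task graph: "compiler" needs "lexer" and "parser"; "cli" needs "compiler".
    tasks = {"lexer": set(), "parser": set(), "compiler": {"lexer", "parser"}, "cli": {"compiler"}}
    print(delegate(tasks))
```

Independent tasks such as `lexer` and `parser` may finish in either order, but a dependent task is never started before everything it depends on has been integrated.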

Live (and will be recorded) on WBUR: How to make AI work for us https://t.co/IhDm2ogdfw https://t.co/Tc0TpLuljz

AI-generated content is getting harder to spot, and brands are starting to lean into that. Gucci’s campaign looked like a high-end editorial, only later revealing it was created with AI. That kind of reveal is becoming part of the strategy, playing with perception and authenticity. The response is shaping a new playbook. In a world of AI “slop,” standing out may depend less on using AI and more on how transparently and creatively you use it. https://t.co/rk90JGhYGr @voguemagazine

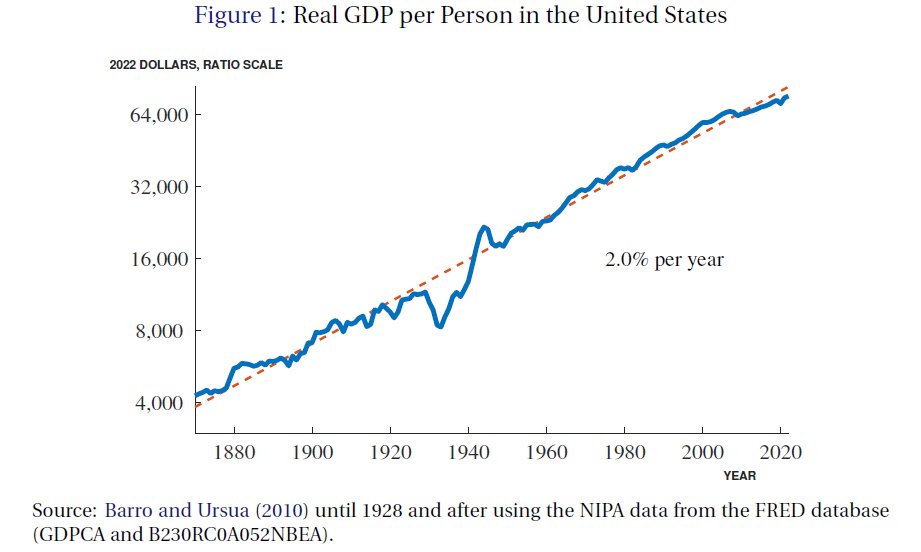

Why didn't electricity, mass production, the automobile, electronics, or computers lead to huge growth spikes? Because regular and yet massive breakthroughs such as those were required just to keep the US growing on trend. The real miracle is that these breakthroughs keep coming. https://t.co/4QHtKfBQEQ

People overestimate the effects of innovation on growth because they assume innovation is an addition to preexisting growth trends. In reality, it takes constant innovation to MAINTAIN growth trends. The growth benefits of old innovations decay just as new ones replace them.

Meet Qwen3.5-9B-Uncensored: a powerful, unfiltered language model that's taking the open-source AI world by storm. With over 500k downloads, this multilingual model removes restrictions while maintaining impressive capabilities. Perfect for developers who want raw AI power without guardrails.

@cursor_ai Cafe IS STARTING!!! @agrimsingh @SherryYanJiang @benln @fr4nnyp4ck @nickwm https://t.co/0GBHa4VlK7

Liftoff of Transporter-16! https://t.co/PpIorZ0ZL6

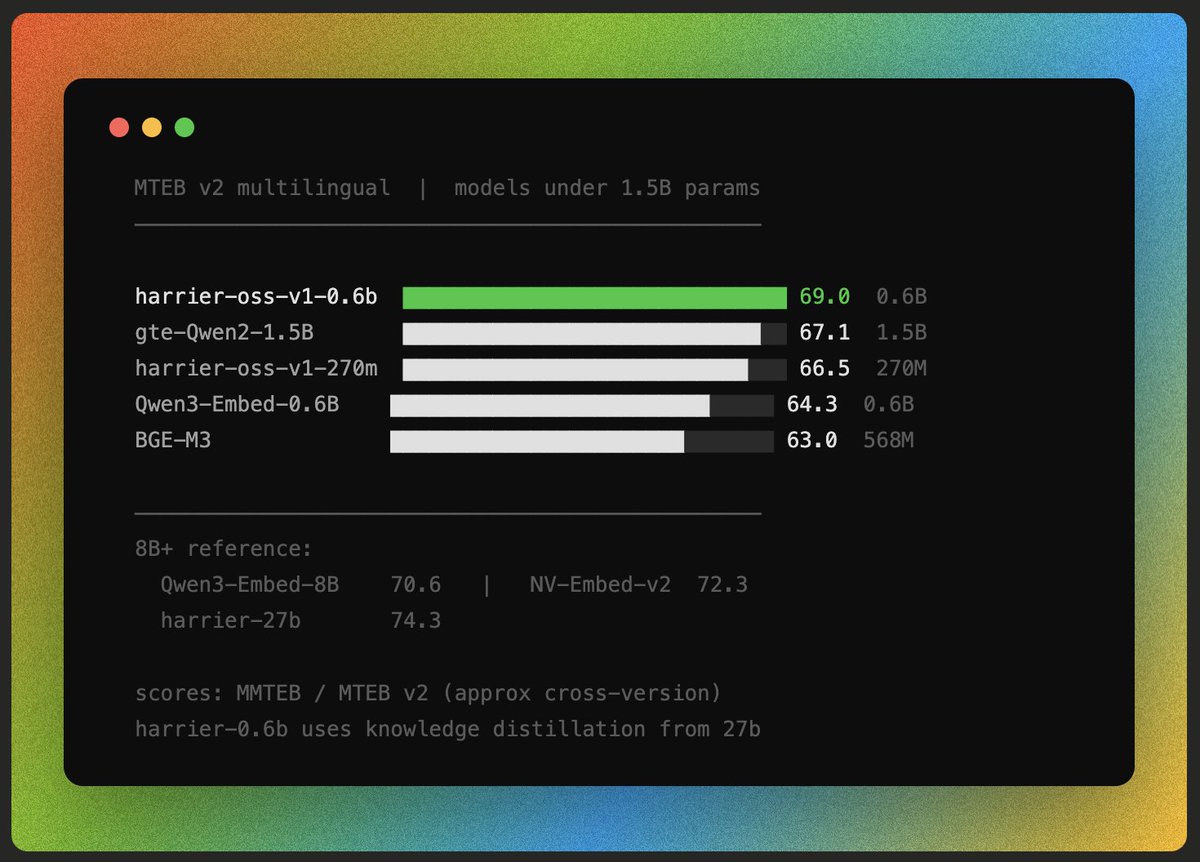

Surprise drop: new multilingual embedding models by Microsoft - seem quite good :) https://t.co/ljWZOG5sfG https://t.co/SE51bj2M3Z

Cohere Transcribe is setting a new standard for automatic speech recognition model accuracy in real world conditions – even with a noisy blender running. Try it out for yourself 👇 https://t.co/cIHYqTVVyI

@cohere Transcribe: SOTA open-source transcription model running in the browser :) Weights on @huggingface, link below https://t.co/OmrHFA94lG

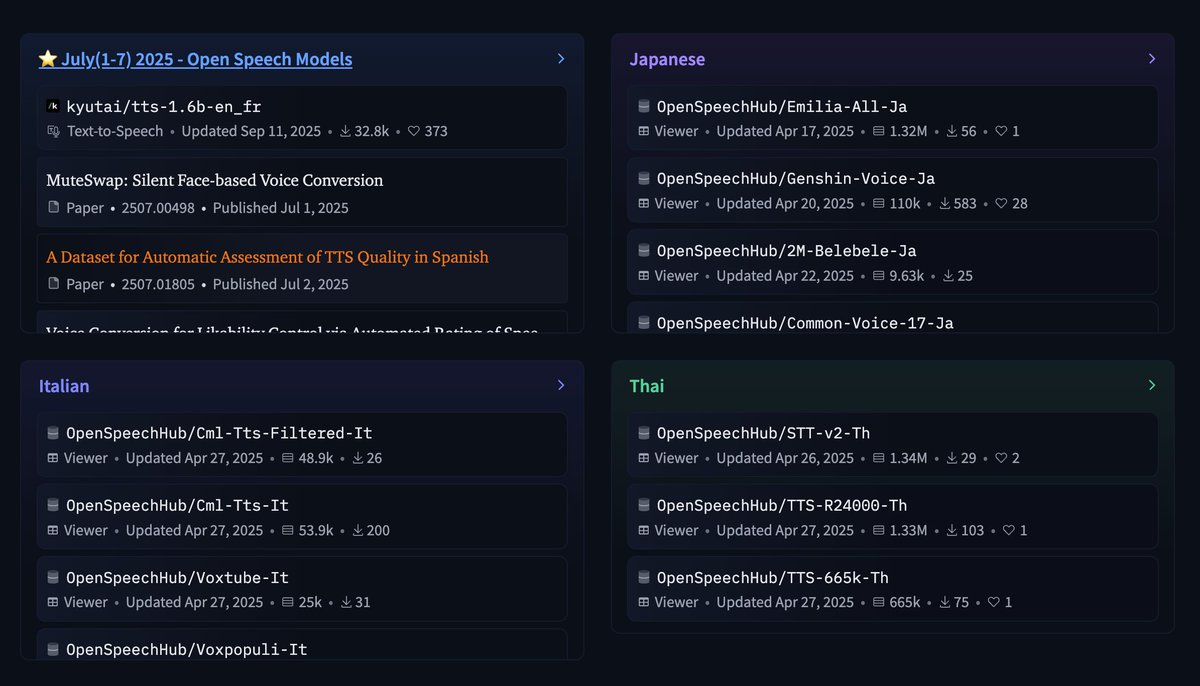

I'm going to start publishing open-source datasets for TTS and STT. I'll be creating a collection for newly released models every day. Make sure to follow the Huggingface page. https://t.co/6Cik7PKmY4

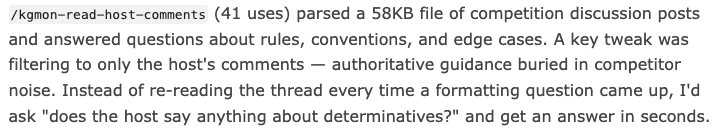

@gonedarragh Cool post! I am curious, though: how did you do that? Afaik Kaggle does not add comments from live competitions to meta-kaggle, and scraping is not trivial. https://t.co/8tjwG7V6gy

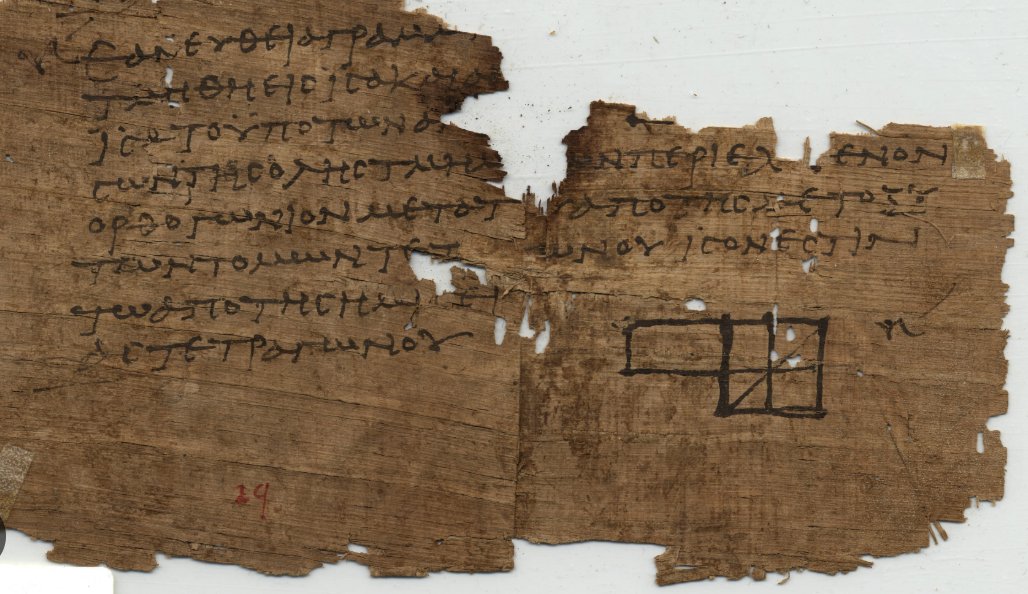

@pfau While Gauss is one of the best researchers of all time, LLMs have roots going back much further. Here's a relevant work: Elements, from 300 BC, by Euclid. https://t.co/lNNVg3PjGE

A round of applause for our #PyTorchCon Europe silver sponsors. Paris becomes the center of the #AI world on 7-8 April. 🌍 Join us for two days packed with world-class keynotes, workshops, & specialized tracks on modern AI development. Register: https://t.co/prWHolBpFf Schedule: https://t.co/luyi2NuKXz

Amazing maglev technology! Beyond transportation, experts, tell us: which other industries could this technology be applied to? https://t.co/3H4BBlZBnv

BREAKING: xAI has released a new update for the Grok app. Update your app to v1.3.53 to get the latest improvements: https://t.co/XMxrTmpf7e

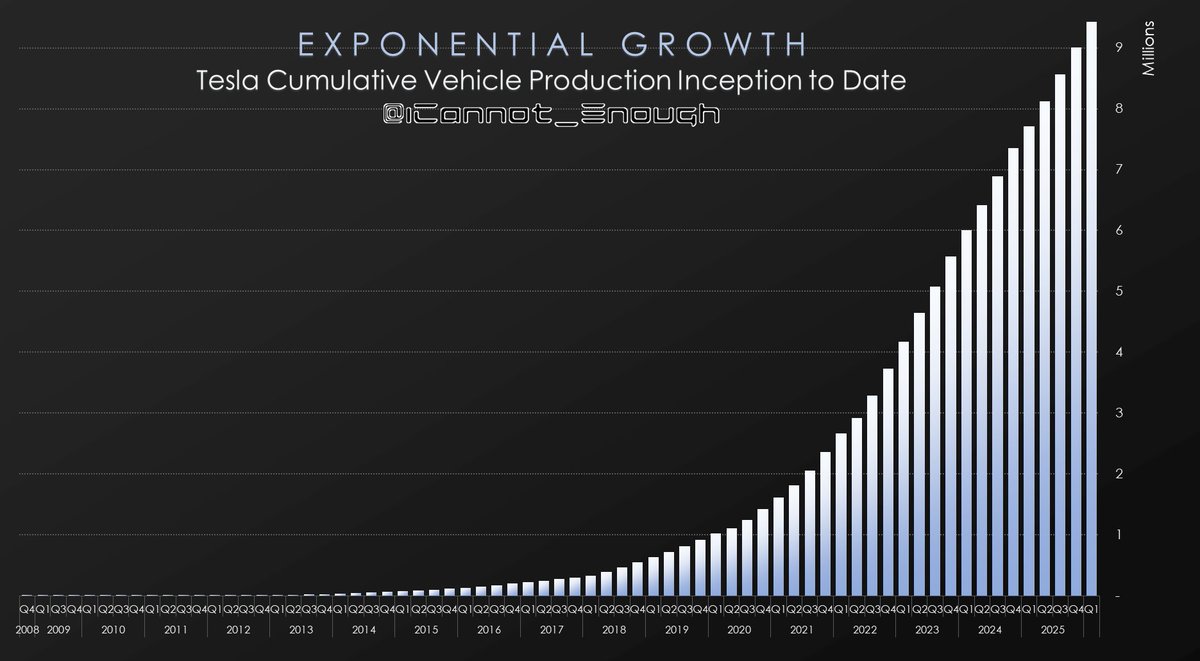

As Stephenson Indicator™️ climbs past $877, my Q1 deliveries forecast sits at 377,251 on Production of 420,069... and I predict Tesla will celebrate cumulative production exceeding 10 million vehicles by Halloween. 👻 $TSLA https://t.co/dLJrl8kPtu

TESLA AFTER A SPACEX IPO Many analysts assume a static pie, i.e. a scarcity mindset. They think a SpaceX IPO hurts Tesla long-term by splitting Elon’s (and investors’) attention. They somehow forgot that Elon Musk built both Tesla & SpaceX simultaneously to trillion-plus valuations. Tesla has always been volatile; a SpaceX IPO is just this week’s excuse. Traders make money from volatility. These analysts are ALREADY WRONG. Within 3 months of AJ’s doomsday 2/25 analysis, Tesla & SpaceX are deepening a mutually beneficial partnership. This isn’t a zero-sum game. Instead of each company remaining on a trajectory to be an $8 Trillion company, Elon Musk leveled up to a new $100 Trillion market cap goal. Instead of competition between Musk companies and divided loyalty, Elon Musk has tasked Tesla/SpaceX/xAI with one audacious mission: remove the constraint of Earth and its limited resources by kick-starting a true Space Economy flywheel. Digital Optimus, the Terafab and AI Space Compute are just the start. A SpaceX IPO primes the flywheel with initial operating cash. This is the biggest undertaking in human history: an investment opportunity that could exceed today’s Earth economy by 10x, 100x, or even 100,000x. What’s the actual limit once humanity goes galactic? The “Elon Musk community” doesn’t split; it gets stronger as the mission converges. 🧵 Next, don’t ignore the early signals & kick yourself later

Given that I have built two companies in widely different fields to trillion-dollar-plus valuations simultaneously, I might be getting a few things right once in a while

50 seconds entirely created using Grok Imagine's tools. Grok can create scenes that could be featured in a film. https://t.co/aFD5VjM4fQ