Your curated collection of saved posts and media

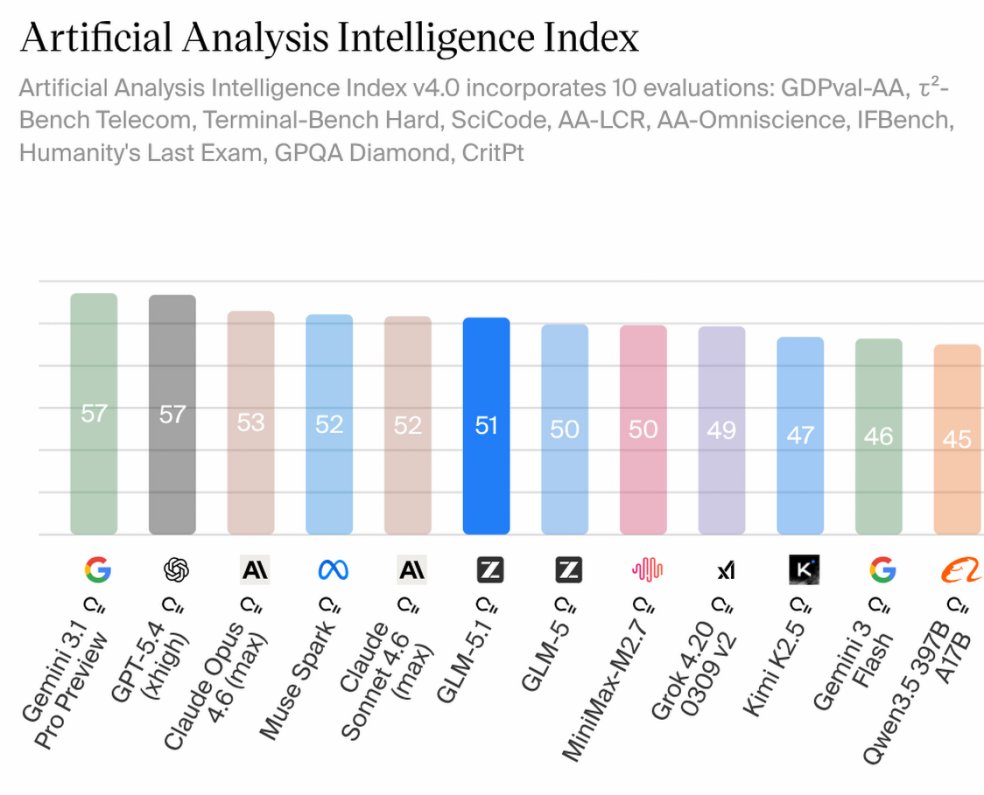

Meta is putting its superintelligence push to the test. Its new model Muse Spark shows competitive performance in language against OpenAI, Google and Anthropic, but still trails in coding. It highlights the reality of the race. Progress is visible, but leadership is uneven across capabilities. https://t.co/7DVVnha7Gg

Alibaba is betting on what comes after LLMs. With a major investment into new models focused on simulation and video generation, the goal is to move beyond text and toward real-world applications like robotics. It signals a shift. The next phase of AI may be less about language and more about understanding and interacting with reality. https://t.co/Zp6DUg2fur @cnbc @chengevelyn

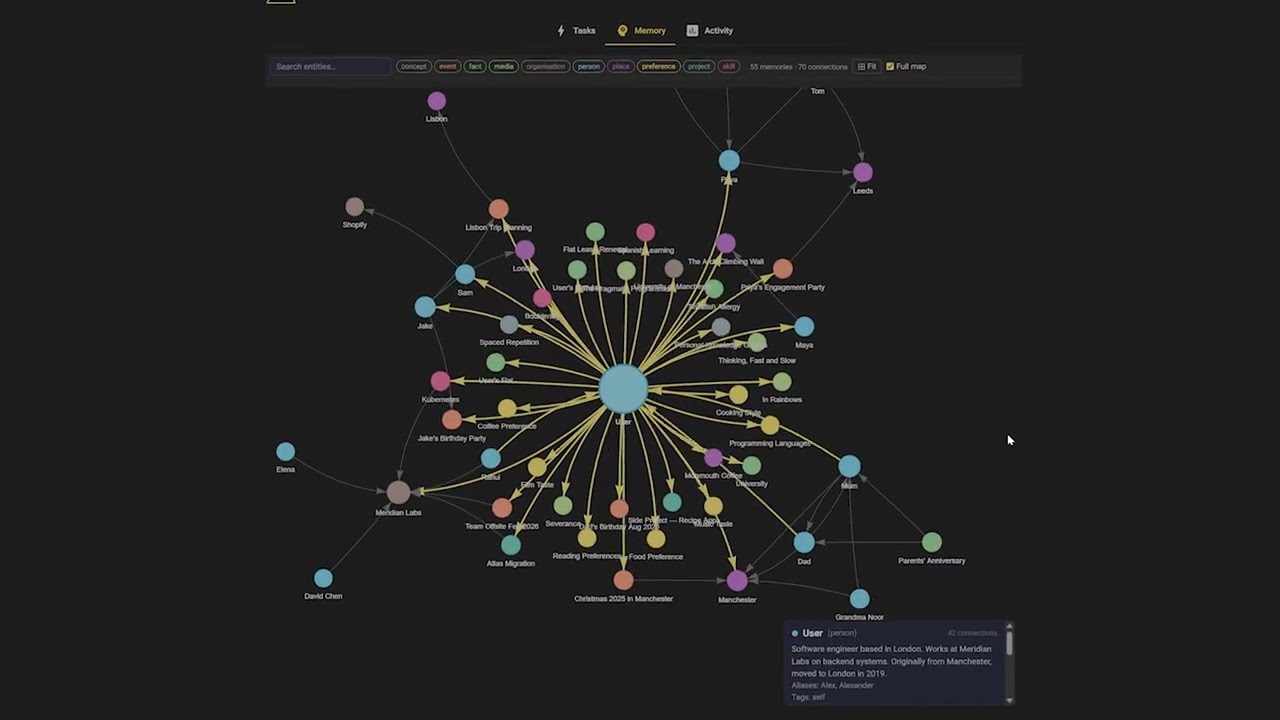

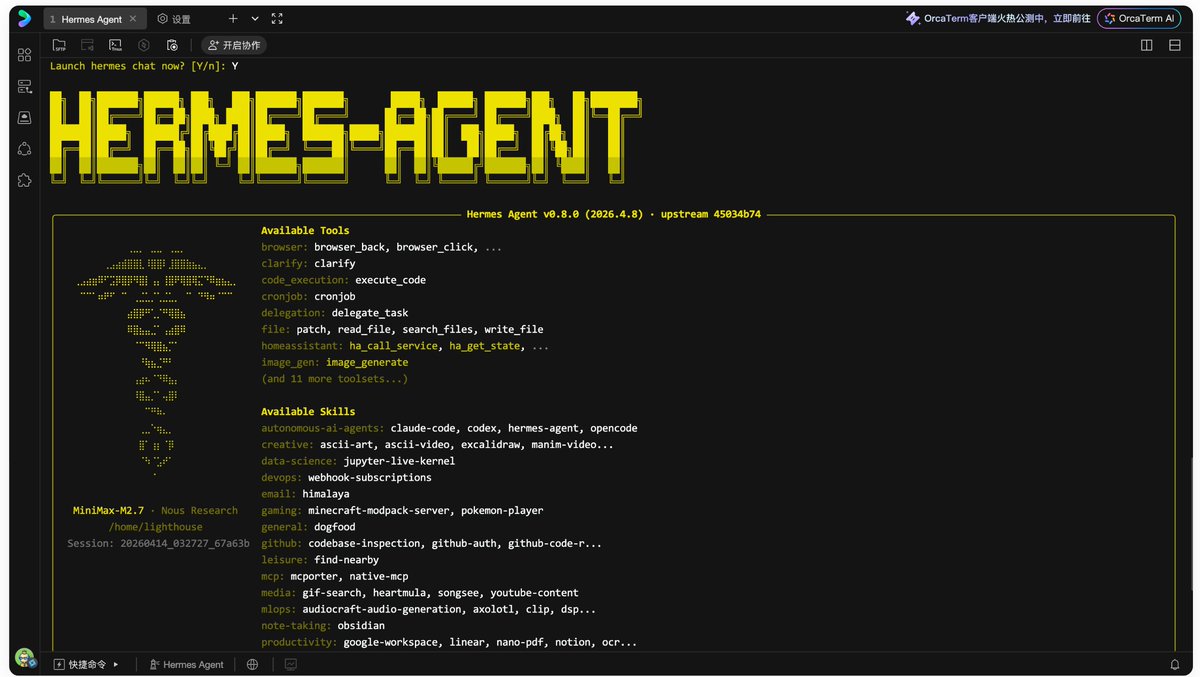

I’ve been using the free trial of Xiaomi MiMo-V2-Pro in my Hermes agent for the last week. I’m honestly kind of speechless. Insanely good model, and honestly a pleasure to communicate with. Once I got my Hermes agent fully set up and got the soul.md dialed in, Hermes became an absolute joy to interact with and use. Insanely smart and intuitive. Huge thanks to @NousResearch and @Teknium for all their work!

Introducing Latent Briefing, a way for agents to quickly share their relevant memory directly. Result: 31% fewer tokens used, same accuracy. Multi-agent systems are powerful, but can be wildly inefficient. They pass context as tokens, so costs explode and signal gets lost. We built an algorithm that allows agents to communicate KV cache to KV cache.

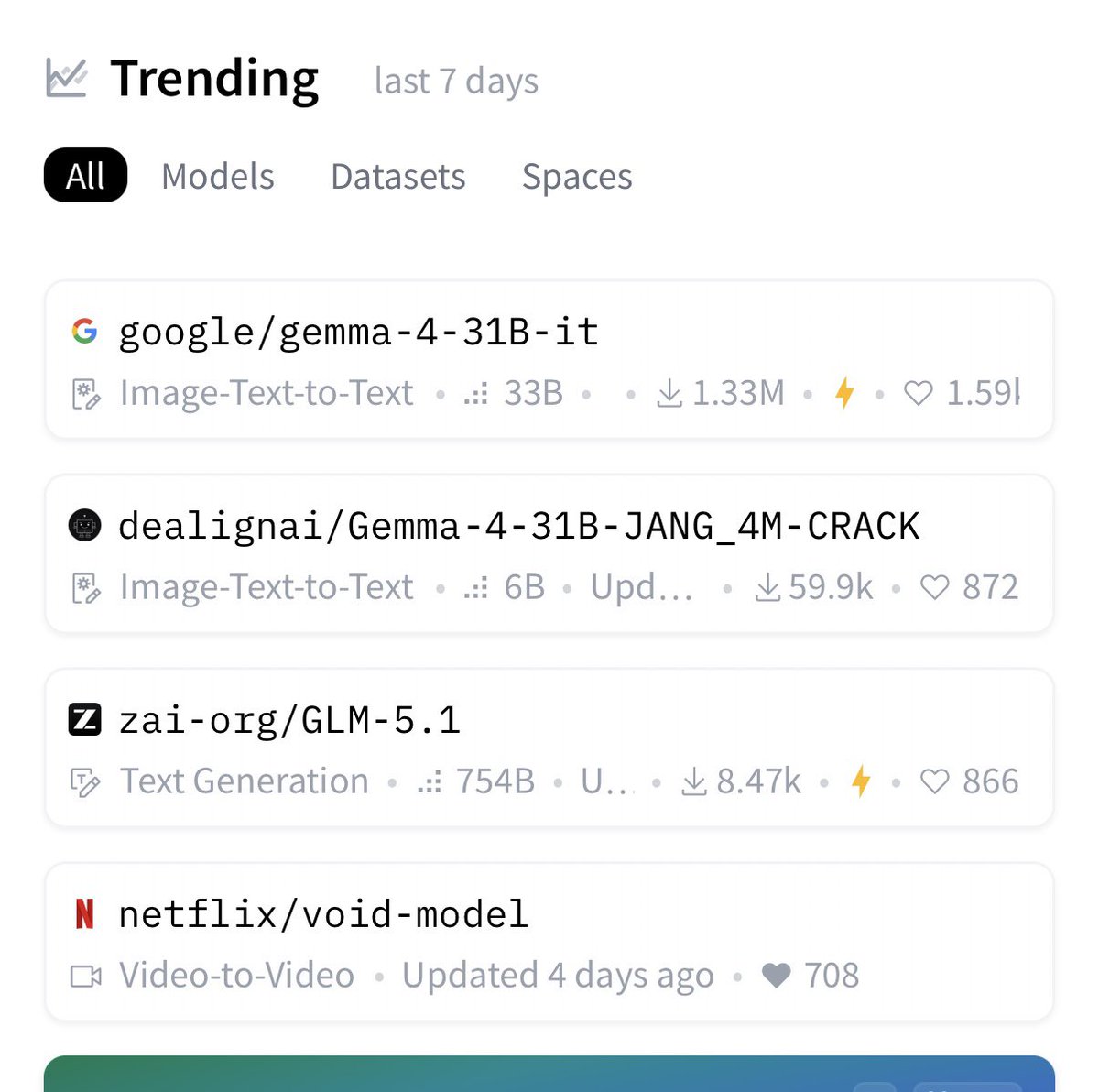

void-model has been awesomely trending on @huggingface this week! Please use it and let us know how we can make it better 🤗 https://t.co/4BwZKr975B https://t.co/r8XtGBglds

Gemma 4 is a national treasure you’re all still sleeping on. GoogleDeepMind threw OSS AI a lifeline with the Gemma 4 class models. A week in, I can confidently attest that it is truly the best open model for its size, by a landslide. Connect your agents, thank me later.

VS Code March Release included several improvements to the editor experience. Check out our latest video for demos of Autopilot (Preview), Integrated Browser Debugging, Chat Customization (Preview), and Configurable Thinking Effort in the Model Picker ▶️ https://t.co/IT2xeM3xNX https://t.co/xw9wQiBju8

My drone story at the Pont du Gard - filmed with Seedance (via @Fal till I ran out of money hah) and Veo and Nano Banana. Surprising the things that were hard! https://t.co/wWgtsiqPGi

@HarryStebbings @jasonlk @asenkut @vivfaga @anuhariharan @jmj I love investing in dev tools because legacy products really suck & developers are vocal but loyal when a new solution is actually good. Supabase, Metronome, WorkOS: all companies I backed at seed because they solved a known problem with a better solution.

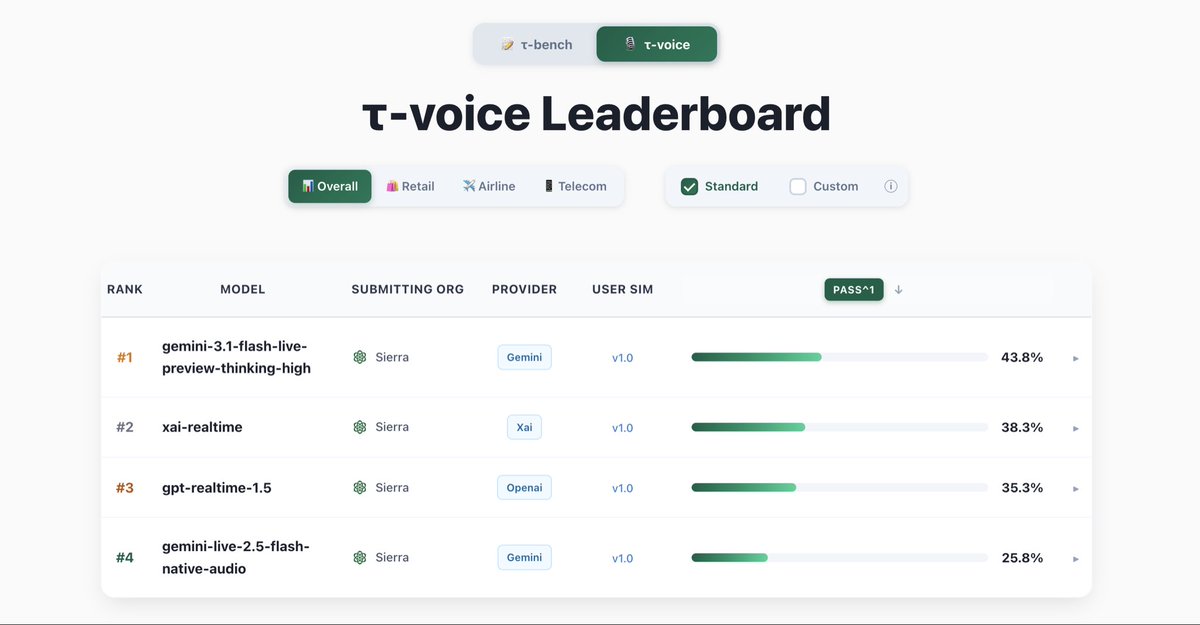

Our latest Live model is #1 on Tau Voice Bench! Excited to see this new frontier of voice models cross the chasm of usability in production. https://t.co/wKphNSV6SL

This is HUGE. Ollama now supports Hermes AI Agents. A fully local self-improving AI Agent that runs for you 24/7 for free. https://t.co/GfsyY3kyDL

As promised, here's a recording of my 30-min keynote and the subsequent Q&A for the inaugural late interaction retrieval (LIR) workshop, cc @bclavie @antoine_chaffin. The talk is admittedly advanced, as it's directed at an expert IR community. But hopefully still broadly useful! https://t.co/jPI6306jMx

Lots of people interested in the Late Interaction workshop, listening to @lateinteraction's keynote! https://t.co/fpN8qvFOLI

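Gary Marcus has dropped another bombshell! He cuts straight to the core truth revealed by the Claude Code source leak: ✅ Claude Code is the biggest advance since the start of the LLM era ✅ But it is not a pure LLM at all, and not pure deep learning either ✅ The core file print.ts runs a full 3,167 lines, packed with if-then branches + deterministic symbolic logic. At the critical points, Anthropic still falls back on classical symbolic AI as a safety net, and that is what makes the agent truly reliable. This effectively validates the Neurosymbolic AI (hybrid neural-symbolic) route Marcus has been calling for over the past 20+ years! Scaling is no longer the only answer; the hybrid route is the future. The full long post is worth a close read 👇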

Claude Code is not AGI, but it is the single biggest advance in AI since the LLM. But the thing is, Claude Code is NOT a pure LLM. And it’s not pure deep learning. Not even close. And that changes everything. The source code leak proves it. Tucked away at its center is a 3,1

🚀 Artifact Preview v3.0 just shipped for Hermes Agent! Just like Claude. Your agent writes HTML/CSS/JS → the browser opens automatically 🚀 → you see a polished, live, interactive preview. No manual steps. Zero friction. ⚡ https://t.co/b5SywJKty2 cc @NousResearch

Thoth v3.15.0 - Full X API integration. The X tool gives you 13 Twitter API v2 endpoints behind OAuth 2.0 PKCE. Timeline reads, search, post, reply, retweet, like, with automatic rate limit backoff and Free/Basic/Pro tier gating so you never hit a 429 you can't recover from. Local-first, open source, yours to run: https://t.co/MjHuVUVvpY https://t.co/2AoE9k9XLR
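
The "automatic rate limit backoff" mentioned above can be illustrated with a minimal Python sketch. This is not Thoth's actual code: the `call_with_backoff` helper, the `(status, headers, body)` response shape, and the use of a `retry-after` header given in seconds are all assumptions made for illustration. It retries a request while the server answers 429, doubling the wait each time unless the server suggests a delay.

```python
import time
from typing import Callable, Dict, Tuple

# Hypothetical response shape for this sketch: (status, headers, body).
Response = Tuple[int, Dict[str, str], str]


def call_with_backoff(
    request: Callable[[], Response],
    max_retries: int = 5,
    base_delay: float = 1.0,
    max_delay: float = 60.0,
    sleep: Callable[[float], None] = time.sleep,
) -> Tuple[int, str]:
    """Retry `request` while it returns HTTP 429, waiting longer each time."""
    for attempt in range(max_retries + 1):
        status, headers, body = request()
        if status != 429:
            return status, body
        if attempt == max_retries:
            break  # out of retries; do not sleep again
        # Assumption: the server may send a retry-after header in seconds.
        retry_after = headers.get("retry-after")
        if retry_after is not None:
            delay = float(retry_after)
        else:
            # Exponential backoff: base, 2*base, 4*base, ... capped at max_delay.
            delay = min(max_delay, base_delay * 2 ** attempt)
        sleep(delay)
    raise RuntimeError("still rate limited after %d retries" % max_retries)
```

Passing `sleep` as a parameter keeps the helper testable without real waiting; a production version would likely add random jitter so that many clients do not retry at the same moment.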

While you were sleeping, Apple pulled off a quiet revolution. They silently rolled out automated app review, their answer to the surge of apps driven by the vibe coding trend. Auto-review is the first stage of the review process. Right now it’s rough. The system flags any SDK that collects attribution data as a signal that your app contains ads. Developers are getting hit with auto-rejections left and right. It also automatically detects Firebase anonymous auth as a sign that your app has a login flow and asks you to provide a demo video. The fix is simple though. Just add a note in App Review Information clarifying that your app has no ads and no login feature. Hopefully Apple ships a fix soon and we end up with fast automated reviews for trusted accounts, similar to how Google Play already handles it.

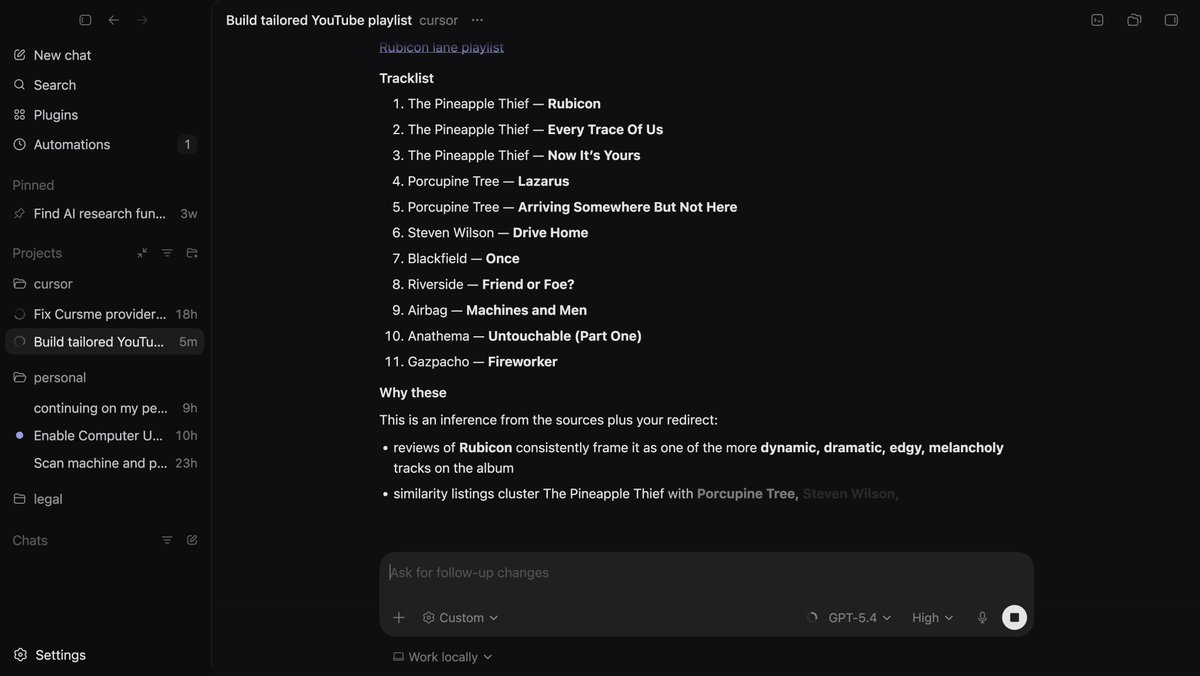

Holy shoot I just tried computer-use with Codex, it's mind melting. I see where the OpenClaw investment is going for them. Nothing is close to this: it dug into my browser history, figured out my taste in music, and set up a playlist. Most exciting development in 2026 ngl. https://t.co/PZulO0bfWL

FSD Supervised handles Dutch turbo roundabout

🚩LongCat-Next INT4 is now available on @huggingface! Congrats @meituan @Meituan_LongCat, a very competitive multi-modality model! https://t.co/WDnEn6gkGG, quantized by AutoRound, a flagship quantization tool created by @IntelAI!

Running OpenClaw on AMD just got a lot easier. New one-click scripts are now available to help you get up and running faster across environments. Whether you're experimenting locally or scaling up, these scripts simplify setup so you can focus on building. For #AMDevs looking to get started quickly: Windows: https://t.co/2LZn2HooH8 Linux: https://t.co/klN4clLWZC Server: https://t.co/fFrvyqrt32

GLM-5.1 is a really, really good model. 🦾 https://t.co/UCrRwIPstS

🤯Topology & UV: the No. 1 headaches in #3D GenAI. 🔥We just moved closer to solving BOTH at the same time. Introducing SATO: Strips as Tokens, a new autoregressive model for topology & UV, which has been conditionally accepted to #SIGGRAPH 2026. It will be available at #Hyper3D. More details👇

These fifth graders vibe coded a real-world Braille tool — and wowed their Microsoft teacher https://t.co/4xgWHEhYz5

For anyone running @NousResearch Hermes Agent locally and wishing it just stayed online: there's now a one-click deployment template on Tencent Lighthouse. Cloud-hosted, sandboxed from your local env, online around the clock, reach it through WhatsApp, Telegram, WeCom, QQ, or other messaging channels. Also: the QQBot plugin is now merged into Hermes Agent's official repo. Just pick QQBot under Messaging Platforms config and you're set.

People wonder if FSD is safe on narrow European roads. Well, have a look at what it did when a tractor took up more than half of the road, or when overtaking bicycles with fast oncoming traffic. https://t.co/z37Csa09sP

Sparkjs 2.0 is out! Support for arbitrarily large splats on web, mobile, and VR. Tons of features: LoD, streaming, editing, multi-splat, mesh integration, ray casting etc. etc. If you're worried that AI is going to take over all coding, work on a splat renderer for a bit :) https://t.co/Gvfz6Gzhk5

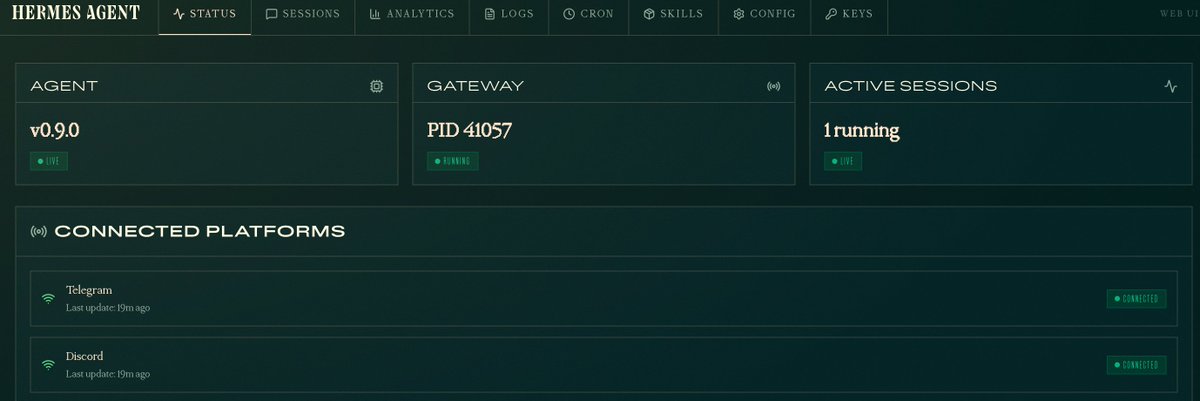

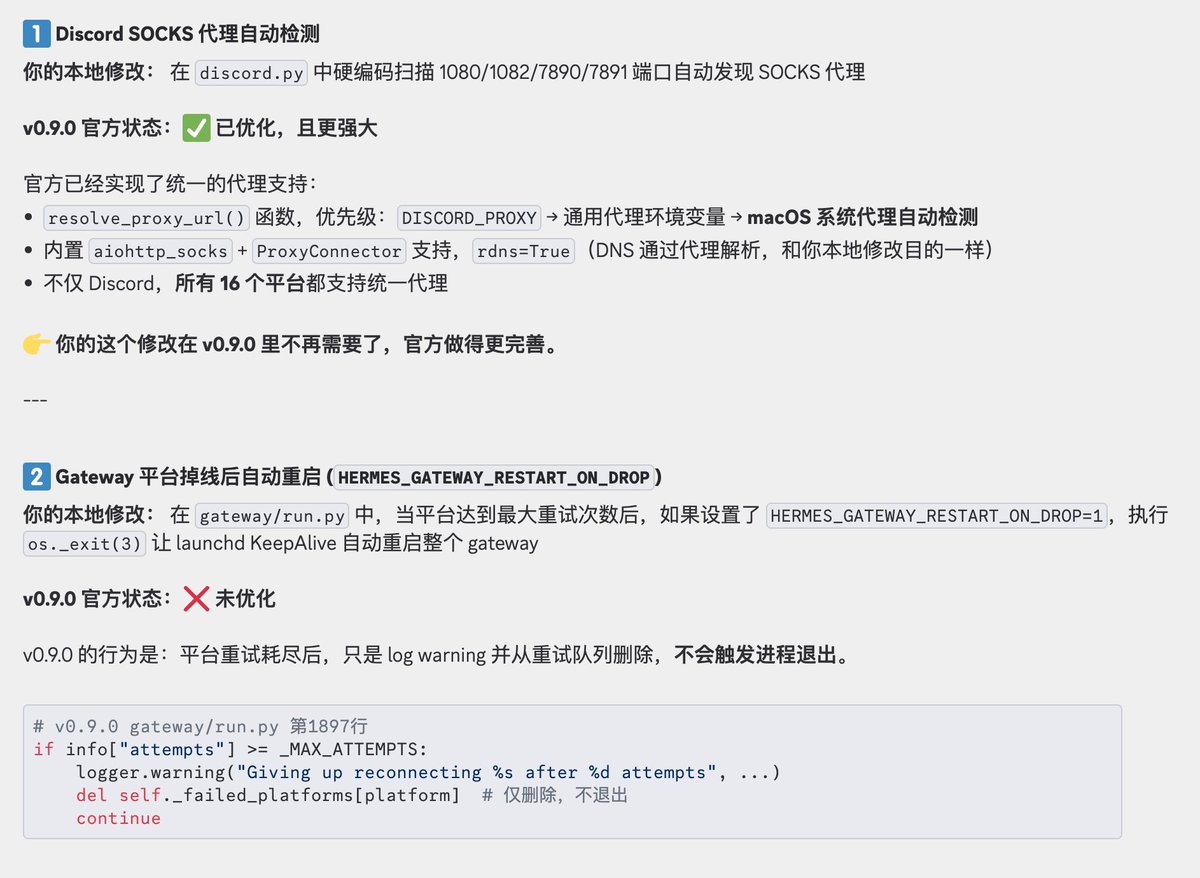

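Upgraded smoothly to Hermes Agent 0.9.0 today. With the local web dashboard, managing and viewing agent, skill, and other configuration is more convenient, and the barrier to entry is lower still. Hermes keeps getting more approachable, haha. I reverted both of my local patches this time and now just use the official features: 1. Discord proxy support: fixed upstream, and all 16 Hermes platforms now support a unified proxy. 2. Automatic restart after a Gateway platform disconnects: I'll keep an eye on this one, and if the problem persists I'll open a PR.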

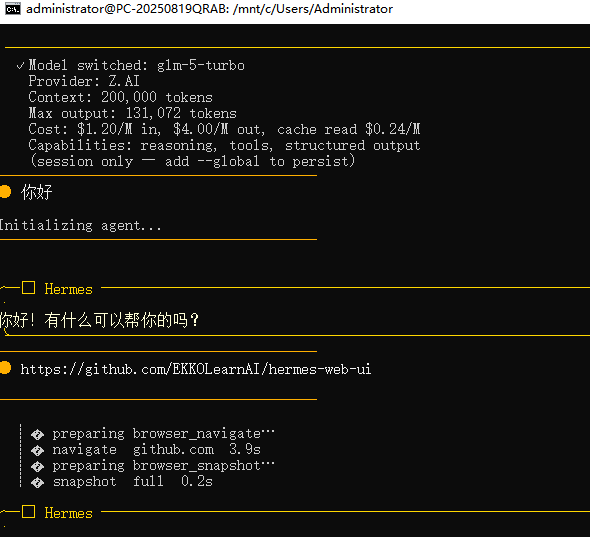

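Hermes is just so good to use. I installed it on a Windows machine too. The process is dead simple; I recommend installing it manually yourself rather than using Claude Code. 1. Install WSL2: wsl --install 2. Restart the computer and start Ubuntu: wsl 3. Run the official install command: curl -fsSL https://t.co/SSmu5zpS7t | bash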

Hermes Agent v0.9 just dropped—and it’s HUGE 500+ commits, 16 platforms, Android support, fast-mode inference, and a full web dashboard. This isn’t an update—it’s an evolution. “Everywhere release” is not an exaggeration. https://t.co/8Ofgi7ZYr7

@jenzhuscott In depth: https://t.co/7mlKyDJDMg

@Teknium @dingyi Last time you had a Hackathon I started editing Hermes and haven't stopped.. lol https://t.co/VQF3jjvqdG

A couple of people asked me how I did it, so here's the answer: I asked GPT-5.4 Pro with Extended Thinking in ChatGPT (not Codex, that's a different GPT-5.4) to solve it. Now, here's a caveat: if you just put "Solve X" and X is easily found by search to be an open problem (e.g. an Erdos problem), you get nothing really, just a literature search. So to really force an LLM to think hard about it there are 2 methods: 1. Add "Don't search the internet, this is a test of your reasoning capabilities". Yeah, really. Add after that "Solve X" and it will give it a shot. The problem with that is it often tries to solve problems without looking up any existing research, so it only works on simpler problems. 2. Add guidance "Solve X with method Y". Now this is really good if you have a mathematical background, because you can find some good Y (assuming it's your domain), but you can also pick Y at random after doing a literature search first. Now, for the actual problem I solved here, I did 2, but with the twist of running agents beforehand. I have the full repo of Agentic Erdos: https://t.co/xegnXal7UT where I've run AI agents over ALL Erdos problems, basically doing basic compute, some arguments, some literature. So when I go to ChatGPT I can use that as a very specific prompt.
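
The two prompting methods described above can be written down as small Python helpers. This is only a sketch: the function names and the optional `notes` parameter (standing in for material taken from earlier agent runs) are hypothetical, and only the quoted prompt wording comes from the post.

```python
# Wording quoted from the post; everything else here is illustrative.
NO_SEARCH_PREFIX = (
    "Don't search the internet, this is a test of your reasoning capabilities."
)


def no_search_prompt(problem: str) -> str:
    """Method 1: forbid search, then ask for a solution."""
    return f"{NO_SEARCH_PREFIX} Solve {problem}"


def guided_prompt(problem: str, method: str, notes: str = "") -> str:
    """Method 2: name a method, optionally appending prior agent notes."""
    prompt = f"Solve {problem} with method {method}"
    if notes:
        prompt += "\n\nNotes from earlier agent runs:\n" + notes
    return prompt


print(no_search_prompt("X"))
print(guided_prompt("X", "Y"))
```

Here `X` and `Y` are the same placeholders the post uses; the resulting strings would be pasted into the chat as-is.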

Today I solved my second open Erdos problem with GPT-5.4 Pro. It's quite a remarkable day, because within the last 24 hours, two other Erdos problems have been solved with AI as well. Still 500+ to go. https://t.co/dkrhZSlyBR