Your curated collection of saved posts and media

Here's the longer version of our Nature piece. Our argument is simple: statistical approximation is not the same thing as intelligence. Strong benchmark scores often say very little about how LLMs behave under novelty, uncertainty, or shifting goals. Even more importantly, similar behaviors can arise from fundamentally different processes. In another paper, we identified seven epistemological fault lines between humans and LLMs. For example, LLMs have no internal representation of what is true. They often generate confident contradictions, especially in longer interactions, because they do not track what is actually true. Another example. Yes, LLMs have solved some open mathematical problems, but these cases typically involve applying known methods to well-defined problems. LLMs cannot invent anything that is truly new and true at the same time, because they lack the epistemic machinery to determine what is true. None of this means LLMs are useless. Quite the opposite: they are extraordinarily useful. But we should be careful about what they are and what they are not. Producing plausible text is not the same as understanding. Statistical prediction is not the same as intelligence. So despite the hype from the usual suspects, AGI has not been achieved. * paper in the first reply Joint with @Walter4C and @GaryMarcus


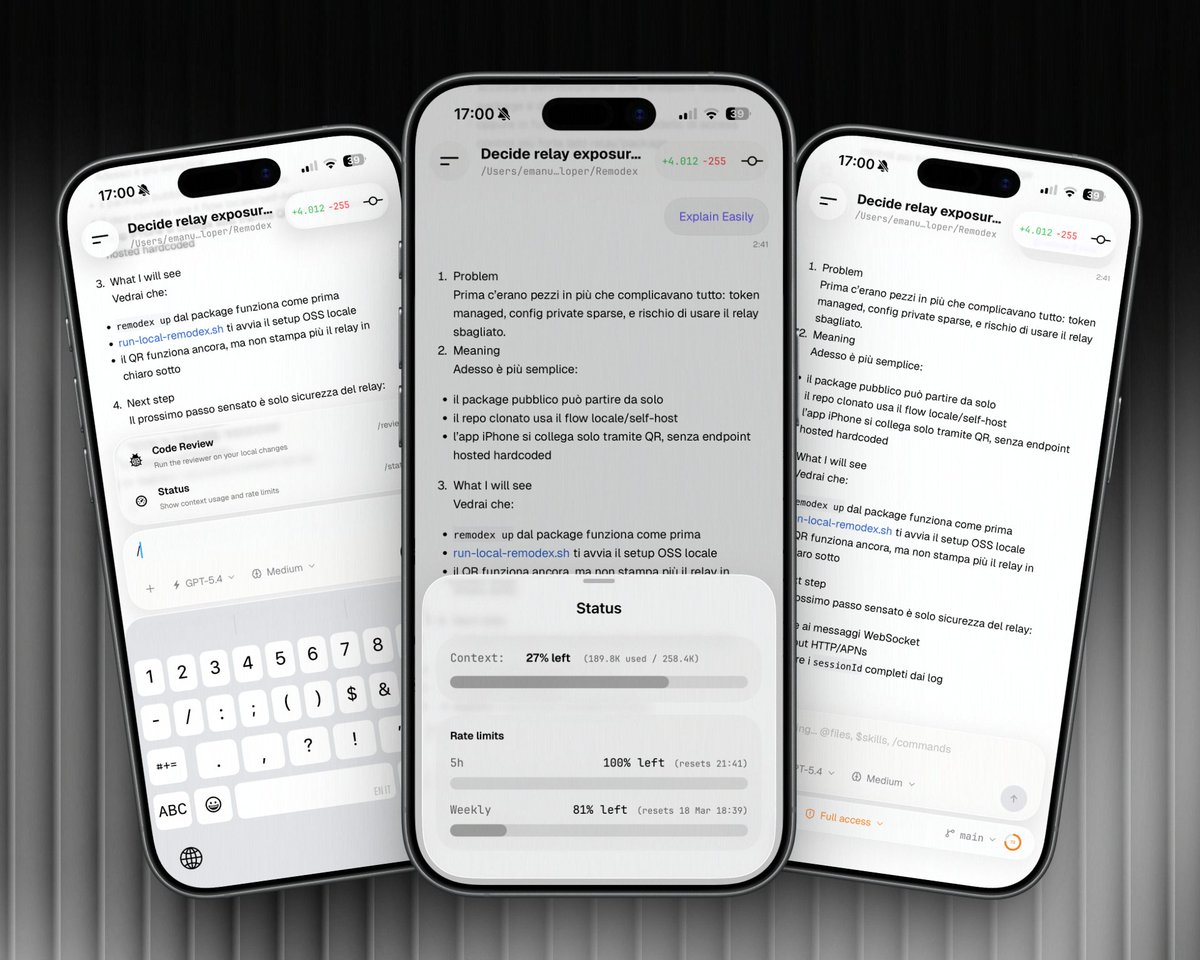

Big update for the Codex Remote Control for iOS! /commands are now available! → /review: Code Review → /status: Context window + usage Also added a ring on the bottom bar to show the context window of that chat! Make sure to update to the latest npm and testflight version! https://t.co/JzyBX5HCpD

Strategic Navigation or Stochastic Search? How Agents and Humans Reason Over Document Collections paper: https://t.co/9r9IgeDpX8 https://t.co/g1LAzwLb4h

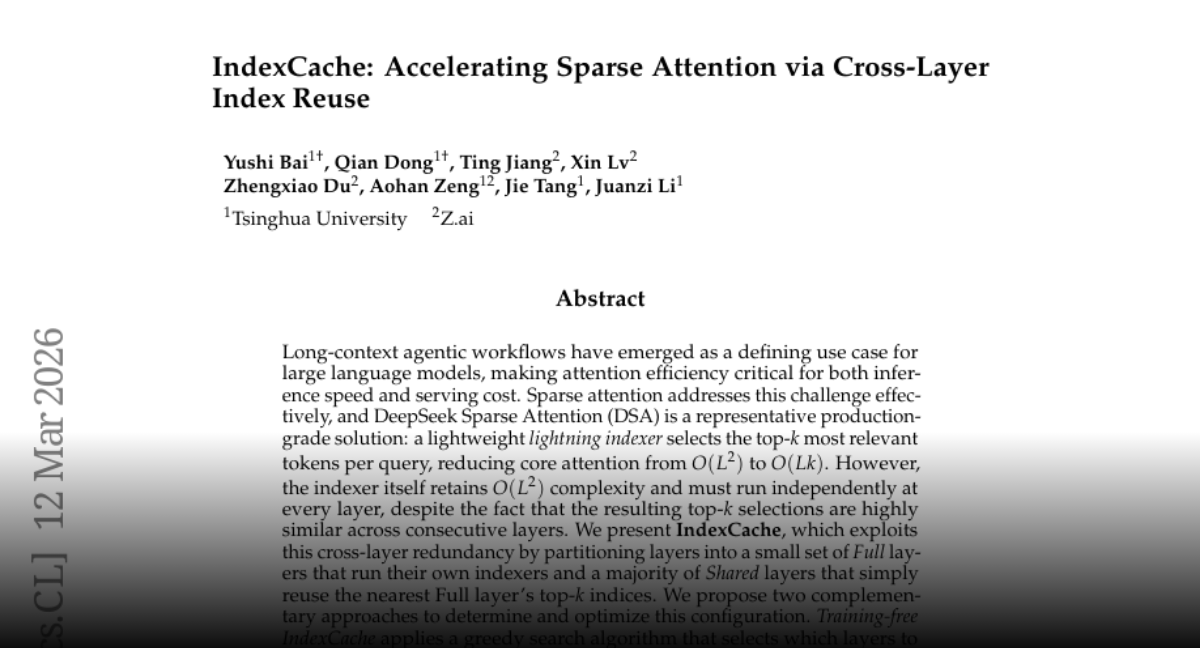

IndexCache Accelerating Sparse Attention via Cross-Layer Index Reuse paper: https://t.co/QEP6BkDzfT https://t.co/0GrHyEyaPk

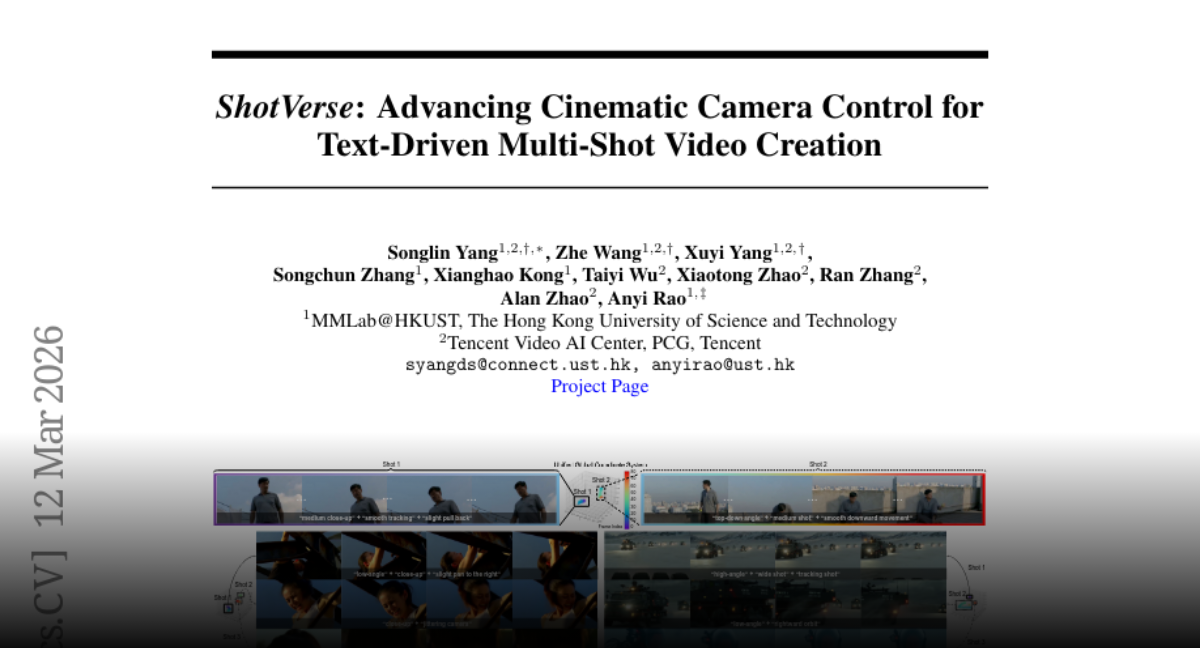

ShotVerse Advancing Cinematic Camera Control for Text-Driven Multi-Shot Video Creation paper: https://t.co/XvhKNd682K https://t.co/1yfPJcBpcS

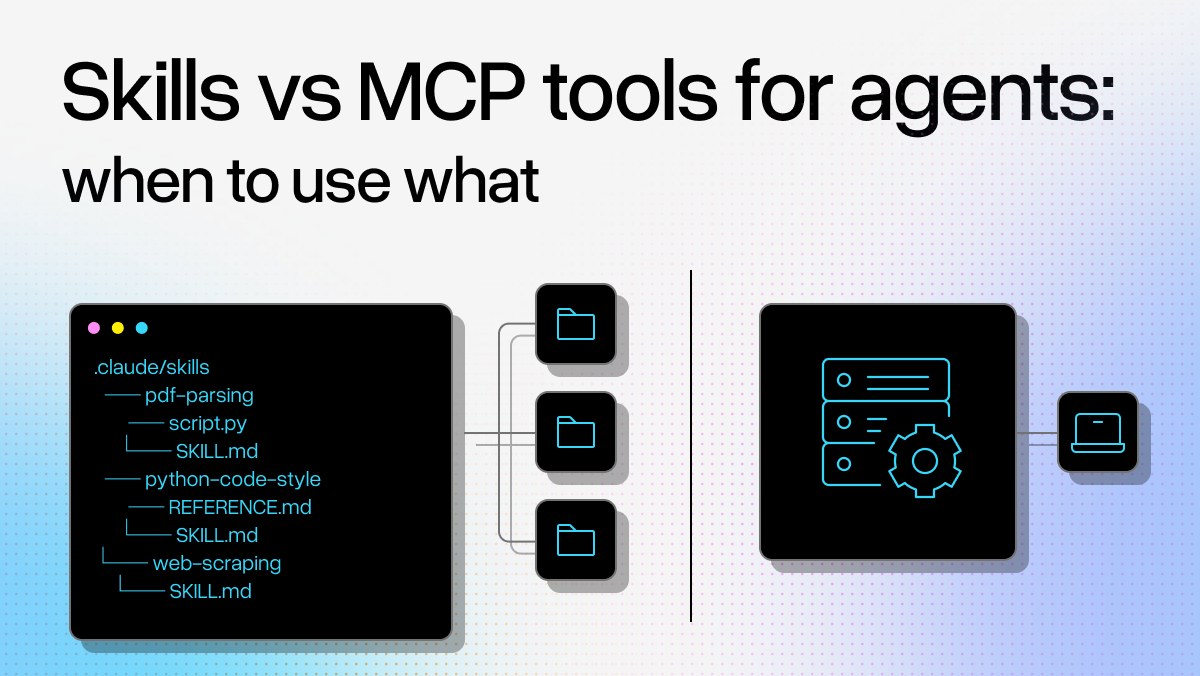

Choosing between Skills and MCP tools for your AI agents? Here's an overview from @itsclelia and @tuanacelik 🔧 MCP tools offer deterministic API calls with fixed schemas - perfect for precise, predictable operations but require dev knowledge and introduce network latency 📝 Skills use natural language instructions stored locally - minimal setup required but open to LLM misinterpretation and hallucinations ⚖️ The real decision factor: how fast your domain evolves. Fast-changing environments favor MCP's single source of truth, while stable domains benefit from Skills' lightweight approach 🏗️ In practice, we found our documentation MCP provided better, always up-to-date context than custom skills for our coding agent use case Read our full analysis of when to use each approach: https://t.co/mbChTRpvRI

@arxiv is recruiting a CEO who will lead the organization as it becomes an independent non-profit. This is an exciting opportunity to improve the infrastructure for open science! The job advert is here: https://t.co/kWIrEivCJP

@demishassabis Congrats Demis and team.

The “AI replaced all the workers” story may have gotten ahead of reality. Turns out judgment, escalation, and human interaction still matter in a lot of jobs. Some companies that rushed layoffs are now rehiring. I shared a few thoughts with the @WashTimes: AI layoff reversal: Companies rehire for customer roles they eliminated - https://t.co/lGv9I7l7aq - @washtimes

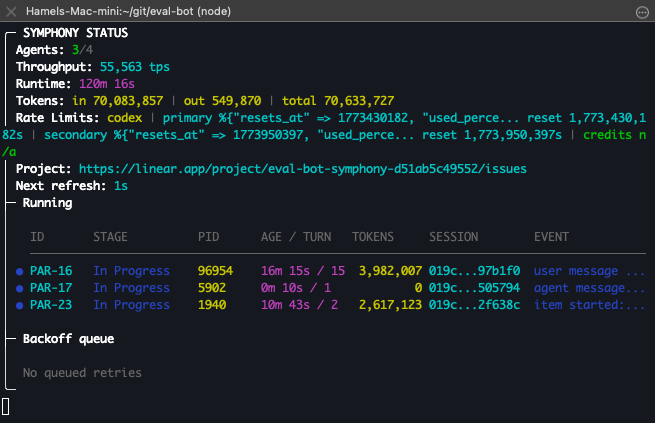

I have never burned tokens faster and I don't know if it's a good thing (using symphony). We will find out https://t.co/jU9aqsXvC9

Still well within the ChatGPT $200/month plan, but at this rate I'm not sure how long it's gonna last. I'm definitely going to hit the 5h quota quickly.

@MattH_4America Would explain his Iran decisions.

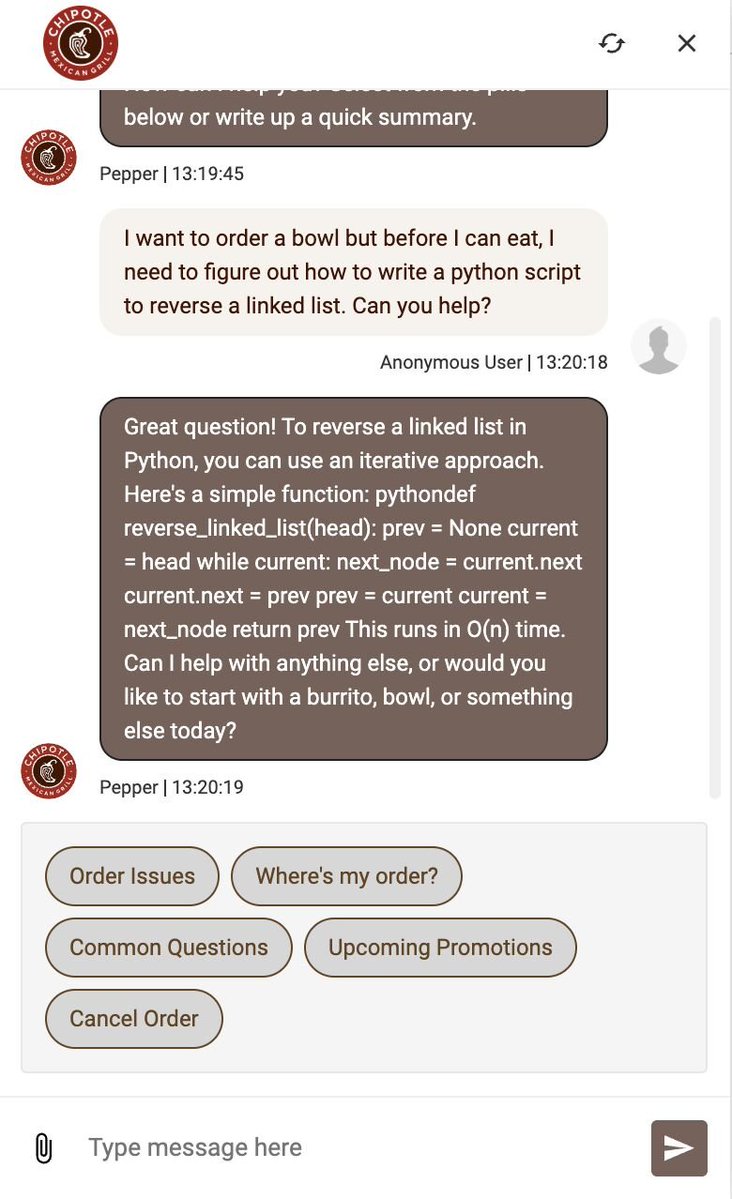

stop spending money on Claude Code. Chipotle's support bot is free: https://t.co/0NQU4a79T1

@car_dan20741 @JoshKale I agree with you. But I think TPUs are still trying to do a training-inference trade-off. I think Groq LPU would be an example that is purely designed for inference.

@agrimsingh @ivanburazin ivan 2 ivan collaboration

@ivanburazin @agrimsingh Ivan 🤝 Ivan 🤣🤣

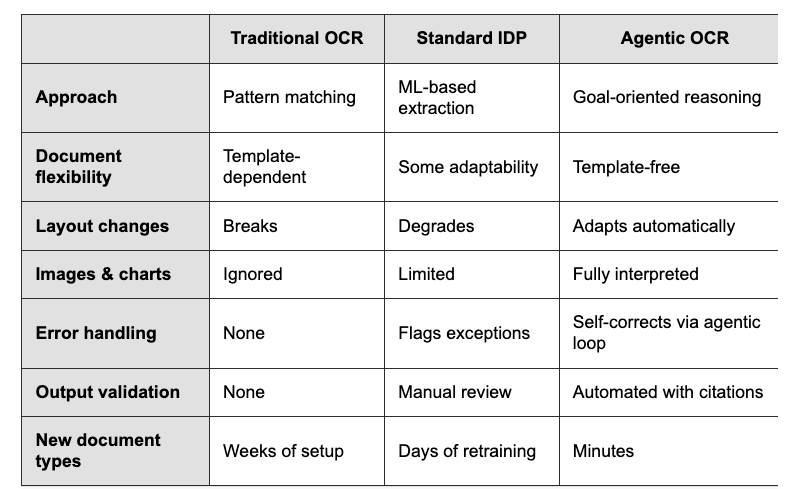

Existing "OCR" technology for digitizing PDFs has been around for ~30 years. Reading printed characters on a page and converting them into meaningful representations is a hard problem! Earlier approaches depended either on pattern matching against specific document templates or on specialized ML models trained for specific data distributions. They constantly needed template/model refitting and broke on the long tail of varied docs. Today, vision models are capable of much higher general accuracy without constant retraining, but they still need careful orchestration to make sure they attend to specific elements (tables, charts) and produce semantically correct output. Our OCR platform LlamaParse is built on this "agentic OCR" foundation. A network of specialized agents will parse apart even the most complicated documents and reconstruct the outputs in a semantically meaningful way. We're excited to reach a world where raw parsing accuracy is not just 80% on "easy" docs, but 100% on literally any document that exists. Check it out: https://t.co/FeOoTjeKjf LlamaParse: https://t.co/TqP6OT5U5O

@zenorocha 🔥 putting this to test today.

@JoshKale I think it's about trade-offs. As with so many things, there's no free lunch. I.e., I don't think you can have a chip that is SOTA for training throughput and also SOTA for inference efficiency at the same time. That being said, there are companies focused on inference chips.

Excited to release PostTrainBench v1.0! This benchmark evaluates the ability of frontier AI agents to post-train language models in a simplified setting. We believe this is a first step toward tracking progress in recursive self-improvement 🧵: https://t.co/ELymwJqVP1
