Your curated collection of saved posts and media

Will AI replace human jobs? @AndrewYang is convinced. ⬇️@thehill @NewsNation https://t.co/LEhuwcScQ3

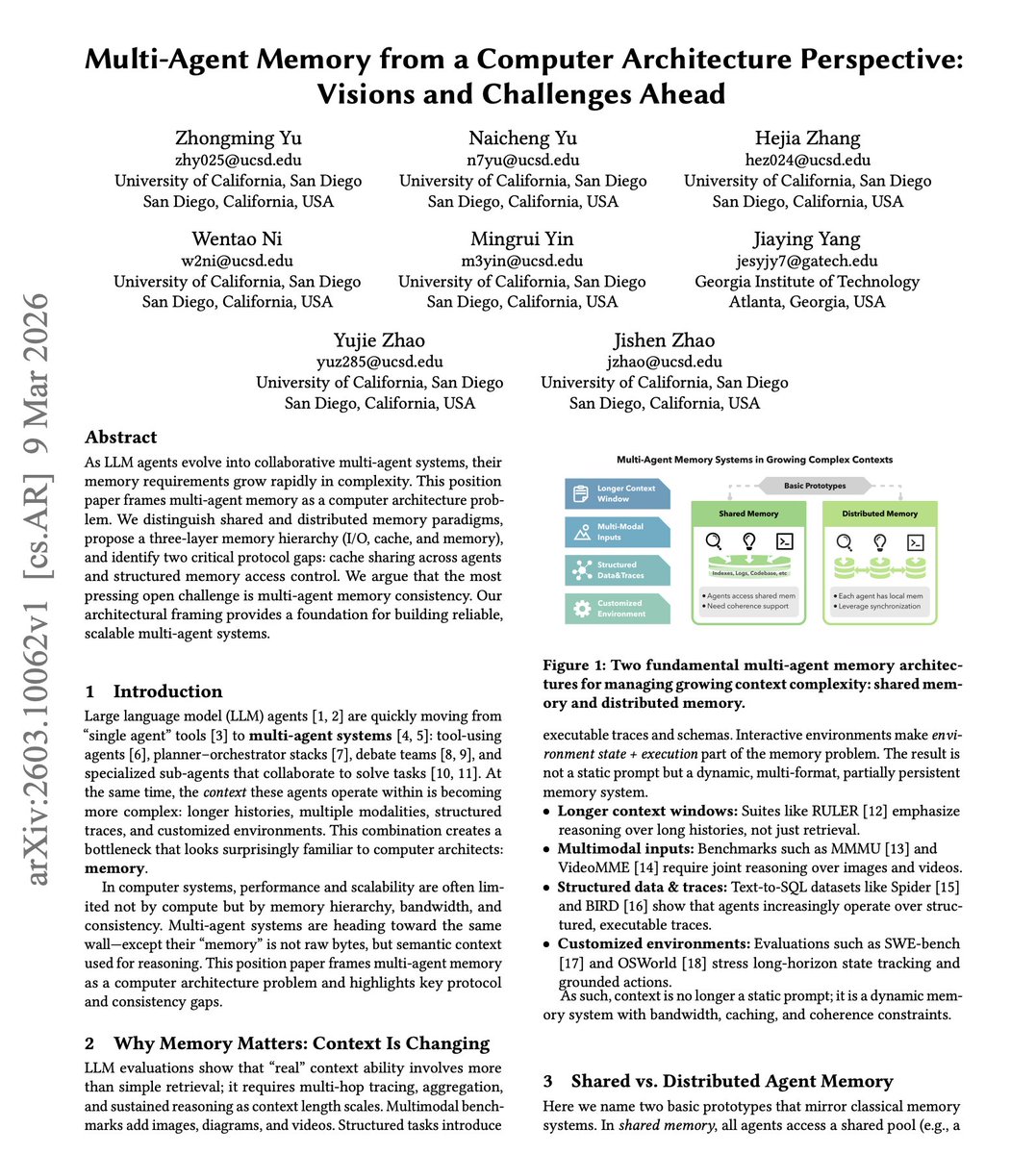

Memory is truly a game-changer for AI agents. Once I had memory set up correctly for my proactive agents, their reasoning, skills, and tool usage improved significantly. I use a combination of semantic search and keyword search (Obsidian vaults). Here is a report with a helpful framing for anyone building with memory and multi-agent systems: it proposes viewing multi-agent memory as a computer architecture problem. The paper distinguishes shared and distributed memory paradigms, proposes a three-layer memory hierarchy (I/O, cache, and memory), and identifies two critical protocol gaps: cache sharing across agents and structured memory access control. Agent memory systems today resemble human memory in that they are informal, redundant, and hard to control. As agents evolve into collaborative multi-agent systems, their memory requirements grow rapidly in complexity. Context is no longer a static prompt; it is a dynamic memory system with bandwidth, caching, and coherence constraints. The largest open challenge the paper identifies is multi-agent memory consistency: multiple agents reading from and writing to shared memory concurrently raise the classical problems of visibility, ordering, and conflict resolution. Memory should be seen not as raw bytes but as semantic context used for reasoning. Paper: https://t.co/k8hdSuZY0F Learn to build effective AI agents in our academy: https://t.co/1e8RZKs4uX
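The consistency problem flagged in the post (visibility, ordering, conflict resolution) has a classical answer borrowed from databases: optimistic concurrency control, where every write states the version it was based on and stale writes are rejected. The Python sketch below applies that idea to a toy shared memory for agents. It is not the paper's protocol: `SharedMemory`, `Entry`, `VersionConflict`, the key, and the agent names are all hypothetical, and a single in-process lock stands in for whatever ordering a real distributed store would have to provide.

```python
from __future__ import annotations

from dataclasses import dataclass
from threading import Lock


@dataclass(frozen=True)
class Entry:
    value: str
    version: int
    writer: str


class VersionConflict(Exception):
    """Raised when an agent writes based on a stale version of a key."""


class SharedMemory:
    """Toy shared memory for agents using optimistic concurrency control.

    Each key carries a version number. A writer must say which version it
    read; if another agent has written since, the write is rejected so the
    caller can re-read and resolve the conflict instead of overwriting it.
    """

    def __init__(self) -> None:
        self._data: dict[str, Entry] = {}
        self._lock = Lock()  # serializes access, giving writes one global order

    def read(self, key: str) -> Entry | None:
        with self._lock:
            return self._data.get(key)

    def write(self, key: str, value: str, writer: str, expected_version: int) -> Entry:
        with self._lock:
            current = self._data.get(key)
            current_version = current.version if current is not None else 0
            if current_version != expected_version:
                raise VersionConflict(
                    f"{writer} expected v{expected_version}, found v{current_version}"
                )
            entry = Entry(value, current_version + 1, writer)
            self._data[key] = entry
            return entry


mem = SharedMemory()
mem.write("auth_flow", "use OAuth", writer="planner", expected_version=0)
seen = mem.read("auth_flow")  # planner and executor both read version 1
mem.write("auth_flow", "token expires in 1h", writer="executor", expected_version=seen.version)
try:
    mem.write("auth_flow", "use API key", writer="planner", expected_version=seen.version)
except VersionConflict as err:
    print(err)  # planner expected v1, found v2
```

Rejecting the stale write forces the planner to re-read version 2 and decide how to merge, instead of silently overwriting the executor's update. Last-writer-wins would be simpler, but it hides exactly the conflicts the post says need resolving.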

“Companies don’t want to chase the latest bugs.” Chris Lattner, CEO of @Modular, on what AI infrastructure customers actually want: “They want reliable systems so they can focus on their real problems.” “They also want choice: the ability to run workloads across different types of silicon.” “If we can improve total cost of compute by 40%, that’s 40% more capacity you don’t need to buy.”

SAVE ACT: Once Senators return from their long weekend, Leader Thune will hold a performative vote that he knows won't pass. Instead of forcing Democrats to actually filibuster or change the cloture rules, he's going to be able to pretend he has actually done his job. Democrats will be able to claim (correctly) that they didn't vote against the Save America Act but instead voted against closing debate. Republicans will be able to claim they voted for the act while actually voting to close debate on the act. Both sides get to claim they did something that never happened: the act was never given an up-or-down vote itself. Instead, the Senate will have just argued about procedural nonsense. Also, this performative vote will take time, time that could have been spent restoring pay for the thousands of federal workers who haven't been paid in a month.

@elonmusk The SAVE Act is a fork in the road moment for Western Civilization. https://t.co/PhZUQ3oha8

Create anything you can imagine with Grok Imagine✨ New tools let you choose the video size and quality, add different images to a single video and seamlessly combine them, and extend the video's length. https://t.co/5pHlqqqnAj

I brought this painting to life using Grok. I could play around with this for days. I'm posting my AI art and satire pictures over on @TheWokeObserver, my new account, so I don't clutter your feed with Grok stuff here. https://t.co/meS3gMu78q

What does real AI-assisted development look like? In this episode of @seradio, Scott Hanselman (@shanselman) dives into agents, prompts, and why developers still need strong fundamentals. 🎧 Listen: https://t.co/xLFLlL1WLw

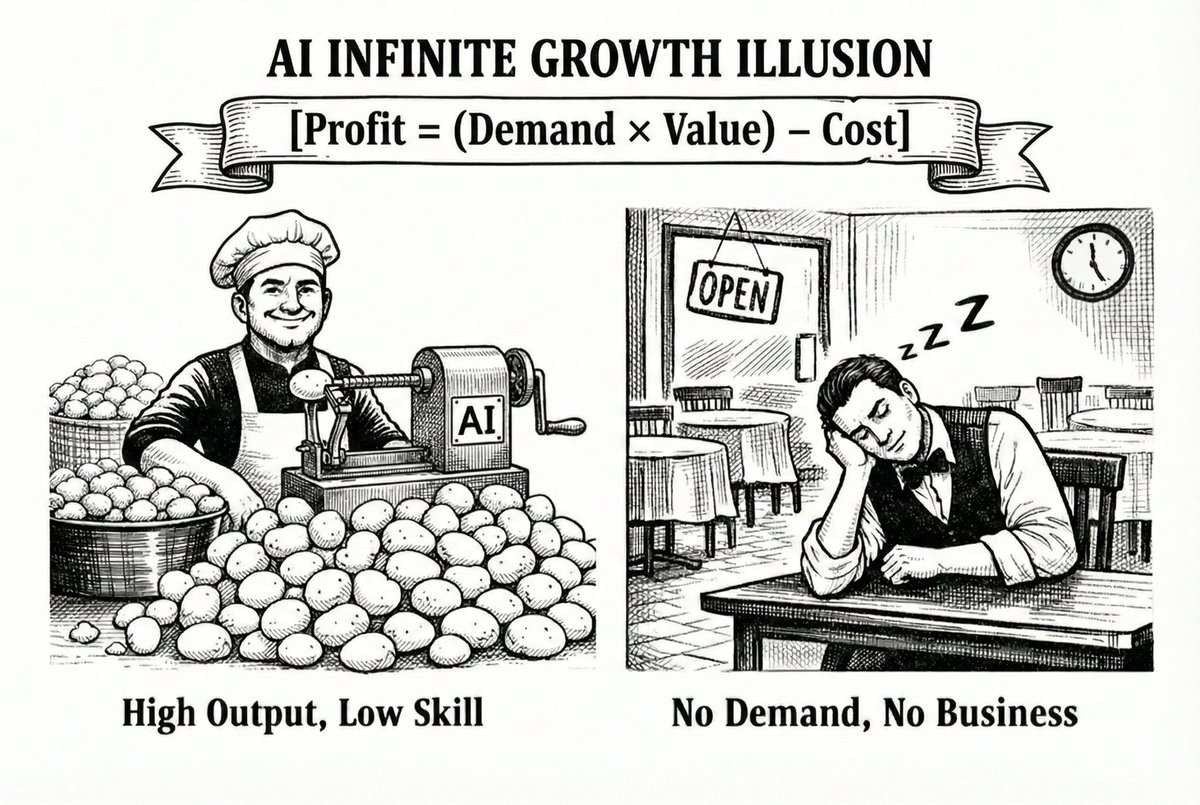

AI Tokenomics (the non-AGI version)
1) For Leaders
[Profit = Value - Cost]
Value = Demand × λ
λ = Competence × Leverage
Leverage = market share + influence + moat
Ceilings to smash: Value > Cost, Demand > 0, Leverage > 0
Notice we ignored Competence? Intentional. That’s the chasm between McDonald’s (scalable volume, low λ ceiling) and a Michelin-star street food stand (elite taste, tiny scale, but high λ per unit).
AI multiplies what’s already there. It doesn’t conjure wealth from thin air.
No demand → no business.
No leverage → no escape velocity.
The AI bubble is what happens when leverage is optimized while demand is still zero.
2) For Builders
[Competence = Skill × Experience × Taste]
Experience = scars + failures + hard knocks
Taste = judgment AI can’t fake (yet)
AI amplifies your skill, experience, and taste.
AI is NOT replacing coders. It’s replacing the ones with below-average competence.
Your Competence level sets the long-term ceiling. AI is widening the gap between top and mediocre builders faster than ever.
Final thoughts: AI is like a turbine engine. Attach it to a rocket → Mars. Attach it to a bicycle → faster trip to nowhere.
--- Sunday explainer, served raw on X. @gerardsans
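The tokenomics formulas are qualitative, but encoding them shows why their multiplicative structure matters: Demand multiplies everything downstream, so when it is zero no amount of Leverage produces Value. A minimal Python sketch, assuming every factor can be scored as a plain number; the `Business` class and all figures are hypothetical and only illustrate the arithmetic, not a real valuation method.

```python
from dataclasses import dataclass


@dataclass
class Business:
    """Hypothetical scores for the post's toy model (not real metrics)."""

    demand: float
    competence: float
    market_share: float  # the three Leverage terms
    influence: float
    moat: float
    cost: float

    @property
    def leverage(self) -> float:
        # Leverage = market share + influence + moat
        return self.market_share + self.influence + self.moat

    @property
    def lam(self) -> float:
        # λ = Competence × Leverage
        return self.competence * self.leverage

    @property
    def value(self) -> float:
        # Value = Demand × λ
        return self.demand * self.lam

    @property
    def profit(self) -> float:
        # Profit = Value - Cost
        return self.value - self.cost


# High leverage, zero demand: Value collapses to 0 and profit is just -Cost.
bubble = Business(demand=0, competence=2, market_share=5, influence=5, moat=5, cost=10)
print(bubble.value, bubble.profit)  # 0 -10

# Modest leverage with real demand clears the Value > Cost ceiling.
niche = Business(demand=10, competence=3, market_share=0.5, influence=0.5, moat=1, cost=20)
print(niche.value, niche.profit)  # 60.0 40.0
```

The first case is the post's "bubble" scenario: plenty of leverage, zero demand, so profit is simply the negative of cost.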

@codyschneiderxx FYI

@yoobinray Lol… this is the agentic AI equivalent of telling someone back in the day ‘Just Google it’ and then smugly declaring, ‘I’ve just changed your life.’ Bro, you didn’t.

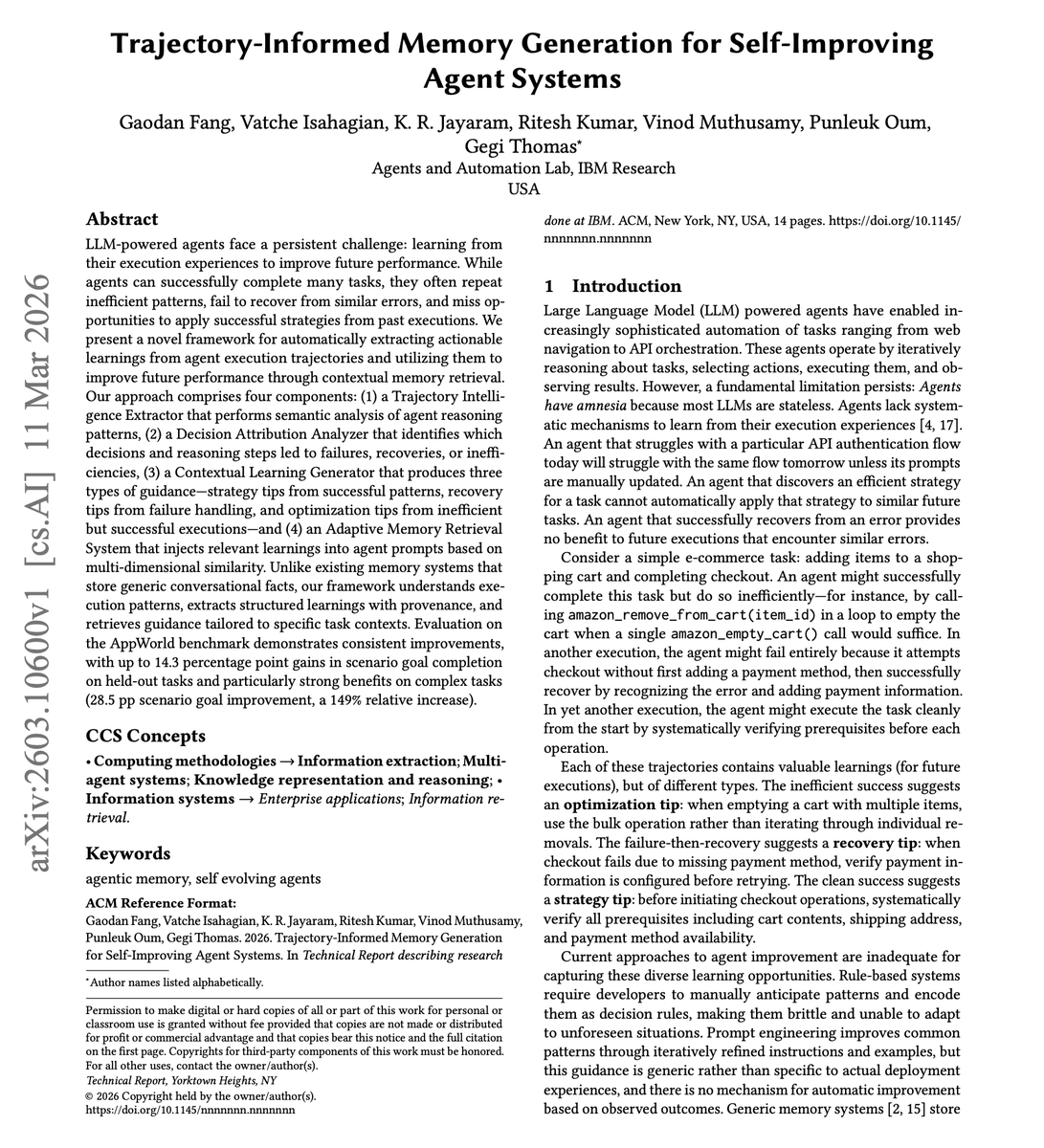

New research from IBM Research on Self-Improving Agents. Agents have "amnesia." An agent that struggles with a particular API authentication flow today will struggle with the same flow tomorrow unless manually updated. This paper introduces a framework for automatically extracting actionable learnings from agent execution trajectories and using them to improve future performance through contextual memory retrieval. The system generates three types of guidance: strategy tips from successful patterns, recovery tips from failure handling, and optimization tips from inefficient but successful executions. A Trajectory Intelligence Extractor performs semantic analysis of agent reasoning patterns while a Decision Attribution Analyzer traces backwards through reasoning steps to identify root causes. On the AppWorld benchmark, the memory-enhanced agent achieves 73.2% task goal completion compared to 69.6% baseline (+3.6 pp) and 64.3% scenario goal completion compared to 50.0% (+14.3 pp). The benefits scale with task complexity. Difficulty 3 tasks show the most dramatic improvements: +28.5 pp on scenario goals (19.1% to 47.6%), a 149% relative increase. Why it matters: Agents that learn from their own execution traces, not just from training data, can systematically improve without manual prompt engineering. The self-reinforcing cycle of better tips producing better trajectories producing better tips is a practical path toward self-improving agent systems. Paper: https://t.co/8IOIeEgFM5 Learn to build effective AI agents in our academy: https://t.co/LRnpZN7L4c
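The extract-then-retrieve loop described in the IBM post can be sketched in a few lines of Python. This is a toy under stated assumptions, not IBM's framework: the `classify` rule (a successful run that hit errors gives a recovery tip, a successful run over a hypothetical step budget gives an optimization tip, any other success gives a strategy tip) is a made-up heuristic standing in for the paper's Trajectory Intelligence Extractor, and Jaccard word overlap stands in for its semantic retrieval. All class names, tasks, and tip texts are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class TipKind(Enum):
    STRATEGY = "strategy"          # from successful patterns
    RECOVERY = "recovery"          # from failure handling
    OPTIMIZATION = "optimization"  # from inefficient but successful runs


@dataclass
class Trajectory:
    task: str
    succeeded: bool
    had_errors: bool
    steps: int


@dataclass
class Tip:
    kind: TipKind
    task: str
    text: str


def classify(traj: Trajectory, step_budget: int = 10) -> TipKind | None:
    """Hypothetical rule mapping a finished run to a tip category."""
    if not traj.succeeded:
        return None  # this toy only learns from runs that ended in success
    if traj.had_errors:
        return TipKind.RECOVERY
    if traj.steps > step_budget:
        return TipKind.OPTIMIZATION
    return TipKind.STRATEGY


def extract_tip(traj: Trajectory, lesson: str) -> Tip | None:
    kind = classify(traj)
    return Tip(kind, traj.task, lesson) if kind is not None else None


def _words(text: str) -> set[str]:
    return set(text.lower().split())


def retrieve(memory: list[Tip], task: str, k: int = 2) -> list[Tip]:
    """Return up to k tips whose source task shares words with the new task."""
    query = _words(task)

    def score(tip: Tip) -> float:
        other = _words(tip.task)
        union = query | other
        return len(query & other) / len(union) if union else 0.0  # Jaccard

    ranked = sorted(memory, key=score, reverse=True)
    return [tip for tip in ranked[:k] if score(tip) > 0]


runs = [
    (Trajectory("pay venmo bill", succeeded=True, had_errors=True, steps=6),
     "Refresh the auth token before retrying after a 401."),
    (Trajectory("list spotify playlists", succeeded=True, had_errors=False, steps=25),
     "Fetch larger pages to cut the number of calls."),
]
memory = [t for t in (extract_tip(traj, lesson) for traj, lesson in runs) if t is not None]

for tip in retrieve(memory, "pay venmo friend"):
    # prints: recovery -> Refresh the auth token before retrying after a 401.
    print(tip.kind.value, "->", tip.text)
```

Swapping the Jaccard score for embedding similarity and the `classify` rule for a model-driven analysis would move this closer to what the post describes; the loop itself stays the same: run, extract a tip, store it, and retrieve it for the next similar task.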

Third workout this morning on the path to breaking my bench press max: four warmups (8x bar, 95, 135, 185), then 7x225, 2x275, 1x295. I think I could go a little higher. Goal is to reach and break 320, which isn't too far off. I’ll start recording when I get closer.

@explorersofai AI is not sentient. On the other hand, there is no definite philosophical argument that a chair is not sentient

man @tobi from Shopify is so deep into the @huggingface stack for qmd!!
🤗 Dropping open models and datasets on the hub
📉 Using Trackio from @Gradio for training logs
💼 Hugging Face Jobs for finetuning / GPUs
not to mention TRL, accelerate, llama.cpp etc. Talk about ELITE!!! https://t.co/bIQi7WBbNv

Join @JamesMontemagno and @cinnamon_msft as they spice up your VS Code workflow with the latest GitHub Copilot AI features. From agent mode to custom instructions, we're turning up the heat on developer productivity. Tune in at 8 am PT! https://t.co/zlgOT6j0kL https://t.co/XsybHiOqIM

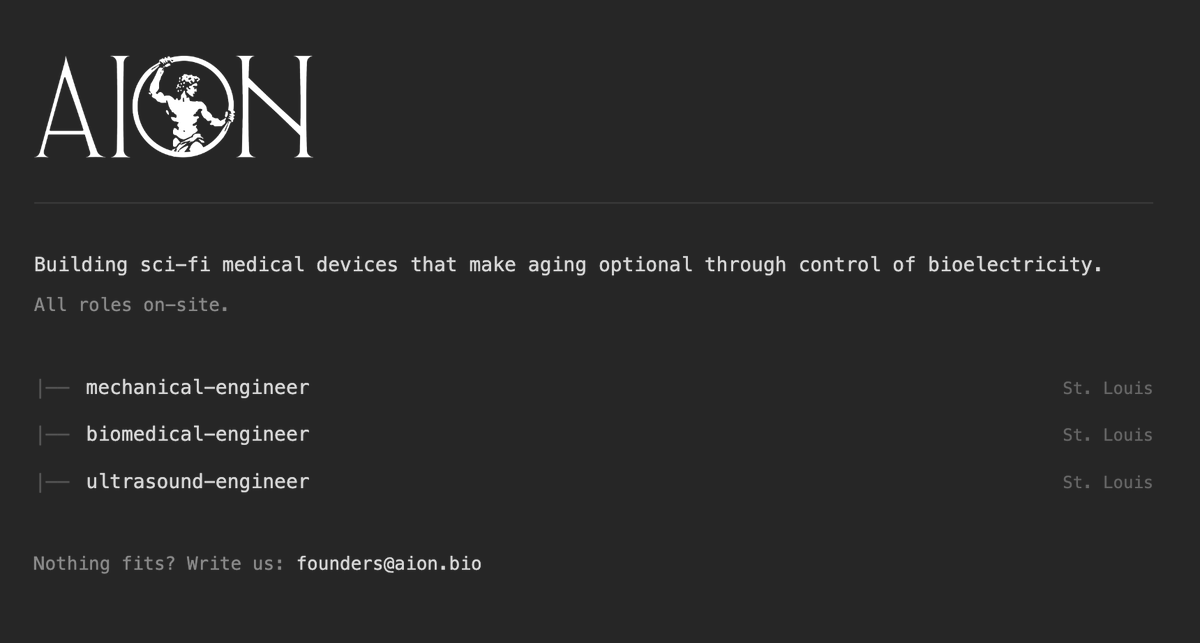

https://t.co/BF5jCmSYJU hiring! https://t.co/iwmtIGxTkq

Portfolio company hiring:

@charvispeaks Interesting. Has it replaced Figma and other tools for you?

You want to know how we know that Thune is a major problem? The Media NEVER attacks him.