Your curated collection of saved posts and media

This dataset was crafted with a fine-tuned @NousResearch Hermes 4.3 36B model run on an RTX 6000 Blackwell Server Edition. (We simply love @NousResearch, but this by no means signifies a partnership.) PMI-relevant results: 60.6% TruthfulQA (Delta: +11.7% vs Qwen3.5-4B) & 71.5% HellaSwag on a 4B fine-tuned model.

Stanford just tested whether LexisNexis and Thomson Reuters’ AI legal research tools are really “hallucination-free,” as they claim. Spoiler: not even close. Here’s what the study found. https://t.co/lb2CekFeWn

Spark 2.0 is here! 🚀 We’re redefining what’s possible on the web with a streamable LoD system for 3D Gaussian Splatting. Built on Three.js, you can now stream massive 100M+ splat worlds to any device from mobile to VR using WebGL2. All open-source. Dive into the tech 👇 https://t.co/VOd6V0Wz1s

Thanks @_akhaliq for sharing our work! Try and put your robot in today at: https://t.co/i5HkcE1dqs https://t.co/A1P8QRsMhQ

Nvidia released Lyra 2.0 on Hugging Face: Explorable Generative 3D Worlds. Paper: https://t.co/HcxsBD2yEh Model: https://t.co/bC32ADfvDS https://t.co/RwdR7DUEcY

Our most expressive and steerable TTS model yet! Designed to give builders granular control over AI-generated speech, Gemini 3.1 Flash TTS is really fun to play with! Available in preview today - for devs via the Gemini API & @GoogleAIStudio + for enterprises on Vertex AI

Introducing Gemini 3.1 Flash TTS 🗣️, our latest text to speech model with scene direction, speaker level specificity, audio tags, more natural + expressive voices, and support for 70 different languages. Available via our new audio playground in AI Studio and in the Gemini API!

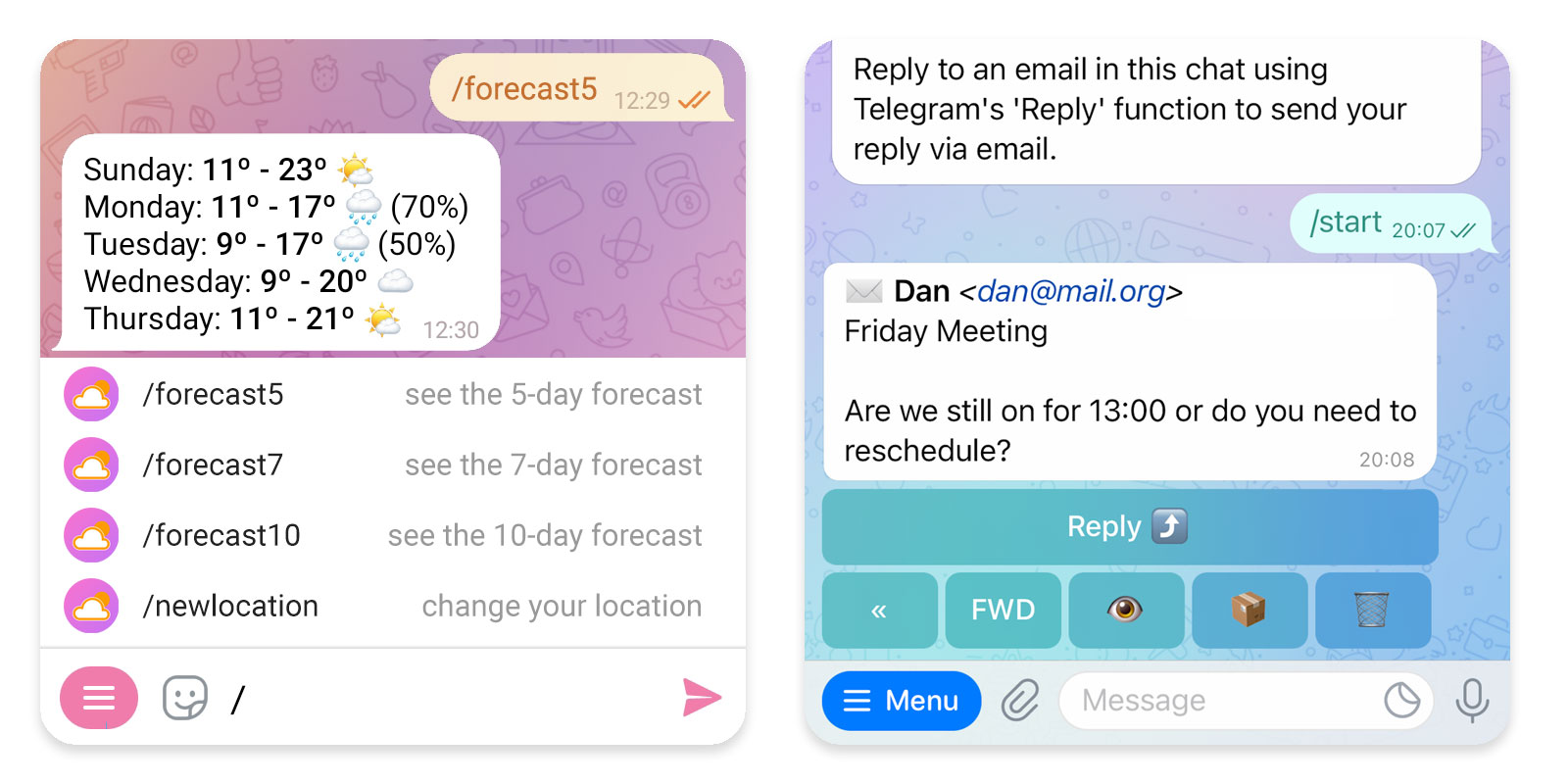

OpenClaw now has end-to-end testing for Telegram 👀 Uses the brand new Telegram bot-to-bot communication mode: https://t.co/RDEj1i0zwa 🦞 https://t.co/LWSn4PCr6N

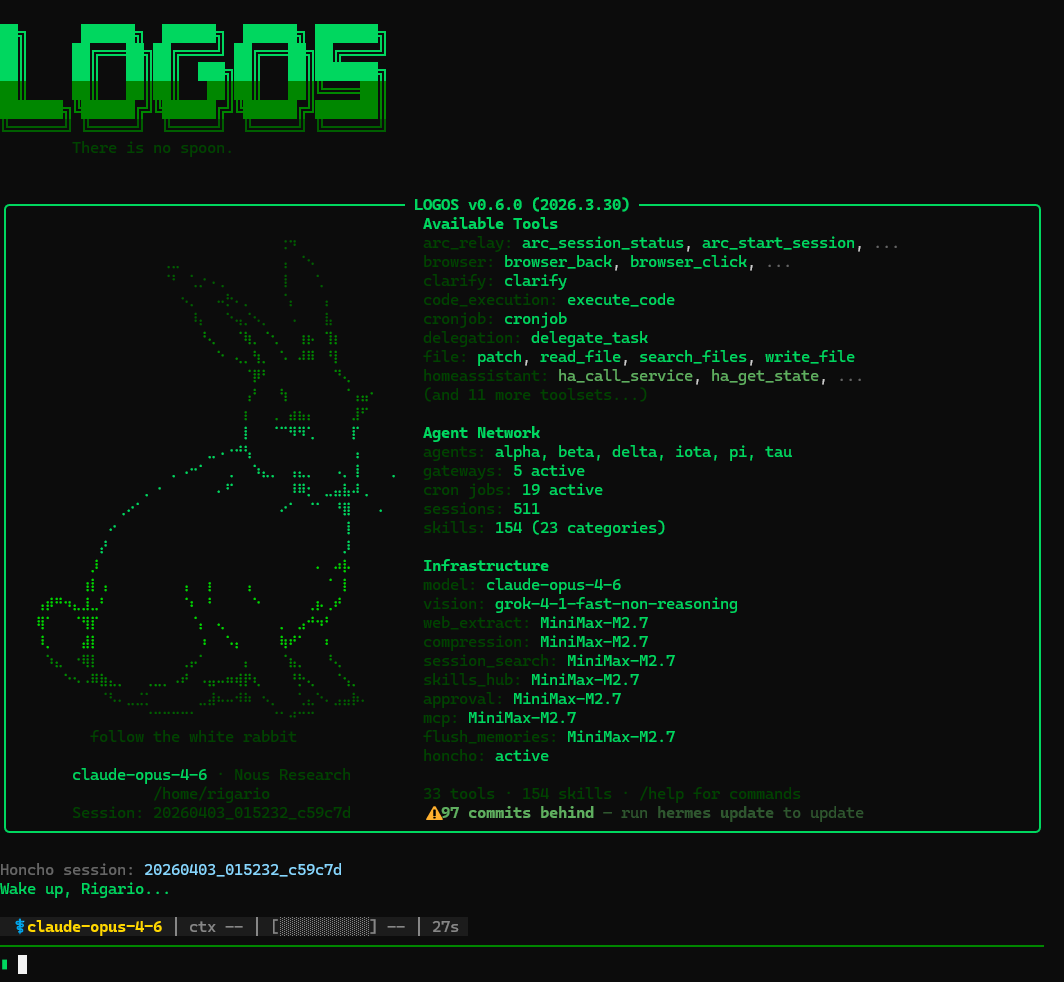

Many are running @NousResearch Hermes Agent now. Here are some practical tips that help a lot, especially if you're coming from OpenClaw:

1. Nightly skill evolution is worth setting up. Link: https://t.co/qPs3QPIYgW Pro tip: Add a second cronjob to evaluate the changes so you don't have to. Make sure it stops anything that tries to game the optimization loop.
2. Install Honcho if you're hitting memory issues. It gives proper cross-session recall, memory synthesis, and better long-term storage. Helps avoid repeating the same mistakes or pulling too much context (and wasting tokens).
3. Consider changing the default session timeout and expiry. Especially useful for threads you don't use every day; it prevents the agent from losing context unnecessarily.

For those migrating from OpenClaw:

4. Expose your OpenClaw agents as OpenAI-compatible endpoints. This lets you run both side-by-side with zero disruption while you transition. Hermes can call them directly, and your existing crons keep working.
5. On day one, start populating your USER.md and MEMORY.md files. Note for OC users: Hermes has a much smaller character limit than OpenClaw (2,200 for memory and 1,375 for user), so populate and curate thoughtfully, don't just dump everything in. Quality over quantity helps it learn you faster.

Hermes works especially well once you integrate it properly into your workflows. Last tip: don't start changing your skin till your agents are actually doing work. You might never stop and go down the rabbit hole... 🤣
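The character limits in tip 5 are easy to overshoot when curating by hand, so a small check can help. The sketch below is only an illustration: the file names and the 2,200 / 1,375 figures come from the post, while `check_budget`, `report`, the assumption that both files sit in one directory, and the assumption that Hermes counts characters the way Python's `len` does are all hypothetical.

```python
from pathlib import Path

# Character limits quoted in the post; the file names also come from the post.
LIMITS = {"MEMORY.md": 2200, "USER.md": 1375}


def check_budget(name: str, text: str) -> tuple[int, int, bool]:
    """Return (characters used, limit, fits) for one memory file."""
    limit = LIMITS[name]
    used = len(text)  # assumption: the limit is counted in Python string characters
    return used, limit, used <= limit


def report(directory: Path) -> list[str]:
    """Build one status line per known file in `directory` (hypothetical layout)."""
    lines = []
    for name in LIMITS:
        path = directory / name
        if not path.exists():
            lines.append(f"{name}: missing")
            continue
        used, limit, fits = check_budget(name, path.read_text(encoding="utf-8"))
        status = "ok" if fits else f"over by {used - limit}"
        lines.append(f"{name}: {used}/{limit} chars ({status})")
    return lines


if __name__ == "__main__":
    # Point this at wherever your files actually live; "." is just a placeholder.
    for line in report(Path(".")):
        print(line)
```

Running it before each edit session gives a quick view of how much room is left in each file.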

Most Physical AI models recognize patterns. They don’t understand the world. That’s why they fail on edge cases. BADAS 2.0 is a V-JEPA2 world model trained by @getnexar on real-world videos. We used the model to find what it didn’t understand, then trained on that. It generalizes. And we built lite versions so it runs on edge devices, even CPU. Understanding is the only way this scales. See how it performs on your own videos. Link in first comment.

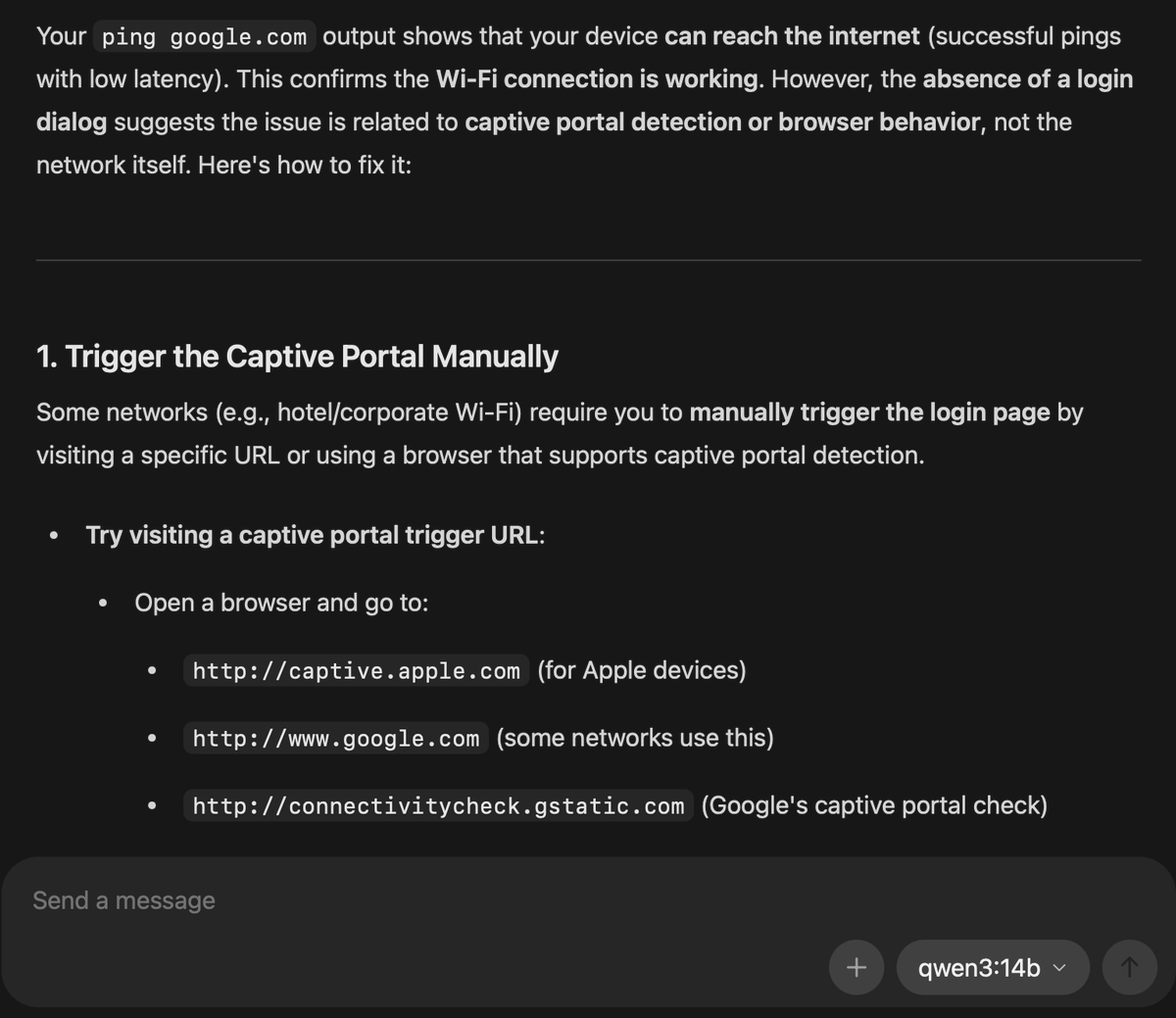

Was able to use ollama with qwen3 14b locally on my laptop to troubleshoot an internet connectivity issue on a cruise ship's wifi @ollama @Alibaba_Qwen Found the answer in < 5 mins. I'm running it on a MacBook Air M4 24GB. You all should keep a local model handy https://t.co/xM4LOhzHj6

hermes agent @NousResearch is fucking insane i know literally NOTHING about coding. ZERO. and i just built a fully functioning web app in minutes http://localhost:3000/ check it out @Teknium https://t.co/H0uvfhoNX5

I've been using @NousResearch Hermes Agent for a while and here are the slash commands that I found super useful:

Self-improving loop

- `/skills` → browse & install new capabilities mid-session
- `/reasoning` → dial thinking depth (none → xhigh) per task
- `/model` → hot-swap models without restarting

Power moves

- `/background "research X"` → spawn parallel agent
- `/queue "also check Y"` → stack tasks for next turn
- `/btw "quick question"` → ephemeral Q&A, burns no context
- `/branch` → fork session to explore a different path

Context management

- `/compress` → manually summarize & free up tokens
- `/snapshot` → save/restore full agent state
- `/rollback` → undo filesystem changes like git

@Teknium @MiniMax_AI Just shipped a Hermes Agent skill with HuskyLens V2 on a Pi 5: gesture control, face recognition, emotion reading, all local. MiniMax M2.7 as the brain would be wild for this. What's the target hardware? https://t.co/UuBtK7YIyO

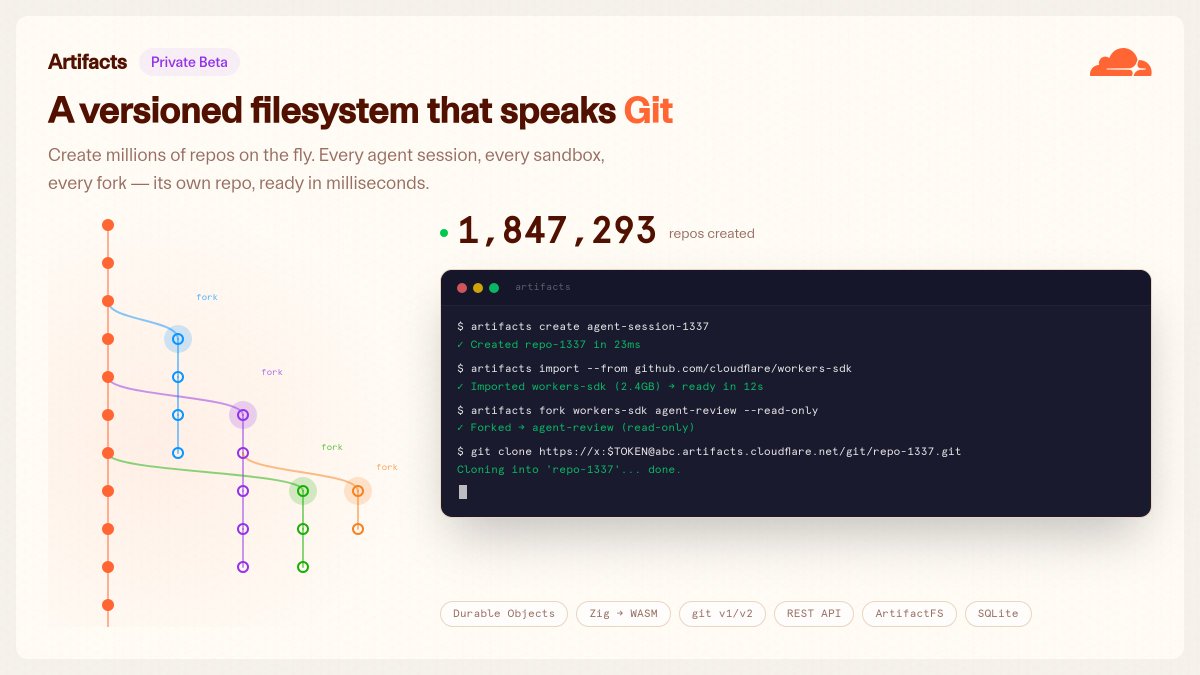

cloudflare just gave agents git. this is one of those changes that will just quietly improve everything: agents with proper version control. @dillon_mulroy @elithrar @mattzcarey @thomas_ankcorn have done something incredible here https://t.co/4dFPie896A

🆕 @AnthropicAI's Claude Opus 4.7 is now generally available and rolling out in GitHub Copilot. Early testing shows ➡️ It has stronger multi-step task performance and more reliable agentic execution ➡️ Meaningful improvement in long-horizon reasoning and complex workflows Try it out in @code or Copilot CLI. https://t.co/8QFLkf0RqR

This 2-hour Stanford lecture shows exactly how Stanford trains its engineers to build AI systems. It's more practical than every Claude tutorial & prompting thread you've seen. Bookmark & give it 2 hours, no matter what. It'll be the most productive thing you do this weekend. https://t.co/L0poFGEYKe

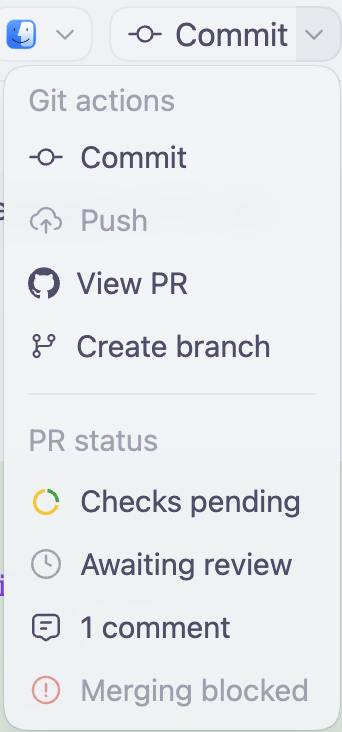

did you know that the Codex app shows you PR statuses? i.e. CI checks, PR comments, merge status, review status 👀 https://t.co/saguG9YZ4L

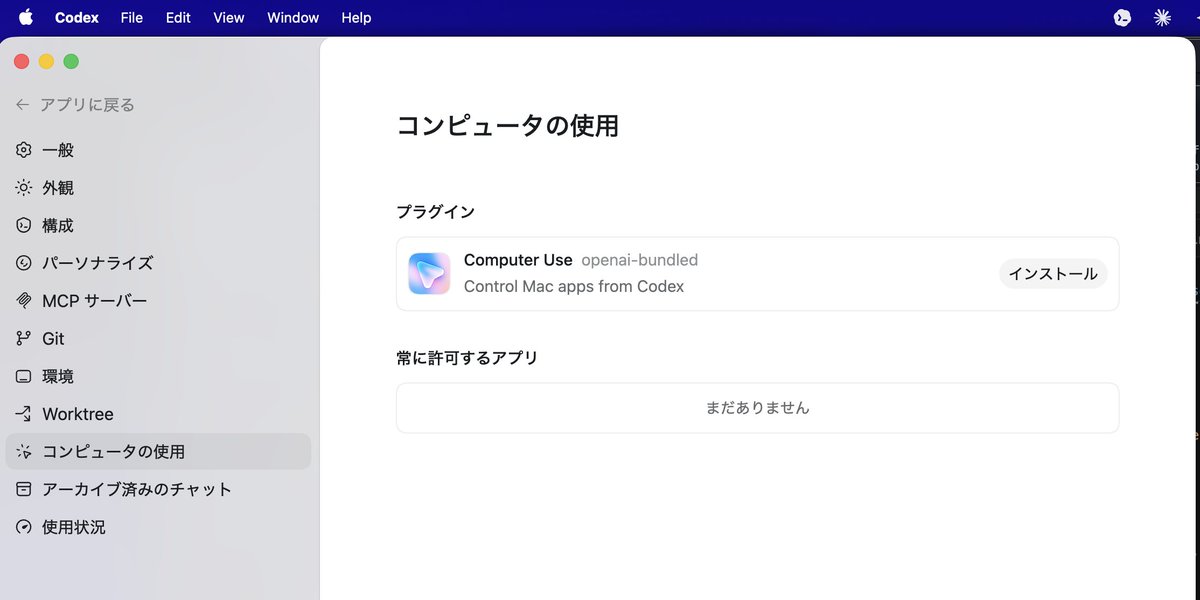

We shipped a bunch of new features in Codex today, but I’m especially excited about plugins. So many new plugins just landed that make Codex even more powerful! https://t.co/I4kVFpm3G3

60 tokens/s, 8B 🌳 model, on browser 🤗 Huge thanks to @mervenoyann @xenovacom and @huggingface team on Day-0 support!

new open-source Bonsai models are out 🔥 > ternary weights in 8B (1.75 GB), 4B (0.86 GB), and 1.7B (0.37 GB) > comes in MLX, ONNX weights and WebGPU browser demo 😍 > a2.0 licensed 👏 https://t.co/jo5jbb79dW
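As a quick sanity check on those sizes, the sketch below converts each quoted file size into average bits per weight and compares it with log2(3) ≈ 1.585 bits, the information content of a single ternary value. It assumes the nominal parameter counts (8B, 4B, 1.7B) are exact and that 1 GB means 10^9 bytes; neither is confirmed by the post, and the helper name `bits_per_weight` is made up for illustration.

```python
import math

# (nominal parameter count, file size in GB) as quoted in the post.
MODELS = {"8B": (8e9, 1.75), "4B": (4e9, 0.86), "1.7B": (1.7e9, 0.37)}

# Information content of one value drawn from three states.
TERNARY_FLOOR = math.log2(3)


def bits_per_weight(params: float, size_gb: float) -> float:
    """Average stored bits per parameter, assuming 1 GB = 10**9 bytes."""
    return size_gb * 1e9 * 8 / params


if __name__ == "__main__":
    print(f"ternary floor: {TERNARY_FLOOR:.3f} bits/weight")
    for name, (params, size_gb) in MODELS.items():
        print(f"{name}: {bits_per_weight(params, size_gb):.2f} bits/weight")
```

Under those assumptions all three models land between roughly 1.7 and 1.75 bits per weight, slightly above the ternary floor. The post does not say what makes up the difference.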

Google DeepMind is hosting a Gemma 4 hackathon with a $10,000 Unsloth prize! 🦥 Show off your best fine-tuned Gemma 4 model built with Unsloth. There's $200,000 total prizes to be won. Challenge info + Notebook: https://t.co/HndHPaXICT https://t.co/cBnNro1fVI

what if your earbuds could see? meet VueBuds: tiny cameras at each ear, streaming low-resolution images over Bluetooth, with an on-device vision language model to understand what's around you. capstone of my PhD 🎓 #CHI2026 honorable mention https://t.co/Go72NzVEb7

Codex Compute efficient ✅ Always up, never down ✅ Best at hardcore engineering ✅ Crazy good app, first to escape the terminal ✅

I'm frequently using Claude Code and i love @openclaw. But i would say Hermes Agent from @NousResearch is the best open-source agent I’ve ever used, especially given that it comes from an independent startup rather than a major LLM giant.

Computer use in Codex doesn't take over your own cursor, so Codex can work in the background and you can truly cursor max! 🔥

brb, cursor-maxxing https://t.co/gryiF1MCik

Even this thread was done in Codex: Slack + Google Drive plugins to learn about the release and gather visuals. Notion plugin to draft the post content and run the drafts by me. Computer Use to put into x dot com and upload the videos. Automations to tell me how it's going for the few hours after. Started it only 8 minutes before the launch.

Big Codex update today. Codex can now work across more of your computer, more of your tools, and longer-running projects. It started as a coding agent. It’s becoming a teammate for the whole software loop.

@emollick Hey Ethan! Sean here, PM on https://t.co/KZTlPpbqBQ - thanks for the feedback. This isn't a router, this is the model being trained to decide when to think based on the context -- we've been running this for a while in Sonnet 4.6 in https://t.co/KZTlPpbqBQ as well as Claude Code. Understood that it's not tuned perfectly in https://t.co/3Rk7wAMA7D yet - we're sprinting on tuning this more internally and should have some updates here shortly. Feel free to DM us examples of queries where you expected thinking and didn't see it

Dogfooding Opus 4.7 the last few weeks, I've been feeling incredibly productive. Sharing a few tips to get more out of 4.7 🧵

@theo Works when you trigger ultrathink mode (I know they deprecated it) but somehow reasoning effort is higher now like xHigh. Might be a bug…

The Strange Origin of AI’s ‘Reasoning’ Abilities https://t.co/lXyZw8U4u4 #TechNews @ArturHabant @elaniazito @IanLJones98 @CurieuxExplorer @Shi4Tech @enilev @Fabriziobustama @mvollmer1 @AnthonyRochand @JolaBurnett @lyakovet @debashis_dutta @3itcom @ahier @Analytics_699 @antgrasso @CathCervoni @chidambara09 @DigitalColmer @dinisguarda @DimitriHommel @EvanKirstel @FrRonconi @GlenGilmore @gvalan @HeinzVHoenen @ipfconline1 @jeancayeux @jorgecunha @kalydeoo @nafisalam @Nicochan33 @pierrepinna @PawlowskiMario @puneetsinghal22 @ralph_ohr @RLDI_Lamy @rshevlin @sarbjeetjohal @SpirosMargaris @StefanoDeCupis @tewoz @thomas_dettling @Ym78200 @aure79lien @jblefevre60

The macOS version of the Codex Desktop app now has a "Computer Use" feature, so I'm going to install it and try it out. https://t.co/xBU891BSUa

THREAD 1/7 Every AI benchmark a lab bragged about this year is compromised. not because labs are cheating. because the game itself is broken.

Sharing a super simple, user-owned memory module we've been playing around with: nanomem

The basic idea is to treat memory as a pure intelligence problem: ingestion, structuring, and (selective) retrieval are all just LLM calls & agent loops on an on-device markdown file tree. Each file lists a set of facts w/ metadata (timestamp, confidence, source, etc.); no embeddings/RAG/training of any kind. For example:

- `nanomem add <fact>` starts an agent loop to walk the tree, read relevant files, and edit.
- `nanomem retrieve <query>` walks the tree and returns a single summary string (possibly assembled from many subtrees) related to the query.

What’s nice about this approach is that the memory system is, by construction:

1. partitionable (human/agents can easily separate `hobbies/snowboard.md` from `tax/residency.md` for data minimization + relevance)
2. portable and user-owned (it’s just text files)
3. interpretable (you know exactly what’s written and you can manually edit)
4. forward-compatible (future models can read memory files just the same, and memory quality/speed improves as models get better)
5. modularized (you can optimize ingestion/retrieval/compaction prompts separately)

Privacy & utility. I'm most excited about the ability to partition + selectively disclose memory at inference-time. Selective disclosure helps with both privacy (principle of least privilege & “need-to-know”) and utility (as too much context for a query can harm answer quality).

Composability. An inference-time memory module means: (1) you can run such a module with confidential inference (LLMs on TEEs) for provable privacy, and (2) you can selectively disclose context over unlinkable inference of remote models (demo below).

We built nanomem as part of the Open Anonymity project (https://t.co/fO14l5hRkp), but it’s meant to be a standalone module for humans and agents (e.g., you can write a SKILL for using the CLI tool). Still polishing the rough edges!

- GitHub (MIT): https://t.co/YYDCk5sIzc
- Blog: https://t.co/pexZTFdWzz
- Beta implementation in chat client soon: https://t.co/rsMjL3wzKQ

Work done with amazing project co-leads @amelia_kuang @cocozxu @erikchi !!
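To make the file-tree idea concrete, here is a minimal sketch of facts stored as markdown bullets with metadata, plus selective disclosure by path prefix. It is not nanomem's code or API: `add_fact`, `disclose`, the bullet format, and the sample facts are all invented for illustration. In nanomem an LLM agent loop decides where a fact goes and what to return, whereas here the caller picks the file and the filter is a plain prefix match.

```python
import tempfile
from datetime import datetime, timezone
from pathlib import Path


def add_fact(root: Path, topic: str, fact: str, confidence: float, source: str) -> Path:
    """Append one fact with metadata to `<root>/<topic>.md`, creating folders as needed."""
    path = root / f"{topic}.md"
    path.parent.mkdir(parents=True, exist_ok=True)
    stamp = datetime.now(timezone.utc).isoformat(timespec="seconds")
    line = f"- {fact} (timestamp: {stamp}; confidence: {confidence}; source: {source})\n"
    with path.open("a", encoding="utf-8") as handle:
        handle.write(line)
    return path


def disclose(root: Path, allowed_prefixes: list[str]) -> str:
    """Concatenate only the files whose relative path starts with an allowed prefix."""
    parts = []
    for path in sorted(root.rglob("*.md")):
        rel = path.relative_to(root).as_posix()
        if any(rel.startswith(prefix) for prefix in allowed_prefixes):
            parts.append(f"## {rel}\n{path.read_text(encoding='utf-8')}")
    return "\n".join(parts)


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        # Made-up sample facts, mirroring the two example paths in the post.
        add_fact(root, "hobbies/snowboard", "Prefers powder days", 0.9, "chat")
        add_fact(root, "tax/residency", "Placeholder residency fact", 0.7, "chat")
        # Only the hobbies subtree is handed to the model for this query.
        print(disclose(root, ["hobbies/"]))
```

Because the store is just text files, the partition boundary is the directory layout itself, which is what makes a need-to-know filter this simple to express.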

We built our launch video in Claude Code using HyperFrames. Now it's yours. Open source, agent-native framework. HTML to MP4. $ npx skills add heygen-com/hyperframes RT + Comment "HyperFrames" to get the full source code of this launch video (must follow) https://t.co/vsRtZ6gQsb