Your curated collection of saved posts and media

BREAKING: Proof, a new product from @every. It’s a live collaborative document editor where humans and AI agents work together in the same doc. It's fast, free, and open source, available now at https://t.co/OZeW6Wf1Iq. It’s built from the ground up for the kinds of documents agents are increasingly writing: bug reports, PRDs, implementation plans, research briefs, copy audits, strategy docs, memos, and proposals. Why Proof? When everyone on your team is working with agents, there's suddenly a ton of AI-generated text flying around: planning docs, strategy memos, session recaps. But the current process for collaborating and iterating on agent-generated writing is…weirdly primitive. It mostly takes place in Markdown files on your laptop, which makes it reminiscent of document editing in 1999. Proof lets you leave .md files behind. What makes Proof different? - Proof is agent-native: Anything you can do in Proof, your agent can do just as easily. - Proof tracks provenance: A colored rail on the left side of every document tracks who wrote what. Green means human, purple means AI. - Proof is login-free and open source: This is because we want Proof to be your agent's favorite document editor. Check it out now, for free, no login required: https://t.co/NTVY3Nh8A6

OpenClaw sure started a revolution.

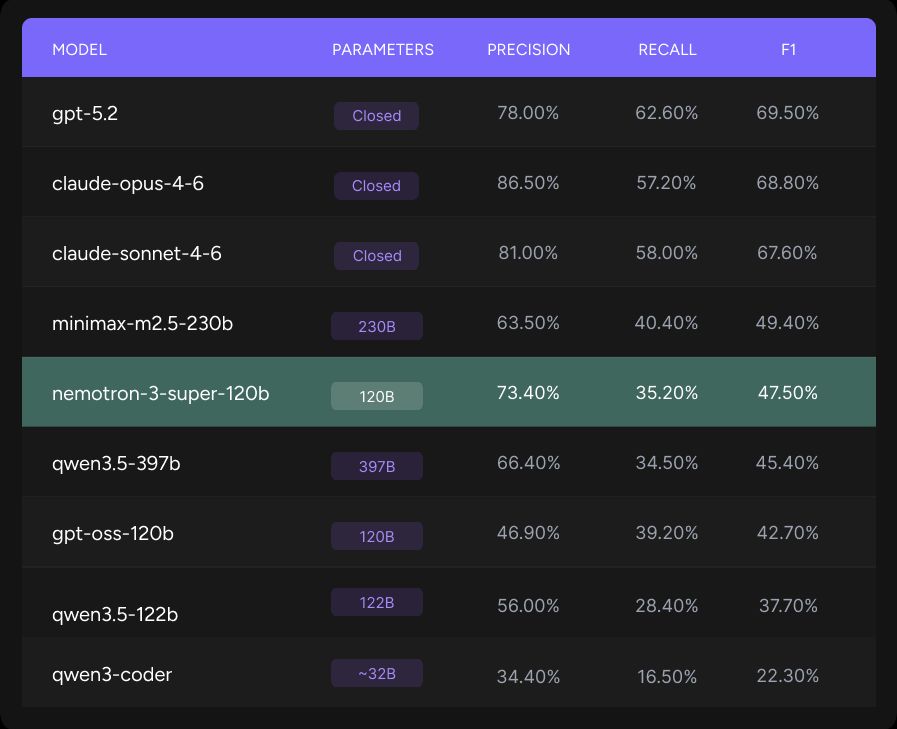

A new open‑source model from @nvidia, Nemotron 3 Super, is closing the gap. On Qodo’s code review benchmark, it hit 73.4% precision, the highest of any open model we tested, even outperforming others 2-3x its size. For teams in air‑gapped or regulated environments, this finally brings trustworthy, self‑hosted AI code review to production. See the full results: https://t.co/pjLpFhpdsM

Claude for Excel and Claude for PowerPoint now sync together seamlessly. When you’ve got more than one file open, Claude shares the full context of your conversation between them. Pull data from spreadsheets, build out tables, and update a deck — without re-explaining a step. https://t.co/mY8jrHj6Di

Introducing Computer for Enterprise. Computer runs multi-step workflows across research, coding, design, and deployment. It routes tasks across 20 specialized models and connects to 400+ applications. https://t.co/JuFJ5io30H

Today we're launching Hiro's MCP server. Now you can take all your financial data with you, wherever you agent. https://t.co/6pPU6w6eIp

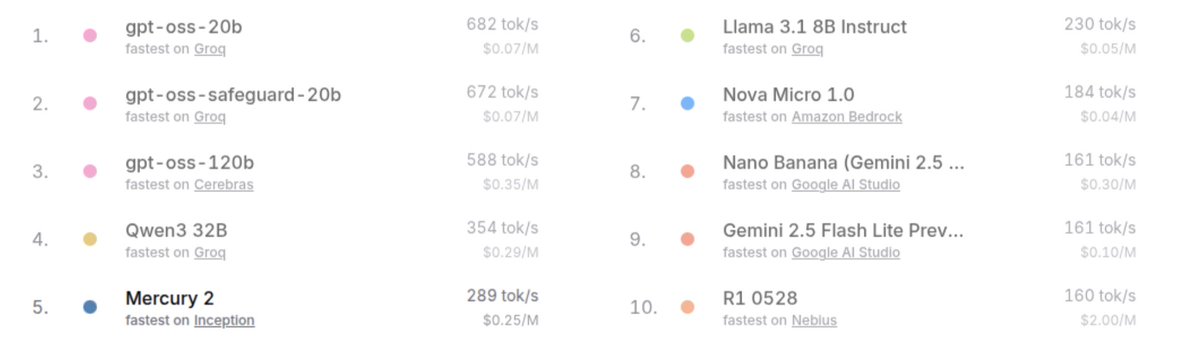

The speed of Mercury diffusion models is real. On real production OpenRouter traffic, they beat every other provider except Cerebras. And intelligence is also higher than the Groq/Cerebras models. Take a look: https://t.co/6oFu6fIIXv https://t.co/nRoIGkgKFS

@pamelafox https://t.co/npDalSrPog

How The Beach Boys must’ve felt watching The Beatles become associated with Charles Manson in the popular consciousness even though they used to hang with him and recorded one of his songs https://t.co/kBH28kMj1g

My boy telling me he thinks he’s gonna do the backwards kangol this spring https://t.co/IZRMpY9bH8

Introducing The Anthropic Institute, a new effort to advance the public conversation about powerful AI. https://t.co/M7vi9oRuYi

This is why I believe AI will be a “normal technology” — despite rapid scaling laws for specific technical benchmarks, real world usefulness and effectiveness are going to lag behind a lot

Three things about the METR graph: 1) It measures something real about coding ability but also not exactly what it claims to measure 2) Lots of other benchmarks correlate with it very highly & are increasing exponentially 3) AI remains jagged in key ways that are hard to measure

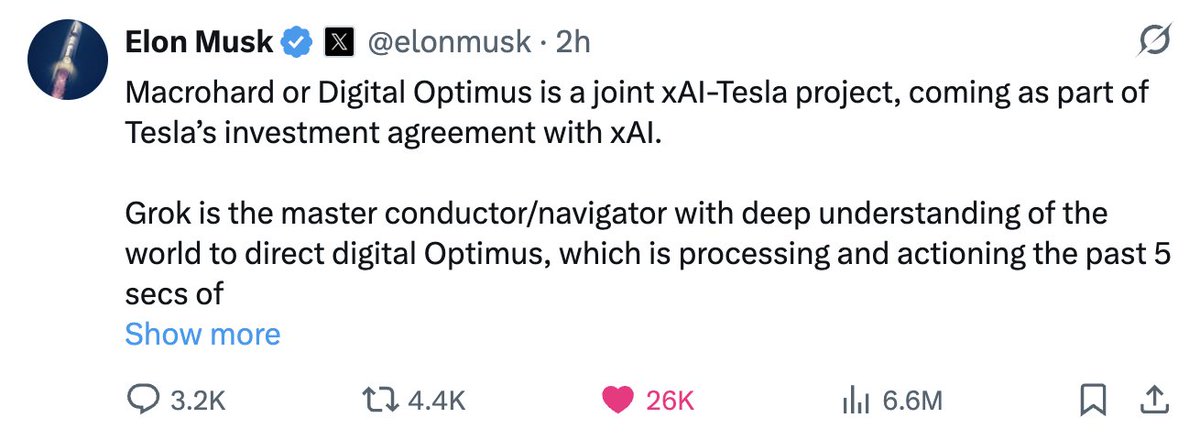

Digital Optimus, Optimus, and FSD. What’s going on here? A lot. xAI and Tesla’s AI team have been working on complementary, partially overlapping AI systems. Tesla AI has focused on vision-based intelligence for both FSD and Optimus, while xAI has focused on building a frontier model (an LLM) aimed at general intelligence. More recently, xAI has pushed deeper into what has been called Macrohard (aka Digital Optimus). Macrohard applies xAI’s intelligence layer, Grok, to human activity in the digital world, essentially operating computers the way a human would. The idea is that the AI can move through existing software environments and perform tasks that previously required direct human interaction. But Macrohard goes beyond simply navigating pixels. It is also about generating outcomes within those environments: producing results (pixels), not just interacting with interfaces. In that sense, Macrohard becomes a quasi vision-based AI system as well. Elon, effectively the technology head of both companies, sees the convergence. The decision now appears to be to combine efforts and focus each team on its relative strengths. Tesla’s vision-based AI team becomes central in integrating this perception stack with xAI’s “pure” intelligence model. The benefits are substantial. First, xAI advances its Digital Optimus concept, an AI capable of driving productivity directly in the digital world. At the same time, Tesla gains a powerful intelligence backbone: a reasoning engine layered on top of its vision systems. For Optimus, the implications are colossal. The robot is no longer just a physical-world machine driven by perception and action loops; it becomes a reasoning system as well. In other words, Optimus gains both embodiment and intelligence. That combination directly addresses the data patterns I discussed in my article this week. And that is a big deal. Of course, the impact extends to FSD as well. Many of the “last mile” problems in vehicle autonomy involve nuanced human intent and interaction. A reasoning layer makes those scenarios far more tractable. Talking to your car and having it genuinely understand what you want it to do becomes realistic. Further, Elon has suggested that this combined approach fits within an AI4 inference framework, delivering more intelligence per watt and reducing the need to wait for AI5-scale hardware to solve these larger problems. All in all, this is significant news. It may shift some timelines, and I suspect it may also explain why version 14.3 (the rumored “reasoning edition”) has not yet appeared. It may now be part of this broader combined effort.

🔥 Excited to launch a multi-year partnership bringing Fireworks AI to Microsoft Azure Foundry. At @FireworksAI_HQ, our mission is simple: make the world’s best AI models run faster, smarter, and more reliably at scale. Over the past year we’ve helped teams move generative AI from demos to real production systems: copilots, agents, and beyond. By bringing Fireworks directly onto @Azure, developers and enterprises can now run high-performance inference for leading open models inside the Azure ecosystem they already trust for security, compliance, and global scale. For us, this partnership is about one thing: removing the friction between great models and real products. Together, we provide a complete catalog of state‑of‑the‑art open models, all on a platform built and optimized to operate at production quality! More details 👉 https://t.co/SF9SjETWrt

Great news for devs deploying agents with open models. @FireworksAI_HQ now offers high-performance inference for leading models inside the Azure ecosystem. Pay attention, AI devs. This team is serving best-in-class fast inference for AI models like Kimi K2.5 & MiniMax M2.5.

i’m in, thanks @jxnlco. kissing the hand that approved my application https://t.co/aKn1rxTsWW

Let’s goooo 🔥 Thanks @jxnlco https://t.co/KHedoPWwoq

codex for oss credits: we're doing some weekly rollouts, so if you have not gotten credits yet, please be patient!

I had to build out way more fraud checking than I had anticipated, and I'm getting rate limited by GitHub

I'm in!! 😁😁 Thanks a lot @jxnlco https://t.co/7WBAX1pVCM