@pbeisel

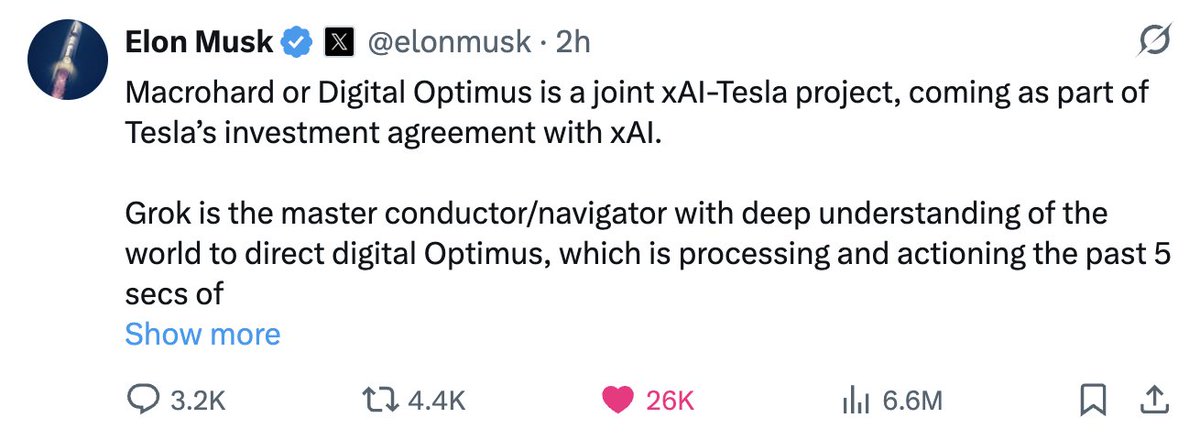

Digital Optimus, Optimus, and FSD

What’s going on here? A lot.

xAI and Tesla’s AI team have been working on complementary, partially overlapping AI systems. Tesla AI has focused on vision-based intelligence for both FSD and Optimus. xAI has focused on building a frontier model (an LLM) aimed at general intelligence.

More recently, xAI has pushed deeper into what has been called Macrohard (aka Digital Optimus). Macrohard applies xAI’s intelligence layer, Grok, to human activity in the digital world, essentially operating computers the way a human would. The idea is that the AI can move through existing software environments and perform tasks that previously required direct human interaction. But Macrohard goes beyond simply navigating pixels. It is also about generating outcomes within those environments: producing results (pixels), not just interacting with interfaces. In that sense, Macrohard is partly a vision-based AI system as well.

Elon, effectively the technology head of both companies, sees the convergence. The decision now appears to be to combine efforts and focus each team on its relative strengths. Tesla’s vision-based AI team becomes central to integrating its perception stack with xAI’s “pure” intelligence model.

The benefits are substantial. First, xAI advances its Digital Optimus concept: an AI capable of driving productivity directly in the digital world. At the same time, Tesla gains a powerful intelligence backbone, a reasoning engine layered on top of its vision systems.

For Optimus, the implications are colossal. The robot is no longer just a physical-world machine driven by perception and action loops; it becomes a reasoning system as well. In other words, Optimus gains both embodiment and intelligence. That combination directly addresses the data patterns I discussed in my article this week. And that is a big deal.

Of course, the impact extends to FSD as well.
Many of the “last mile” problems in vehicle autonomy involve nuanced human intent and interaction. A reasoning layer makes those scenarios far more tractable. Talking to your car and having it genuinely understand what you want it to do becomes realistic. Further, Elon has suggested that this combined approach fits within an AI4 inference framework, delivering more intelligence per watt and reducing the need to wait for AI5-scale hardware to solve these larger problems.

All in all, this is significant news. It may shift some timelines, and I suspect it may also explain why version 14.3 (the rumored “reasoning edition”) has not yet appeared. It may now be part of this broader combined effort.