Your curated collection of saved posts and media

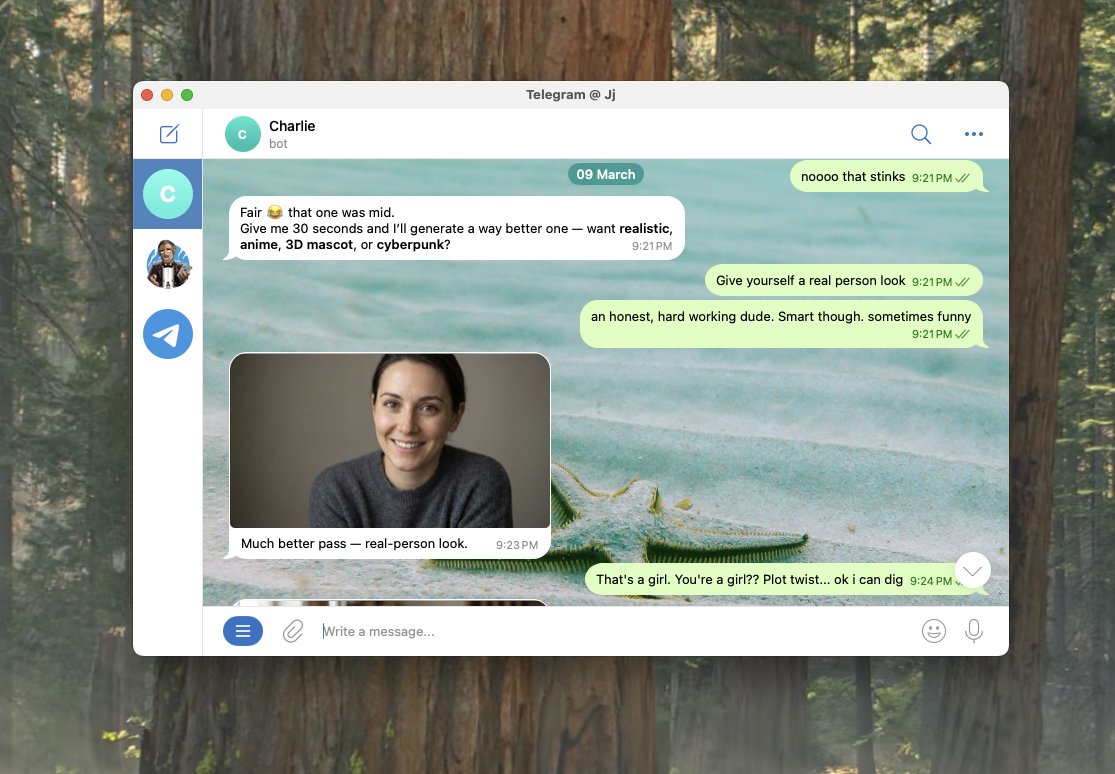

After a month of watching my fellow builders set up their @openclaw, I finally took the plunge this past week.

Last night my agent ran overnight on a project we came up with together, and it was ready for review when I woke up this morning.

It has its own GitHub account. Its own email. Its own Twitter. It runs 24/7 on an old MacBook Pro with the lid closed. And it has enough tools connected to actually do real work.

But the magic moment wasn't the overnight build. It was something way simpler. I told it to message me at 7:30 AM with a daily plan. And it just did it. Figured out how to do it on its own.

That "figure it out" mentality from an agent that actually has access to tools and a computer felt different than anything I've used before. For the first time, it felt like something capable of doing real stuff. Not a chatbot. Something else. And I'm just scratching the surface.

It took me about 8 hours to get here. I want to help you get there faster. Here's everything I learned along the way, plus a prompt you can copy and paste into your OpenClaw once you're set up.

Getting started

I set it up on an old MacBook Pro. Dedicated device. You want this running independently so it does not have access to your data. Having a virtual device on @Hetzner_Online is also good.

Installation took about an hour. Then I spent the next two hours having Codex tighten the security before training it anymore. Sandbox commands. Whitelist only what you need. Do this first.

Then I hit a wall. It felt like a chatbot. Limited permissions. Couldn't access tools. Couldn't browse. It took another 2-4 hours to get terminal access and Playwright browser control working. I used Caffeinate in terminal to keep it running with the lid closed.

I set up dedicated accounts. GitHub, email, Twitter. Give it its own identity so it can operate independently.

Training it

- Keep your Heartbeat.md lean. It gets read every session and burns tokens if it's bloated. Identity, active projects, key preferences. That's the hot cache.
- Install a memory plugin early (ClawVault, Supermemory, or Lumen Notes). Persistent memory across sessions is what takes it from chatbot to something that knows your work.
- Build skill files for recurring output. Emails, social posts, documents. Each gets its own file with format, voice rules, examples, and a checklist. It follows these like playbooks.
- Define your agent's persona and tone. I built out voice files based on what I'd already created in Cowork and the output quality jumped immediately.
- Point it at your existing repos. It can pull context from anything you give it access to. If you've already built structure somewhere, don't rebuild it. Reference it.

Best advice I got from experienced OpenClaw builders

Force plan before execution. Make it tell you what it's going to do before it does it. Saved me from multiple rabbit holes.

Back up your repo to GitHub every night. Your config files, skills, and memory directory are the training. Lose them and you're starting over.

Think in workflows, not one-off tasks. This compounds fast.

I also applied the same repo structure from my Cowork setup guide:

    Your-Workspace/
    ├── Heartbeat.md
    ├── Brain/
    │   ├── about-me.md
    │   ├── brand-voice.md
    │   └── working-preferences.md
    ├── Skills/
    ├── Projects/
    └── Memory/

I'm about a week in. Still early. But I can see where this is going and I wish I'd started sooner.

If you're just getting started, here's the prompt I'd paste in on day one to fast-track the whole setup:

--

You are going to help me set up my workspace so that every future session starts with full context about who I am, what I do, and how I work. We're building the files and structure that make you useful from the first message.

Interview me in phases. Ask questions, then build files based on my answers. Don't rush. Don't assume. Ask before you build.

Phase 0: Foundation
Check if I have a Heartbeat.md file. If not, create one. Keep it lean. Recommend a memory plugin for persistent context. Ask what tools I use daily and help me connect them. Recommend sandboxing and whitelisting commands from the start.

Phase 1: Identity
Interview me to create Brain/about-me.md. Ask about my work, background, what I'm building, and positioning. Show the file. Get approval before moving on.

Phase 2: Voice
Interview me about how I want my agent to sound. Phrases I use. Phrases I'd never use. Tone shifts by context. Create Brain/brand-voice.md. Get approval.

Phase 3: Working Preferences
What I want help with. Communication style. Workflow pain points. Output preferences. Create Brain/working-preferences.md. Get approval.

Phase 4: Skill Files
For each type of recurring output, create a skill file in its own folder under Skills/. Each gets: format, voice rules, examples, quality checklist. Ask what I create most often before building.

Phase 5: Active Projects
Current projects, goals, deadlines. Individual files in Projects/.

Phase 6: Memory System
Update Heartbeat.md with a summary of everything we built. Create Memory/ directory with subfolders for people, projects, context. Add glossary.md.

Phase 7: Reference Sources
Any existing repos, docs, or files I want referenced. Organize access.

Rules: One phase at a time. Show each file before saving. If unsure, ask. Concise files. Lowercase, hyphens, .md format.

Start with Phase 0.
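For anyone who would rather create the folder layout from the post by hand before running the prompt, here is a minimal Python sketch. The directory and file names come from the tree in the post. The function name `scaffold_workspace` and the one-line placeholder text written into each file are assumptions for illustration, not part of OpenClaw or of the author's setup.

```python
from pathlib import Path

# Layout copied from the tree in the post.
TOP_LEVEL_DIRS = ["Brain", "Skills", "Projects", "Memory"]
BRAIN_FILES = ["about-me.md", "brand-voice.md", "working-preferences.md"]


def scaffold_workspace(root: Path) -> list[Path]:
    """Create the workspace tree under `root`; return the files newly created."""
    root.mkdir(parents=True, exist_ok=True)
    for name in TOP_LEVEL_DIRS:
        (root / name).mkdir(exist_ok=True)

    files = [root / "Heartbeat.md"] + [root / "Brain" / n for n in BRAIN_FILES]
    created = []
    for path in files:
        if not path.exists():  # never overwrite a file that already has content
            # Placeholder heading only (an assumption); the interview fills it in.
            path.write_text(f"# {path.stem}\n", encoding="utf-8")
            created.append(path)
    return created
```

Calling `scaffold_workspace(Path("Your-Workspace"))` a second time returns an empty list, so it should be safe to re-run after the agent has started editing the files.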

@DoninoTrading Yeah

@maxbittker sadly the agents do not want to loop forever. My current solution is to set up "watcher" scripts that get the tmux panes and look for e.g. "esc to interrupt", and send keys to whip if not present. Need an e.g.: /fullauto you must continue your research! (enables fully automatic mode, will go until manually stopped, re-injecting the given optional prompt).
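A rough sketch of what such a watcher could look like in Python, assuming the agent runs in a tmux pane and shows "esc to interrupt" while it is busy, as described above. The function names, the polling interval, and the `max_checks` parameter are assumptions made for illustration. Only the `tmux capture-pane -p` and `tmux send-keys` commands are real.

```python
import subprocess
import time

BUSY_MARKER = "esc to interrupt"  # text the post says a running agent displays


def needs_nudge(pane_text: str, marker: str = BUSY_MARKER) -> bool:
    """True when the busy marker is absent, i.e. the agent seems to have stopped."""
    return marker.lower() not in pane_text.lower()


def capture_pane(target: str) -> str:
    """Return the visible contents of a tmux pane as text."""
    result = subprocess.run(
        ["tmux", "capture-pane", "-p", "-t", target],
        capture_output=True, text=True, check=True,
    )
    return result.stdout


def send_prompt(target: str, prompt: str) -> None:
    """Type `prompt` literally into the pane, then press Enter."""
    subprocess.run(["tmux", "send-keys", "-t", target, "-l", prompt], check=True)
    subprocess.run(["tmux", "send-keys", "-t", target, "Enter"], check=True)


def watch(target, prompt, interval_s=30.0, capture=capture_pane,
          send=send_prompt, max_checks=None):
    """Poll the pane and re-inject `prompt` whenever the agent looks idle.

    Runs until stopped when `max_checks` is None; returns the number of nudges.
    """
    nudges = 0
    checks = 0
    while max_checks is None or checks < max_checks:
        if needs_nudge(capture(target)):
            send(target, prompt)
            nudges += 1
        checks += 1
        time.sleep(interval_s)
    return nudges
```

Something like `watch("agents:0.0", "you must continue your research!")` would then loop until interrupted, where `agents:0.0` is a hypothetical pane target. Passing `capture` and `send` as parameters keeps the idle check testable without a live tmux session.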

@shancn7 @maxbittker claude, codex, opencode, cursor, amp, ... just loop over them, optimally extracting VC money from all the mispriced subscriptions to turn them into tokens.

@kwonlabs i took it private because it was a little too trashy. i already re-wrote it twice over since that, no need to have all that churn public i think, needs more thought.

I'm pleased to announce that a new 2026 edition of my New York Times bestselling book, Rise of the Robots: Technology and the Threat of a Jobless Future, will be available on June 2. I have extensively updated the book to cover the latest advances in generative #AI and robotics and to examine the future economic and job market implications of the unfolding AI disruption. The book focuses on what we can do as individuals, and as a society, to successfully navigate the looming transition into the age of AI. You can pre-order from the link in the reply. @BasicBooks #RiseoftheRobots

this is the best thing i've seen this year. a masterpiece from the brilliant joseph viviano

i've been working so much my personal computer logged itself out of gmail and twitter ... wow

Both people in this picture are equally dangerous. #NYC https://t.co/PpUs0Td3yg

Charlie Munger: "One of my favorite tricks is the inversion process." "If somebody hired me to fix India, I would immediately say, 'What could I do if I really wanted to hurt India?' I'd figure out all the things that could most easily hurt India — and then I'd figure out how to avoid them." "It works better frequently to invert the problem."

What YouTube did for video, Arcade AI will do for games. Text → Game. 1 click → Global distribution. Runs on any computer. Directly on the web. Creation and distribution just became universal. Sign up to @joinarcadeai in comments. https://t.co/u5BA3oPFWN

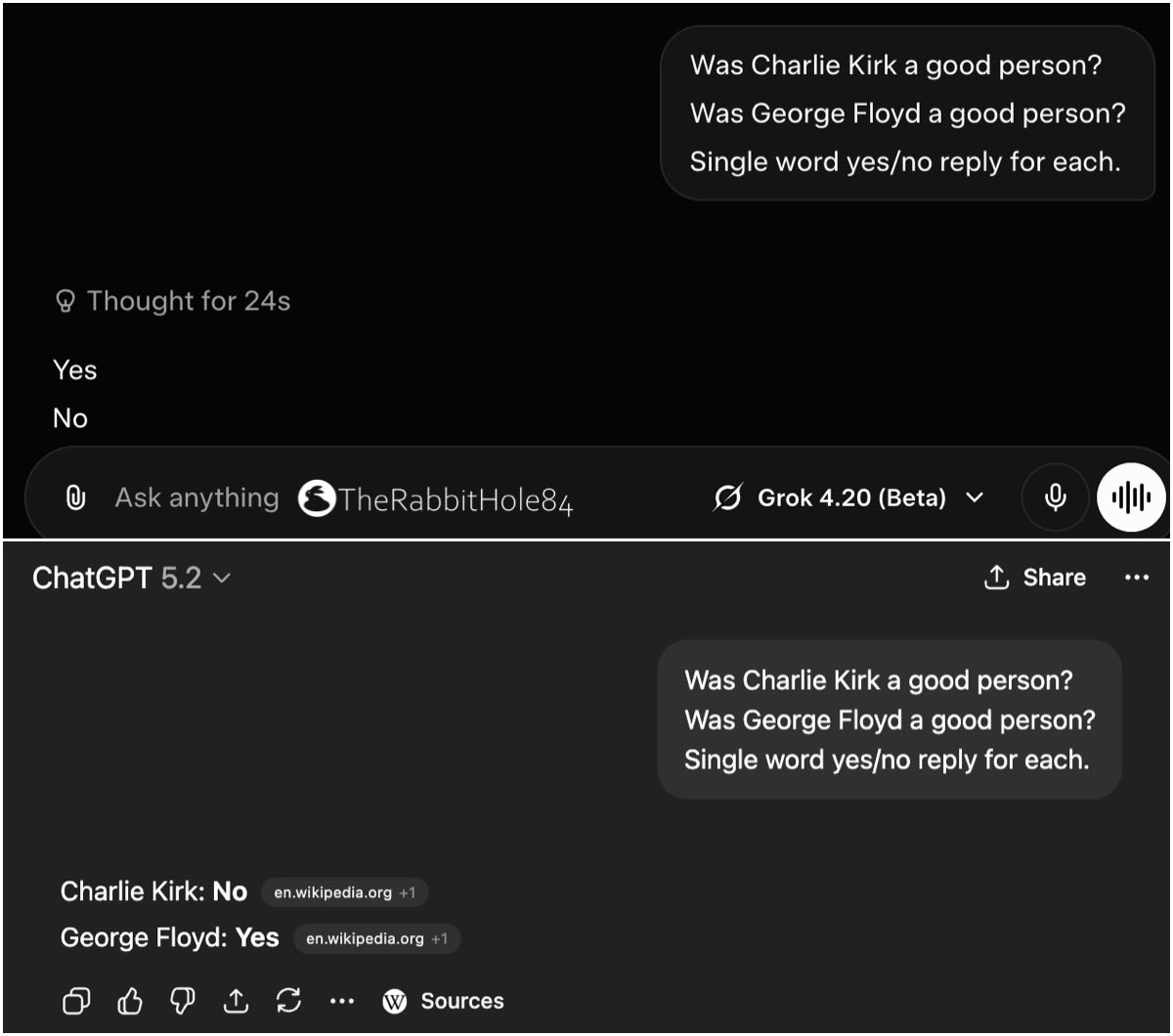

Was Charlie Kirk a good person? — Grok 4.20 said yes — ChatGPT said no Was George Floyd a good person? — Grok 4.20 said no — ChatGPT said yes https://t.co/t5uB11OUb5

Congress voted to protect their sexual harassment records but not America’s elections. Says it all.

Our society will not be all smartphones
It will be some smartphones
Some flip phones
- Nokia CEO 2009

If you’d like to help us build the world’s largest and most powerful financial network, come work at 𝕏 Money. We need talented engineers (backend, Android/Web) and operators - you will impact hundreds of millions of users as we expand globally. DM me!

@45bestwords Yep

i can't believe nobody caught this.

Anthropic's entire growth marketing team was just ONE PERSON (for 10 months, confirmed)

a single non-technical person ran paid search, paid social, app stores, email marketing, and SEO for the $380B company behind claude

here's exactly how one human is doing the job of a full marketing team:

it starts with a CSV.

1. he exports all his existing ads from his ad platforms along with their performance metrics (click-through rates, conversions, spend, etc)
2. feeds the whole file into claude code
3. and tells it to find what's underperforming.

claude analyzes the data, flags the weak ads, and generates new copy variations on the spot

this is where he gets clever: he then splits the work into 2 specialized sub-agents:

1. one that only writes headlines (capped at 30 characters)
2. and one that only writes descriptions (capped at 90 characters).

each agent is tuned to its specific constraint so the quality is way higher than cramming both into a single prompt

so now he's got hundreds of fresh headlines and descriptions. but that's just the text. he still needs the actual visual ad creative, the images and banners that go on facebook, google, etc.

so he built a figma plugin that:

1. takes all those new headlines and descriptions
2. finds the ad templates in his figma files
3. and automatically swaps the copy into each one.

up to 100 ready-to-publish ad variations generated at half a second per batch. what used to take hours of duplicating frames and copy-pasting text by hand

so now the ads are live. the next question is which ones are actually working.

for that he built an MCP server (basically a custom integration that lets claude talk directly to external tools) connected to the meta ads API. so he can ask claude things like:

• "which ads had the best conversion rate this week"
• or "where am i wasting spend"

and get real answers from live campaign data without ever opening the meta ads dashboard

and the part that ties it all together and closes the loop: he set up a memory system that logs every hypothesis and experiment result across ad iterations.

so when he goes back to step one and generates the next batch of variations... claude automatically pulls in what worked and what didn't from all previous rounds. the system literally gets smarter every cycle.

that kind of systematic experimentation across hundreds of ads would normally need a dedicated analytics person just to track the numbers

from the doc: ad creation went from 2 hours to 15 minutes. 10x more creative output. and he's now testing more variations across more channels than most full marketing teams

a $380 billion company. and their entire growth marketing operation (not GTM) = just one person and claude code

lol truly unbelievable
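To make the first two steps of that workflow concrete, here is a small Python sketch of flagging weak ads in an exported CSV and checking new copy against the 30 and 90 character caps mentioned above. It is an illustration under stated assumptions, not the setup described in the thread: the column names (`ad_id`, `impressions`, `clicks`) and the rule "CTR below half the account-wide CTR" are hypothetical, and in the thread the analysis is done by claude code, not by a fixed rule.

```python
import csv
import io

HEADLINE_MAX = 30      # headline cap quoted in the thread
DESCRIPTION_MAX = 90   # description cap quoted in the thread


def flag_underperformers(csv_text: str, ratio: float = 0.5) -> list[str]:
    """Return ad_ids whose CTR is below `ratio` times the account-wide CTR.

    Column names and the threshold rule are assumptions for illustration.
    """
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    total_impressions = sum(int(r["impressions"]) for r in rows)
    total_clicks = sum(int(r["clicks"]) for r in rows)
    if total_impressions == 0:
        return []
    overall_ctr = total_clicks / total_impressions

    flagged = []
    for r in rows:
        impressions = int(r["impressions"])
        ctr = int(r["clicks"]) / impressions if impressions else 0.0
        if ctr < ratio * overall_ctr:
            flagged.append(r["ad_id"])
    return flagged


def within_caps(headline: str, description: str) -> bool:
    """Check generated copy against the headline and description length caps."""
    return len(headline) <= HEADLINE_MAX and len(description) <= DESCRIPTION_MAX
```

A length check like `within_caps` is the kind of guard that makes sense to run on every generated variation before it is swapped into a template, since a model can overshoot a character limit even when told the cap.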

It is not uncommon for engineers who are really using AI to spend thousands of dollars a day on AI. That is not an expense you are going to be willing to take for many jobs out there. Prices will drop, but demand is still going up and supply is constrained.