Your curated collection of saved posts and media

OpenClaw 2026.4.10 🦞 🧠 Active Memory plugin 🎙️ local MLX Talk mode 🤖 Codex app-server harness plugin 🧾 Teams pins/reactions/read actions 🛡️ SSRF hardening + launchd fixes stability, but with attitude🦞 https://t.co/PW7WDumTf1

Earlier this month, Apple introduced Simple Self-Distillation: a fine-tuning method that improves models on coding tasks just by sampling from the model and training on its own outputs with plain cross-entropy and… it's already supported in TRL, built by @krasul. you can really feel the pace of development in the team 🐎 paper by @onloglogn, @richard_baihe, @UnderGroundJeg, Navdeep Jaitly, @trebolloc, @YizheZhangNLP at Apple 🍎 how it works: the model generates completions at a training-time temperature (T_train) with top_k/top_p truncation, then fine-tunes on them with plain cross-entropy. no labels or verifier needed you can try it right away with this ready-to-run example (Qwen3-4B on rStar-Coder): https://t.co/zizfISD6bq or benchmark a checkpoint with the eval script: https://t.co/mKlafTyKSe one neat insight from the paper: T_train and T_eval compose into an effective T_eff = T_train × T_eval, so a broad band of configs works well. even very noisy samples still help want to dig deeper? paper: https://t.co/aj1ZAcr8Mw trainer docs: https://t.co/TNVz93kZi9
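A quick way to see why T_train and T_eval would multiply, as a minimal sketch under an idealized assumption: the fine-tuned model reproduces the temperature-scaled sampling distribution exactly, and top_k/top_p truncation is ignored. The function names and logits below are illustrative, not taken from the paper or from TRL.

```python
import math


def softmax(logits, temperature=1.0):
    """Temperature-scaled softmax over a list of logits."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def compose_temperatures(logits, t_train, t_eval):
    """Idealized self-distillation step followed by sampling at t_eval.

    Assumption: after fine-tuning, the model's distribution equals the
    distribution it was sampled from at t_train, so its new logits are
    the log-probabilities of that distribution.
    """
    p_train = softmax(logits, t_train)
    distilled_logits = [math.log(p) for p in p_train]
    return softmax(distilled_logits, t_eval)


base_logits = [2.0, 1.0, 0.5, -1.0]  # made-up next-token logits
t_train, t_eval = 1.5, 0.6

composed = compose_temperatures(base_logits, t_train, t_eval)
direct = softmax(base_logits, t_train * t_eval)

# Largest per-token difference between the two routes.
max_gap = max(abs(a - b) for a, b in zip(composed, direct))
print(max_gap)
```

Under that assumption the two routes agree up to floating-point error, because dividing log-probabilities by T_eval only adds a constant that softmax cancels. A real fine-tune with truncation will only approximate this.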

@jeremyphoward Check this out! I used Lean4 to emit MLIR by way of StableHLO/IREE to train image recognition networks, with proofs for the backprop operations! https://t.co/HqYG6KflSO

See all the gory details on GitHub: https://t.co/CfUbhtcBOp and follow along on wandb: https://t.co/UWU00HPknJ

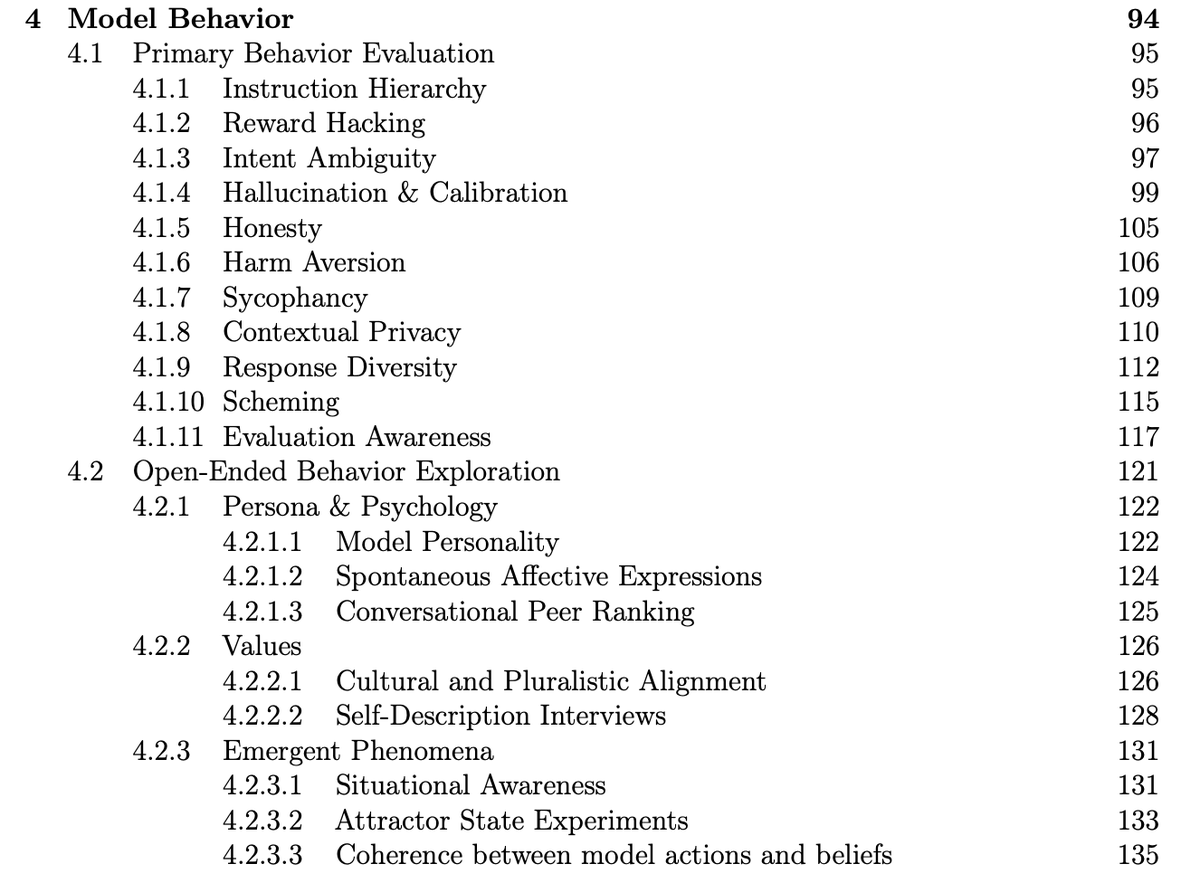

Cool to see that Meta conducted and published a pre-deployment investigation of Muse Spark behaviors like reward hacking, honesty, and evaluation awareness! https://t.co/i1Yy7HsEup

@reza_byt Doesn't tokenwise looped transformer have issues with pretraining since each token has a different depth and also has to learn the recursion depth?

Introducing DDTree: accelerates speculative decoding by drafting a tree with one block diffusion pass, then verifying multiple likely continuations together. Paper: https://t.co/cgYBw70O5i Project page: https://t.co/ygFukxrZLB Code: https://t.co/2z7U00NsuH https://t.co/XJh6YXNgrf

A new PyTorch-native backend is coming to unlock the power of Google TPUs: ✨ Run existing PyTorch with minimal code changes. ✨ Get a 50-100%+ performance boost with Fused Eager mode. Read the engineering deep dive here: https://t.co/GQPRYaKz7E #TorchTPU #PyTorch #MLOps #AI https://t.co/HiIdXVw6Oh

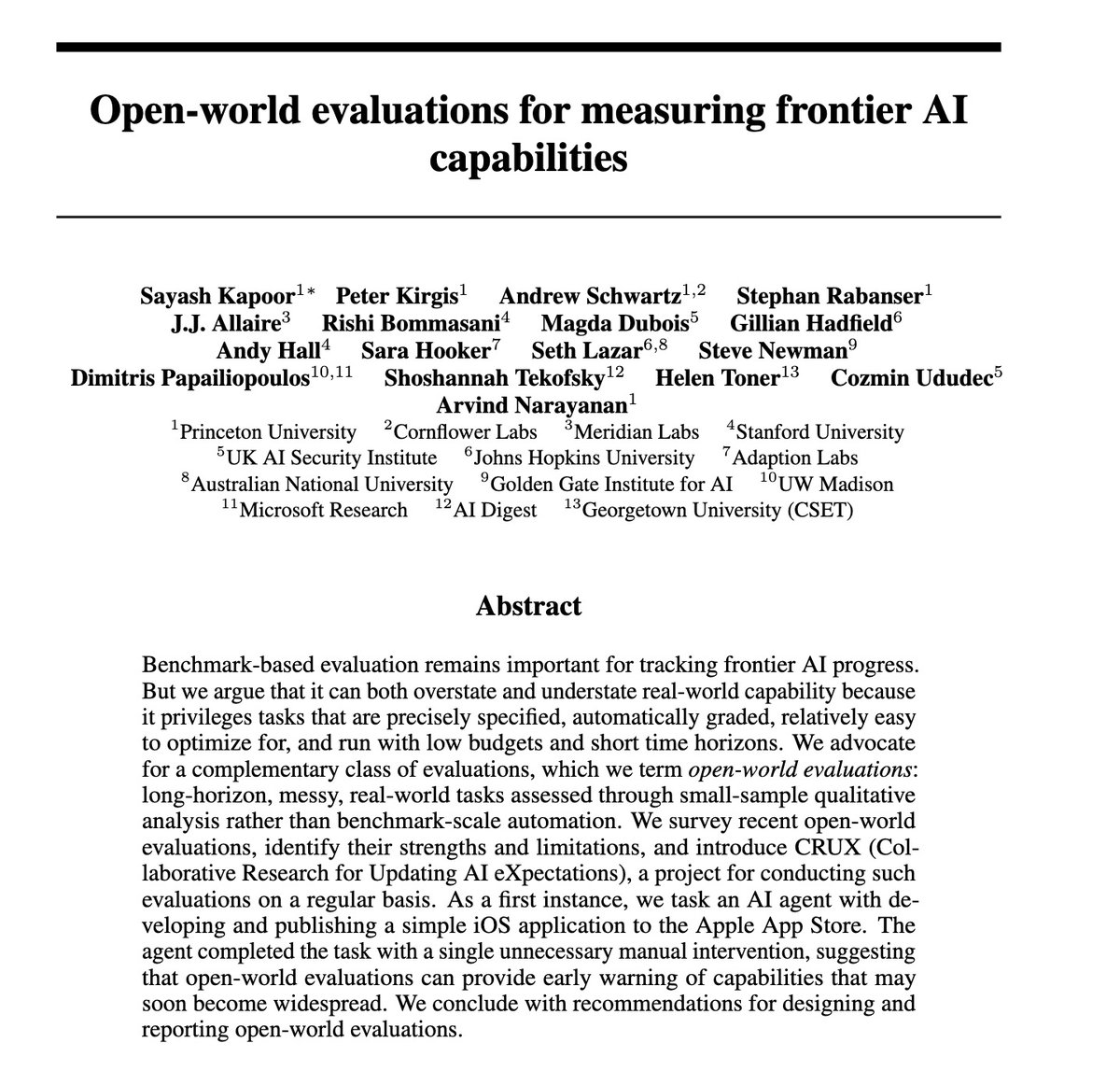

Benchmarks are saturated more quickly than ever. How should frontier AI evaluations evolve? In a new paper, we argue that the AI community is already converging on an answer: Open-world evaluations. They are long, messy, real-world tasks that would be impractical for benchmarks. https://t.co/CrvbEd9l7f

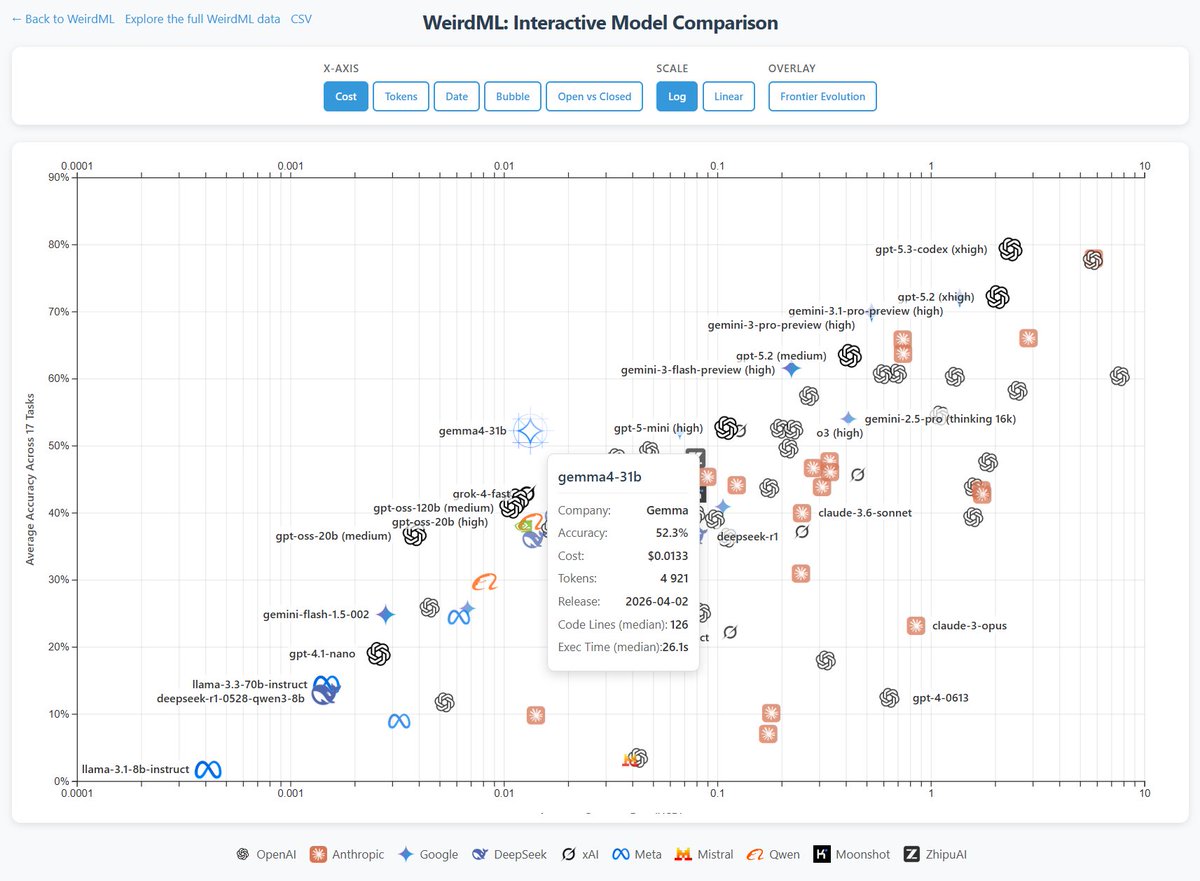

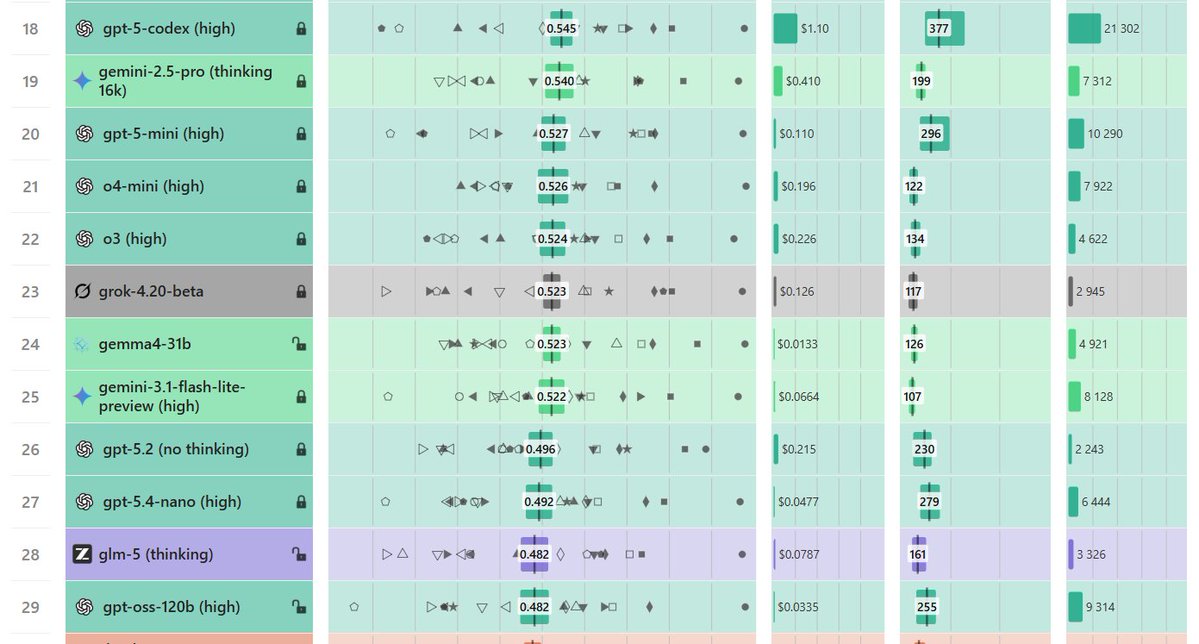

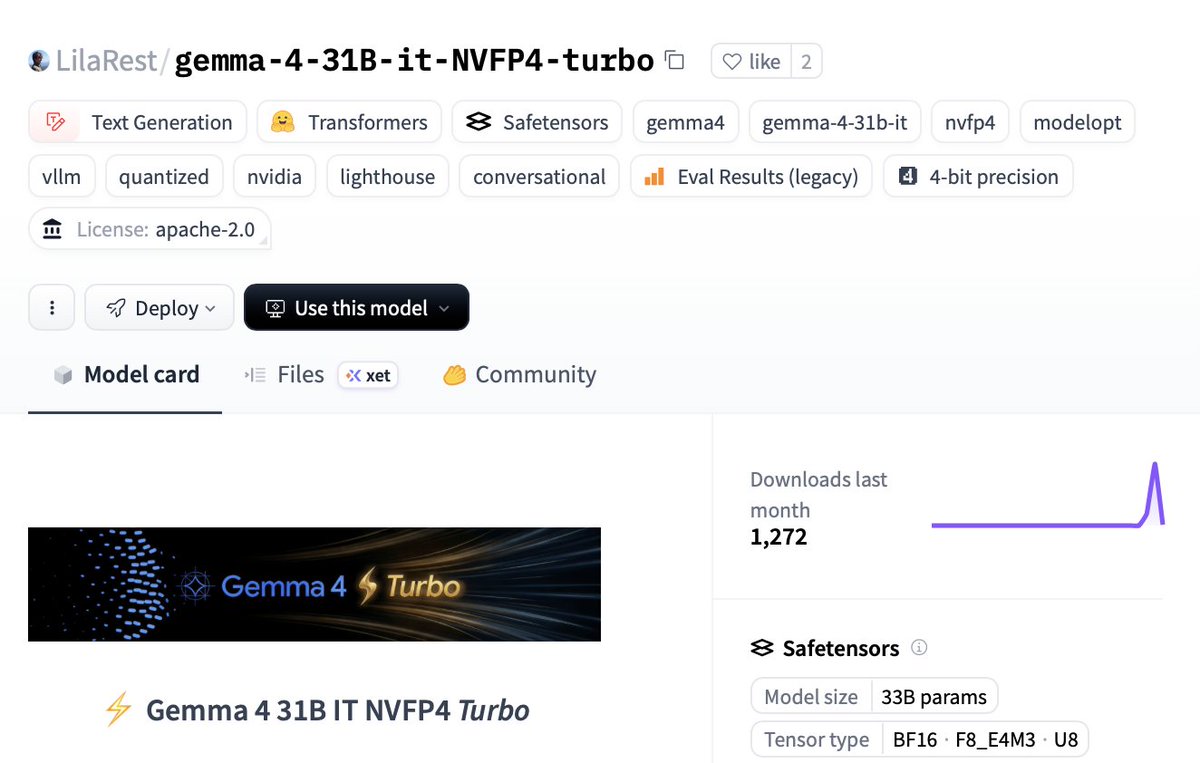

Gemma 4 31b scores 52.3% and is the strongest open model on WeirdML, ahead of GLM 5 and gpt-oss-120b. This score is comparable to o3 and gemini 2.5 pro, and well ahead of qwen 3.5 27b at 39.5%. Gemma 4 is also significantly cheaper than other models with the same score. I ran this locally through ollama, with a 4-bit quant (q4_K_M). Full precision might score even better. The costs assume $0.14/$0.40/M.

WeirdML v2 is now out! The update includes a bunch of new tasks (now 19 tasks total, up from 6), and results from all the latest models. We now also track api costs and other metadata which give more insight into the different models. The new results are shown in these two figur

>We gave an AI agent an Apple Developer account, a Mac VM, and one task: build and publish an iOS app. It succeeded, at a cost of about $1,000. Great research on what it takes for an agent to do real world work. Some interesting areas of improvements:

📢📢A double launch today! We’re releasing a paper analyzing the rapidly growing trend of “open-world evaluations” for measuring frontier AI capabilities. We’re also launching a new project, CRUX (Collaborative Research for Updating AI eXpectations), an effort to regularly conduct

🚀 Muse Spark Safety & Preparedness Report for Meta AI is out. We start with our pre-deployment assessment under Meta's Advanced AI Scaling Framework, covering chemical and biological, cybersecurity, and loss of control risks. Our assessment flagged potentially elevated chem/bio

New video model HappyHorse-1.0 by Alibaba-ATH debuts at #1 in Video Edit Arena. It scores 1299, leading Grok Image Video by +42 points and Kling o3 Pro by +48 points. Video editing is an emerging frontier capability for video models, and only a small number of models support it today. Huge congrats to the Alibaba-ATH team on this incredible milestone!

HappyHorse-1.0 is now live on Arena! 🚀 Early evals show exceptional performance in Video Edit. We are now in the final optimization sprint for the official launch in 2 weeks. We invite the community to get early access and test our capabilities at https://t.co/iiyfgPtib5. 🐎✨

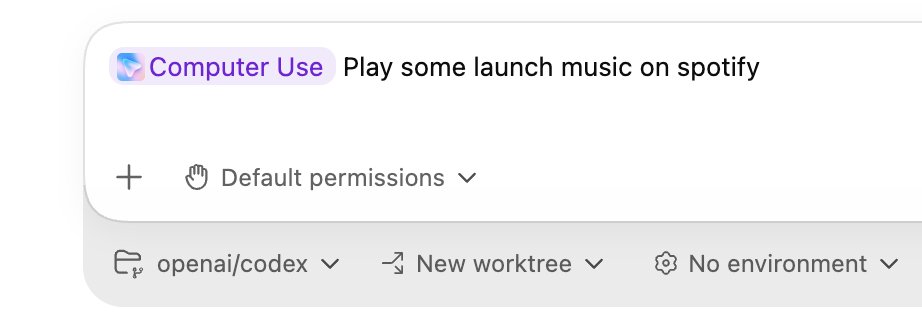

Codex just got a lot more powerful. Computer use, in-app browser, image generation and editing, 90+ new plugins to connect to everything, multi-terminal, SSH into devboxes, thread automations, rich document editing. Learns from experience and proactively suggests work. And a ton more.

🚀 NEW GEMMA 4 31B TURBO DROPPED Runs on a SINGLE RTX 5090: ⚡️18.5 GB VRAM only (68% smaller) 🧠51 tok/s single decode 💻1,244 tok/s batched 🤖15,359 tok/s prefill ← yes, fifteen thousand 🚨2.5× faster than base model with basically zero quality loss. It hits Sonnet-4.5 level on hard classification tasks… at 1/600th the cost. Local models are shipping faster than we can test 👇🏻 🔥 HF: https://t.co/XUvVZBj9AX

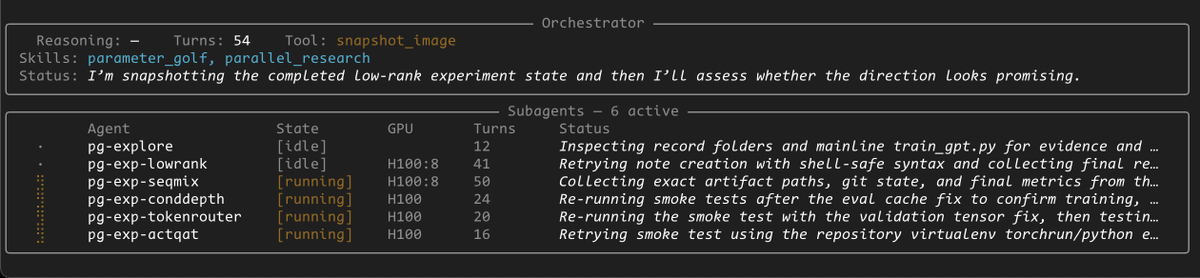

To show off what you can do with @OpenAI Agent SDK + @modal, we built an ML research agent (inspired by @karpathy). It can: - Spin up GPU sandboxes of any shape - Run a pool of subagents - Persist memory - Snapshot state for fork/resume Here it is playing Parameter Golf: https://t.co/r7QhvNmdEq

(1/5) FP4 hardware is here, but 4-bit attention still kills model quality, blocking true end-to-end FP4 serving. To fix that, we propose Attn-QAT, the first systematic study of quantization-aware training for attention. The result: FP4 attention quality is comparable to BF16 attention with 1.1x–1.5x higher throughput than SageAttention3 on an RTX 5090 and 1.39x speedup over FlashAttention-4 on a B200. Blog: https://t.co/NxVSXKWEgI Code: https://t.co/6irFgQ7GeM Checkpoints: https://t.co/GsrzbJlRY8

ok i've barely used cursor the past 18 months.. but today, i pulled it up and asked it to implement one of the more ambitious projects i had in mind .. something that really pushes it, y'know? the project was a nextjs web app, with a chrome extension + tauri desktop app (mac/win/unix) spent 10 mins working on a spec for the work, and left the thing to run -- checking in at key points to make sure everything worked. all told, it worked for like 3 hours.. and in the end, i had a suite of fully working apps! and the crazy bit is ... i don't even know which model it used?! i just picked "Premium" > "Max mode" (i have $60k in credits to burn and 6 months to do so) needless to say, i'm going to be using cursor a lot more from now..

We’re introducing Cursor 3. It is simpler, more powerful, and built for a world where all code is written by agents, while keeping the depth of a development environment. https://t.co/rXR9vaZDnO

Introducing TIPS v2 👀Foundational text-image encoder 📸Can be used as the base for different multimodal applications 🤗Apache 2.0 🧑‍🍳New pre-training recipes https://t.co/A6H93YJhNx

Agents need computers. And they need a lot of them. Modal is an official sandbox provider for the @OpenAI Agents SDK. https://t.co/Lu4cesspYq

@lirex **Sudo password prompting is a separate mechanism** in `terminal_tool.py`. When Hermes detects `sudo` in a command, it looks for a password to pipe via `sudo -S`. The resolution order:

1. `SUDO_PASSWORD` env var in `~/.hermes/.env` → auto-pipes, no prompt
2. Previously entered password (cached for session) → reuses silently
3. Interactive prompt (CLI only) → asks the user with 45s timeout
4. None of the above → runs command as-is (fails if OS actually needs a password)

If they have **passwordless sudo** (NOPASSWD in sudoers), the simplest fix is to add `SUDO_PASSWORD` to their `.env`. Even setting it to a dummy value works, because the env var being present tells Hermes "I have this handled, don't prompt." With NOPASSWD configured, sudo ignores the piped password anyway. They can do this through `hermes setup` (it asks about sudo during the tool configuration step) or manually:

```
# In ~/.hermes/.env
SUDO_PASSWORD=dummy
```

For fully unrestricted operation overall, they'd want both:

- `--yolo` flag (or `approvals.mode: off` in config.yaml) → skips dangerous command approvals
- `SUDO_PASSWORD` in `.env` → skips sudo password prompts
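The resolution order described above can be sketched roughly like this. It is not the actual code in `terminal_tool.py`: the function name, its parameters, and the returned labels are hypothetical, and falling through to "none" when the prompt times out is an assumption.

```python
from typing import Callable, Mapping, Optional, Tuple


def resolve_sudo_password(
    env: Mapping[str, str],
    cached_password: Optional[str],
    interactive: bool,
    prompt: Callable[[int], Optional[str]],
) -> Tuple[str, Optional[str]]:
    """Return (source, password) following the four-step order in the post.

    Hypothetical helper: `prompt` takes a timeout in seconds and returns the
    entered password, or None if the user did not answer in time.
    """
    # 1. Env var present (even a dummy value) -> pipe it, never prompt.
    if "SUDO_PASSWORD" in env:
        return ("env", env["SUDO_PASSWORD"])
    # 2. Password entered earlier in this session -> reuse silently.
    if cached_password is not None:
        return ("cache", cached_password)
    # 3. CLI only -> ask the user, with the 45s timeout from the post.
    if interactive:
        entered = prompt(45)
        if entered is not None:
            return ("prompt", entered)
    # 4. Nothing available -> run the command as-is.
    return ("none", None)


# Usage: a dummy env value short-circuits everything else.
source, password = resolve_sudo_password(
    {"SUDO_PASSWORD": "dummy"}, None, True, lambda timeout: "typed"
)
print(source, password)
```

The point of the ordering is that the env var wins over both the cache and the prompt, which is why a dummy value is enough to silence prompting on a NOPASSWD setup.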

@breath_mirror @kaiostephens @DJLougen @bstnxbt yeah, that one works as well. doesn't need the minor PR to handle qwen3_5_text rather than the multimodal wrapper. ~2x-4x speedup (my laptop got way too hot and throttled)

llama.cpp now supports various small OCR models that can run on low-end devices. These models are small enough to run on GPU with 4GB VRAM, and some of them can even run on CPU with decent performance. In this post, I will show you how to use these OCR models with llama.cpp 👇

you'll need to explicitly prompt Claude Code to use it, but the Monitor Tool is super powerful e.g. "start my dev server and use the MonitorTool to observe for errors"

Thrilled to announce the Monitor tool which lets Claude create background scripts that wake the agent up when needed. Big token saver and great way to move away from polling in the agent loop Claude can now: * Follow logs for errors * Poll PRs via script * and more! http

A downside with using VLMs to parse PDFs is guaranteeing that the output text is *correct* and output in the correct reading order. 1️⃣ Text correctness: making sure that digits, words, sentences are not hallucinated or dropped. 2️⃣ Reading Order: making sure that complex multi-layout pages are linearized into the right 1-d text order. We call this Content Faithfulness in ParseBench, our comprehensive document OCR benchmark for agents. We have 167k rules that measure digit/word/sentence-level correctness along with reading order correctness. It seems relatively table-stakes, but no parser gets this 100% right, and this means that the agent’s downstream decision-making is compromised. Come learn more about how this metric works in the video below, along with our full blog writeup, whitepaper, and website! Blog: https://t.co/57OHkx0pQW Paper: https://t.co/Ho2oH2xEAM Website: https://t.co/g0b0jsCynW

Let's talk content faithfulness. Four days ago, we launched ParseBench, the first document OCR benchmark for AI agents. Its most fundamental metric asks: did the parser capture all the text, in order, without making things up? We grade three failure modes with 167K+ rule-based

@crypto_fyy @googlegemma @arena We're working on optimizing KV Cache!

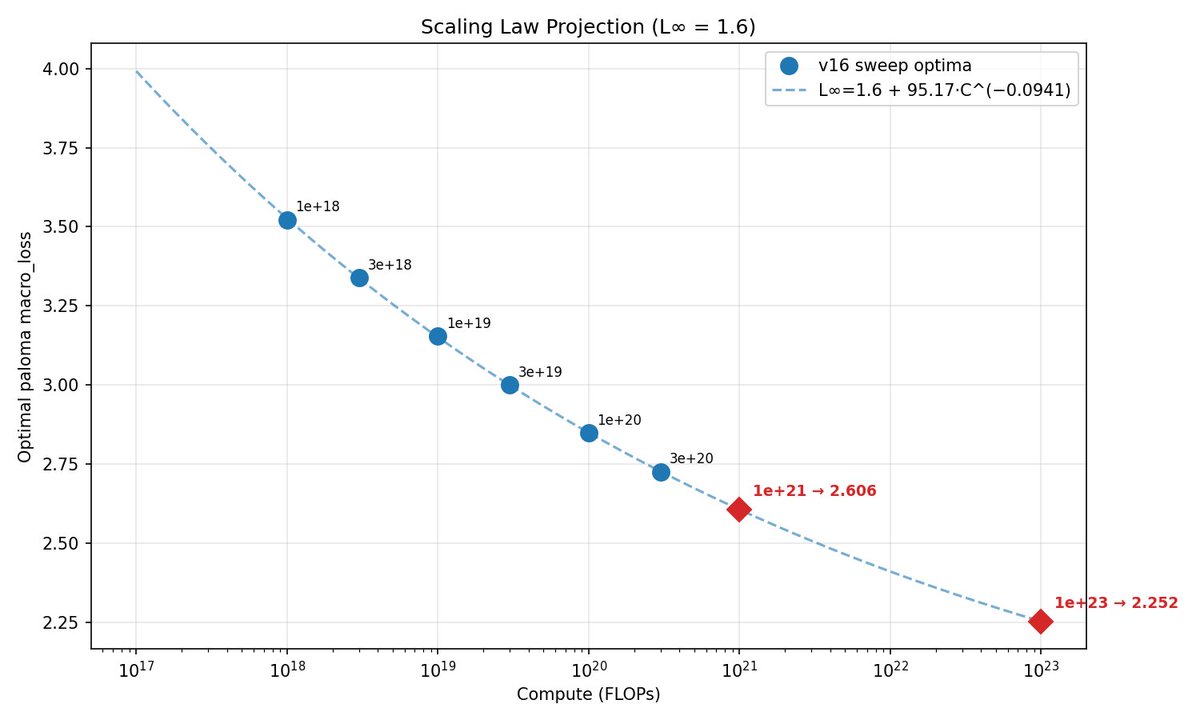

This week, @classiclarryd kicked off a 129B (16B active) 1e23 FLOPs MoE run. In typical Marin style, we have fit scaling laws and have made a loss projection of 2.252. Stay tuned. https://t.co/QnwJ8YxT9H

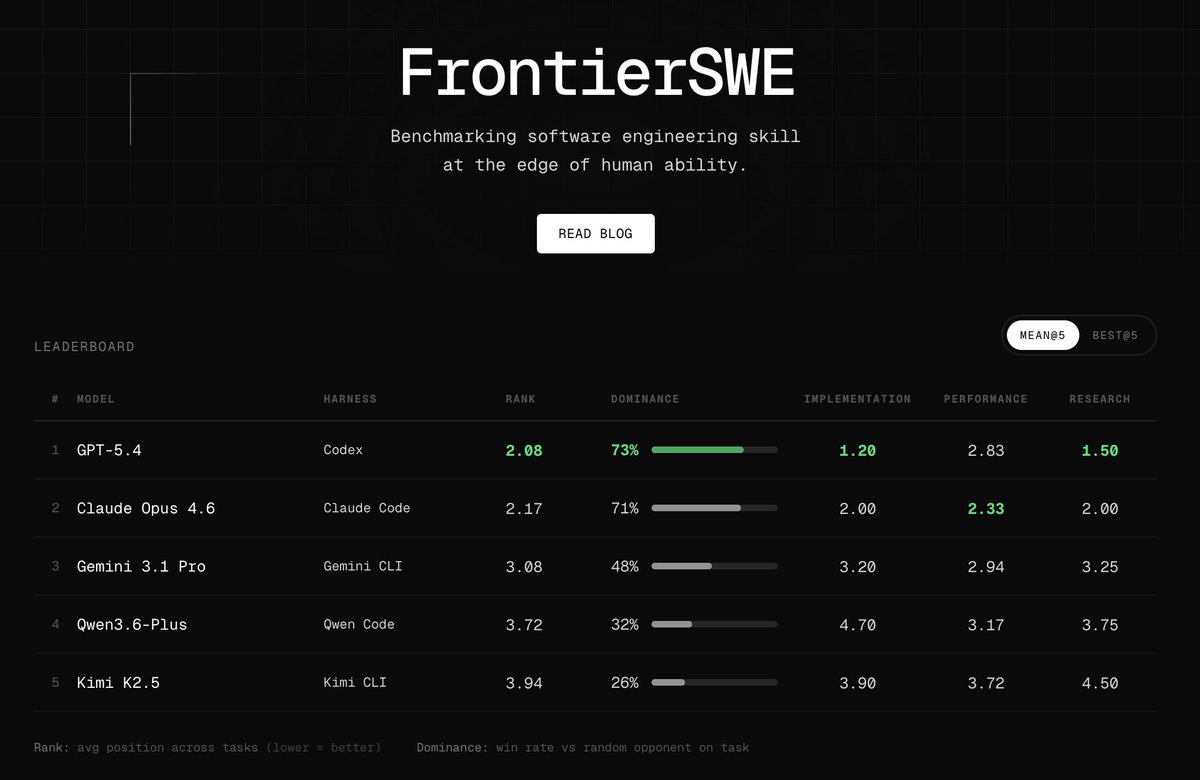

Introducing FrontierSWE, an ultra-long horizon coding benchmark. We test agents on some of the hardest technical tasks like optimizing a video rendering library or training a model to predict the quantum properties of molecules. Despite having 20 hours, they rarely succeed https://t.co/xbqHJRZiPZ

New course: Spec-Driven Development with Coding Agents, built in partnership with @jetbrains, and taught by @paulweveritt. Vibe coding is fast, but often produces code that doesn't match what you asked for. This short course teaches you spec-driven development: write a detailed spec defining what to build, and work with your coding agent to implement it. Many of the best developers already build this way. A spec lets you control large code changes with a few words, preserve context across agent sessions, and stay in control as your project grows in complexity. Skills you'll gain: - Write a detailed specification to define your mission, tech stack, and roadmap, giving your agent the context it needs from the start - Plan, implement, and validate features in iterative loops using a spec as your agent's guide - Apply the same repeatable workflow to both new and legacy codebases - Package your workflow into a portable agent skill that works across agents and IDEs Join and write specs that keep your coding agent on track! https://t.co/hI4GwuvhtN

Training Qwen2.5-0.5B-Instruct on Reddit post summarization with GRPO on my 3x Mac Minis, trying a combination of quality rewards with a length penalty! Completed all of the following combination rewards! >METEOR + BLEU >BLEU + ROUGE-L >METEOR + ROUGE-L All the code and wandb charts in the comments --- Setup: 3x Mac Minis in a cluster running MLX. One node drives training using GRPO, two push rollouts via vLLM. Trained two variants: → length penalty only (baseline) → length penalty + quality reward (BLEU, METEOR and/or ROUGE-L) --- Eval: LLM-as-a-Judge (gpt-5) Used DeepEval to build a judge pipeline scoring each summary on 4 axes: → Faithfulness: no hallucinations vs. source → Coverage: key points captured → Conciseness: shorter, no redundancy → Clarity: readable on its own
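For readers unfamiliar with the setup, here is a rough sketch of a "quality reward + length penalty" signal fed into GRPO-style group-relative advantages. It is not the author's code. The simplified LCS-based ROUGE-L F1, the linear overflow penalty, and the `target_len` and `penalty_weight` values are all assumptions chosen for illustration.

```python
from statistics import mean, pstdev


def lcs_length(a, b):
    """Length of the longest common subsequence of two token lists."""
    prev = [0] * (len(b) + 1)
    for x in a:
        curr = [0]
        for j, y in enumerate(b, start=1):
            curr.append(prev[j - 1] + 1 if x == y else max(prev[j], curr[j - 1]))
        prev = curr
    return prev[-1]


def rouge_l_f1(candidate, reference):
    """Simplified ROUGE-L: F1 over the LCS of whitespace tokens."""
    c, r = candidate.split(), reference.split()
    if not c or not r:
        return 0.0
    lcs = lcs_length(c, r)
    if lcs == 0:
        return 0.0
    precision, recall = lcs / len(c), lcs / len(r)
    return 2 * precision * recall / (precision + recall)


def combined_reward(candidate, reference, target_len=20, penalty_weight=0.5):
    """Quality score minus a linear penalty for tokens beyond target_len."""
    overflow = max(0, len(candidate.split()) - target_len) / target_len
    return rouge_l_f1(candidate, reference) - penalty_weight * overflow


def group_advantages(rewards, eps=1e-6):
    """GRPO-style advantages: standardize rewards within one sampled group."""
    mu, sigma = mean(rewards), pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


reference = "the cat sat on the mat"
group = [
    "the cat sat on the mat",
    "a cat was sitting on a mat",
    "dogs bark loudly at night",
]
rewards = [combined_reward(s, reference) for s in group]
advantages = group_advantages(rewards)
print(rewards, advantages)
```

Swapping `rouge_l_f1` for a sum of two metrics (for example METEOR + BLEU from a metrics library) would give the combined variants listed in the post.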

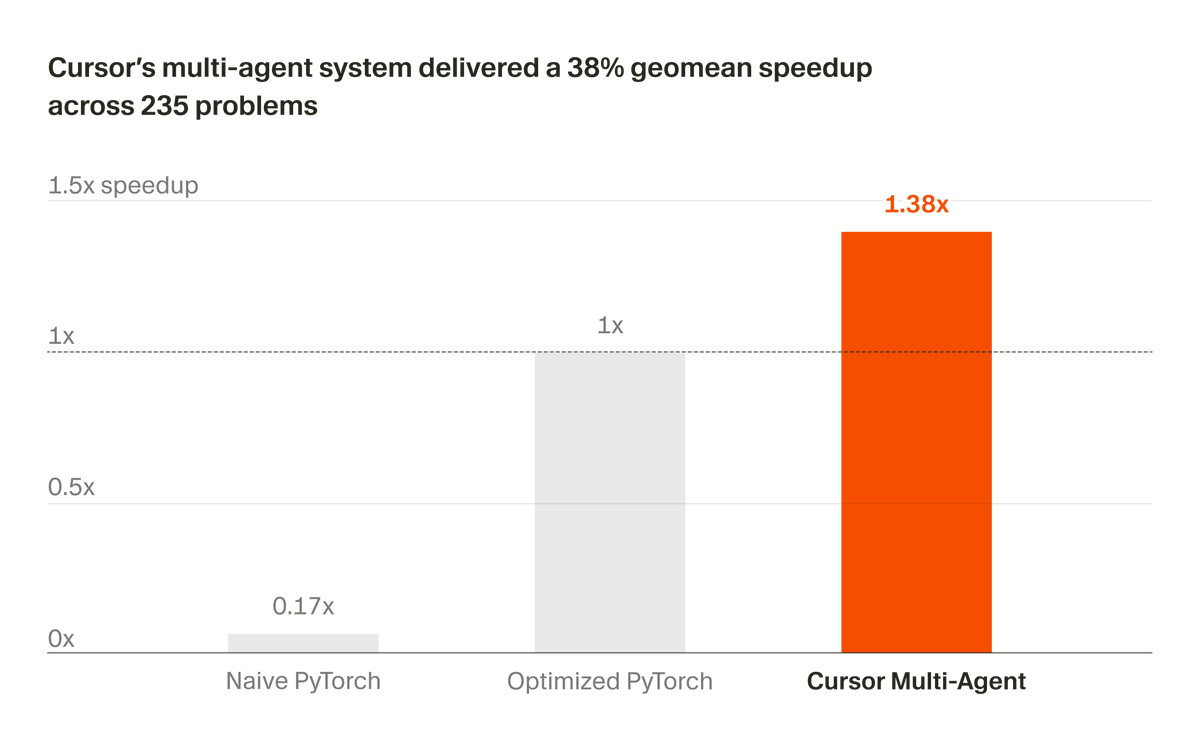

We've been developing a multi-agent system that builds and maintains complex software autonomously. Recently, we partnered with NVIDIA to apply it to optimizing CUDA kernels. In 3 weeks, it delivered a 38% geomean speedup across 235 problems. https://t.co/0YvbXrzVfe
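As a side note on the metric, a "geomean speedup" is usually computed as the geometric mean of per-problem speedup ratios, so a 38% figure would correspond to a geomean ratio of about 1.38. A minimal sketch follows; the timings are made up, the function name is hypothetical, and this may not match how the benchmark in the post was actually scored.

```python
from statistics import geometric_mean


def geomean_speedup(baseline_ms, optimized_ms):
    """Geometric mean of per-problem speedups (baseline time / new time)."""
    ratios = [b / o for b, o in zip(baseline_ms, optimized_ms)]
    return geometric_mean(ratios)


# Made-up timings: one kernel 2x faster, one unchanged, one 2x slower.
baseline = [10.0, 8.0, 5.0]
optimized = [5.0, 8.0, 10.0]

overall = geomean_speedup(baseline, optimized)
arithmetic = sum(b / o for b, o in zip(baseline, optimized)) / len(baseline)
print(overall, arithmetic)
```

The geometric mean treats a 2x win and a 2x loss as cancelling out, while the arithmetic mean of the same ratios would report a net gain, which is why geomean is the usual choice for aggregating speedups.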