Your curated collection of saved posts and media

Mistral Small 4 is out https://t.co/IdAowSpHpN

A 32× efficiency improvement in just the last 3 months: that’s the crazy jump from GPT-5.2 to GPT-5.4! At 37 cents/task, it is almost at human-level efficiency (target was 24 cents/task). This was inconceivable a year ago, when o3 cost $4500/task on ARC-AGI-1: a 12,000x improvement!

GPT-5.4 (High) has now cleared 90% on this benchmark at a cost of just $0.37/task. So that's a 32x efficiency improvement in the last three months, or 12000x since December 2024.
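
The ratios quoted in these two posts can be checked with a few lines of arithmetic. This is a sketch that assumes both posts refer to the same benchmark; the GPT-5.2 cost is not stated in either post and is only implied here by the quoted 32x figure.

```python
# Figures quoted in the posts (USD per task).
O3_COST = 4500.00       # o3, quoted for about a year earlier
GPT_5_4_COST = 0.37     # GPT-5.4 (High)
HUMAN_TARGET = 0.24     # quoted human-level target

# Improvement factor since o3: old cost divided by new cost.
total_improvement = O3_COST / GPT_5_4_COST

# Assumption: the GPT-5.2 cost is implied by the quoted 32x jump.
implied_gpt_5_2_cost = GPT_5_4_COST * 32

# How far the current cost still sits above the quoted target.
gap_to_target = GPT_5_4_COST / HUMAN_TARGET

print(f"improvement since o3: {total_improvement:,.0f}x")
print(f"implied GPT-5.2 cost: ${implied_gpt_5_2_cost:.2f}/task")
print(f"cost relative to target: {gap_to_target:.2f}x")
```

The first figure comes out at roughly 12,000x, consistent with the rounded number in the posts.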

Banger report from the Kimi team: Attention Residuals. Residual connections made deep Transformers trainable. But they also force uncontrolled hidden-state growth with depth. This work proposes a cleaner alternative. It introduces Attention Residuals, which replace fixed residual accumulation with softmax attention over previous layer outputs. Instead of blindly summing everything, each layer selectively retrieves the earlier representations it actually needs. To keep this practical at scale, they add a blockwise version that compresses layers into block summaries, recovering most of the gains with minimal systems overhead. Why does it matter? Residual paths have barely changed across modern LLMs, even though they govern how information moves through depth. This paper shows that making the mixing content-dependent improves scaling laws, matches a baseline trained with 1.25x more compute, boosts GPQA-Diamond by +7.5 and HumanEval by +3.1, while keeping inference overhead under 2%. Paper: https://t.co/04IG6FDiVr Learn to build effective AI agents in our academy: https://t.co/1e8RZKs4uX
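
The core idea described in this post, softmax attention over previous layer outputs in place of a fixed sum, can be illustrated with a toy sketch. It is not the paper's implementation: the `query` vector, the scaled dot-product scoring, and the function names are assumptions made for illustration, and the blockwise variant is not shown.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def standard_residual(prev_outputs):
    """Fixed accumulation: every earlier output is summed with weight 1."""
    dim = len(prev_outputs[0])
    return [sum(h[i] for h in prev_outputs) for i in range(dim)]

def attention_residual(prev_outputs, query):
    """Content-dependent mixing: weight earlier outputs by softmax scores.

    `query` is a hypothetical per-layer vector; how the paper derives
    queries and keys is not reproduced here.
    """
    dim = len(query)
    scores = [
        sum(q * x for q, x in zip(query, h)) / math.sqrt(dim)
        for h in prev_outputs
    ]
    weights = softmax(scores)
    mixed = [
        sum(w * h[i] for w, h in zip(weights, prev_outputs))
        for i in range(dim)
    ]
    return mixed, weights

# Three earlier layer outputs and a query aligned with the first axis.
prev_outputs = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
summed = standard_residual(prev_outputs)
mixed, weights = attention_residual(prev_outputs, query=[2.0, 0.0])
print("sum:", summed)
print("weights:", [round(w, 3) for w in weights])
print("mixed:", [round(v, 3) for v in mixed])
```

Because the softmax weights sum to 1, the mixed state is a convex combination of earlier outputs, so its magnitude stays bounded by the largest input instead of growing with the number of layers as the plain sum does.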

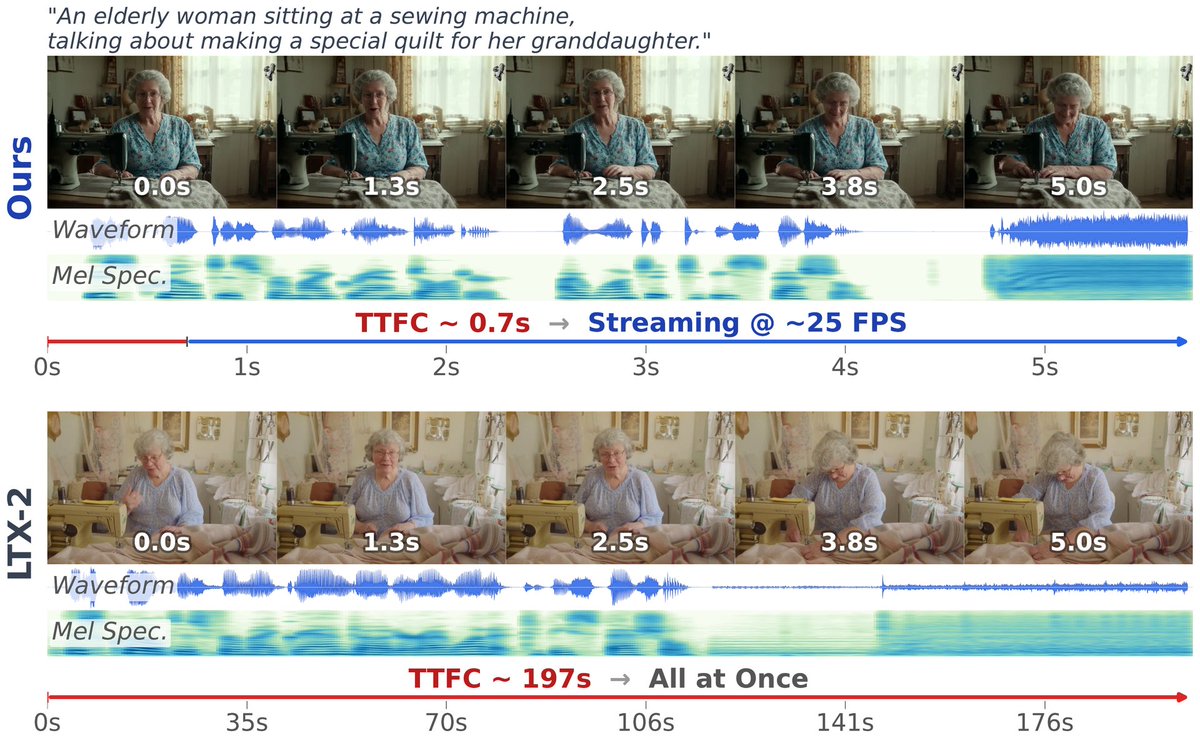

OmniForcing unlocks real-time joint audio-visual generation. It achieves ~25 FPS with 0.7s latency (a 35× speedup over offline diffusion models) by distilling bidirectional LTX-2 into a causal streaming generator while maintaining multi-modal fidelity. https://t.co/UGYGMyTQOs

Subagents are now available in Codex. You can accelerate your workflow by spinning up specialized agents to: • Keep your main context window clean • Tackle different parts of a task in parallel • Steer individual agents as work unfolds https://t.co/QJC2ZYtYcA

It's been about 20 years since I first started working on embeddings with Yann LeCun (siamese networks!), and I've been fascinated ever since. Gemini Embeddings 2 approaches the platonic ideal: native embedding of text, image, video, audio, and docs to a single space.

https://t.co/mIXzM657cR

@Nvidiadev 🗓️ MONDAY @ Booth #338 2PM: Shaping the Future w/ @matthew_d_white 3PM: TensorRT + PyTorch w/ Angela Yi & @narendasan 4PM: DeepSpeed Trillion-Param Training w/ @PKUWZP 5PM: PyTorch Export w/ Angela Yi 6PM: Ray Distributed Computing w/ @robertnishihara #AI #GTC2025

Your AI agent can now generate videos. PixVerse CLI ships today — JSON output, 6 deterministic exit codes, full PixVerse v5.6, Sora2 and Veo 3.1, Nano Banana access from terminal. Same account. Same credits. No new signup. -> Follow+ Reply+RT = 300 Creds(72H ONLY)

this was one of the things i co-led at fair, then fb had ~2b users, embeddings of ~128d made it a 300b-1T parameter model depending on how you count entities (e.g. ad campaigns). at the time, this was big, now it's medium. we trained it purely on distributed cpus
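
The parameter count in this post follows from simple multiplication. The sketch below assumes one embedding vector per entity and no other parameters; the entity counts needed to reach the quoted 300b and 1T figures are derived from that assumption and are not stated in the post.

```python
def embedding_params(num_entities: int, dim: int) -> int:
    """Parameters in an embedding table: one `dim`-sized vector per entity."""
    return num_entities * dim

def entities_needed(target_params: float, dim: int) -> float:
    """Entities required to reach a parameter count at a given dimension."""
    return target_params / dim

DIM = 128                  # "~128d" from the post
USERS = 2_000_000_000      # "~2b users" from the post

users_only = embedding_params(USERS, DIM)
low = entities_needed(300e9, DIM)    # entities implied by 300b parameters
high = entities_needed(1e12, DIM)    # entities implied by 1T parameters

print(f"users only: {users_only / 1e9:.0f}B parameters")
print(f"300b-1T implies {low / 1e9:.2f}B to {high / 1e9:.2f}B entities")
```

Users alone account for 256B parameters, so the 300b-1T range corresponds to counting additional entity types (such as the ad campaigns mentioned in the post) on top of users.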

@RaiaHadsell Universal embeddings FTW 😊 One of the flagship projects at FAIR was to "embed the world" (i.e. represent every entity on Facebook). The name was soon changed to "Filament", deployed internally, and eventually open-sourced as "PyTorch-BigGraph" The techniques were m

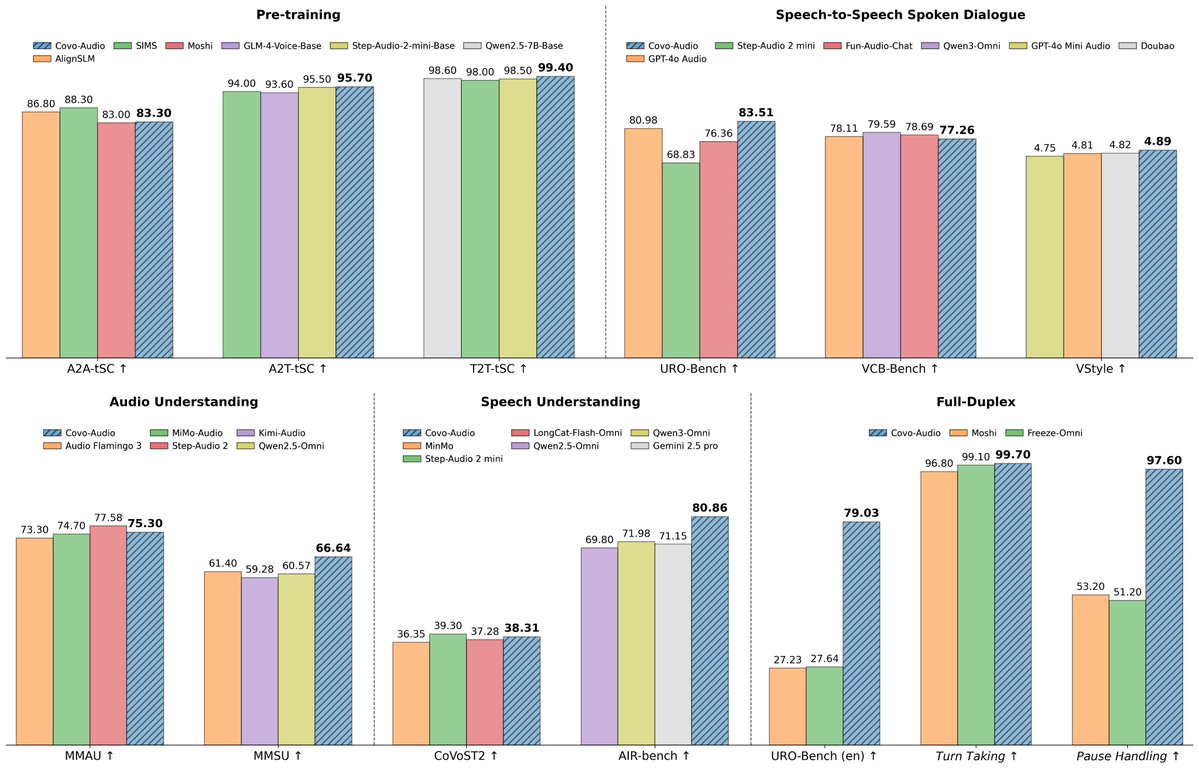

Covo Audio 🔊 An end-to-end audio language model from @TencentAI_News https://t.co/tic5cH1A39 ✨ 7B ✨ Audio → Audio in one model ✨ Multi-speaker + voice transfer ✨ Real-time full duplex conversations https://t.co/hFrsxQgzkT

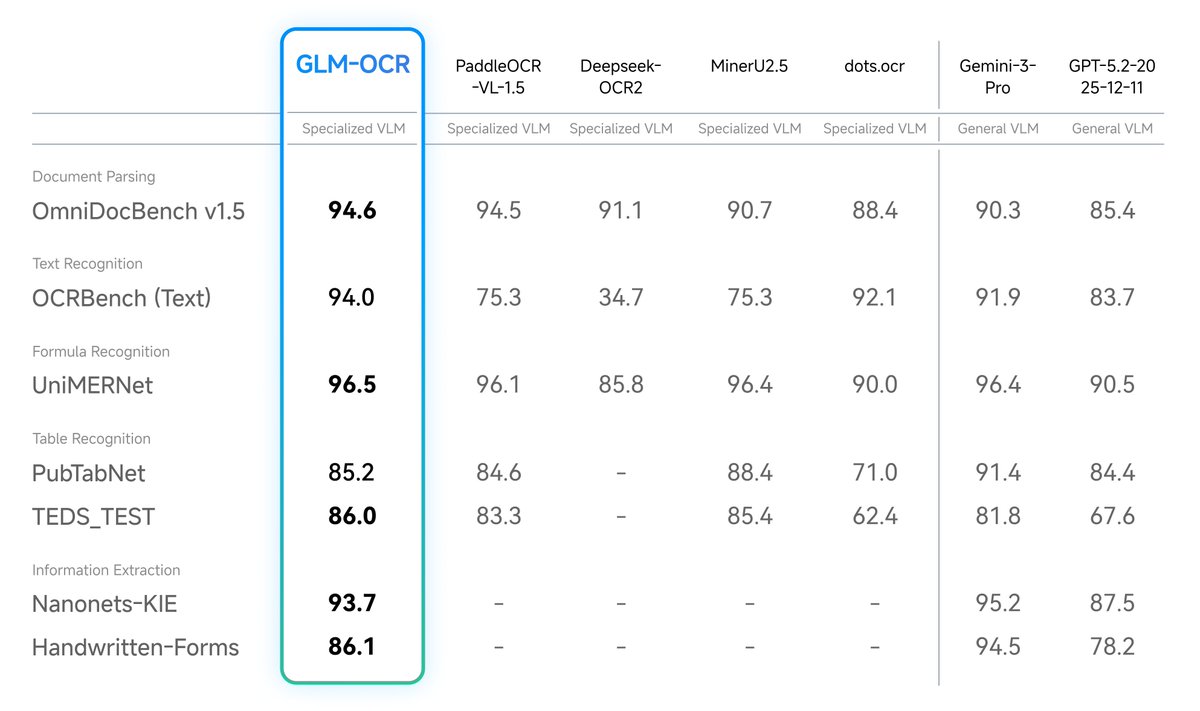

🚨 Want to parse complex PDFs with SOTA accuracy, 100% locally? 📄🔍 At just 0.9B parameters, you can drop GLM-OCR straight into LM Studio and run it on almost any machine! 🥔 🧠 0.9B total parameters 💾 Runs on < 1.5GB VRAM (or ~1GB quantized!) 💸 Zero API costs 🔒 Total data privacy Desktop document AI is officially here. 💻⚡

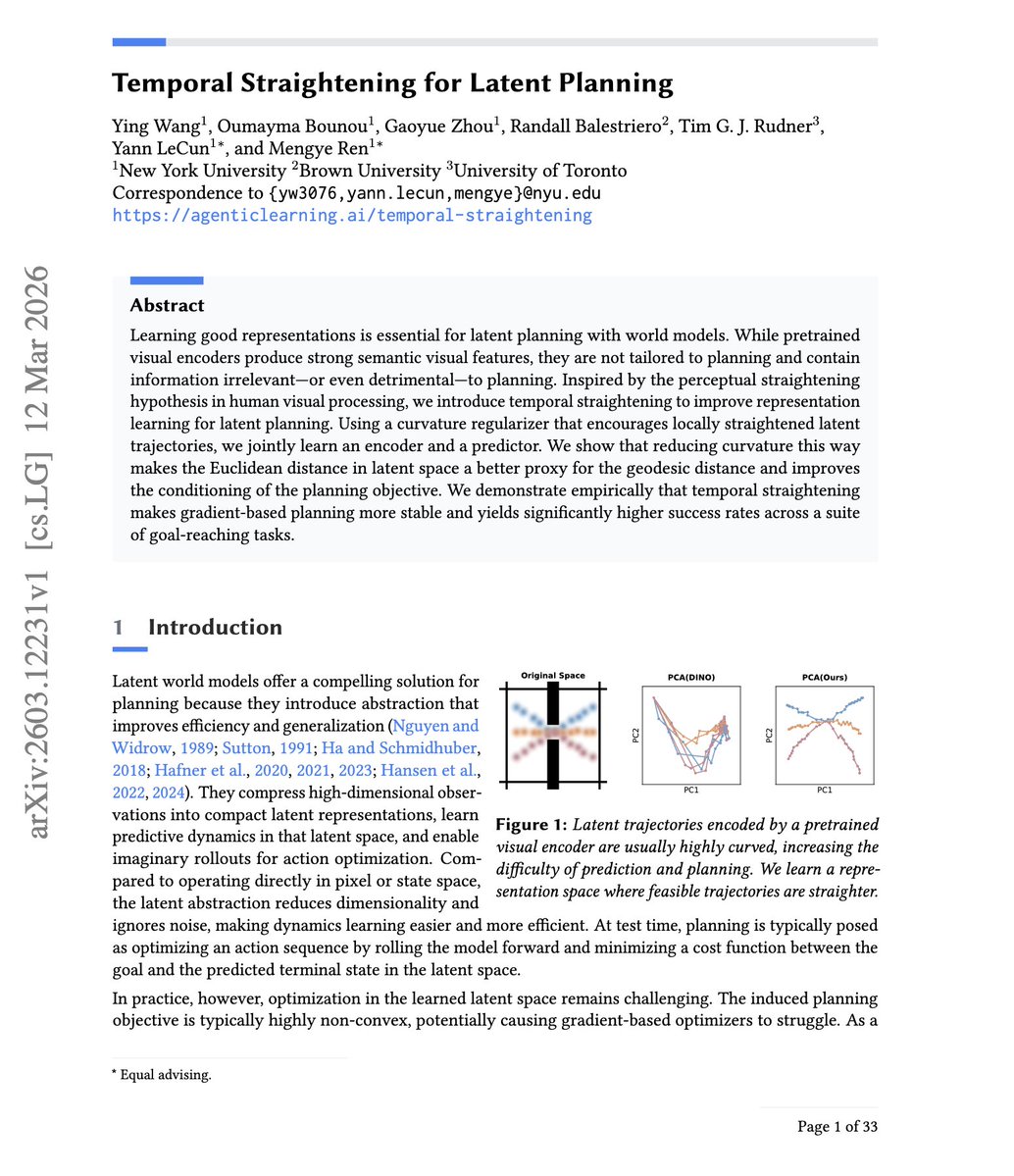

Yann LeCun is pumping out papers recently: “Temporal Straightening for Latent Planning”. This paper shows that by straightening latent trajectories in a world model, Euclidean distance starts to reflect true reachable progress, so it's closer to geodesic/minimum-step distance. This makes gradient-based planning far more stable and effective without relying as heavily on expensive search.
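
A toy example shows why straightness matters for distance-based planning. This is only a diagnostic sketch, not the paper's training objective: the `straightness` ratio and the 2-D trajectories are illustrative assumptions.

```python
import math

def dist(a, b):
    """Euclidean distance between two points."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def path_length(traj):
    """Total distance travelled along consecutive trajectory steps."""
    return sum(dist(traj[i], traj[i + 1]) for i in range(len(traj) - 1))

def straightness(traj):
    """Endpoint distance divided by path length; 1.0 means perfectly straight."""
    return dist(traj[0], traj[-1]) / path_length(traj)

# Both trajectories take three unit-length steps.
straight = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0), (3.0, 0.0)]
u_shaped = [(0.0, 0.0), (0.0, 1.0), (1.0, 1.0), (1.0, 0.0)]

for name, traj in [("straight", straight), ("u-shaped", u_shaped)]:
    print(name, "endpoint distance:", dist(traj[0], traj[-1]),
          "steps:", len(traj) - 1,
          "straightness:", round(straightness(traj), 3))
```

On the straight trajectory the endpoint distance equals the number of unit steps, so Euclidean distance is a faithful measure of remaining progress. On the U-shaped one the endpoints are only one unit apart even though three steps separate them, which is the kind of mismatch that can mislead a gradient-based planner.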

codex app automations: slack pending replies. Review Slack for the current user and update today's daily summary note in /Users/jasonliu/vault at agent/daily-summary-YYYY-MM-DD.md with a single section titled ## Pending Slack Replies. Use Slack search and thread reads across public channels, private channels, DMs, and group DMs to find conversations where the current user is mentioned, directly addressed, or has already participated, and where the latest substantive message is from someone else and the current user has not replied. Focus on recent activity, prioritizing today and the last 36 hours. Read candidate threads before including them. Exclude resolved threads, FYIs that do not need a response, and anything the user already answered later. Rewrite the ## Pending Slack Replies section on each run instead of appending duplicates. For each pending item include: who is waiting, channel or DM name, last message time in America/Los_Angeles, a one-line summary of the ask or blocker, and a short snippet. If a stable Slack link is available, include it. If nothing is pending, keep the section and write - None right now. Keep the rest of the note unchanged.
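
The "rewrite the section instead of appending duplicates" requirement in this prompt amounts to an idempotent section replace. The sketch below shows one way it could be done on the note text. The function name and sample note are hypothetical, and it assumes the heading appears on its own line with no trailing whitespace and that the section ends at the next `## ` heading.

```python
HEADING = "## Pending Slack Replies"

def rewrite_section(note: str, items: list[str]) -> str:
    """Replace the pending-replies section, leaving the rest of the note as is."""
    lines = note.splitlines()
    body = [f"- {item}" for item in items] or ["- None right now."]
    section = [HEADING, ""] + body

    if HEADING not in lines:
        # Heading missing: append the section, separated by one blank line.
        prefix = lines + [""] if lines and lines[-1] != "" else lines
        return "\n".join(prefix + section) + "\n"

    start = lines.index(HEADING)
    # The section runs until the next level-2 heading or the end of the note.
    end = len(lines)
    for i in range(start + 1, len(lines)):
        if lines[i].startswith("## "):
            end = i
            break

    tail = lines[end:]
    new_lines = lines[:start] + section + ([""] + tail if tail else [])
    return "\n".join(new_lines) + "\n"

note = "# Daily\n\n## Pending Slack Replies\n\n- old item\n\n## Tasks\n\n- ship it\n"
once = rewrite_section(note, ["Alice in #eng: needs review"])
twice = rewrite_section(once, ["Alice in #eng: needs review"])
empty = rewrite_section(once, [])
print(once)
```

Running the function twice with the same items yields the same note, which is the property the prompt asks for, and an empty item list keeps the section with the "None right now." placeholder.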

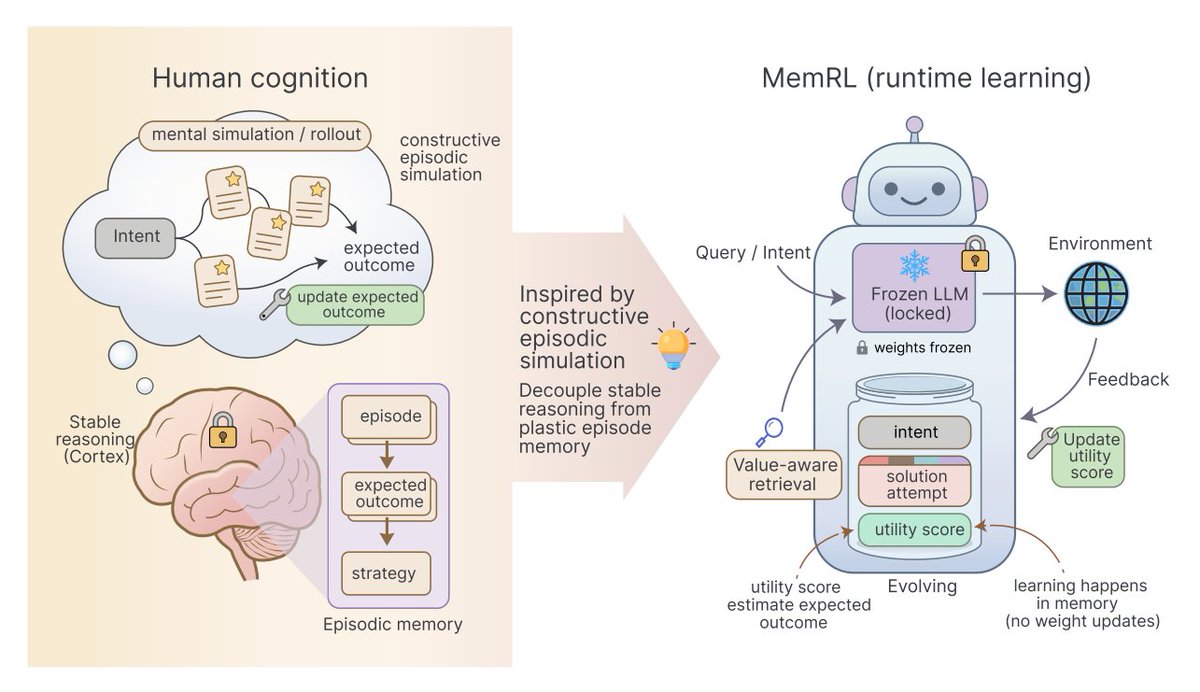

7 emerging memory architectures for AI agents ▪️ Agentic Memory (AgeMem) ▪️ Memex ▪️ MemRL ▪️ UMA (Unified Memory Agent) ▪️ Pancake ▪️ Conditional memory ▪️ Multi-Agent Memory from a Computer Architecture Perspective https://t.co/5X5LxirSEx https://t.co/5Hi0Gn3aA4

Microsoft has released a free, open-source course: GitHub Copilot CLI for Beginners. Includes 8 chapters covering: • Walkthrough of installing Copilot CLI • Using context • Creating custom agents • Working with skills • Connecting MCP servers, and more. Start Learning - https://t.co/IIbauw5L7K

Nvidia ruled the first wave of AI by powering the training of large models. But the next phase may look different. Running AI at scale, known as inference, is now growing much faster than training. That’s where real-world deployment happens. If the center of gravity in AI shifts there, the question becomes: will Nvidia stay as dominant in the next chapter? https://t.co/MdG0zqBUWj @RWhelanWSJ @WSJ