Your curated collection of saved posts and media

Your AI agent can now generate videos. PixVerse CLI ships today with JSON output, 6 deterministic exit codes, and full access to PixVerse v5.6, Sora2, Veo 3.1, and Nano Banana from the terminal. Same account. Same credits. No new signup. -> Follow + Reply + RT = 300 credits (72 hours only)

this was one of the things i co-led at fair. back then fb had ~2b users, so embeddings of ~128d made it a 300b-1T parameter model depending on how you count entities (e.g. ad campaigns). at the time, this was big, now it's medium. we trained it purely on distributed cpus
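A quick back-of-the-envelope check of that parameter count, assuming one 128-dimensional embedding row per entity. The ~2b users and ~128d come from the post; the extra entity counts below are hypothetical, picked only to show how the total moves depending on what you count.

```python
def embedding_params(num_entities: int, dim: int = 128) -> int:
    """Parameters in an embedding table with one row of size `dim` per entity."""
    return num_entities * dim


users = 2_000_000_000  # ~2b users, from the post
print(f"users only: {embedding_params(users) / 1e9:.0f}B parameters")

# Hypothetical counts of non-user entities (ad campaigns, pages, ...).
# These numbers are NOT from the post; they only illustrate the range.
for extra in (500_000_000, 6_000_000_000):
    total = embedding_params(users + extra)
    print(f"+{extra / 1e9:.1f}B other entities: {total / 1e9:.0f}B parameters")
```

Users alone give 256B parameters, so landing anywhere in the 300b-1T range depends on how many other entity types get their own embedding rows.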

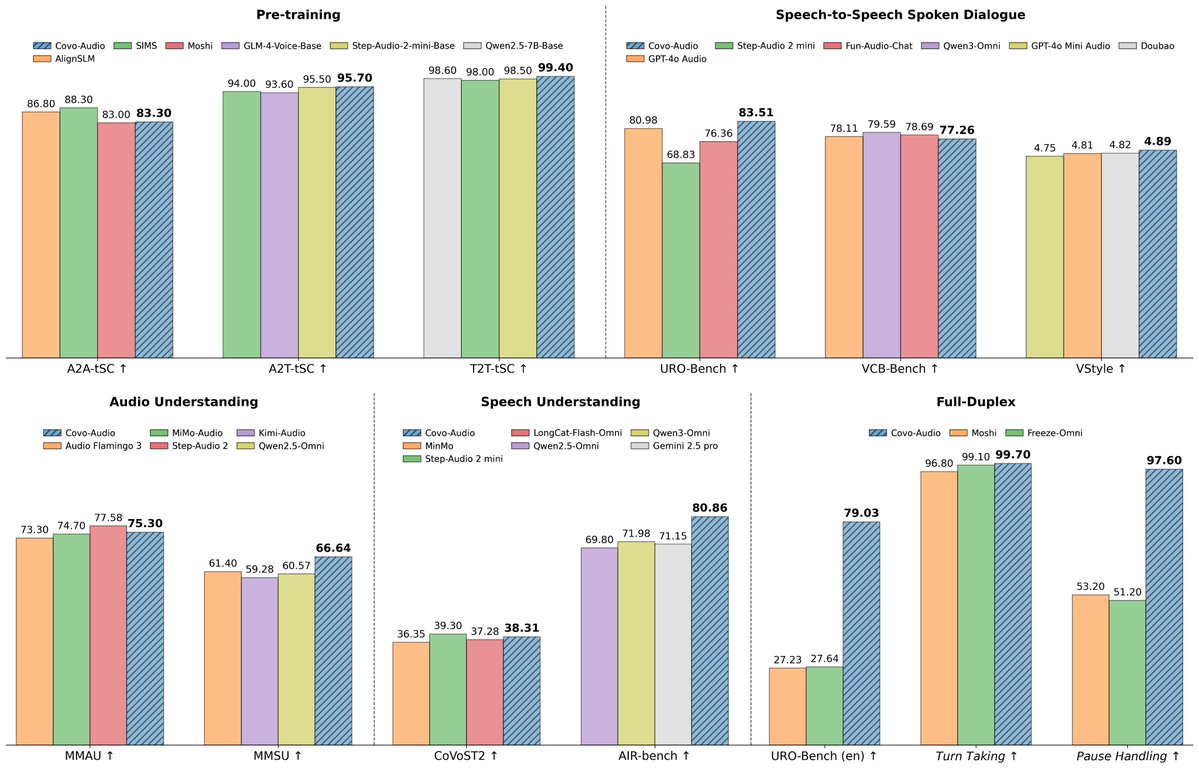

Covo Audio 🔊 An end-to-end audio language model from @TencentAI_News https://t.co/tic5cH1A39 ✨ 7B ✨ Audio → Audio in one model ✨ Multi-speaker + voice transfer ✨ Real-time full duplex conversations https://t.co/hFrsxQgzkT

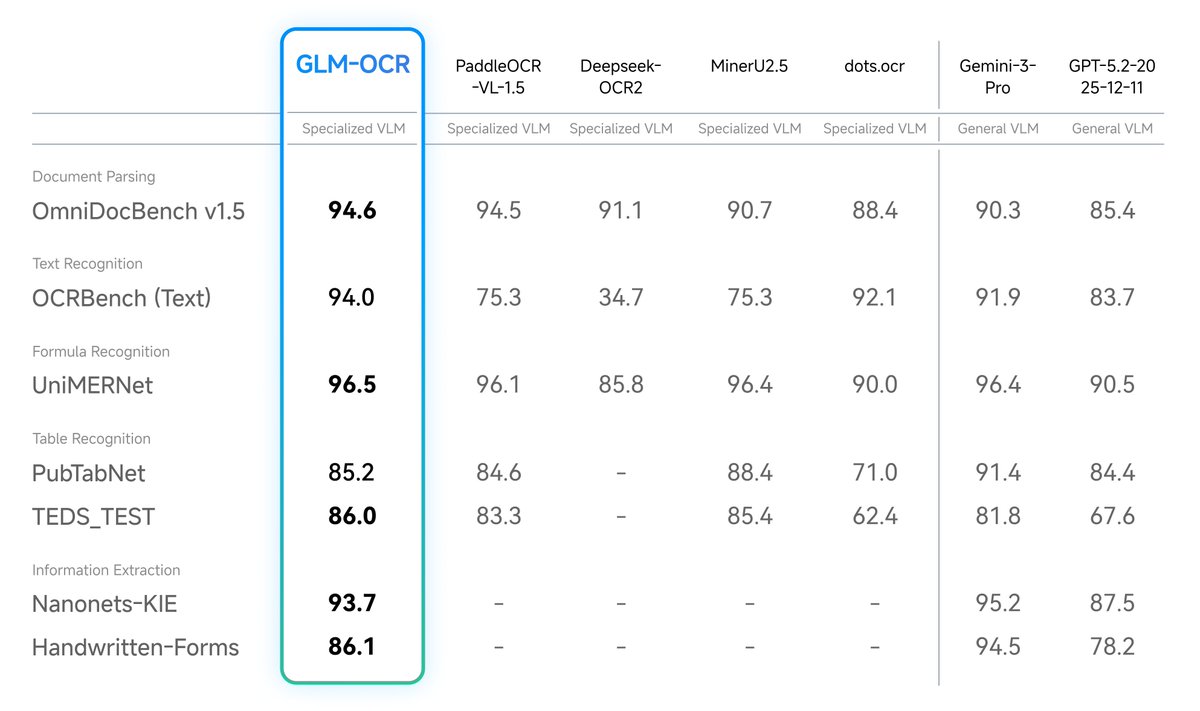

🚨 Want to parse complex PDFs with SOTA accuracy, 100% locally? 📄🔍 At just 0.9B parameters, you can drop GLM-OCR straight into LM Studio and run it on almost any machine! 🥔 🧠 0.9B total parameters 💾 Runs on < 1.5GB VRAM (or ~1GB quantized!) 💸 Zero API costs 🔒 Total data privacy Desktop document AI is officially here. 💻⚡

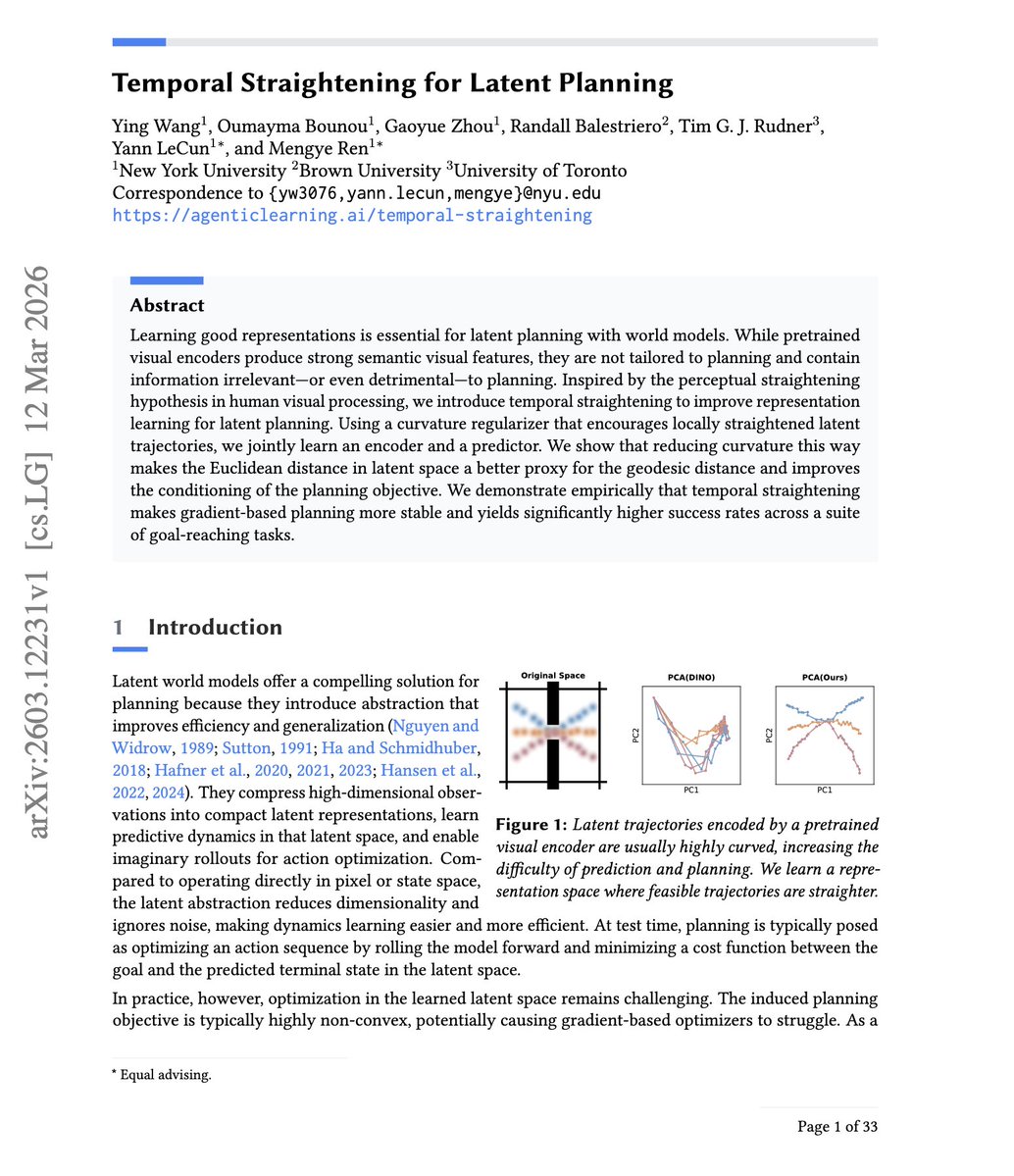

Yann LeCun has been pumping out papers recently. “Temporal Straightening for Latent Planning” shows that by straightening latent trajectories in a world model, Euclidean distance starts to reflect true reachable progress, so it's closer to geodesic/minimum-step distance. This makes gradient-based planning far more stable and effective without relying as heavily on expensive search.
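To make the "straight trajectory" intuition concrete, here is a toy sketch. It is not the paper's objective or code: `straightness` is a hypothetical metric (mean cosine similarity between consecutive steps), and the 2-D points stand in for latent states. It only illustrates why endpoint distance tracks the number of steps on a straight path and underestimates it on a curved one.

```python
import math


def straightness(traj):
    """Mean cosine similarity between consecutive step vectors (1.0 = straight).

    Assumes at least three points and no zero-length steps.
    """
    steps = [[b - a for a, b in zip(p, q)] for p, q in zip(traj, traj[1:])]
    sims = []
    for u, v in zip(steps, steps[1:]):
        dot = sum(x * y for x, y in zip(u, v))
        sims.append(dot / (math.hypot(*u) * math.hypot(*v)))
    return sum(sims) / len(sims)


def path_length(traj):
    """Total distance travelled along the trajectory."""
    return sum(math.dist(p, q) for p, q in zip(traj, traj[1:]))


straight = [(0, 0), (1, 0), (2, 0), (3, 0)]
curved = [(0, 0), (1, 0), (1, 1), (0, 1)]  # same number of unit steps

for name, traj in (("straight", straight), ("curved", curved)):
    print(
        name,
        "straightness:", straightness(traj),
        "path length:", path_length(traj),
        "endpoint distance:", math.dist(traj[0], traj[-1]),
    )
```

Both paths take three unit steps, but only on the straight one does the Euclidean distance between start and end equal the path length, which is the property that makes distance a usable planning signal.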

codex app automations: slack pending replies

Review Slack for the current user and update today's daily summary note in `/Users/jasonliu/vault` at `agent/daily-summary-YYYY-MM-DD.md` with a single section titled `## Pending Slack Replies`.

Use Slack search and thread reads across public channels, private channels, DMs, and group DMs to find conversations where the current user is mentioned, directly addressed, or has already participated, and where the latest substantive message is from someone else and the current user has not replied. Focus on recent activity, prioritizing today and the last 36 hours. Read candidate threads before including them. Exclude resolved threads, FYIs that do not need a response, and anything the user already answered later.

Rewrite the `## Pending Slack Replies` section on each run instead of appending duplicates. For each pending item include: who is waiting, channel or DM name, last message time in America/Los_Angeles, a one-line summary of the ask or blocker, and a short snippet. If a stable Slack link is available, include it. If nothing is pending, keep the section and write `- None right now.` Keep the rest of the note unchanged.
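The "rewrite the section on each run, keep the rest unchanged" rule is the part of this prompt that is easiest to get wrong, so here is a minimal sketch of it as plain code. The function names `note_path` and `upsert_section` are hypothetical, and this is only one way an agent might do it, assuming the note is Markdown and the section ends at the next `## ` heading.

```python
import re
from datetime import date
from pathlib import Path

HEADING = "## Pending Slack Replies"


def note_path(vault: Path, day: date) -> Path:
    """Path of the daily note, following agent/daily-summary-YYYY-MM-DD.md."""
    return vault / "agent" / f"daily-summary-{day.isoformat()}.md"


def upsert_section(note: str, items: list[str]) -> str:
    """Replace the Pending Slack Replies section, or append it if missing."""
    body = "\n".join(f"- {item}" for item in items) if items else "- None right now."
    section = f"{HEADING}\n\n{body}\n"
    # The section runs from its heading to the next level-2 heading or end of note.
    pattern = re.compile(
        rf"^{re.escape(HEADING)}[ \t]*\n.*?(?=^## |\Z)",
        re.MULTILINE | re.DOTALL,
    )

    def _replace(match: re.Match) -> str:
        # Keep a blank line before whatever follows the section.
        return section + ("\n" if match.end() < len(note) else "")

    if pattern.search(note):
        return pattern.sub(_replace, note, count=1)
    if not note.strip():
        return section
    return note.rstrip("\n") + "\n\n" + section


note = "# Daily\n\n## Pending Slack Replies\n\n- old item\n\n## Other\n\nkeep me\n"
print(upsert_section(note, []))
```

Running `upsert_section` twice with the same items returns the same text, which is the no-duplicates behavior the prompt asks for.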

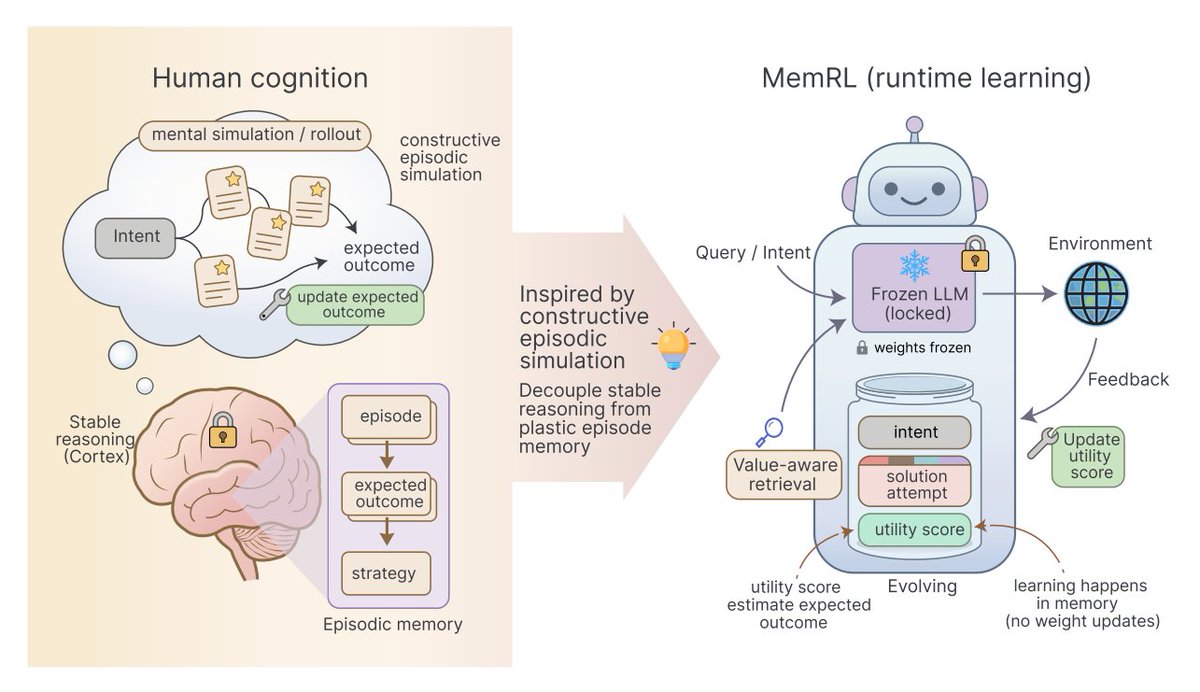

7 emerging memory architectures for AI agents ▪️ Agentic Memory (AgeMem) ▪️ Memex ▪️ MemRL ▪️ UMA (Unified Memory Agent) ▪️ Pancake ▪️ Conditional memory ▪️ Multi-Agent Memory from a Computer Architecture Perspective https://t.co/5X5LxirSEx https://t.co/5Hi0Gn3aA4

Microsoft has released a free, open-source course: GitHub Copilot CLI for Beginners. Includes 8 chapters covering: • A walkthrough of installing Copilot CLI • Using context • Creating custom agents • Working with skills • Connecting MCP servers, and more. Start Learning - https://t.co/IIbauw5L7K

Nvidia ruled the first wave of AI by powering the training of large models. But the next phase may look different. Running AI at scale, known as inference, is now growing much faster than training. That’s where real-world deployment happens. If the center of gravity in AI shifts there, the question becomes: will Nvidia stay as dominant in the next chapter? https://t.co/MdG0zqBUWj @RWhelanWSJ @WSJ

Every foundation model you've ever used has the same bug. It just got fixed.

Since 2015, every deep network has been built the same way: each layer does some computation, adds its result to a running total, and passes it forward. Simple. But there's a problem: by layer 100, the signal from any single layer is buried under the sum of everything else. Each new layer matters less and less. Nobody fixed this because it worked well enough.

Moonshot AI just changed that. Their new method, Attention Residuals (AttnRes), lets each layer look back at all previous layers and choose which ones actually matter right now. Instead of a blind running total, you get selective retrieval.

The analogy: imagine writing an essay where every draft gets merged into one document automatically. By draft 50, your latest edits are invisible. AttnRes lets you keep every draft separate and pull from whichever ones you need.

What this fixes:

1. Deeper layers no longer get drowned out
2. Training becomes more stable across the whole network
3. The model uses its own depth more efficiently

To make it practical at scale, they group layers into blocks and attend over block summaries instead of every single layer. Overhead at inference: less than 2%.

The result: 25% less compute to reach the same performance. Tested on a 48B-parameter model. Holds across sizes.

Residual connections have been invisible plumbing for a decade. Now they're becoming dynamic. The next generation of models won't just pass through their own layers, they'll search them.
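The difference between a running total and selective retrieval can be shown with a toy sketch. This is not Moonshot AI's implementation: the `query` vector, the use of raw layer outputs as both keys and values, and the scaled dot-product scoring are all assumptions made for illustration, and block grouping is left out entirely.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def residual_sum(layer_outputs):
    """Standard residual stream: every earlier output is added with weight 1."""
    dim = len(layer_outputs[0])
    return [sum(out[i] for out in layer_outputs) for i in range(dim)]


def attention_over_layers(query, layer_outputs):
    """Mix earlier layer outputs with softmax weights instead of a plain sum.

    Hypothetical setup: each earlier output serves as both key and value.
    """
    d = len(query)
    scores = [
        sum(q * k for q, k in zip(query, out)) / math.sqrt(d)
        for out in layer_outputs
    ]
    weights = softmax(scores)
    mixed = [sum(w * out[i] for w, out in zip(weights, layer_outputs)) for i in range(d)]
    return mixed, weights


outputs = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]  # toy outputs of three earlier layers
query = [4.0, 0.0]  # hypothetical query from the current layer

print("running total:", residual_sum(outputs))
mixed, weights = attention_over_layers(query, outputs)
print("attention weights:", [round(w, 3) for w in weights])
print("selective mix:", [round(x, 3) for x in mixed])
```

In the running total every layer contributes equally, while the attention weights concentrate on the earlier output that best matches the query, which is the "choose which ones actually matter" behavior the post describes.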