Your curated collection of saved posts and media

Great paper on automating agent skill acquisition.

Your AI agent can now generate videos. PixVerse CLI ships today: JSON output, 6 deterministic exit codes, and full access to PixVerse v5.6, Sora2, Veo 3.1, and Nano Banana from the terminal. Same account. Same credits. No new signup. -> Follow + Reply + RT = 300 Creds (72H ONLY)

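Honestly, Codex is much stronger than Claude Code. It may have something to do with the fact that I write Swift, but it quietly works for a long stretch every time, and it is almost always correct. Claude Code, by contrast, keeps stopping to ask for one permission or another and still doesn't get the job done in one go. It is seriously cutting into my Douyin scrolling time. Codex also has a very cheap legitimate plan, while Claude Code does not.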

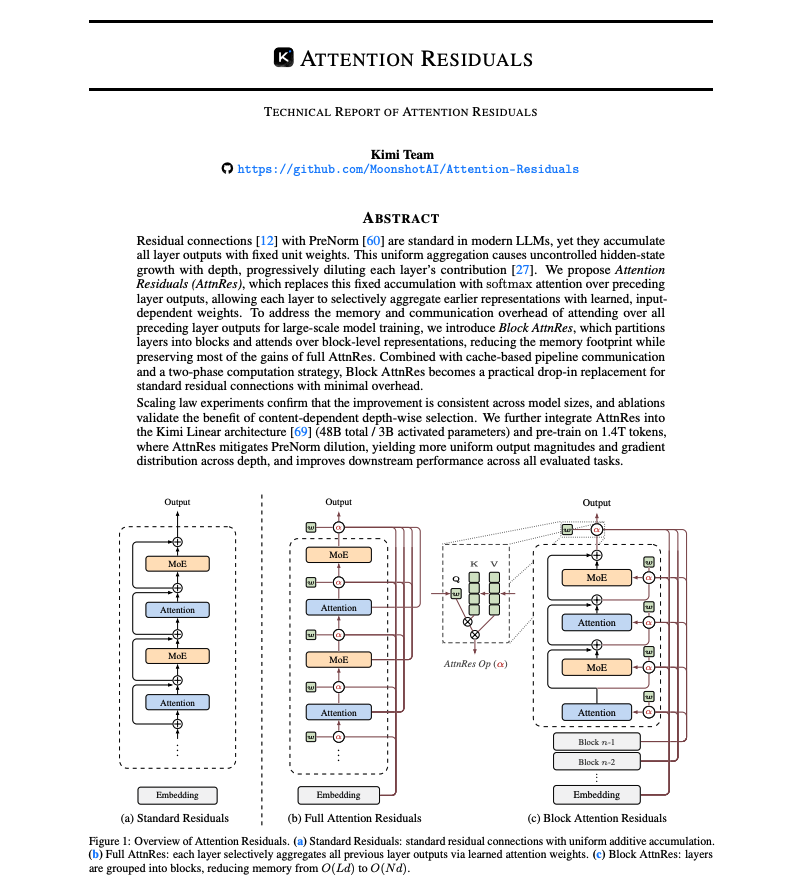

Banger report from the Kimi team: Attention Residuals. Residual connections made deep Transformers trainable. But they also force uncontrolled hidden-state growth with depth. This work proposes a cleaner alternative. It introduces Attention Residuals, which replace fixed residual accumulation with softmax attention over previous layer outputs. Instead of blindly summing everything, each layer selectively retrieves the earlier representations it actually needs. To keep this practical at scale, they add a blockwise version that compresses layers into block summaries, recovering most of the gains with minimal systems overhead. Why does it matter? Residual paths have barely changed across modern LLMs, even though they govern how information moves through depth. This paper shows that making the mixing content-dependent improves scaling laws, matches a baseline trained with 1.25x more compute, boosts GPQA-Diamond by +7.5 and HumanEval by +3.1, while keeping inference overhead under 2%. Paper: https://t.co/04IG6FDiVr Learn to build effective AI agents in our academy: https://t.co/1e8RZKs4uX
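To make the contrast in the post concrete, here is a minimal sketch of "fixed residual accumulation" versus "softmax attention over previous layer outputs". It is not the paper's implementation: the per-layer `query` vector, the scaled dot-product scoring, and the function names are all assumptions chosen for illustration, and the blockwise variant is not shown.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def additive_residual(prev_outputs):
    """Standard residual stream: an unweighted sum of every earlier output."""
    dim = len(prev_outputs[0])
    return [sum(v[i] for v in prev_outputs) for i in range(dim)]


def attention_residual(prev_outputs, query):
    """Content-dependent mix of earlier outputs.

    Assumption: each layer owns a query vector and scores earlier outputs
    by scaled dot product; the paper's exact scoring may differ.
    """
    scale = 1.0 / math.sqrt(len(query))
    scores = [scale * sum(q * x for q, x in zip(query, v)) for v in prev_outputs]
    weights = softmax(scores)
    dim = len(query)
    mixed = [sum(w * v[i] for w, v in zip(weights, prev_outputs)) for i in range(dim)]
    return mixed, weights


# Three earlier layer outputs (toy 2-dimensional hidden states).
prev_outputs = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
query = [2.0, 0.0]  # hypothetical query that favors the first coordinate

summed = additive_residual(prev_outputs)
mixed, weights = attention_residual(prev_outputs, query)
```

Because the weights form a convex combination, the mixed state stays within the range of the earlier outputs, whereas the plain sum grows as more layers are added. That is one way to read the post's point about uncontrolled hidden-state growth.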

GitHub already has millions of repos full of procedural knowledge. The work introduces a framework for extracting agent skills directly from open-source repos. The pipeline analyzes repo structure, identifies procedural knowledge through dense retrieval, and translates it into standardized SKILL.md format with a progressive disclosure architecture so agents can discover thousands of skills without context window degradation. Manually authoring agent skills doesn't scale. Automated extraction achieved 40% gains in knowledge transfer efficiency while matching human-crafted quality. Still early on this, and there is more work needed for self-discovered and self-improving skills to work well at scale. As the agent skill ecosystem grows, mining existing repos could unlock scalable capability acquisition without having to retrain models. Paper: https://t.co/MAt8Goetcr Learn to build effective AI agents in our academy: https://t.co/LRnpZN7L4c
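The "progressive disclosure" idea mentioned above can be sketched roughly as follows: the agent always sees a short catalog of skill names and descriptions, and loads a skill's full body only when it selects one. This is an illustrative sketch, not the paper's pipeline. The front-matter layout (`name`, `description` between `---` lines) and the `SkillIndex` class are assumptions, and the example skill is made up.

```python
from dataclasses import dataclass


@dataclass
class SkillEntry:
    name: str
    description: str
    body: str  # full instructions, surfaced only on demand


def parse_skill_md(text):
    """Split a SKILL.md-style document into front-matter fields and body.

    Assumes simple `key: value` front matter delimited by `---` lines.
    """
    lines = text.strip().splitlines()
    if not lines or lines[0].strip() != "---":
        raise ValueError("missing front matter")
    end = next(i for i in range(1, len(lines)) if lines[i].strip() == "---")
    meta = {}
    for line in lines[1:end]:
        key, _, value = line.partition(":")
        meta[key.strip()] = value.strip()
    body = "\n".join(lines[end + 1:]).strip()
    return SkillEntry(meta["name"], meta["description"], body)


class SkillIndex:
    """Hypothetical two-level store: cheap catalog first, full body later."""

    def __init__(self):
        self._skills = {}

    def add(self, text):
        skill = parse_skill_md(text)
        self._skills[skill.name] = skill

    def catalog(self):
        """Level 1: one short line per skill, always in the agent's context."""
        return [f"{s.name}: {s.description}" for s in self._skills.values()]

    def load(self, name):
        """Level 2: the full body, fetched only for the selected skill."""
        return self._skills[name].body


# Made-up skill used only to exercise the sketch.
EXAMPLE = """---
name: run-tests
description: Run the project's unit tests and summarize failures.
---
1. Install dependencies.
2. Run the test command from the repo README.
"""

index = SkillIndex()
index.add(EXAMPLE)
```

With this split, the context cost of the catalog grows with the number of skills times one short line, not with the total length of every skill body.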

🎉EgoEdit @Snapchat has been accepted to CVPR 2026! 🏆👻 We are bringing high-quality, real-time editing to egocentric videos. Our massive 100k video dataset and benchmark are ALREADY PUBLIC! 🔓🚀 🏠 Project Page: https://t.co/cEUZRxdLDf 🤗 Dataset: https://t.co/qCFRTY8cYG https://t.co/VuXQg2UfqC