@omarsar0

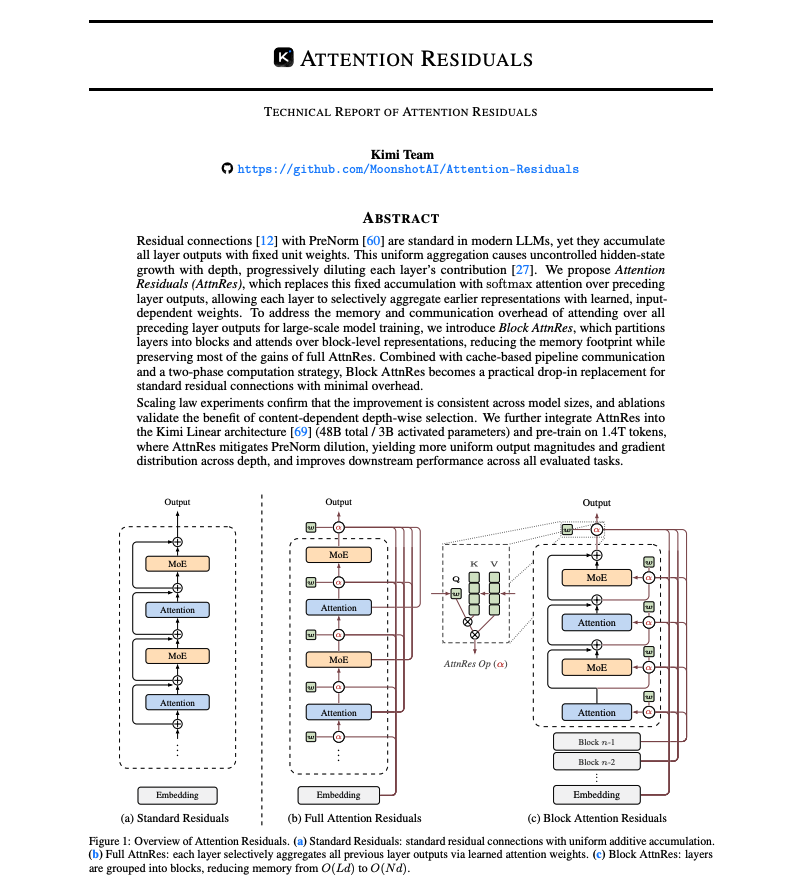

Banger report from the Kimi team: Attention Residuals Residual connections made deep Transformers trainable. But they also force uncontrolled hidden-state growth with depth. This work proposes a cleaner alternative. It introduces Attention Residuals, which replace fixed residual accumulation with softmax attention over previous layer outputs. Instead of blindly summing everything, each layer selectively retrieves the earlier representations it actually needs. To keep this practical at scale, they add a blockwise version that compresses layers into block summaries, recovering most of the gains with minimal systems overhead. Why does it matter? Residual paths have barely changed across modern LLMs, even though they govern how information moves through depth. This paper shows that making the mixing content-dependent improves scaling laws, matches a baseline trained with 1.25x more compute, boosts GPQA-Diamond by +7.5 and HumanEval by +3.1, while keeping inference overhead under 2%. Paper: https://t.co/04IG6FDiVr Learn to build effective AI agents in our academy: https://t.co/1e8RZKs4uX