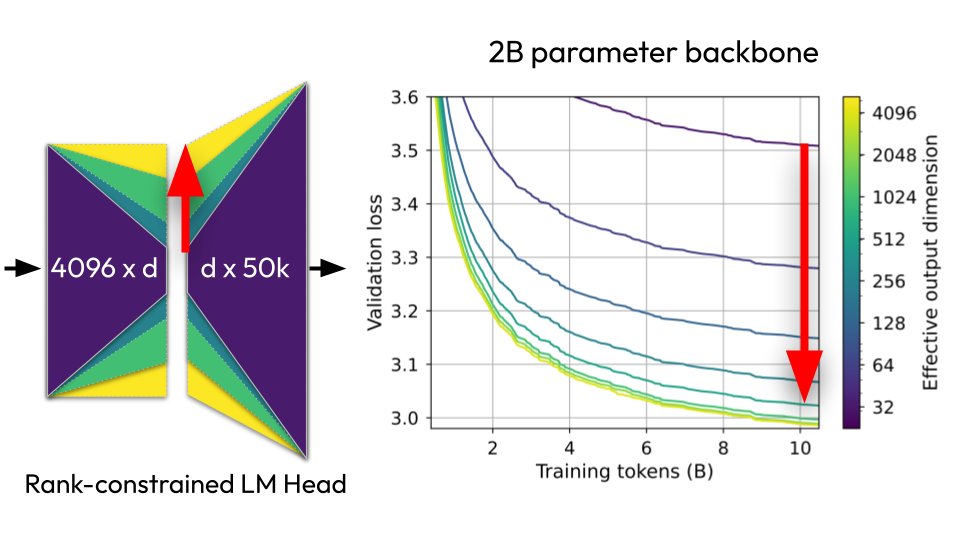

🧵 New paper: "Lost in Backpropagation: The LM Head is a Gradient Bottleneck". The output layer of LLMs destroys 95-99% of your training signal during backpropagation, and this significantly slows down pretraining 👇 https://t.co/lnbGfesIFA
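
A rough way to see why such a bottleneck is plausible, as a sketch under assumptions rather than the paper's own derivation. The symbols here are introduced for illustration only: $h$ is the final hidden state of width $d$, $W$ is the LM head weight over a vocabulary of size $V$, $p$ is the softmax output, and $y$ is the one-hot target under cross-entropy loss.

$$
z = W h,\quad W \in \mathbb{R}^{V \times d},\qquad
\frac{\partial \mathcal{L}}{\partial z} = p - y \in \mathbb{R}^{V},\qquad
\frac{\partial \mathcal{L}}{\partial h} = W^{\top}(p - y) \in \mathbb{R}^{d}
$$

Because $\operatorname{rank}(W) \le d$, any component of $p - y$ that lies in the null space of $W^{\top}$ (dimension at least $V - d$) never reaches the rest of the network. With hypothetical sizes $d = 4096$ and $V = 128000$, at most $d / V \approx 3.2\%$ of the directions in logit space can pass through. How the paper actually measures the 95-99% figure may differ from this dimension count.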

I'm pretty confident this can be leveraged to graft a modified backwards pass onto the LM head of a pretrained model to improve the validation loss over standard LM head bwd. More to come soon.

Do you want a 3D character interacting with an object/pet/another person, following a desired action? Presenting Hoi3DGen: Generating High-Quality Human-Object-Interactions in 3D. Project: https://t.co/EE87KSjQCX Code: https://t.co/ddpLjciTWC https://t.co/QPTyXw45kk

All AI posters at GTC. This is not for human consumption. This video is for AI to watch. Click the grok button and talk to it about what it learned by seeing all the AI posters (highly technical) presented at @NVIDIAGTC tonight. Thanks NVIDIA for the badge and access. https://t.co/mKqIv1f6Dt

Wow. Grok watched this video and made a complete list of everything it saw: https://t.co/fqC1fuwhwX Do you have any idea how cool this is? It read every poster.

@Yulun_Du @ilyasut SGD is a ResNet too (the blocks of it are fwd+bwd), the residual stream is the weights so... 🤔 We're not taking the Attention is All You Need part literally enough? :D
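
The analogy can be written out side by side. This is only a restatement of the two standard update rules, with $f_\ell$, $\eta$, and $\mathcal{L}_t$ as generic placeholder symbols:

$$
\text{ResNet block: } x_{\ell+1} = x_\ell + f_\ell(x_\ell)
\qquad\qquad
\text{SGD step: } w_{t+1} = w_t + \big(-\eta\, \nabla_w \mathcal{L}_t(w_t)\big)
$$

In this reading, the weights $w_t$ play the role of the residual stream, and each forward plus backward pass on batch $t$ plays the role of the block that computes the additive update.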

We mostly solved multi-node coordination decades ago in distributed computing. Turns out LLM teams face some of the same coordination problems today. Here is a really good read for anyone designing multi-agent systems. It applies distributed systems theory to LLM teams and finds the same O(n²) communication bottlenecks, straggler delays, and consistency conflicts showing up directly. Decentralized teams wasted more rounds communicating without making progress, but they also recovered faster when individual agents stalled. How does this relate to distributed systems? The work attempts to evaluate LLM teams as distributed systems. It lays out a principled framework instead of trial and error for deciding when teams help, how many agents to use, and what coordination structure fits the task. Designing LLM teams without distributed systems principles is like building a cluster without understanding consensus protocols. Paper: https://t.co/klHzUFJL1R
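
The O(n²) claim is easy to make concrete by counting communication channels. The sketch below is not from the paper; the two topologies and the function names are illustrative assumptions. It compares a fully decentralized team, where every agent can message every other agent, with a hub layout where all agents talk only to one coordinator.

```python
def pairwise_links(n: int) -> int:
    """Channels when every agent can talk to every other agent: n(n-1)/2."""
    return n * (n - 1) // 2


def hub_links(n: int) -> int:
    """Channels when n agents (coordinator included) form a star: n-1."""
    return max(n - 1, 0)


if __name__ == "__main__":
    # Hypothetical team sizes, chosen only to show the growth rates.
    for n in (2, 4, 8, 16):
        print(f"agents={n:2d}  decentralized={pairwise_links(n):3d}  hub={hub_links(n):2d}")
```

The decentralized count grows quadratically while the hub count grows linearly, which is one reason more agents can mean more rounds spent communicating. The hub layout has its own cost: the coordinator becomes a single point of failure, which fits the observation that decentralized teams recovered faster when individual agents stalled.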

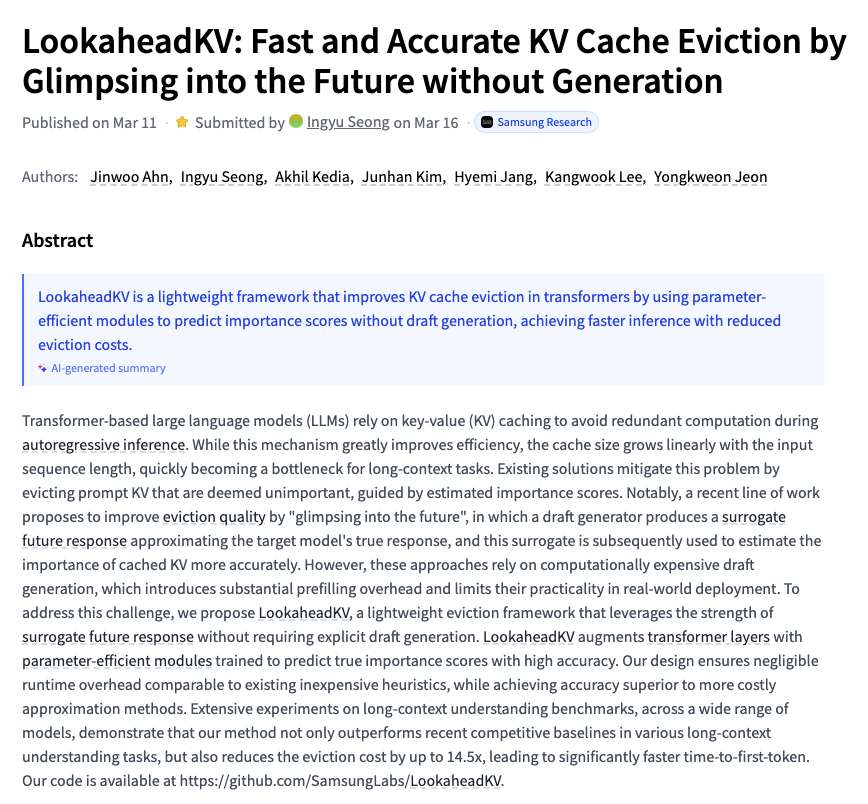

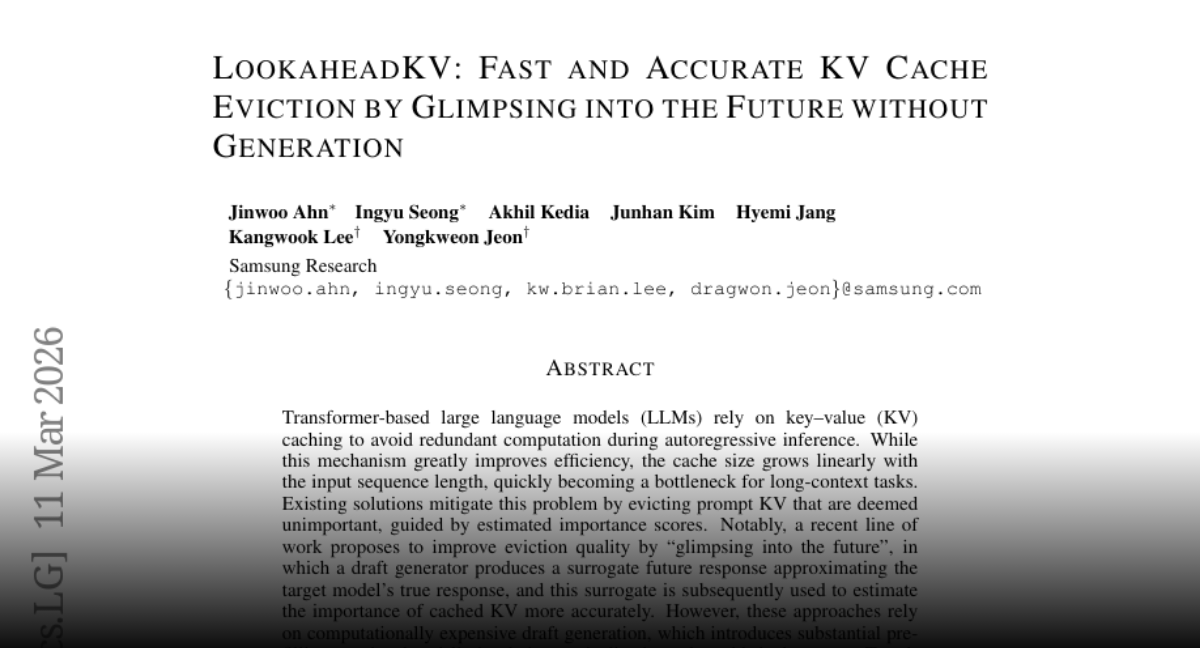

LookaheadKV: Fast and Accurate KV Cache Eviction by Glimpsing into the Future without Generation. Paper: https://t.co/j8lLnqUARR https://t.co/URKtNQkFKx

LMEB: Long-horizon Memory Embedding Benchmark. Paper: https://t.co/fT3sEwCRgd https://t.co/lCyEY9tadB

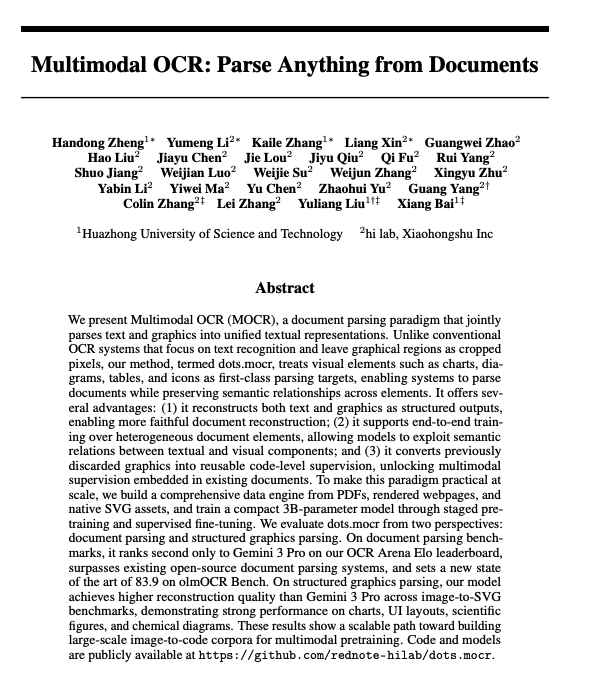

Multimodal OCR: Parse Anything from Documents. On document parsing benchmarks, dots.mocr ranks second only to Gemini 3 Pro on our OCR Arena Elo leaderboard, surpasses existing open-source document parsing systems, and sets a new state of the art of 83.9 on olmOCR Bench. On structured graphics parsing, dots.mocr achieves higher reconstruction quality than Gemini 3 Pro across image-to-SVG benchmarks, demonstrating strong performance on charts, UI layouts, scientific figures, and chemical diagrams. Paper: https://t.co/d3MkBHMuWc

@ChristosTzamos Wait this is so awesome!! Both 1) the C compiler to LLM weights and 2) the logarithmic complexity hard-max attention and its potential generalizations. Inspiring!

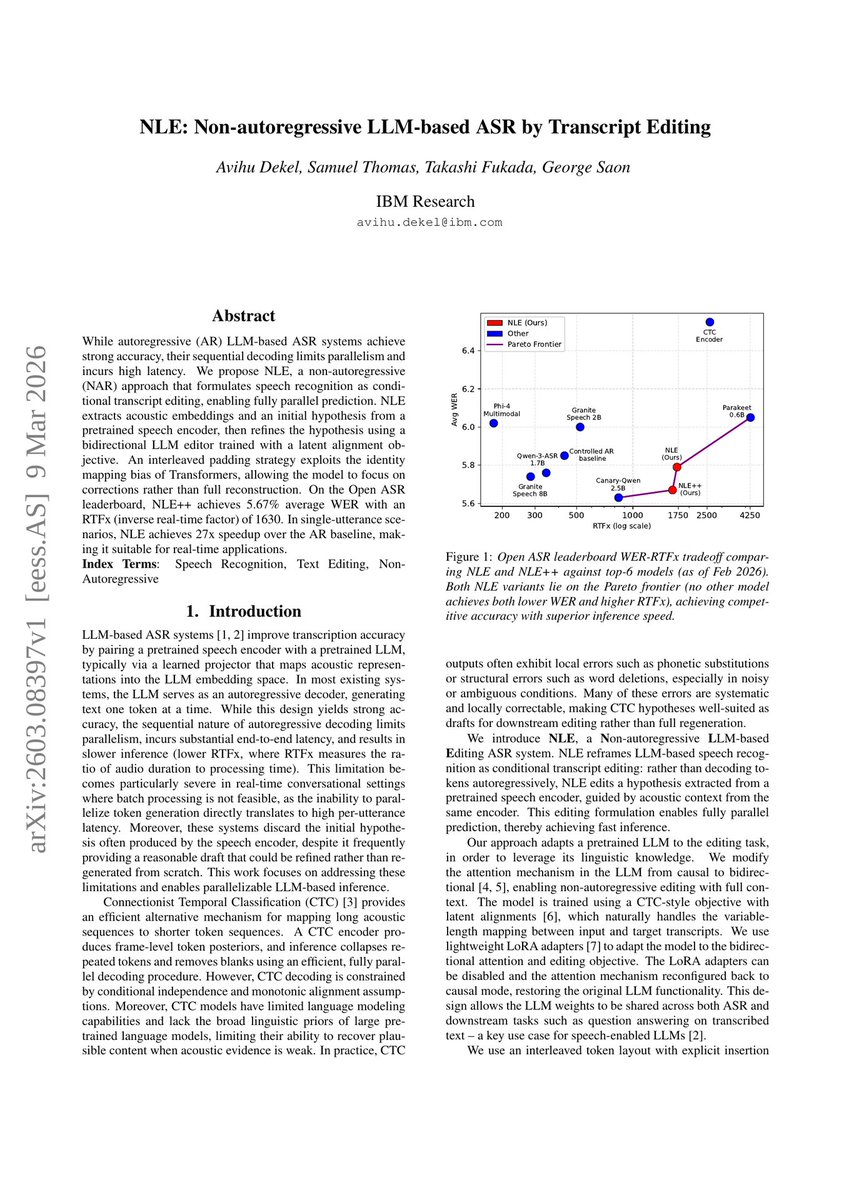

IBM released NLE: Non-autoregressive LLM-based ASR by Transcript Editing. A non-autoregressive approach that formulates speech recognition as conditional transcript editing, achieving 27x speedup over autoregressive baselines with 5.67% WER. https://t.co/LtjPtUxf5a
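
For readers unfamiliar with the metric: word error rate (WER) is the word-level edit distance between the reference transcript and the system output, divided by the number of reference words, so 5.67% WER means about 5.67 errors per 100 reference words. Below is a minimal sketch of the standard definition. It is not IBM's evaluation code, and the function name and example sentences are made up for illustration.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / reference word count."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference prefix
    # processed so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # deletion: reference word missing from output
                curr[j - 1] + 1,     # insertion: extra word in output
                prev[j - 1] + cost,  # match or substitution
            ))
        prev = curr
    return prev[-1] / len(ref)


if __name__ == "__main__":
    # Hypothetical transcripts: one dropped word out of six reference words.
    print(word_error_rate("the cat sat on the mat", "the cat sat on mat"))
```

Real ASR evaluations usually normalize text (casing, punctuation, number formats) before scoring, and that step is omitted here.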

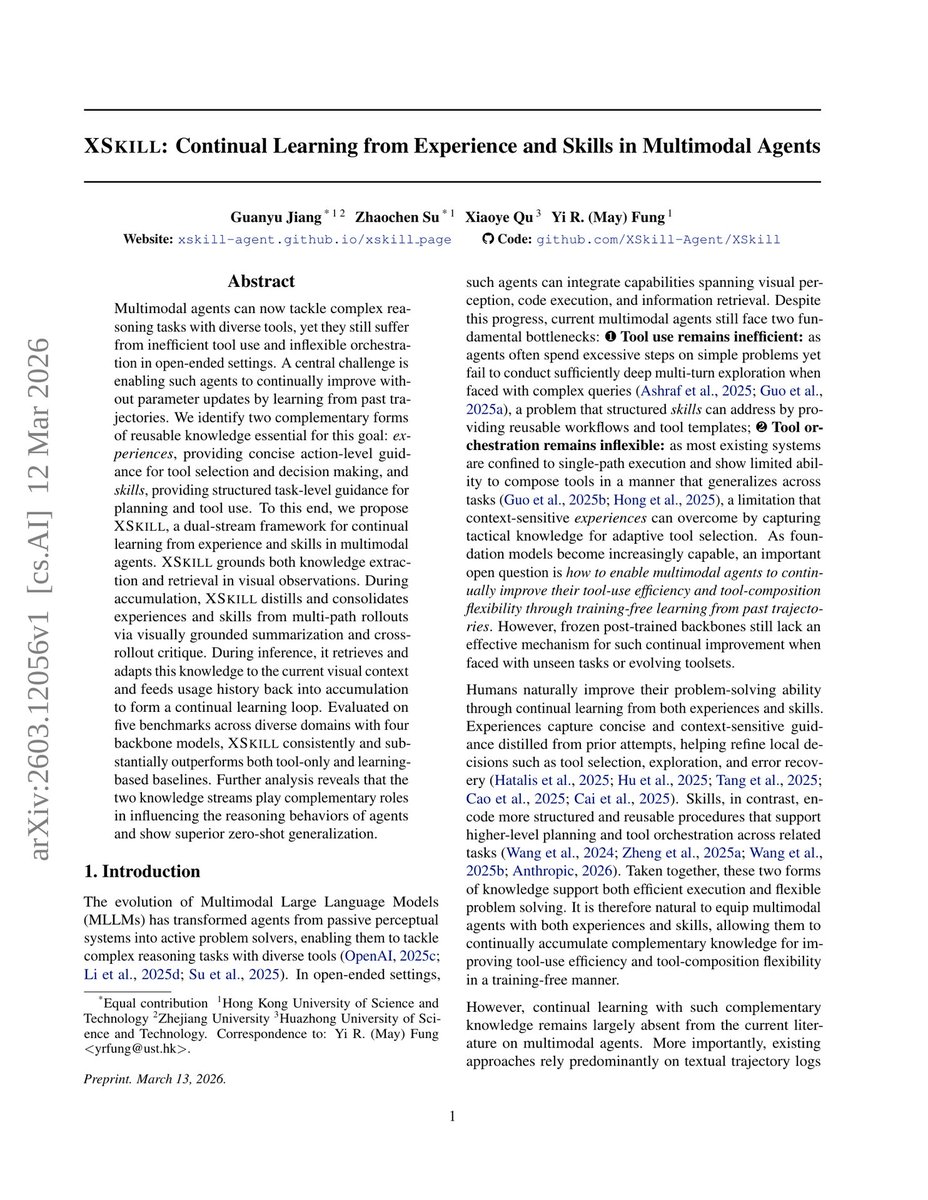

XSkill: Continual learning from experience and skills. A dual-stream framework enabling multimodal agents to accumulate and reuse knowledge without parameter updates. Grounded in visual context, it distills structured workflows and tactical insights to improve reasoning and tool use.