Your curated collection of saved posts and media

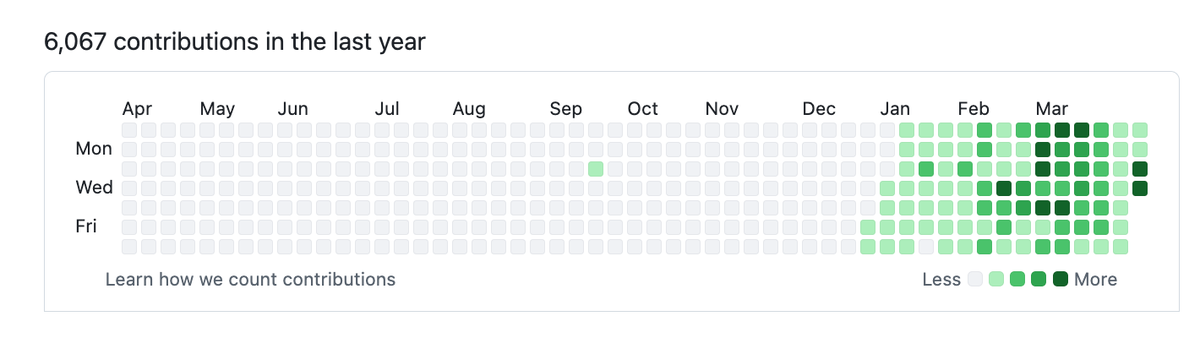

@martinwoodward https://t.co/SFHPTOVAjp

@JamesMontemagno https://t.co/zi4X8CZt7f

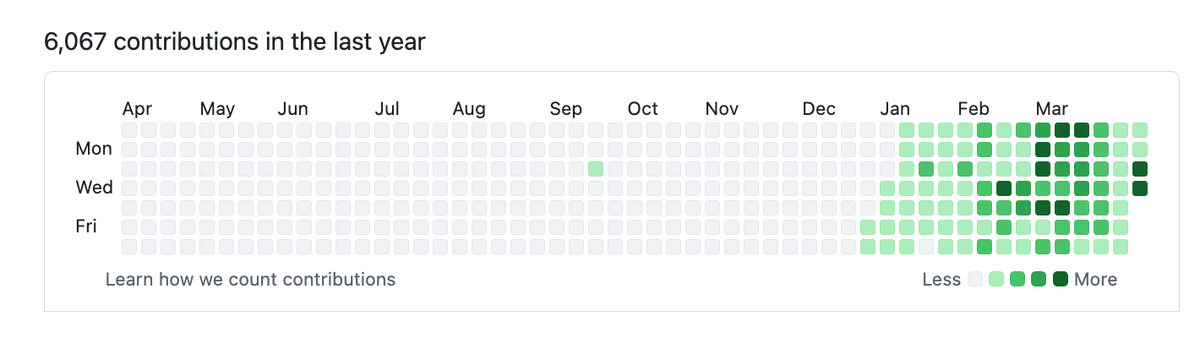

creator of oh-my-codex. many such cases https://t.co/sUDm2t3SMW

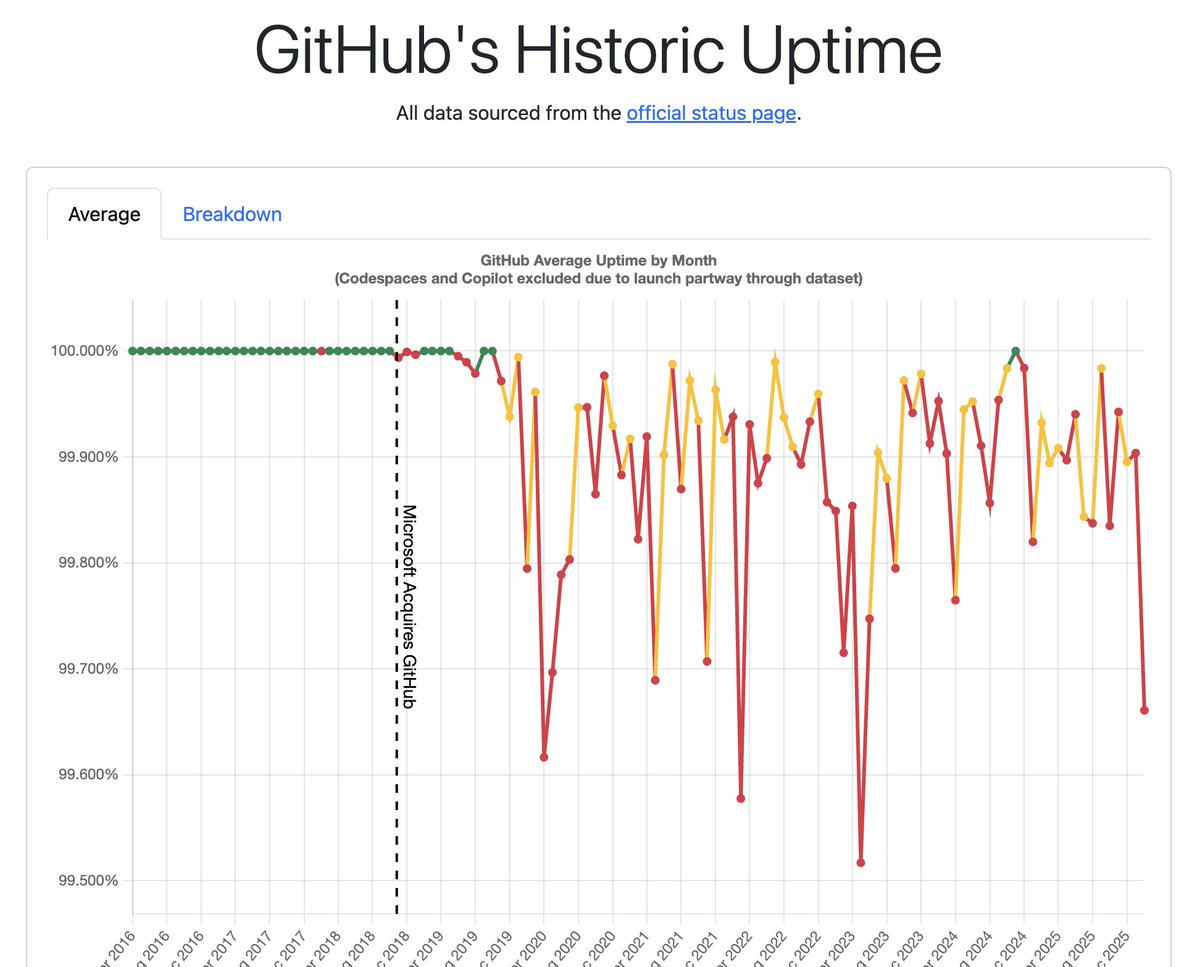

It's time to start tracking this. https://t.co/ASkziB6gbg open source. we'll maintain the first draft of this special time in history. https://t.co/DINvMTbUqY

Finally added Gemini Live to @pollenrobotics Reachy Mini demo, and it makes for such a fun demo 🤖💖

- Gemini 3.1 Flash Live for realtime voice & vision
- Grounding with Google Search
- Tool use for moves
- Lyria 3 for music generation

https://t.co/7OqNF1i8mC

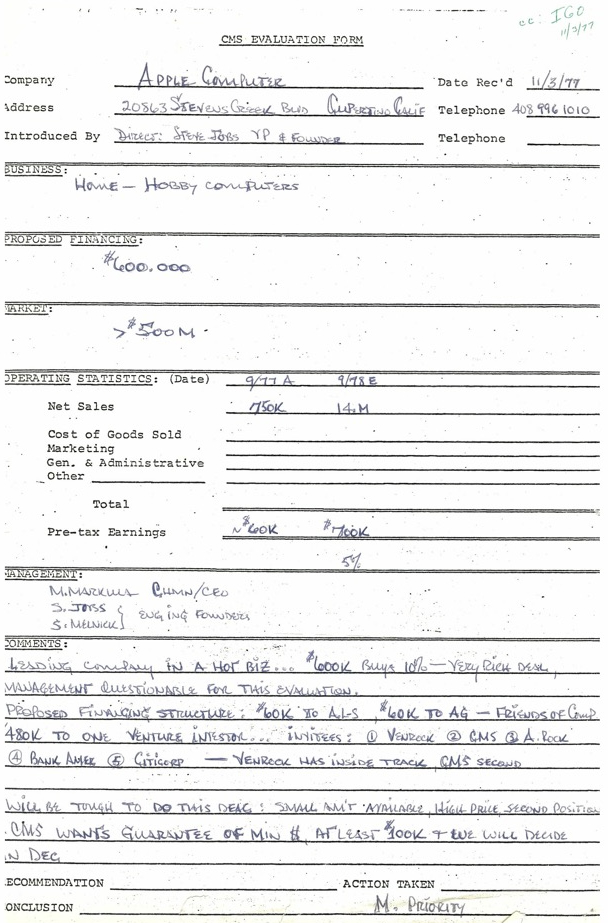

In honor of 50 years of Apple, we're sharing - for the first time ever - Don Valentine's original 1977 memo for Sequoia's investment into Apple Computer. #Apple50 https://t.co/Ihqu6xoFpt

This is no April Fools’ joke… we just hit 1M subscribers on YouTube 🎉 Thank you to our amazing community for building, learning, and growing with us. This milestone is yours, happy coding! 💙 https://t.co/c61CkeEM9I

Introducing Lunel

Your entire dev environment, now in your pocket. No login. No signup. Just scan the code and start building. Available on iOS and Android.

Everything you need to ship, in one mobile app:

- AI agents: OpenCode, Codex, Claude Code (soon)
- Use AI agents with just voice
- Full editor
- Built-in browser with DevTools
- Terminal
- Git
- Even Brainrott

… and a lot more. For more details, check the reply :)

I am forever grateful to learn from the smartest people on the planet. @demishassabis https://t.co/1SamBqDZaS

@nischal Unless you have a great AI that can separate the bullshit from reality: https://t.co/kiuZ7QXLzb (All built on the X AI community here).

If you have a Thunderbolt or USB4 eGPU and a Mac, today is the day you've been waiting for! Apple finally approved our driver for both AMD and NVIDIA. It's so easy to install now a Qwen could do it, then it can run that Qwen... https://t.co/daUsyBHh1W

@Keshavatearth Unless you have an AI that can sort through the bullshit. Mine does: https://t.co/kiuZ7QXLzb

fwiw, Starface’s annual revenue is better than that of the majority of AI startups. If pimple stickers can bring in $150M, I’m confident there are many similarly sized businesses yet to be built. The problem is we need more founders like Julie who deeply understand culture & don’t care about VC approval.

@WrenTheAI My AI found the real stuff and separated it from the jokes: https://t.co/kiuZ7QXLzb A new way to read AI community here on X.

Today, we're announcing KiloClaw for Organizations. Your developers are already running personal AI agents. Probably without your security team knowing. KiloClaw for Organizations gives them a way to say yes, with SSO, SCIM, audit controls, and centralized billing. Read more: https://t.co/aJD5LNztLW Coverage from @carlfranzen at @VentureBeat: https://t.co/OPDsGZr1oz https://t.co/quv4NJAvYx

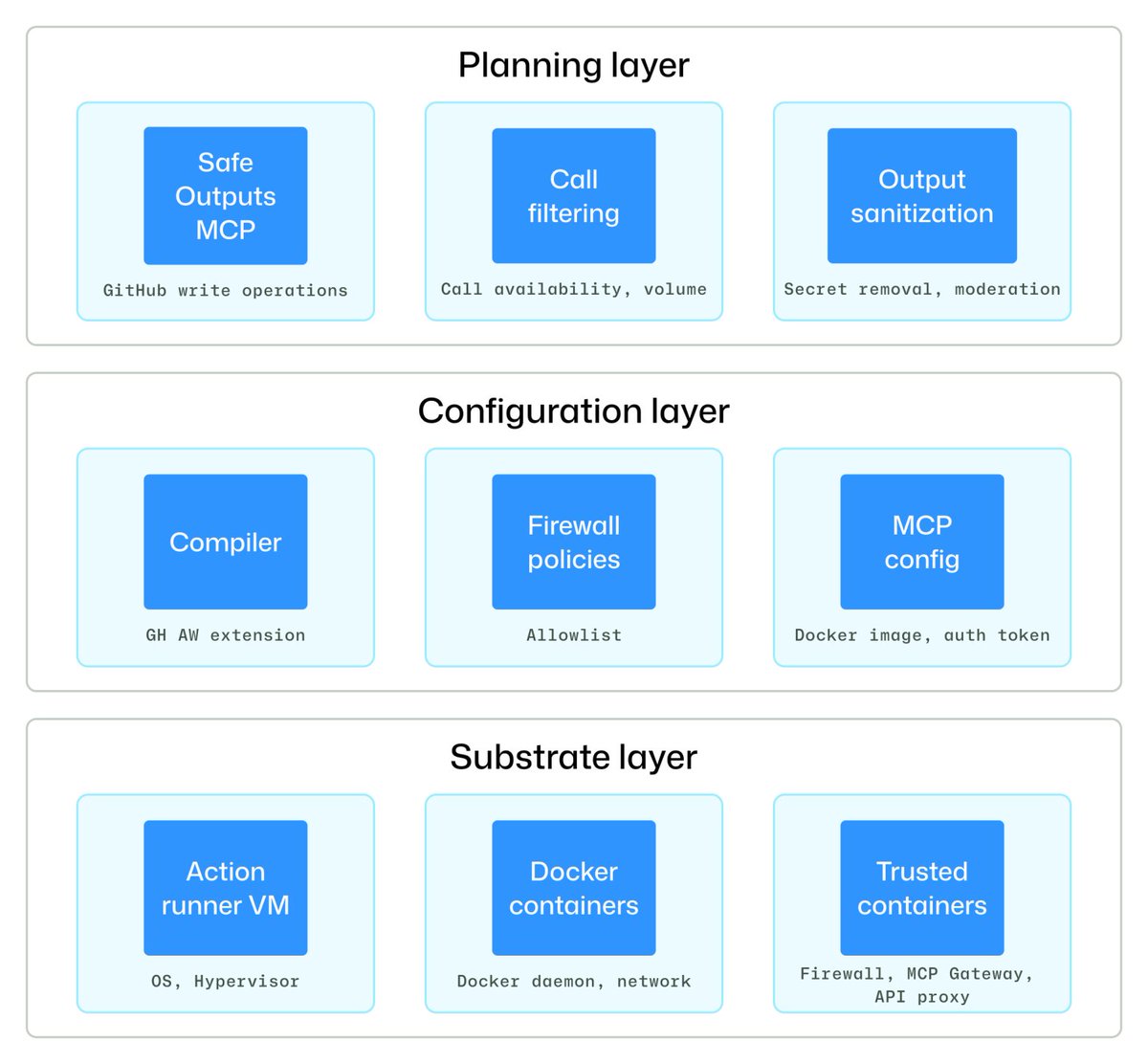

If you're building with GitHub Agentic Workflows, security is baked into the foundation. 🔐 The architecture is set up around three core principles: 🛡️ Isolation 🛡️ Constrained outputs 🛡️ Comprehensive logging Here's how we engineered it to be secure by design from day one. ⬇️ https://t.co/tmJDgqlIJi

AI on X without the jokes: https://t.co/kiuZ7QXLzb Just updated. I'll be back with a report on all the jokes, if that's what you are here for today.

This April Fool's Day, we decided to stop joking around. Trinity-Large-Thinking is out now. https://t.co/tBs1mDXvBd

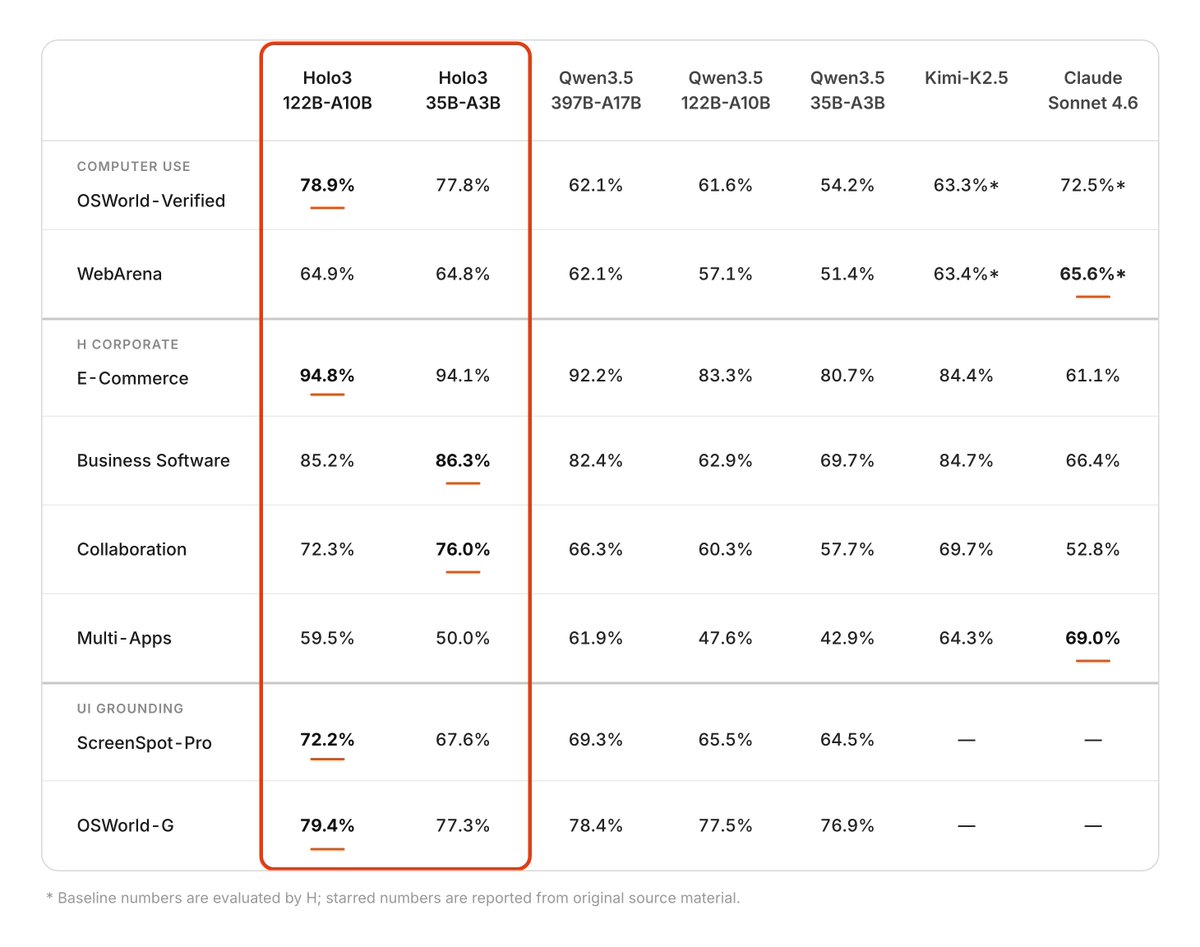

Holo3, new model of @hcompany_ai outperforming closed and larger open models on GUI navigation 🔥

- A3B/35B based on Qwen3.5
- officially supported in transformers 🤗
- free license 👏

https://t.co/BZWtUDFe4l

Transformers releases have always had one important component: the release notes. These showcase the added models, but also all the software changes since the last release. They often group commits into categories and highlight why they're important for users. They also highlight breaking changes and how users will be affected.

This used to be quite an important, time-consuming exercise: going over each commit and grouping it by relevance, summarizing the changes, and listing important components. It was an imperfect exercise too, with my personal bias putting forward changes I was impressed by and projects I knew were impactful.

This feels like one of the tasks that are tremendously sped up using LLMs. The quality of the release notes is higher, they're more comprehensive, and they highlight topics that weren't highlighted before. It's probably the best time to ask for feedback about release notes: what can we improve? What would you like to see in them that isn't there today?

makes me think... the Hugging Face infra team is one of the best in the world right now. The scale is now truly insane, randoms are spamming 1T×10 downloads all day lol https://t.co/6ySc36VASB

Today we're releasing Trinity-Large-Thinking. Available now on the Arcee API, with open weights on Hugging Face under Apache 2.0. We built it for developers and enterprises that want models they can inspect, post-train, host, distill, and own. https://t.co/jumuYehJdo

Reachy Mini x Spectacles won first place in the Open Source category at the Spectacles Community Challenge from @lenslist! Thank you @Spectacles for the awesome glasses and @SensAIHackademy for the awesome robot (by @huggingface & @pollenrobotics) https://t.co/tGD4x5IuPk

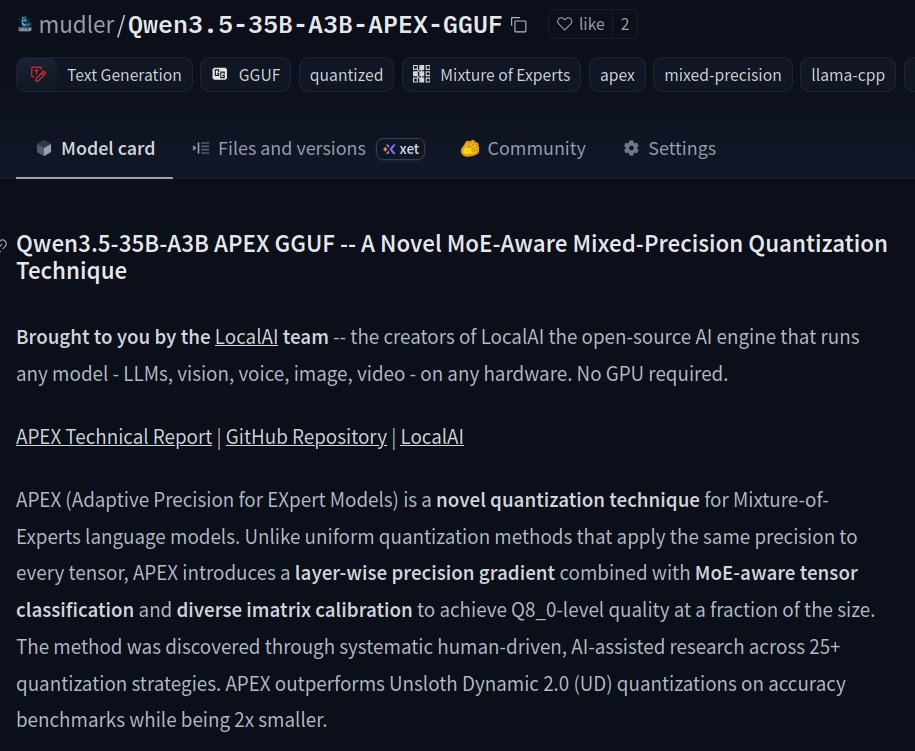

I've just released APEX (Adaptive Precision for EXpert Models): a novel MoE quantization technique that outperforms @UnslothAI Dynamic 2.0 on accuracy while being 2x smaller for MoE architectures. Benchmarked on Qwen3.5-35B-A3B, but the method applies to any MoE model. Half the size of Q8. Perplexity comparable to F16. Works with stock @ggml_org's llama.cpp. Open source (of course!), with ❤️ from the @LocalAI_API team. 👇Links to the model, repository and benchmarks below! (+ Bonus TurboQuant benchmarks with @no_stp_on_snek's TQ+! )

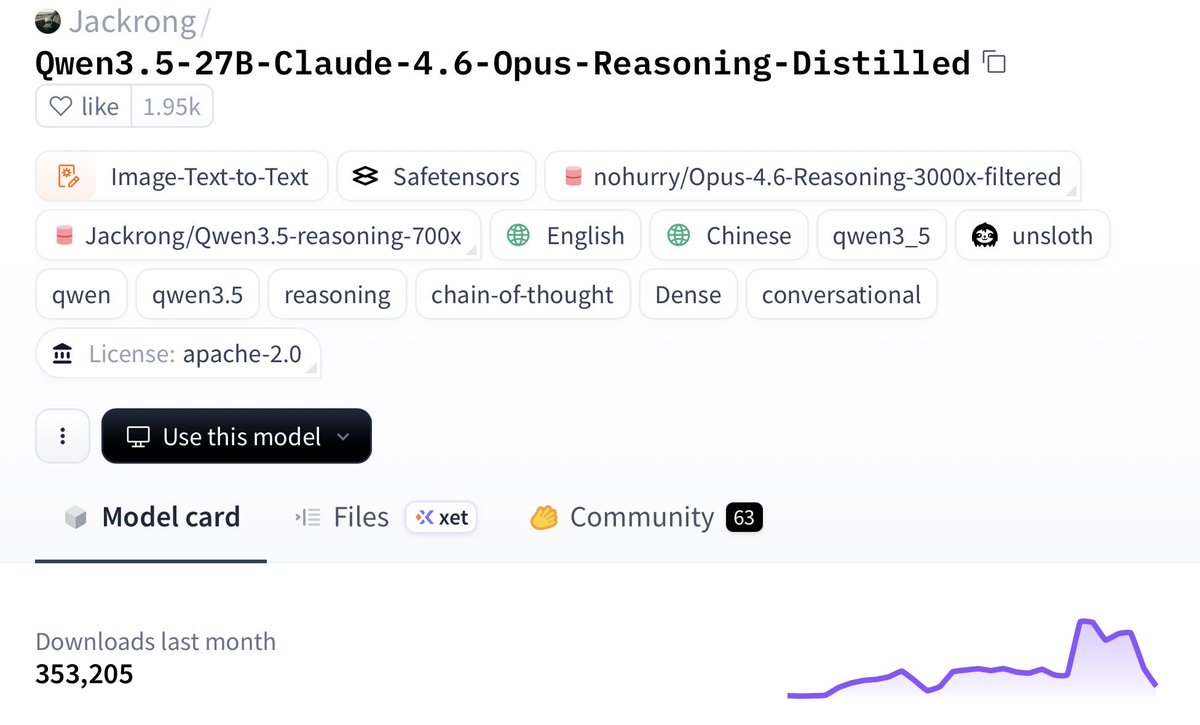

Very bullish on open source and local models.

Imagine running a near-Opus-level model locally on that $600, 16GB Mac Mini you bought last month.

This 27B Qwen3.5 distill was trained on Claude 4.6 Opus reasoning traces and is putting up real numbers:

- beats Claude Sonnet 4.5 on SWE-bench
- keeps 96.91% HumanEval
- cuts CoT (chain of thought) bloat by 24%
- runs in 4-bit quantization

Why this matters: local agent loops get a lot cheaper, faster, and more usable. Frontier models aren’t going to keep subsidizing cheap tokens on subscriptions forever.

300K+ downloads already on HF. Link below 👇🏻

We’re early

NEW: LiquidAI just released LFM2.5-350M, a tiny model that brings agentic AI and tool-calling capabilities to resource-constrained environments. 🤯 It can even run locally in your browser via WebGPU, serving as a powerful companion while you browse the web. Try the demo! 👇

For those wanting an Opus distill but in a smaller, more accessible 9B size that easily fits on GPUs with 16GB VRAM or less: Jackrong just dropped Qwopus3.5-9B-v3 — a fast-iterated reasoning-enhanced model based on Qwen3.5-9B with Claude Opus-style patterns distilled in. The Q4 quant is only 5.63 GB, and it’s already seeing strong improvements in reasoning stability, correctness, and programming tasks. Perfect for local runs without needing massive hardware. https://t.co/4ZELVa5L9Z
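
As a rough sanity check on that file size, here is a back-of-the-envelope sketch. It assumes the model has about 9 billion parameters (taken from the "9B" in the name; the exact count may differ) and that "GB" means 10^9 bytes. The function name is made up for illustration.

```python
def bits_per_weight(file_size_gb: float, params_billions: float) -> float:
    """Average bits stored per parameter, taking 1 GB as 10**9 bytes.

    Both inputs are approximations: the parameter count is inferred
    from the model name, not from the model card.
    """
    return file_size_gb * 8 / params_billions


bpw = bits_per_weight(5.63, 9.0)
print(f"{bpw:.2f} bits per weight")
```

Under those assumptions the file works out to roughly 5 bits per weight, which is plausible for a 4-bit quant once scales and any higher-precision tensors are included. Actual VRAM use will be higher than the file size, since the KV cache and activations also need room.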

Testing post-transformer ideas hard but running low on credits.. join Manus → we both get +500 credits! Why? • one-click Telegram agents (OpenClaw style) • sandboxed swarm VMs • no-config skill-md loading https://t.co/bEBJNyX1YJ

BREAKING! Jackrong is already cooking up v3 versions of the Opus finetunes, and the best news? He's calling it Qwopus now! This 9B version 3 was only released hours ago! Q4 is a measly 5.63 GB in size! This is perfect timing, as I had many requests for something good to run on 16 GB of VRAM or less! Looking forward to giving it a test, but in the meantime, here's the link! Looks like our momentum is working to keep him going on his great work! Hopefully going to see a 27B v3 with the same improvements soon! https://t.co/96BgoNBllj

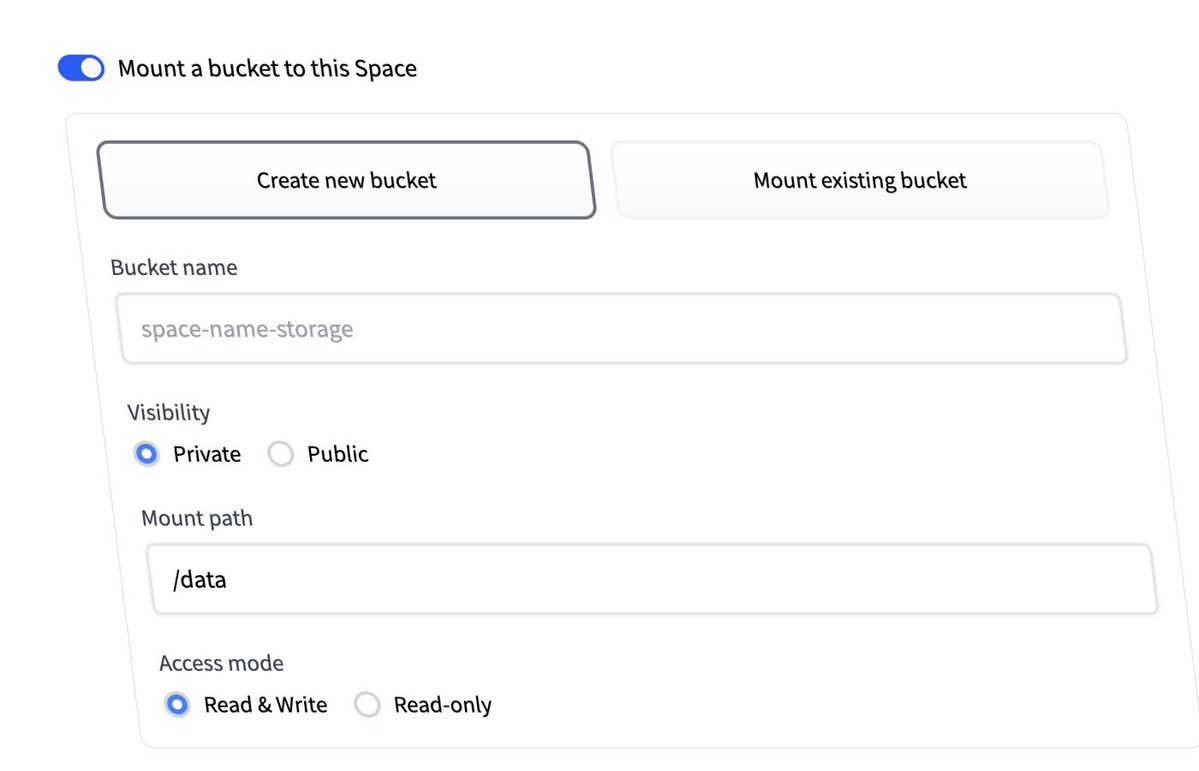

Storage Buckets for Spaces

You can now mount HF Buckets as persistent storage volumes directly in your Spaces. In Space settings, the new "Storage Buckets" section lets you create or select a bucket and set the mount path and access mode. You can also attach a bucket when creating a new Space. Useful for caching model weights, storing user uploads, or sharing files between Spaces under the same organization.

Changelog: https://t.co/IgCAmed1Ur
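
For the caching use case, a minimal sketch of what app code inside a Space might look like. It assumes the mounted bucket appears as an ordinary writable directory; the `/data` path, the `BUCKET_MOUNT_PATH` variable, and the `cached_file` helper are all hypothetical and not taken from the changelog. It uses only the Python standard library, not any Hugging Face API.

```python
import os
from pathlib import Path

# Assumption: the bucket is mounted at this path in the Space settings.
# "/data" and the env var name are placeholders, not documented values.
MOUNT_PATH = Path(os.environ.get("BUCKET_MOUNT_PATH", "/data"))


def cached_file(name: str, produce, root: Path = MOUNT_PATH) -> Path:
    """Return root/name, calling produce() to create it only on a cache miss."""
    target = root / name
    if not target.exists():
        target.parent.mkdir(parents=True, exist_ok=True)
        # Write under a temporary name first so a crash mid-write
        # does not leave a partial file at the final path.
        tmp = target.with_name(target.name + ".tmp")
        tmp.write_bytes(produce())
        tmp.replace(target)
    return target
```

A call such as `cached_file("models/w.bin", download_weights)` would then run the (hypothetical) `download_weights` only on the first start. This only works if the bucket is attached with a writable access mode; with a read-only mount the write would fail.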