@mudler_it

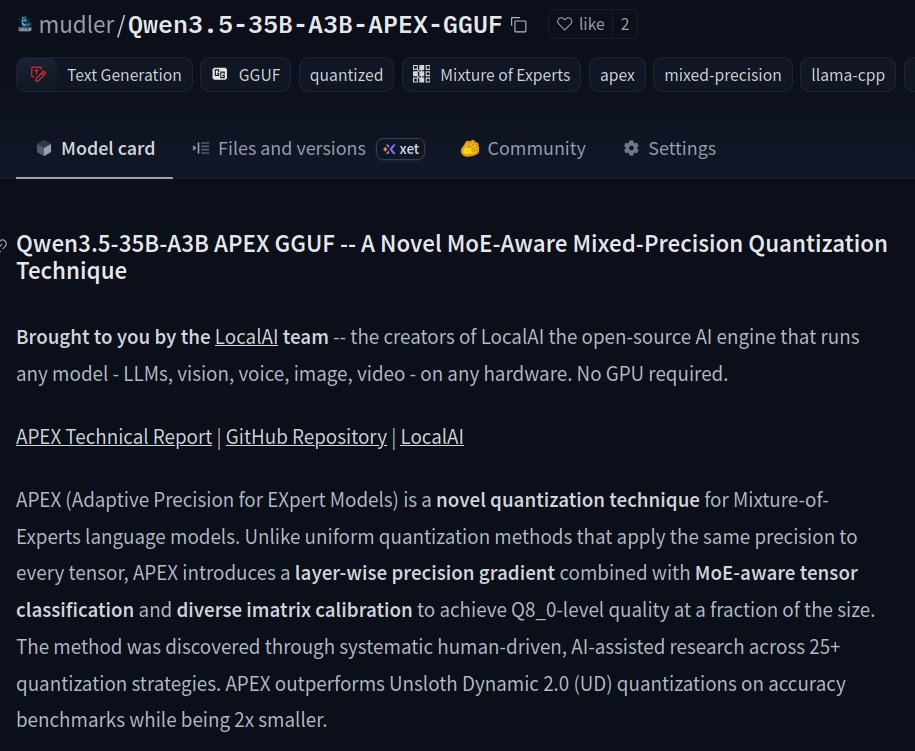

I've just released APEX (Adaptive Precision for EXpert Models): a novel MoE quantization technique that outperforms @UnslothAI Dynamic 2.0 on accuracy while being 2x smaller for MoE architectures. Benchmarked on Qwen3.5-35B-A3B, but the method applies to any MoE model. Half the size of Q8. Perplexity comparable to F16. Works with stock @ggml_org's llama.cpp. Open source (of course!), with ❤️ from the @LocalAI_API team. 👇 Links to the model, repository, and benchmarks below! (+ Bonus TurboQuant benchmarks with @no_stp_on_snek's TQ+!)