Your curated collection of saved posts and media

Train smarter. 🦾 Deploy faster. 💨 Eat better croissants. 🥐 Early bird pricing for #PyTorchCon Europe saves you €200 if you register by 27 February. See you in Paris, 7-8 April. 👉 https://t.co/vPcgBQu8u5 https://t.co/WbNZj16d3U

Matt White (@matthew_d_white) will speak at #NEARCON 2026 in San Francisco, Feb 23–24. Autonomous Systems In Practice examines how #AutonomousSystems are built, evaluated, constrained, secured, and made reliable beyond demos. Mon, Feb 23, 11:20–11:50 AM PT. With Sergey Astretsov (@sergeiest), @near_ai; Nalin Mittal, @Google; Noëlle Becker Moreno (@1stNoL), @edgeandnode. Agenda: https://t.co/A79jGPff8K

PyTorch Day India 2026 session recordings are now available. On February 7, 460 builders gathered in Bengaluru for a full day of technical talks focused on kernels, compilers, inference, and production-grade AI systems. Co-hosted by IBM, NVIDIA, Red Hat, and PyTorch Foundation, the event highlighted heterogeneous compute, platform stability, and end-to-end performance from edge to data center. 🔗 Full event playlist: https://t.co/0ELH0O0Ut3 #PyTorch #AIInfrastructure #OpenSource

The schedule for #PyTorchCon Europe is live! 💥 Join us 7-8 April in Paris for 2 days of research breakthroughs, production #AI & #PyTorch ecosystem innovation. Explore the full agenda: https://t.co/ECBkOSYTI2 Secure your pass today: https://t.co/uy8MR1KdV0 https://t.co/LEDsoQN4Nf

Trying to tune your Expert Parallel (EP) communication for hyperscale mixture-of-experts (MoE) models? This post, ‘Optimizing Communication for Mixture-of-Experts Training with Hybrid Expert Parallel’, details an efficient MoE EP communication solution, Hybrid-EP, and its use in the NVIDIA Megatron family of frameworks, on NVIDIA Quantum InfiniBand and NVIDIA Spectrum-X Ethernet platforms. It also dives into the effectiveness of Hybrid-EP in real-world model training. Read the full post: https://t.co/4NOFpaiFYz #PyTorch #OpenSourceAI #AI #Inference #Innovation

⏰ 5 days left! Early bird pricing for #PyTorchCon Europe ends 27 February. Save €200 when you register now. ICYMI the schedule is live! Start planning your 7-8 April in Paris: https://t.co/XXIopbwG2z Register: https://t.co/Oxa4w2vTOh https://t.co/nHcQM9bTKD

Helion's autotuner has been a powerful tool for optimizing ML kernels, but it came with a challenge: long autotuning sessions that could take 10+ minutes, sometimes even hours. The PyTorch team at Meta set out to solve this bottleneck using machine learning itself 🖇️ Read here how they did it: https://t.co/N8lYGeXuv9 Spoiler alert: Using Likelihood-Free Bayesian Optimization Pattern Search, they achieved a 36.5% reduction in autotuning time for NVIDIA B200 kernels while improving kernel latency by 2.6%. For AMD MI350 kernels, they saw a 25.9% time reduction with 1.7% better latency. Some kernels showed even more dramatic improvements—up to 50% faster autotuning and >15% latency gains. ✍️ Ethan Che, Oguz Ulgen, Maximilian Balandat, Jongsok Choi, Jason Ansel (Meta) #PyTorch #Helion #MachineLearning #BayesianOptimization #OpenSourceAI #Performance
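
To make the "pattern search" part of that post concrete, here is a minimal sketch of a plain coordinate pattern search over a discrete kernel-config space. It is an illustration under assumptions, not Helion's autotuner or its API: the surrogate-guided candidate selection (the Likelihood-Free Bayesian Optimization part) is omitted entirely, and `pattern_search`, `toy_latency`, `block_size`, and `num_warps` are hypothetical names with a made-up cost function standing in for a real benchmark.

```python
def pattern_search(cost, start, choices, max_rounds=20):
    """Greedy coordinate pattern search over a discrete config space.

    cost:    callable mapping a config dict to a number (lower is better)
    start:   initial config dict
    choices: dict mapping each config key to an ordered list of options
    """
    best = dict(start)
    best_cost = cost(best)
    for _ in range(max_rounds):
        improved = False
        for key, options in choices.items():
            idx = options.index(best[key])
            # Probe the neighbouring options on either side of the current one.
            for neighbour in (idx - 1, idx + 1):
                if 0 <= neighbour < len(options):
                    candidate = dict(best)
                    candidate[key] = options[neighbour]
                    candidate_cost = cost(candidate)
                    if candidate_cost < best_cost:
                        best, best_cost = candidate, candidate_cost
                        improved = True
        if not improved:
            break  # no neighbour is better: local optimum reached
    return best, best_cost


def toy_latency(config):
    # Hypothetical stand-in for benchmarking a kernel; not real measurements.
    return (config["block_size"] - 64) ** 2 + (config["num_warps"] - 4) ** 2


choices = {"block_size": [16, 32, 64, 128, 256], "num_warps": [1, 2, 4, 8]}
best, best_cost = pattern_search(
    toy_latency, {"block_size": 16, "num_warps": 1}, choices
)
print(best, best_cost)
```

Each call to `cost` corresponds to one kernel benchmark in a real autotuner, which is why cutting the number of evaluated candidates shortens autotuning time.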

PyTorch Foundation Announces New Members as Agentic AI Demand Grows. We welcomed nine new members since December 2025, adding five Silver and four Associate organizations spanning AI startups, leading universities, and global governments. New Silver members include Clockwork Systems, Inc., Emmi AI, National IT Industry Promotion Agency, @nota_ai, and yasp. New Associate members include @CarnegieMellon, CommonAI CIC, Monash University, and @uniofleicester. Membership growth underscores rising demand for open, community-driven, production-ready AI tooling as agentic AI accelerates, strengthening the ecosystem around PyTorch Foundation projects, including PyTorch, @vllm_project, @DeepSpeedAI, and @raydistributed. “From training frameworks like PyTorch and optimization systems like DeepSpeed that create capabilities such as advanced tool calling, to inference engines and orchestration layers like vLLM and Ray that operationalize them, the Foundation hosts critical layers of the open source AI stack. The growth of our membership reflects a shared recognition that these capabilities must be built collaboratively in a vendor-neutral environment.” – Mark Collier (@sparkycollier), GM of AI at The Linux Foundation and Executive Director of the PyTorch Foundation 🔗 Read the full announcement: https://t.co/xOhazHWfUP #PyTorch #AIInfrastructure #OpenSourceAI

The CFP is live 🎤 Speak at #KubeCon + #CloudNativeCon + #OpenInfraSummit + #PyTorchCon China 2026, happening 8-9 September in Shanghai. Got insights on #CloudNative, #AI, infra, or #OpenSource? Submit by 3 May. Apply now: https://t.co/V7rdULpoqp https://t.co/vFe1h09qkZ

⏳ 3 days left to save! Spend only €449 on your #PyTorchCon Europe ticket if you register by 27 February. Join researchers, developers, and #AI engineers from 7-8 April in Paris and help shape #PyTorch in production. Schedule: https://t.co/p0Q83FeHbC Register: https://t.co/pyVwnUS4EW

🚀 Announcing the @PyTorch OpenEnv Hackathon with CV and @SHACK15sf Build RL environments, post-train models, and tackle 5 major RL + agentic orchestration challenges 💰 $100K+ cash in prizes 👥 Teams up to 4 📍 In-person in San Francisco Top judges, mentors, and speakers from: @Meta @huggingface @UCBerkeley @UnslothAI @fleet_ai @SnorkelAI @PatronusAI @mercor_ai @HalluminateAI @scale_AI @CoreWeave @OpenPipeAI @northflank @cursor_ai and Scaler AI Labs Register below 👇

New @DeepSpeedAI updates make large-scale multimodal training simpler and more memory-efficient. Our latest blog introduces a PyTorch-identical backward API that makes multimodal training loops easy to code, plus low-precision model states (BF16/FP16) that can reduce peak memory by up to 40% when combined with torch.autocast. 🖇️ Read the full post for details: https://t.co/sSHMGhRixV #DeepSpeed #PyTorch #MemoryEfficiency #MultimodalTraining #OpenSourceAI

Matt White will speak at Dubai AI Festival, joining a panel discussion on who powers the global AI infrastructure. The session brings together leaders shaping the systems, platforms, and governance models that underpin AI at scale. As General Manager of AI at Linux Foundation, Matt will share perspectives from the open ecosystem driving AI development and deployment worldwide. 📍 Dubai World Trade Centre 📅 April 7–8, 2026 🔗 Learn more: https://t.co/GzABsuPw0b #PyTorch #AIInfrastructure #OpenSourceAI

🚨 Prices for #PyTorchCon Europe go up tomorrow, 27 Feb at 23:59 CET! Register for just €449 and join us from 7-8 April in Paris. Two days of #GenAI, large-scale training, performance engineering, and real-world #PyTorch deployments. Secure your pass now! https://t.co/mE4FXCU7aD

⏰ Final hours. Early bird rates for #PyTorchCon Europe end TODAY at 23:59 CET. Join us 7-8 April in Paris. Technical sessions, poster presentations, community expo, and the Flare Party. 🔥 Schedule: https://t.co/HBq4XhQLd2 Register: https://t.co/uzweFa52qd https://t.co/NWKdVCKdQO

Our February PyTorch Foundation newsletter is live. Inside: leadership and membership announcements, live schedule for PyTorch Conference Europe 2026 in Paris, a recap of PyTorch Day India 2026, ambassador highlights, and recent technical blogs from across the ecosystem. 📨 Subscribe for updates delivered directly to your inbox: https://t.co/PdpCPZTflP 👉 Read: https://t.co/RFWKZrc94Q #PyTorch #PyTorchCon #AIInfrastructure #OpenSourceA

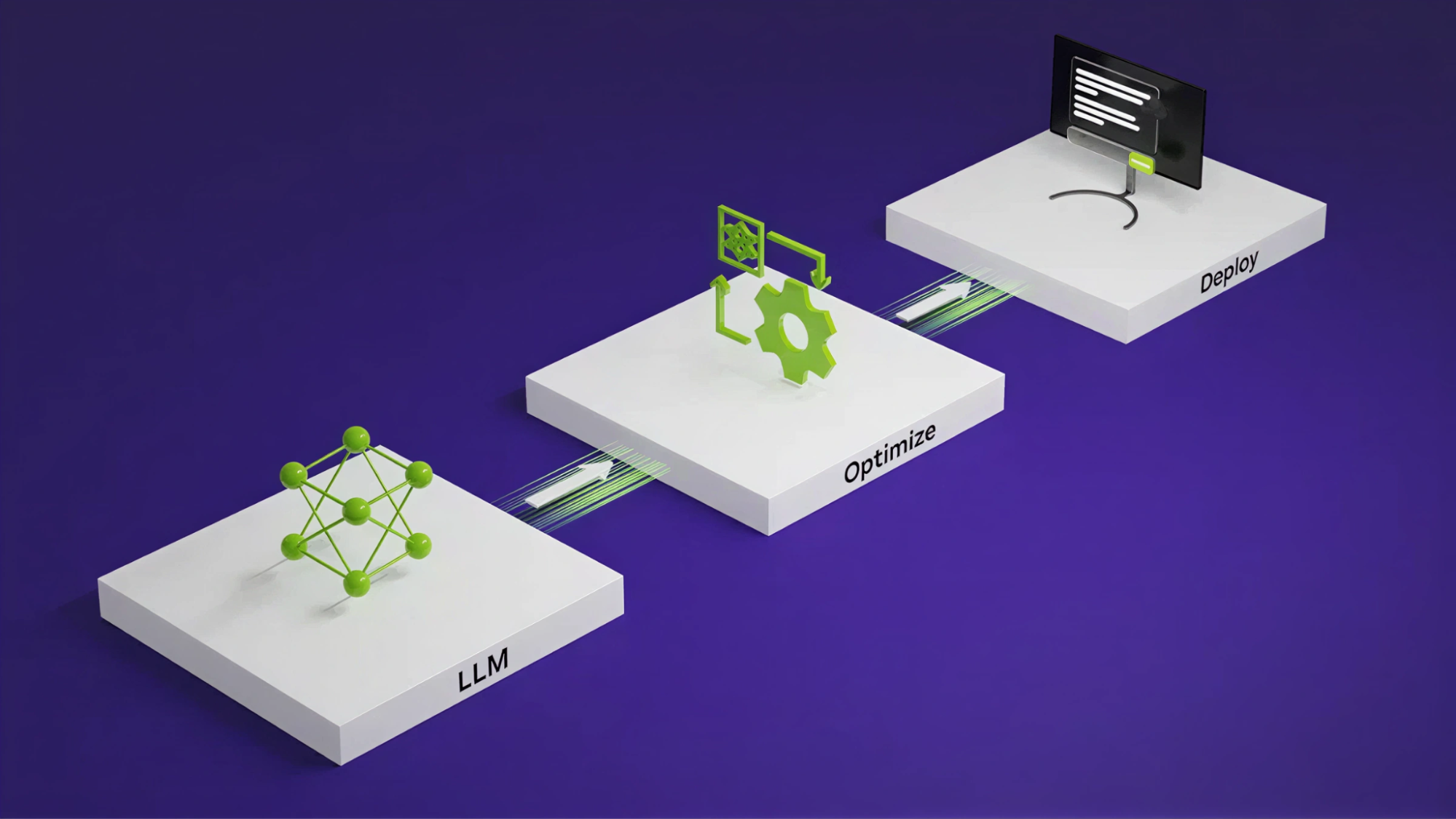

Are you tired of hand-tuning each model you develop? What if you could describe the architecture once and let a system apply graph transformations and optimized kernels? NVIDIA TensorRT LLM AutoDeploy marks a shift toward approaching inference optimization as a compiler and runtime responsibility rather than a burden on the model author. This approach enables faster experimentation, broader model coverage, and a cleaner separation between model design and deployment. Learn more from our documentation, example scripts, and the blog, ‘Automating Inference Optimizations with NVIDIA TensorRT LLM AutoDeploy’. Read the full post: https://t.co/qlIO7q35oY #PyTorch #OpenSourceAI #AI #Inference #Innovation

New to the PyTorch Ecosystem Landscape: Kubetorch. Kubetorch enables ML research and development on Kubernetes across training, inference, RL, evals, data processing, and more, in a simple and unopinionated package. Learn more: https://t.co/YadOKc3sQo #PyTorch #Kubernetes #MLOps #AIInfrastructure

Doc-to-LoRA: Learning to Instantly Internalize Contexts https://t.co/bDqLdqhmB9 https://t.co/UOHnPZ8sfO

@togelius I wasn't familiar with that one, but this idea has definitely been in the literature for a while. We actually actively disclaim credit for the general idea. https://t.co/wV8l0WOsjM

A statement from Anthropic CEO, Dario Amodei, on our discussions with the Department of War. https://t.co/rM77LJejuk

For those wondering how mass domestic surveillance could be consistent with "all lawful use" of AI models, I recommend a declassified report from the ODNI on just how much can be done with commercially available information (CAI): "...to identify every person who attended a protest" https://t.co/GsfWtmSKvd

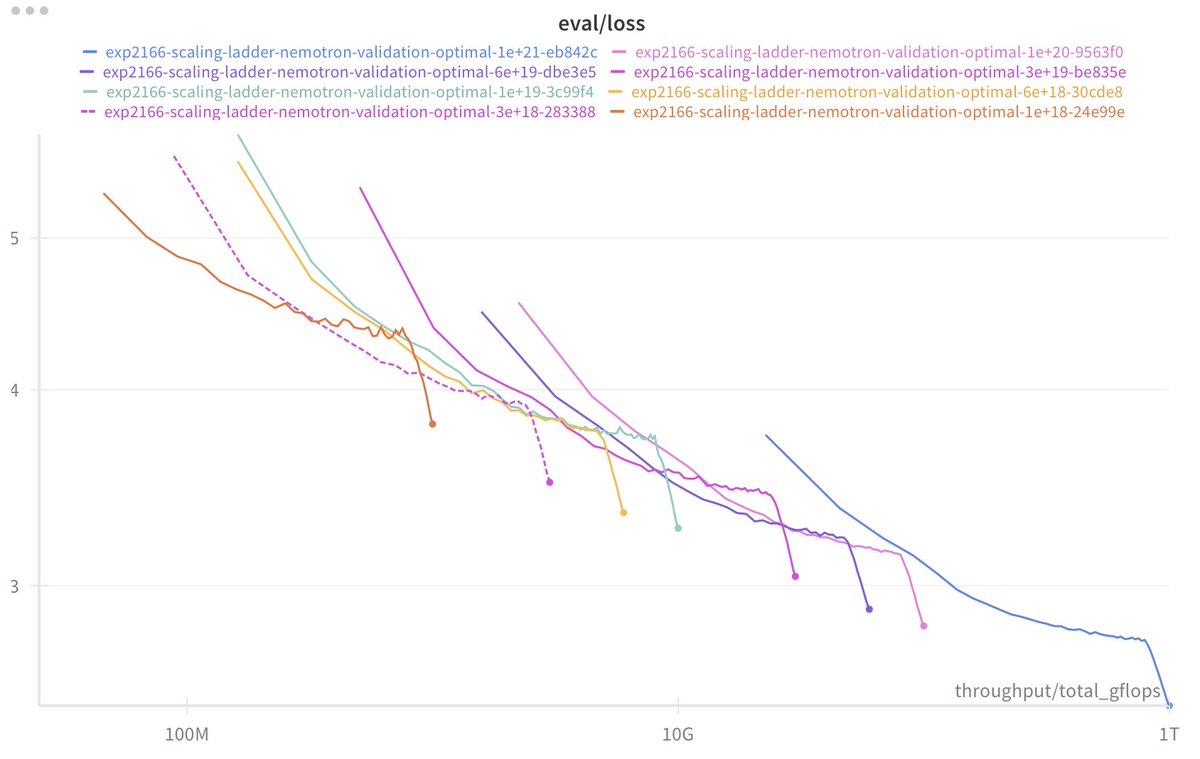

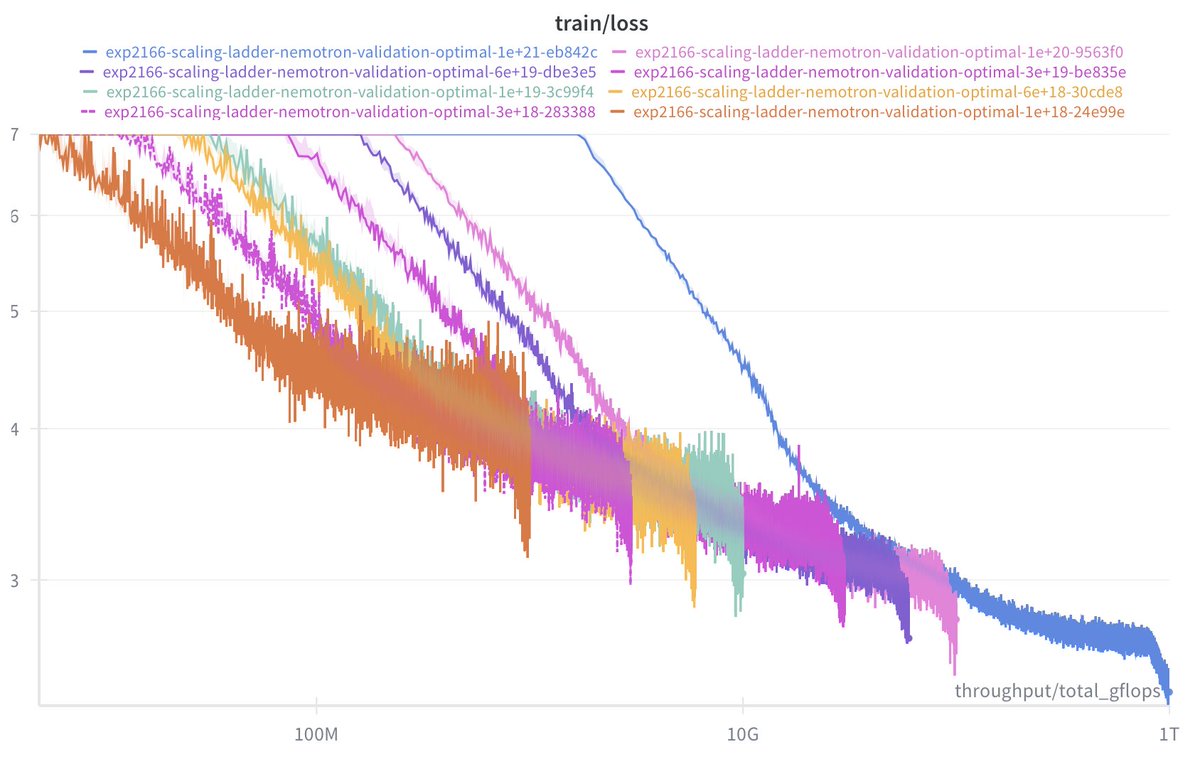

2026 aesthetic: stable scaling runs https://t.co/HnalPzYKJk

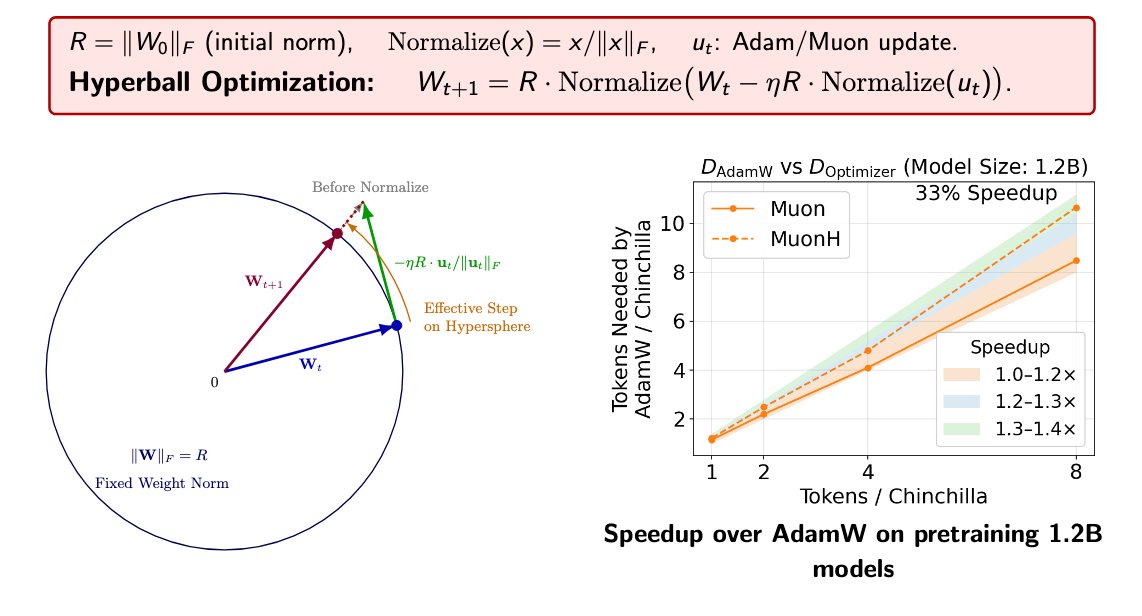

(1/n) Introducing Hyperball — an optimizer wrapper that keeps weight & update norm constant and lets you control the effective (angular) step size directly. Result: sustained speedups across scales + strong hyperparameter transfer. https://t.co/1vRMHgZgoX
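
As a rough illustration of the idea described in that post, the sketch below shows one way a wrapper could hold the weight norm fixed and set the step size relative to it. This is an assumption-laden toy in plain Python, not the authors' implementation: the post only states the goal (constant weight and update norm, a directly controlled angular step), so the rescale-then-project rule here is a guess at the general shape, and `hyperball_like_step` and `angular_lr` are hypothetical names.

```python
import math


def norm(vec):
    """Euclidean norm of a list of floats."""
    return math.sqrt(sum(x * x for x in vec))


def hyperball_like_step(weights, update, angular_lr):
    """Apply one update while keeping the weight norm unchanged.

    weights:    current parameter vector
    update:     direction proposed by any base optimizer (e.g. a gradient)
    angular_lr: update size as a fraction of the weight norm (hypothetical knob)
    """
    radius = norm(weights)
    update_norm = norm(update)
    if radius == 0.0 or update_norm == 0.0:
        return list(weights)
    # Rescale the update so its norm is always angular_lr * radius,
    # regardless of how large the base optimizer's raw update was.
    scale = angular_lr * radius / update_norm
    moved = [w - scale * u for w, u in zip(weights, update)]
    moved_norm = norm(moved)
    if moved_norm == 0.0:
        return list(weights)
    # Project back onto the sphere of the original radius.
    back = radius / moved_norm
    return [back * m for m in moved]


weights = [3.0, 4.0]
stepped = hyperball_like_step(weights, [1.0, 0.0], angular_lr=0.1)
print(norm(weights), norm(stepped))
```

Because the update is rescaled relative to the weight norm before the projection, the raw magnitude of the base optimizer's update does not affect how far the weights rotate; only `angular_lr` does.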