@PyTorch

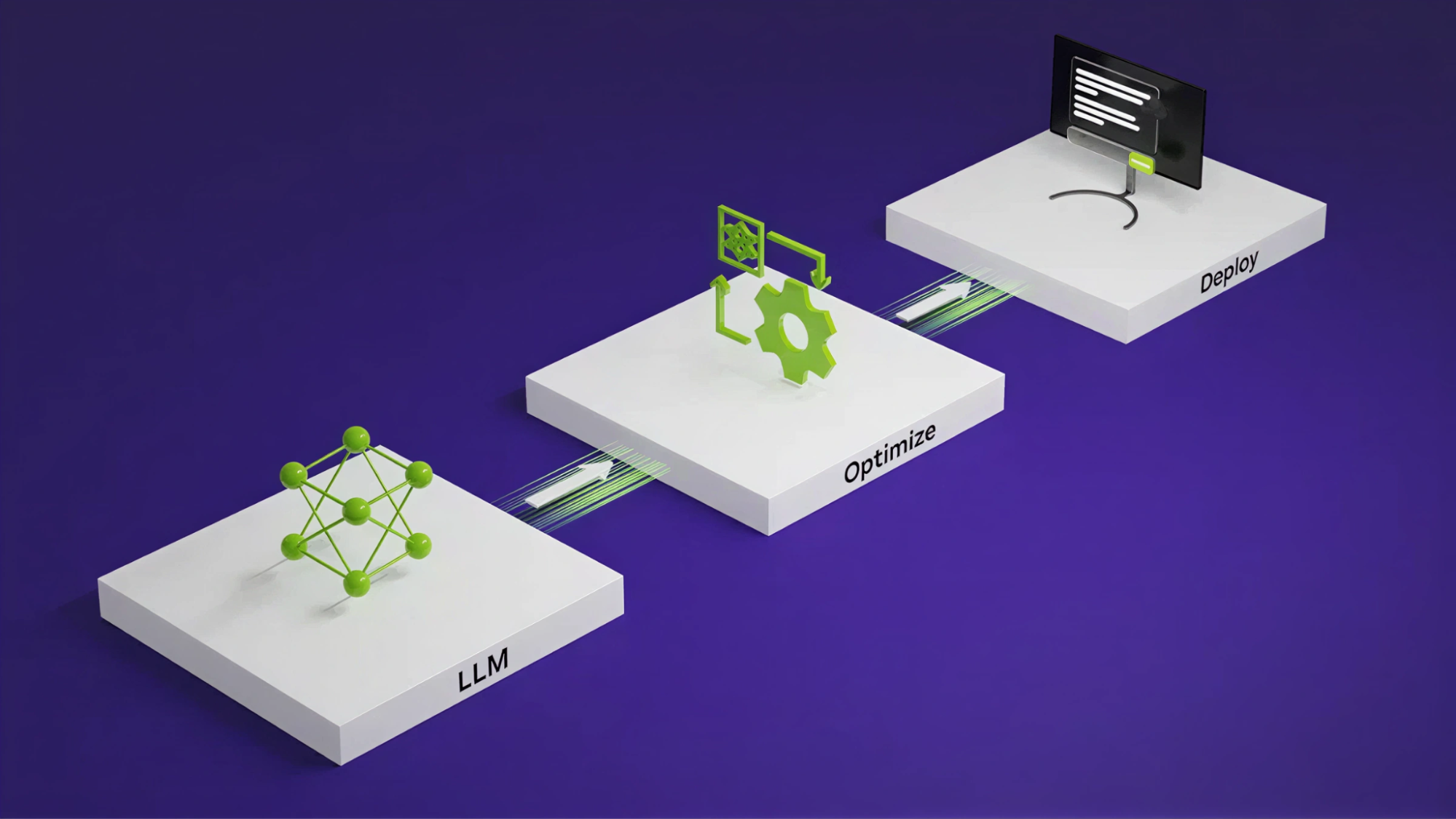

Are you tired of hand-tuning each model you develop? What if you could describe the architecture once and let a system apply graph transformations and optimized kernels for you? NVIDIA TensorRT LLM AutoDeploy marks a shift toward treating inference optimization as a compiler and runtime responsibility rather than a burden on the model author. This approach enables faster experimentation, broader model coverage, and a cleaner separation between model design and deployment. Learn more from our documentation, example scripts, and the blog post ‘Automating Inference Optimizations with NVIDIA TensorRT LLM AutoDeploy’. Read the full post: https://t.co/qlIO7q35oY #PyTorch #OpenSourceAI #AI #Inference #Innovation