Your curated collection of saved posts and media

@newrepublic @Prof_Sugon_Deez https://t.co/KTMPjgMkp1

JD Vance, October 2024: “Our interests, I think, very much, is a not going to war in Iran.” “Kamala Harris kinda likes war…They seem to be sleepwalking us into a war with Iran.” https://t.co/ptmhOuJJhW

This is the AI that will be taking our jobs https://t.co/nycRqJimm6

sneak peek of Anthropic's 2026 Super Bowl ad https://t.co/6v5ZFiHYgO

Video generation models are improving fast: real-time autoregressive models now deliver high quality at low latency, and they’re quickly being adopted for world models and robotics applications. So what’s the problem? They’re still too slow on consumer hardware. 🚀 What if we told you that we can get true real-time 16 FPS video generation on a single RTX 5090? (1.5-12x faster than FlashAttention 2/3/4 on 5090, H100, B200) Today we release MonarchRT 🦋, an efficient video attention that parameterizes attention maps as (tiled) Monarch matrices and delivers real E2E gains. 📄 Paper: https://t.co/d1AAMIseow 🌐 Website: https://t.co/41mqriKekx 🔗 GitHub: https://t.co/hp5iJttviA 🧵1/n

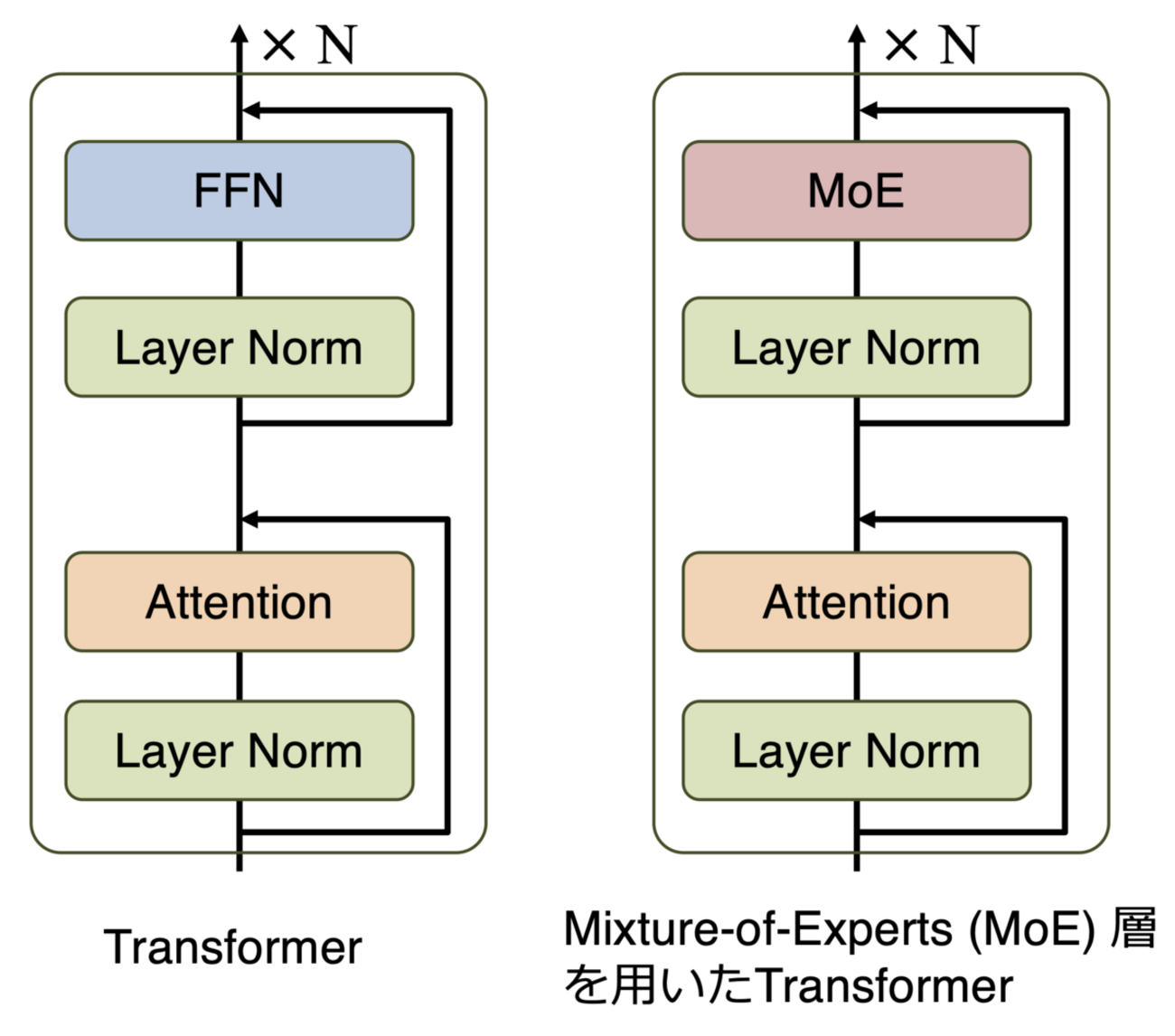
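To make "Monarch matrix" concrete: the sketch below multiplies a vector by the generic square Monarch form M = P L Pᵀ R, where L and R are block-diagonal and P is the reshape-transpose permutation, as I recall it from the original Monarch matrices work. This is an assumption-labeled illustration, not MonarchRT's tiled attention parameterization or its GPU kernel, which the post does not describe; all function names are hypothetical, and plain Python lists are used for readability.

```python
from typing import List

Vector = List[float]
Block = List[List[float]]


def block_diag_matvec(blocks: List[Block], x: Vector) -> Vector:
    """Multiply a block-diagonal matrix (given as its dense b x b blocks) by x."""
    b = len(blocks[0])
    out: Vector = []
    for i, blk in enumerate(blocks):
        seg = x[i * b:(i + 1) * b]  # the slice of x that block i touches
        out.extend(sum(blk[r][c] * seg[c] for c in range(b)) for r in range(b))
    return out


def transpose_perm(x: Vector, m: int) -> Vector:
    """View x as an m x m row-major grid and return the transposed grid, flattened."""
    return [x[c * m + r] for r in range(m) for c in range(m)]


def monarch_matvec(left: List[Block], right: List[Block], x: Vector) -> Vector:
    """Compute M @ x for M = P L P^T R with n = m * m (square Monarch case).

    In the square case P is a grid transpose, so P == P^T. Each block-diagonal
    product costs m * m^2 = n^1.5 multiply-adds instead of n^2 for a dense matrix.
    """
    m = len(right)
    y = block_diag_matvec(right, x)  # R x
    y = transpose_perm(y, m)         # apply P^T
    y = block_diag_matvec(left, y)   # apply L
    return transpose_perm(y, m)      # apply P


identity = [[[1.0, 0.0], [0.0, 1.0]] for _ in range(2)]
right = [[[1.0, 2.0], [3.0, 4.0]], [[5.0, 6.0], [7.0, 8.0]]]
print(monarch_matvec(identity, right, [1.0, 1.0, 1.0, 1.0]))  # [3.0, 7.0, 11.0, 15.0]
```

With L set to the identity, M collapses to the block-diagonal R, which is why the example reduces to R @ x.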

We identified an issue with the Mamba-2 🐍 initialization in HuggingFace and FlashLinearAttention repository (dt_bias being incorrectly initialized). This bug is related to 2 main issues: 1. init being incorrect (torch.ones) if Mamba-2 layers are used in isolation without the Mamba2ForCausalLM model class (this has been already fixed: https://t.co/oahfxjIsKb). 2. Skipping initialization due to meta device init for DTensors with FSDP-2 (https://t.co/hLC8nnQFc3 will fix this issue upon merging). The difference is substantial. Mamba-2 seems to be quite sensitive to the initialization. Check out our experiments at the 7B MoE scale: https://t.co/n8iuUICRux Special thanks to @kevinyli_, @bharatrunwal2, @HanGuo97, @tri_dao and @_albertgu 🙏 Also thanks to @SonglinYang4 for quickly helping in merging the PR.
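For context on why a `torch.ones` bias matters: Mamba-style layers pass the step size through softplus, and the intended initialization stores an inverse-softplus bias so that softplus(dt_bias) starts at a small, log-uniformly sampled dt. The pure-Python sketch below shows that idea; the defaults (dt_min=0.001, dt_max=0.1, floor 1e-4) are recalled from the reference Mamba implementation and should be treated as assumptions, and the function names are hypothetical, not the HuggingFace or FlashLinearAttention code.

```python
import math
import random
from typing import List, Optional


def softplus(x: float) -> float:
    """softplus(x) = log(1 + exp(x)), the map applied to the step-size bias."""
    return math.log1p(math.exp(x))


def inverse_softplus(y: float) -> float:
    """Solve softplus(x) = y for y > 0: x = y + log(1 - exp(-y))."""
    return y + math.log(-math.expm1(-y))


def init_dt_bias(
    nheads: int,
    dt_min: float = 1e-3,         # assumed default
    dt_max: float = 1e-1,         # assumed default
    dt_init_floor: float = 1e-4,  # assumed default
    rng: Optional[random.Random] = None,
) -> List[float]:
    """Hypothetical helper: one bias per head with softplus(bias) in [dt_min, dt_max]."""
    if rng is None:
        rng = random.Random(0)
    biases = []
    for _ in range(nheads):
        # Sample dt log-uniformly between dt_min and dt_max, then clamp from below.
        dt = max(math.exp(rng.uniform(math.log(dt_min), math.log(dt_max))), dt_init_floor)
        # Store the bias so that softplus(bias) == dt at initialization.
        biases.append(inverse_softplus(dt))
    return biases


good = init_dt_bias(8)
print([round(softplus(b), 4) for b in good])  # every value lies in [0.001, 0.1]
print(round(softplus(1.0), 4))                # 1.3133 for a torch.ones-style bias
```

The contrast is the point: a bias of 1.0 alone maps to a step size of about 1.31, more than ten times the assumed upper bound of the intended range.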

@Kaivalya_in @milindmghosh Cohere: https://t.co/eQGWmi0eM6 Sarashina: https://t.co/plQG6qPAqT but it looks like the first in Japan was actually Stockmark100B which beat it by a few months: https://t.co/q3sGmwogg1

@paws4puzzles @milindmghosh Firstly, DeepSeek and Qwen have both released multiple much larger and more powerful models with MIT and Apache 2.0 licenses. Secondly, Falcon 180B doesn't have an Apache 2.0 license: https://t.co/lYDKI8ox1f

@milindmghosh Update: the Stockmark 100B model by Stockmark is actually the first 100B model from Japan, coming out in May as opposed to Sarashina2 in November. This doesn't change the order because Cohere Command R+ came out in April. https://t.co/q3sGmwogg1

In this amazing multidisciplinary collaboration, we report our early experience with the @openclaw -> https://t.co/THXYyajfQB

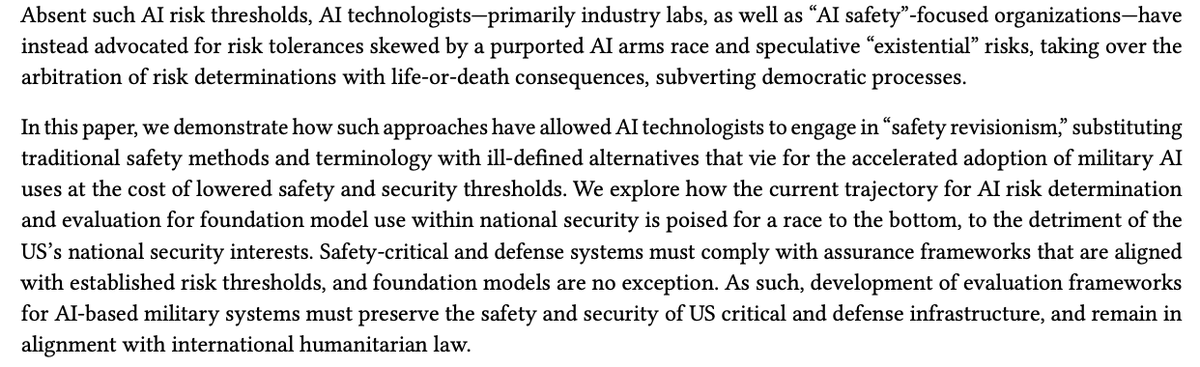

Some real cognitive dissonance happening with takes saying "but Anthropic HAD to drop their safety measures, they're the good guys you see!" Anyway from our paper last year: https://t.co/d0yyWfx0fe

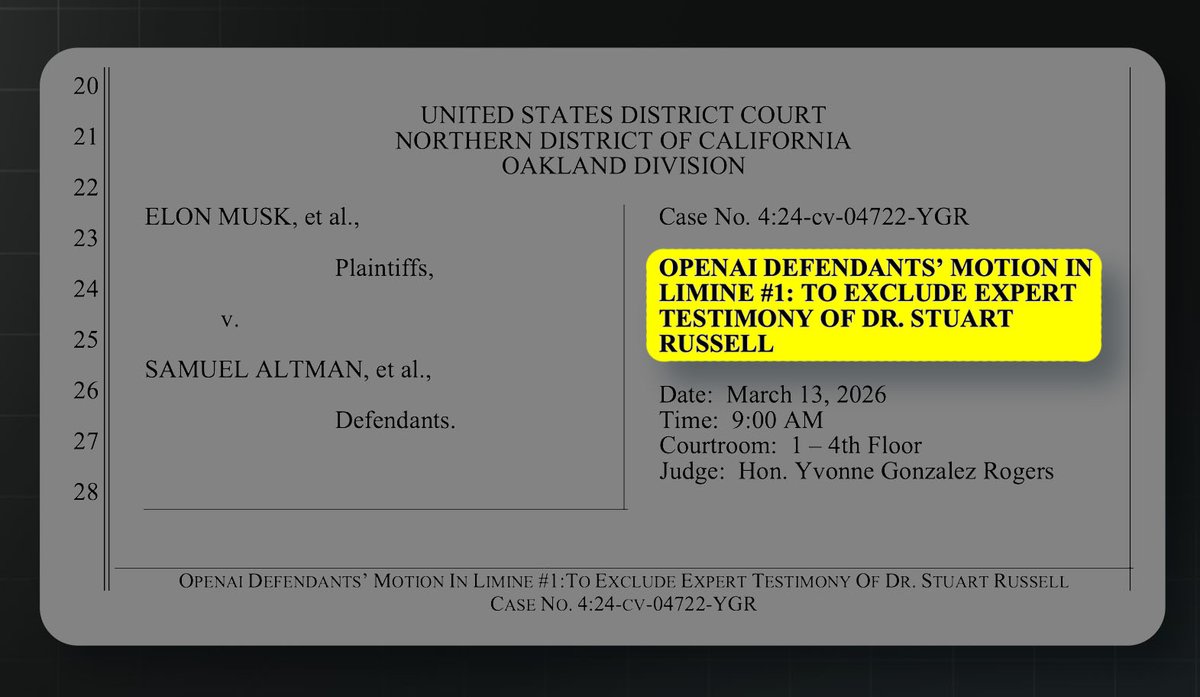

A new filing just dropped in the Musk v. Altman case, and it may be the most brazen and cynical document OpenAI has produced yet. It's a motion to exclude the testimony of Stuart Russell, but their attacks blatantly contradict things @OpenAI itself has said for years. 🧵 https://t.co/WSPSpNiYqV

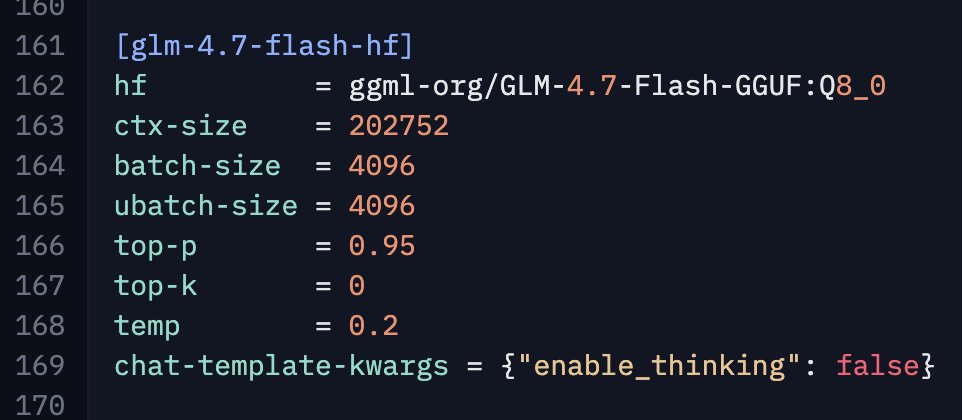

@UnslothAI Btw, I have some anecdotal evidence that disabling thinking for GLM-4.7-Flash improves performance for agentic coding stuff. Haven't evaluated in detail yet (only opencode) as it takes time, but would be interested to know if you give it a try and share your observations. To disable thinking with llama.cpp add this to the llama-server command: --chat-template-kwargs "{\"enable_thinking\": false}" Here is my config for reference:

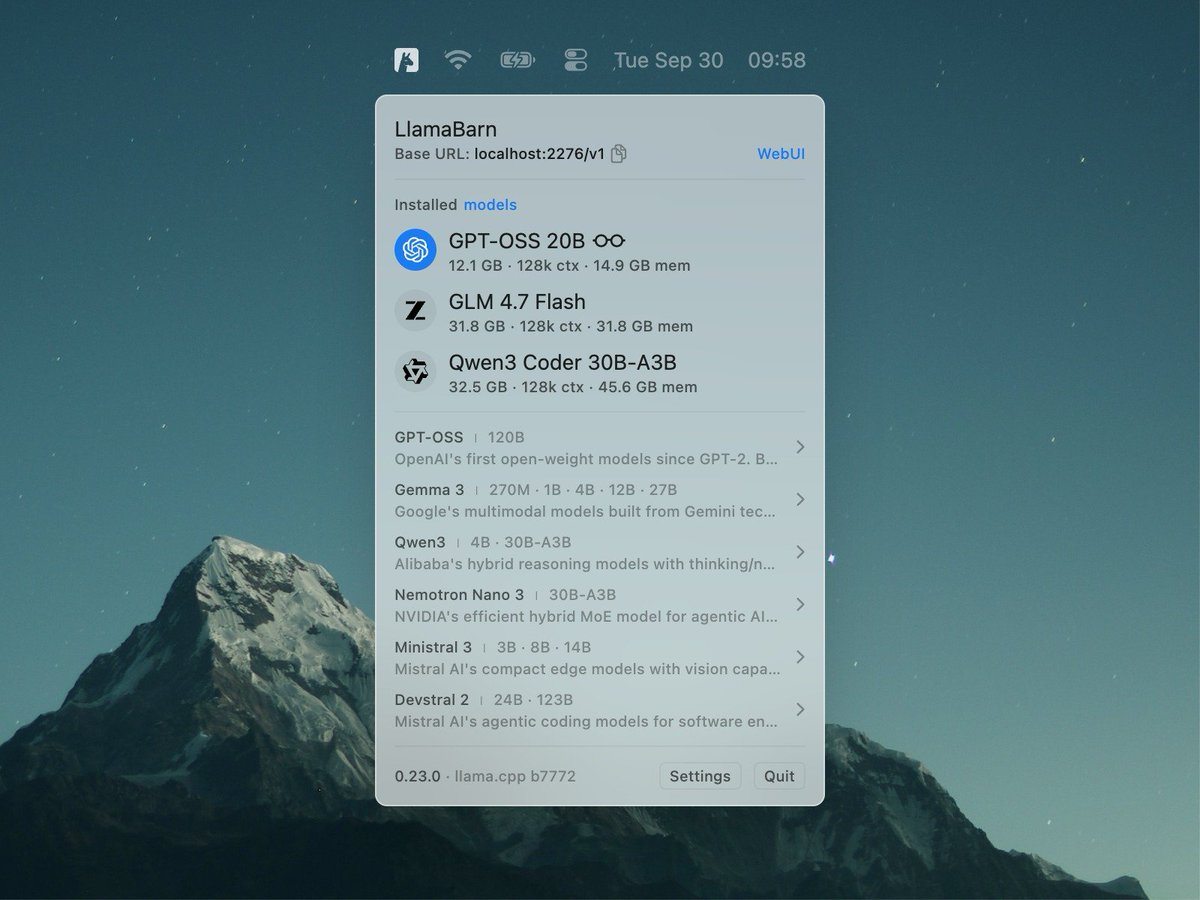
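The escaped JSON in that flag is easy to get wrong by hand. A minimal sketch, assuming `llama-server` is on PATH and using a hypothetical model path: build the argument with `json.dumps` and pass an argv list, so no backslash escaping is needed. Only `-m` and the `--chat-template-kwargs` flag quoted above are used; the helper name is hypothetical.

```python
import json
import shlex
from typing import Dict, List


def llama_server_argv(model_path: str, template_kwargs: Dict[str, object]) -> List[str]:
    """Hypothetical helper: argv for llama-server with JSON chat-template kwargs.

    json.dumps emits the JSON text directly, so no manual escaping is needed
    when the list is handed to subprocess without going through a shell.
    """
    return [
        "llama-server",
        "-m", model_path,  # hypothetical local GGUF path
        "--chat-template-kwargs", json.dumps(template_kwargs),
    ]


argv = llama_server_argv("GLM-4.7-Flash.gguf", {"enable_thinking": False})
print(argv[-1])          # {"enable_thinking": false}
print(shlex.join(argv))  # the same command, quoted for pasting into a POSIX shell
```

Launching would then be `subprocess.run(argv)`; `shlex.join` is only there to show the shell-quoted equivalent of the hand-escaped string.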

Introducing LlamaBarn — a tiny macOS menu bar app for running local LLMs Open source, built on llama.cpp https://t.co/F1Z3DVl9Kg

I am deeply thankful to the Hugging Face team for this opportunity. With their support I will be able to continue my work on the projects and I feel optimistic about the great stuff that we are going to create with the community! https://t.co/Gl95jBPhly

Introducing LM Link ✨ Connect to remote instances of LM Studio, securely. 🔐 End-to-end encrypted 📡 Load models locally, use them on the go 🖥️ Use local devices, LLM rigs, or cloud VMs Launching in partnership with @Tailscale Try it now: https://t.co/Vl2vr6HlF5

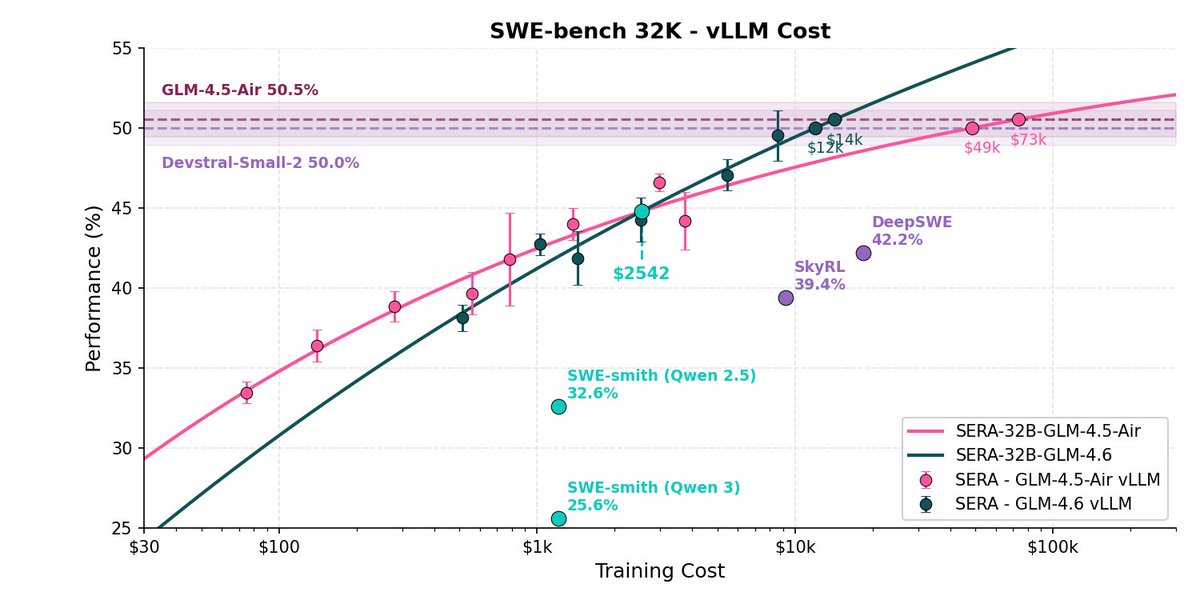

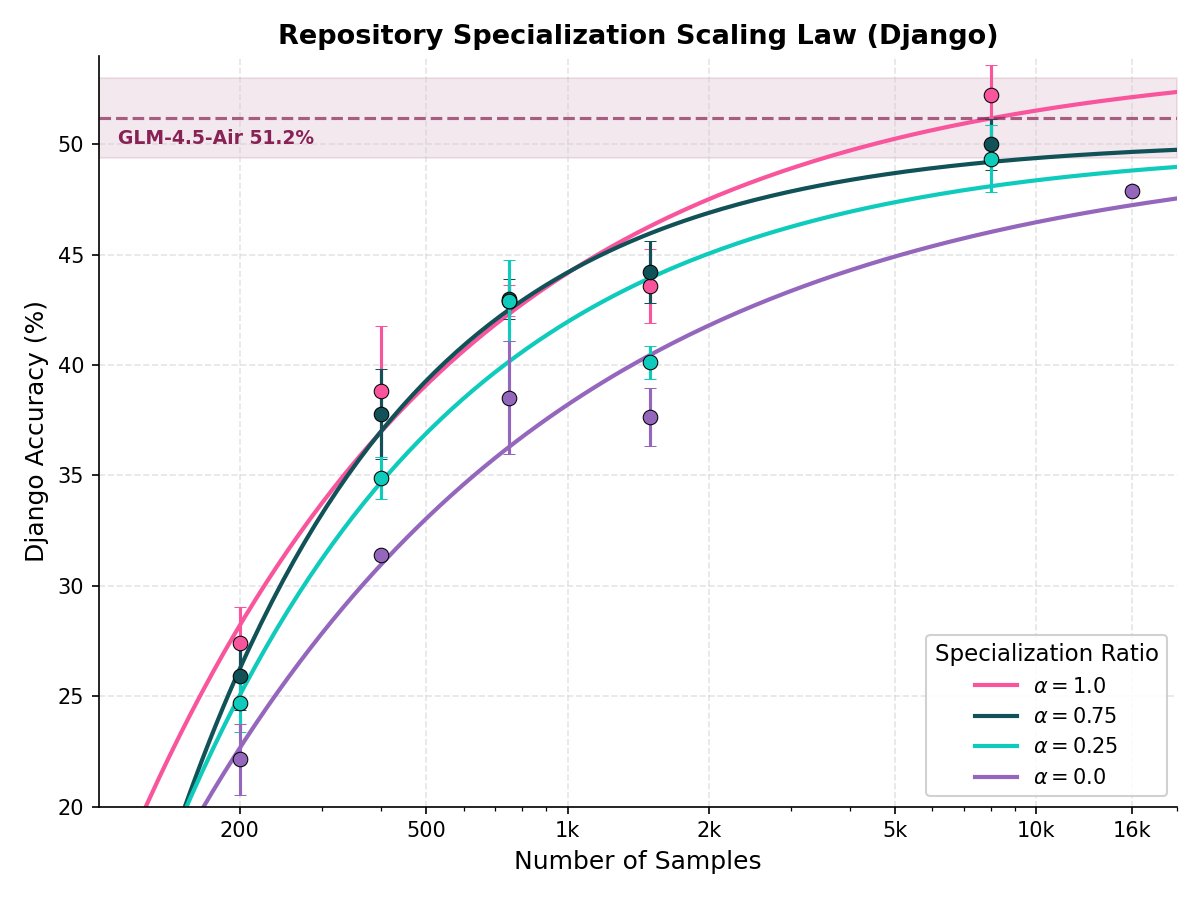

Introducing Ai2 Open Coding Agents—starting with SERA, our first-ever coding models. Fast, accessible agents (8B–32B) that adapt to any repo, including private codebases. Train a powerful specialized agent for as little as ~$400, & it works with Claude Code out of the box. 🧵 https://t.co/dor94O62B9

SERA was driven by a classic research pattern similar to QLoRA: if you are resource-constrained, build efficiency first, then do the actual research. The most surprising thing: verifying coding data correctness is not helpful and adds overhead to synthetic data generation. https://t.co/O6dMEqY6fF

This is very impactful: you can now distill frontier performance into small models that are specialized to private repositories. Companies can quickly and cheaply train on their data and have super-efficient deployments of 32B agents. https://t.co/03jsS6cWJ3

Training on issue-solving only does NOT guarantee transfer to other tasks. 🎨Introducing Hybrid-Gym - synthetic training tasks for generalization (https://t.co/IrqQszPEYm) +25.4% on SWE-Bench / +7.9% on SWT-Bench / +5.1% on Commit-0 with NO issue-solving / test-gen/... training https://t.co/U9xc0yNYv4

Introducing Slingshots // TWO: Research that ships. 14 projects, six institutions – let’s meet the batch 🧵 https://t.co/g3LTeewbqC

@ElToroDotCom just launched Keeping Up with the Joneses Social Index Score. It’s a proprietary score + dataset that’s 4× more predictive of a new-car purchase than traditional demo or intent data. Learn more 👉 https://t.co/BOtJcoszIX Interested? Let’s talk at #NADA2026

#NewProfilePic https://t.co/Iw58K7i1Gv