@ggerganov

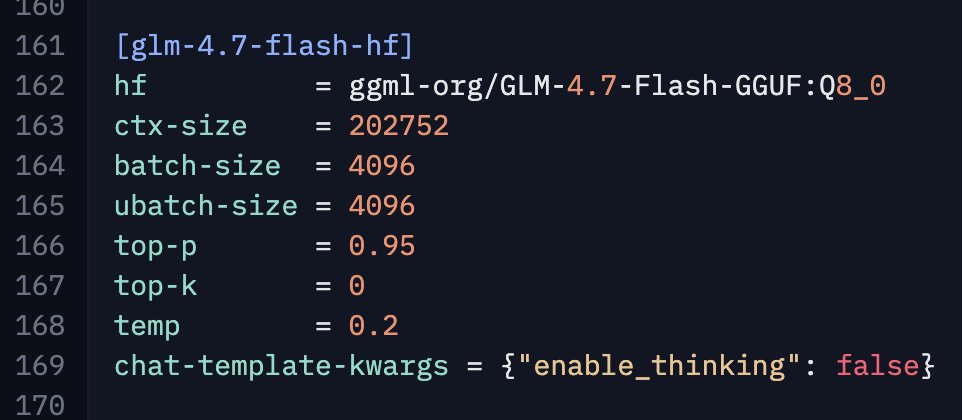

@UnslothAI Btw, I have some anecdotal evidence that disabling thinking for GLM-4.7-Flash improves performance for agentic coding stuff. Haven't evaluated in detail yet (only opencode) as it takes time, but would be interest to know if you give it a try and share your observations. To disable thinking with llama.cpp add this to the llama-server command: --chat-template-kwargs "{\"enable_thinking\": false}" Here is my config for reference: