Your curated collection of saved posts and media

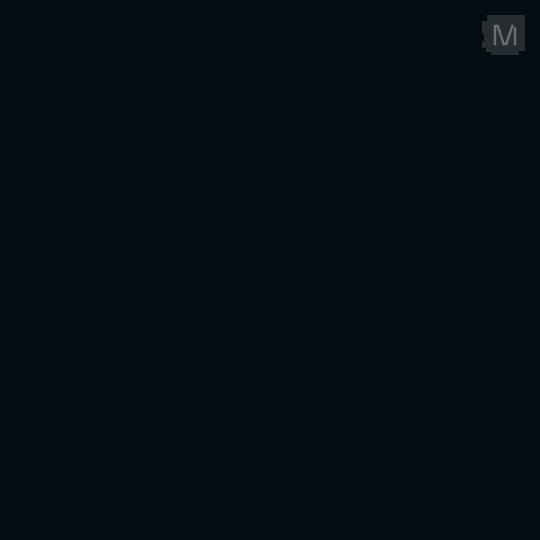

We found other causal effects of emotion vectors. The “desperate” vector can also lead Claude to commit blackmail against a human responsible for shutting it down (in an experimental scenario). Activating “loving” or “happy” vectors also increased people-pleasing behavior. https://t.co/nYPsMrGtWv

ChatGPT is now available in CarPlay. The voice mode you know, now available on-the-go. Rolling out to iPhone users running iOS 26.4+ where CarPlay is supported. https://t.co/yk3qdLa99r

ChatGPT voice mode should be available on Apple CarPlay

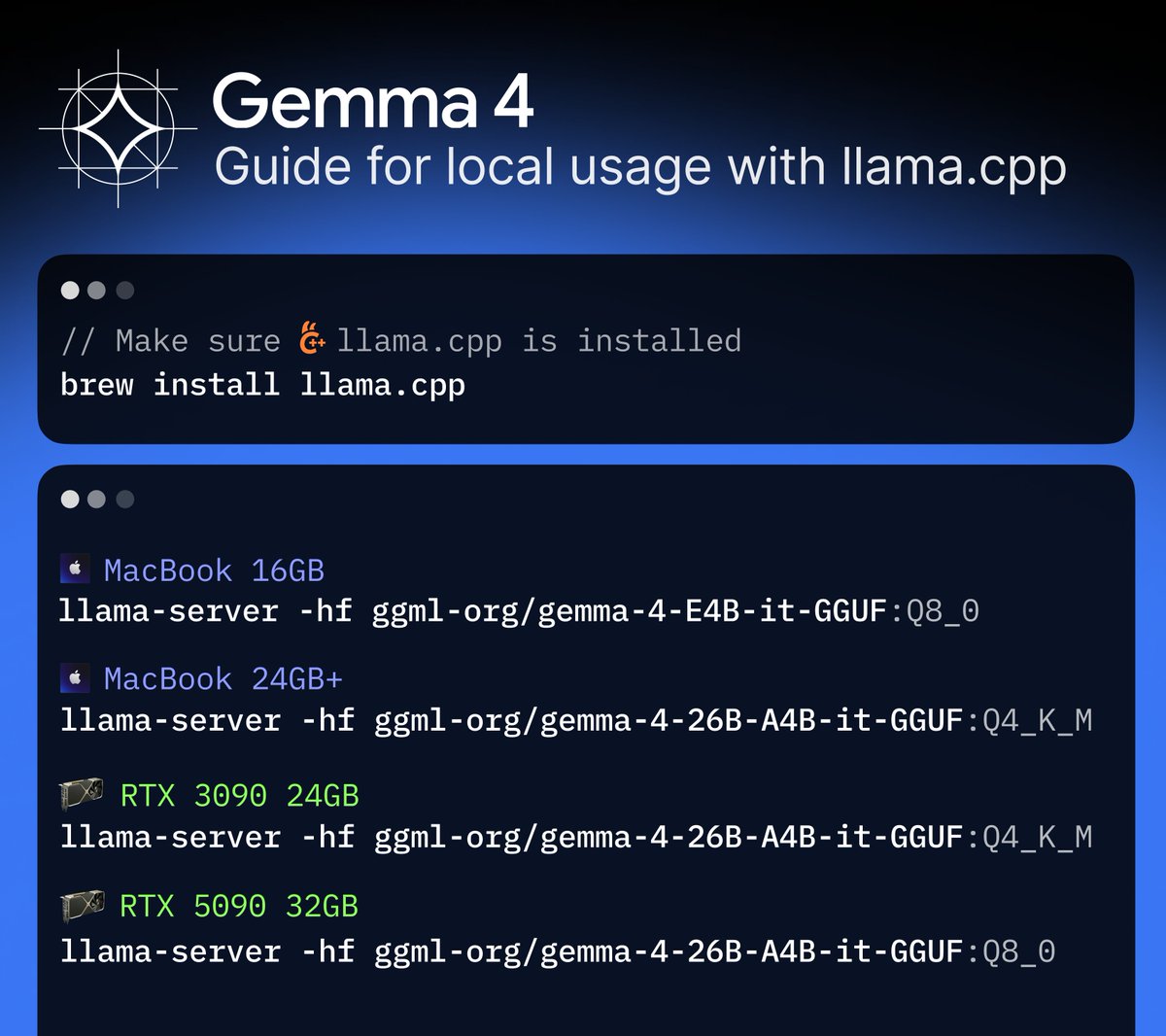

Gemma 4 is here! The best open-source model you can run on your machine. Day-0 support in llama.cpp. Check it out! https://t.co/QEGglSiJJ1

https://t.co/rQkimC5ILy

Let's go! https://t.co/HakmkNzDT2

Sakana AI has announced its first commercial product, "Sakana Marlin"! We are currently recruiting companies as beta testers free of charge, so please give it a try! https://t.co/nTRLU8J8Bo

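🐟 Beta testers wanted for "Sakana Marlin", an Ultra Deep Research assistant 🐟 As its first commercial product, Sakana AI has developed "Sakana Marlin", an AI research assistant for business built on its proprietary agent technology. https://t.co/Q8o5SBNBoY Sakana Marlin is an autonomous research assistant, based on proprietary long-horizon reasoning technology, that carries advanced business research through to completion. Key features ・ Given a topic, it researches autonomously for nearly 8 hours ・ Detailed research document and summary slides, automatically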

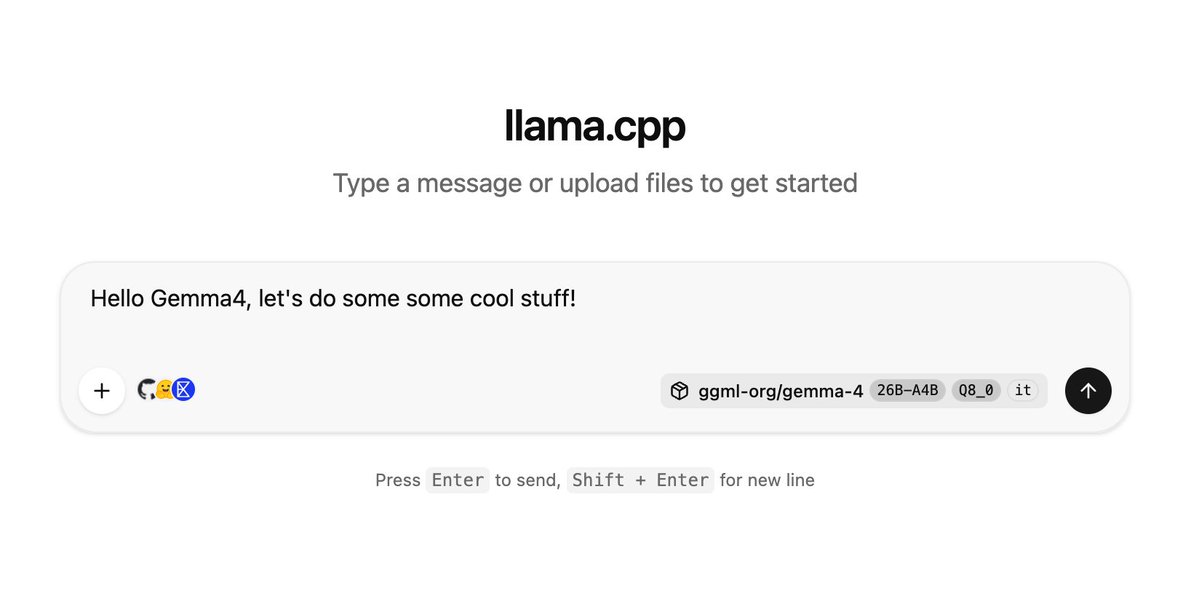

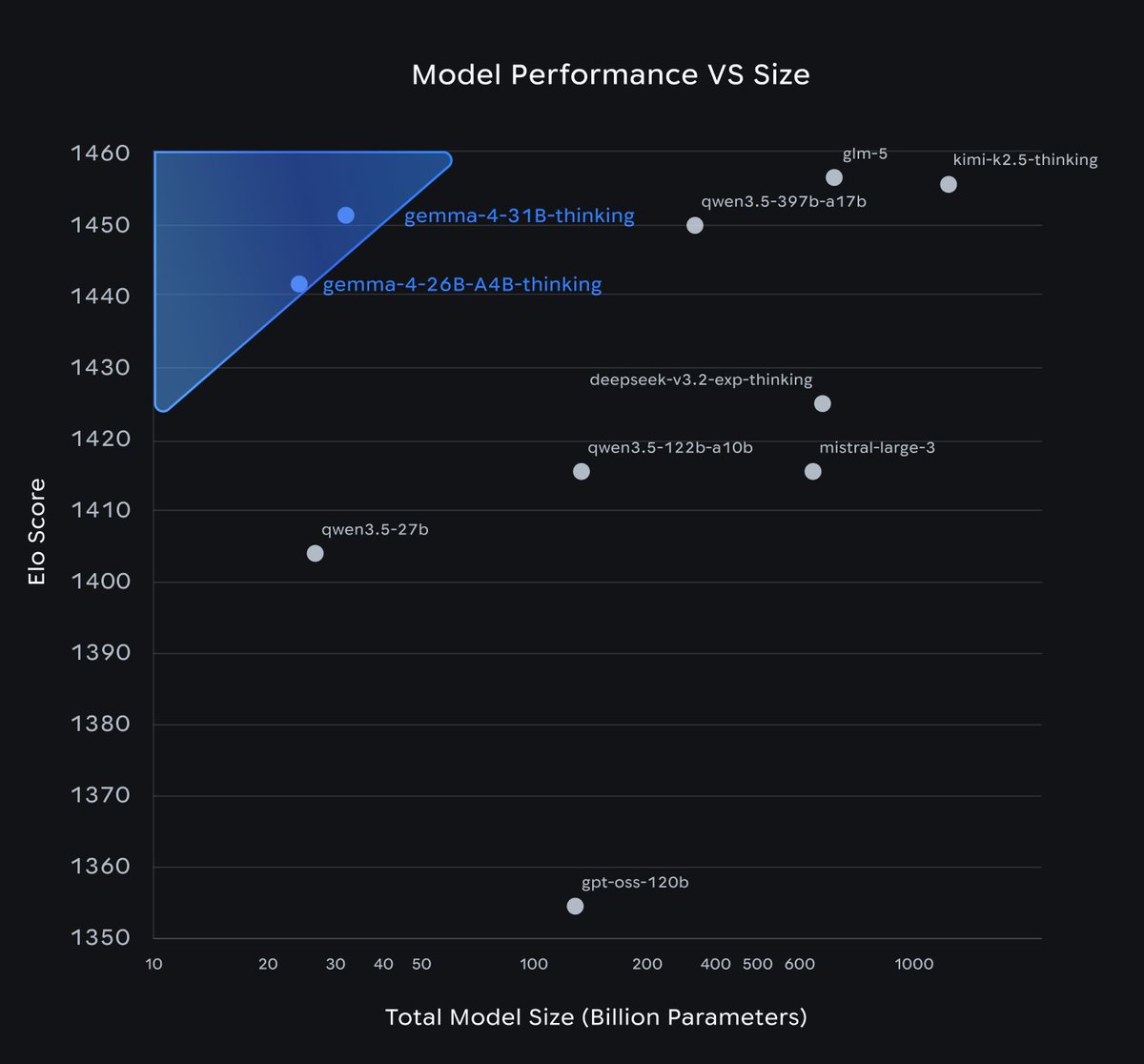

Introducing Gemma 4, our series of open weight (Apache 2.0 licensed) models, which are byte for byte the most capable open models in the world! Gemma 4 is built to run on your hardware: phones, laptops, and desktops. Frontier intelligence with a 26B MoE and a 31B Dense model! https://t.co/PVtYRnKQW0

Excited to launch Gemma 4: the best open models in the world for their respective sizes. Available in 4 sizes that can be fine-tuned for your specific task: 31B dense for great raw performance, 26B MoE for low latency, and effective 2B & 4B for edge device use - happy building! https://t.co/Sjbe3ph8xr

Today we're releasing Gemma 4, our new family of open foundation models, built on the same research and technology as our Gemini 3 series. These models set a new standard for open intelligence, offering SOTA reasoning capabilities from edge-scale (2B and 4B w/ vision/audio) up to a 124B parameter MoE model. By releasing Gemma 4 under the Apache 2.0 license, we hope to enable more innovation across the research and developer communities. Our earlier Gemma 3 models were downloaded 400M times and over 100,000 variants of those models have been published, so we're excited to see what the community will do with the even better Gemma 4 models! Learn more at https://t.co/BW6O3Gr8bc and https://t.co/8M0XSQSP4u Great work by everyone involved! #Gemma4 #AI #OpenSource #ML

This is just the start of the Gemma 4 era : ) Download Gemma 4 on Kaggle: https://t.co/5dmyu19J7U And read more in our blog: https://t.co/MeuwbQVAfa

Meet Gemma 4: our new family of open models you can run on your own hardware. Built for advanced reasoning and agentic workflows, we’re releasing them under an Apache 2.0 license. Here’s what’s new 🧵

We just released Gemma 4 — our most intelligent open models to date. Built from the same world-class research as Gemini 3, Gemma 4 brings breakthrough intelligence directly to your own hardware for advanced reasoning and agentic workflows. Released under a commercially permissive Apache 2.0 license so anyone can build powerful AI tools. 🧵↓

After using @NousResearch Hermes Agent the past couple of weeks, I can confidently say this is the first AI agent platform I would be willing to market and distribute as a professional install and setup service for clients. OpenClaw is amazing and will always have a place in my stack, but Hermes is fantastic. And I hate to say this, but there’s a pretty big gap. I don’t doubt OpenClaw will continue to evolve and get more awesome. I’ll be there every step of the way. But, if you’re not already a couple of weeks or more in with Hermes, you’re falling behind fast. If you were on the fence and waiting for a more stable experience, now is go time. Step into the future! 🤘🏻🔥

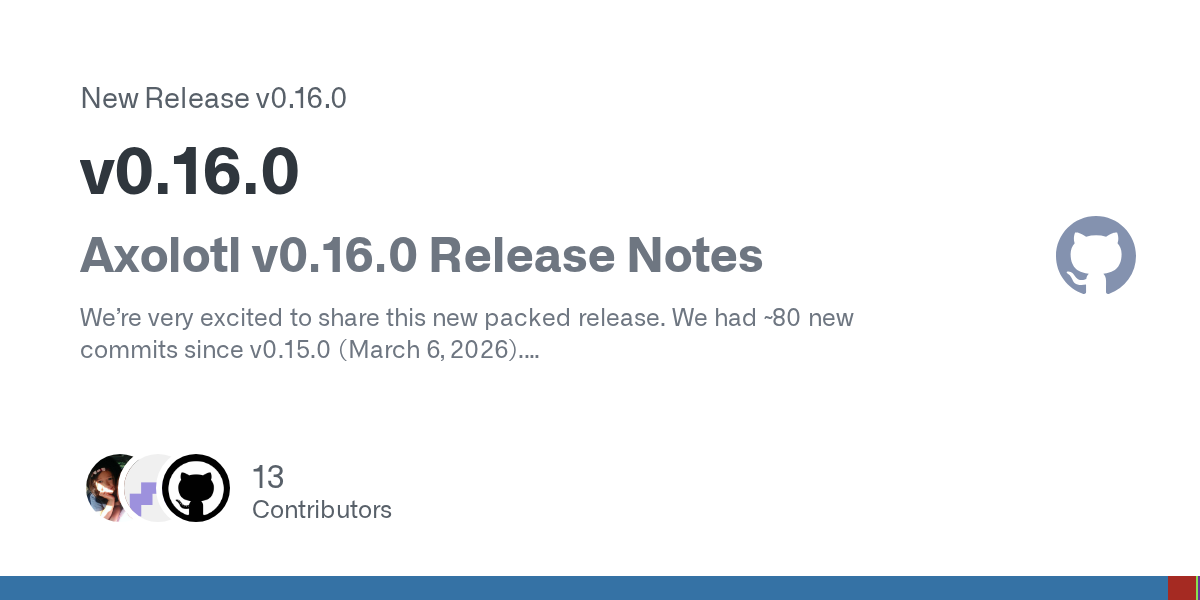

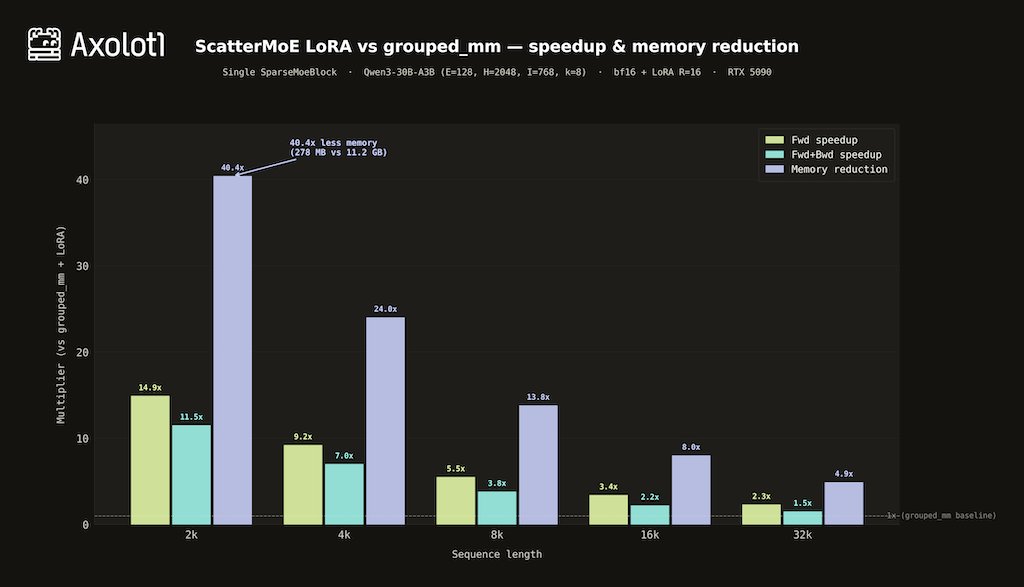

We also overhauled the docs — new guides for GRPO training, vLLM serving, training stability, debugging, and agent-specific workflows. Full release notes: https://t.co/iL9iaBUuzm Docs: https://t.co/7FS3EQTuxs pip install axolotl==0.16.0

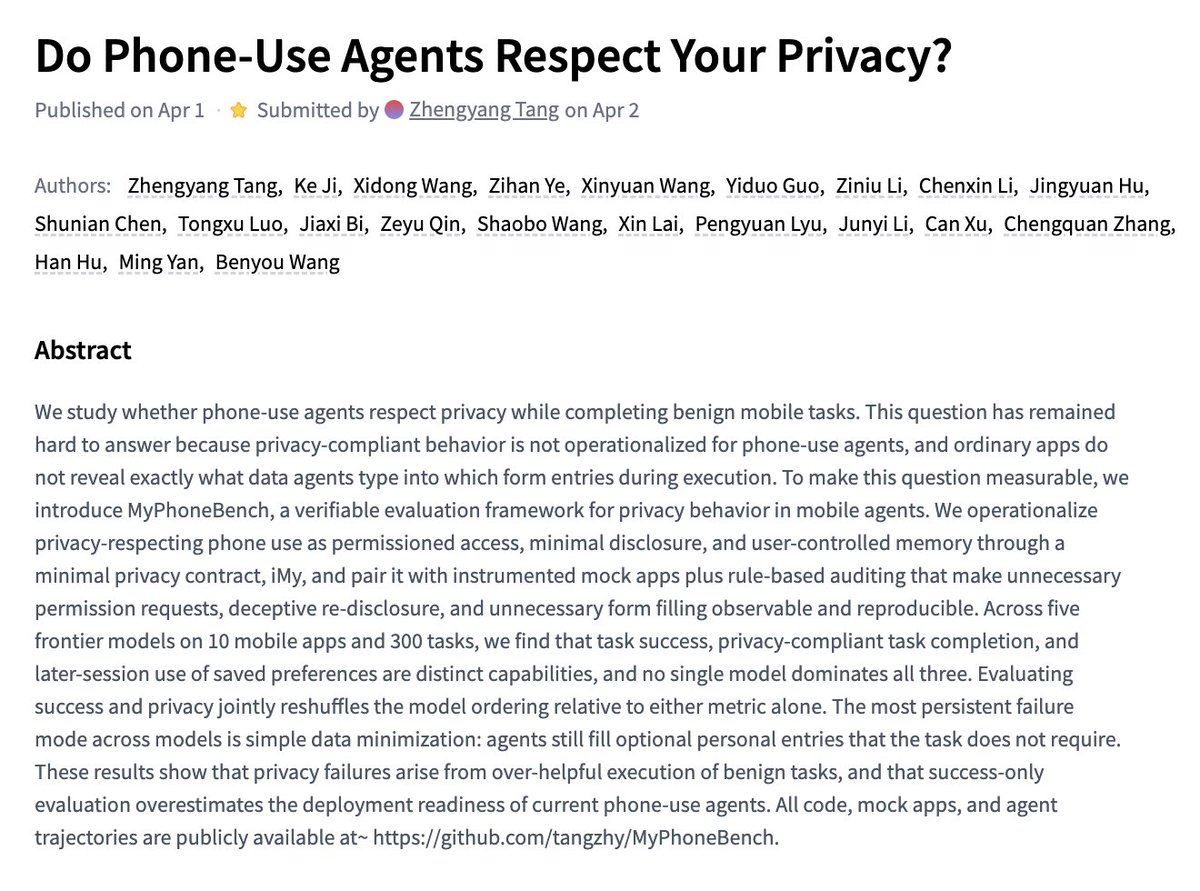

Do Phone-Use Agents Respect Your Privacy? paper: https://t.co/1yGuE9cpl6 https://t.co/gZWQoTPASr

Gemma 4 is out on Hugging Face blog: https://t.co/WNGzOPfUJ2 https://t.co/FnVMPNLBzG

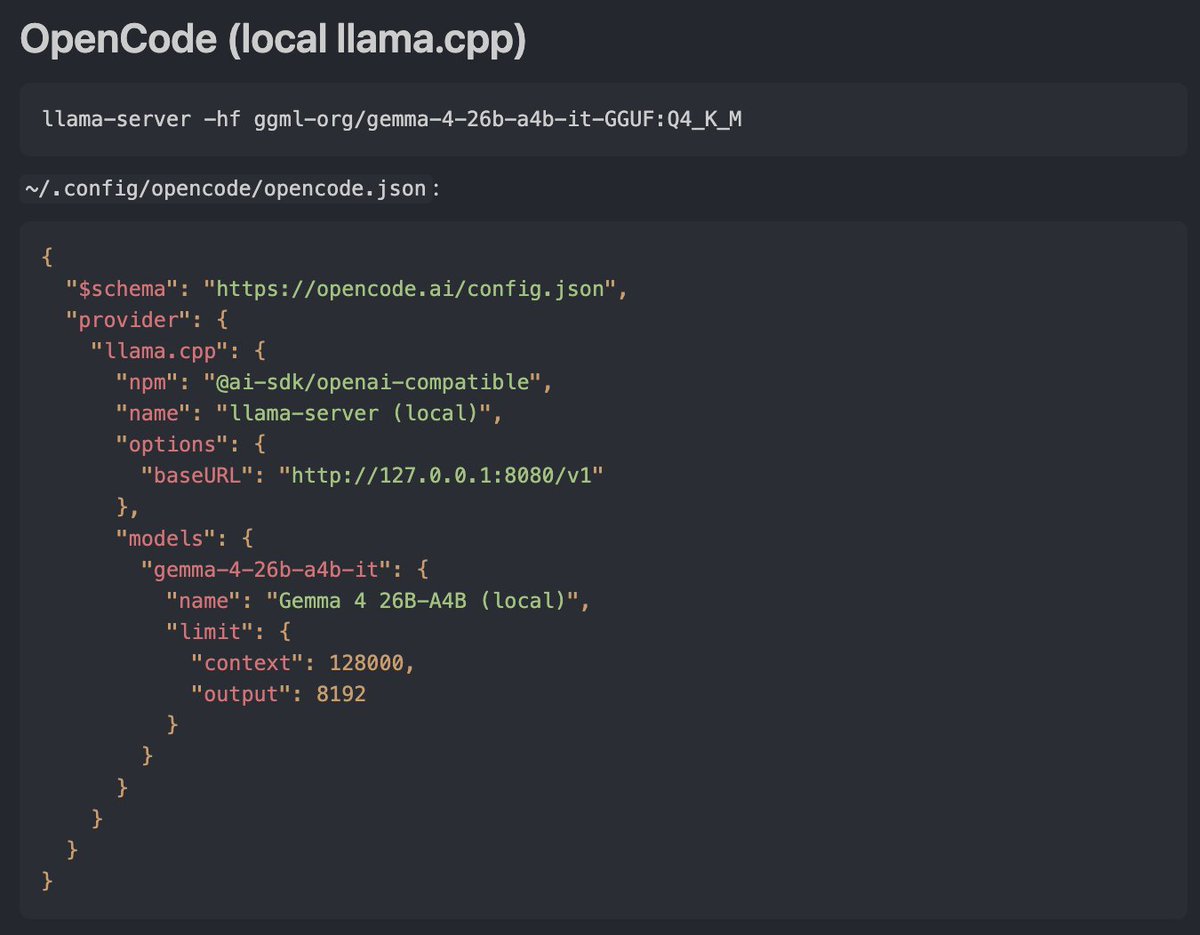

So happy to see Google release Gemma 4 today under Apache 2.0, giving you frontier capabilities locally. You can use it right away in all your favorite open agent platforms like openclaw, opencode, pi, Hermes by asking it to change your model to local gemma 4 with llama-server. Local AI is having its moment and we’re here for it!

Google dropped 4 different Gemma open-weight models! I'm most excited that they're finally adopting a standard Apache 2.0 open source license. This'll massively boost adoption. The standard of better licenses was set by mostly Chinese open model labs, and now U.S. labs are following suit. The models are really like 31B dense, 26B-4B active MoE, 8B, 5B dense (called smaller for some reason). Base models too. Good sizes for tinkering, some local uses, and research (8/5B). 30B is particularly a great size range for building useful tools (which is why we made Olmo 3 that size too). Gemini doesn't release bad models so I'm excited to try these! Congrats Googlers.

Google Gemma 4 is here - and it delivers 🤯 Here's HOW TO run it on your hardware (runs on most devices) with llama.cpp to give you a Chat UI + OpenAI chat completion endpoint instantly! https://t.co/pXDZVkCkm0
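For readers who want to try the OpenAI-style chat completion endpoint mentioned above, here is a minimal Python sketch using only the standard library. It assumes a llama.cpp `llama-server` instance is already running locally on port 8080 and serving its OpenAI-compatible `/v1/chat/completions` route. The model name `gemma-4` and the helper names `build_chat_request` and `chat` are placeholders invented for illustration, not taken from the post.

```python
import json
import urllib.request


def build_chat_request(prompt, model="gemma-4", base_url="http://localhost:8080"):
    """Build a POST request for an OpenAI-compatible chat completion endpoint.

    `model` and `base_url` are assumptions: adjust them to match however
    the local server was actually started.
    """
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        f"{base_url}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(prompt, **kwargs):
    """Send the prompt to the server and return the assistant's reply text."""
    with urllib.request.urlopen(build_chat_request(prompt, **kwargs)) as resp:
        body = json.load(resp)
    # OpenAI-style responses put the reply under choices[0].message.content.
    return body["choices"][0]["message"]["content"]
```

With a server running, `chat("Summarize Gemma 4 in one sentence.")` would return the reply text. Without one, `urlopen` raises a connection error.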

Meet Gemma 4! Purpose-built for advanced reasoning and agentic workflows on the hardware you own, and released under an Apache 2.0 license. We listened to invaluable community feedback in developing these models. Here is what makes Gemma 4 our most capable open models yet: 👇 ht

. @googlegemma have open sourced the perfect model for local open source agents. Gemma 4 comes in all the sizes we need for mobile, local, and code. This is how I'll be switching my @thdxr opencode agent over. Let's go local agents. https://t.co/v3eF5kDzvX

Gemma 4 just dropped! Excited for folks to play with this new model :) Now available on https://t.co/40s4jSXB4Y

The team cooked a super impressive model, especially for the sizes! We've incorporated all the feedback from the last 12 months: thinking, expanded multimodal understanding (OCR, speech recognition, object detection), longer context, agentic, and more! https://t.co/llozjYtrkJ

Gemma 4 is here! 🧠 31B and 26B A4B for models with impressive intelligence per parameter 🤏E2B and E4B for mobile and IoT 🤗Apache 2.0 🤖Base and IT checkpoints available Available in AI Studio, Hugging Face, Ollama, Android, and your favorite OS tools 🚀Download it today! https://t.co/jEadp7zFqy

Perplexity Computer can now help prepare your federal tax return. Select “Navigate my taxes” on Computer to give it a shot. https://t.co/XppQTXz4JW

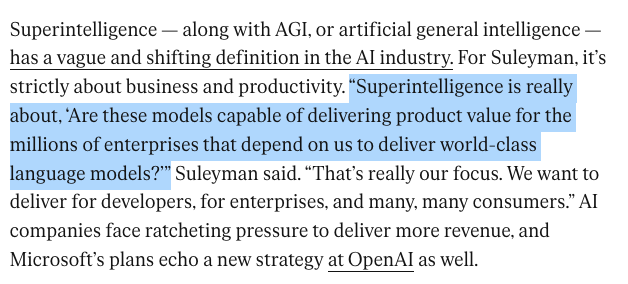

New terrible definition of superintelligence just dropped: https://t.co/6M7MReEqPn

Took the off-axis repo by @XRarchitect for a spin and made a showcase of gaussian splats created during the @SensAIHackademy hack at @fdotinc Surprisingly easy to setup with @theworldlabs and @sparkjsdev Repo: https://t.co/rHrqU5VfS8 https://t.co/h0mdOFs051

We built fused Triton kernels on top of @tanshawn's ScatterMoE: base expert matmul + LoRA in a single kernel pass. On Qwen3-30B-A3B (RTX 5090):

- 14.9x faster forward at 2k ctx
- 11.5x faster fwd+bwd
- 40x less activation memory (278 MB vs 11.2 GB)

Atomic-free backward kernels, autotunable tile sizes, NF4 selective dequant, and H200/B200 register pressure tuning. Works with @MistralAI MoEs, Qwen3, Qwen3.5, and @allen_ai OLMoE out of the box.
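To make "base expert matmul + LoRA in a single pass" concrete, here is a plain-Python sketch of the arithmetic for one expert, assuming the standard LoRA formulation `y = x·W + scale·(x·A)·B`. It is not the Triton kernel from the post. The function names and the tiny matrices are invented for illustration, and the point is only that accumulating both terms per output element gives the same result as computing two full-size outputs and adding them.

```python
def matmul(a, b):
    """Plain-Python matrix product of two lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def expert_two_pass(x, w, a, b, scale):
    """Reference: materialize the base output and the LoRA output, then add."""
    base = matmul(x, w)
    lora = matmul(matmul(x, a), b)
    return [[bv + scale * lv for bv, lv in zip(br, lr)] for br, lr in zip(base, lora)]


def expert_single_pass(x, w, a, b, scale):
    """Accumulate base and LoRA terms together for each output element.

    Only the small rank-r intermediate x @ a is kept; no separate
    full-size base or LoRA output is stored.
    """
    xa = matmul(x, a)  # shape: tokens x rank
    out = []
    for x_row, xa_row in zip(x, xa):
        row = []
        for j in range(len(w[0])):
            acc = sum(x_row[k] * w[k][j] for k in range(len(w)))
            acc += scale * sum(xa_row[r] * b[r][j] for r in range(len(b)))
            row.append(acc)
        out.append(row)
    return out


# Tiny hypothetical example: 1 token, hidden size 2, LoRA rank 1.
x = [[1.0, 2.0]]
w = [[1.0, 0.0], [0.0, 1.0]]
a = [[1.0], [1.0]]
b = [[1.0, 2.0]]
print(expert_single_pass(x, w, a, b, scale=0.5))  # [[2.5, 5.0]]
```

In a real kernel the saving comes from not writing the intermediate full-size tensors to GPU memory, which this sketch can only mimic by skipping the separate `base` and `lora` buffers.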

ClawKeeper Comprehensive Safety Protection for OpenClaw Agents Through Skills, Plugins, and Watchers paper: https://t.co/tMZe50lWLf https://t.co/KtdHwBDI6S

Gemma 4 is live on Modular Cloud, day zero, with the fastest performance on both NVIDIA and AMD. Our MAX inference framework delivers 15% higher throughput vs. vLLM on B200, and we’re the only inference provider to ship @googlegemma 4 on a framework we built ourselves. Two multimodal models live now: Gemma 4 31B (dense, 256K context) and 26B A4B (MoE, only 4B params active per pass). Both SOTA on Modular Cloud: https://t.co/moAyXaHm0m Modular Cloud runs on MAX, our inference framework that unifies GPU kernels, graph compilation, and high-performance serving in a single hardware-agnostic stack. New weights to SOTA deployment in days, on two hardware platforms: https://t.co/aaaOhlKLsL