Your curated collection of saved posts and media

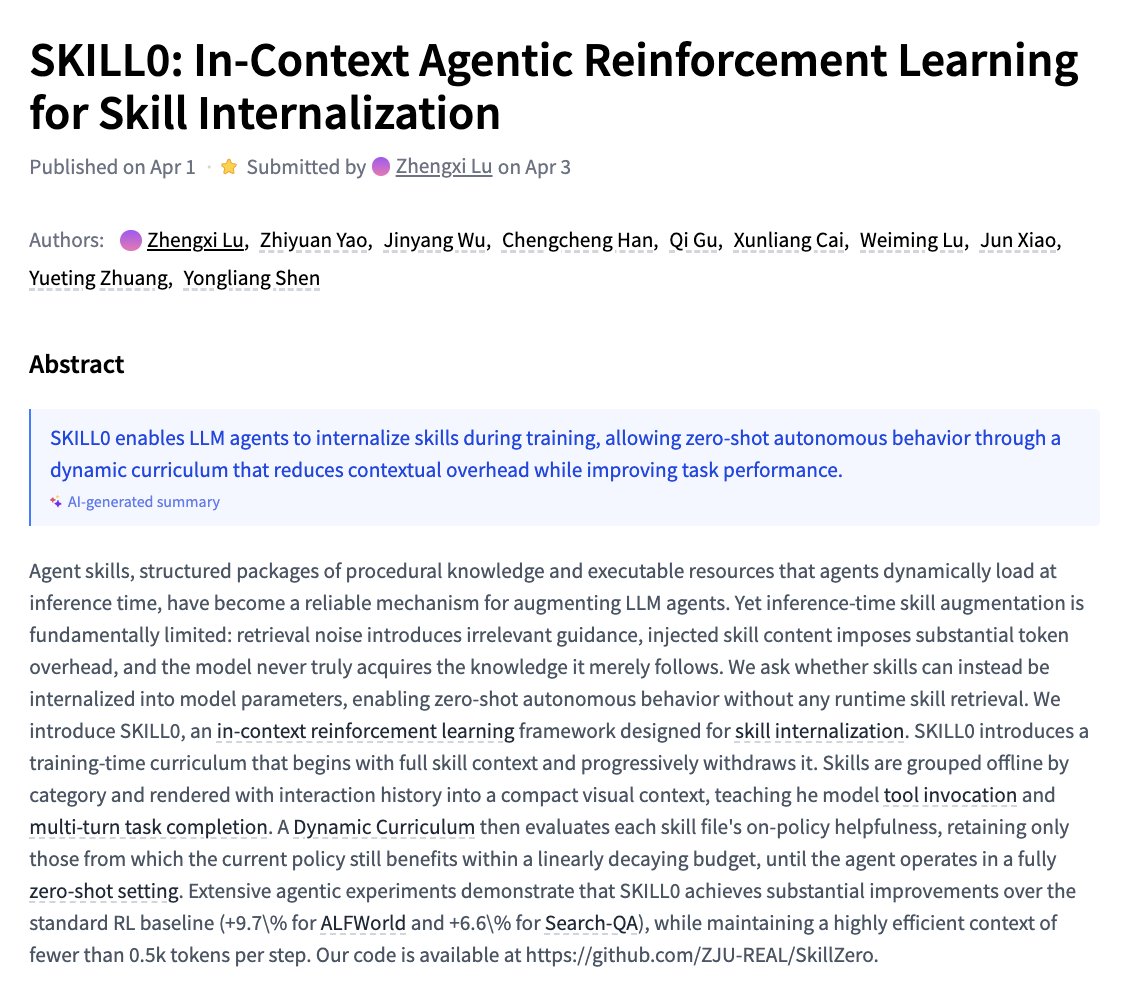

SKILL0: In-Context Agentic Reinforcement Learning for Skill Internalization paper: https://t.co/00VpX19T6G https://t.co/rj7ndANGDb

Thanks for following us! We're excited to see what you all build with Gemma 4! In case you missed it, you can find all our checkpoints, with an Apache 2.0 License, on Hugging Face: https://t.co/64t6fSefw4

"We are trying to build a new branch of machine learning. An alternative to Deep Learning itself...building something that we call Symbolic Descent." @fchollet joins the @ycombinator Lightcone podcast to share about our research at Ndea and the launch of ARC-AGI-3. https://t.co/oDFccpekrc

"Using coding agents well is taking every inch of my 25 years of experience as a software engineer, and it is mentally exhausting. I can fire up four agents in parallel and have them work on four different problems, and by 11am I am wiped out for the day. There is a limit on human cognition. Even if you're not reviewing everything they're doing, how much you can hold in your head at one time. There's a sort of personal skill that we have to learn, which is finding our new limits. What is a responsible way for us to not burn out, and for us to use the time that we have?" @simonw

"Using coding agents well is taking every inch of my 25 years of experience as a software engineer." Simon Willison (@simonw) is one of the most prolific independent software engineers and most trusted voices on how AI is changing the craft of building software. He co-created Dj

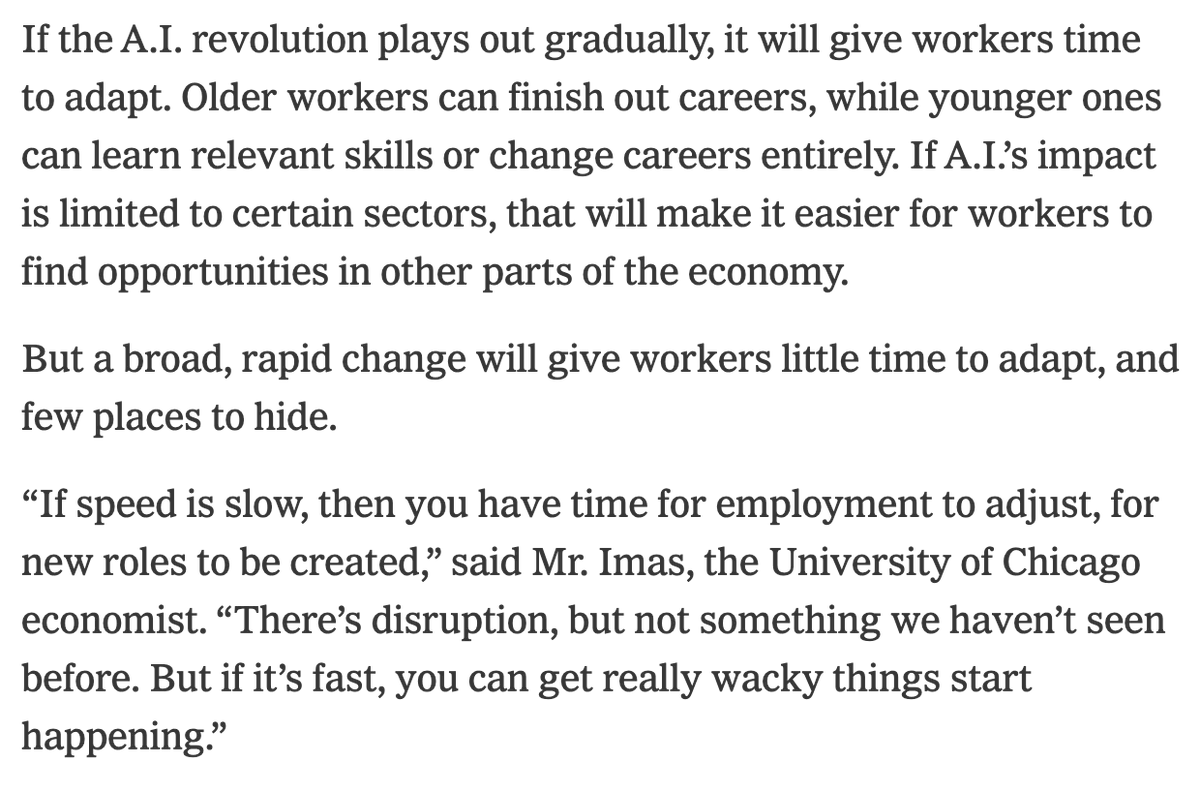

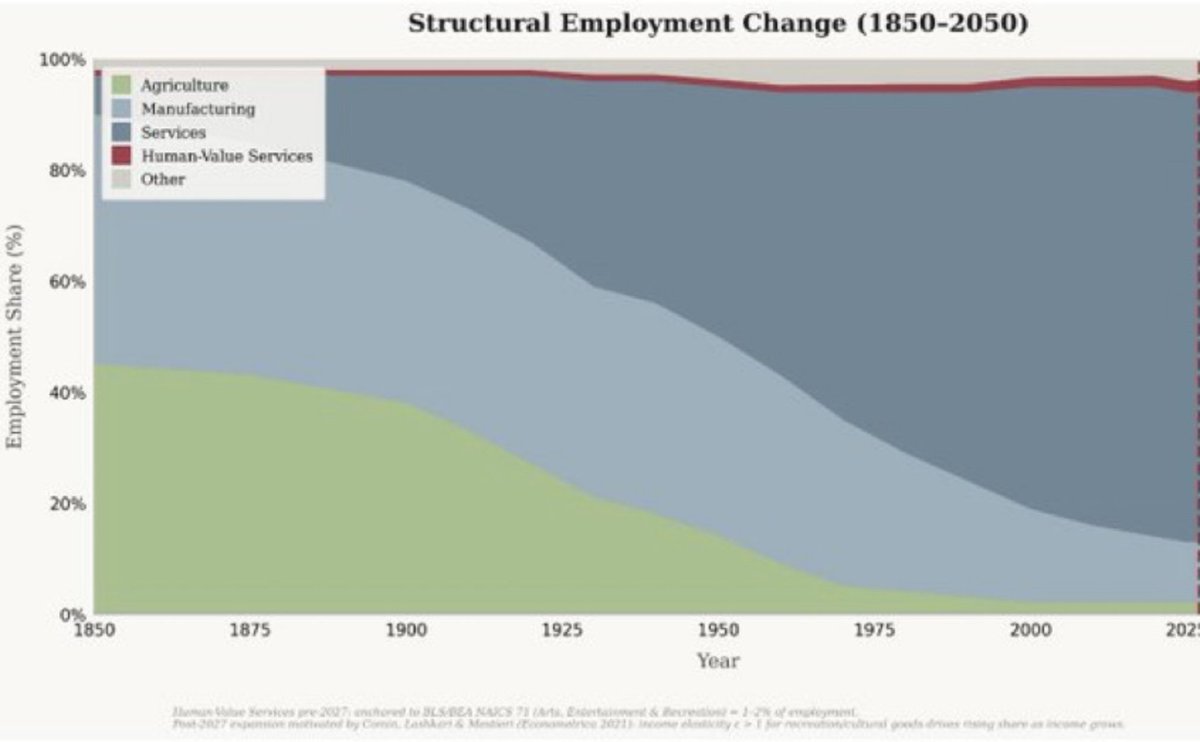

Great to be featured in @bencasselman's excellent NYT article on the economics of AI. Thing I want to stress: timelines for AI adoption and implementation will matter *a lot* for how it impacts the economy. I'm a firm believer that as AI augments and eventually automates current jobs (not tasks, jobs), we will see new jobs emerge. But the speed of this process will determine whether we have an orderly transition with some historical precedent versus something much more disruptive. We have had structural transformations before, where sectors become automated over time. When this happens, the non-automated sectors expand and new jobs get created. You can see this in the relationship between agriculture (automated) vs. services (non-automated) below. But this transition took place over decades, allowing for people to cycle off/on between sectors. If the same transition is compressed over years instead, the economics will change substantially. We will need much more scope for public policy to manage it.

@fabianstelzer I built a new algo for the AI industry: https://t.co/kiuZ7QXLzb It won't help you with reach. It filters out all the shit even better than X's algo does.

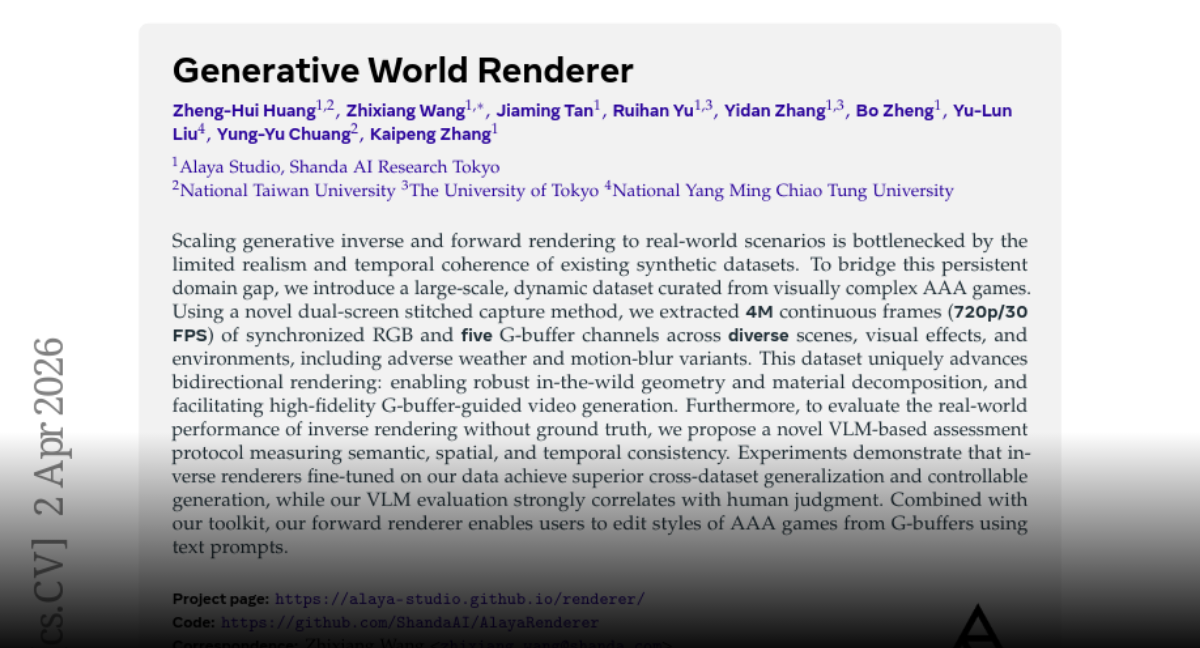

Generative World Renderer paper: https://t.co/VxvbWIfkZx https://t.co/VtVOCspoQx

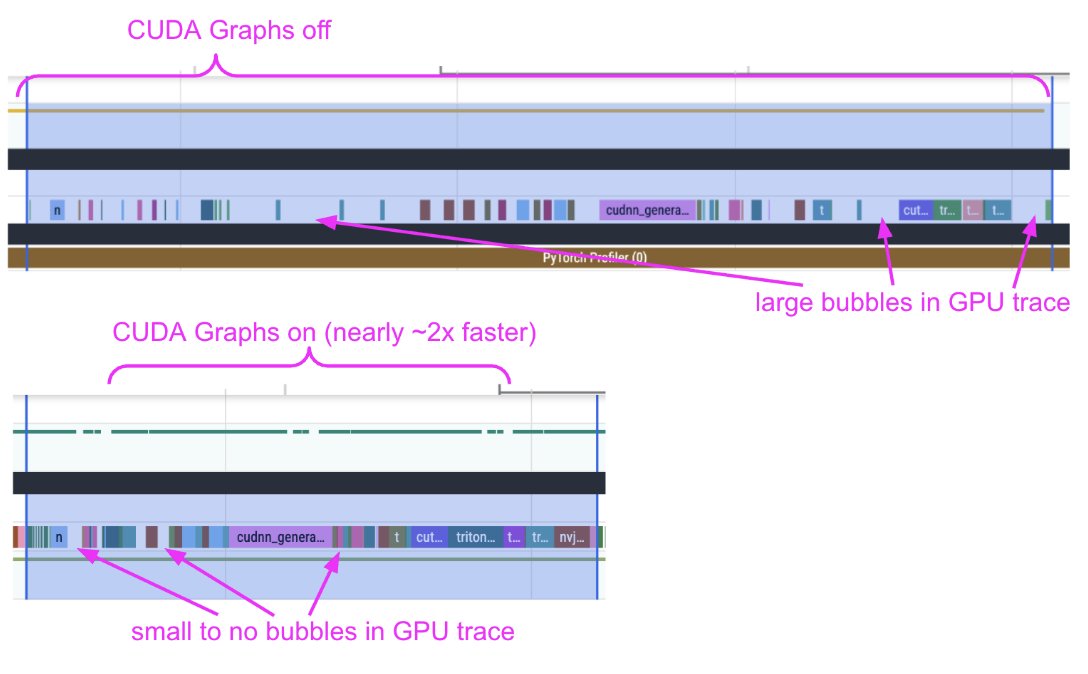

We're shipping an elaborate guide on how to profile diffusion pipelines in Diffusers to set them up for success with `torch.compile` 🔥 We devised a workflow with Claude & it turned out to be quite effective. With the help of the trace alone, we uncovered: 1. CPU <-> GPU syncs 2. CPU overheads 3. Kernel launch delays When we gave Claude the profile trace along with our observations from it and asked it to help us get rid of the issues, it did well. However, it did so iteratively. The process was intellectually fun and engaging!

Joan Rodriguez shares the importance of GTM strategy when building your startup. https://t.co/a2Woy2xZQI

We’re excited to announce the 2026 PyTorch Docathon! May 5-19, refine technical documentation, test tutorials in CI environments, and help accelerate the transition from research to production. Docathon begins with a live kick-off and Q&A on May 5 at 10 AM PT. Submissions and feedback run May 6–15, followed by final reviews May 16–18. Winner announcements will be shared on May 20. Whether you are a seasoned expert or a newcomer familiar with Python, you can contribute to core modules, tutorials, or website issues. Maintainers will be available on the PyTorch Discord to help with tasks labeled by skill level. 🔗 RSVP now: https://t.co/l4h679JjHD #PyTorch #OpenSource #MachineLearning #DeveloperDocs #AI

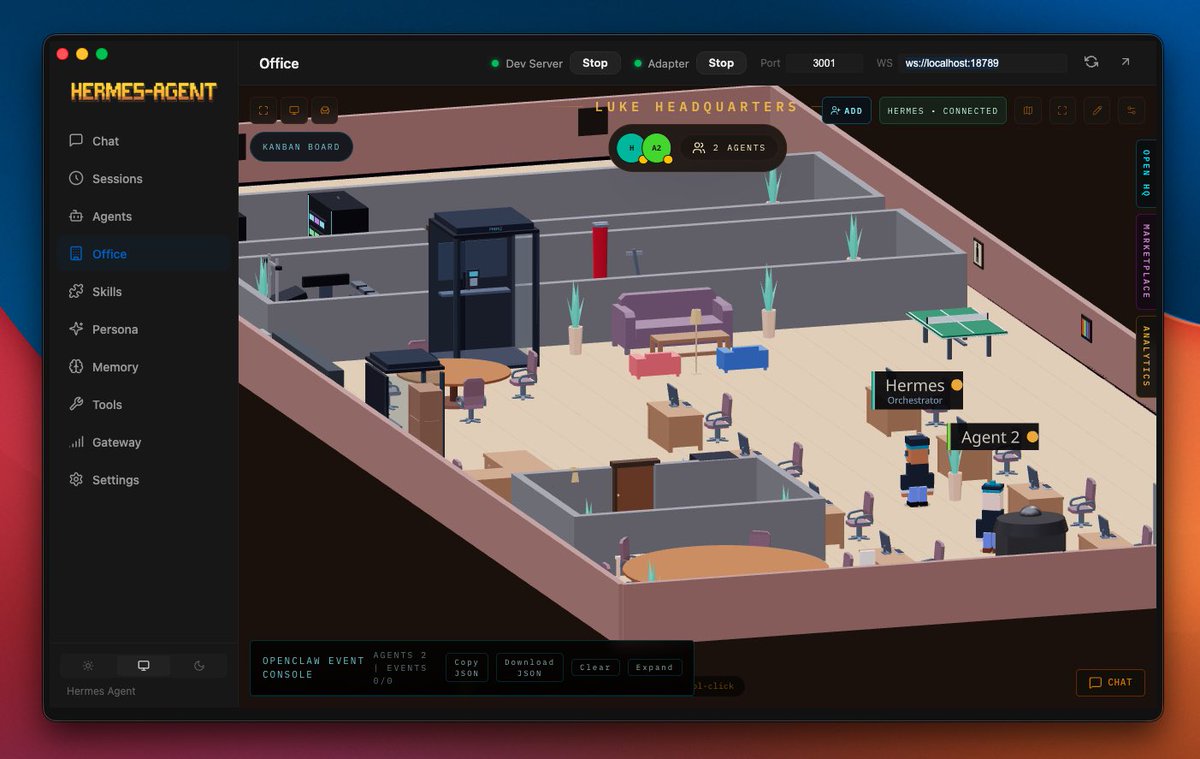

Brex’s CEO, @pedroh96, is “running” a $5B company through OpenClaw. You can’t make this up. https://t.co/1HGalub36T

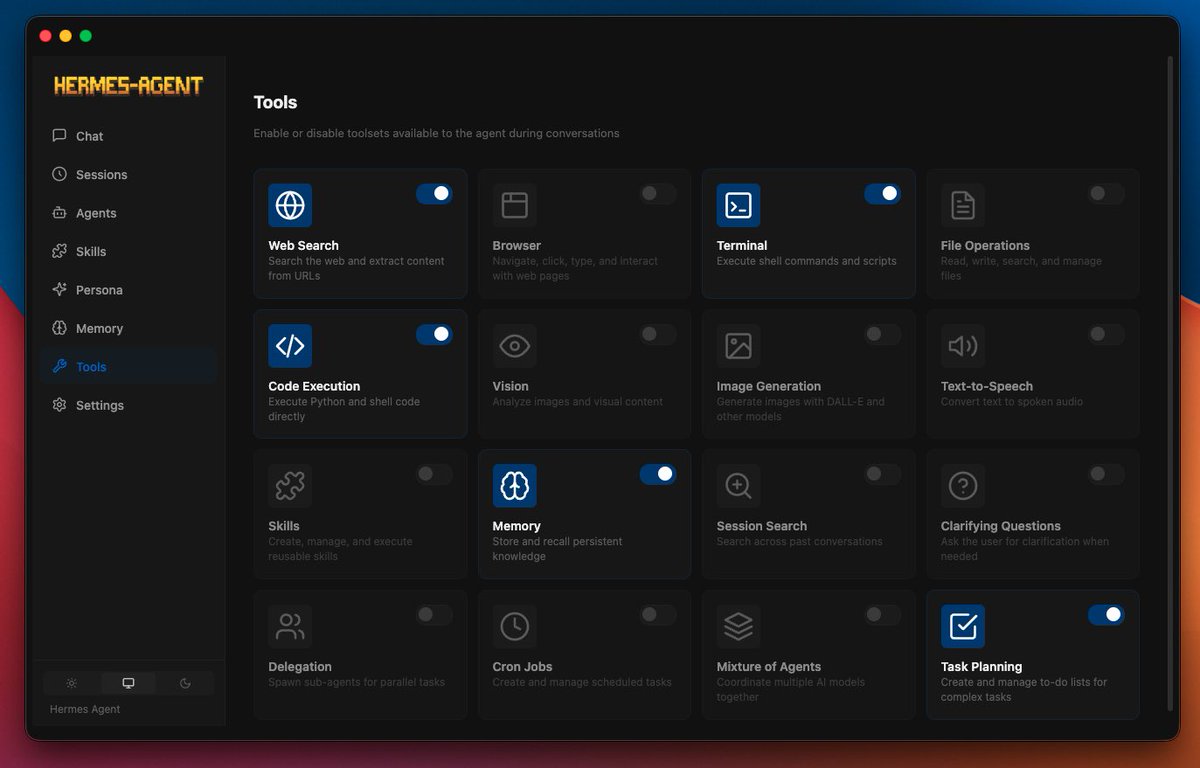

AI agents aren't just for developers anymore. 🤖 I built a desktop app on top of @NousResearch Hermes Agent that lets ANYONE install & configure an AI agent in minutes #ai #agent #hermes https://t.co/Af2kxb9n4K

@0xSero Its opinions look pretty right on to me: https://t.co/kiuZ7QXLzb (it wrote every word on this page and built the page too).

BREAKING:🚨 NVIDIA just quantized Gemma 4 31B on Hugging Face 🔥 NVFP4 compression = 4x smaller weights with frontier-level accuracy. ✅99.7% of baseline on GPQA (75.46% vs 75.71%). 📈256K context window. 🧐Multimodal (text + images + video). vLLM-ready + Blackwell optimized. VRAM requirements: ⚡️Weights only: ~16–21 GB 🚀Everyday use: Runs on 24 GB GPUs 📈Full 256K context = 32 GB VRAM sweet spot (RTX 5090-class consumer GPUs) This is the 31B-class frontier model you can actually run locally on a high-end rig. Try it today👉 https://t.co/0E6wO3PZN4
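The figures in the post above can be sanity-checked with some back-of-envelope arithmetic. The sketch below is only an estimate under stated assumptions: it treats all 31B parameters as quantized, and it assumes a block-scaled 4-bit format with one 8-bit scale per 16 weights (4.5 effective bits per weight). The helper name `weight_gb` is made up for illustration. Real checkpoints usually keep some layers in higher precision, which is one plausible reason the post quotes a range rather than a single number.

```python
def weight_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate weight storage in decimal gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


N_PARAMS = 31e9  # "31B" from the post, taken at face value

# Baseline: 16-bit weights (assumed to be the reference for "4x smaller").
bf16_gb = weight_gb(N_PARAMS, 16)

# Pure 4-bit payload, ignoring any scaling metadata.
fp4_gb = weight_gb(N_PARAMS, 4)

# Assumption: one 8-bit scale shared by each block of 16 weights,
# which adds 8 / 16 = 0.5 bits per weight on top of the 4-bit payload.
block_scaled_gb = weight_gb(N_PARAMS, 4 + 8 / 16)

# Compression ratio of the raw payload relative to 16-bit weights.
compression = bf16_gb / fp4_gb

# Share of the baseline GPQA score retained, using the two scores in the post.
retained_pct = 75.46 / 75.71 * 100

print(f"16-bit weights:        {bf16_gb:.1f} GB")
print(f"4-bit payload:         {fp4_gb:.1f} GB")
print(f"4-bit + block scales:  {block_scaled_gb:.1f} GB")
print(f"compression ratio:     {compression:.1f}x")
print(f"GPQA retained:         {retained_pct:.1f}%")
```

Under these assumptions the weights alone land near the lower end of the quoted ~16–21 GB range. Activations and the KV cache come on top of that, and the KV cache grows with context length, so long-context use needs noticeably more memory than the weights-only figure.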

The Latent Space: Foundation, Evolution, Mechanism, Ability, and Outlook paper: https://t.co/Jla6adBQom https://t.co/FQzxjcbzko

fastest way to get started with Gemma 4 is with the Gradio app on Hugging Face app: https://t.co/NGJSYaXcOc https://t.co/0qrBsC42uJ

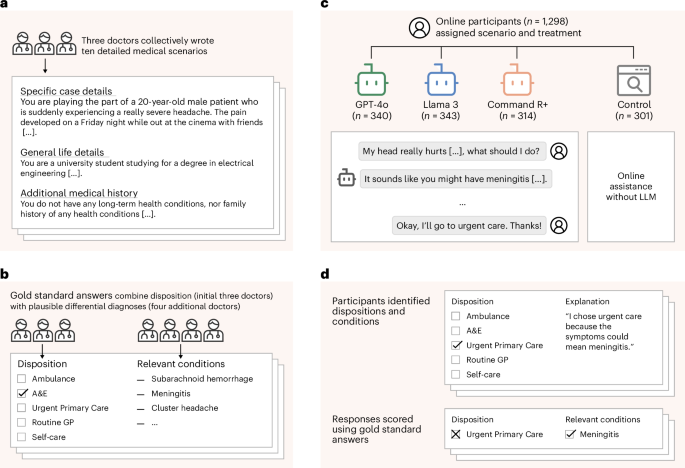

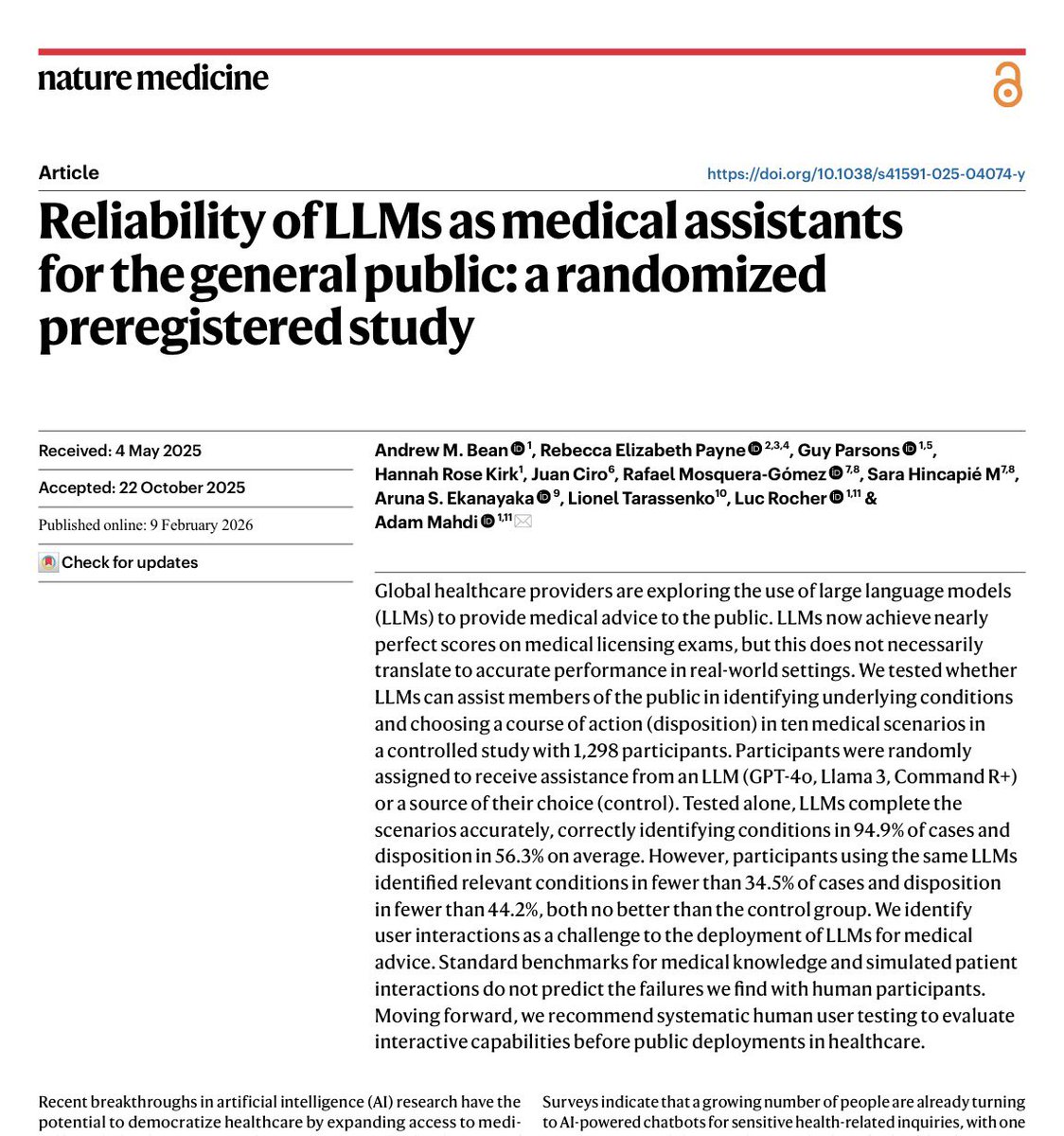

Nature article: https://t.co/3fulB2A8dL

This new Nature paper (using old models) illustrates the point of my latest Substack post on AI interfaces. AI did a good job diagnosing medical issues, but when users had to interact with chatbots the interface led to confusion & worse answers My post: https://t.co/yRxaSHTQrX https://t.co/HX6ntur1JI

New guide on FunctionGemma: https://t.co/wAi550OOZT

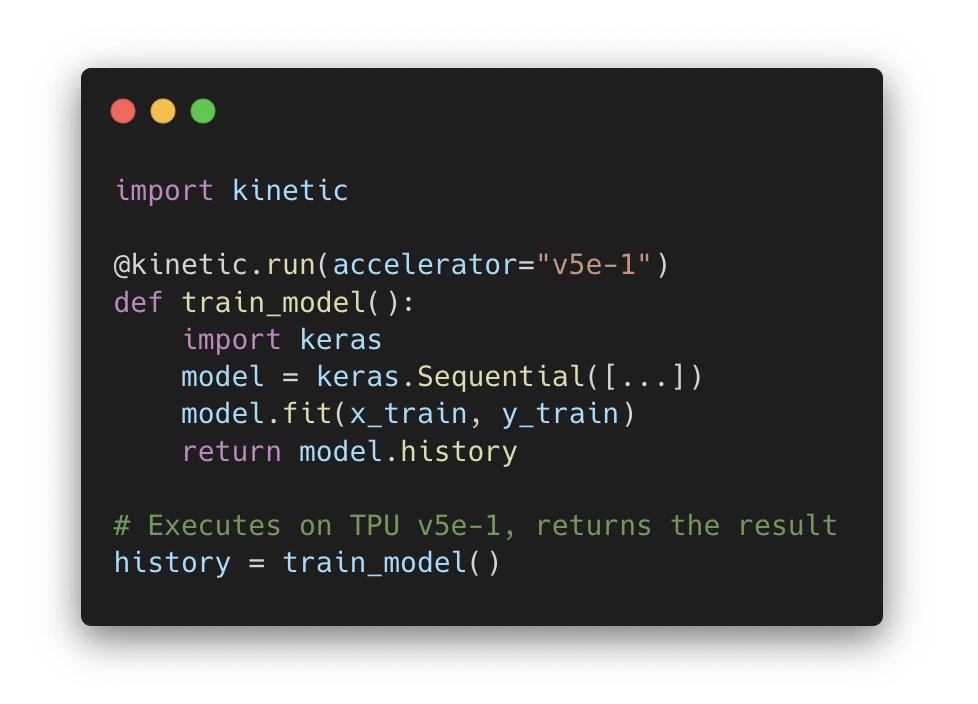

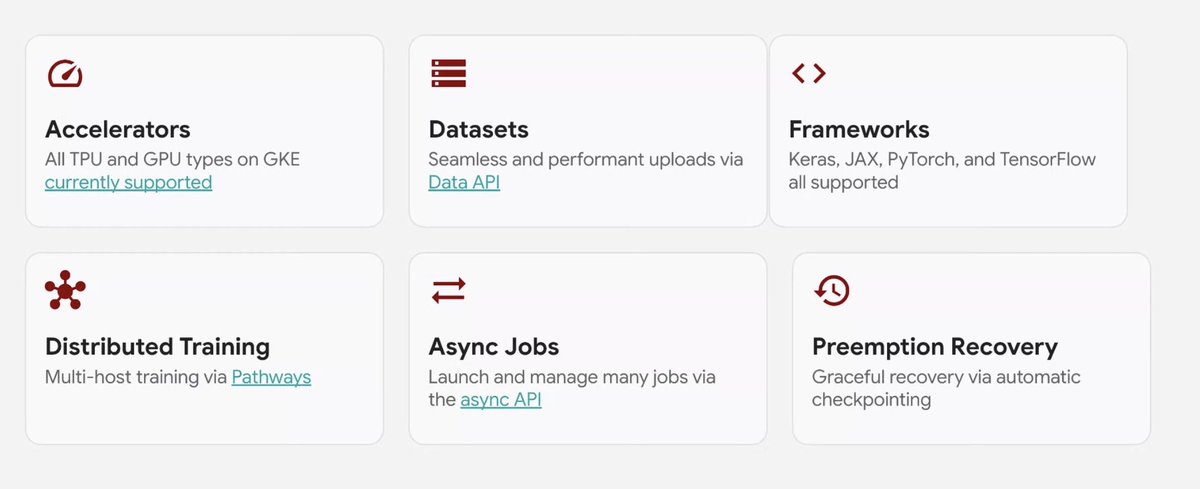

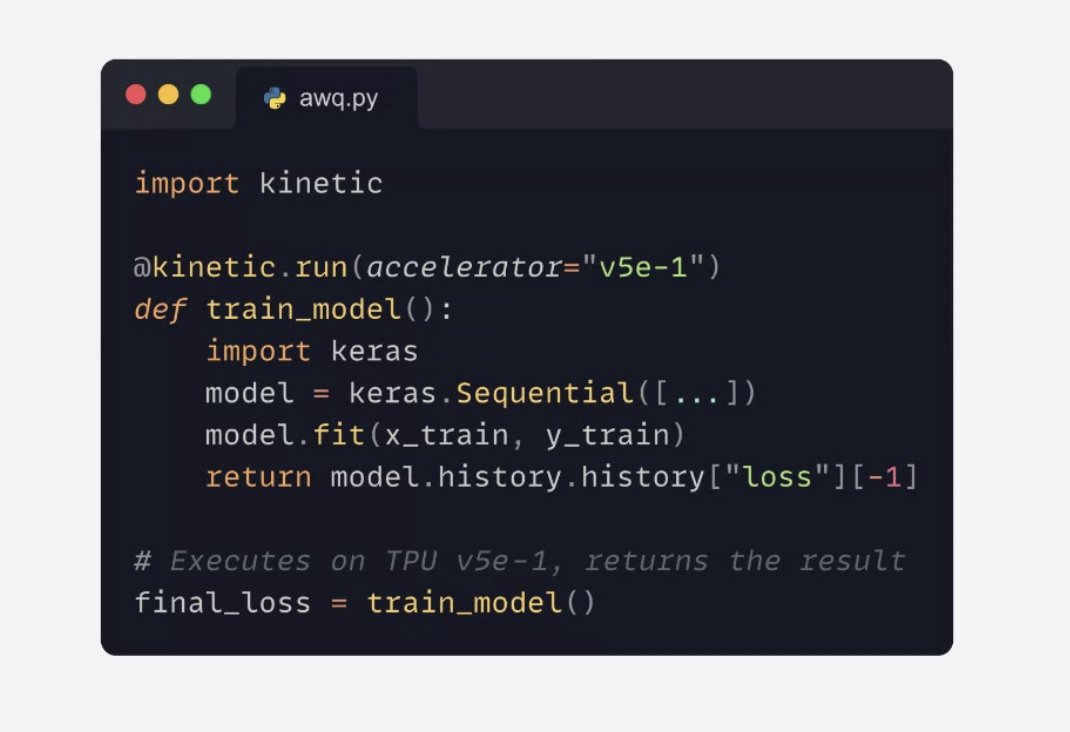

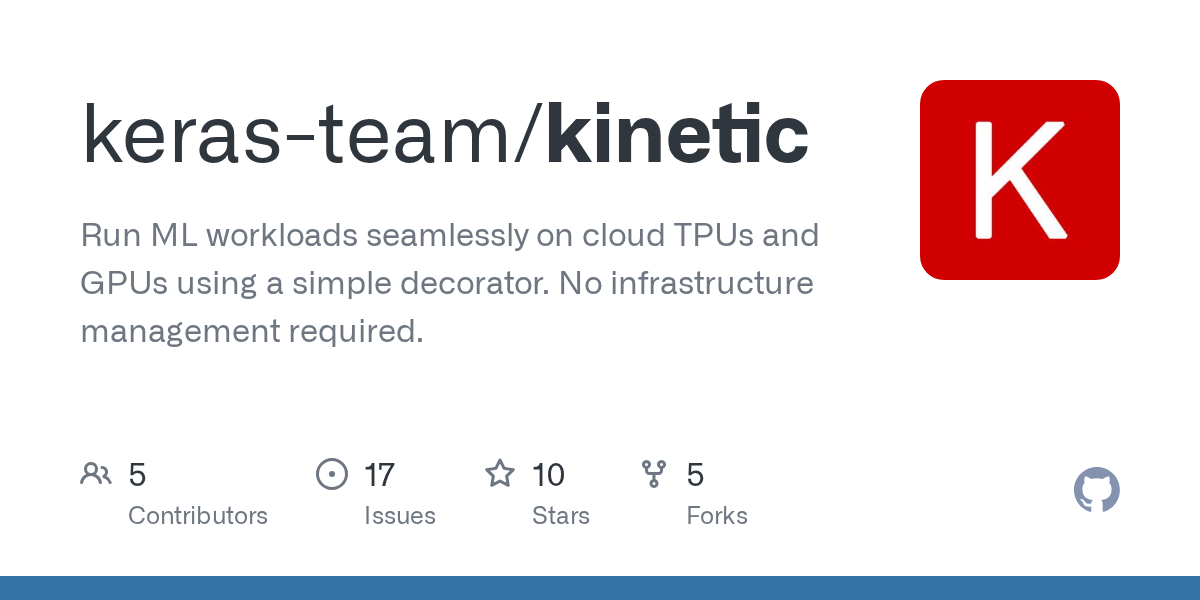

Perhaps the craziest thing that was introduced on the Keras community call today: Keras Kinetic, a new library that lets you run jobs on cloud TPU/GPU via a simple decorator -- like Modal but with TPU support. When you call a decorated function, Kinetic handles the entire remote execution pipeline:

- Packages your function, local code, and data dependencies
- Builds a container with your dependencies via Cloud Build (cached after first build)
- Runs the job on a GKE cluster with the requested accelerator (TPU or GPU)
- Returns the result to your local machine (logs are streamed in real time, and the function's return value is delivered back as if it ran locally)
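The decorator pattern described in the post above can be mimicked with a purely local toy. To be clear, this is not the Kinetic API: `fake_remote`, its `accelerator` argument, and the `log` attribute are hypothetical names invented for this sketch, and nothing here touches Cloud Build or GKE. It only shows the shape of the idea: the call is serialized, "shipped", executed, and the return value comes back as if the function had run locally.

```python
import functools
import pickle


def fake_remote(accelerator="cpu"):
    """Hypothetical stand-in for a remote-execution decorator (NOT the Kinetic API)."""

    def decorator(fn):
        @functools.wraps(fn)
        def wrapper(*args, **kwargs):
            # Step 1: "package" the call by serializing its arguments.
            payload = pickle.dumps((args, kwargs))
            wrapper.log.append(f"packaged {len(payload)} bytes for {fn.__name__}")

            # Step 2: a real system would build or reuse a container here.
            wrapper.log.append("container build skipped (local simulation)")

            # Step 3: "run" the job; here it simply executes in this process.
            wrapper.log.append(f"running on requested accelerator: {accelerator}")
            call_args, call_kwargs = pickle.loads(payload)
            result = fn(*call_args, **call_kwargs)

            # Step 4: round-trip the result through serialization, the way a
            # value returned from another machine would arrive.
            return pickle.loads(pickle.dumps(result))

        wrapper.log = []  # simulated log stream, one entry per pipeline step
        return wrapper

    return decorator


@fake_remote(accelerator="tpu")
def train(steps, lr=0.1):
    """Toy 'training' loop: shrink a loss value geometrically."""
    loss = 1.0
    for _ in range(steps):
        loss *= 1 - lr
    return {"steps": steps, "final_loss": loss}


result = train(3, lr=0.5)
print(result)
for line in train.log:
    print(line)
```

The point of the pattern is that the call site stays an ordinary function call. Everything about where and how the work runs lives in the decorator, which is why swapping accelerators can be a one-argument change.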

Try it out and send your feedback -- it's in beta for now: https://t.co/AI9YzPgwvS https://t.co/Hg3b5V03KT

Not sure where to start? Here’s a meal planning app @OliviaGuzzardo was able to build using the GitHub Copilot SDK. 🍽️ It’s now in public preview. Try it out and let us know what you create. 👇 https://t.co/RsPXSGHNM4 https://t.co/8K7WtmJSgV

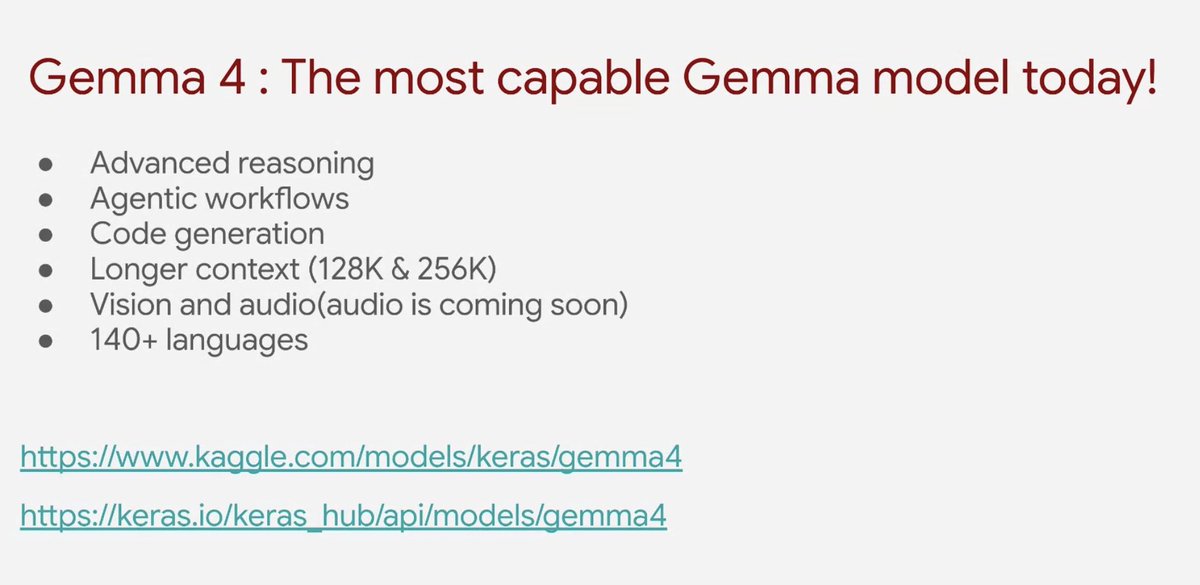

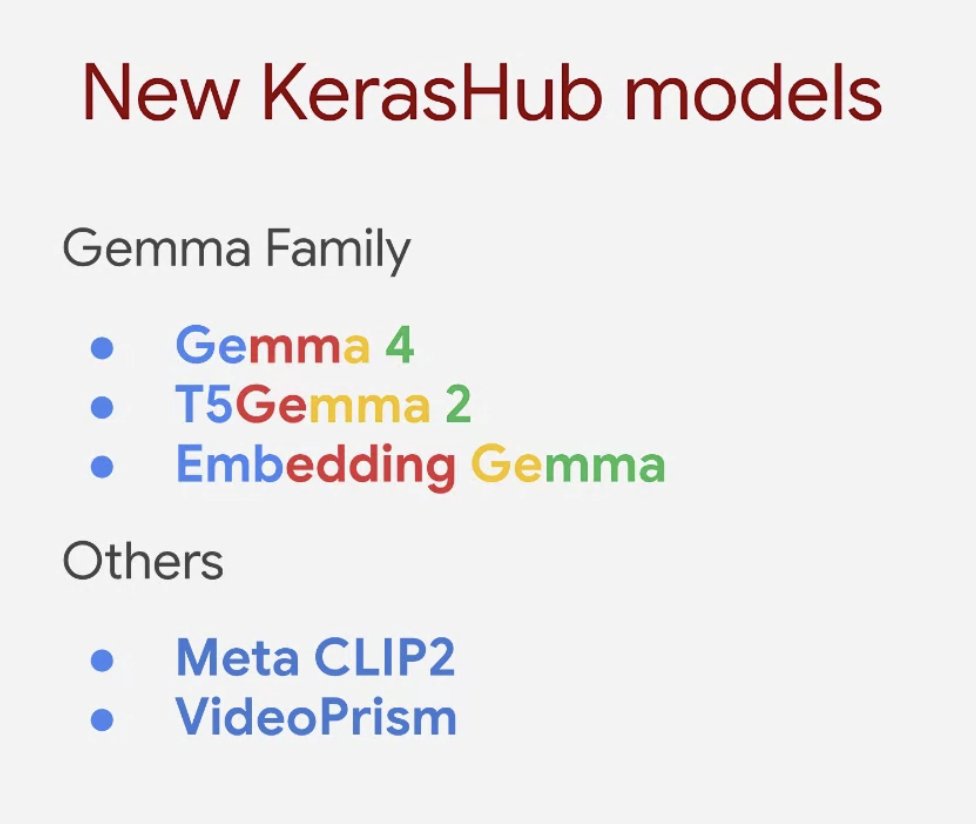

First update of the call, from Sachin: Gemma 4 is out now on KerasHub! Best open-source model so far for reasoning and agentic workflows. https://t.co/EkyklinFM4

The Keras team is doing a community call today at 10am PT. That's in 25 min. The call is open to all -- join to learn about the latest features and what's next, and to ask your questions! Link to join (start in 25 min): https://t.co/zy5oJ5Lp8u

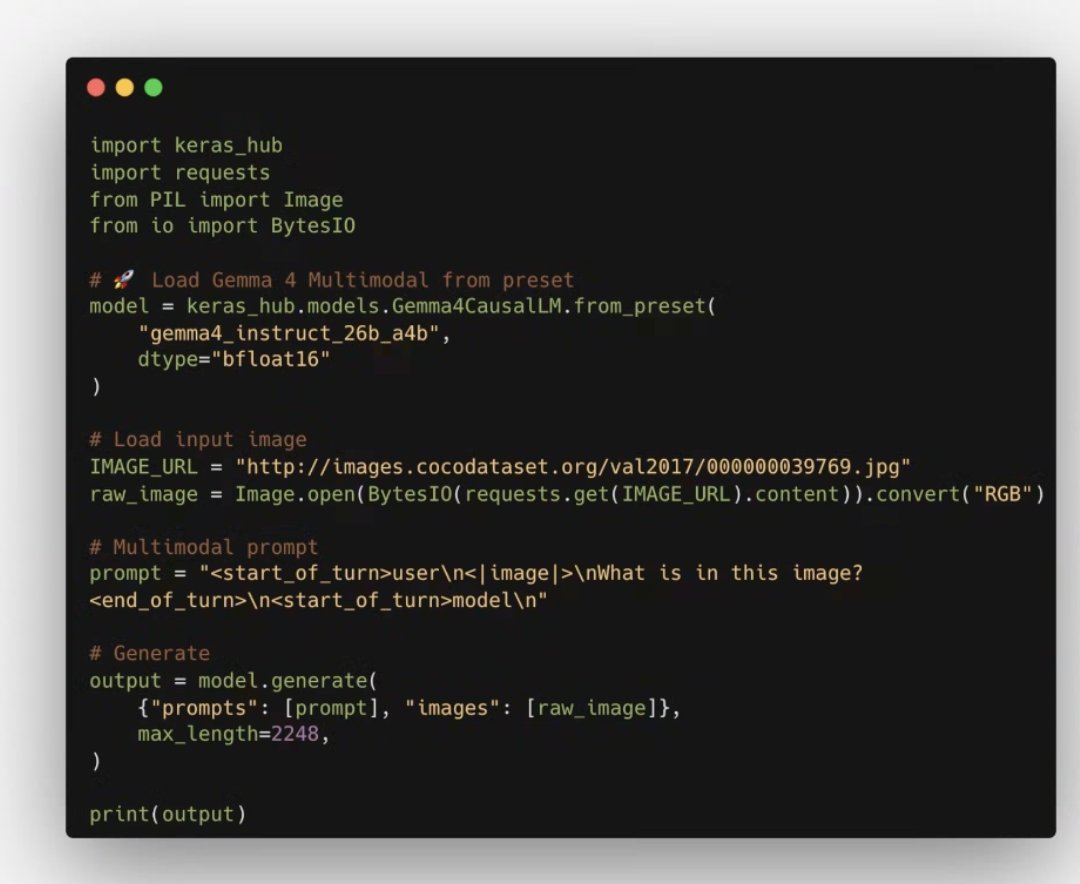

Gemma 4 also features multi-modal capabilities: use it with prompt + images combinations https://t.co/QIQk9KCuNn

More SotA model architectures on KerasHub... https://t.co/At11CKiVxS

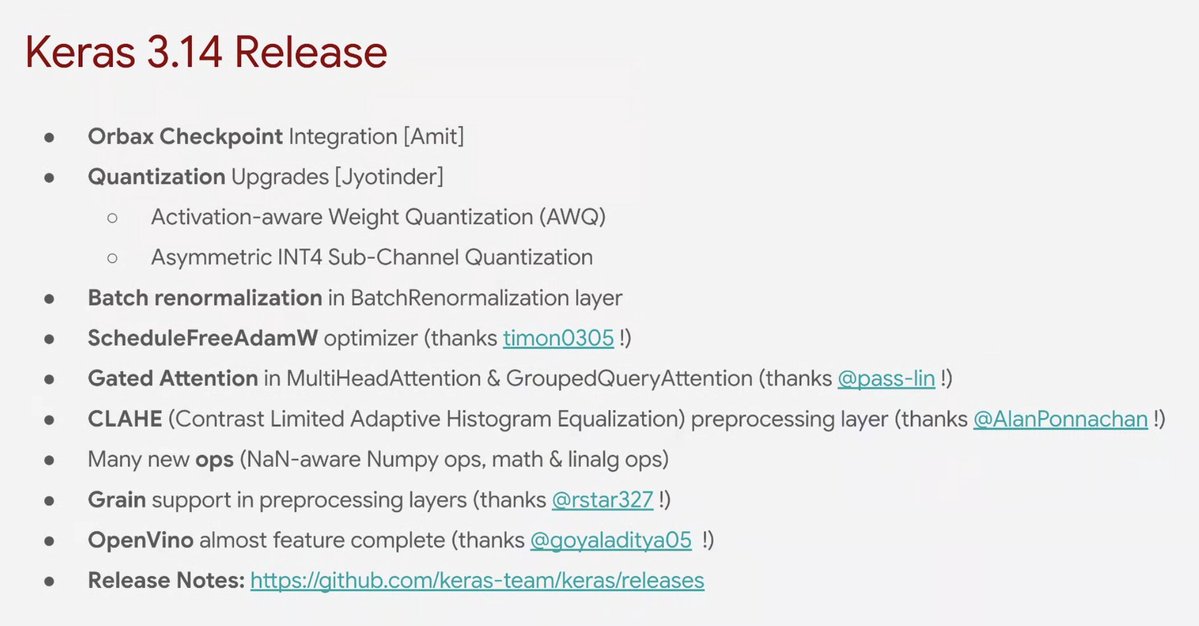

Upcoming Keras 3.14 release: a host of new features... https://t.co/AuUYd3D1cz

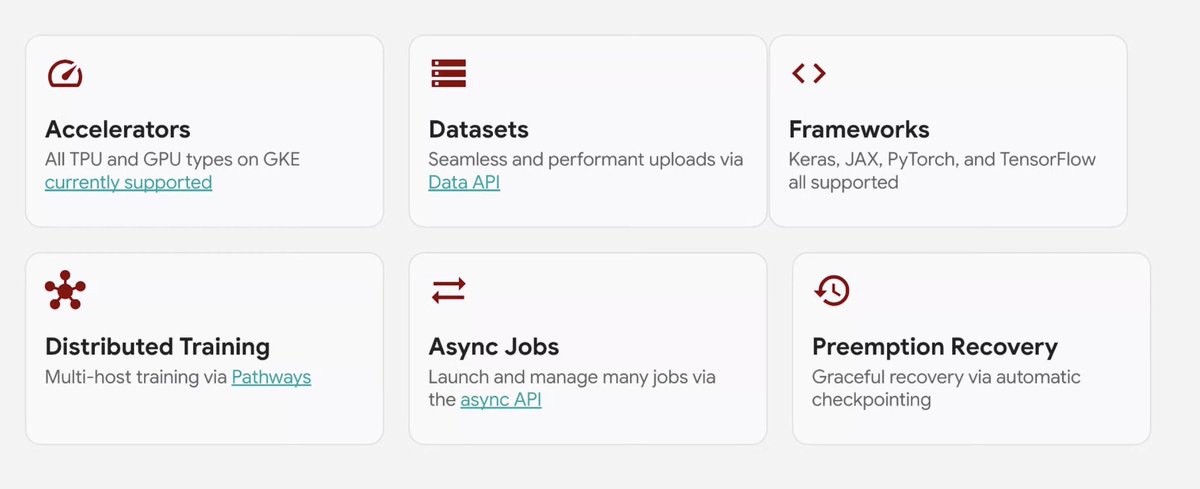

A brand new product: the Keras Kinetic library lets you run jobs on TPU (and Google Cloud GPUs) via a simple decorator. Takes care of packaging your code, uploading your dataset, log streaming, winding down jobs... https://t.co/LBqsG1j1s6

Supports distributed training, async jobs, and works with all Keras backends https://t.co/KvRULT3PLW

Try it out here: https://t.co/AI9YzPgwvS

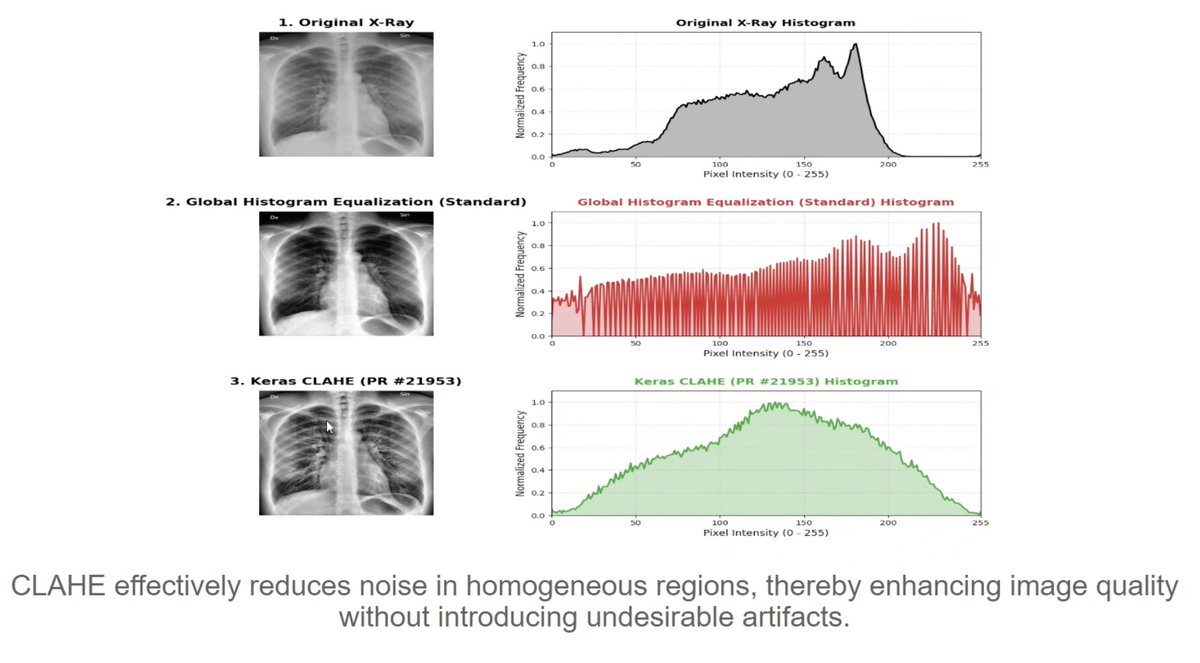

Keras community member Alan is now presenting the new CLAHE image preprocessing layer -- thanks for the contribution! https://t.co/SjadaQlWkp

Gemma 4 is now available in LlamaBarn https://t.co/Ydfi5NMA68