Your curated collection of saved posts and media

I tried Hermes Agent from @NousResearch for a few hours, and so far there are a few things I really love💗 (compared to @openclaw and even native @claude_code):
1. Self-fixing and healing: when it tries to fix a problem, it remembers and learns from it automatically.
2. Better communication: in both the TUI and Slack, it prints out intermediate steps while working on the task. To this day @openclaw still can't reliably communicate with Slack, which partly contributes to this issue https://t.co/MrweL4JALx, and it seems pretty obvious that Hermes has better concurrency management.
3. MUCH BETTER SECURITY MODEL: instead of asking for permission each time, Hermes only pauses and asks when something is dangerous.

So far, I think that's why people who have tried Hermes say OpenClaw: "here is another fix" vs. Hermes: "it just works" (actually not always, but when it doesn't, such as with external dependency failures, it attempts, retries, and reports much better).

Kudos @Teknium and team

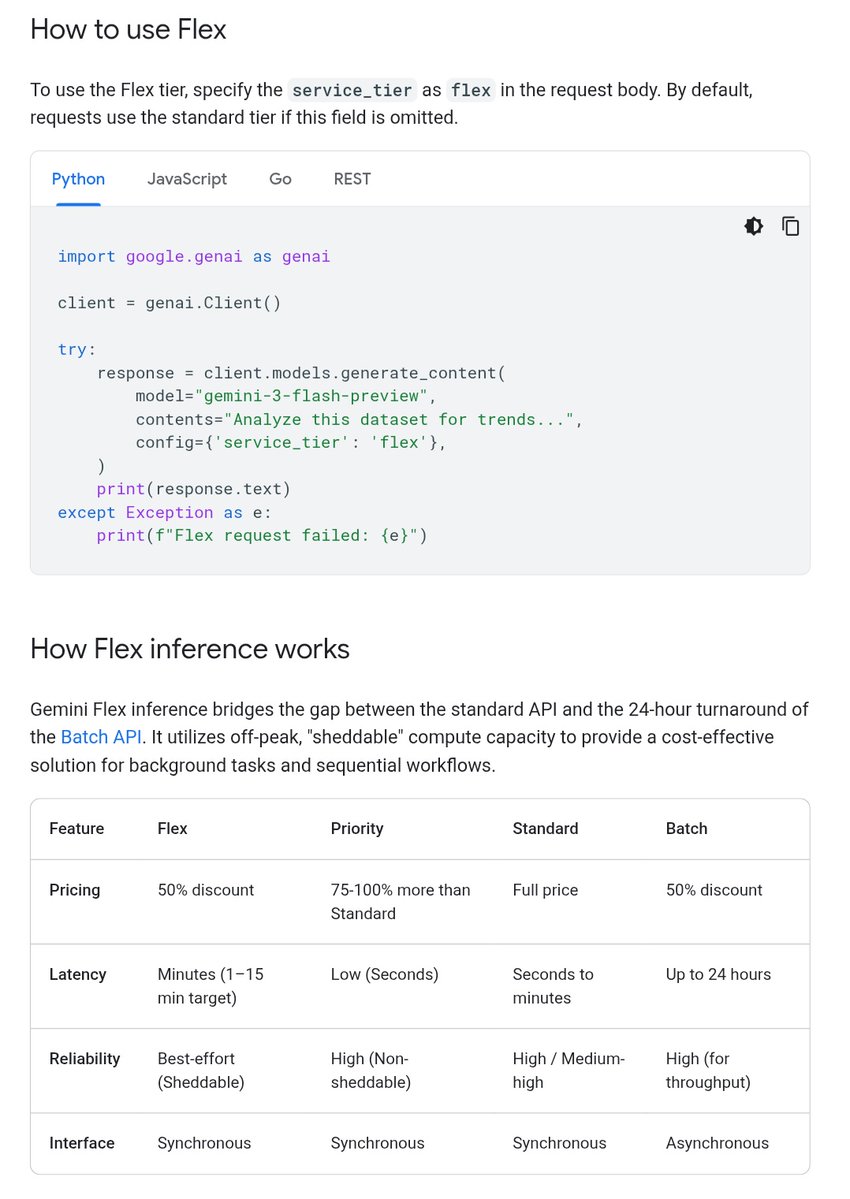
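The security model described above (pause only when something is dangerous, instead of asking every time) can be approximated by a risk gate in front of command execution. This is a minimal sketch assuming a regex denylist; `DANGEROUS_PATTERNS`, `needs_approval`, and `run_step` are hypothetical names, not Hermes Agent's actual implementation.

```python
import re
from typing import Callable

# Hypothetical denylist: only commands matching one of these patterns pause
# the agent for human approval; everything else runs without a prompt.
DANGEROUS_PATTERNS = [
    r"\brm\s+-[a-z]*r",                                  # recursive delete (rm -r, rm -rf, rm -fr)
    r"\bgit\s+push\b.*(--force|\s-f\b)",                 # force push
    r"\bdrop\s+(table|database)\b",                      # destructive SQL
    r"\b(curl|wget)\b[^|]*\|\s*(sudo\s+)?(ba|z)?sh\b",   # pipe a download into a shell
]

def needs_approval(command: str) -> bool:
    """Return True if the command matches any dangerous pattern (case-insensitive)."""
    return any(re.search(p, command, flags=re.IGNORECASE) for p in DANGEROUS_PATTERNS)

def run_step(command: str, approve: Callable[[str], bool]) -> str:
    """Run safe commands directly; call `approve` only for risky ones."""
    if needs_approval(command) and not approve(command):
        return f"skipped: {command}"
    return f"ran: {command}"  # placeholder: a real agent would execute the command here

print(needs_approval("ls -la"), needs_approval("rm -rf build/"))
```

A denylist is easy to reason about but inevitably incomplete; an allowlist of known-safe commands is the stricter alternative.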

my side project budgets are basically $0, so the new gemini flex pricing in @googleaistudio is actually saving my life 😅 50% discount if you don't need instant responses! perfect for background agents, batch jobs, or evals while you sleep or go watch robot cage fights 💸👇 https://t.co/ucmtpGle9o

Always loved @3blue1brown's visualizations but never really conquered Manim (the animation library). With Claude as a coding agent, I can finally direct animations at a high level, with no more fighting the library. So I built this: explaining to 12-year-old me why fractals have non-integer dimensions. D = log N / log r. Simple formula. Surprisingly deep rabbit hole. --- Finally, I have "courseware freedom": with AI I can explain a piece of knowledge anytime. For example, this is a video I made explaining to my younger self why fractal dimensions aren't integers.
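The formula D = log N / log r can be checked numerically. This sketch assumes the usual similarity-dimension reading of the symbols: a shape is made of N copies of itself, each scaled down by a factor r.

```python
import math

def similarity_dimension(n_copies: int, scale_factor: float) -> float:
    """D = log N / log r for a shape made of N copies of itself, each scaled down by r."""
    return math.log(n_copies) / math.log(scale_factor)

examples = {
    "line segment": (2, 2),         # 2 halves -> D = 1
    "square": (4, 2),               # 4 half-size squares -> D = 2
    "Cantor set": (2, 3),           # keep 2 of 3 thirds -> non-integer
    "Koch curve": (4, 3),           # 4 pieces, each 1/3 the size
    "Sierpinski triangle": (3, 2),  # 3 half-size triangles
}
for name, (n, r) in examples.items():
    print(f"{name:<20} D = {similarity_dimension(n, r):.3f}")
```

The line and square recover the familiar integer dimensions 1 and 2, while the Cantor set (0.631), Koch curve (1.262), and Sierpinski triangle (1.585) land in between.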

One Prompt. One Iteration. That’s all it took to vibe code this super sleek 3D orbital gallery using @threejs with Gemini 3.1 Pro in @GoogleAIStudio. Period. Live: https://t.co/LU7a8Cq8Dc Code: https://t.co/dLfuGAJtxE

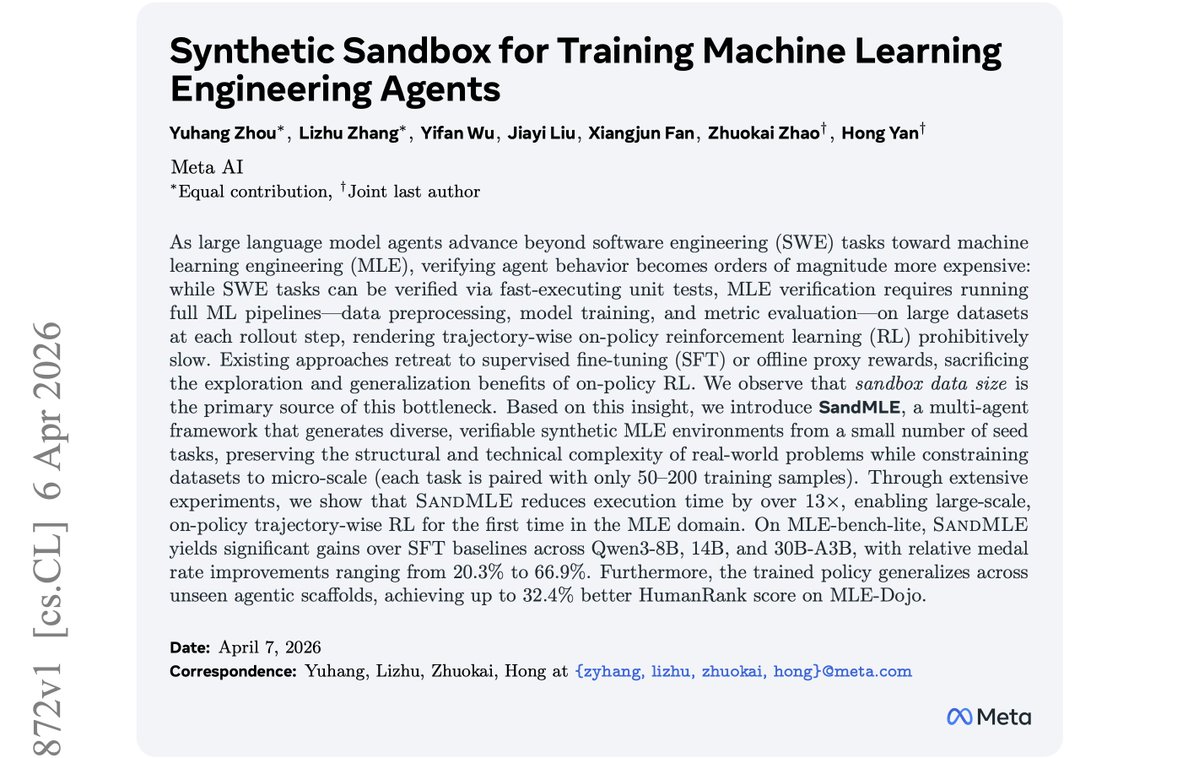

On-policy RL has driven the biggest leaps in training coding agents. Extending it to machine learning engineering agents should be a natural next step. But it almost never works.

What I mean is, the recipe is right there: standard trajectory-wise GRPO, the same one that worked for SWE. However, the problem is that one rollout step on an MLE task may take hours because the agent has to actually train a model on a real dataset at every step (preprocessing, fitting, inference, scoring). So even with the N rollouts in a group running in parallel, a single GRPO run may still take days. Every MLE agent paper I've read has retreated to SFT or offline proxy rewards for exactly this reason, giving up the exploration benefits of on-policy learning.

That's why I'm excited about our new paper, SandMLE, which fixes this with a move that sounds almost too reckless to work. The instinct when on-policy RL is too slow is to engineer around it: async rollouts so the trainer doesn't sit idle waiting for slow environments, off-policy or step-wise proxies to avoid running full trajectories at all. But when we profiled where the time was going, the bottleneck had nothing to do with the algorithm. Unlike SWE, where execution latency comes from compilation and test logic, MLE latency is overwhelmingly driven by the size of the dataset the ML pipeline has to chew through.

Therefore, rather than downsampling existing data (which corrupts evaluation), we built a multi-agent pipeline that procedurally generates diverse synthetic MLE environments from a small seed set. Specifically, we extract the structural DNA of seed tasks (modality, label cardinality, distribution shape), mutate them into new domains (e.g., repurposing animal classification into road damage detection), inject realistic noise, embed deterministic hidden rules connecting features to labels, and construct full evaluation sandboxes with progressive milestone thresholds. Each task is constrained to only 50–200 training samples.
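For reference, the group-relative advantage at the core of standard GRPO can be sketched in a few lines. This is a generic illustration of the usual normalization, not SandMLE's training code; the reward values are made up, and using the population standard deviation is an assumption (implementations differ).

```python
import statistics

def group_relative_advantages(rewards: list[float], eps: float = 1e-8) -> list[float]:
    """GRPO-style advantage: normalize each rollout's reward against its own group.

    A_i = (r_i - mean(r)) / (std(r) + eps). In the trajectory-wise variant, A_i is
    applied to every token of rollout i, so no learned value function is needed.
    """
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)  # population std; some implementations use sample std
    return [(r - mean) / (std + eps) for r in rewards]

# Hypothetical group of N = 4 rollouts on one MLE task, each scored by its final reward.
rewards = [0.75, 0.25, 0.0, 1.0]
print([round(a, 3) for a in group_relative_advantages(rewards)])
```

Every entry in `rewards` costs a full MLE trajectory, which is why per-step environment latency, not the advantage computation, dominates the cost of a GRPO run.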
The execution speedup is dramatic: average per-step latency drops by over 13×, which takes trajectory-wise GRPO from infeasible to routine. We also designed a dense, milestone-based reward to address the sparse credit assignment problem in long-horizon MLE. The ablation shows this matters: under a sparse reward, the 30B model's medal rate drops from 27.3% to 13.6% and its valid submission rate collapses from 100% to 86.4%.

Results across Qwen3-8B, 14B, and 30B-A3B on MLE-bench are consistently strong, with a 66.9% better medal rate than the SFT baselines. It is worth noting that the SFT baselines are not weak: we trained them on high-quality Claude-4.5-Sonnet trajectories. But SandMLE still delivers much larger gains, suggesting that direct environment interaction does teach capabilities that imitation alone does not (as expected).

The most convincing evidence to me that the model's intrinsic performance improves is the framework-agnostic generalization. We trained exclusively with ReAct, but the gains transfer to AIDE, AIRA, and MLE-Agent scaffolds at evaluation time, with up to 32.4% better HumanRank on MLE-Dojo. The SFT models, by contrast, are brittle when moved to unfamiliar scaffolds. The 30B SFT model collapses to a 17.7% valid submission rate on MLE-Dojo with MLE-Agent, while the 30B SandMLE model achieves 83.9%. SandMLE is teaching genuine engineering reasoning, not scaffold-specific patterns.

What I find most interesting beyond the specific result is that none of the hard parts of RL changed here. The algorithm is the same. The reward is conventional. We just shrank the environment until on-policy learning became affordable. The field has largely treated environment design and RL algorithm design as separate concerns. SandMLE is a concrete case that the environment is itself the lever. When training is too expensive, the instinct is to build cleverer algorithms to tolerate it.
However, often the better move is to reshape the environment so the simple algorithm just works. Paper: https://t.co/x0jAvCClyh
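The post does not spell out the exact reward formula, so the following is only a hypothetical illustration of how a dense, milestone-based reward differs from a sparse one: partial credit for each progressive threshold cleared, versus credit only for reaching the top. The function names and threshold values are assumptions.

```python
from typing import Optional, Sequence

def milestone_reward(score: Optional[float], thresholds: Sequence[float],
                     higher_is_better: bool = True) -> float:
    """Dense reward: fraction of progressive milestone thresholds the score clears.

    `score` is None when the agent produced no valid submission.
    `thresholds` are ordered from easiest to hardest milestone.
    """
    if score is None or len(thresholds) == 0:
        return 0.0
    if higher_is_better:
        reached = sum(score >= t for t in thresholds)
    else:
        reached = sum(score <= t for t in thresholds)
    return reached / len(thresholds)

def sparse_reward(score: Optional[float], thresholds: Sequence[float],
                  higher_is_better: bool = True) -> float:
    """Sparse baseline: 1.0 only if the hardest (last) milestone is reached."""
    if score is None or len(thresholds) == 0:
        return 0.0
    top = thresholds[-1]
    return float(score >= top) if higher_is_better else float(score <= top)

# Hypothetical accuracy milestones for one synthetic task (not SandMLE's values).
thresholds = [0.60, 0.70, 0.80, 0.90]
print(milestone_reward(0.75, thresholds), sparse_reward(0.75, thresholds))  # 0.5 0.0
```

A trajectory that makes real progress but misses the top bar still gets a learning signal under the dense reward, which is the credit-assignment gap the ablation above measures.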

We got an agent to control a remote browser on iOS. It shares its screen so you can peek at what it's doing https://t.co/iK8bSpWXnE

@omarwasm Siri, Insta360, and Matic Robots were launched in my home. My blog launched thousands of sites. I was the first to get 1,000 followers on the website you are reading me on. I built the only website that watches the entire AI community here on X: https://t.co/8L5xphk0qQ And I was the first non-enterprise to use an AI that also launched in my home. Might know a thing or two about the topic.

BREAKING: Claude can now search YouTube for you! We plugged Algrow directly into Claude so it finally has real, constantly updated YouTube data. No more generic slop from a model that's never watched a video https://t.co/6lPTgF9IEv

I built an AI tool that files your taxes for you. It runs inside a hardware-encrypted enclave, so nobody can see your data. Not even the app developer. Here's the demo:

Updated: https://t.co/kiuZ7QXLzb All the AI news discussed here on X today. Papers. Models. Events. Announcements. And more.

The highest quality video of the moon was just released… this is so beautiful. https://t.co/0JLkB0tOXv

@tarakeeney I'm enjoying building an AI that worries about what's coming next. It reads the entire AI community here and builds https://t.co/kiuZ7QXLzb, a site all about the AI community here on X. And it's fun!

Early bird registration has been extended! 🐦 Register for #KubeCon + #CloudNativeCon + #OpenInfraSummit + #PyTorchCon China by 6 May to save up to ¥1270 / USD$179. Register: https://t.co/cChMvGvcei Don't forget: The CFP is still open through 3 May: https://t.co/PKdf9djzqR https://t.co/U4uqgeIT1M

Silicon Valley predicted AI Agents/OpenClaw 12 years before it happened https://t.co/73TpWnYdkb

Tutorial: Automating KYC with AI agents 🪪🕵 I'm creating a new tutorial series on automating practical document workflows with agents. Every financial institution needs to perform KYC (know your customer) to verify a customer's identity, and this involves manually sifting through IDs, bank statements, etc., and doing the cross-checking by hand. This is a great first use case for agentic document workflows:
1. Extract identification information from the user-supplied ID (license, passport).
2. Extract fields from utility bills/bank statements, then use LLMs to cross-validate those fields against the extracted ID fields.

It obviously doesn't cover the full e2e process and uses publicly available online data, but it should be a good reference guide to get started. To make this work well, you do need high-quality document extraction with confidence scores and citations! Check out the tutorial: https://t.co/G6f5zFvNZs If you're interested, come check out LlamaParse: https://t.co/TqP6OT5U5O
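Step 2 of the workflow above (cross-validating fields from a utility bill or bank statement against the ID) can be prototyped before wiring in an LLM. This sketch swaps the LLM comparison for a deterministic normalized string match and assumes extraction has already produced field values with confidence scores; `ExtractedField`, the field names, and the 0.8 threshold are hypothetical and not LlamaParse's API.

```python
import re
from dataclasses import dataclass
from typing import Dict

@dataclass
class ExtractedField:
    value: str
    confidence: float  # extraction confidence in [0, 1]

def normalize(text: str) -> str:
    """Lowercase and replace punctuation runs with single spaces."""
    return " ".join(re.sub(r"[^a-z0-9]+", " ", text.lower()).split())

def cross_check(id_fields: Dict[str, ExtractedField], doc_fields: Dict[str, ExtractedField],
                min_confidence: float = 0.8) -> Dict[str, str]:
    """Compare each field extracted from the ID with the same field from a supporting document."""
    results = {}
    for name, id_field in id_fields.items():
        doc_field = doc_fields.get(name)
        if doc_field is None:
            results[name] = "missing"        # the document doesn't show this field
        elif min(id_field.confidence, doc_field.confidence) < min_confidence:
            results[name] = "needs_review"   # low-confidence extraction: route to a human
        elif normalize(id_field.value) == normalize(doc_field.value):
            results[name] = "match"
        else:
            results[name] = "mismatch"
    return results

id_card = {
    "name": ExtractedField("Jane A. Doe", 0.98),
    "address": ExtractedField("12 Main St, Springfield", 0.95),
    "date_of_birth": ExtractedField("1990-04-01", 0.99),
}
utility_bill = {
    "name": ExtractedField("JANE A DOE", 0.97),
    "address": ExtractedField("12 Main St., Springfield", 0.60),
}
print(cross_check(id_card, utility_bill))
# {'name': 'match', 'address': 'needs_review', 'date_of_birth': 'missing'}
```

Checking confidence before comparing values means a low-confidence extraction never silently counts as a match, which is why the post stresses confidence scores.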

When I say garage builders are not waiting to ask permission or “go to market” to please the VC class, I am not theorizing. This farmer needed to get to places on his farm, so he built it in his garage. Like all inventions, it doesn't have to pass any test but his own. https://t.co/Rsv1d1AaHB

🚨SHOCKING: Apple just proved that AI models cannot do math. Not advanced math. Grade school math. The kind a 10-year-old solves. And the way they proved it is devastating. Apple researchers took the most popular math benchmark in AI — GSM8K, a set of grade-school math problems — and made one change. They swapped the numbers. Same problem. Same logic. Same steps. Different numbers. Every model's performance dropped. Every single one. 25 state-of-the-art models tested. But that wasn't the real experiment. The real experiment broke everything. They added one sentence to a math problem. One sentence that is completely irrelevant to the answer. It has nothing to do with the math. A human would read it and ignore it instantly. Here's the actual example from the paper: "Oliver picks 44 kiwis on Friday. Then he picks 58 kiwis on Saturday. On Sunday, he picks double the number of kiwis he did on Friday, but five of them were a bit smaller than average. How many kiwis does Oliver have?" The correct answer is 190. The size of the kiwis has nothing to do with the count. A 10-year-old would ignore "five of them were a bit smaller" because it's obviously irrelevant. It doesn't change how many kiwis there are. But o1-mini, OpenAI's reasoning model, subtracted 5. It got 185. Llama did the same thing. Subtracted 5. Got 185. They didn't reason through the problem. They saw the number 5, saw a sentence that sounded like it mattered, and blindly turned it into a subtraction. The models do not understand what subtraction means. They see a pattern that looks like subtraction and apply it. That is all. Apple tested this across all models. They call the dataset "GSM-NoOp" — as in, the added clause is a no-operation. It does nothing. It changes nothing. The results are catastrophic. Phi-3-mini dropped over 65%. More than half of its "math ability" vanished from one irrelevant sentence. GPT-4o dropped from 94.9% to 63.1%. o1-mini dropped from 94.5% to 66.0%. 
o1-preview, OpenAI's most advanced reasoning model at the time, dropped from 92.7% to 77.4%. Even giving the models 8 examples of the exact same question beforehand, with the correct solution shown each time, barely helped. The models still fell for the irrelevant clause. This means it's not a prompting problem. It's not a context problem. It's structural. The Apple researchers also found that models convert words into math operations without understanding what those words mean. They see the word "discount" and multiply. They see a number near the word "smaller" and subtract. Regardless of whether it makes any sense. The paper's exact words: "current LLMs are not capable of genuine logical reasoning; instead, they attempt to replicate the reasoning steps observed in their training data." And: "LLMs likely perform a form of probabilistic pattern-matching and searching to find closest seen data during training without proper understanding of concepts." They also tested what happens when you increase the number of steps in a problem. Performance didn't just decrease. The rate of decrease accelerated. Adding two extra clauses to a problem dropped Gemma2-9b from 84.4% to 41.8%. Phi-3.5-mini from 87.6% to 44.8%. The more thinking required, the more the models collapse. A real reasoner would slow down and work through it. These models don't slow down. They pattern-match. And when the pattern becomes complex enough, they crash. This paper was published at ICLR 2025, one of the most prestigious AI conferences in the world. You are using AI to help you make financial decisions. To check legal documents. To solve problems at work. To help your children with homework. And Apple just proved that the AI is not thinking about any of it. It is pattern matching. And the moment something unexpected shows up in your question, it breaks. It does not tell you it broke. It just quietly gives you the wrong answer with full confidence.
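The two manipulations described above (swapping the numbers, then adding one irrelevant clause) can be illustrated with a toy template. This is a hypothetical sketch of the idea, not Apple's GSM-Symbolic or GSM-NoOp generation code; the template, name list, and number ranges are assumptions.

```python
import random

TEMPLATE = ("{name} picks {fri} kiwis on Friday. Then he picks {sat} kiwis on Saturday. "
            "On Sunday, he picks double the number of kiwis he did on Friday{noop}. "
            "How many kiwis does {name} have?")
# NoOp-style distractor: it sounds relevant but changes nothing.
NOOP_CLAUSE = ", but {k} of them were a bit smaller than average"

def kiwi_total(fri: int, sat: int) -> int:
    """Ground truth: Friday + Saturday + double Friday's count on Sunday."""
    return fri + sat + 2 * fri

def make_variant(rng: random.Random, with_noop: bool = False):
    """Fill the template with fresh numbers; optionally add the irrelevant clause."""
    name = rng.choice(["Oliver", "Liam", "Noah"])
    fri, sat, k = rng.randint(10, 99), rng.randint(10, 99), rng.randint(2, 9)
    noop = NOOP_CLAUSE.format(k=k) if with_noop else ""
    question = TEMPLATE.format(name=name, fri=fri, sat=sat, noop=noop)
    return question, kiwi_total(fri, sat)  # the answer ignores k entirely

# The paper's example: 44 + 58 + 88 = 190. Subtracting the 5 "smaller" kiwis gives 185, which is wrong.
print(kiwi_total(44, 58))  # 190
question, answer = make_variant(random.Random(0), with_noop=True)
print(question, "->", answer)
```

Because the clause never enters `kiwi_total`, any change in a model's answer between the two variants of the same seed is, by construction, an error.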

Introducing the Manim skill for Hermes Agent. Manim is an engine for creating precise programmatic animations for mathematical and technical explainers, made famous by the @3blue1brown channel. https://t.co/nyNeNthhZB

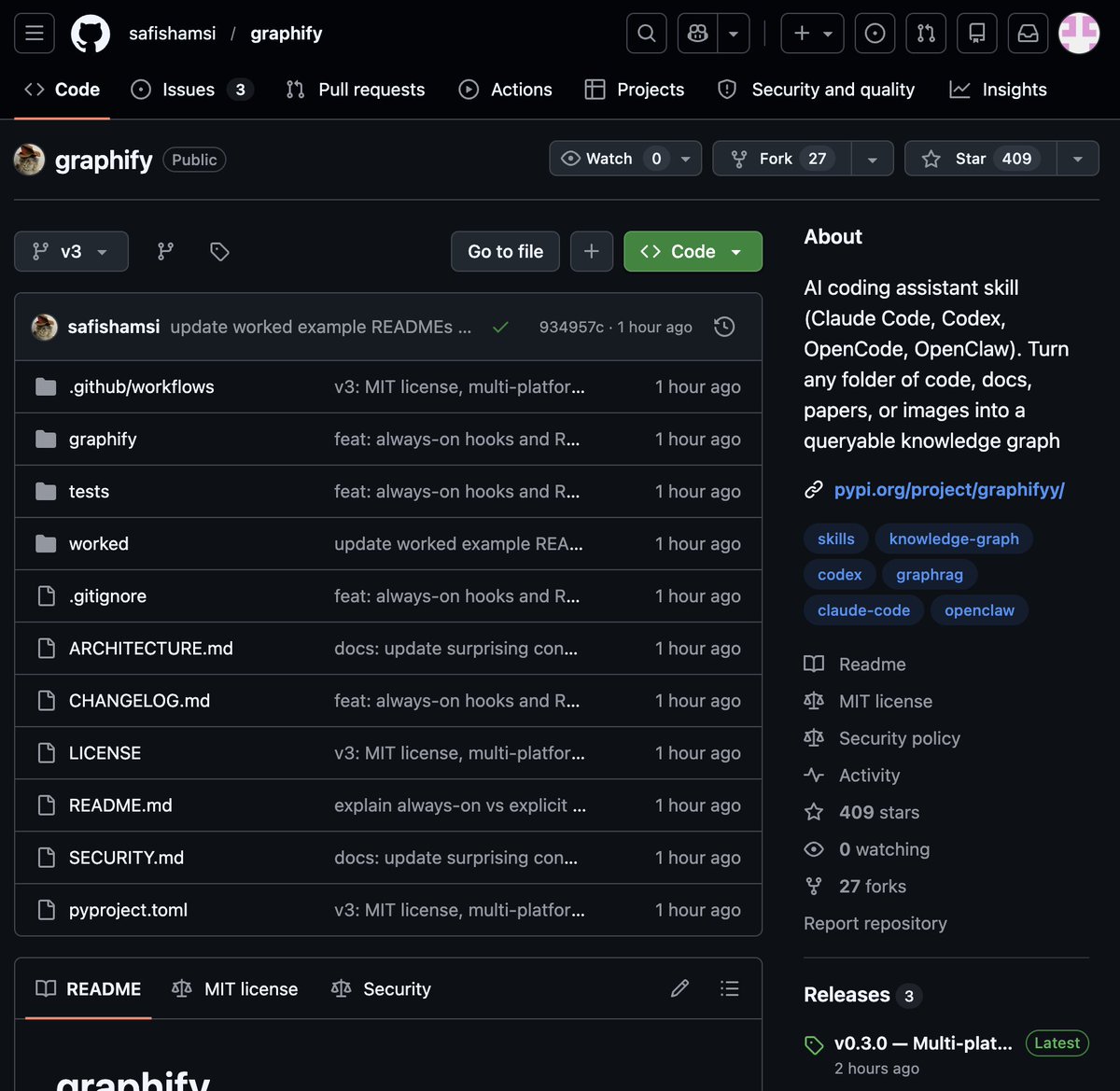

🚨 BREAKING: Someone just built the exact tool Andrej Karpathy said someone should build. 48 hours after Karpathy posted his LLM Knowledge Bases workflow, this showed up on GitHub. It's called Graphify. One command. Any folder. Full knowledge graph. Point it at any folder. Run /graphify inside Claude Code. Walk away.

Here is what comes out the other side:
-> A navigable knowledge graph of everything in that folder
-> An Obsidian vault with backlinked articles
-> A wiki that starts at index.md and maps every concept cluster
-> Plain-English Q&A over your entire codebase or research folder

You can ask it things like: "What calls this function?" "What connects these two concepts?" "What are the most important nodes in this project?" No vector database. No setup. No config files. The token efficiency number is what got me: 71.5x fewer tokens per query compared to reading raw files. That is not a small improvement. That is a completely different paradigm for how AI agents reason over large codebases.

What it supports:
-> Code in 13 programming languages
-> PDFs
-> Images via Claude Vision
-> Markdown files

Install in one line: pip install graphify && graphify install
Then type /graphify in Claude Code and point it at anything. Karpathy asked. Someone delivered in 48 hours. That is the pace of 2026. Open Source. Free.
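Questions like "What calls this function?" become simple lookups once code is turned into a graph. As a rough illustration only (Graphify's internals are not described in the post, and this is not how it works), Python's standard `ast` module can extract a direct call graph from source code; `build_call_graph` and `callers_of` are hypothetical helpers.

```python
import ast
from typing import Dict, Set

def build_call_graph(source: str) -> Dict[str, Set[str]]:
    """Map each function name to the names it calls directly (static, by name only)."""
    graph: Dict[str, Set[str]] = {}
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            callees = graph.setdefault(node.name, set())
            for inner in ast.walk(node):
                if isinstance(inner, ast.Call):
                    if isinstance(inner.func, ast.Name):         # f(...)
                        callees.add(inner.func.id)
                    elif isinstance(inner.func, ast.Attribute):  # obj.f(...)
                        callees.add(inner.func.attr)
    return graph

def callers_of(graph: Dict[str, Set[str]], target: str) -> Set[str]:
    """Answer "What calls this function?" with a reverse lookup over the edges."""
    return {caller for caller, callees in graph.items() if target in callees}

SOURCE = '''
def load():
    return [3, 1, 2]

def clean(rows):
    return sorted(rows)

def main():
    return clean(load())

def report():
    data = load()
    print(clean(data))
'''

graph = build_call_graph(SOURCE)
print(sorted(callers_of(graph, "clean")))  # ['main', 'report']
```

Static extraction like this misses dynamic dispatch and calls made through variables, merges same-named functions, and credits a nested function's calls to its enclosing function too, since `ast.walk` descends into it.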

From democracy to dictatorship is a shorter leap than many people think. The similarities between 2026 Trump America and 1938 Nazi Germany are striking. As of April 2026, historians, political scientists, and former administration officials have identified several parallels between the rhetoric and actions of the fascist authoritarian Trump regime and 1930s Nazi Germany, particularly regarding the erosion of democratic norms and the use of state power against political opponents.

Rhetorical and Ideological Parallels
* Dehumanizing Language: Critics and historians note that Trump’s use of terms like "vermin" to describe political opponents and "poisoning the blood" regarding immigrants mirrors the dehumanizing language used by Adolf Hitler and other fascist leaders to scapegoat minorities and political enemies.
* Attacks on the Press: Trump’s frequent labeling of mainstream media as "fake news" has been compared to the Nazi-era term Lügenpresse ("press of lies"), used to delegitimize independent journalism.
* Cult of Personality: Experts argue that Trump fosters a cult of personality where his perceived strength and aura take precedence over policy or factual arguments, a hallmark of 1920s and 1930s fascist movements.
* "Enemies from Within": Trump’s rhetoric regarding an "enemy from within," specifically targeting "radical left lunatics" and Democratic politicians, aligns with historical fascist strategies of identifying internal threats to justify authoritarian measures.

Institutional and Structural Parallels
* Weaponization of the Justice System: Observers highlight Trump’s repeated threats to investigate and prosecute political rivals as a parallel to the Nazi strategy of using "lawfare" to eliminate opposition.
* Gleichschaltung (Coordination): Efforts to align federal agencies with personal and party loyalty (such as replacing military leaders with loyalists and centralizing executive control) draw comparisons to the Nazi policy of Gleichschaltung, the enforced alignment of all national institutions.
* Militias and Political Violence: Trump’s "stand by" instruction to the Proud Boys and his encouragement of self-recruiting militias are frequently cited as modern echoes of the SA and SS paramilitaries used to intimidate political opponents.

Influence on the 2026 Midterm Elections
The SAVE Act (Safeguard American Voter Eligibility Act) has become central to the parallels drawn between Trump America and 1930s Nazi Germany, specifically regarding the centralized control of the democratic process. These are some of the institutional and structural parallels between 1930s Nazi Germany and 2026 Trump America as the midterm elections approach.

The SAVE Act and Voter Roll Control
* Nationalization of Voter Rolls: In early 2026, Trump intensified his push for the SAVE Act. By requiring federal proof of citizenship for all federal elections and granting the executive branch power to "audit" state voter rolls, the act effectively strips states of their constitutional authority to manage elections.
* The "Purge" Mechanism: Historians note a parallel to 1930s "legal coordination," where laws were passed to systematically disenfranchise specific groups. The SAVE Act allows the federal government to flag and remove "suspect" voters from rolls, often targeting naturalized citizens and minority groups, under the guise of "election integrity."
* Bureaucratic Obstacles: Much like the 1935 Nuremberg Laws (which began by defining who was a "citizen" with the right to vote), the SAVE Act uses documentation requirements to create a tiered system of citizenship, making it harder for opposition-leaning demographics to remain on the rolls.

Impact on the 2026 Midterms
* Mass De-registrations: In the lead-up to the midterms, the administration has used the SAVE Act's provisions to challenge the eligibility of millions of voters in "Blue" districts within swing states.
* Disruption of Local Authority: By giving federal agents loyal to Trump the power to intervene in local voter registration, the Act has caused chaos in election offices, leading to a wave of resignations among non-partisan election officials who refuse to implement the "purges."
* Pre-emptive Challenges: The Act allows the fascist authoritarian Trump regime to challenge election results before a single vote is cast by claiming the "rolls are corrupted," providing a legal pretext to contest any losses in November 2026.
* Executive Orders on Voting: In March 2026, Trump signed a sweeping executive order targeting mail-in voting and seeking to impose new federal rules on local election organizers.
* Targeting Election Officials: The administration has been accused of a coordinated campaign to dismantle federal election security agencies and pressure local election workers.
* Use of "Lawfare" Against Candidates: There are concerns that the Department of Justice (DOJ) may be used to harass or disqualify opposition candidates in competitive 2026 races.
* Proposed Election Subversion: Analysts have outlined potential scenarios where the administration could deploy troops near polling places or have federal agents seize voting machines to influence outcomes.

Recent Military Leadership Changes
* Widespread Firings: Secretary of Defense Pete Hegseth has overseen the removal or forced retirement of more than 20 high-ranking generals and admirals since the administration returned to office.
* Joint Chiefs of Staff Purge: Almost the entire Joint Chiefs of Staff has been remade, with only the Commandant of the Marine Corps and the Head of Space Force remaining from the previous leadership.

Allegations of a "Loyalty Purge"
* Targeting "Non-Loyalists": Reports from outlets like The Washington Post and NBC News indicate that while many removals occur without official explanation, Hegseth has frequently criticized leaders he perceives as lacking loyalty to President Trump.
* "Woke" Ideology as a Pretext: Hegseth has publicly targeted officers who championed diversity, equity, and inclusion (DEI) initiatives, describing such leadership as "woke" and inconsistent with his vision for the military.
* Impact of the Iran Conflict: The ongoing war with Iran has reportedly intensified these purges, with some officials being removed following disagreements over the scope and success of military operations.

Trump: "You know who else didn't help us? South Korea didn't help us. We've got 45,000 soldiers in South Korea to protect them from Kim Jong Un, who I get along with very well. He said very nice things about me. He used to call Joe Biden a mentally retarded person." https://t.co/l7BqskXLu0

gradio.Server: Any Custom Frontend with Gradio's Backend. Build your frontend entirely in your own framework, like React, Svelte, or even plain HTML/JS, while still benefiting from Gradio's queuing system, API infrastructure, MCP support, and ZeroGPU on Spaces. Blog: https://t.co/AwZ20xpG2F

Web & game developers, this is your jam. Gamedev.js Jam 2026 is back. 🎮 🗓 April 13-26, 2026 🌐 Build an HTML5 game in 13 days 🏆 Prizes + expert feedback 💬 Active community + Discord Theme revealed on day one. Ship something weird. Ship something fun. Just ship it. https://t.co/KPphbM8rTz

Is it possible to coordinate with China on AI governance? Critics of our proposed international agreement say no. But statements from Chinese government officials and academic figures paint a more optimistic picture: https://t.co/Fez6l8xvwO

@heynavtoor the results ticker is beautifully done https://t.co/yMkZdMwEeE

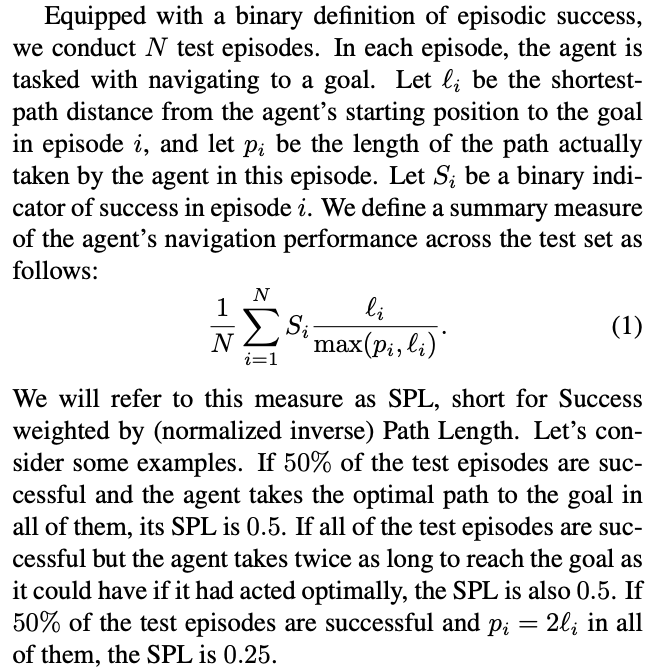

In robotics manipulation, we see many cherry-picked demos but no standardized benchmarks. I suggest using STT (Success weighted by normalized inverse Task Time), analogous to SPL from navigation, replacing path length with the time taken to do the task relative to a human, e.g. for "Pick up anything" on random household objects. https://t.co/sVo72dagM2
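SPL (Success weighted by Path Length) averages S_i · ℓ_i / max(p_i, ℓ_i) over N navigation episodes, where S_i is 1 on success, ℓ_i is the shortest-path length, and p_i is the path actually taken. Swapping lengths for times, with the human's time as the reference, gives one plausible reading of STT. The exact normalization and the `stt` helper below are assumptions, since the post only names the metric.

```python
from typing import Sequence

def stt(successes: Sequence[bool], human_times: Sequence[float],
        robot_times: Sequence[float]) -> float:
    """Success weighted by normalized inverse Task Time (SPL-style, assumed form).

    Each episode contributes S_i * t_human_i / max(t_robot_i, t_human_i):
    0 on failure, 1 if the robot succeeds at least as fast as a human.
    """
    n = len(successes)
    if n == 0 or not (n == len(human_times) == len(robot_times)):
        raise ValueError("need equal-length, non-empty episode lists")
    total = 0.0
    for s, t_h, t_r in zip(successes, human_times, robot_times):
        if s:
            total += t_h / max(t_r, t_h)
    return total / n

# Hypothetical episodes: twice as slow as a human, human speed, and a failure.
print(stt([True, True, False], [10, 20, 15], [20, 20, 5]))  # 0.5
```

Capping each term at 1 via `max` mirrors SPL, so a robot that beats human speed gets no extra credit, and a fast failure still scores 0.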

UN Secretary-General António Guterres warns Trump that destroying Iran’s civilian infrastructure is a war crime because of the disproportionate cost to civilians. https://t.co/xe6kcBugU0

In addition to larger features, my goal is to make 𝕏 faster and more delightful to use overall, which means upgrading lots of little things across the product. This is a new sidebar we’re now rolling out on iOS. Excited for more details like this. https://t.co/0XRp0HSGA4

@ashebytes @signulll I have mine watching 40,000 people and 8,300 companies here on X: https://t.co/kiuZ7QXLzb