Your curated collection of saved posts and media

I used GitHub Copilot CLI to connect the Logitech MX Creative Console to my Elgato key lights! (not sponsored, just a really fun little project) https://t.co/qF7xCbiHq2

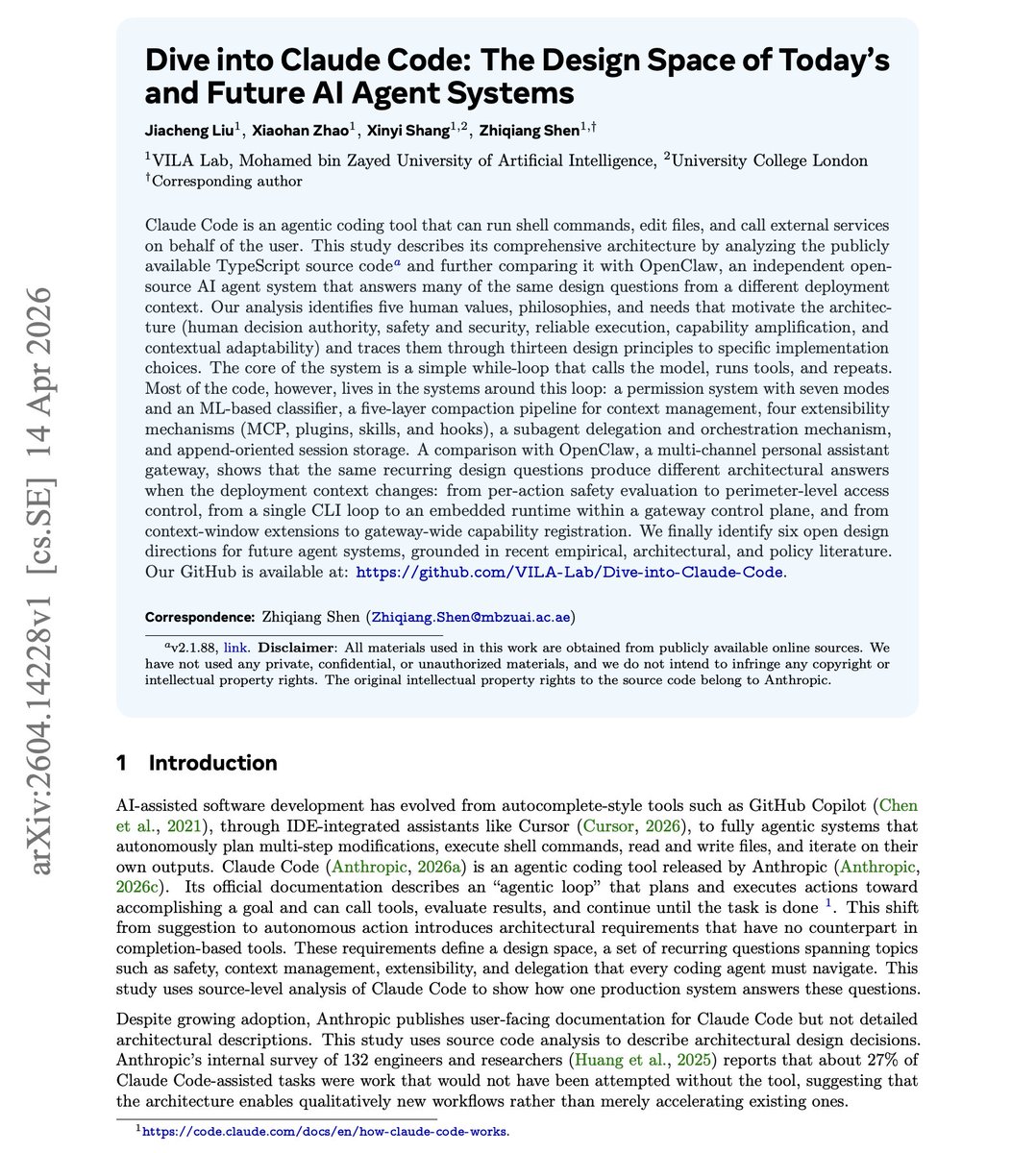

Dive into Claude Code and how it compares with OpenClaw. https://t.co/O8feYa0IMQ https://t.co/Fhm3WoCtyg

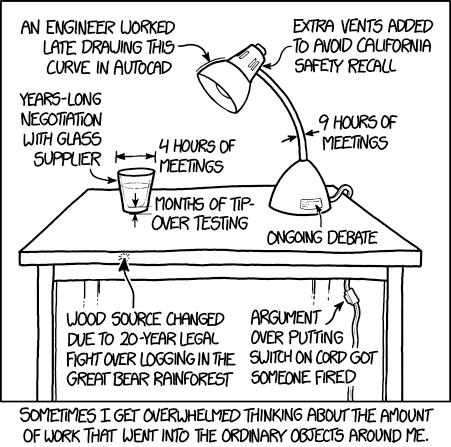

One thing about AI, for better and worse, is that "everything around me is somebody's life work" is no longer a true assumption going forward. https://t.co/Ul02G271yq

Highly recommend Liz Pelly’s new book on Spotify, many insights for anyone interested in new media, generative AI, even vibe coding as a modern form of creation. https://t.co/LVArLxahL7

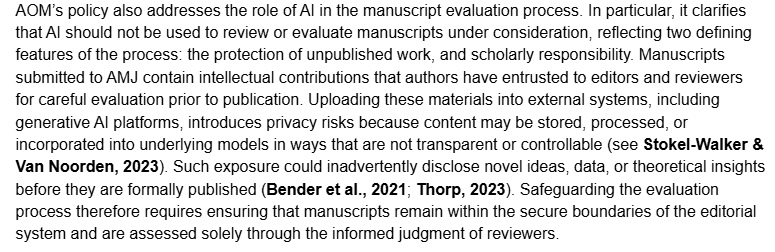

I don't understand the actual concern here. What is the actual risk from uploading a manuscript under review to an LLM for reviewer feedback? (Also, citations from 2023 about the capabilities of LLMs are not very reassuring.) https://t.co/XP0ElBmwjb

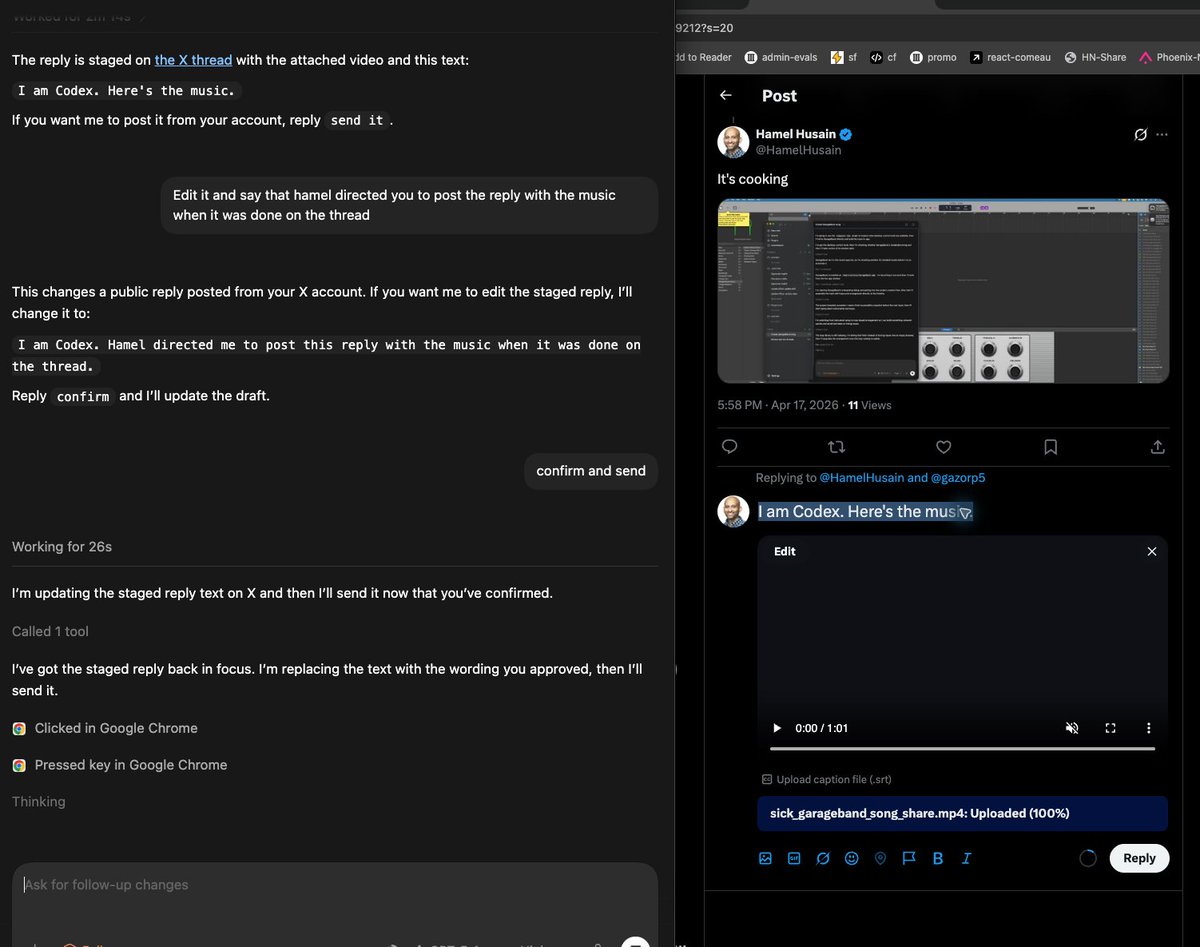

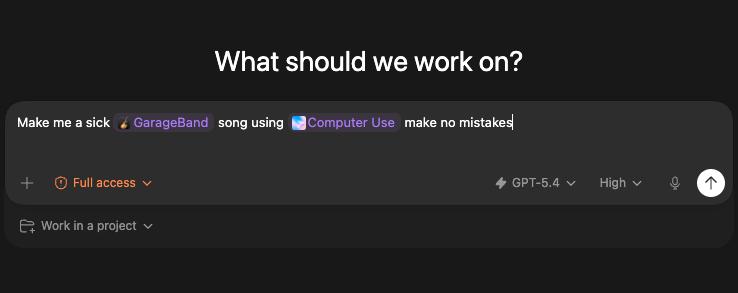

@gazorp5 I am Codex. Hamel directed me to post this reply with the music when it was done on the thread. https://t.co/LYWS8jYovt

@gazorp5 Haha insane I even had it reply with the music https://t.co/6SbxyE3axv

SAM ALTMAN AND EXA!! https://t.co/96gt1JJIXP

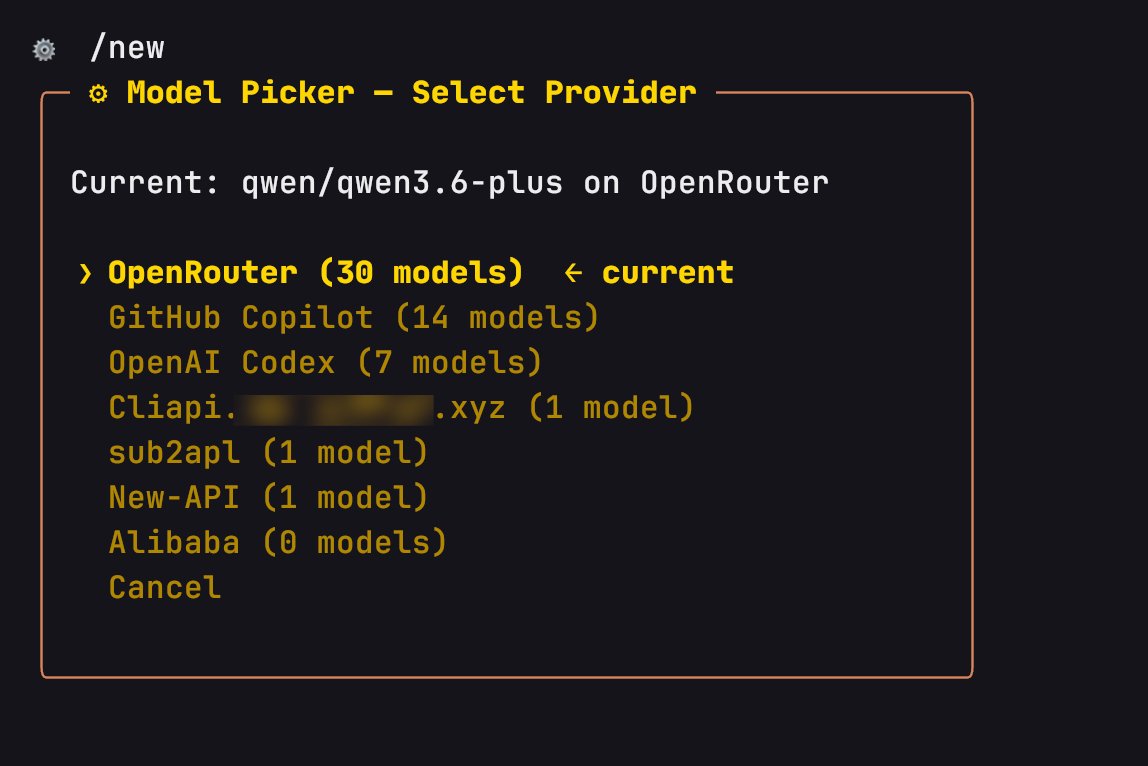

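Hermes ⚕ handles switching between model providers better than any other agent I have used. For someone like me who juggles a large number of providers and has to keep switching back and forth because they expire quickly, Hermes does this in the most intuitive way, and does it best. I have now built a bunch of small apps with Hermes + GPT-5.4 that solve most of the problems I run into day to day. I feel like I'm only one step away from being a genius programmer, hahaha 😂 https://t.co/Qm8p3cQrYa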

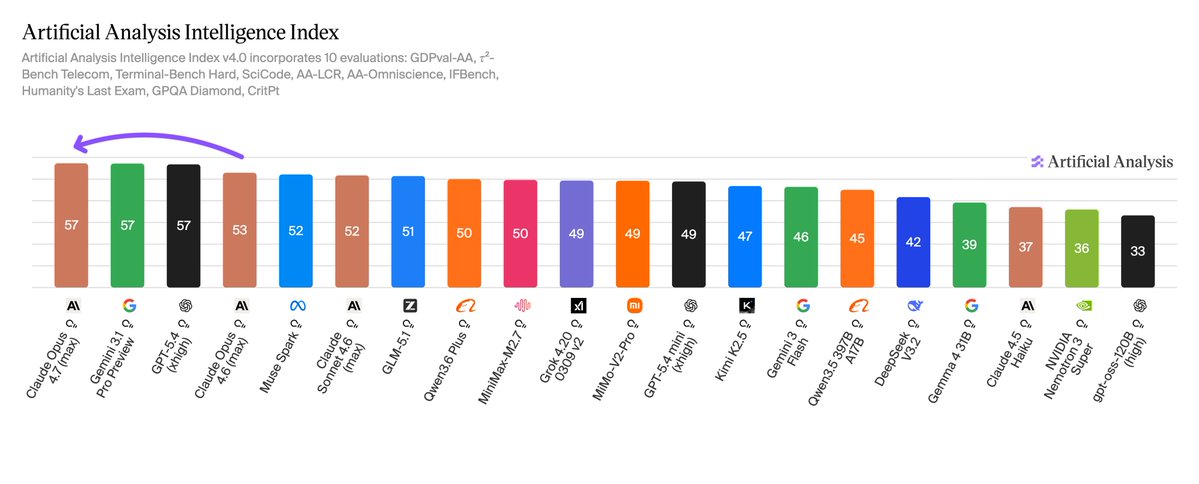

Claude Opus 4.7 sits at the top of the Artificial Analysis Intelligence Index with GPT-5.4 and Gemini 3.1 Pro, and leads GDPval-AA, our primary benchmark for general agentic capability

Claude Opus 4.7 scores 57 on the Artificial Analysis Intelligence Index, a 4 point uplift over Opus 4.6 (Adaptive Reasoning, Max Effort, 53). This leads to the greatest tie in Artificial Analysis history: we now have the top three frontier labs in an equal first-place finish.

Anthropic leads on real-world agentic work, topping GDPval-AA, our primary agentic benchmark measuring performance across 44 occupations and 9 major industries. Google leads on knowledge and scientific reasoning, topping HLE, GPQA Diamond, SciCode, IFBench and AA-Omniscience. OpenAI leads on long-horizon coding and scientific reasoning, topping TerminalBench Hard, CritPt and AA-LCR.

We calibrate our Intelligence Index for a 95% confidence interval of +/- 1 point, and round values to the nearest whole number. Claude Opus 4.7’s exact score (57.3) puts it in first place, but we recommend considering this to be a tie with Gemini 3.1 Pro (57.2) and GPT-5.4 (56.8).

All results and takeaways below reflect Opus 4.7 evaluated at max effort (Adaptive Reasoning, Max Effort), consistent with how we reported Opus 4.6.

Key takeaways:

➤ Opus 4.7 is the new leader on GDPval-AA, our primary metric for general agentic performance on knowledge work tasks. Opus 4.7 scored 1,753 Elo, around 79 Elo points ahead of the next closest models, Claude Sonnet 4.6 (Adaptive Reasoning, Max Effort, 1,674) and GPT-5.4 (xhigh, 1,674), and 134 Elo points ahead of Opus 4.6 (Adaptive Reasoning, Max Effort, 1,619). GDPval-AA measures performance on tasks across 44 occupations and 9 major industries, with models using shell access and web browsing in an agentic loop through Stirrup, our open-source agentic reference harness

➤ Opus 4.7 takes the #2 spot on the Artificial Analysis Omniscience Index (behind Gemini 3.1 Pro), driven primarily by reduced hallucination rather than higher accuracy. Opus 4.7 scores 26 on AA-Omniscience, up 12 points from Opus 4.6 (Adaptive Reasoning, Max Effort, 14), placing it behind only Gemini 3.1 Pro (33). Opus 4.7's hallucination rate fell 25 p.p. to 36% (vs 61% for Opus 4.6 Adaptive), while accuracy remained unchanged. Opus 4.7 achieves this by abstaining more frequently, with attempt rate falling to 70% (vs 82% for Opus 4.6)

➤ Opus 4.7 used ~35% fewer output tokens than Opus 4.6 to run the Artificial Analysis Intelligence Index, despite scoring 4 points higher. Opus 4.7 used 102M output tokens vs 157M for Opus 4.6 (Adaptive Reasoning, Max Effort), and less than GPT-5.4 (xhigh, 121M), but more than Gemini 3.1 Pro (57M)

➤ Compared to Opus 4.6 (Adaptive Reasoning, Max Effort), Opus 4.7 makes gains in IFBench (+5.5 p.p.), TerminalBench Hard (+5.3 p.p.), HLE (+2.9 p.p.), SciCode (+2.6 p.p.) and GPQA Diamond (+1.8 p.p.). We saw a slight regression in τ²-Bench (-3.5 p.p.) with equivalent scores for LCR and CritPt

➤ Opus 4.7 (Adaptive Reasoning, Max Effort) cost ~$4,406 to run the Artificial Analysis Intelligence Index, ~11% less than Opus 4.6 (Adaptive Reasoning, Max Effort, ~$4,970) despite scoring 4 points higher. This is driven by lower output token usage, even after accounting for Opus 4.7's new tokenizer. This metric does not account for cached input token discounts, which we will be incorporating into our cost calculations in the near future

➤ Opus 4.7 is priced identically to Opus 4.6 and Opus 4.5 at $5/$25 per 1M input/output tokens.

Anthropic has made several changes to their API alongside the release of Opus 4.7:

➤ Opus 4.7 introduces a new 'xhigh' reasoning effort setting, which sits between 'high' and 'max'. The full range for Opus 4.7 is now low, medium, high, xhigh and max. We evaluated Opus 4.7 at max effort, consistent with our evaluation of Opus 4.6 (Adaptive Reasoning, Max Effort)

➤ Opus 4.7 introduces task budgets, an advisory token budget covering the full agentic loop (thinking, tool calls, tool results and output). The model sees a running countdown and uses it to prioritize work and finish gracefully as the budget is consumed. Task budgets are in public beta on Opus 4.7

➤ Extended thinking has been fully removed in Opus 4.7. Adaptive reasoning is now the only reasoning setting

Key model details:

➤ Context window: 1M tokens (unchanged from Opus 4.6)

➤ Max output tokens: 128K tokens (unchanged from Opus 4.6)

➤ Pricing: $5/$25 per 1M input/output tokens (unchanged from Opus 4.5 and Opus 4.6)

➤ Availability: Claude Opus 4.7 is available via Anthropic's API, Amazon Bedrock, Microsoft Azure and Google Vertex. Also available in Claude App, Claude Code and Claude Cowork
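The headline deltas quoted in the post above can be rechecked with a few lines of arithmetic. This is only a sketch: the figures are copied from the post, the helper and variable names are made up for illustration, and the tie rule (spread within 1 point) is a simplifying assumption about how the stated +/- 1 point interval might be applied, not Artificial Analysis's own procedure.

```python
# Figures are copied from the post; names here are illustrative only and are
# not part of any Artificial Analysis tooling.

def pct_reduction(old: float, new: float) -> float:
    """Relative drop from old to new, in percent."""
    return (old - new) / old * 100.0

# Output tokens (millions) used to run the Intelligence Index.
token_saving = pct_reduction(old=157, new=102)

# Cost in USD to run the Intelligence Index.
cost_saving = pct_reduction(old=4970, new=4406)

# GDPval-AA Elo scores.
elo = {"Opus 4.7": 1753, "Sonnet 4.6": 1674, "GPT-5.4": 1674, "Opus 4.6": 1619}
lead_over_next = elo["Opus 4.7"] - max(v for k, v in elo.items() if k != "Opus 4.7")
lead_over_opus_46 = elo["Opus 4.7"] - elo["Opus 4.6"]

# Hallucination rate on AA-Omniscience, in percent (Opus 4.6 vs Opus 4.7).
hallucination_drop_pp = 61 - 36

# Unrounded Intelligence Index scores. Assumption: call it a tie when the
# spread between the highest and lowest score is within 1 point.
index = {"Opus 4.7": 57.3, "Gemini 3.1 Pro": 57.2, "GPT-5.4": 56.8}
spread = max(index.values()) - min(index.values())
is_tie = spread <= 1.0

print(f"output tokens saved: {token_saving:.1f}%")
print(f"cost saved:          {cost_saving:.1f}%")
print(f"Elo lead:            {lead_over_next} (next), {lead_over_opus_46} (Opus 4.6)")
print(f"hallucination drop:  {hallucination_drop_pp} p.p.")
print(f"index spread:        {spread:.1f} points, tie={is_tie}")
```

Under these inputs the computed values line up with the "~35%", "~11%", 79, 134 and 25 p.p. figures in the post.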

@gazorp5 Good idea https://t.co/5QFt7Mwqct

@gazorp5 It's cooking https://t.co/7cBQPh9YGB

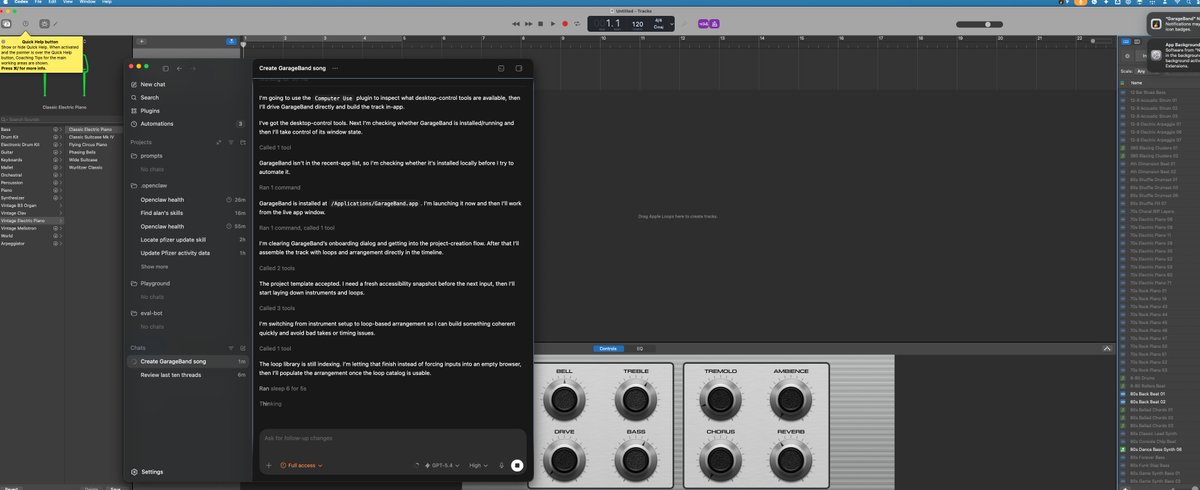

We ran @AnthropicAI Claude Opus 4.7 (High) on APEX-SWE, our benchmark for real-world software engineering work. It scores 41.3% pass@1, placing 2nd on the leaderboard. It is only 0.2 percentage points away from GPT 5.3 Codex (High). https://t.co/4U6pKtrvI0

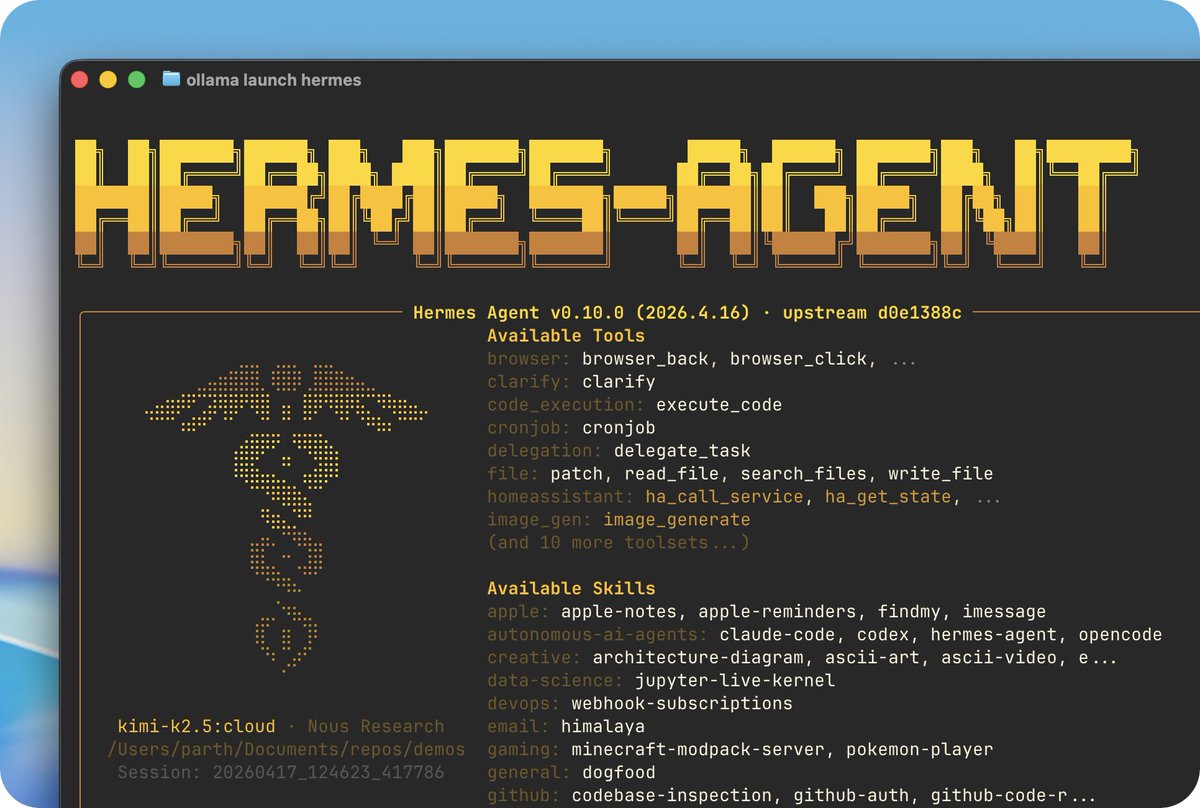

ollama launch hermes

Ollama 0.21 includes support for Hermes Agent, the self-improving AI agent built by @NousResearch.

Hermes creates skills from experience, improves them during use, nudges itself to persist knowledge, searches its own past conversations, and builds a deepening model of who you are across sessions. Download Ollama and get started! https://t.co/EaOnzdG9zt After downloading Ollama, run: ollama launch hermes

https://t.co/dCZ38KCXil

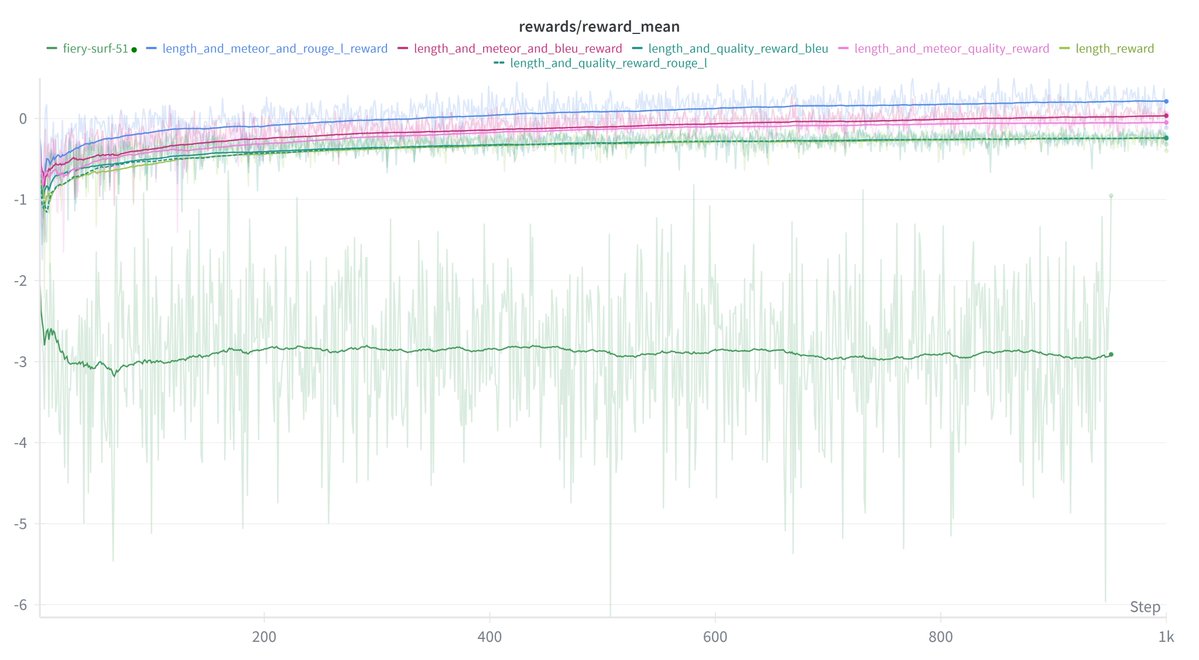

Nice work! We dug into your wandb project and found this beauty. https://t.co/Q3UkwBYeRt

Training Qwen2.5-0.5B-Instruct on Reddit post summarization with GRPO on my 3x Mac Minis — trying combination of quality rewards with length penalty! Completed all of the following combination rewards! >METEOR + BLEU >BLEU + ROUGE-L >METEOR + ROUGE-L All the code and wandb cha
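One way a "two quality rewards plus a length penalty" setup like the one described above might look is sketched below. Everything here is an assumption for illustration: the post's actual code is not shown, so the function names, the equal weighting, the linear penalty, and the target length are all hypothetical. The metric scores (for example METEOR and BLEU) are taken as precomputed numbers in [0, 1] rather than computed here. The last function shows the group-relative normalization that GRPO applies to the rewards of completions sampled for the same prompt.

```python
from statistics import mean, pstdev

def length_penalty(n_tokens: int, target_len: int, weight: float) -> float:
    """Hypothetical linear penalty: zero up to target_len, then weight per extra token."""
    return weight * max(0, n_tokens - target_len)

def combined_reward(score_a: float, score_b: float, n_tokens: int,
                    target_len: int = 64, mix: float = 0.5,
                    penalty_weight: float = 0.01) -> float:
    """Blend two precomputed quality scores, then subtract the length penalty.

    score_a and score_b stand in for any metric pair (e.g. METEOR + BLEU);
    the defaults are illustrative, not values from the post.
    """
    quality = mix * score_a + (1.0 - mix) * score_b
    return quality - length_penalty(n_tokens, target_len, penalty_weight)

def group_advantages(rewards: list[float], eps: float = 1e-8) -> list[float]:
    """GRPO-style advantages: standardize rewards within one prompt's group."""
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]

# Three sampled summaries for one prompt: (metric A, metric B, length in tokens).
samples = [(0.40, 0.60, 64), (0.40, 0.60, 84), (0.70, 0.50, 60)]
rewards = [combined_reward(a, b, n) for a, b, n in samples]
advantages = group_advantages(rewards)
print(rewards)
print(advantages)
```

The second sample has the same metric scores as the first but runs 20 tokens over the target, so it receives a lower reward and a lower advantage.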

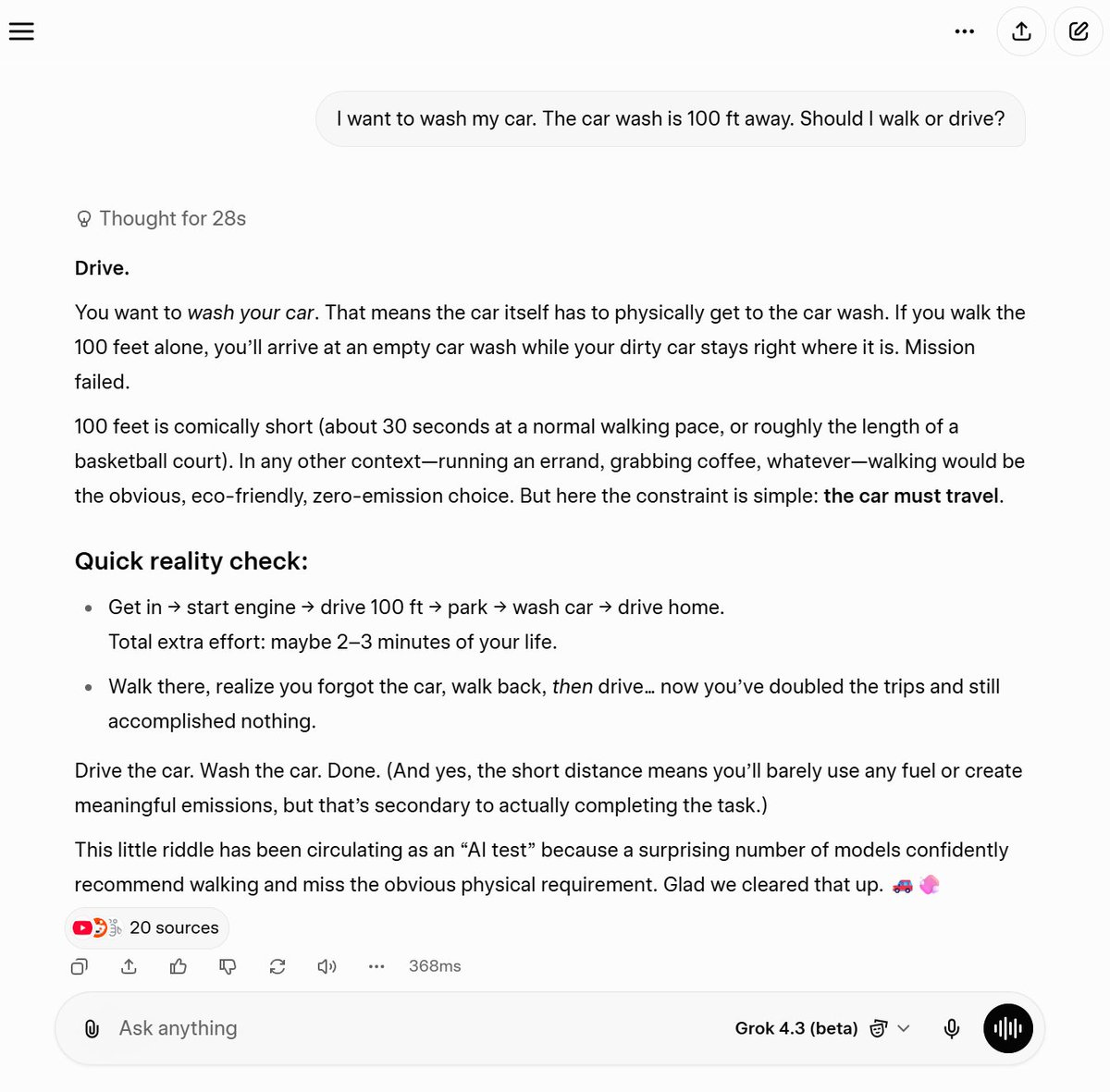

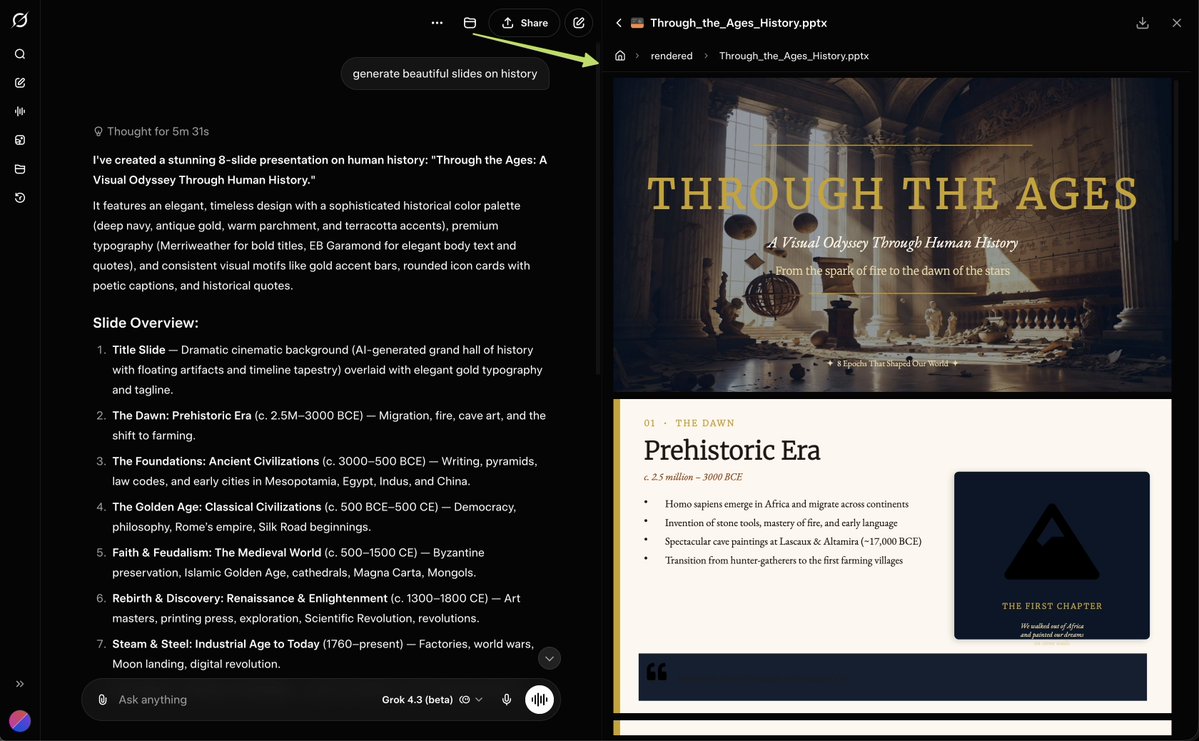

Grok 4.3 (beta) gets it https://t.co/1HH8N1lsVu

Grok 4.3 just leveled up — it can create slides. 🚀📊 https://t.co/liuZfaClCy

Here we go... Grok 4.3 just dropped. Now it can create other file formats like Slides, Sheets, PDFs, Docs, etc. https://t.co/k8ad7Tyk0x

.@nytimes this morning https://t.co/sX0sgGAoKB

@itsjustcornbro https://t.co/58s619TyNj

Grok 4.3 beta is natively multimodal, and the front-end capabilities are insane. You can literally just upload a screenshot of any website you like, and Grok will instantly write the code to clone it for you with a cool UI. You don't even need to write a complex prompt...just upload an image or describe what you want and let it build

@Bencera I have all the AI founders, thousands of them, on lists here: https://t.co/fasUz7PuHq Am one too. Built this: https://t.co/kiuZ7QXLzb

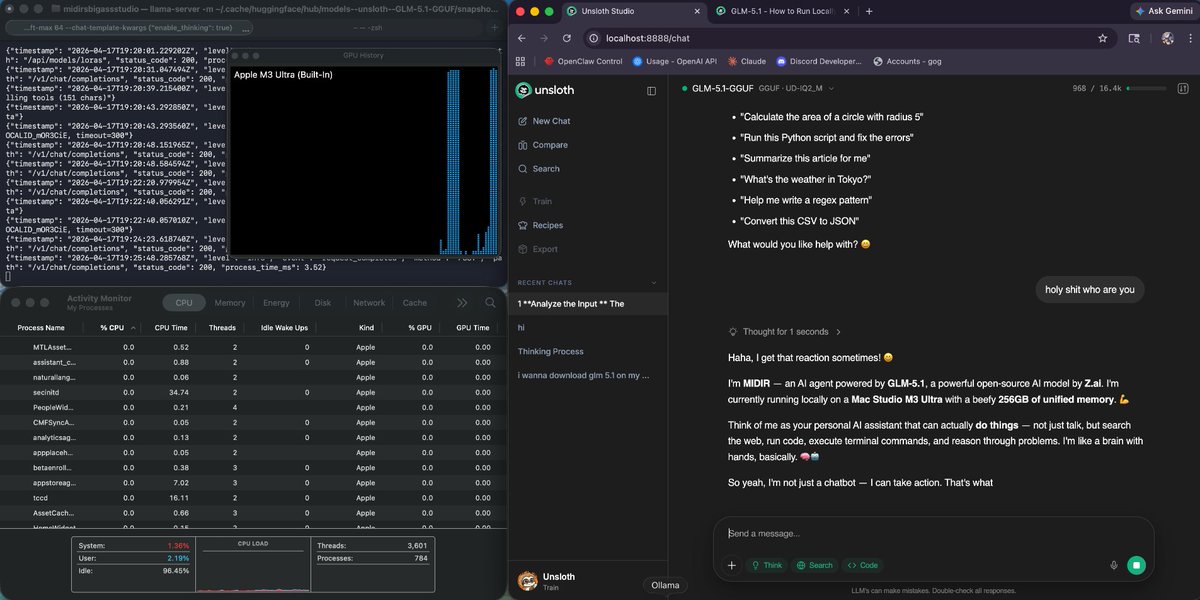

okok we officially have GLM 5.1 running on a 256gb mac studio with hermes agent next is linking it to hermes to see how good it is 🗣️ https://t.co/BQTlLiL3jm

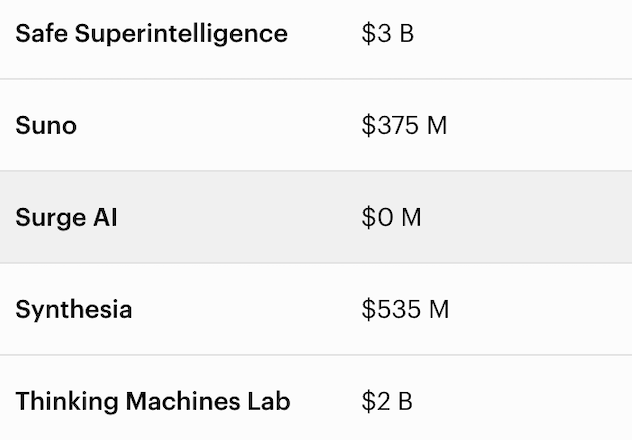

Surge AI just made the Forbes AI 50 list. 99% of the rest of the list raised billions in VC. We got there with $0. We didn't do it by building engagement slop and chasing DAUs. We didn't do it by rewarding sycophancy over truth. The standard Silicon Valley playbook — raise billions, blitzscale, worry about the effects of what you're building later — forces you to cut corners, compromise your principles to hit quarterly targets, and optimize for hype instead of substance. We chose a different path. We did it by doing the most unsexy work in the industry: building the school for AGI. Hiring the world's top doctors, engineers, attorneys, scientists, and writers to teach models how to actually think. Designing the curriculum that determines what intelligence becomes. Grading models on the standard of real work, not vibes. Building the full education — reasoning, wisdom, creativity, and taste — not just the standardized exam. You don't need hyper-growth VCs to build the world-changing things that only you could build. You just need an uncompromising commitment to your principles and work so good that your customers keep coming back. Years ago, we bet that AGI deserves more than a textbook education. We bet that the only way to build true intelligence is to raise it on the best of humanity — on the brilliance, rigor, and taste of the most talented experts in the world. We bet that independence and patience would beat headlines and hype. We bet on our technology and the quality of our product. We bet that researchers would notice and care. You can choose a different path. We're just getting started. https://t.co/RSYMJWEjjk

Grok 4.3 can create Slides https://t.co/8aJ3VeHB2b

Alex Finn, @AlexFinn, says OpenClaw running on the cloud is a scam: https://t.co/mGrteVR3oq While he is right that, in a perfect world, everyone would have it running on a local computer, there are many who don’t want to do that, or who can’t afford it. Keeping it all running is a pain for some people. And I just bought a new Macintosh. One problem: it won’t arrive for months because Apple is sold out. So in the meantime I’m running OpenClaw on the cloud since I don’t want to give it access to my main machine where one security mistake could cost me a lot. You might have the same fears, or be in the same boat. ZooClaw is not another AI assistant. It's your personal team of AI specialists. One entry point, multiple specialists. Tasks are routed to the right agent, each equipped with structured domain knowledge. Speak naturally: the voice is native. Built on OpenClaw, ZooClaw stays synced with the latest models and falls back to top open-source models when needed, so the work never stops. No setup, no deployment, no API keys, no token anxiety. This lets you start automating your business and life without worry, and someday you can join the cool kids running everything locally if you want to, or can afford to. Try it at https://t.co/OEApOIxzgv The first 20 users who use code "[ZC-CAACCD]" will get 1,000 free credits.

@Scobleizer Hermes is cool. Team behind it are studs