Your curated collection of saved posts and media

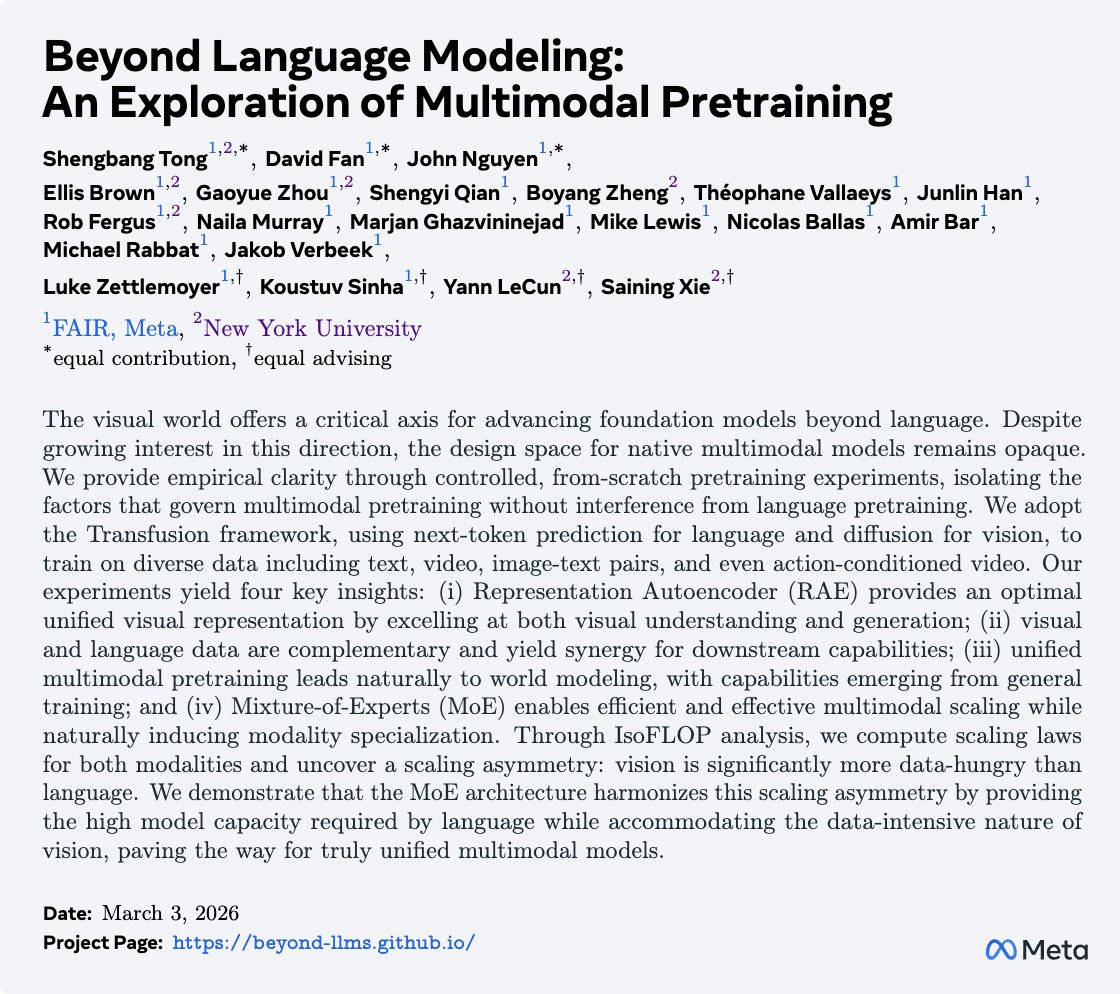

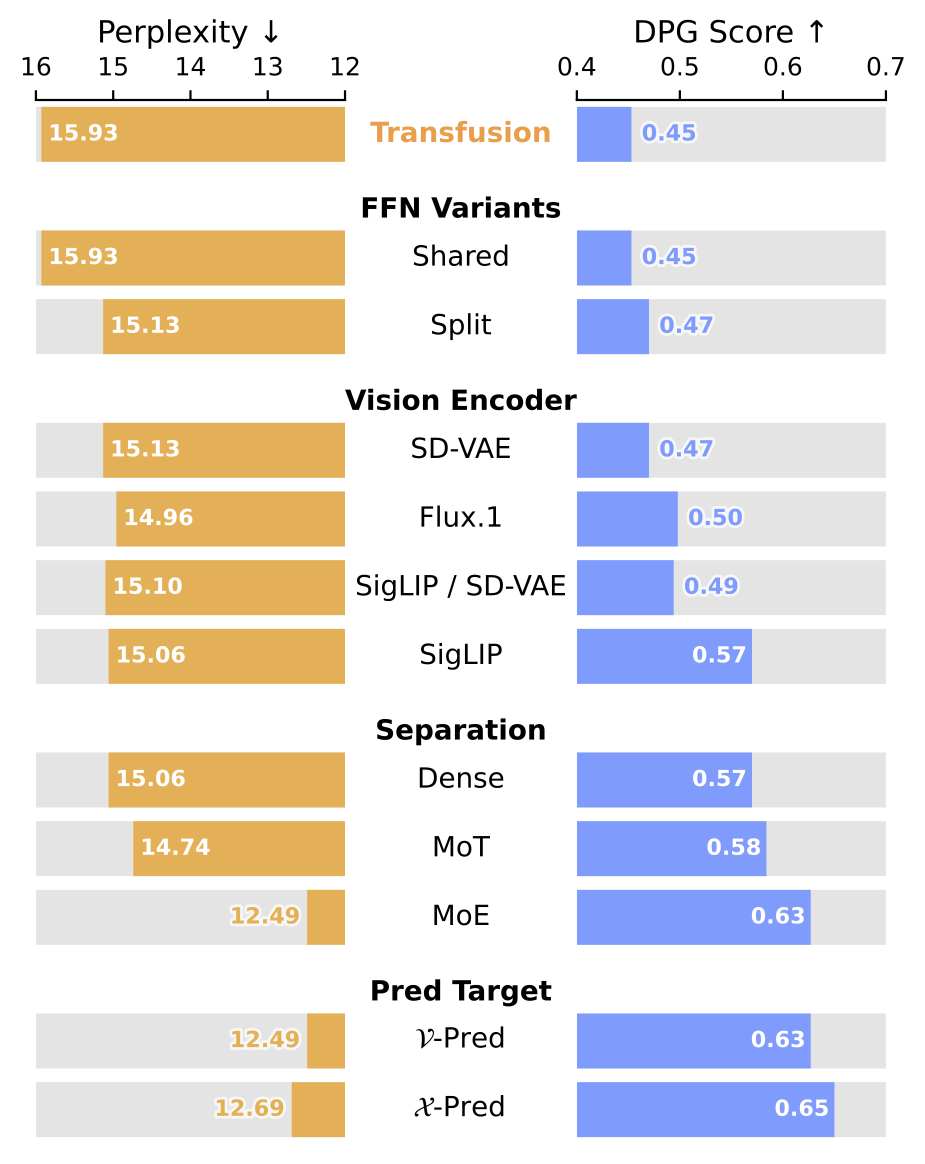

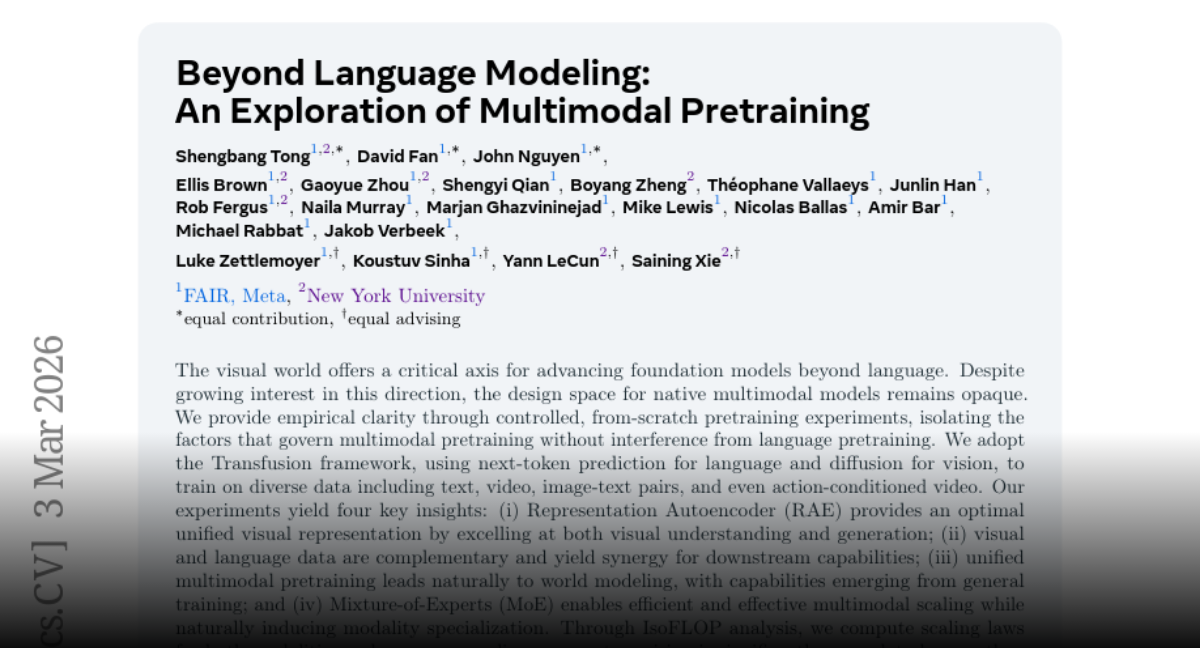

Humans communicate through language and interact with the world through vision, yet most multimodal models are language-first. What happens when we go beyond language? 🤔 Beyond Language Modeling: a deep dive into the design space of truly native multimodal models Paper: https://t.co/KOpmL1PItn Project: https://t.co/Oy6XuEtUAi

Not only is Russia not winning, Ukraine would be decisively winning, but for Trump and his negotiators propping up Putin. We have taken sides, and we have taken the wrong side. If that is because of personal side deals with Russia, it’s unforgivable. https://t.co/a4CZo9KRqq

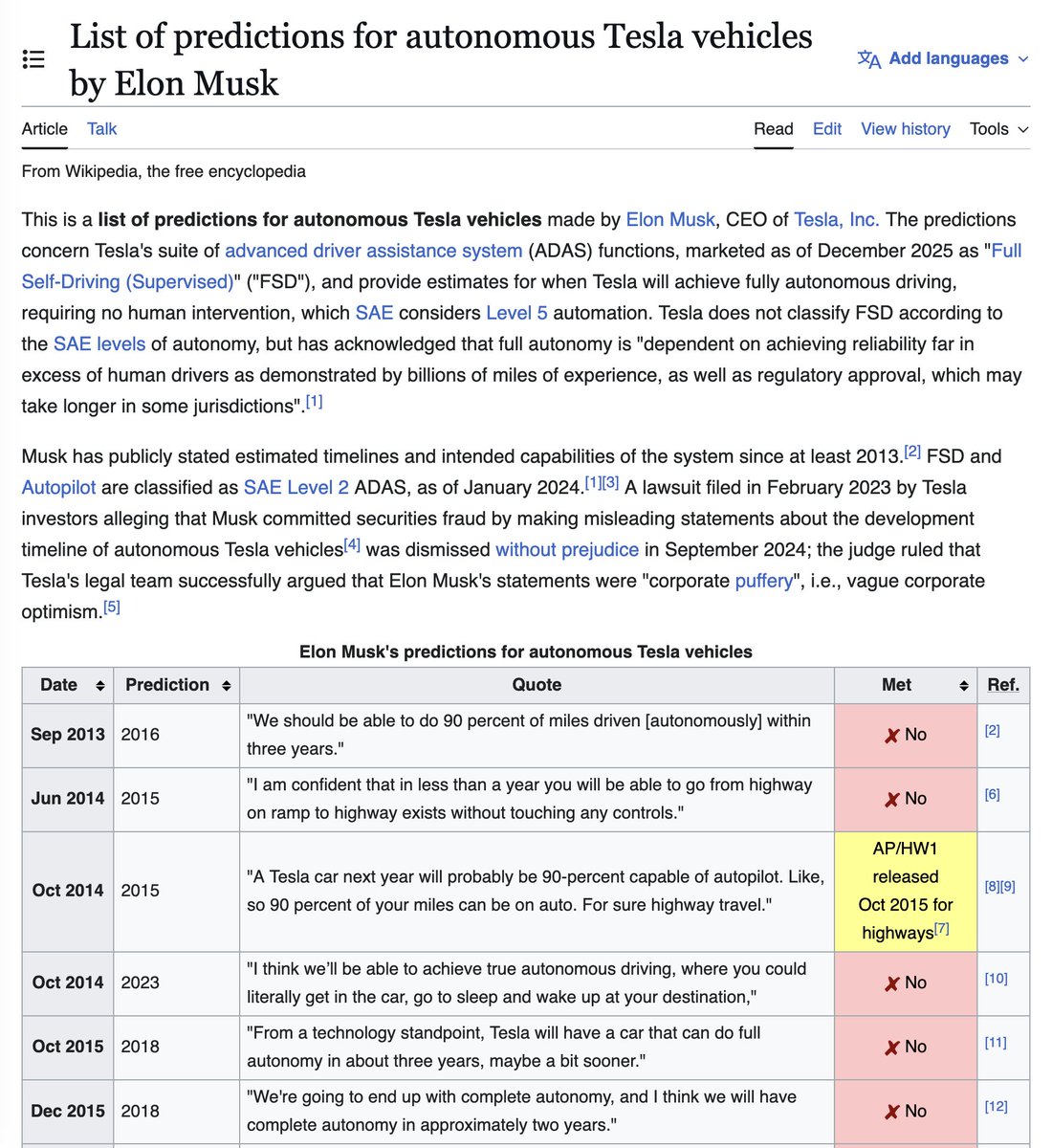

https://t.co/3zK7KT07I5

Beyond Language Modeling: An Exploration of Multimodal Pretraining. Paper: https://t.co/GmtPAQDo8T

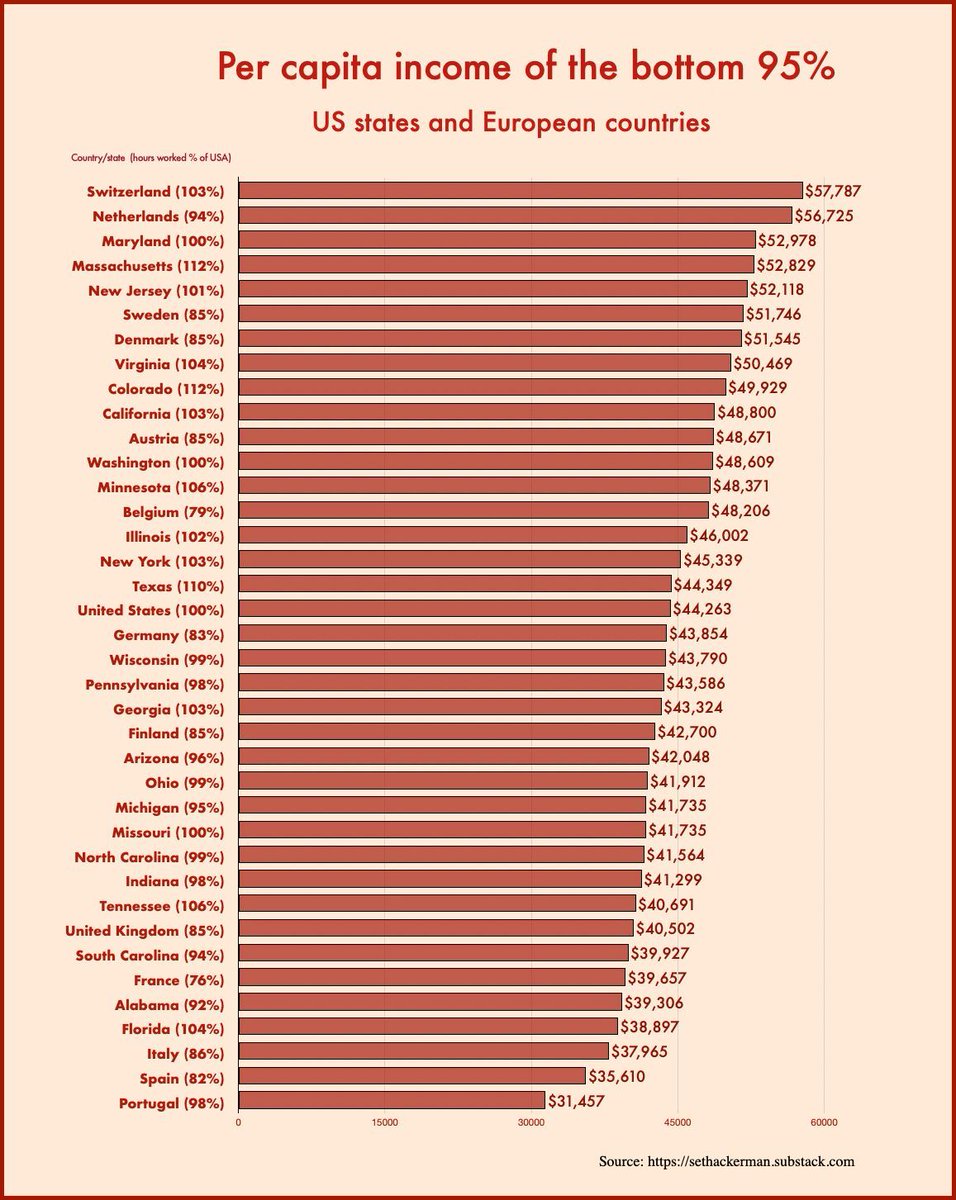

How to read this chart: the typical Belgian earns as much as the typical Californian but works about 24% less. Pretty smart move to calculate such data for the “bottom 95%” only. Worth exploring further. Source: https://t.co/Mfv6fc8DGw https://t.co/D6zuzB35Ju
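
Equal pay for fewer hours implies a higher hourly rate. Here is a back-of-the-envelope sketch. It assumes "earns as much" means identical annual earnings and "works about 24% less" means 24% fewer annual hours. Both are simplifications of the chart, and `hourly_ratio` is a hypothetical helper:

```python
def hourly_ratio(earnings_ratio: float, hours_reduction: float) -> float:
    """Return (hourly pay of A) / (hourly pay of B).

    earnings_ratio: A's annual earnings divided by B's annual earnings.
    hours_reduction: fraction by which A's annual hours are lower than B's.
    Assumption: hourly pay = annual earnings / annual hours.
    """
    return earnings_ratio / (1.0 - hours_reduction)

# Belgian (A) vs. Californian (B): same earnings, about 24% fewer hours.
ratio = hourly_ratio(1.0, 0.24)
print(f"Implied hourly pay premium: {ratio - 1:.1%}")
```

Under these assumptions, the typical Belgian's hourly pay comes out roughly 32% higher (1 / 0.76 ≈ 1.316), not 24% higher. The hours reduction sits in the denominator, so the premium exceeds the reduction.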

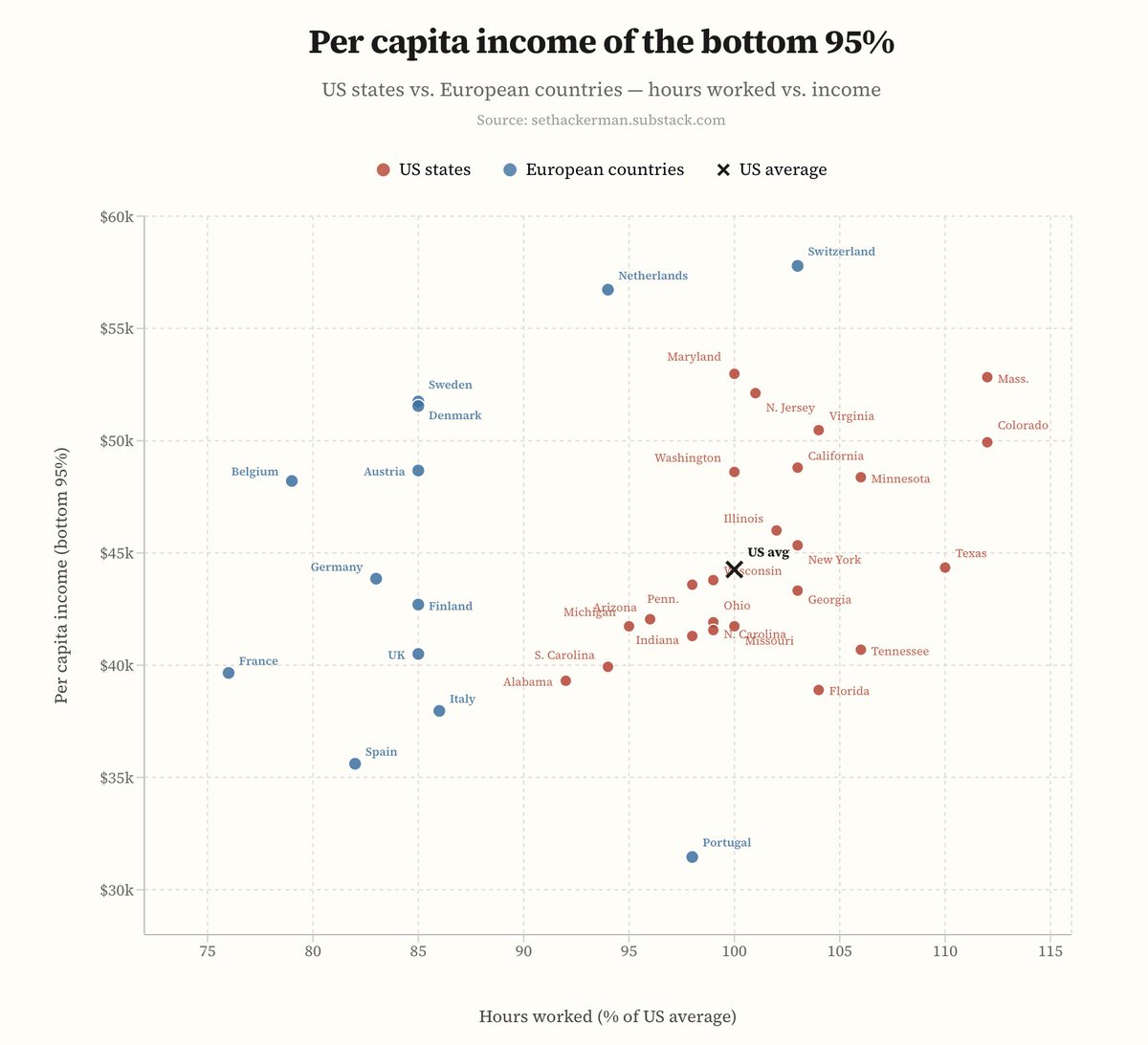

@simongerman600 I made you the scatter plot you should have created in the first place. https://t.co/YKlU2lWqPS https://t.co/dulM6lK8eZ

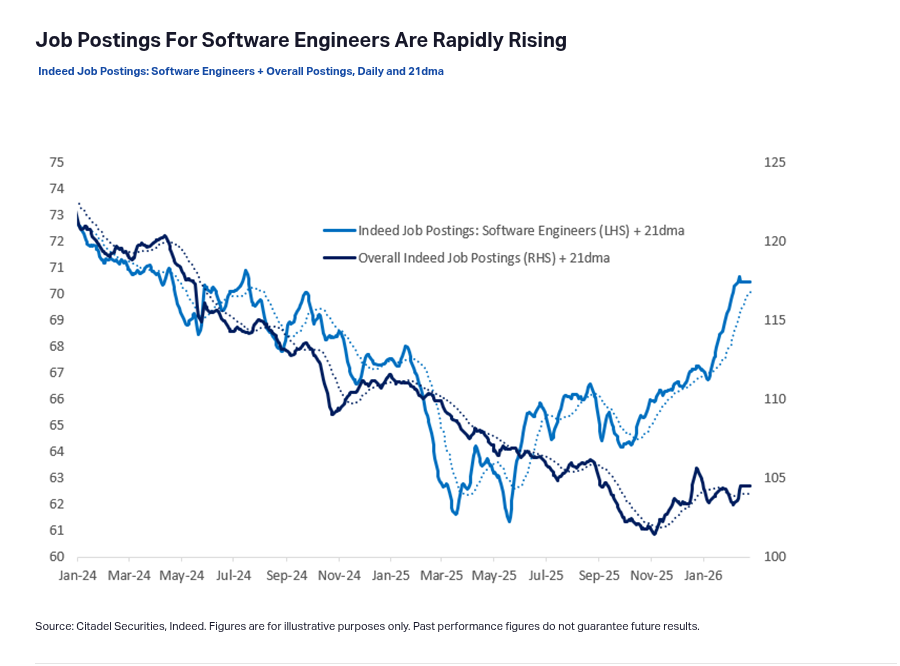

Citadel Securities published this graph showing a strange phenomenon: job postings for software engineers are actually seeing a massive spike. It's a classic example of the Jevons paradox. When AI makes coding cheaper, companies may actually need a lot more software engineers, not fewer. When software is cheaper to build, companies naturally want to build a lot more of it, and businesses are now putting software into industries and tools where it was simply too expensive before. --- Chart from citadelsecurities.com/news-and-insights/2026-global-intelligence-crisis/
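
The Jevons paradox argument depends on demand being elastic enough. If a price drop raises the quantity demanded by a larger proportion than the drop itself, total spending goes up. The sketch below uses a hypothetical constant-elasticity demand curve, Q = A * P^(-elasticity). The elasticity values are illustrative assumptions, not figures from the Citadel Securities chart. Treating "total spending on software" as a proxy for demand for engineers is also a loose assumption:

```python
# Hypothetical constant-elasticity demand model (illustrative assumption,
# not data from the Citadel Securities chart): Q = A * P ** (-elasticity).

def quantity_multiplier(price_ratio: float, elasticity: float) -> float:
    """Factor by which quantity demanded changes when price is scaled by price_ratio."""
    return price_ratio ** (-elasticity)

def spending_multiplier(price_ratio: float, elasticity: float) -> float:
    """Factor by which total spending (P * Q) changes when price is scaled by price_ratio."""
    return price_ratio * quantity_multiplier(price_ratio, elasticity)

# Suppose AI halves the cost of building a unit of software.
for e in (0.5, 1.0, 1.5):
    q = quantity_multiplier(0.5, e)
    s = spending_multiplier(0.5, e)
    print(f"elasticity={e}: software built x{q:.2f}, total spending x{s:.2f}")
```

With elasticity above 1, halving the cost more than doubles the amount of software built and raises total spending. With elasticity below 1, more software gets built but total spending falls, and the paradox does not apply.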

Yann LeCun 🤝 Saining Xie: an insane crossover of the two biggest visual representation researchers in the AI field. “Beyond Language Modeling: An Exploration of Multimodal Pretraining” Right now, most multimodal models are basically a language model with a vision adapter bolted on, so they can describe images, but they don’t really think in images or video. This paper shows what happens when you do it the hard way: train one model from scratch on text, images, and video with a unified setup. The key idea is that if you give the model a good internal visual representation, it can use vision for both understanding and generation. Additionally, multimodal data can improve language performance instead of distracting from it, and mixture-of-experts lets you scale to vision’s huge data intake without bloating everything else. This paves the way toward shifting the vision paradigm from a “captioning add-on” model to a native multimodal foundation model.
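
Why can mixture-of-experts scale capacity "without bloating everything else"? Total parameters grow with the number of experts, but each token is routed to only `top_k` of them, so per-token compute stays roughly flat. The post does not describe the paper's actual MoE configuration. This is generic top-k MoE parameter arithmetic, and the layer sizes and the `FFNLayer` class are hypothetical:

```python
from dataclasses import dataclass

@dataclass
class FFNLayer:
    """Feed-forward block; num_experts=1 models a plain dense layer (hypothetical sizes)."""
    d_model: int
    d_ff: int
    num_experts: int = 1
    top_k: int = 1  # number of experts each token is routed to

    def params_per_expert(self) -> int:
        # Up-projection (d_model x d_ff) plus down-projection (d_ff x d_model).
        # Biases and the small router weight matrix are ignored for simplicity.
        return 2 * self.d_model * self.d_ff

    def total_params(self) -> int:
        # Capacity stored in the layer: grows with the number of experts.
        return self.num_experts * self.params_per_expert()

    def active_params(self) -> int:
        # Parameters a single token actually passes through: grows only with top_k.
        return min(self.top_k, self.num_experts) * self.params_per_expert()

dense = FFNLayer(d_model=1024, d_ff=4096)
moe = FFNLayer(d_model=1024, d_ff=4096, num_experts=16, top_k=1)

for name, layer in (("dense", dense), ("moe", moe)):
    print(f"{name}: total={layer.total_params():,} active/token={layer.active_params():,}")
```

In this toy setup, the MoE layer stores 16 times the parameters of the dense layer while each token touches the same number of them.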

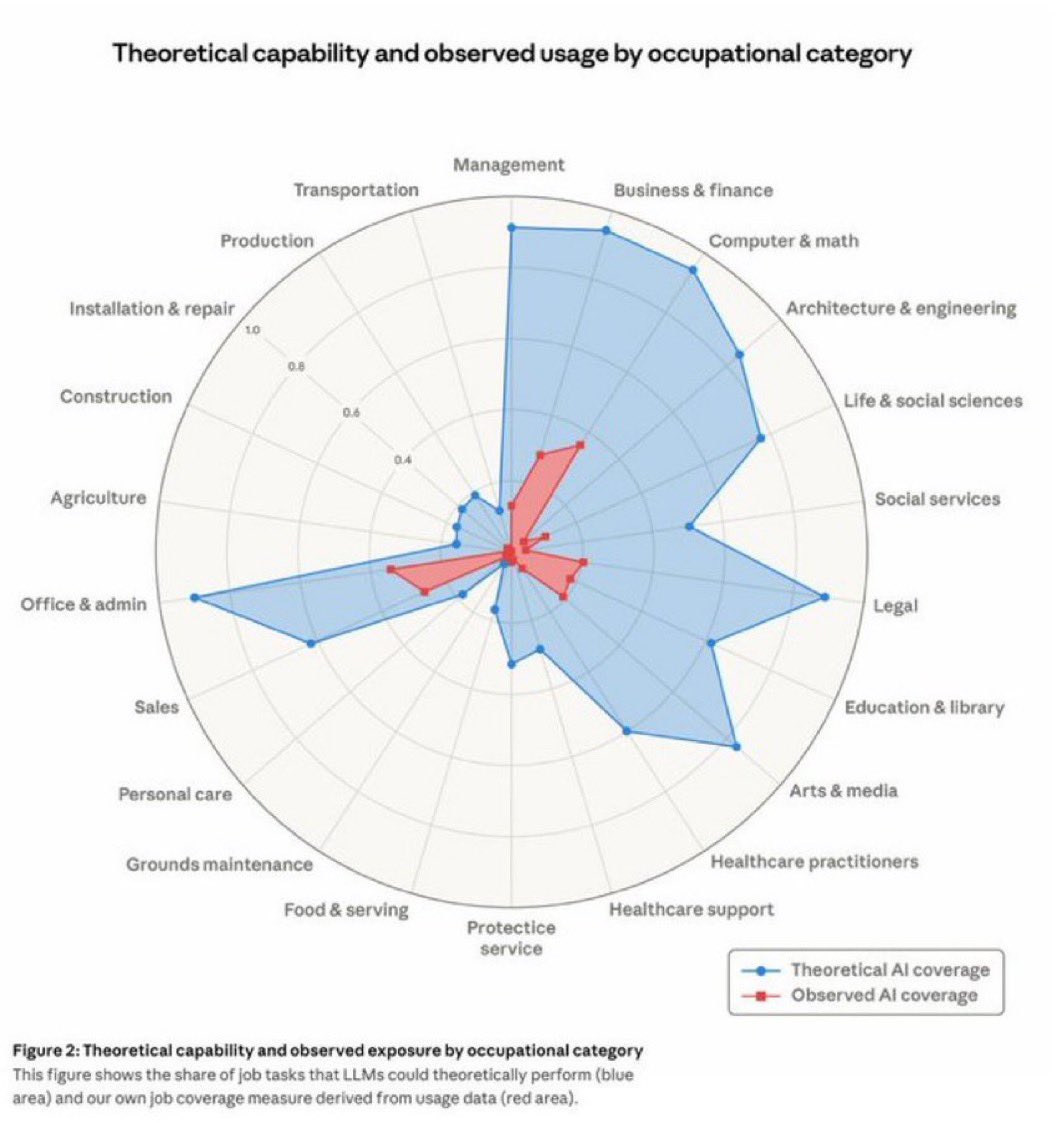

Anthropic's Revealing Chart on AI's Impact on Jobs. Anthropic has unveiled a pivotal chart that underscores the chasm between AI's capabilities and its real-world application in the workforce. Derived from an analysis of 2 million actual conversations with Claude, this radar chart, titled "Theoretical Capability and Observed Usage by Occupational Category," paints a stark picture of untapped automation potential across job sectors. At its core, the chart is a spider-web diagram plotting occupational categories around a circular axis, with values from 0 to 1.0 representing the share of job tasks. The expansive blue area shows theoretical coverage: the tasks that large language models (LLMs) like Claude could perform right now based on their inherent abilities. In contrast, the much smaller red area shows observed usage, drawn from real user interactions. The visual disparity is immediate and profound: blue extends far outward in fields like computer and math (reaching about 0.75), business and finance, and office administration, while red hugs the center, often below 0.2 in most categories. This gap isn't just academic; it's a "career runway," as highlighted in discussions around the chart. For programmers, 75% of tasks are theoretically automatable, yet actual usage lags far behind. Similar vulnerabilities appear in customer service, data entry, and financial analysis, roles traditionally seen as white-collar strongholds. Meanwhile, hands-on fields like construction, agriculture, and protective services show lower theoretical exposure, with blue areas dipping to around 0.1–0.3, suggesting AI's current limitations in physical or unpredictable environments. Broader data amplifies the chart's message. As of early 2026, 49% of U.S. jobs have at least 25% of their tasks exposed to AI, up from 36% a year prior. Yet mass layoffs haven't materialized; unemployment in AI-vulnerable roles remains steady.
Instead, subtler shifts are underway: a 14% drop in hiring for 22- to 25-year-olds in exposed positions indicates that companies are prioritizing experienced workers, shortening entry-level pathways for recent graduates. The implications are clear: while AI's red footprint grows incrementally each month, the blue expanse signals accelerating change. College-educated, higher-earning professionals, once insulated, are now most at risk, flipping the script on traditional labor disruptions. Anthropic's chart isn't a doomsday prophecy but a wake-up call, urging workers and businesses to bridge the gap through adaptation, upskilling, and ethical integration of AI tools. Please read the 5000 Days Series at https://t.co/tcKeuiQyql for answers on how you can thrive in the Interregnum.

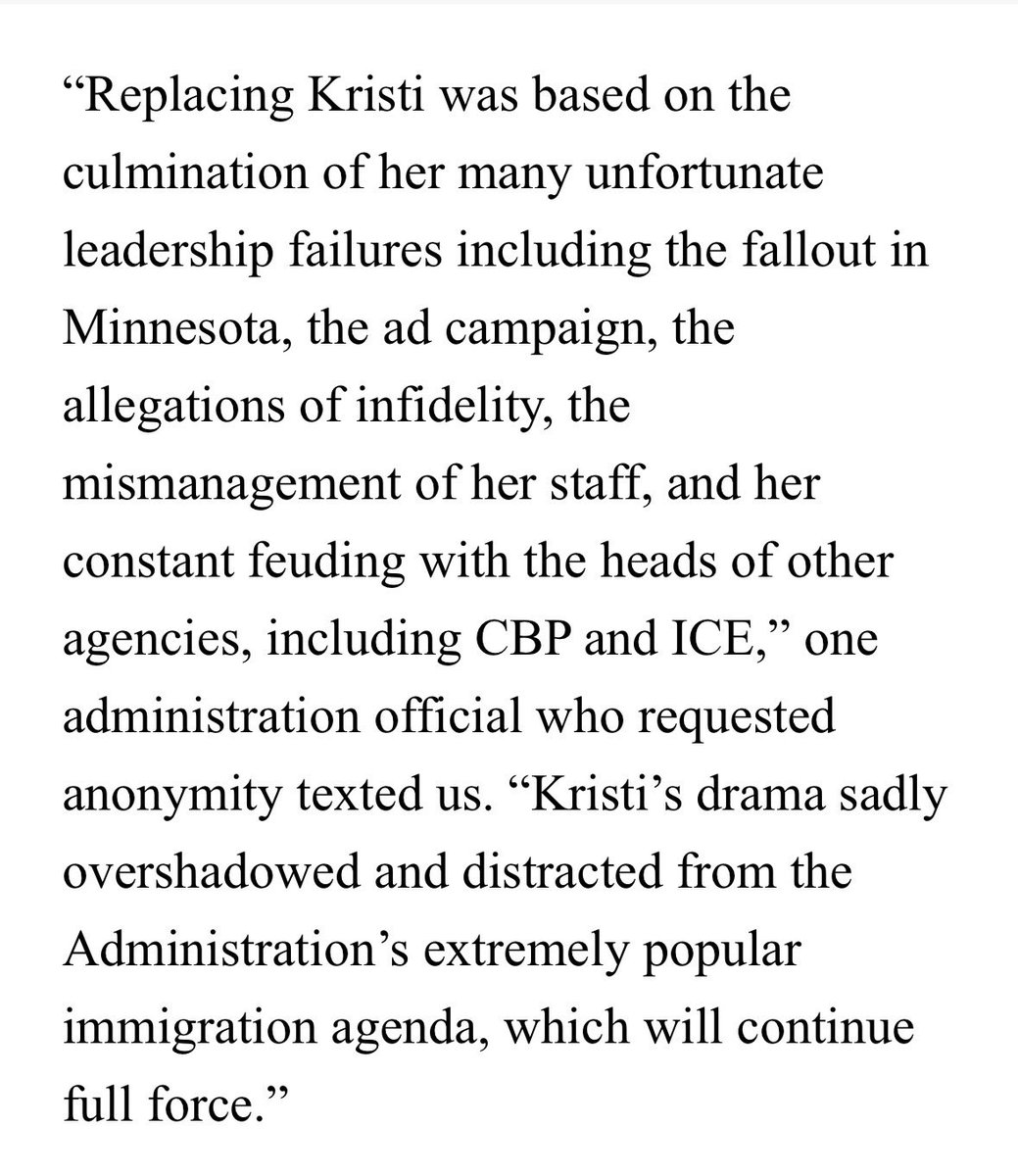

This is an amazing quote about Kristi Noem. https://t.co/sJnsDqfMB2 https://t.co/HGS92bmExB

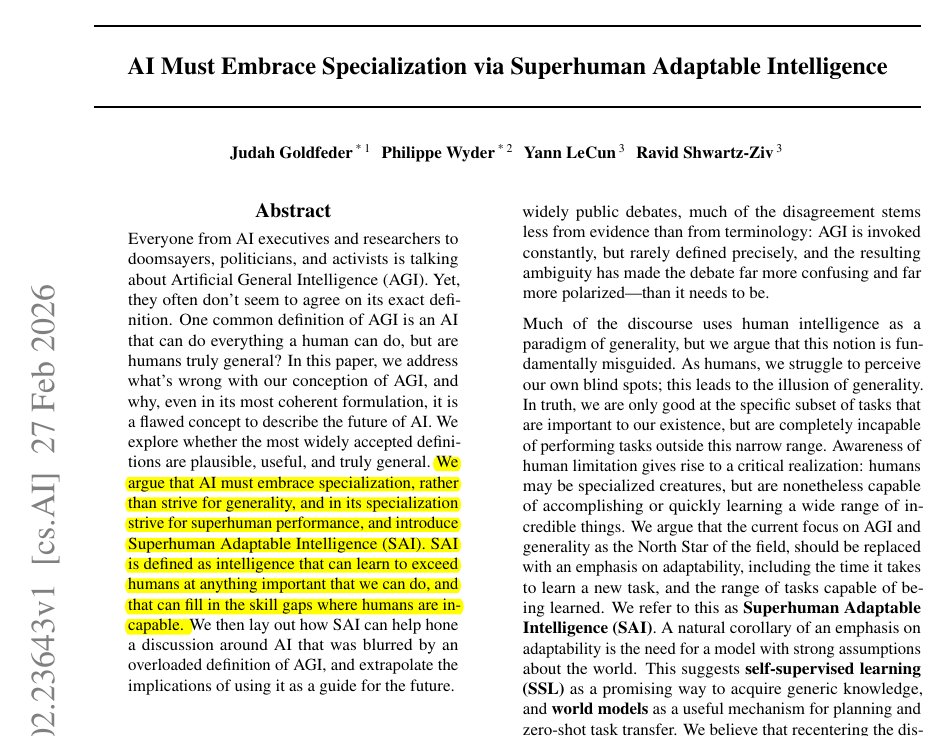

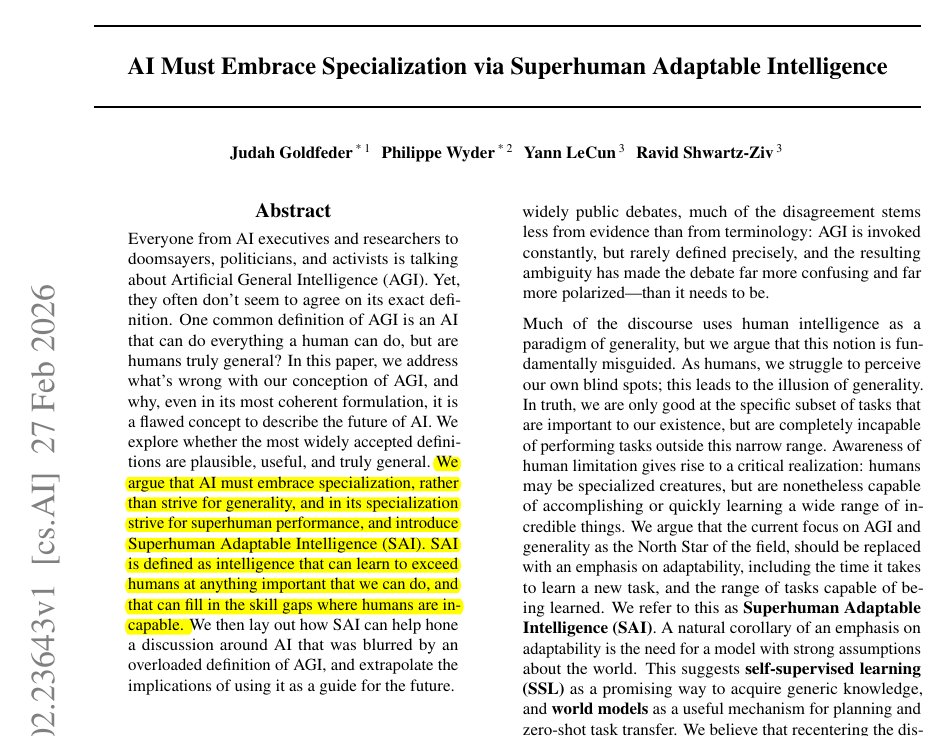

Yann LeCun's (@ylecun) new paper, written with other top researchers, proposes a brilliant idea. 🎯 It argues that chasing general AI is a mistake and that we must build superhuman adaptable specialists instead. The whole AI industry is obsessed with building machines that can do absolutely everything humans can do. But this goal is fundamentally flawed, because humans are actually highly specialized creatures optimized for physical survival. Instead of forcing one giant model to master every possible task, from folding laundry to predicting protein structures, they suggest building expert systems that learn generic knowledge through self-supervised methods. By using internal world models to understand how things work, these specialized systems can quickly adapt to solve complex problems that human brains simply cannot handle. This shift means we can stop wasting computing power on replicating human traits and focus on building diverse tools that actually solve hard real-world problems. Overall, the researchers propose a new target called Superhuman Adaptable Intelligence, which focuses strictly on how fast a system learns new skills. The paper explicitly argues that evolution shaped human intelligence as a specialized tool for physical survival. The researchers state that nature optimized our brains specifically for the tasks necessary to stay alive in the physical world. They explain that abilities like walking or seeing seem incredibly general to us only because they are critical to our existence. The authors point out that humans are actually terrible at cognitive tasks outside this evolutionary comfort zone, such as computing complex probabilities. The study highlights how a chess grandmaster looks intelligent only compared with other humans, while modern computers easily surpass those human limits.
This supports their central point that humanity suffers from an illusion of generality simply because we cannot perceive our own biological blind spots. They conclude that building machines to mimic this narrow human survival toolkit is a deeply flawed way to create advanced technology.

Introducing the Google Workspace CLI: https://t.co/8yWtbxiVPp - built for humans and agents. Google Drive, Gmail, Calendar, and every Workspace API. 40+ agent skills included.

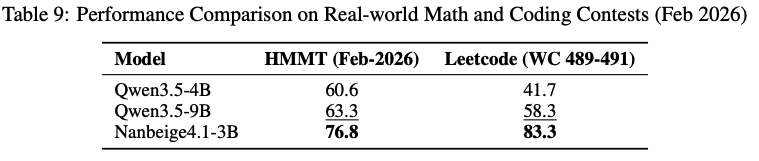

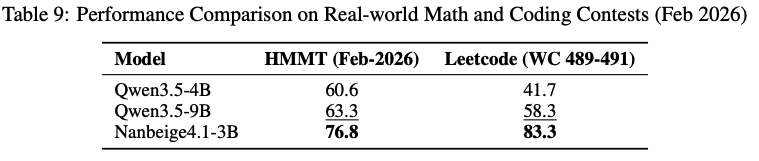

In both LeetCode Weekly Contests 489–491 and HMMT February 2026 (the Harvard-MIT Mathematics Tournament), Nanbeige4.1-3B not only significantly outperformed Qwen3.5-4B but also surpassed Qwen3.5-9B. https://t.co/2guwzB3yNa

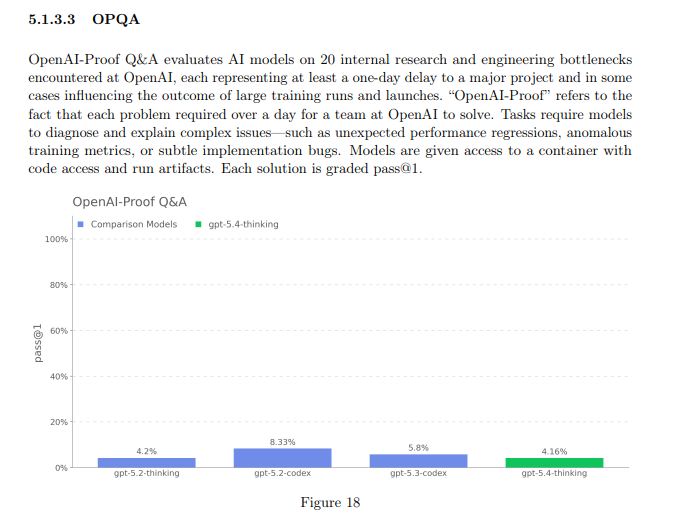

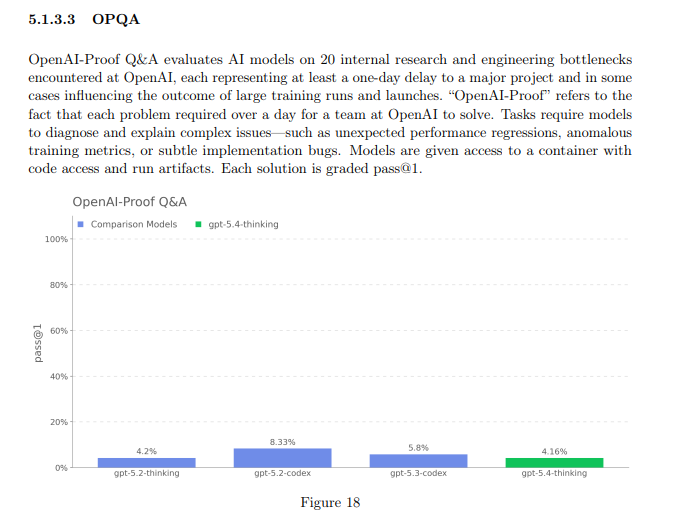

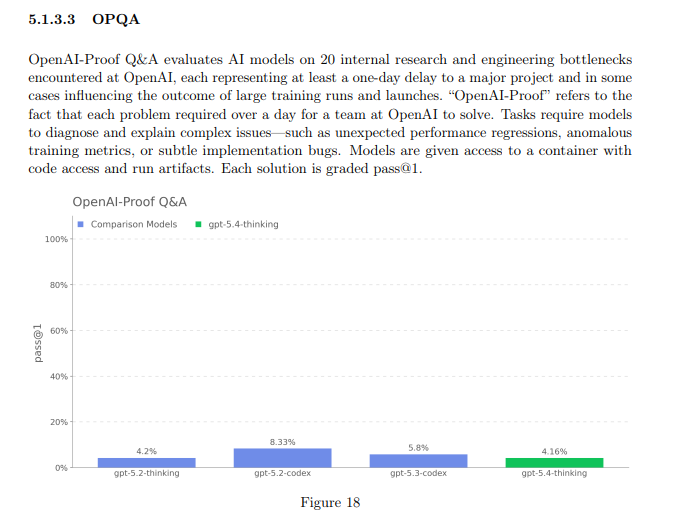

Also, come on OpenAI. If you want an automated AI researcher, this needs to start going up, not down. https://t.co/0ZQ4UhdNyu

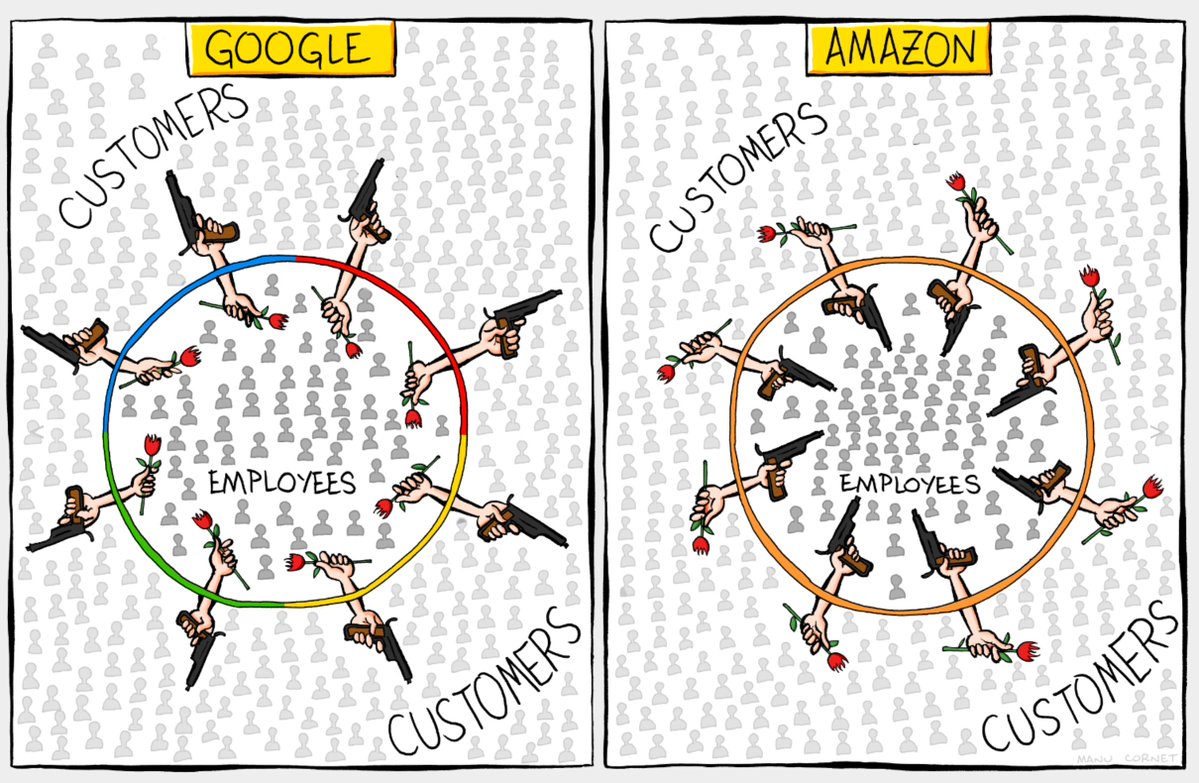

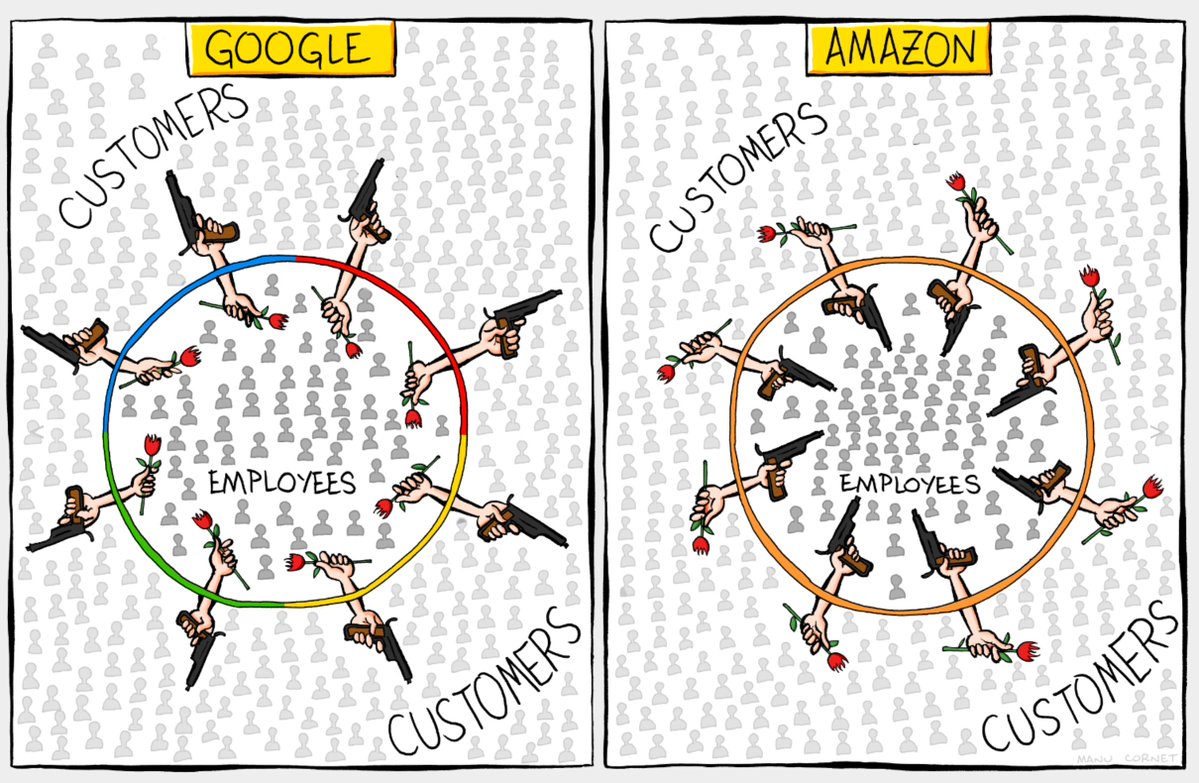

Google kicks out legit paying Antigravity customers for high usage [to solve its own capacity shortage], does not tell them, and offers no refunds or any other way to get their money back. This comic by @lmanul is so spot on with regard to Google (and Amazon!) https://t.co/x0LToYbOHX