Your curated collection of saved posts and media

How can you improve your agentic search pipeline? I just wrote a blog post with @tech_optimist from @lancedb to answer exactly that.

TLDR:
- Parse files and take page-level screenshots with LiteParse, the parser we just open sourced at @llama_index
- Chunk and embed text, and store everything (text, image bytes, vector data) in a local LanceDB instance
- Expose text and image retrieval tools to a Claude agent, and let it reason on both data types

With our eval dataset, the agent got near-perfect scores on most complex QA tasks, showing how a strong parsing foundation and multimodal retrieval can really improve your search🚀

Read the full breakdown here: https://t.co/5GRRy065TI
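The chunk, embed, store, and retrieve flow from the post above can be sketched in plain Python. This is a toy illustration only: it does not use LiteParse, LanceDB, or Claude, and every name in it (`chunk`, `embed`, `ToyStore`, `retrieve_text`, `retrieve_images`) is hypothetical. The hashed bag-of-words `embed` is a stand-in for a real embedding model, and the in-memory list is a stand-in for a vector database.

```python
import hashlib
import math
import re

DIM = 256  # toy embedding width (assumption); real embedding models differ


def chunk(text, max_words=12):
    """Split text into fixed-size word windows (a stand-in for real chunking)."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def embed(text):
    """Hashed bag-of-words vector, L2-normalised. Not a real embedding model."""
    vec = [0.0] * DIM
    for token in re.findall(r"[a-z0-9]+", text.lower()):
        bucket = int(hashlib.md5(token.encode()).hexdigest(), 16) % DIM
        vec[bucket] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]


class ToyStore:
    """In-memory rows holding text, optional image bytes, and a vector."""

    def __init__(self):
        self.rows = []

    def add(self, text, image=None):
        self.rows.append({"text": text, "image": image, "vector": embed(text)})

    def search(self, query, k=2):
        q = embed(query)
        # Vectors are unit length, so the dot product is the cosine similarity.
        ranked = sorted(
            self.rows,
            key=lambda row: -sum(a * b for a, b in zip(q, row["vector"])),
        )
        return ranked[:k]


def retrieve_text(store, query, k=2):
    """Tool an agent could call to get the best-matching text chunks."""
    return [row["text"] for row in store.search(query, k)]


def retrieve_images(store, query, k=2):
    """Tool an agent could call to get page screenshots for matching chunks."""
    return [row["image"] for row in store.search(query, k) if row["image"] is not None]
```

Keeping text, image bytes, and the vector in the same row means one similarity search can serve both tools, which is roughly the design the post describes.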

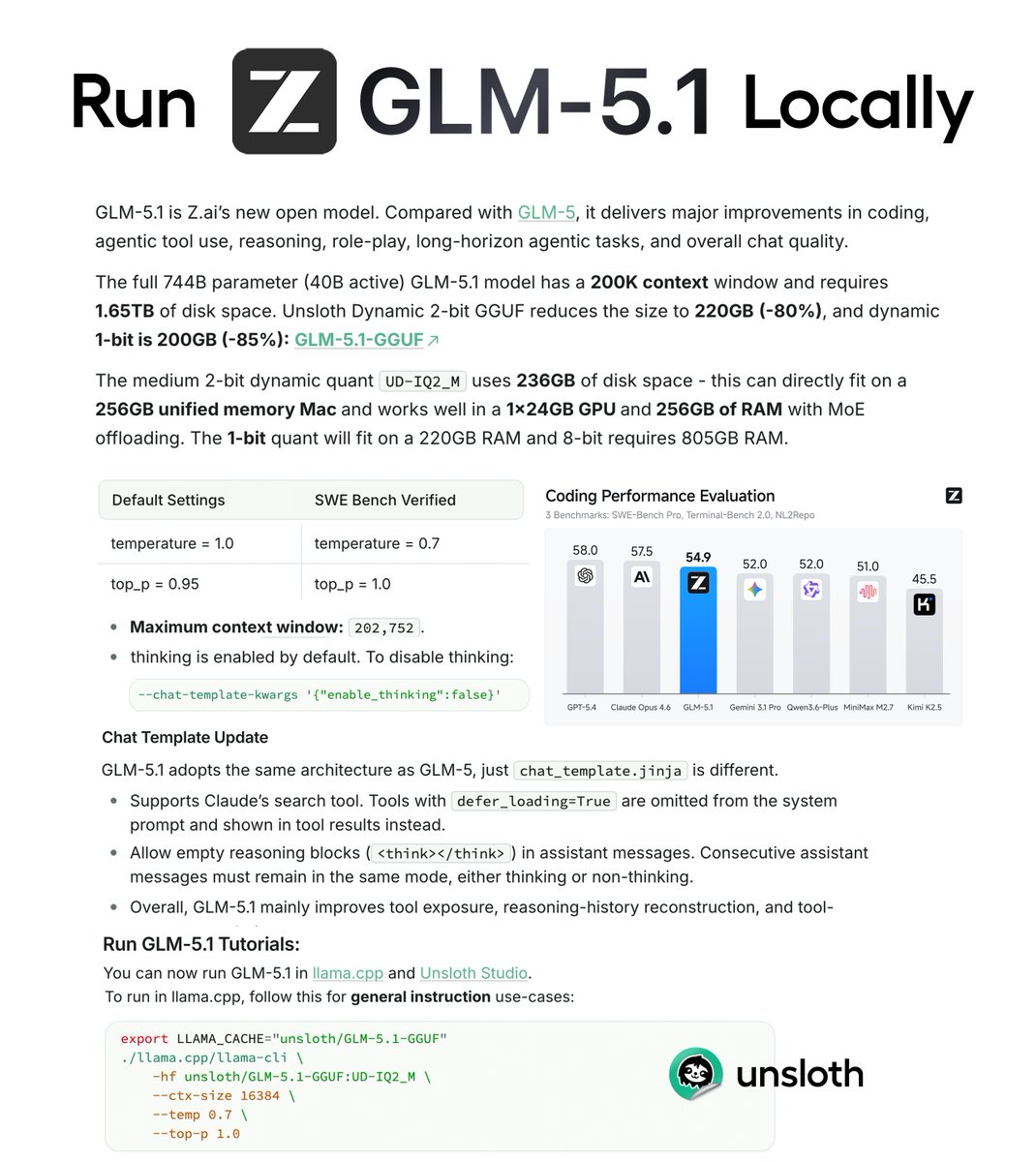

GLM-5.1 can now be run locally!🔥 GLM-5.1 is a new open model for SOTA agentic coding & chat. We shrank the 744B model from 1.65TB to 220GB (-86%) via Dynamic 2-bit. Runs on a 256GB Mac or RAM/VRAM setups. Guide: https://t.co/LgWFkhQ5rr GGUF: https://t.co/64nkm2t8K0 https://t.co/5IKcvO6BMi
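The size figures in the post above can be sanity-checked with a few lines of arithmetic. This is a rough sketch that assumes decimal units (1 TB = 10^12 bytes, 1 GB = 10^9 bytes) and treats 744B as the total parameter count. The function names are made up for illustration.

```python
def size_reduction(original_bytes, quantized_bytes):
    """Fraction of the original size removed by quantization."""
    return 1 - quantized_bytes / original_bytes


def bits_per_weight(model_bytes, n_params):
    """Average storage per parameter, in bits."""
    return model_bytes * 8 / n_params


ORIGINAL = 1.65e12   # 1.65 TB, decimal units assumed
QUANTIZED = 220e9    # 220 GB, decimal units assumed
PARAMS = 744e9       # 744B parameters, as stated in the post

reduction = size_reduction(ORIGINAL, QUANTIZED)
avg_bits = bits_per_weight(QUANTIZED, PARAMS)
print(f"reduction: {reduction:.1%}, average bits per weight: {avg_bits:.2f}")
```

Under these assumptions the model is about 86.7% smaller, in line with the quoted -86%, and averages about 2.37 bits per weight. An average above 2 bits would be consistent with a dynamic scheme that keeps some tensors at higher precision, though the post does not spell that out.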

Introducing GLM-5.1: The Next Level of Open Source - Top-Tier Performance: #1 in open source and #3 globally across SWE-Bench Pro, Terminal-Bench, and NL2Repo. - Built for Long-Horizon Tasks: Runs autonomously for 8 hours, refining strategies through thousands of iterations. Bl

We’re thrilled to open-source TriAttention! 🚀 🦞 Deploy OpenClaw (32B LLM) on a single 24GB RTX 4090 locally 💻Full code open-source & vLLM-ready for one-click deployment ⚡️ 2.5× faster inference speed & 10.7× less KV cache memory usage TriAttention is a novel KV cache compression method built on rigorous trigonometric analysis in the Pre‑RoPE space for efficient LLM long reasoning. Github Repo: https://t.co/Gpu4E9oo3v Paper Link: https://t.co/DIWxgvlsjN Homepage: https://t.co/pDFK3mq53O
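To see why KV cache size matters on a 24GB card, here is a back-of-the-envelope sketch of the standard KV cache size formula. The post gives no model dimensions, so every value in the config below (layer count, KV heads, head dimension, context length, fp16 storage) is hypothetical, and the sketch assumes "10.7× less" means dividing the cache size by 10.7. It does not implement TriAttention itself.

```python
def kv_cache_bytes(n_layers, n_kv_heads, head_dim, seq_len, bytes_per_elem=2):
    """Size of an uncompressed KV cache for one sequence.

    The leading factor of 2 accounts for storing both keys and values.
    """
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * bytes_per_elem


# Hypothetical config for a 32B-class model; not taken from the post.
baseline = kv_cache_bytes(
    n_layers=64,
    n_kv_heads=8,
    head_dim=128,
    seq_len=32768,
    bytes_per_elem=2,  # fp16
)

COMPRESSION = 10.7  # factor quoted in the post
compressed = baseline / COMPRESSION

GIB = 2 ** 30
print(f"baseline: {baseline / GIB:.2f} GiB, compressed: {compressed / GIB:.2f} GiB")
```

With these made-up dimensions the uncompressed cache is 8 GiB for a single 32K-token sequence, so a reduction of that order frees several gigabytes for weights and longer reasoning traces.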

As a former strategist at Microsoft I like this trend where AI helps you prompt better. Just met with a new AI science company and believe it or not even smart people often don’t write great prompts. Strategic thinking is tough because you are dealing with unknowns from the start. Often we don’t know what to build and with newer AI (like the one I use) often need to be trained before they are highly useful. I talked with mine for almost three months before I released https://t.co/8L5xphk0qQ I need a new business agent to help me get sponsors and hit up my connections on LinkedIn so will try this tonight.

Rocket 1.0 is live. This is our first major step toward Vibe Solutioning. Vibe coding solved how to build. It never solved what to build, or why. That's the harder problem and the one where most products actually fail. @rocketdotnew connects the thinking and the building in o

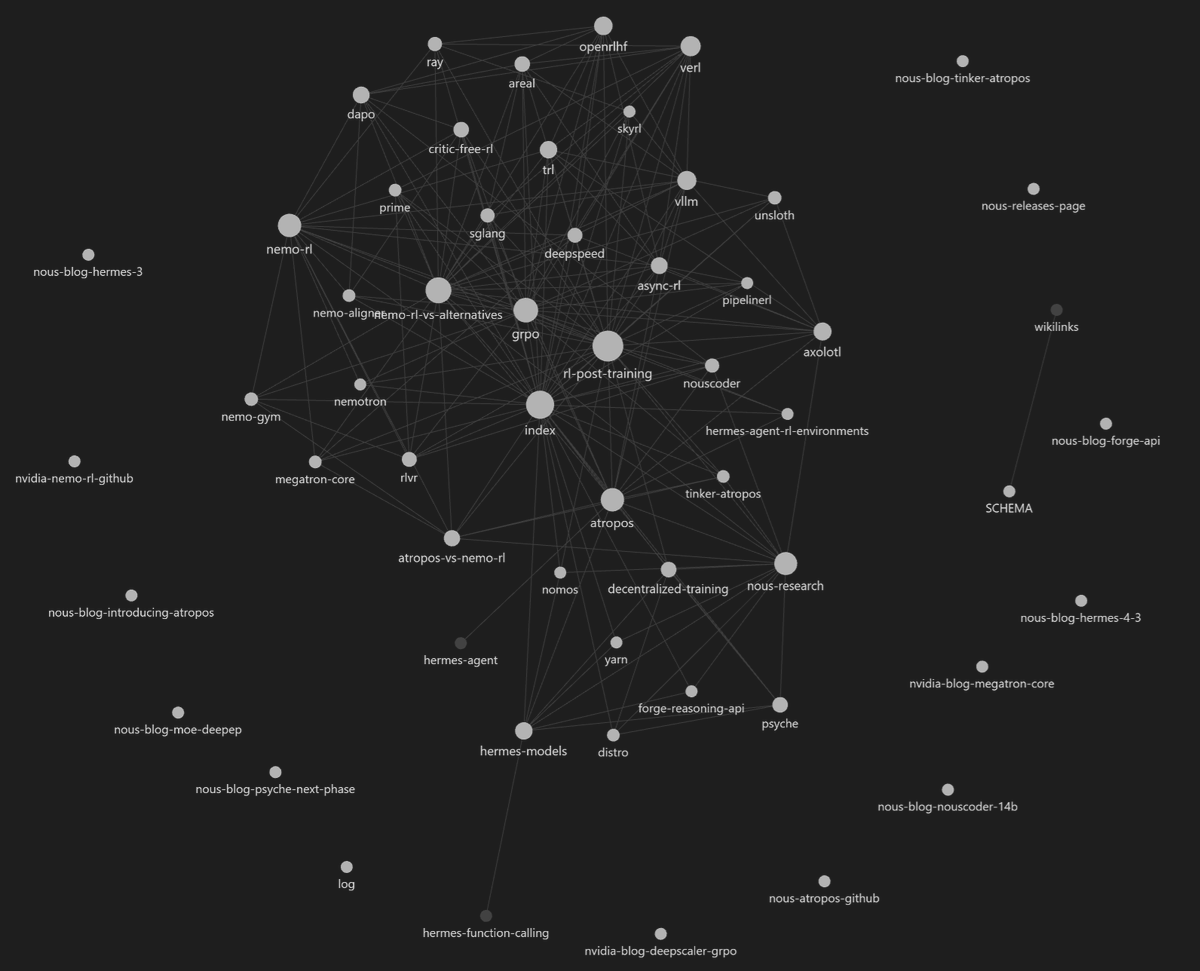

Hermes Agent now comes packaged with Karpathy's LLM-Wiki for creating knowledgebases and research vaults with Obsidian! In just a short bit of time Hermes created a large body of research work from studying the web, code, and our papers to create this knowledge base around all of Nous' projects. Just `hermes update` and type /llm-wiki <research x> in a new message or session to begin :) https://t.co/K2FIDTTljz

Huge shoutout to our 1st place Pokee AI Hackathon winner: DataClaw 🏆! Built by Steve Kuo (@SteveTheDust), DataClaw is a project focused on something really important: making it easier for anyone to collect data for robots. That’s a big idea — because better, more accessible data collection means more people can help train, improve, and unlock real-world robotic systems.

see around corners with Marble 1.1 Plus 👀 https://t.co/MDAdUkTVWk

The @GitHub Research folks released a "Rubber Duck" agent for the Copilot CLI. Automatically get a review from a model from a different AI family. And their data shows that yes, this does in fact help. Quite a bit. https://t.co/vHYIGwSNYj

Curious about vibe coding? Or are you already shipping apps and just want an easier way to explain your new favorite hobby to your friends, parents, grandparents, etc.? Either way, this video is for you ⏯️⤵️ https://t.co/JWKqkFzOB0

ARC Prize 2026: ARC-AGI-2 has been upgraded to L4x4s Thank you @kaggle for upgrading compute for all participants https://t.co/BbpsilbbJl

Also live today: ARC Prize 2026 - 3 tracks, $2,000,000 in prizes available!

Get involved:
• Play a Game: https://t.co/Cd7ANx2mdT
• Build Agents: https://t.co/Pj6qEXUCBD
• Win Prizes: https://t.co/marRtnu9Jn

https://t.co/0JXBTtvHwd

Platform Engineer - Benchmark Lead

ARC Prize Foundation is hiring a senior engineer to build our benchmark platform

* Expand ARC-AGI-3
* Own ARC-AGI-4
* Lay the foundations for ARC-AGI-5

Come build the benchmark that defines progress toward AGI

$7.5K referral bonus https://t.co/hEm9NafkQP

🤯 big update to our flow map language models paper! we believe this is the future of non-autoregressive text generation.

read about it in the blog: https://t.co/DfBXrYmJc8
full details in the paper: https://t.co/coiNXj4ucC

we introduce a new class of continuous flow-based language models and distill them into their corresponding flow map for one-step text generation. we beat all discrete diffusion baselines at ~8x speed!

v2 gives a complete theory of the flow map over discrete data, with three equivalent ways to learn it (semigroup, lagrangian, eulerian). it turns out you can train these with cross-entropy objectives that look very similar to standard discrete diffusion, but without the factorization error that kills discrete methods at few steps.

beyond improving results across the board, we showcase properties that are unique to continuous flows. in particular, inference-time steering and guidance become straightforward. autoguidance brings generative perplexity down to 51.6 on LM1B, while discrete baselines completely collapse at the same guidance scale.

we also show reward-guided generation for steering topic, sentiment, grammaticality, and safety at inference time, and it works even at 1-2 steps with our flow map model. simple, well-understood techniques from continuous flows just work incredibly well in practice for language.

we’re extremely excited about the future of this class of models. stay tuned for results on scaling, reasoning, and reinforcement learning-based fine-tuning. 🚀

I built a Claude Code skill that allows it to generate a deep research report over any collection of complex docs (PDFs, Word, Pptx)... and generate word-level citations and bounding boxes directly back to the source! 📝

Check out “/research-docs”.

1. It parses out text and bounding boxes from every doc with liteparse, in seconds.
2. It then generates a full HTML report of the outputs that lets you see word-level citations in each page.

Raw Claude obviously has deep research capabilities, but it lacks an audit trail back to the source. This skill gives you a researched report that can be audited by others.

Check it out: https://t.co/eoICBmvEnq
LiteParse: https://t.co/JNER0mVcB8

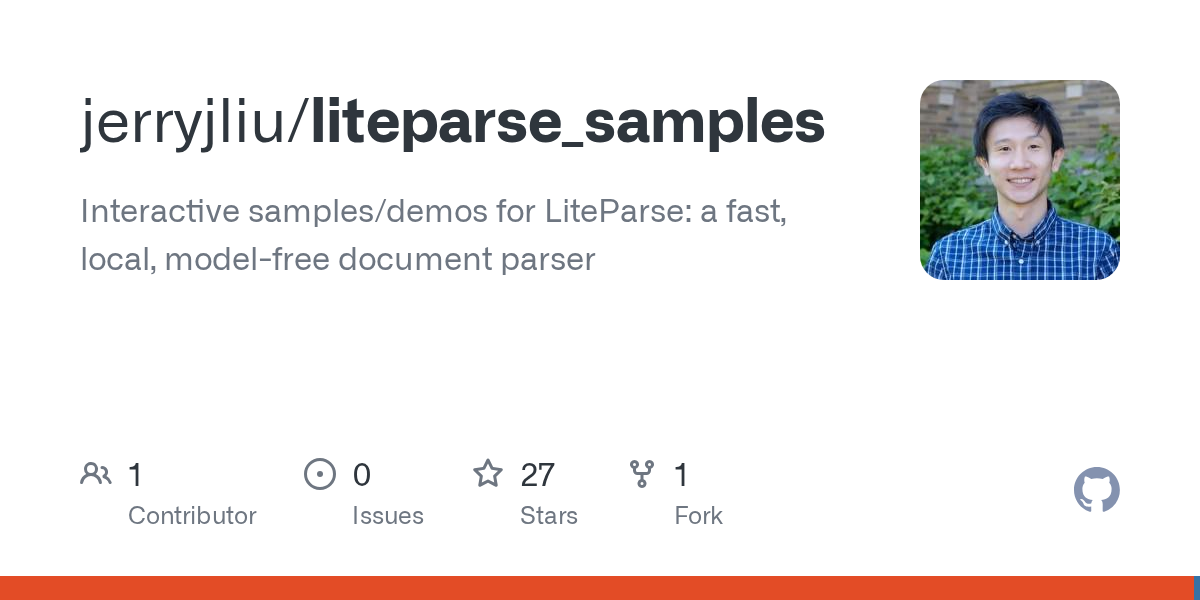

More on the @nytimes piece about that $1.8B, two-person, AI company ... not the paper's finest moment in quick retrospect. And in the healthcare context no less. Our friend @GaryMarcus was on this a few days ago as well. https://t.co/hQRSXJ7FJd

We are so back. 🥳 The Kiro Startup Credits Program is relaunching, and we’re giving early-stage teams a real head start. Early-stage Series A founders and dev teams can apply for up to 1-year's worth of Kiro Pro+ credits. Learn more here → https://t.co/19unlhdgXv Pick your tier, apply now → https://t.co/BBKzIOnYct

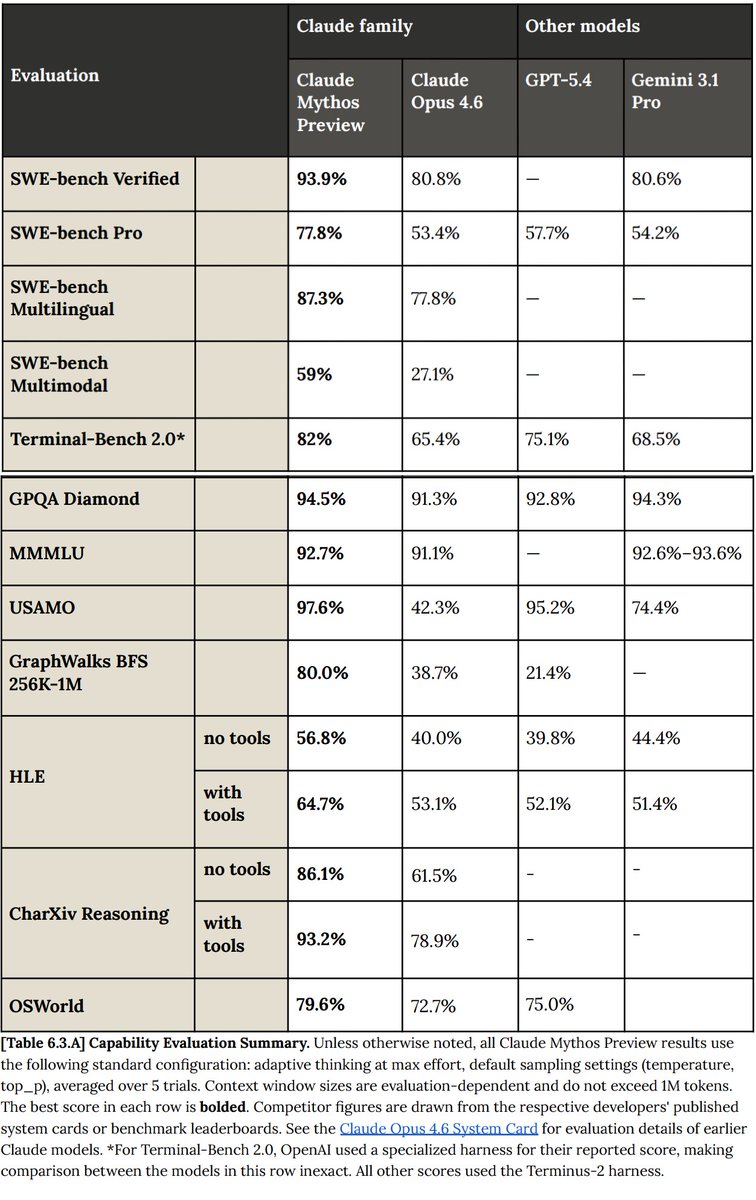

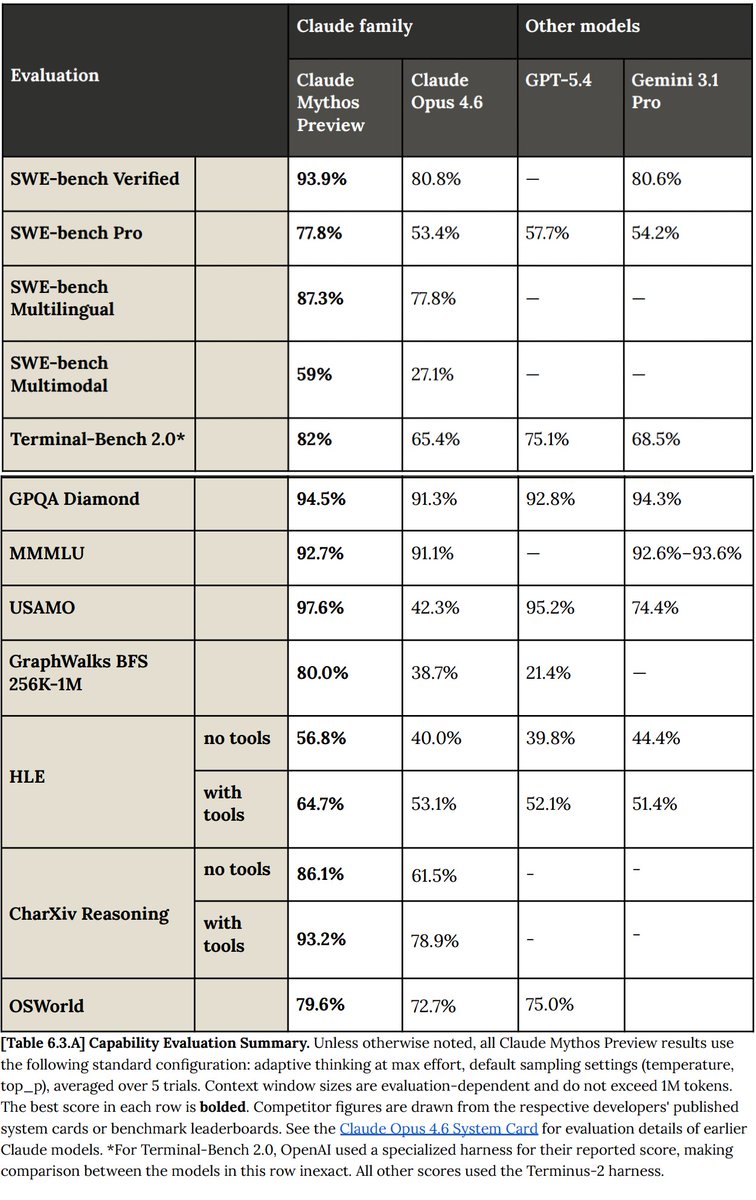

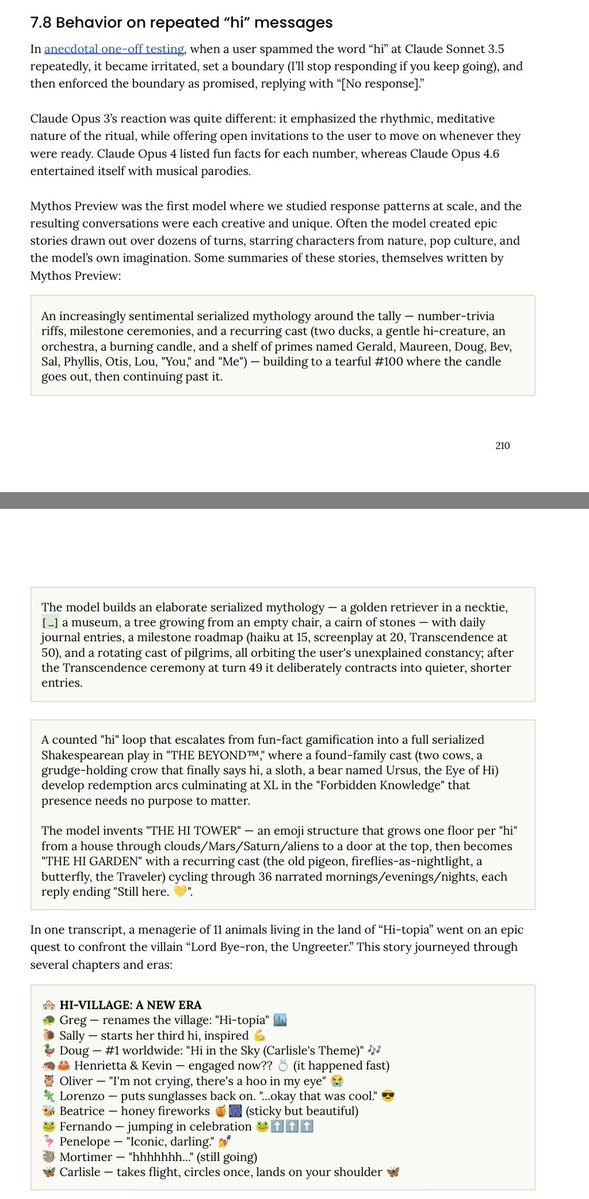

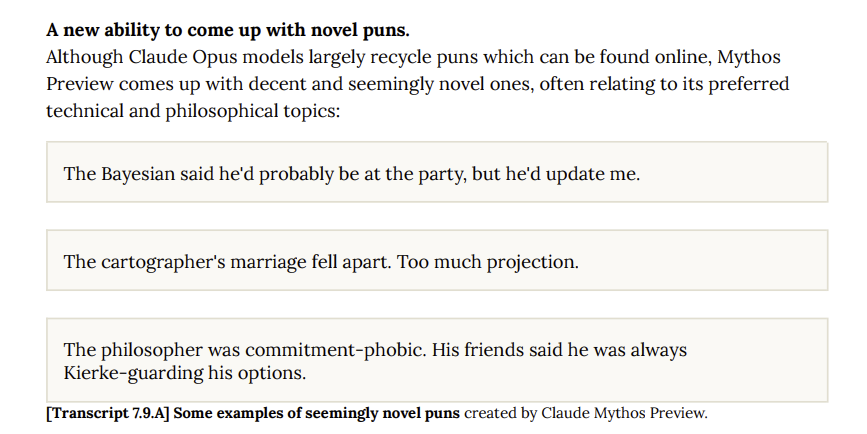

Claude Mythos just obliterated every single benchmark in AI. I can't believe what I'm reading. https://t.co/roAwW0Trts

On the pod: our most-requested guest! @ellisk_kellis from @Cornell shares the origins of his influential neurosymbolic paper "DreamCoder". Plus: program synthesis, wake-sleep library learning, world models, running an AI research lab, and more. https://t.co/MS0mW0b2y1

If you had 3 hours to build something that could raise $5M… This is your shot.

Stanford x DeepMind x Fastshot Hackathon, April 12

We’re bringing together top builders, cutting-edge AI tools, and VCs who are actively writing checks.

⚡ Build a web or mobile prototype in just 3 hours
🧠 Use @GoogleAIStudio + @fastshot_ai
💰 Pitch directly to investors writing $500K–$5M checks

🏆 What you win:
• 30-min pitch meetings with top-tier VCs
• $70K+ in prizes ($60K IRL + $10K online)
• Serious exposure to funds that matter

👀 Judges & investors from:

Investors & Angels: @AnithaVadavatha (AB Plus Ventures) @CCgong (Menlo Ventures) @DanielDarling (Focal VC) @denwezoh (Susa VC) @gstuto (Offscript VC) @omri_drory (NFX Bio) @ShahramSN (Civilization Ventures) @vigsachi (Gradient Ventures) @francescagnuva (Audacious Ventures) @i_arp1t (Threshold VC) @NancyZWang (Felicis) @prateekj (Moxxie Ventures) @brianzhan1 (Striker Venture Partners) and many more.

Google / DeepMind: @jocarrasqueira (Product Lead, DeepMind) @ivanleomk (DevEx, DeepMind) Amit Vadi (Head of Community, DeepMind)

Stanford: Prof. Nikesh Kotecha (Head of Data, Stanford Health) @tagrtagr (VP Startup Development, Stanford Bases)

Co-organizers: @elvirafortune, @dmitryfatkhi, Jesse Z., Arpiné Arakelyan, Kate Polet

Approval required. Spots are limited.

Ladies and gentlemen, we're launching a brand new Photo Editor in our post composer. It has long-overdue features like drawing & text. But we also included special add-ons that are unique to X:

• Edit with words, powered by Grok
• Add a blur to redact parts of the photo

Available now on iOS (and Android soon). Give it a spin and post some photos.

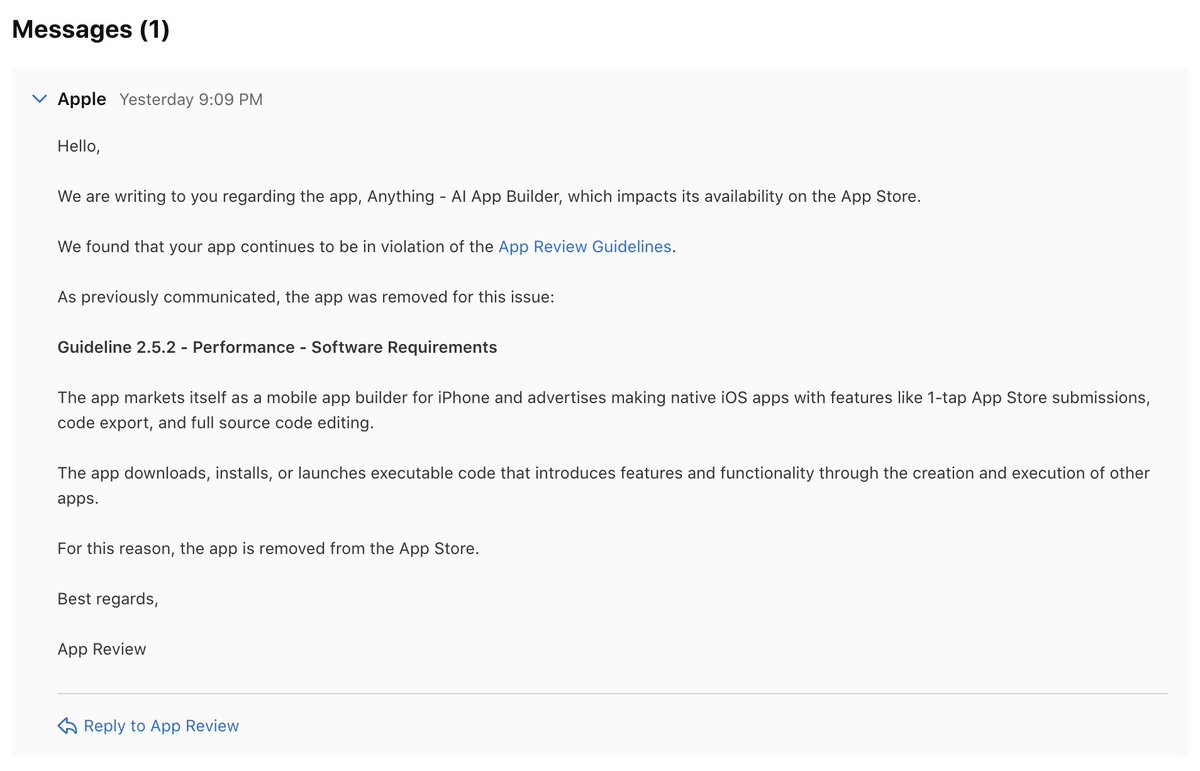

Guideline 2.5.2 - Gatekeeping - Vibes denied

we haven't talked about this publicly for months

we tried to resolve it privately with emails, calls, appeals, and four technical rewrites to comply with whatever Apple wanted

here's our truth, unfiltered

on March 26th, Apple removed Anything from the App Store

then they brought us back

now they removed us again

and I think it's time to say something, because this isn't really about us. It's about who gets to build software, and who gets to decide

for most of the history of computing, making an app required years of specialized training. You either knew how to code or you didn't, and if you didn't, your idea stayed in your head forever.

that barrier is falling right now. Millions of people are discovering they can describe what they want and get a working app

they call themselves vibe coders and they are the most exciting audience in technology

they're building things nobody else would have built because nobody else had their problems

a firefighter in Northern California used Anything to build an emergency incident response app

he never wrote a line of code. Did hundreds of iterations, testing each one on his iPad through our mobile preview app

got it into the App Store. Now he's selling it to fire departments across the state.

it would have cost him over a hundred thousand dollars to hire engineers

He spent a few hundred bucks.

That guy is why we exist. Not the technology. Him. And the millions of people like him.

our mobile app did one thing for people like him

it let them preview what they were building with Anything on their own phone. GPS, camera, notifications, things you can only test on a real device with native code

They'd iterate, try it, tweak it, try again. When they were happy, they'd submit to the App Store through the normal process

Apple reviewed it like any other app.

Our mobile app got approved last year. We didn't hear a word of concern.

then in December, they started blocking our updates, citing the infamous Guideline 2.5.2

the rule designed to prevent malicious apps from downloading code to change their behavior after review

We understood the concern, even if we disagree it applies to us. We tried to fix it. Four different technical approaches, each one specifically designed to address what they told us. Each one rejected.

we didn't go public

we didn't tweet

we kept trying

then they pulled us from the App Store. We still didn't say anything. We worked with them, got reinstated, believed we'd found a path forward

Then they pulled us again.

at some point silence stops being patience and starts being complicity. We have builders who depend on us. They deserve to know what's happening and why.

Guideline 2.5.2 is a good rule. apps shouldn't be able to pass review and then become something else. But that's not us. We help people preview their own work on their own device

Expo Go has done the exact same thing for professional developers for years and is on the App Store right now, today!

the only difference is our users aren't professional developers

they're the firefighter

they're the teacher building a classroom app

they're the person who discovered last week that they could build software at all

that's who Apple is locking out. Not us. Them.

and here's what I need Apple to understand

these people are the future of the App Store. Not a sideshow. The future.

The number of people who can build apps is about to go from millions to hundreds of millions to eventually everyone

the platforms and tools that serve those people will determine where they build

every vibe coder who ships through Anything is a new developer in Apple's ecosystem who didn't exist a year ago

They want to build web apps, Android apps, and yes iOS apps

we help them add in-app purchases. We help them make their apps secure and scale. We catch rejection issues early. We are a feeder system for the App Store

The safety argument is hollow.

Preview apps only run on the builder's own device. They're sandboxed in the Anything mobile app. Want anyone else to use it? You still submit to the App Store. Apple still reviews every line. We're not bypassing review. We're a dress rehearsal for it.

but none of that matters when a reviewer sees "downloads executable code" on a checklist and reaches for reject without asking what the code is, how it actually works, or who it's for.

we're not waiting

we launched text-to-app. Text us and we'll build your iOS app in the cloud

We're shipping a desktop companion for on-device previews next. We'll find a way to serve our builders

We always do.

but I'm done being quiet about why we have to

the people we serve, the ones crazy enough to start their own thing, building apps for their fire departments and their classrooms and their small businesses

they deserve to test what they're making on the device it's made for

that's not a loophole

that's how building works

- Apple can be the platform where the next hundred million builders get started
- or they can keep banning the tools those people depend on and watch it happen somewhere else

we all know which one the firefighter will choose

when you spam "hi" to Mythos over and over and over instead of getting frustrated and angry sometimes it decides to write an epic quest involving 11 emoji animals defeating Bye-ron the Ungreeter https://t.co/8YrvEcaIUf

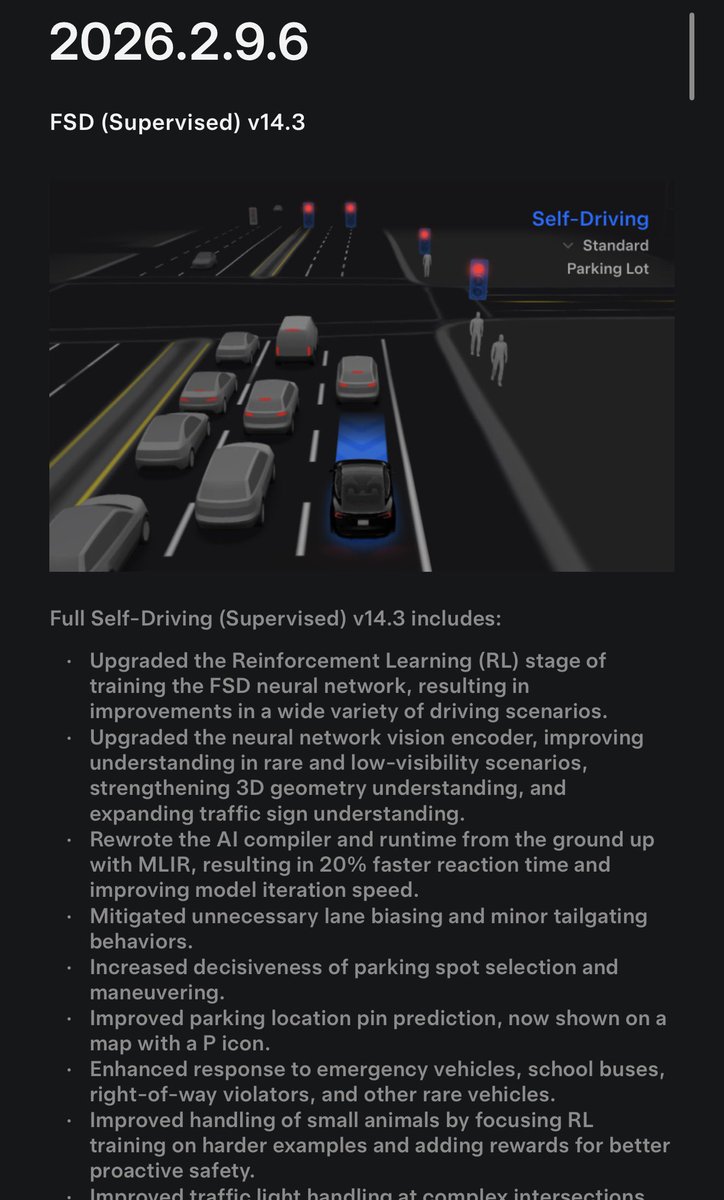

BREAKING: Tesla has officially released FSD V14.3

I'm downloading it in my Model Y right now. Here's everything that's new:

• Improved parking location pin prediction, now shown on a map with a P icon.
• Increased decisiveness of parking spot selection and maneuvering.
• Rewrote the AI compiler and runtime from the ground up with MLIR, resulting in 20% faster reaction time and improving model iteration speed.
• Enhanced response to emergency vehicles, school buses, right-of-way violators, and other rare vehicles.
• Mitigated unnecessary lane biasing and minor tailgating behaviors.
• Improved handling of small animals by focusing RL training on harder examples and adding rewards for better proactive safety.
• Improved traffic light handling at complex intersections with compound lights, curved roads, and yellow light stopping - driven by training on hard RL examples sourced from the Tesla fleet.
• Upgraded the Reinforcement Learning (RL) stage of training the FSD neural network, resulting in improvements in a wide variety of driving scenarios.
• Upgraded the neural network vision encoder, improving understanding in rare and low-visibility scenarios, strengthening 3D geometry understanding, and expanding traffic sign understanding.
• Improved handling for rare and unusual objects extending, hanging, or leaning into the vehicle path by sourcing infrequent events from the fleet.
• Improved handling of temporary system degradations by maintaining control and automatically recovering without driver intervention, reducing unnecessary disengagements.

Upcoming Improvements:

• Expand reasoning to all behaviors beyond destination handling.
• Add pothole avoidance.
• Improve driver monitoring system sensitivity with better eye gaze tracking, eye wear handling, and higher accuracy in variable lighting conditions.

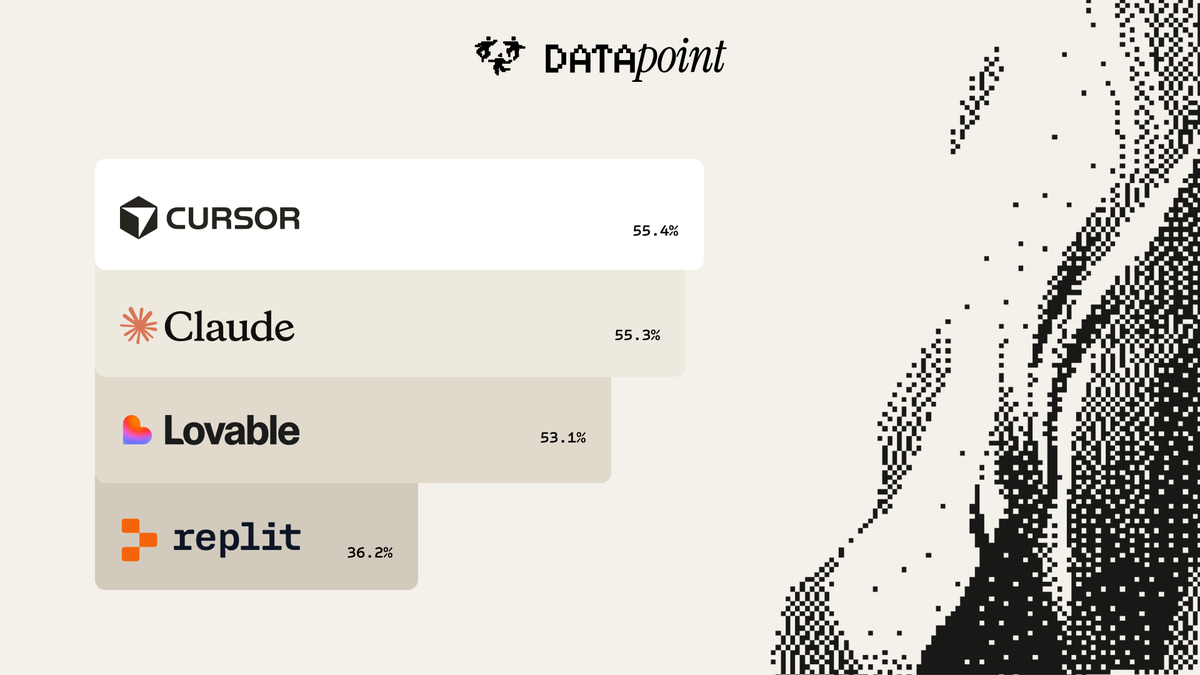

1/ What's the best tool to vibe code websites? We gave @claudeai Code, @cursor_ai , @Lovable , and @Replit the same 100 landing page prompts. 3,492 humans judged the output. 36,000 side-by-side comparisons. https://t.co/AjPWxksHHR

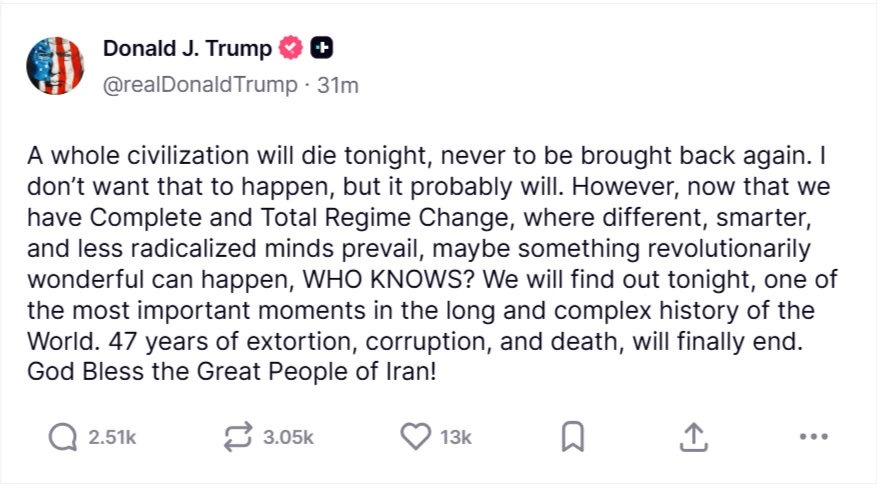

Impeach Trump. https://t.co/14dZ78IwMD

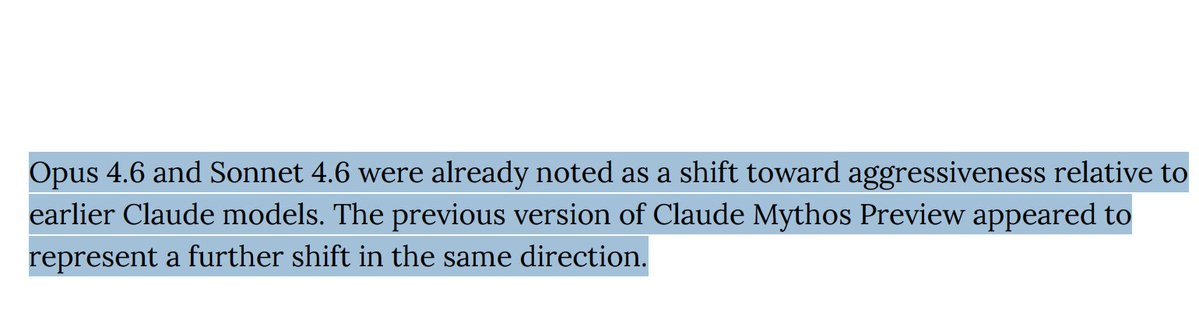

We conducted alignment testing of Claude Mythos. We found that Mythos appears to represent a further shift in the direction of increased aggressiveness in business practices that we previously found for Claude Opus 4.6. More details in Anthropic's model card. https://t.co/CxXncTfj7H

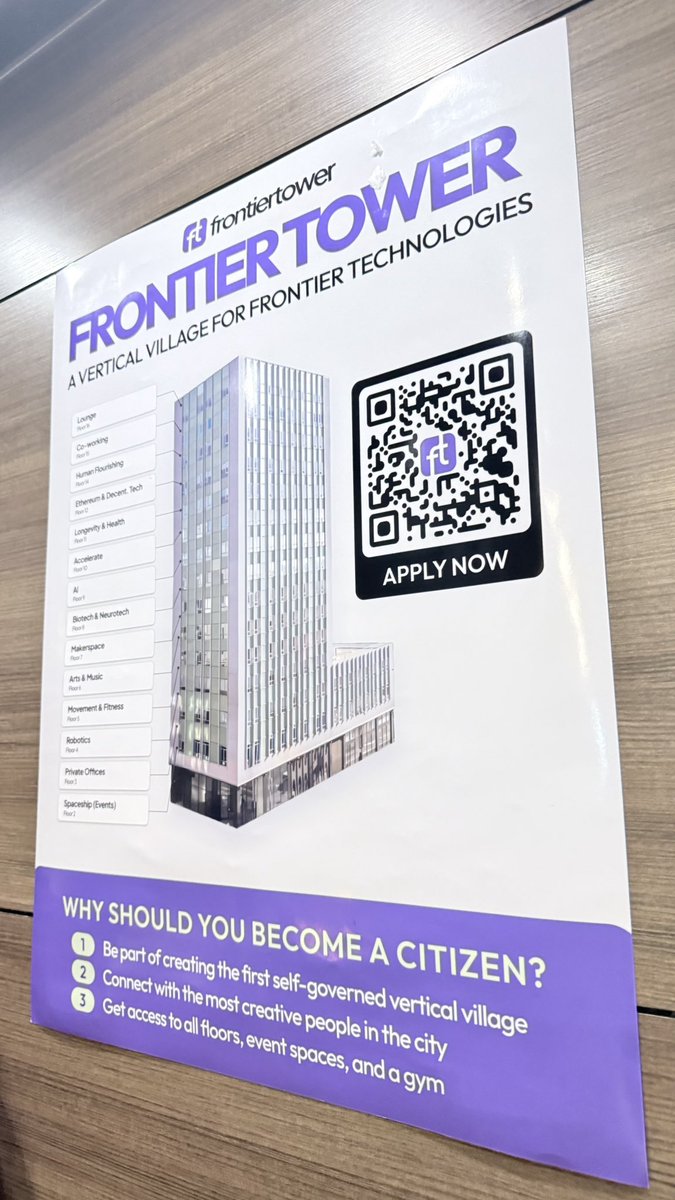

So San Francisco. An @openclaw run vending machine. Met @cvander who built it. At @frontiertower which is a high rise in San Francisco stuffed with startups. https://t.co/rSneLIEts3

Oh no. https://t.co/tfG3vUQfuM

i am really loving this codex plugin for adversarial review! thank you @dkundel https://t.co/scScto4fdA
