@yukangchen_

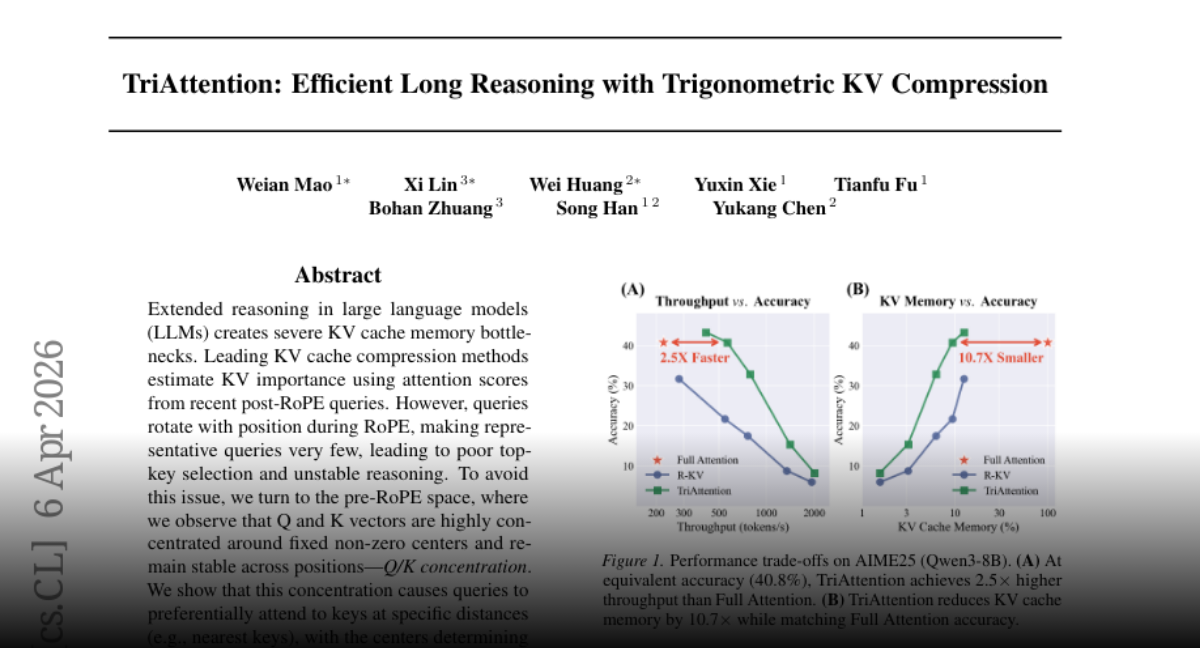

We’re thrilled to open-source TriAttention! 🚀

🦞 Deploy OpenClaw (32B LLM) locally on a single 24GB RTX 4090
💻 Fully open-source code, vLLM-ready for one-click deployment
⚡️ 2.5× faster inference and 10.7× lower KV cache memory usage

TriAttention is a novel KV cache compression method for efficient long reasoning with LLMs, built on rigorous trigonometric analysis in the Pre-RoPE space.

GitHub Repo: https://t.co/Gpu4E9oo3v
Paper Link: https://t.co/DIWxgvlsjN
Homepage: https://t.co/pDFK3mq53O
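
For a rough sense of what a 10.7× KV cache reduction means on a 24GB card, here is a back-of-the-envelope sketch using the standard KV cache size formula. The layer count, KV head count, head dimension, and context length below are hypothetical placeholders, not OpenClaw's actual configuration, and the function name is ours rather than anything from the TriAttention repo.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len, bytes_per_elem=2):
    """Bytes needed to cache keys and values for one sequence."""
    # Factor of 2: each layer stores one key tensor and one value tensor.
    return 2 * num_layers * num_kv_heads * head_dim * seq_len * bytes_per_elem


# Hypothetical model shape (assumed for illustration only), fp16 cache.
full = kv_cache_bytes(num_layers=64, num_kv_heads=8, head_dim=128, seq_len=32_768)

# Apply the 10.7x reduction quoted in the post.
compressed = full / 10.7

print(f"uncompressed KV cache: {full / 2**30:.2f} GiB")        # 8.00 GiB
print(f"after 10.7x reduction: {compressed / 2**30:.2f} GiB")  # 0.75 GiB
```

Under these assumed numbers, the cache for a single 32K-token sequence drops from 8 GiB to well under 1 GiB, which is the kind of headroom that matters once the model weights already occupy most of the GPU.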