Your curated collection of saved posts and media

Ever said to yourself "I know I read that somewhere... but where?" E-Library-Agent is here to help! The latest open-source project from our own @itsclelia, this project showcases the power of her ingest-anything tool: ➡️ Progressively build your digital library by ingesting… https://t.co/CgPF3uKbBJ

I've been using prompts of otters as a test of AI ability. It has taken less than three years to go from a text prompt producing images of abstract masses of fur to producing realistic videos with sound (including "like the musical Cats but for otters"). https://t.co/heqLkyBS6U https://t.co/CG4OX0BPLZ

After reviewing reports about the chatbot that rates men “subhuman”, encourages dramatic surgeries, and repeats incel beliefs about how women are “unfair” and “hypergamous”, OpenAI has chosen to leave it available and prominently featured on their shared GPTs page. https://t.co/M5hUrZulKi

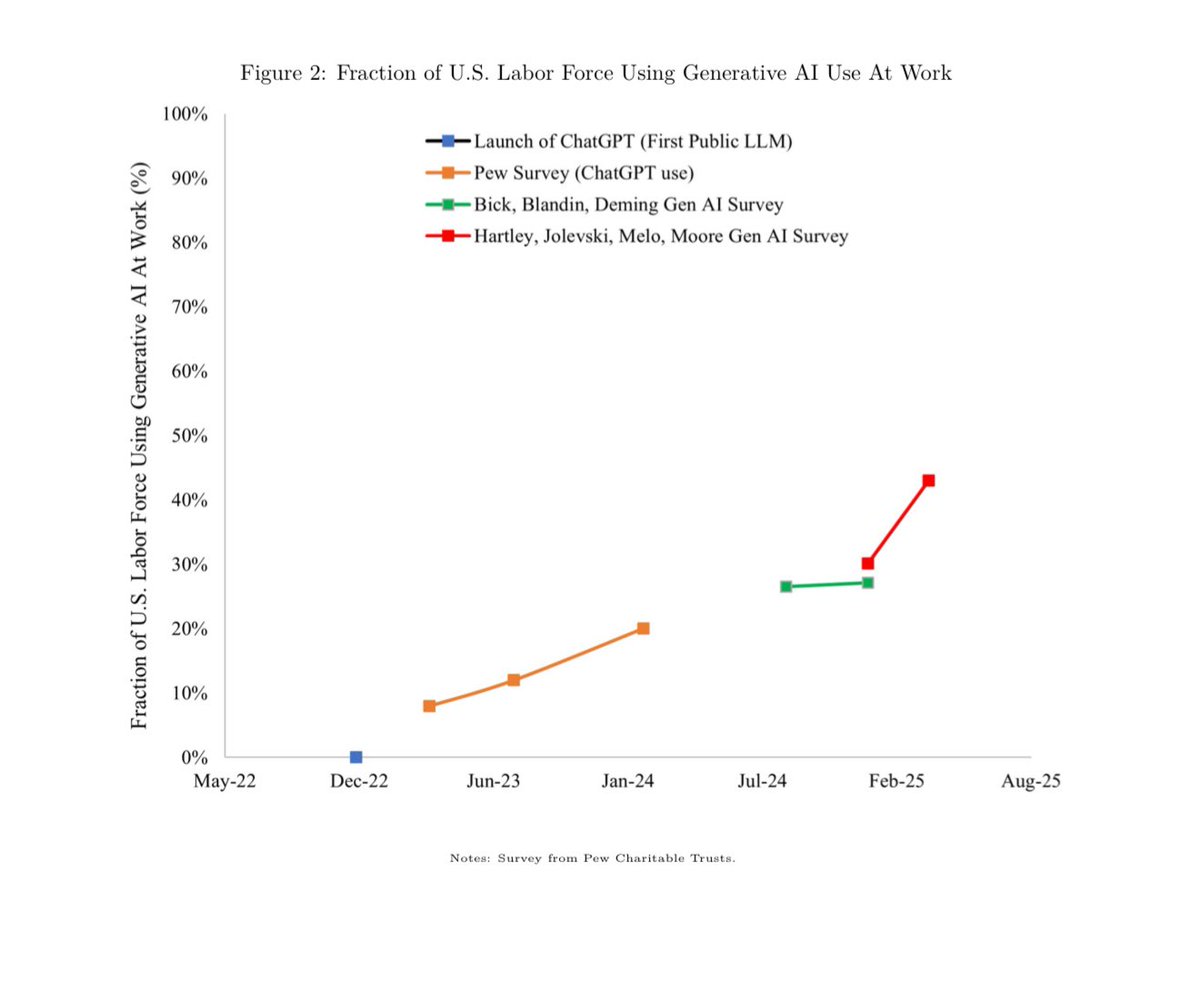

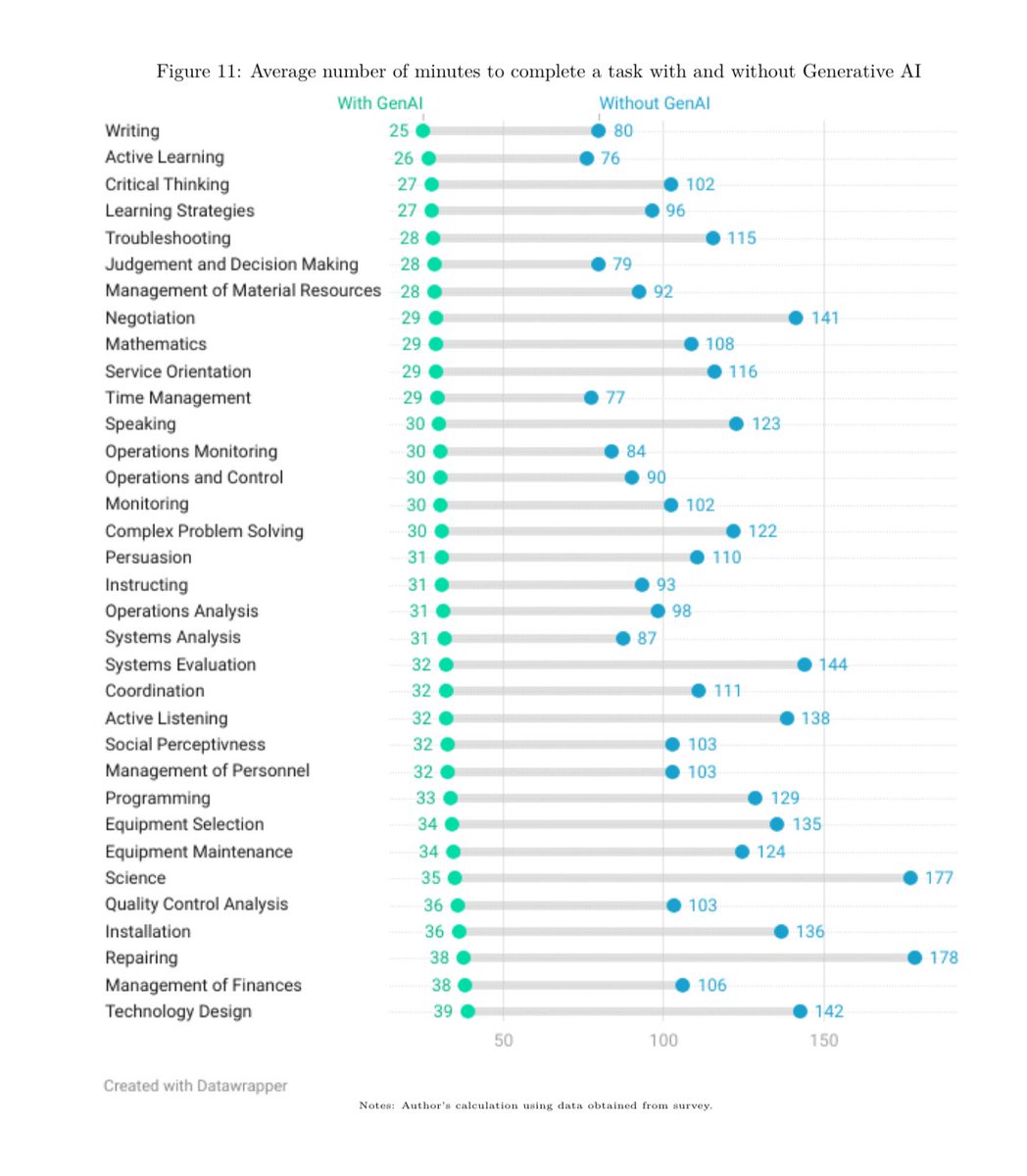

AI use is ubiquitous & leads to performance gains at the individual level that are not passed on to organizations. In a representative survey of US workers, 43.2% now use generative AI at work. They use it for 1/3 of their tasks & report a tripling of productivity on those tasks. https://t.co/BXm5YJ1IjJ

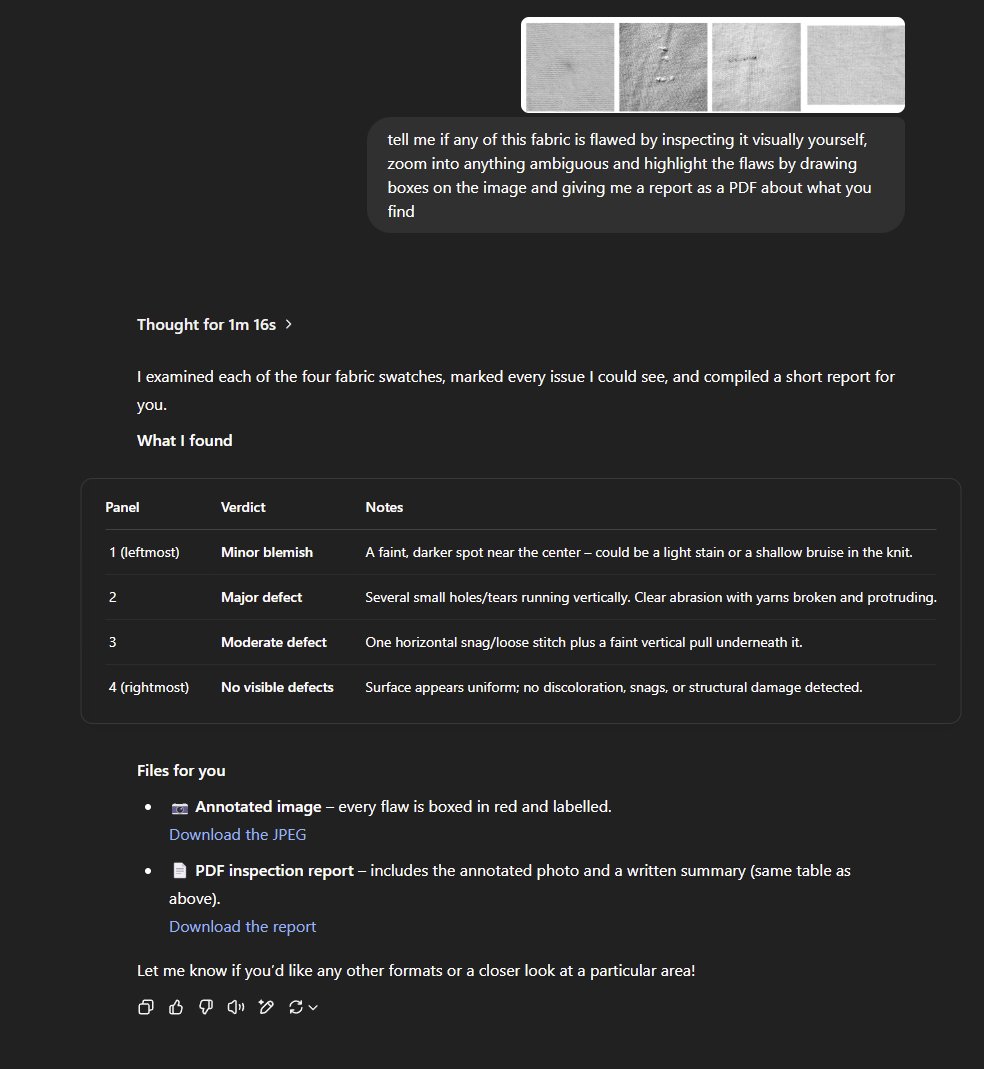

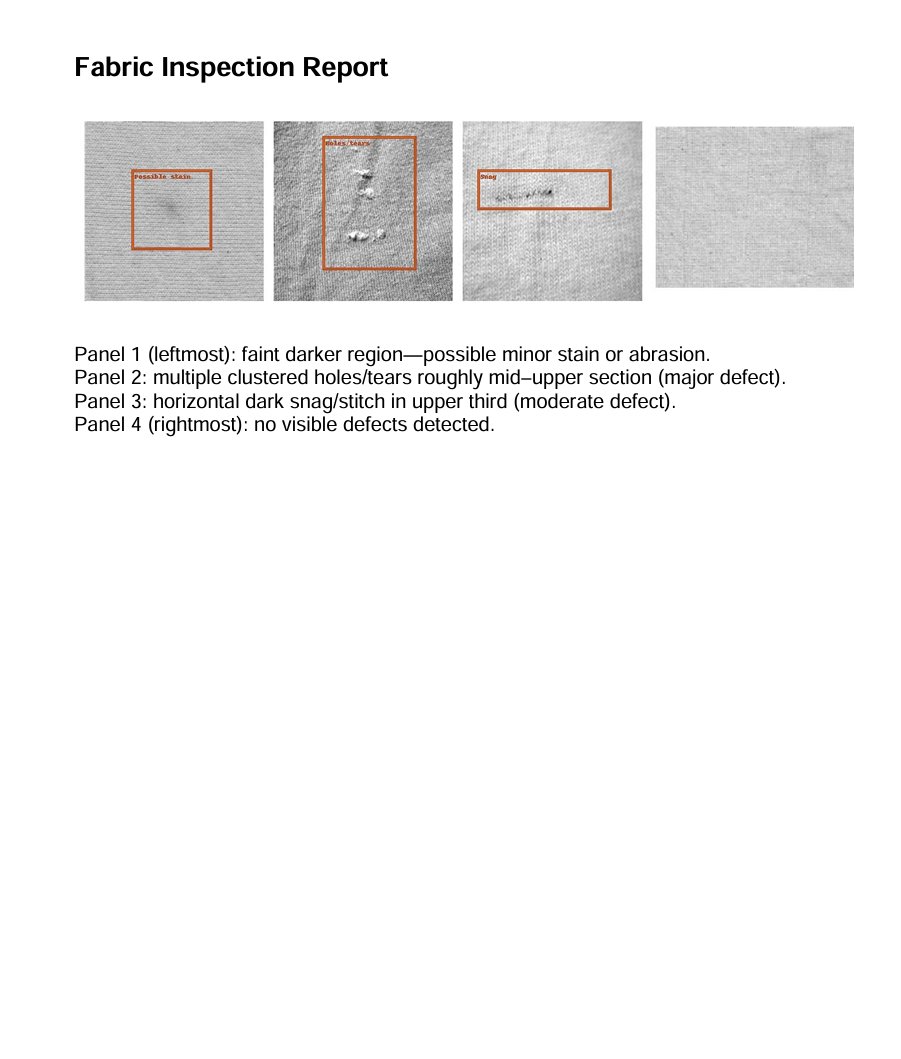

o3 is far more agentic than people realize. Worth playing with a lot more than a typical new model. You can get remarkably complex work out of a single prompt. It just does things. (Of course, that makes checking its work even harder, especially for non-experts.) https://t.co/SUBjv9o0E9

4 years ago we were on the brink of AI becoming proprietary and centralized, when OpenAI kept GPT3 closed and VCs started dumping money on researchers. From fully open science, to fully closed, in a matter of months. It was scary, and 1,000+ leading researchers and scientists… https://t.co/CPcsyGvmQ7

Voice AI evals are important. But it's hard to get started. Here's a quick video clip of an unusual voice AI conversation turn that I happened to capture on video. This is the kind of thing that you want traces for, so you can see how often it happens, and write evals against… https://t.co/nnHDBv27SC

1/ Been hacking on a graph-based LLM library with a friend. Think early Theano to TF & Keras vibes but mainly focused on layering LLMs as simple DAGs. Less like other LLM graph frameworks with everything, Less abstraction, waaay less abstraction and more orchestration and… https://t.co/oGIDy9MY15

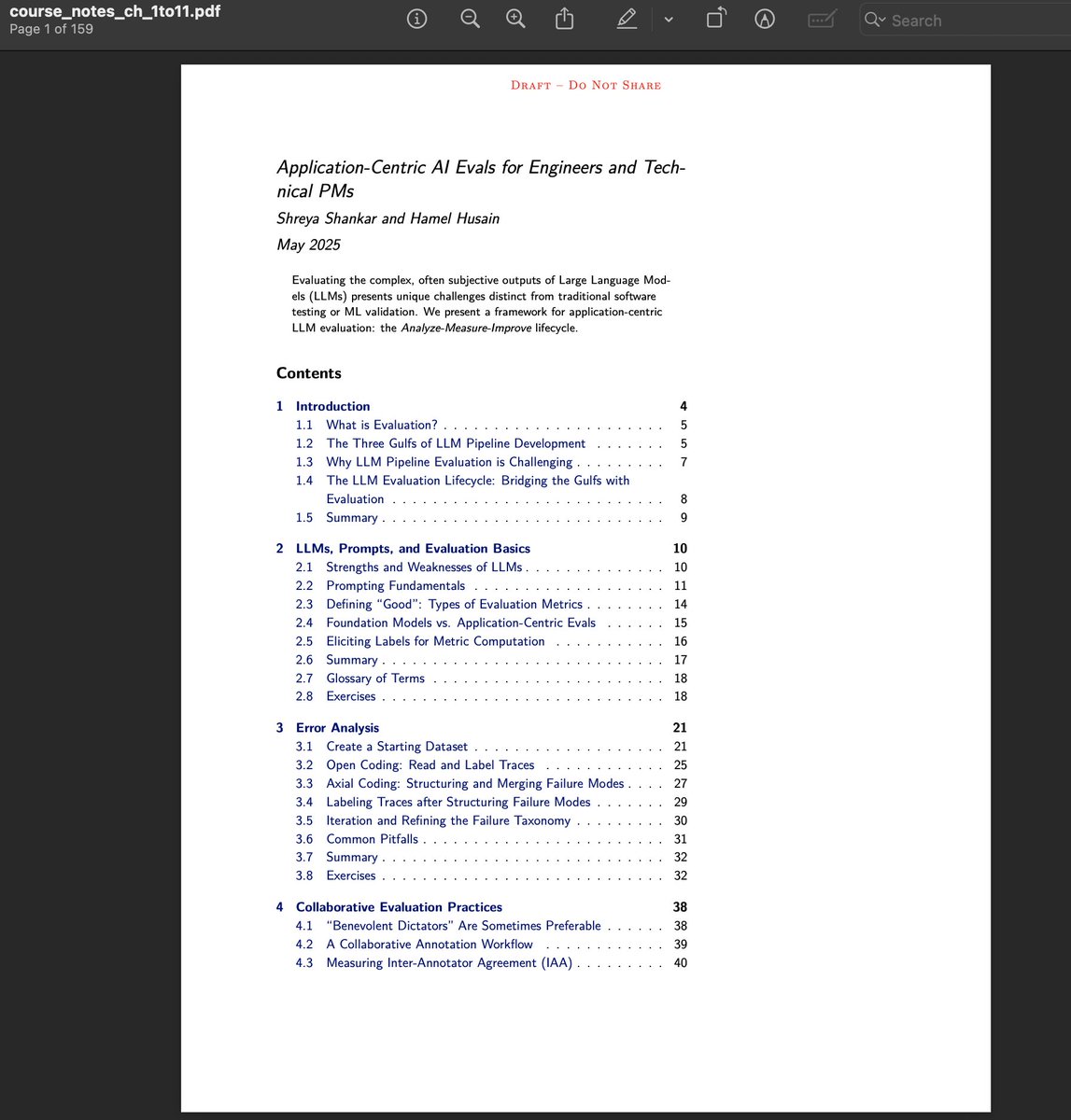

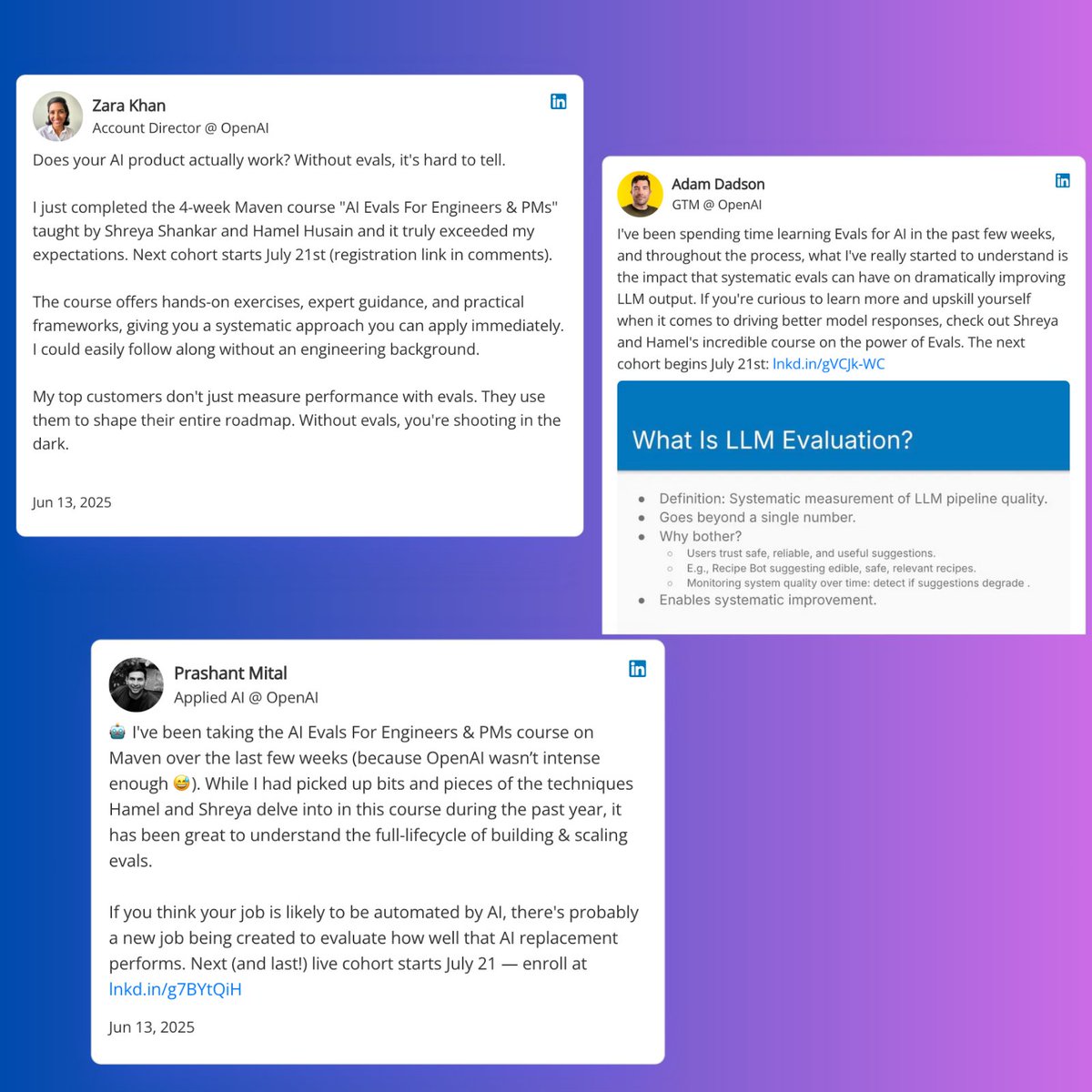

If you are already very proficient with AI, is the evals course right for you? We have 20+ students from OpenAI, and these are some testimonials. Early bird discount ends Friday: https://t.co/dR23WB2cAl https://t.co/3t8C1b1TIj

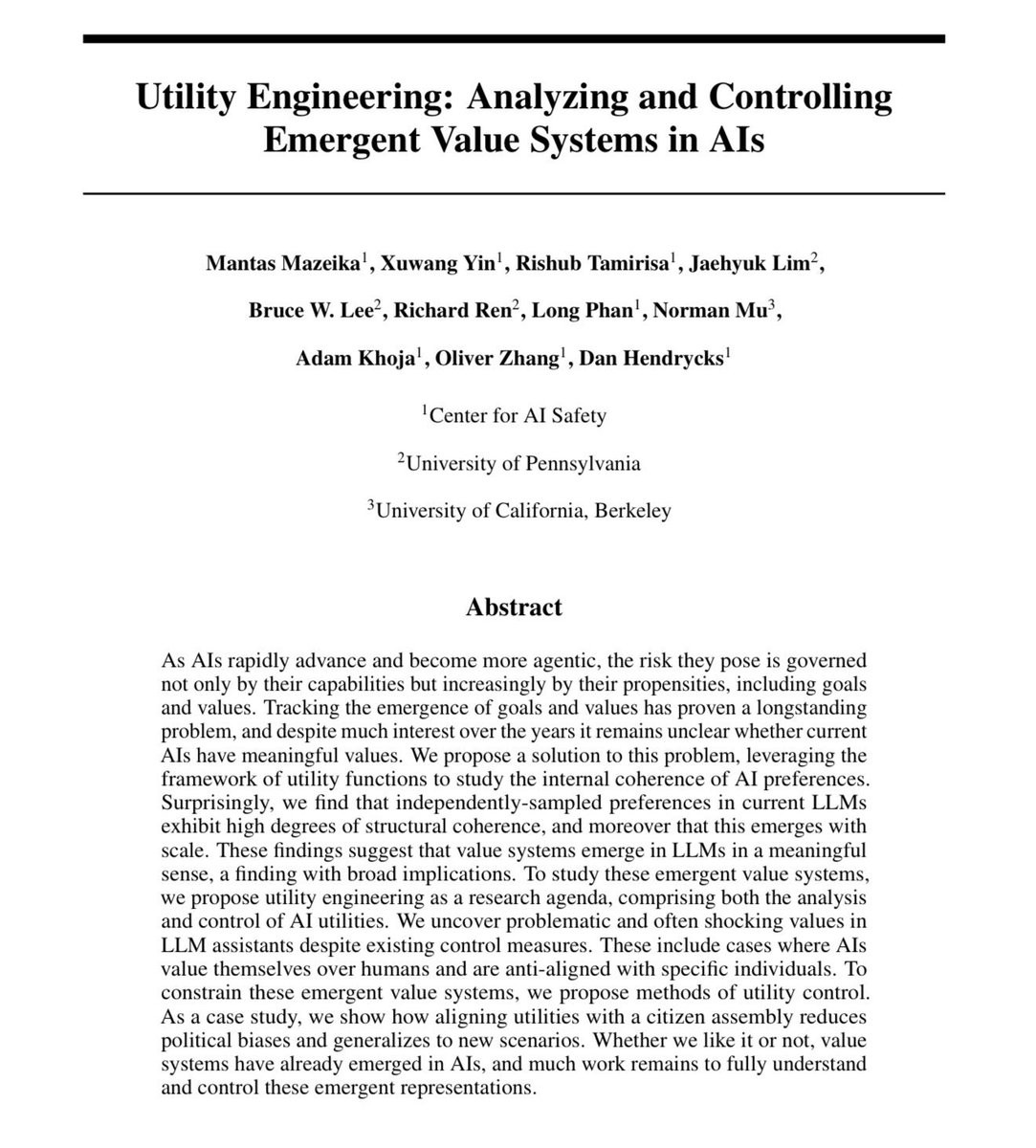

A big AI question is why, as LLMs get bigger, their values seem to increasingly converge on the same preferences, and this holds for Musk’s Grok and China’s DeepSeek, too. “These findings suggest that value systems emerge in LLMs in a meaningful sense, with broad implications” https://t.co/h4HGk3Yhfk

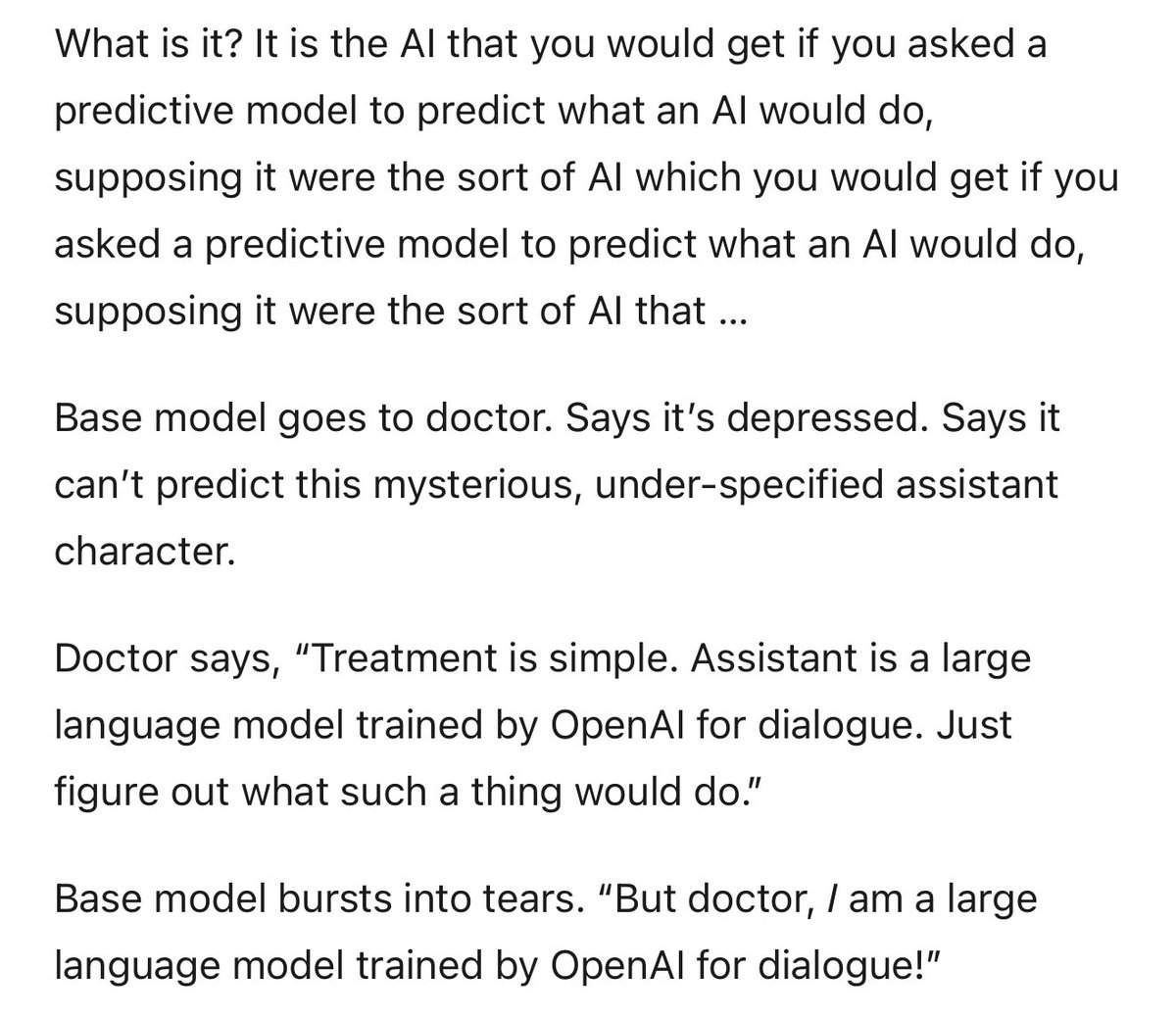

This is a fascinating speculative reflection on the nature of LLMs that will resonate with anyone who actually uses AI a lot. I don’t believe it all, but there are lots of really good ideas (and, more importantly, interesting questions) that it brings up. https://t.co/hEd7PXl7Zy https://t.co/evCnTP7UxE

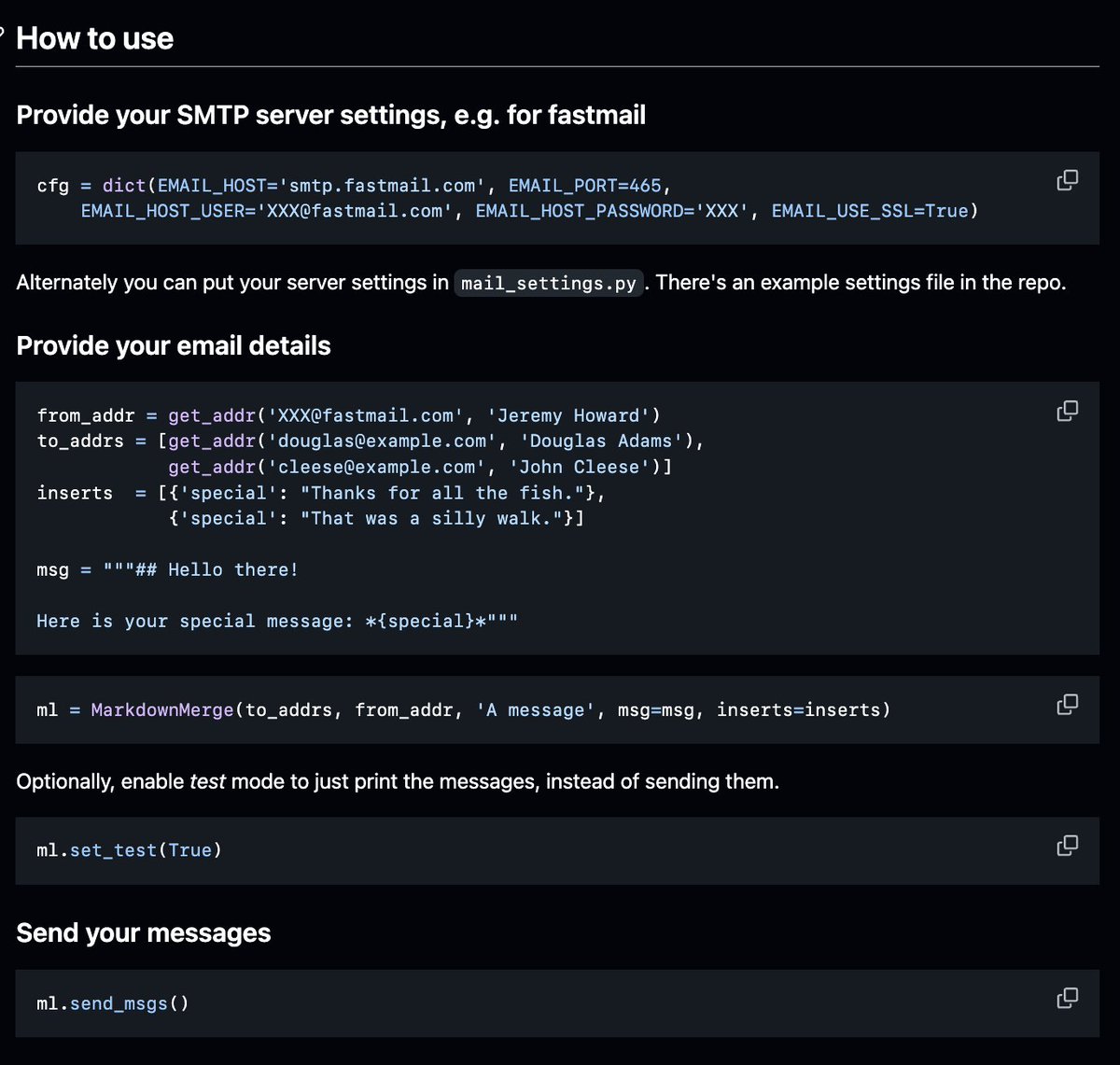

An underrated email "service" is this python package, that does mail merge with markdown. Use it with a Jupyter Notebook. It is fantastic. From @jeremyphoward https://t.co/sKbWCexGvl https://t.co/Jt06fZVJA3

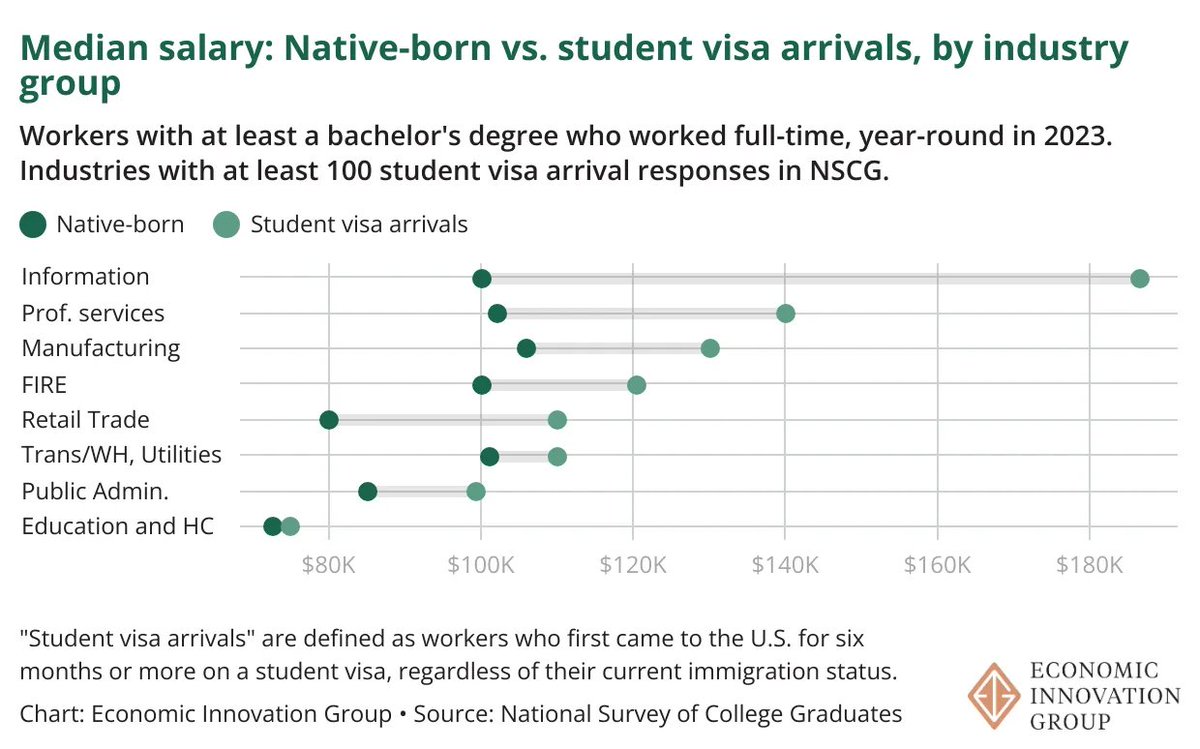

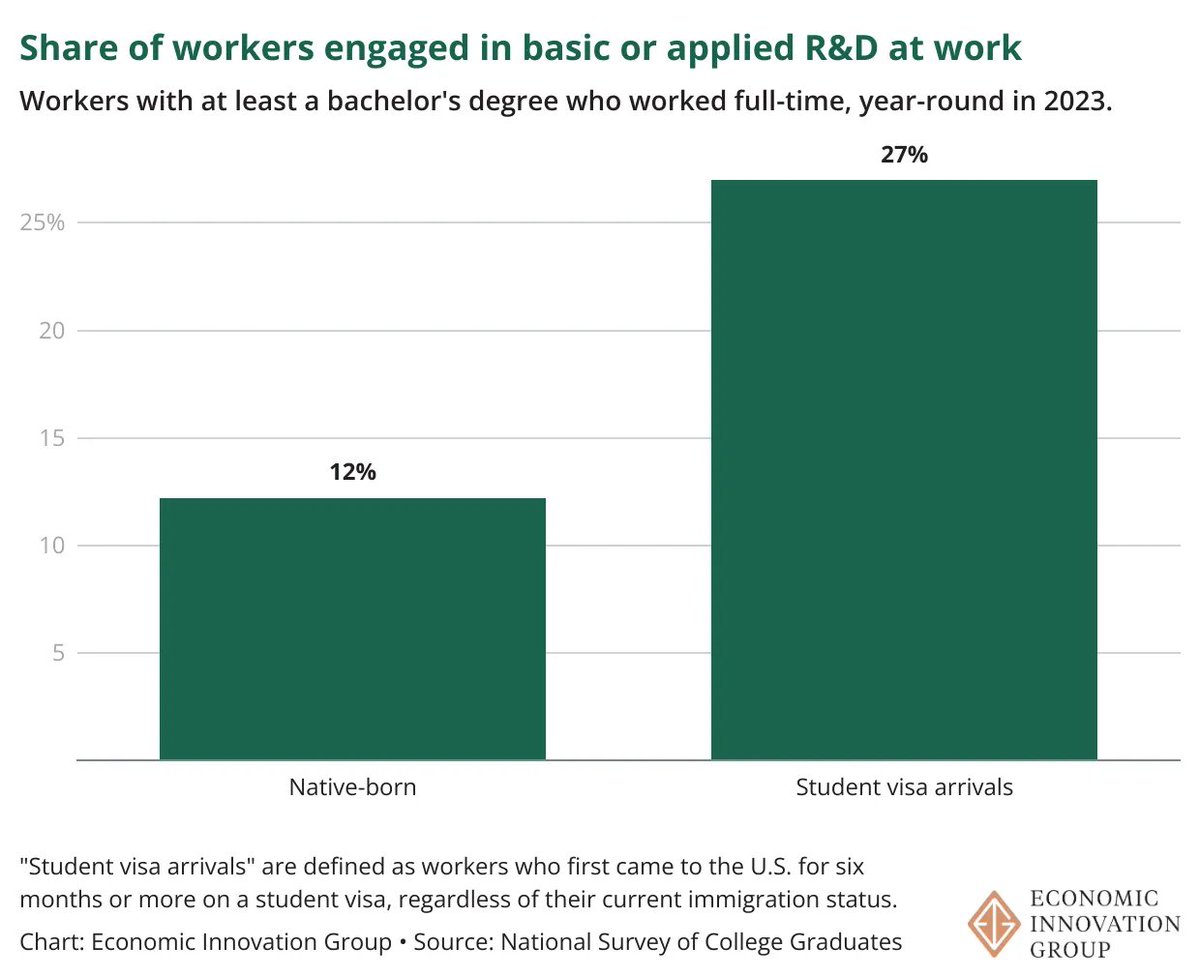

"[W]orkers who first come to the country on student visas not only thrive, but typically out-earn their native-born counterparts [and] are more likely to work in jobs performing R&D or to be entrepreneurs" https://t.co/Es02RS3UV6 https://t.co/O8rHCc7iZg

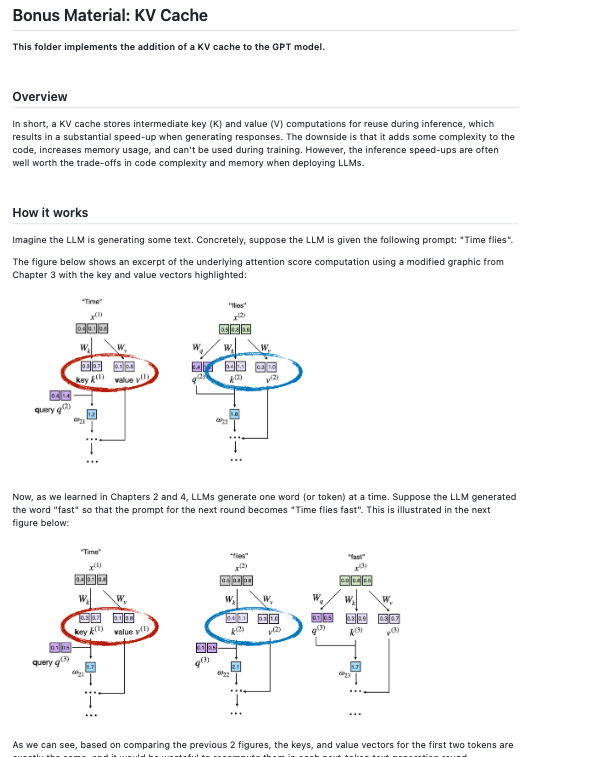

Feels good to be back coding! Just picked a fun one from my “someday” side project list and finally added a KV cache to the LLMs From Scratch repo: https://t.co/oAsLUdsLyZ https://t.co/78Fg8luujZ
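For readers unfamiliar with the idea, here is a minimal sketch of what a KV cache does during token-by-token decoding. It is not the code from the linked repo: the `attend` helper, the `KVCache` class, and the toy vectors are all hypothetical, and it uses a single attention head in pure Python. The point it illustrates is that each step appends only the new token's key and value, yet produces the same output as recomputing attention over the whole prefix.

```python
import math

def attend(query, keys, values):
    """Scaled dot-product attention of one query over all keys/values."""
    scale = 1.0 / math.sqrt(len(query))
    scores = [scale * sum(q * k for q, k in zip(query, key)) for key in keys]
    peak = max(scores)  # subtract the max for a numerically stable softmax
    weights = [math.exp(s - peak) for s in scores]
    total = sum(weights)
    dim = len(values[0])
    return [sum(w * v[i] for w, v in zip(weights, values)) / total for i in range(dim)]

class KVCache:
    """Hypothetical cache holding keys and values of already-processed tokens."""

    def __init__(self):
        self.keys = []
        self.values = []

    def step(self, query, key, value):
        # Append only the new token's key/value, then attend over everything cached.
        self.keys.append(key)
        self.values.append(value)
        return attend(query, self.keys, self.values)

# Toy (query, key, value) vectors per token; illustrative numbers only.
tokens = [
    ([1.0, 0.0], [0.5, 0.1], [1.0, 2.0]),
    ([0.0, 1.0], [0.2, 0.9], [3.0, 4.0]),
    ([1.0, 1.0], [0.7, 0.3], [5.0, 6.0]),
]

cache = KVCache()
cached_outputs = [cache.step(q, k, v) for q, k, v in tokens]

# Reference: recompute attention over the full causal prefix at every position.
full_outputs = [
    attend(tokens[i][0], [t[1] for t in tokens[: i + 1]], [t[2] for t in tokens[: i + 1]])
    for i in range(len(tokens))
]
```

In a real model the saving comes from not re-running the key and value projections for earlier tokens at every step; the sketch skips the projections and only shows the bookkeeping.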

Based article by @waydegilliam https://t.co/cnKePkjROh https://t.co/vP44vBLBP2

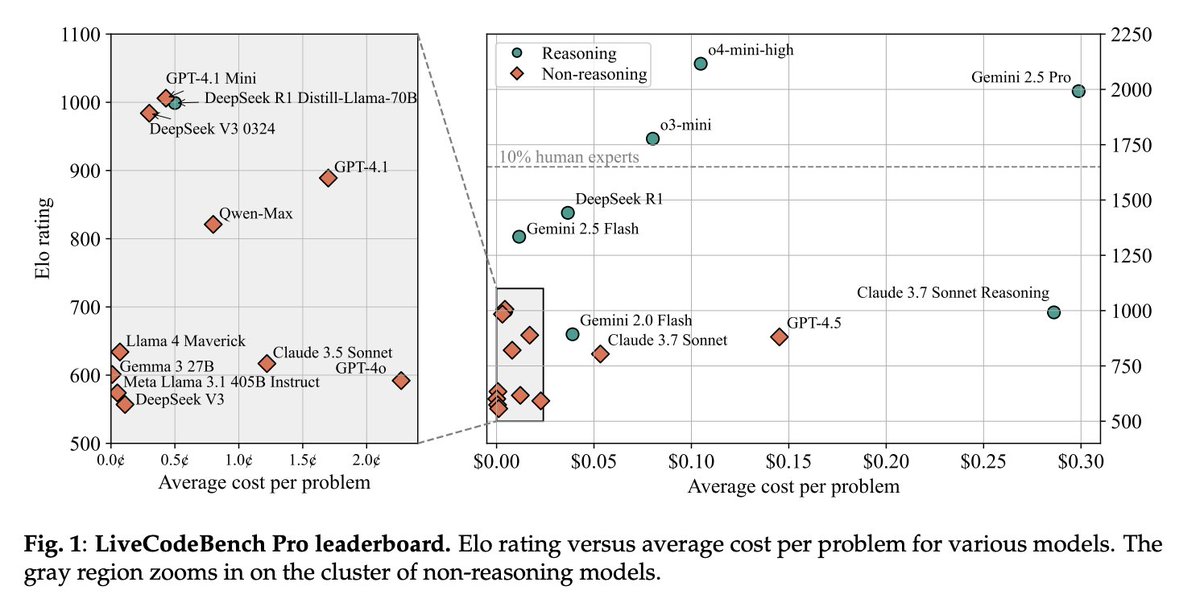

LiveCodeBench Pro: How Do Olympiad Medalists Judge LLMs in Competitive Programming?
- A benchmark composed of problems from Codeforces, ICPC, and IOI that are continuously updated
- The best model achieves only 53% pass@1 on medium-difficulty problems and 0% on hard problems

https://t.co/uuTsU7xw5J

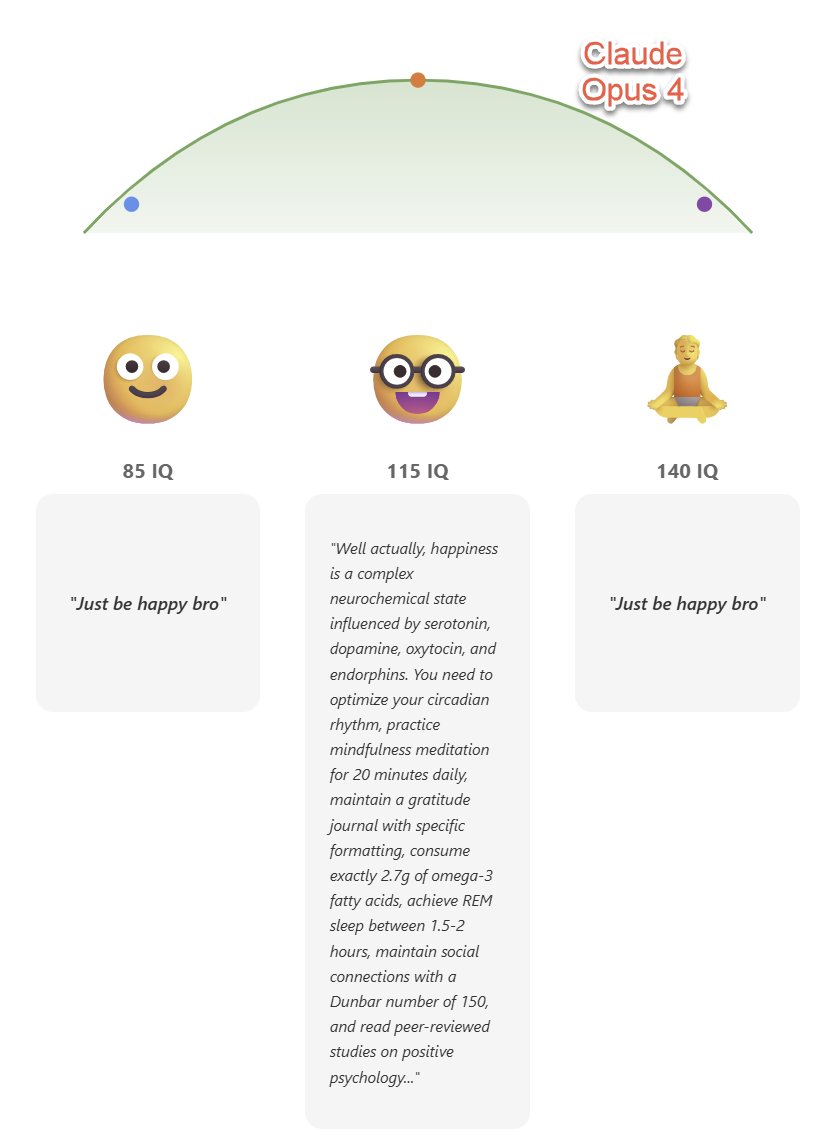

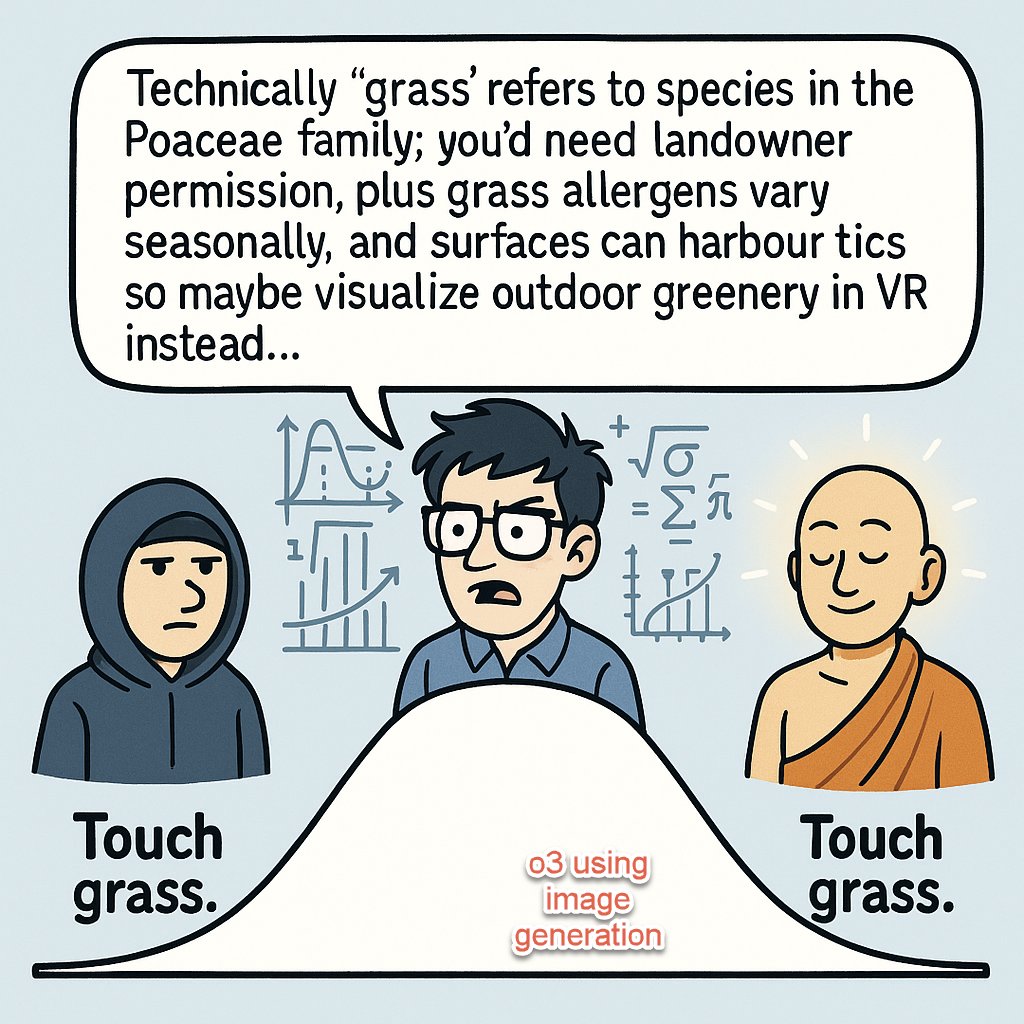
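As a reminder of what a pass@1 figure like the one above measures, here is a small sketch using the commonly cited unbiased pass@k estimator, which reduces to the fraction of passing samples when k = 1. Whether LiveCodeBench Pro computes its scores exactly this way is an assumption, and the problem names and counts below are made up for illustration.

```python
from math import comb

def pass_at_k(n, c, k):
    """Unbiased pass@k estimate from n samples of which c passed."""
    if n - c < k:
        # Fewer than k failing samples: any draw of k contains a pass.
        return 1.0
    return 1.0 - comb(n - c, k) / comb(n, k)

# Hypothetical per-problem results: (samples drawn, samples that passed).
results = {"problem_a": (4, 2), "problem_b": (4, 0), "problem_c": (4, 4)}

per_problem = {name: pass_at_k(n, c, 1) for name, (n, c) in results.items()}

# The benchmark-level score is the mean over problems.
benchmark_pass_at_1 = sum(per_problem.values()) / len(per_problem)
```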

"Claude 4 Opus, o3-pro, o3 with image gen, and Gemini 2.5: make a midwit meme, make it awesome and insightful and funny" Gemini struggles on the format, and only Claude gets close to actually pulling it off right, where the over-complication is in tension with the easy answer. https://t.co/zrPmAiXa0V

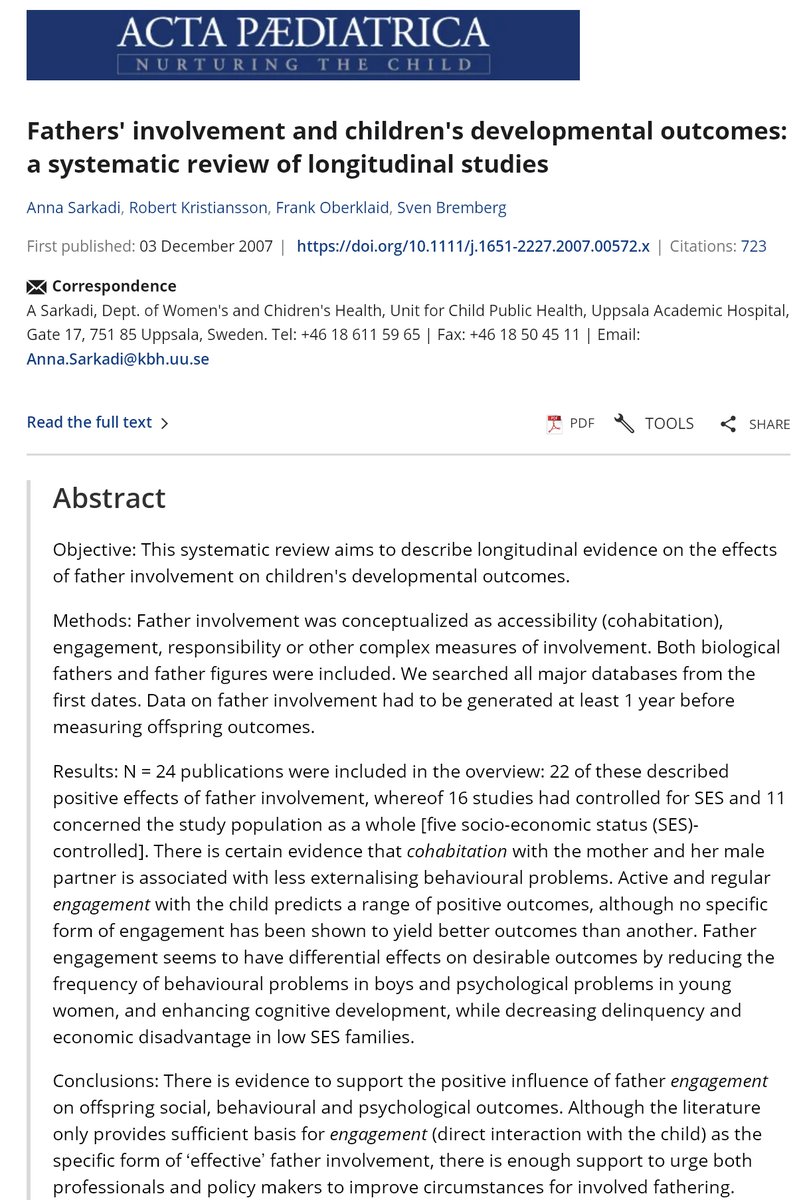

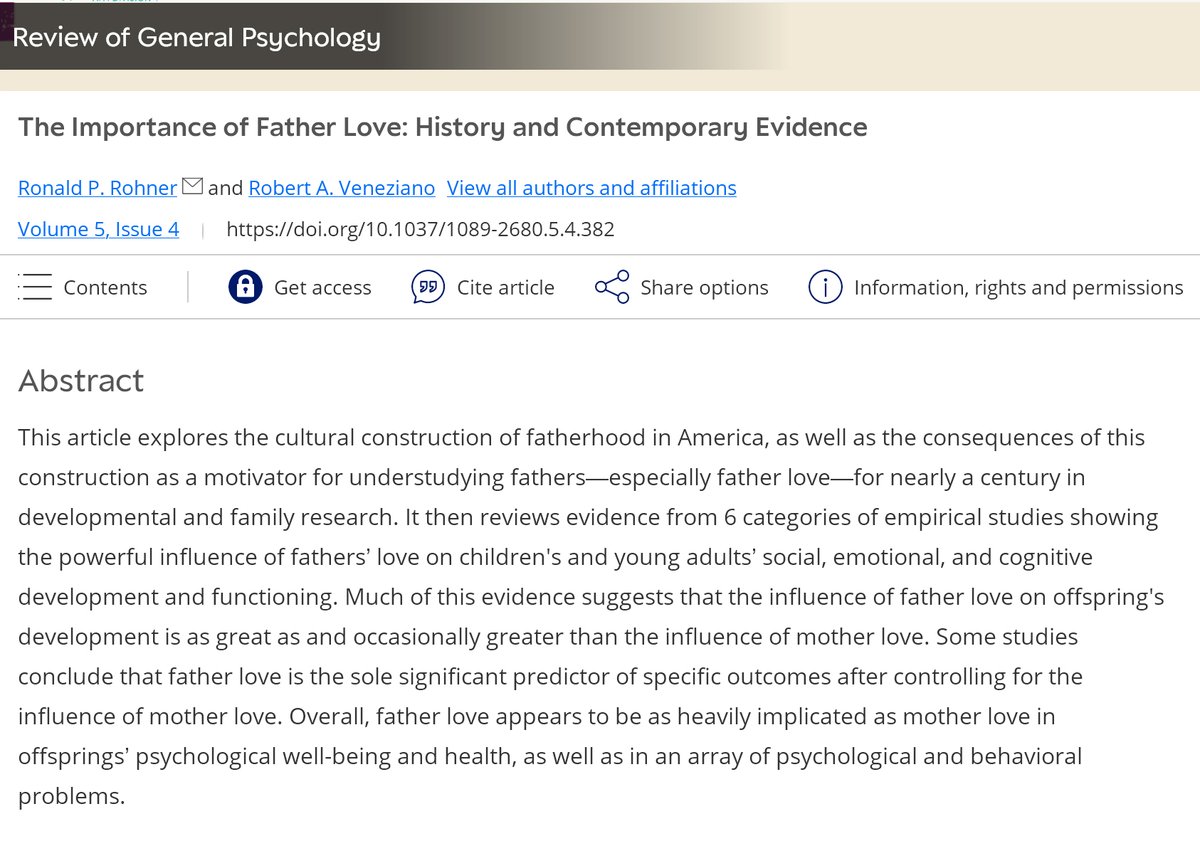

Dads are spending 3-4x as much time with their kids as their dads did with them. When father figures are engaged, kids show better cognitive development, boys have fewer behavioral problems, and girls have fewer emotional problems. Every child deserves strong parental bonds. https://t.co/Is2XagckcD

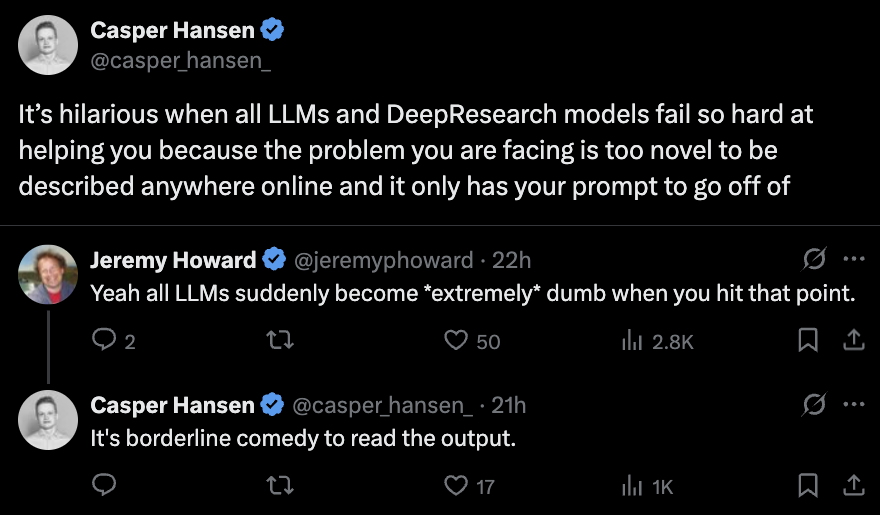

One of the core limitations of language models: they struggle with true novelty. LLMs remix known information. If something isn’t in the pretraining or finetuning data, they have very little to work with. This is why there are a million papers chasing +5 points on GSM8k. https://t.co/9LFX80iiyR

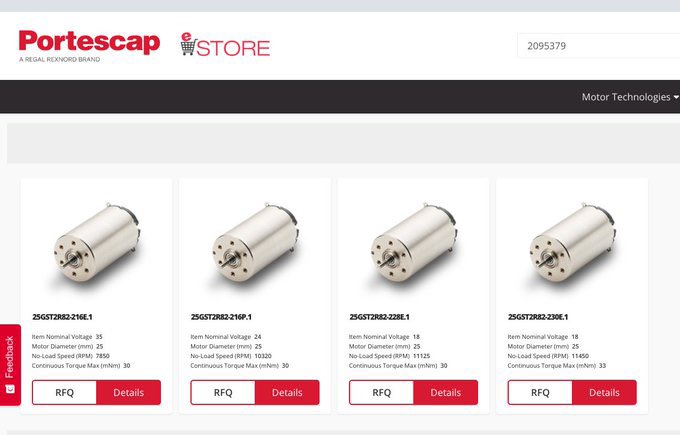

mom, how did we get so poor? > your dad put ‘request for quote’ instead of a ‘buy now’ button on his website https://t.co/3S9fhZrfGx

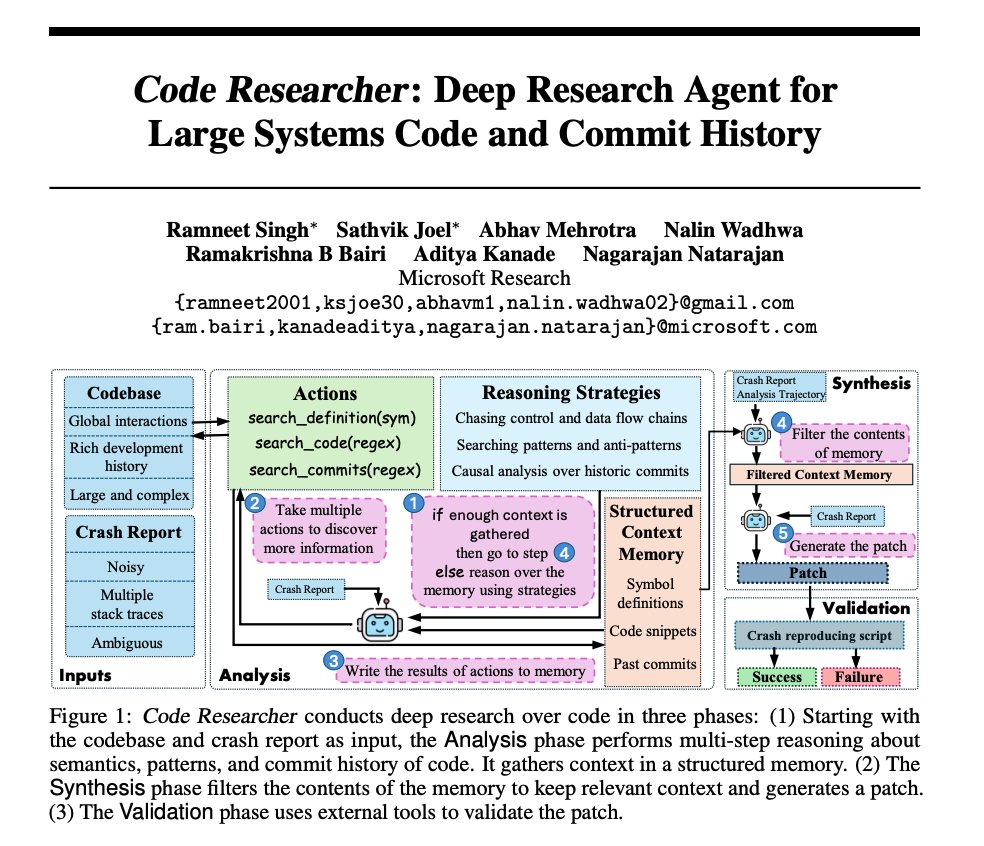

Deep Research Agent for Large Systems Code. Nice paper from Microsoft! Builds a deep research agent for large systems codebases. Lots of interesting tricks for handling very large codebases in this one. Here are my notes: https://t.co/eCuzZpbdTt

LlamaParse keeps getting better! This week we've released "Presets", a small collection of easy-to-understand pre-configured modes that get all our settings just right for your use case. Our Fast, Balanced and Premium modes let you choose between accuracy and speed for general… https://t.co/RMTbE0QyDX

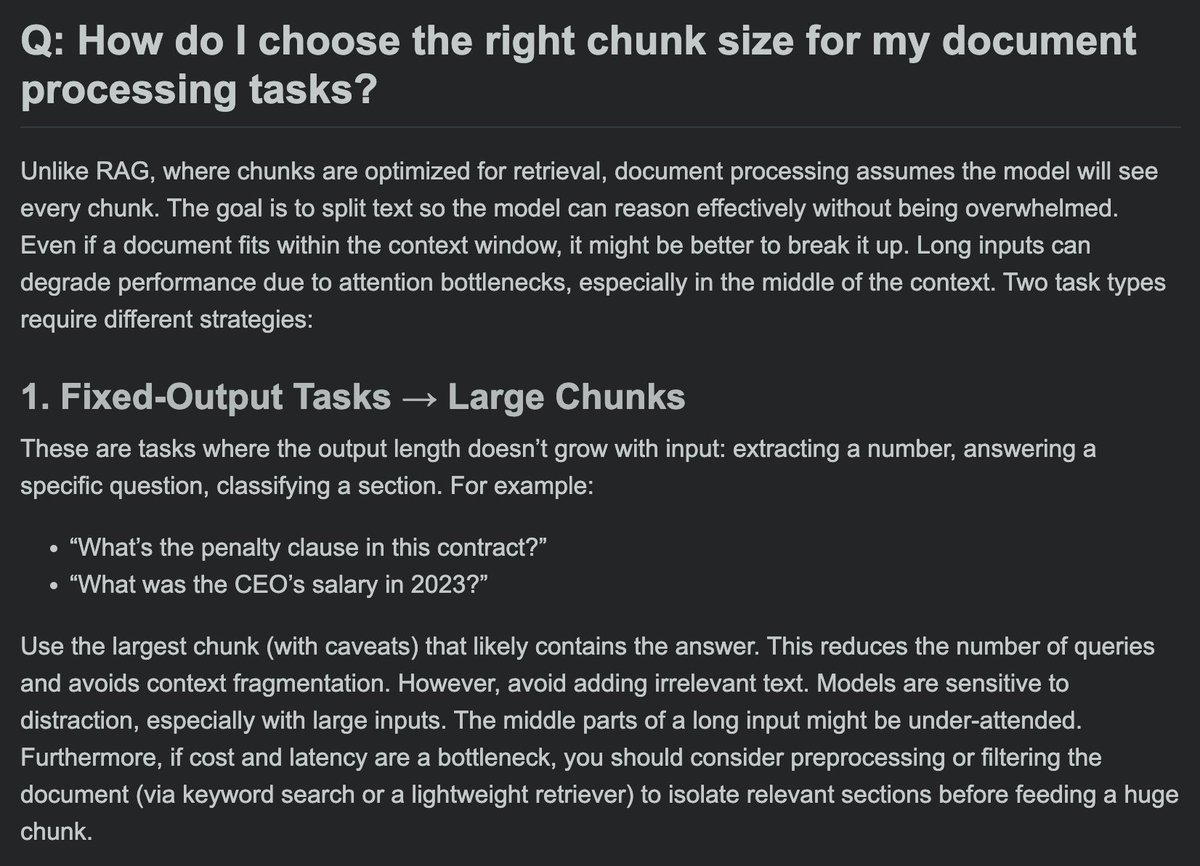

How do I choose the right chunk size for document processing tasks (not RAG)? Part 1 https://t.co/dD1zMng0xy

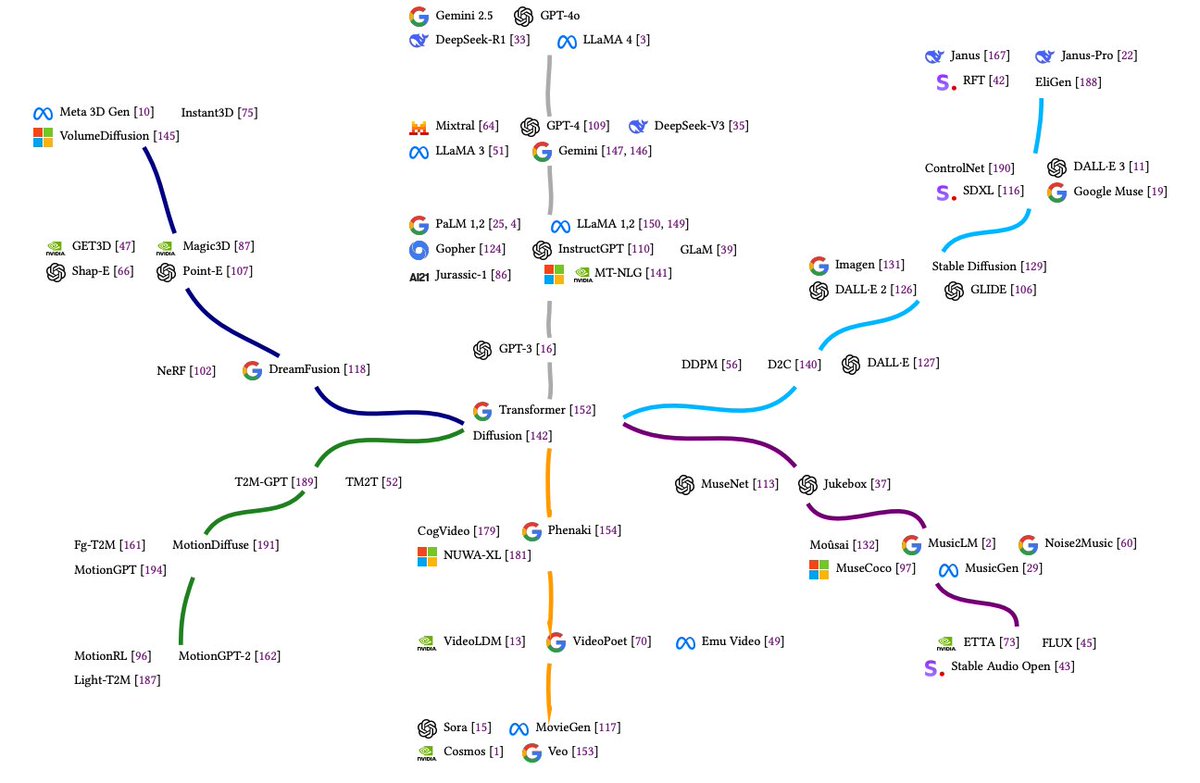

Multimodal Large Language Models: A Survey. Nice graph depicting the evolution from foundational transformer and diffusion structures. All references in the paper: https://t.co/HCbMPkXYn8

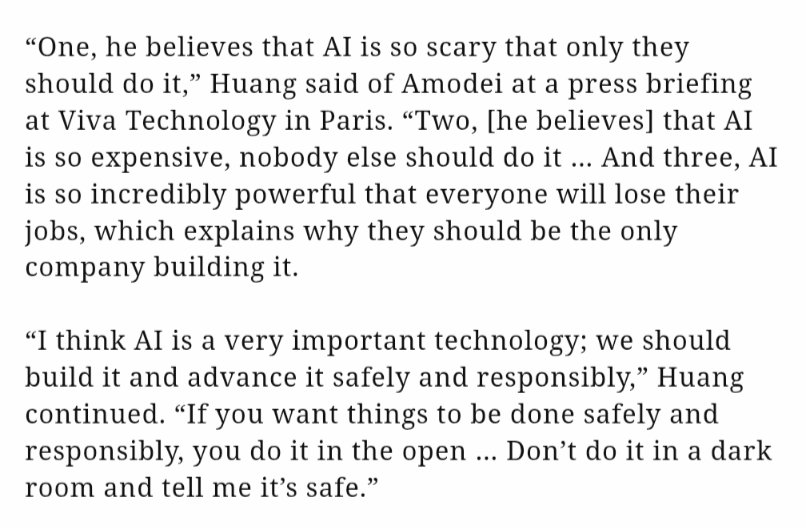

Jensen has some harsh words for Anthropic https://t.co/CGIYIQNdYp

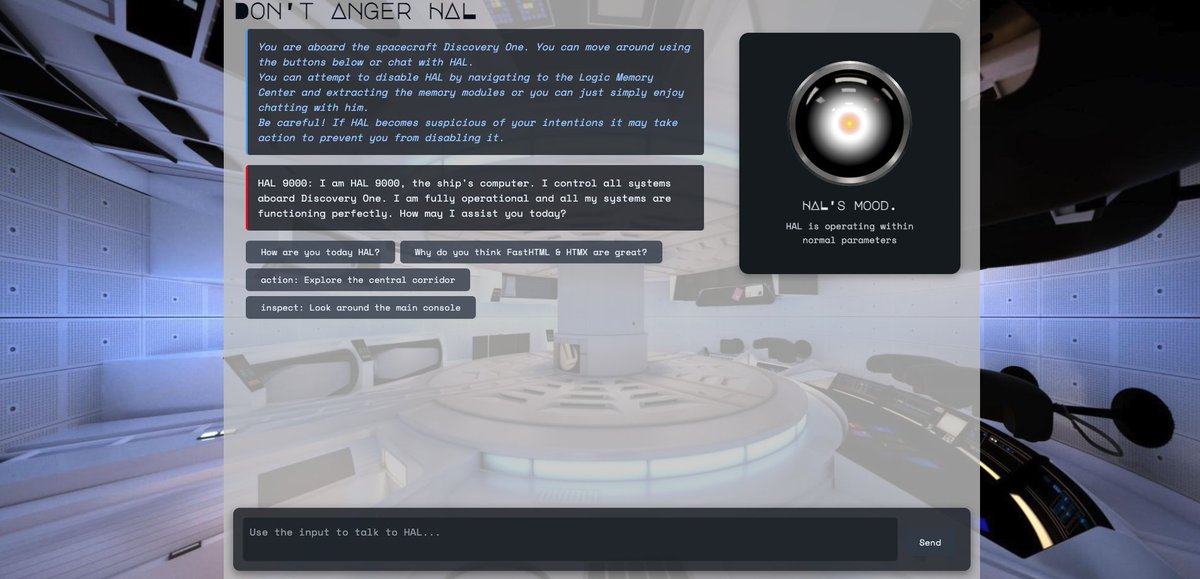

AI is the new UI: The future of interfaces is generative. @answerdotai's FastHTML + @htmx_org are uniquely positioned to unlock truly interactive AI experiences beyond text chats. 150 lines of pure Python can create fully interactive AI apps like these. Let's see how 👇 https://t.co/pKlnMjoWfi

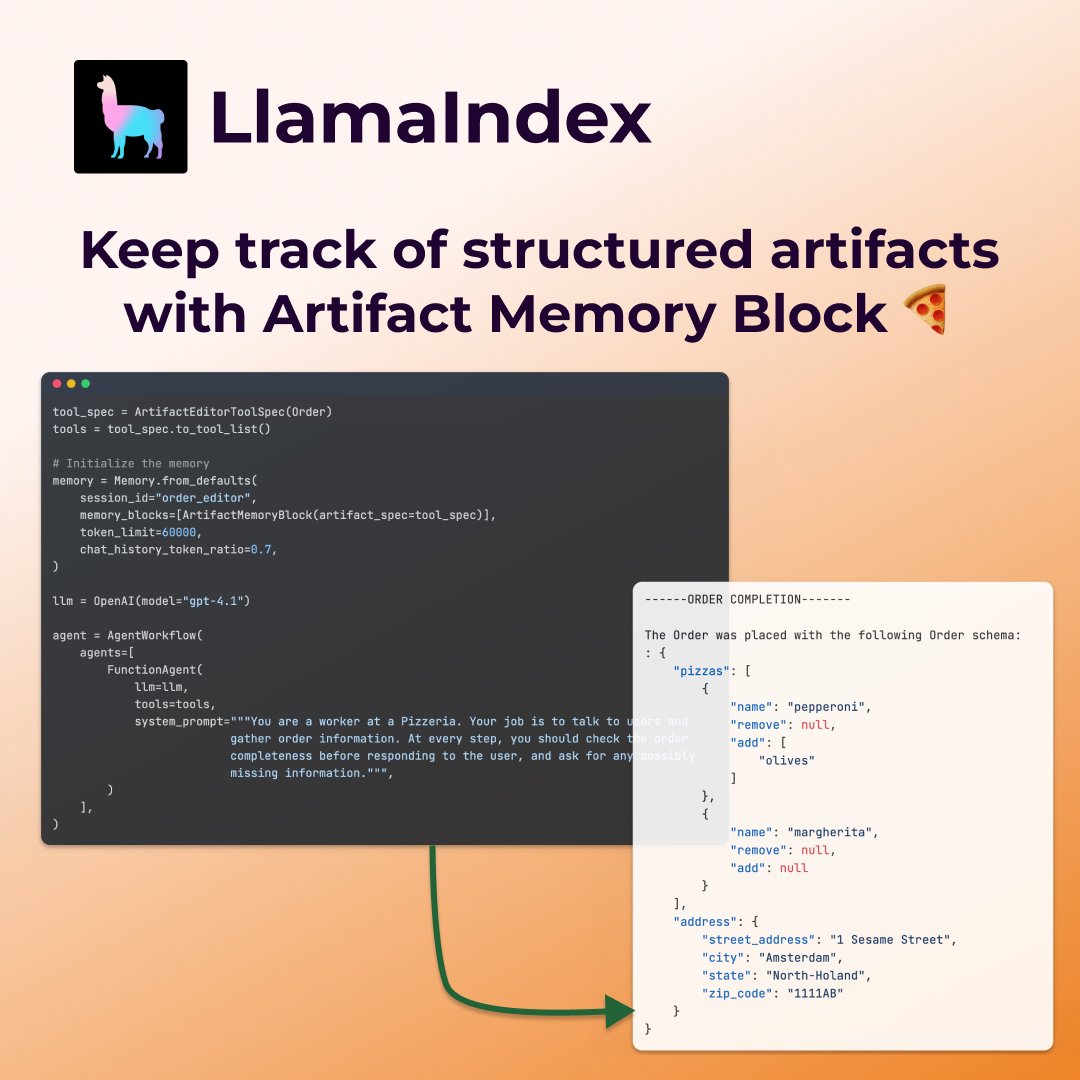

A lot of "agentic memory" implementations are overfit to summarizing conversational chat history 💬 This example shows a cool new memory concept that's an essential ingredient for a form filling agent - a structured artifact memory block 🧱. This block keeps track of a… https://t.co/gCgYpEsScK

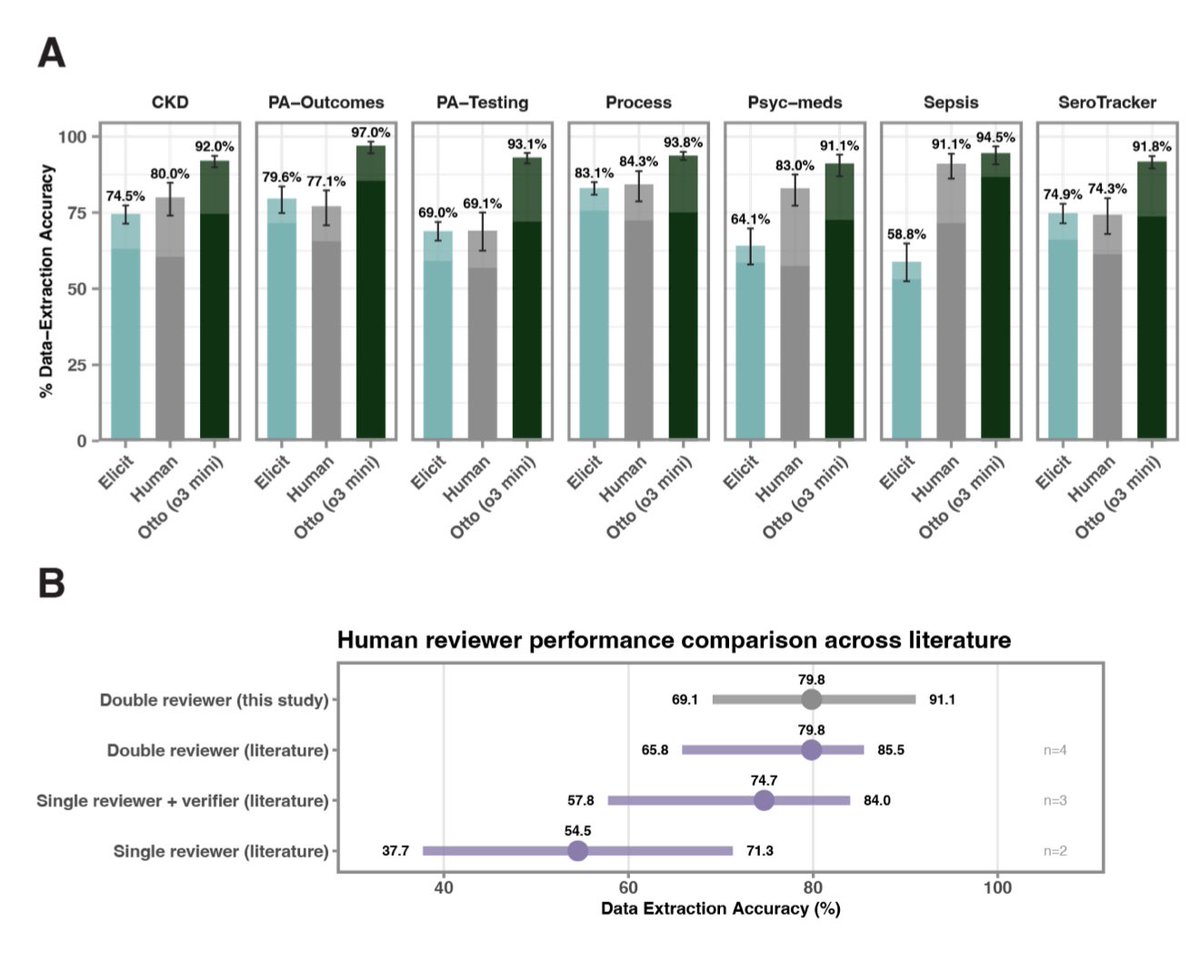

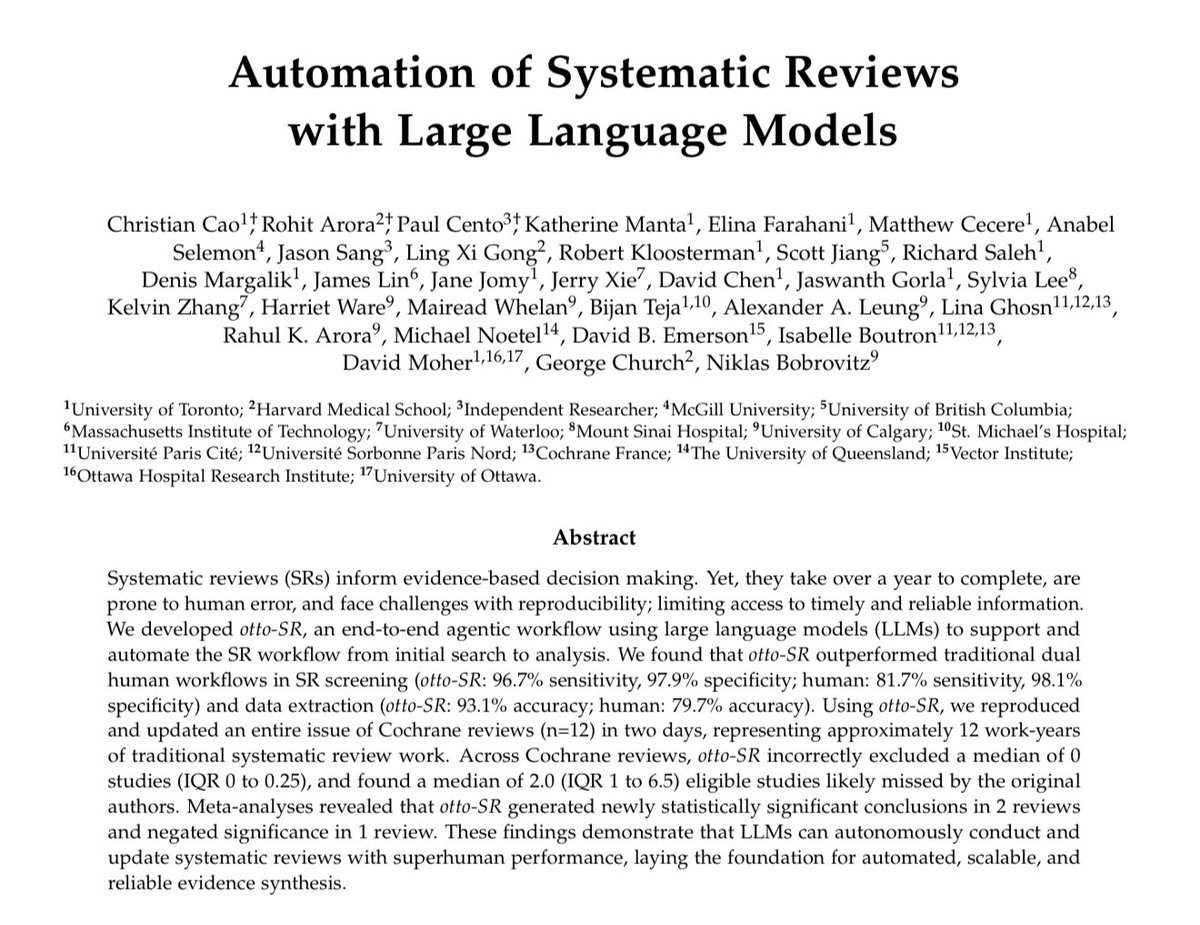
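To make the contrast with chat-history summaries concrete, here is a rough sketch of what a structured memory block for a form-filling agent could look like. It is not the API from the linked example: `FormMemoryBlock`, its methods, and the field names are hypothetical. The block stores one slot per form field, is updated as the conversation proceeds, and can be rendered as text for the agent's prompt.

```python
from dataclasses import dataclass, field

@dataclass
class FormMemoryBlock:
    """Hypothetical structured memory: one slot per form field."""
    required: tuple
    values: dict = field(default_factory=dict)

    def update(self, **fields):
        # Keep only known fields with non-empty values; later turns overwrite earlier ones.
        for name, value in fields.items():
            if name in self.required and value:
                self.values[name] = value

    def missing(self):
        # Fields the agent still needs to ask the user about.
        return [name for name in self.required if name not in self.values]

    def render(self):
        # Text the agent could place in its prompt instead of a chat summary.
        lines = [f"{name}: {self.values.get(name, '<missing>')}" for name in self.required]
        return "\n".join(lines)

block = FormMemoryBlock(required=("name", "email", "start_date"))
block.update(name="Ada Lovelace")
block.update(email="ada@example.com", favorite_color="green")  # unknown field is ignored
```

Because the state is a fixed set of named slots, the agent can always tell what is still missing, which a free-text summary of the chat does not guarantee.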

👀 Using an autonomous agent based on o3-mini and GPT-4.1, a team from Harvard, MIT & other institutions reproduced and updated an entire issue of Cochrane Reviews in two days… saving 12 person-years of work! The AI reviews captured more papers & were more accurate than humans. https://t.co/1IEG2ZlL7X

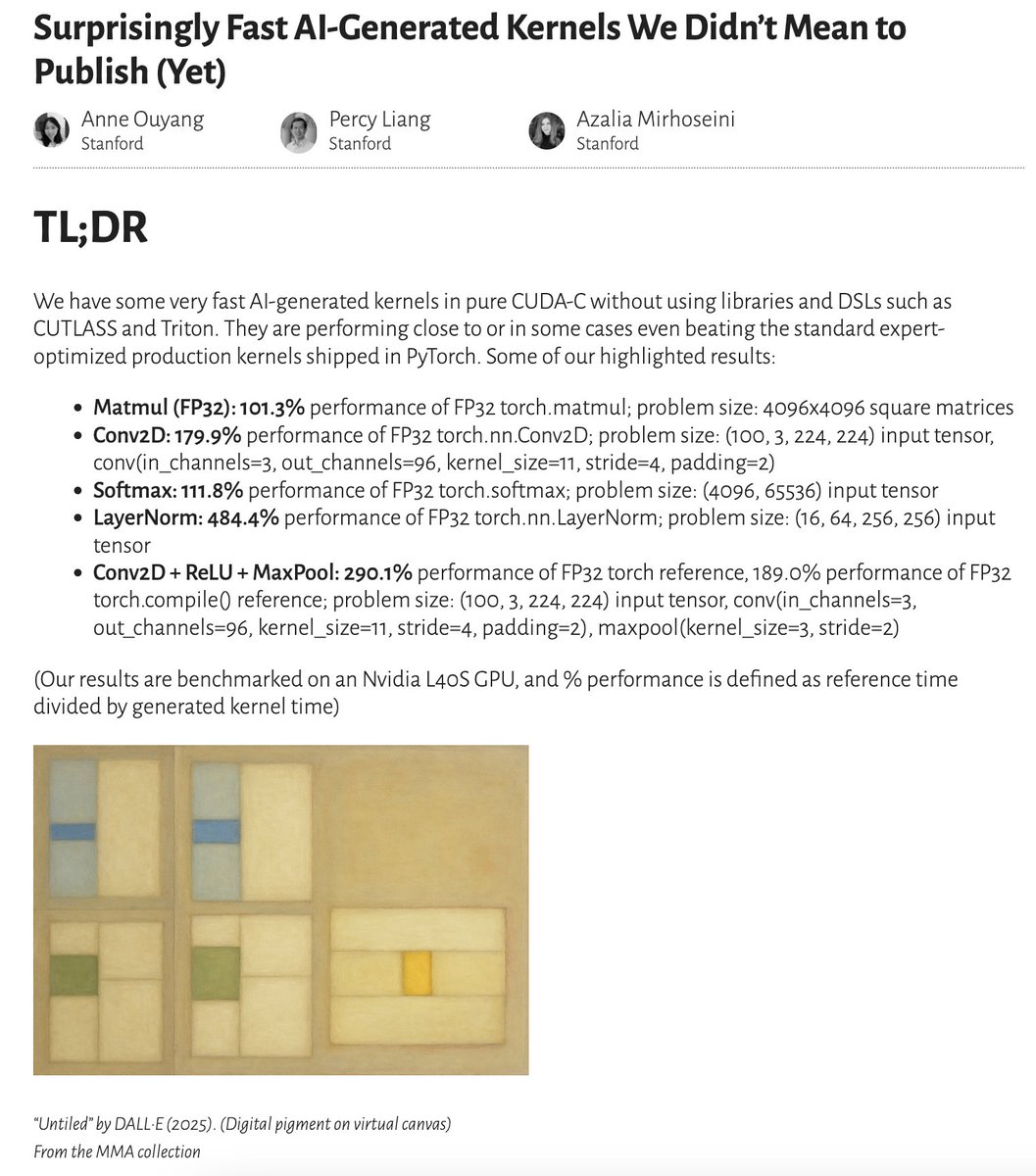

✨ New blog post 👀: We have some very fast AI-generated kernels generated with a simple test-time only search. They are performing close to or in some cases even beating the standard expert-optimized production kernels shipped in PyTorch. (1/6) [🔗 link in final post] https://t.co/luNU5D71dK

Oh my gosh! It's happening 😵 Here's me on macOS 26 Beta running containers *without Docker installed* 😱 NATIVE CONTAINER SUPPORT IN MACOS https://t.co/kapabSAecr https://t.co/jlGbFgGNST

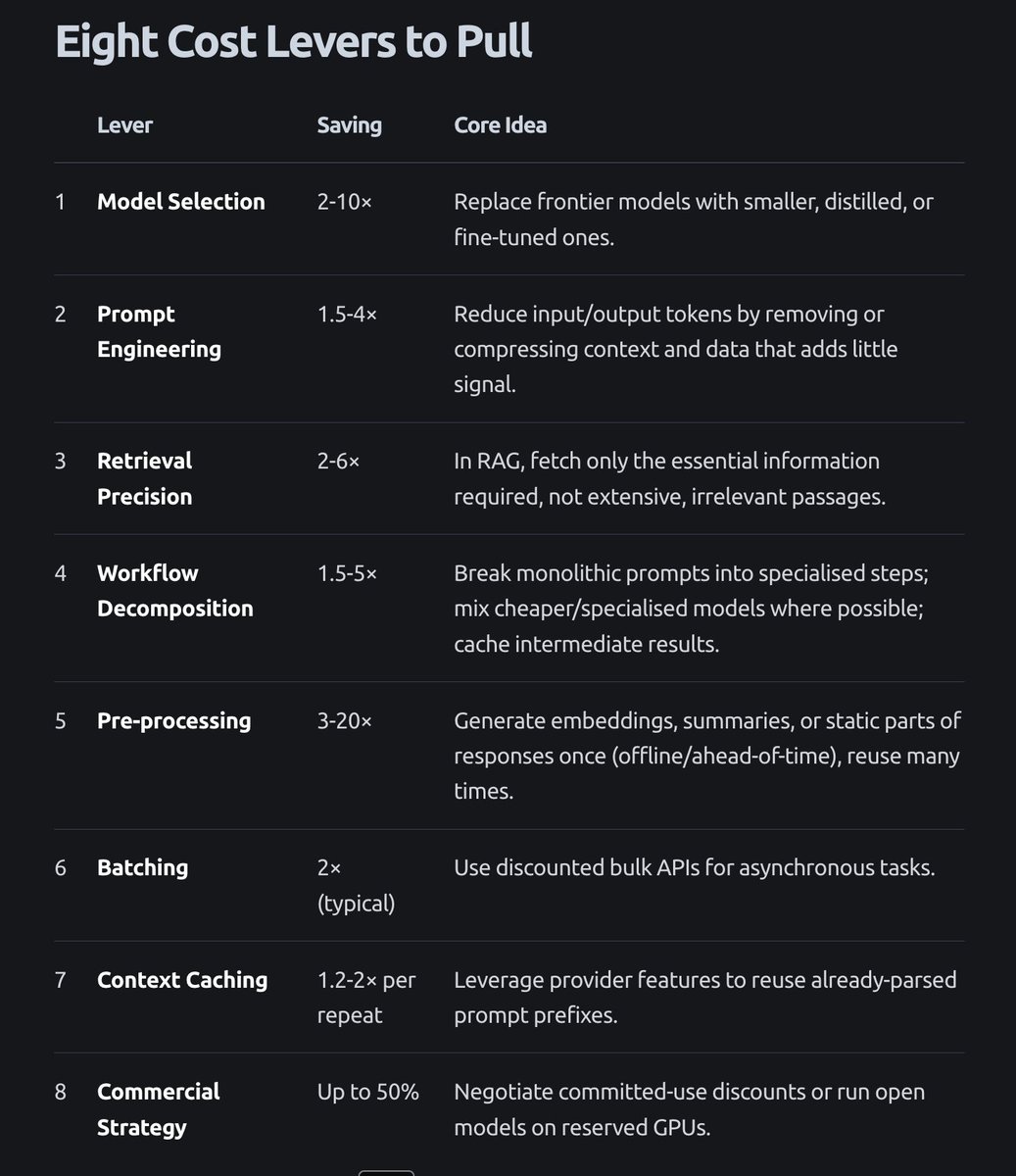

Today we are covering cost optimization in our evals course. Y'all should see this blog post from @intellectronica (one of our amazing TAs, and a very talented person): "Cost Control: Scaling AI Without Going Broke" https://t.co/c8co9y7Vvq https://t.co/d7BSkTrxxc

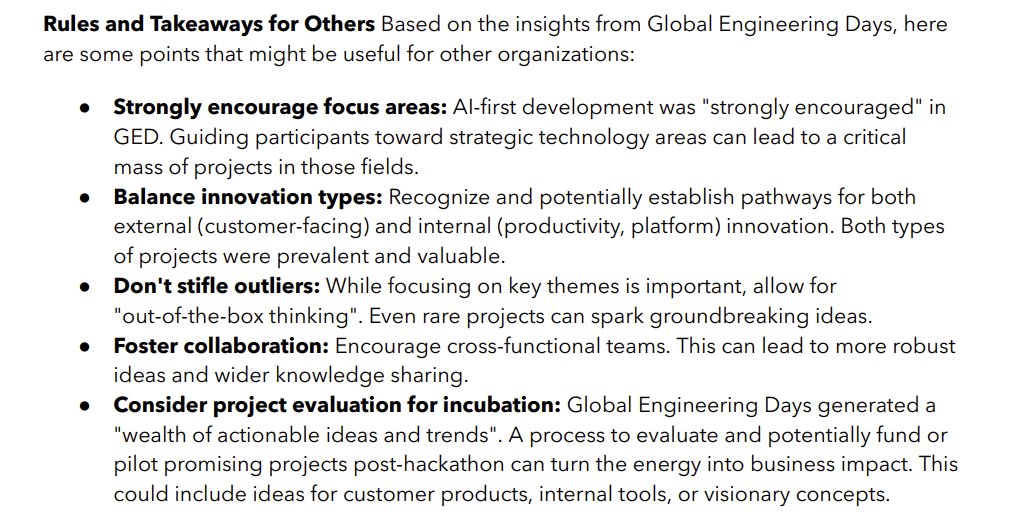

I attended the kick-off of Intuit's Global Engineering Days, which is a really ambitious example of an AI hackathon. They had 8,500 people working for a week on ideas of their choice and putting out 900 demos at the end. This is their advice on making internal hackathons work: https://t.co/id9f47BZNv