@casper_hansen_

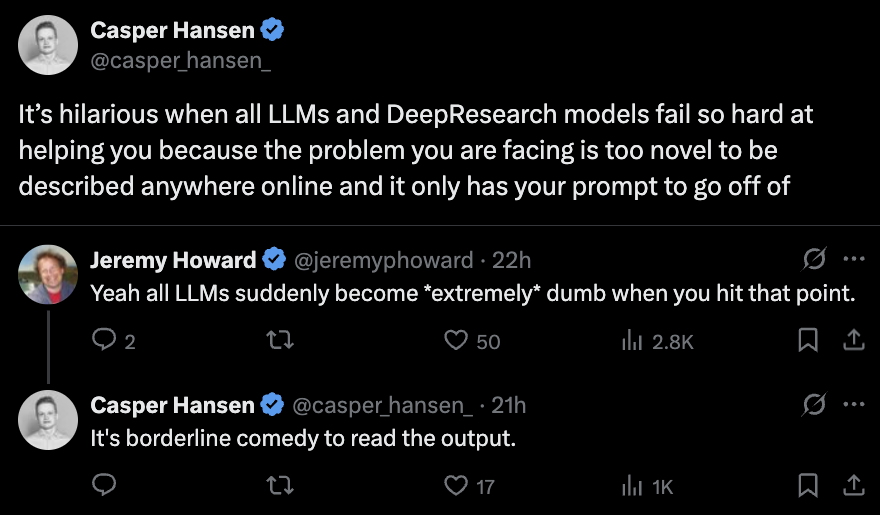

One of the core limitations of language models: they struggle with true novelty. LLMs remix known information. If something isn’t in the pretraining or finetuning data, they have very little to work with. This is why there are a million papers chasing +5 points on GSM8K. https://t.co/9LFX80iiyR