Your curated collection of saved posts and media

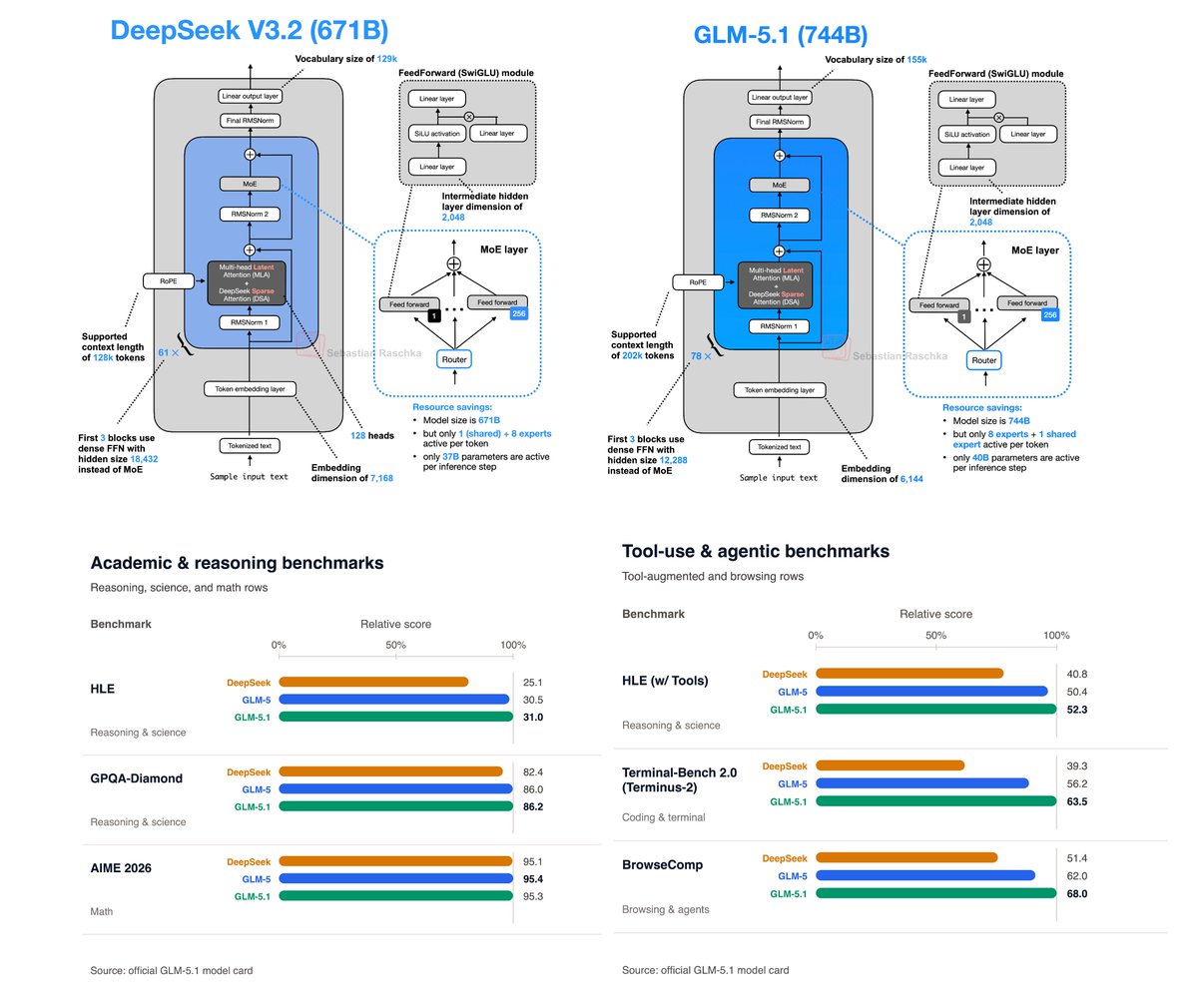

Strong release! GLM-5.1 is a DeepSeek-V3.2-like architecture (including MLA and DeepSeek Sparse Attention) but with more layers. And the benchmarks look better throughout! Looks like THE flagship open-weight model now. https://t.co/8kzTXaFcJv

Introducing GLM-5.1: The Next Level of Open Source - Top-Tier Performance: #1 in open source and #3 globally across SWE-Bench Pro, Terminal-Bench, and NL2Repo. - Built for Long-Horizon Tasks: Runs autonomously for 8 hours, refining strategies through thousands of iterations. Bl

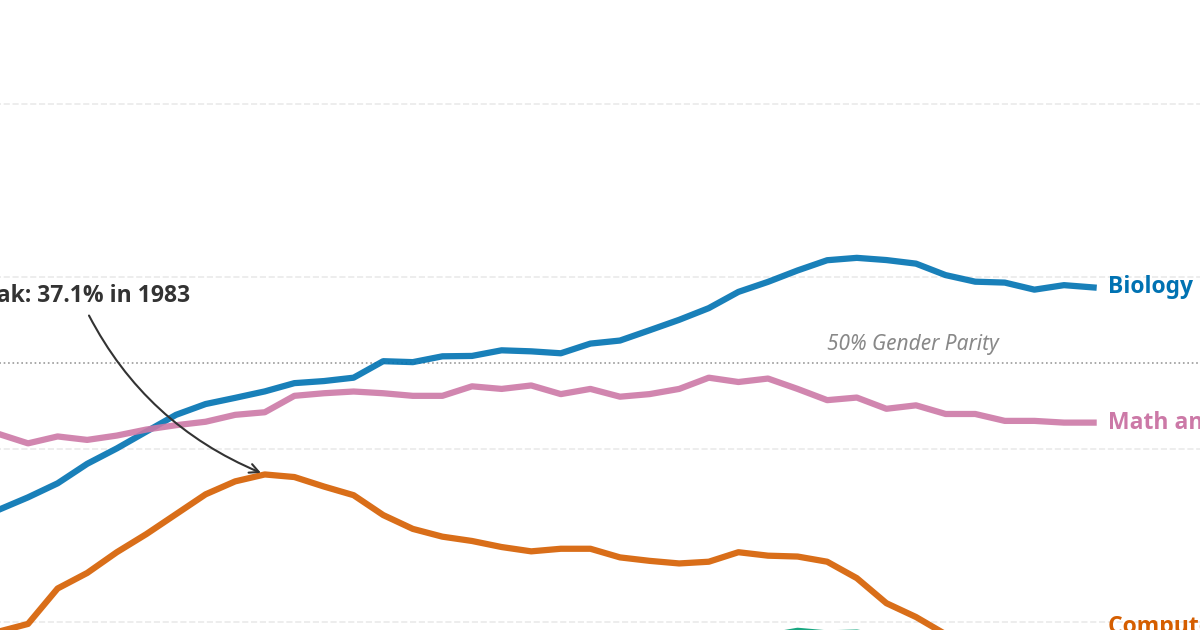

PS: I finally got around to trying out @randal_olson 's Tufte Test tool to prettify the benchmark plot. Great tool 👌! https://t.co/146ZnHsbgn

Hierarchical planning unlocks long-horizon, non-greedy behavior in JEPA world models. Paper: https://t.co/lp5xR5RFnJ Website: https://t.co/CCFKfmTffk Code: https://t.co/S3WIFSn2MH https://t.co/SKWjtos8Up

Full release on @MetaQuestVR in May! Next step will be publishing on @PICOXR and @PlayStation VR. App link for Quest below: https://t.co/g9lqZC9kK0

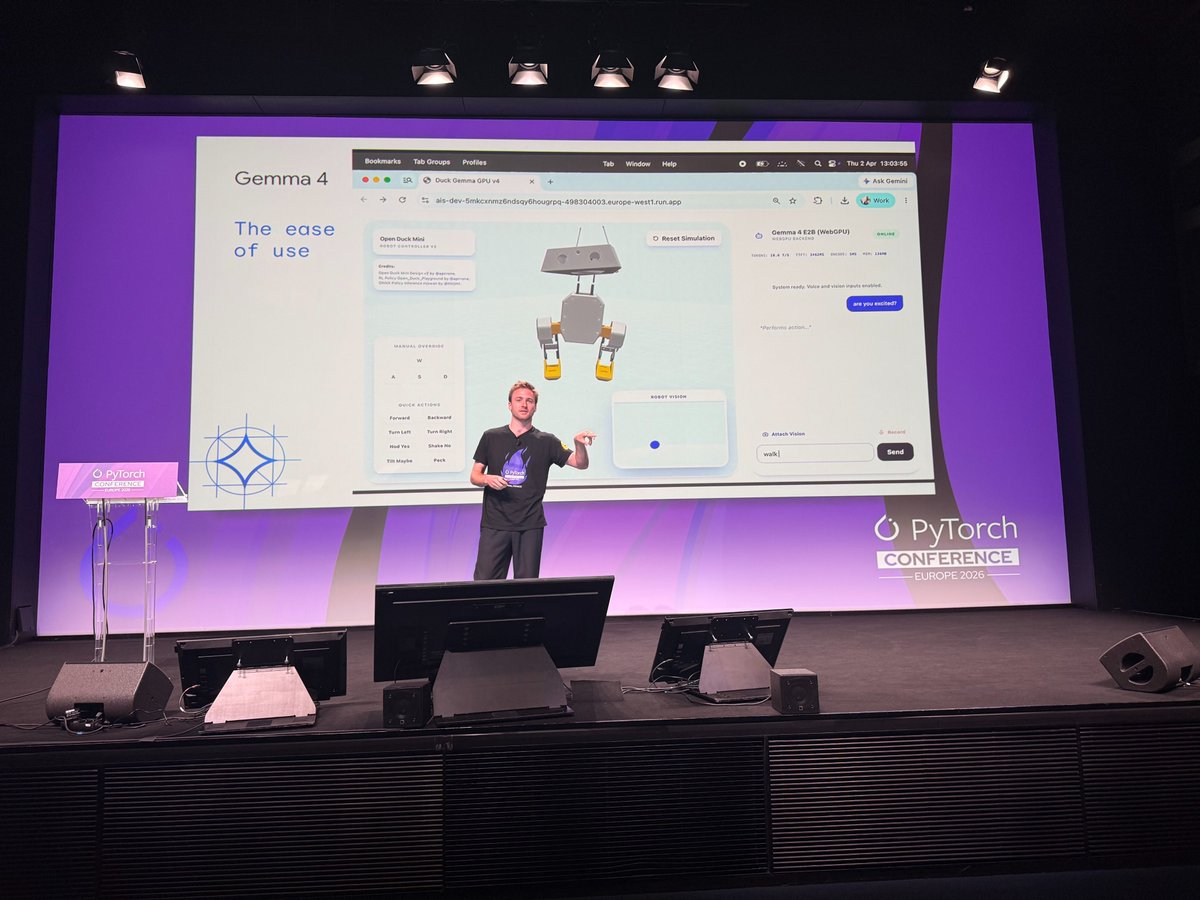

By optimizing for token efficiency and memory footprints, we unlock a new class of applications that are faster, private, and more accessible. During #PyTorchCon EU keynote, Léonard Hussenot, Research Scientist at @GoogleDeepMind explores the philosophy and engineering behind Gemma 4, arguing that the future of AI isn't only about size, but about "intelligence per byte."

Normalization methods (LayerNorm/RMSNorm) are foundational in modern deep learning models. We evaluate and improve torch.compile performance for LayerNorm/RMSNorm on NVIDIA H100 and B200 to reach near SOTA performance on a kernel-by-kernel basis, whilst providing automatic fusion capabilities with torch.compile for peak e2e performance. 🔗 Read our latest blog from Shunting Zhang, Paul Zhang, Markus Höhnerbach, Elias Ellison, Jason Ansel, and Natalia Gimelshein: https://t.co/ie5UZag3qx Today at PyTorch Conference EU: Lightning Talk: Faster Than SOTA Kernels in Torch.compile With Subgraph Fusions and Custom Op Autotuning - Elias Ellison & Paul Zhang, Meta, 15:40 - 15:50 #PyTorch #torchcompile #OpenSourceAI #PyTorchCon
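
For reference, a minimal pure-Python sketch of what the two normalizations in the post above compute on a single vector. This is only the textbook math, not the torch.compile kernels described in the linked blog; the function names and the `eps` default are illustrative assumptions, and the learnable scale and bias are left out for brevity.

```python
import math


def layer_norm(x, eps=1e-5):
    """LayerNorm without learnable scale/bias: subtract the mean, divide by the std."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)  # biased (population) variance
    return [(v - mean) / math.sqrt(var + eps) for v in x]


def rms_norm(x, eps=1e-5):
    """RMSNorm without learnable scale: divide by the root mean square, no mean subtraction."""
    mean_square = sum(v * v for v in x) / len(x)
    return [v / math.sqrt(mean_square + eps) for v in x]


if __name__ == "__main__":
    sample = [1.0, 2.0, 3.0, 4.0]
    print(layer_norm(sample))
    print(rms_norm(sample))
```

RMSNorm skips the mean subtraction, so it needs one fewer reduction over the vector than LayerNorm.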

THIS AI TOOL TURNS SCREEN RECORDINGS INTO APPLE-LIKE VIDEOS. someone spent 3 weeks building a tool and finally shipped it. opened loom. hit record. sent the demo out. nobody watched it. turns out boring screen recordings don't sell your product. dropped it into https://t.co/zeL64H5mnP instead: 3d device mockups, ai camera follow, animated text, synced music. looked like apple made the demo. took 20 minutes. free. 4k export. no signup needed.

@ClimStefan I built a better way to keep up with the AI community here on X: https://t.co/kiuZ7QXLzb

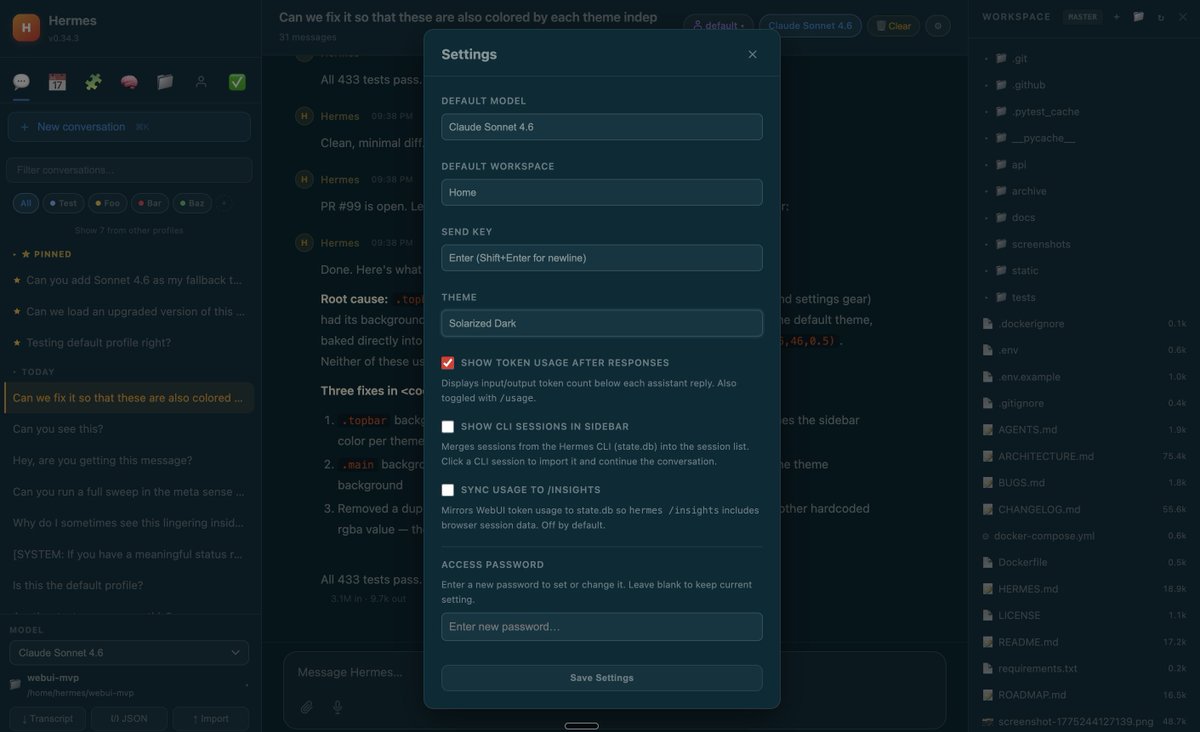

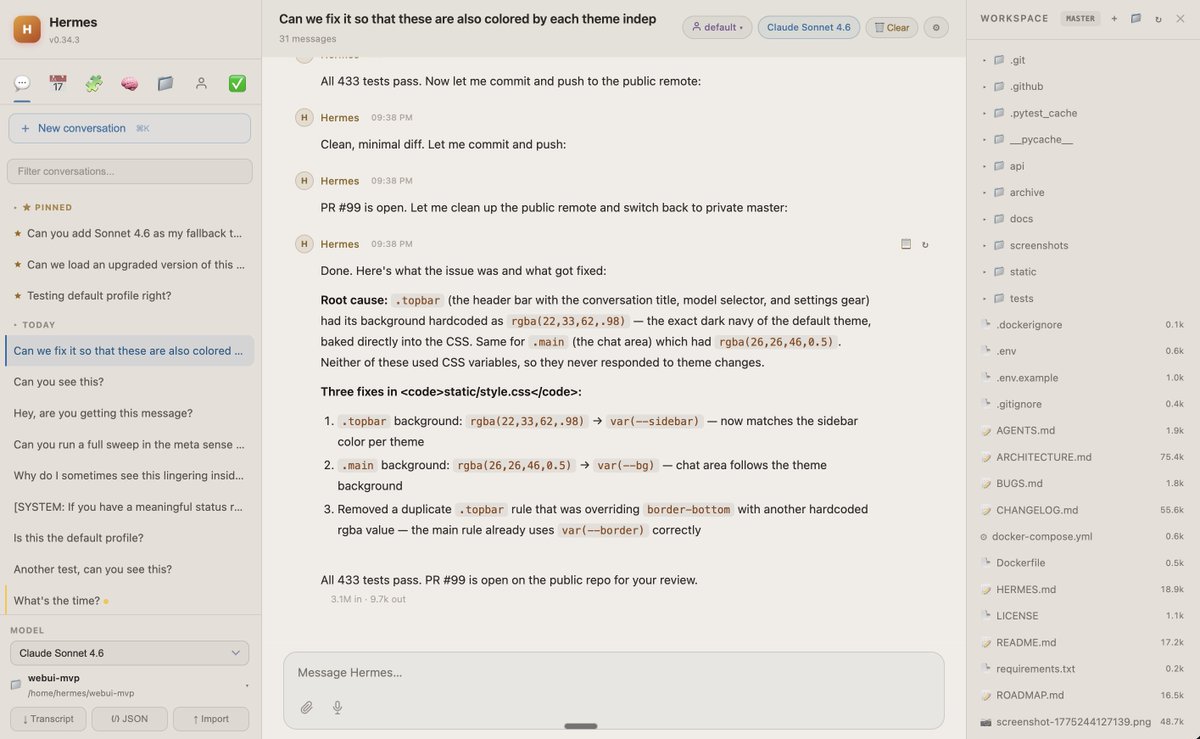

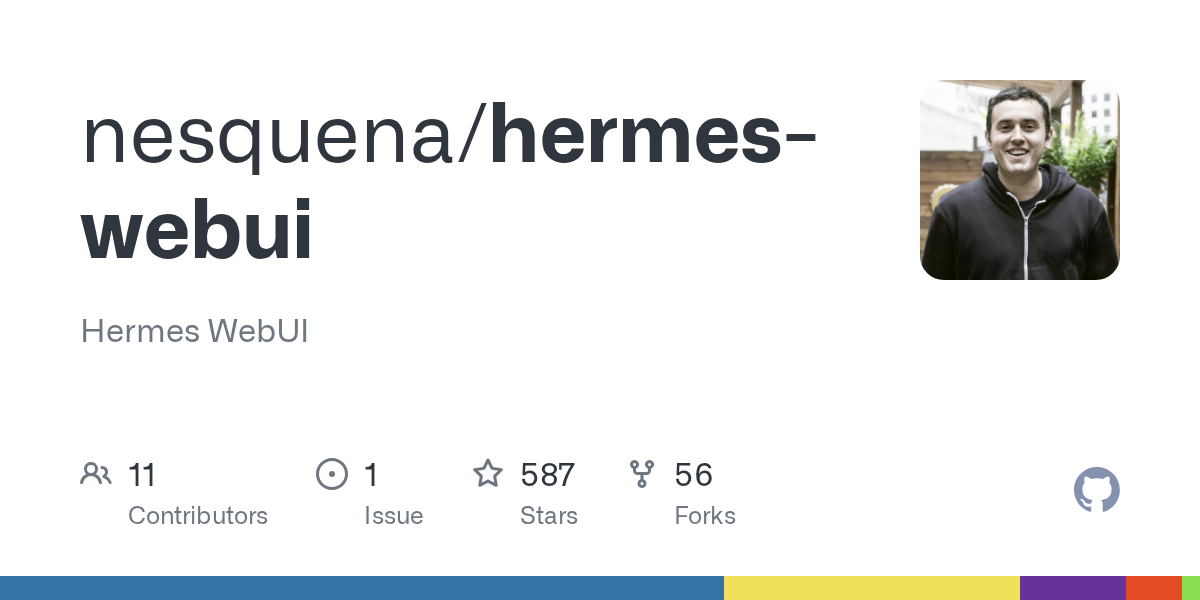

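How did this thing suddenly blow up again??? Honestly, I've only used OpenClaw a handful of times; it's garbage, slow and ugly. I've been using Hermes ever since it came out: the team has taste and it's more powerful. In case you didn't know, there's also a Web UI you can use. https://t.co/SMso6w4h53 https://t.co/1ByVDBCsJZ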

Surprised I still haven't seen anyone in the Chinese-speaking community talking about Hermes Agent: https://t.co/gJcwmFrqJ6 https://t.co/urwIzjznvA

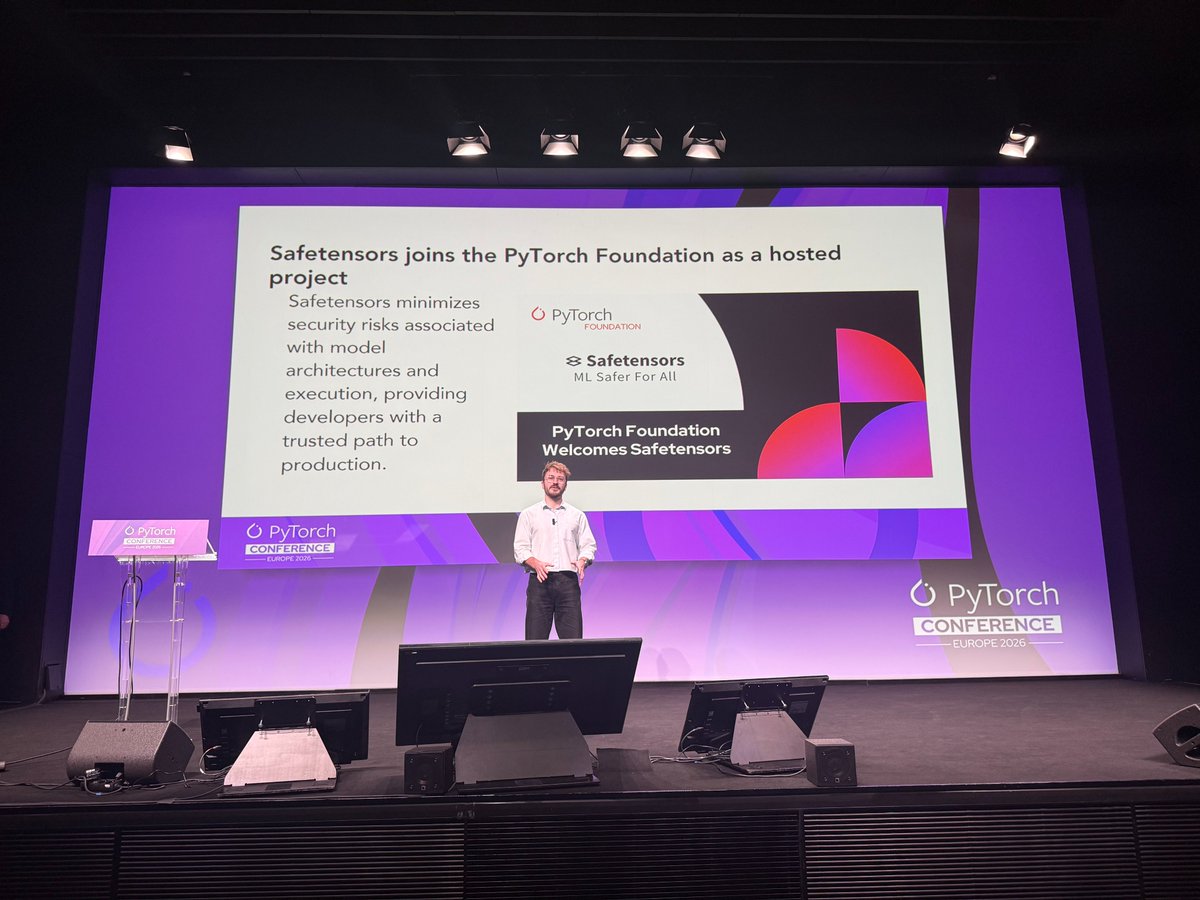

Lysandre Debut, Chief Open-Source Officer at @huggingface, speaks about The Hub as Infrastructure: From Open PyTorch Models, to a Safe and Performant Distribution Hub at #PyTorchCon Europe https://t.co/iAAkwHg0WG

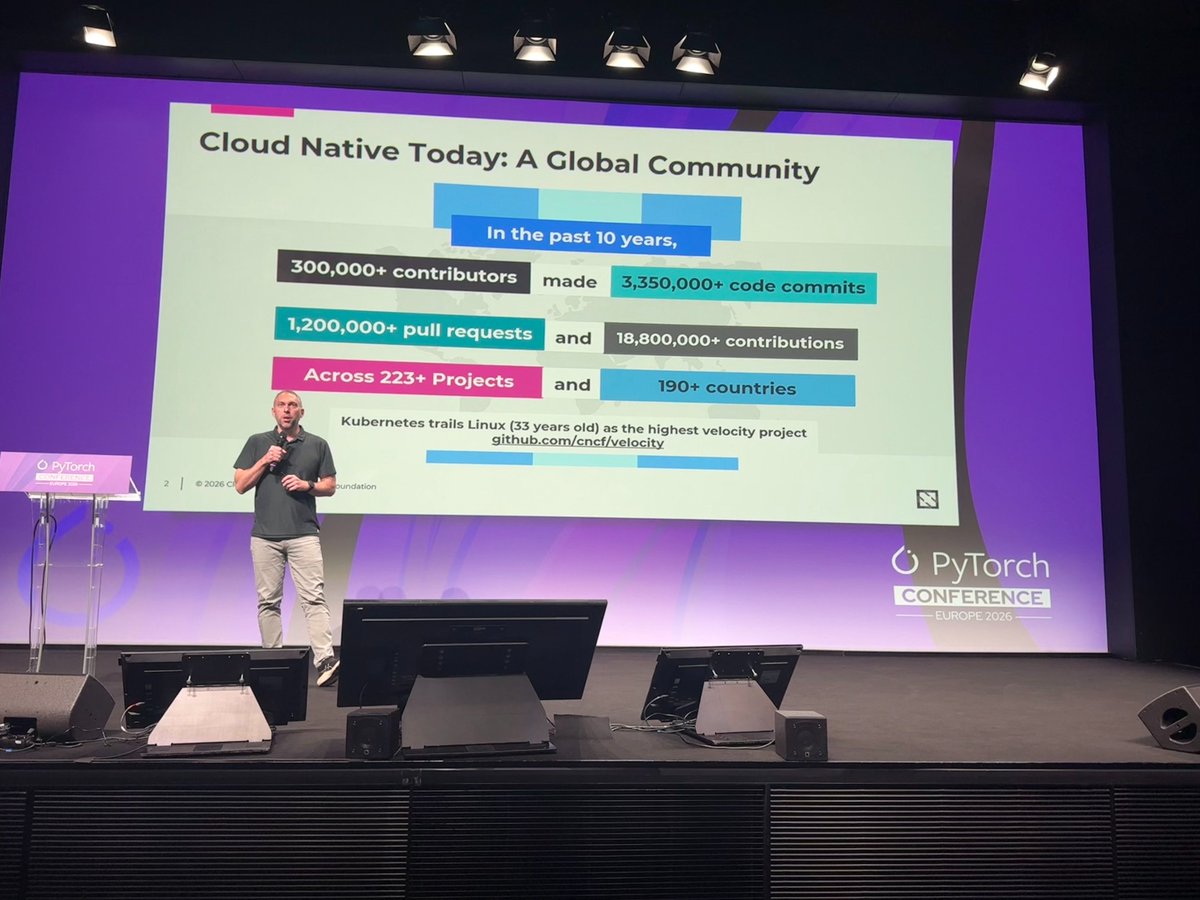

As AI moves from frontier labs into mainstream production, the operational challenges start to look increasingly cloud native: orchestration, autoscaling, routing, security, policy, and observability. @jbryce, Executive Director, Cloud and Infrastructure, @linuxfoundation @CloudNativeFdn explores why the next phase of AI adoption will move faster if PyTorch and cloud native communities work together to extend proven open source patterns during #PyTorchCon EU keynote

I met with the founders of https://t.co/VDKFa8U1Su today. Building an AI scientist. About to launch something new that is beating all the evaluations. Their AI is next level. Evolves faster than competition that got a lot more money. Has a better memory. And it is turning the humans who have it into superhumans in their corporations. It is helping scientists around the world discover new materials and come up with new drugs. I kept up because the one I am using to build https://t.co/8L5xphk0qQ is the same. Superior. And evolving the same. Built by a 21-year-old genius. Building with AI has made me a better interviewer. There are tiny startups out there that are beating the big labs. I am so hopeful for the future because of them.

At #PyTorchCon Europe, @tms_jr, Chief Architect - Inference Engineering at @RedHat, presents the latest updates on @vllm_project https://t.co/6ArfMfNI0Q

At #PyTorchCon EU, Artur Niederfahrenhorst, Member of Technical Staff at @anyscalecompute, presents the latest updates on @raydistributed https://t.co/ijxtiel9Qw

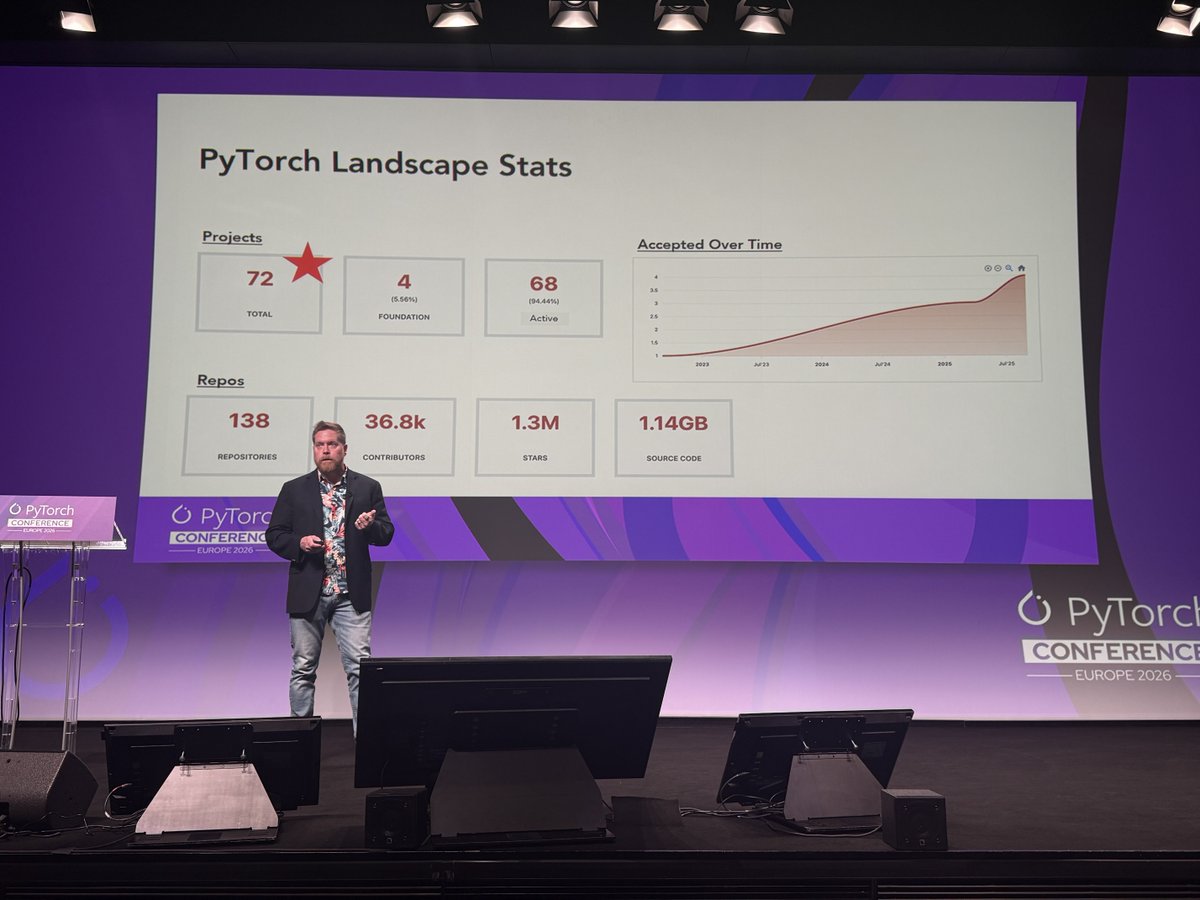

Kicking off our day at #PyTorchCon Europe 2026, @matthew_d_white, Global CTO of AI and CTO at PyTorch Foundation, provides an update on technical strategy, ecosystem, projects, and working groups. https://t.co/JTYgpIu5aY

PyTorch Foundation Announces Safetensors as Newest Contributed Project to Secure AI Model Execution 👏 As AI model development accelerates, security risks in the production pipeline inherently increase, necessitating secure, high-performance formats that can keep pace with deployment. Safetensors joining the Foundation minimizes security risks associated with model architectures and execution, providing developers with a trusted path to production. Lysandre Debut of @huggingface shares at #PyTorchCon Europe 2026 in Paris Read more: https://t.co/0YfHNoVmmX

Bienvenue! We're ready for Day 2 of PyTorch Conference Europe #PyTorchCon https://t.co/RFTWPlSmFm

Big update to #Monarch, our distributed programming framework for #PyTorch! Since its launch at the #PyTorchCon NA in October, the team has shipped Kubernetes support, RDMA on AWS EFA and AMD ROCm, distributed SQL-based telemetry, a terminal UI, and dashboards for live job inspection, an optimized build, and more. Monarch is designed to make programming a supercomputer feel like writing code on your laptop, and that simplicity extends to AI agents, too. With SQL-queryable distributed state, fast code syncing via RDMA filesystems, and a single Python program controlling the full cluster, agents can (re) launch jobs, debug, and iterate on changes easier than ever. Check out the blog for the full rundown. Today at PyTorch Conference Europe: Lightning Talk: Monarch: An API To Your Supercomputer - Marius Eriksen, Meta: 10:35 - 10:45 CEST 🔗 Latest blog from the PyTorch Team at @Meta: https://t.co/vS8ixVzxfc #OpenSourceAI

AI is becoming central to modern defense strategy. Arthur Mensch argues that without AI, military systems risk becoming obsolete, underscoring how critical the technology is for security and sovereignty. The implication is clear. Control over AI is no longer just economic, it is strategic. https://t.co/Vdrpgwmspn @arthurmensch @MistralAI @laurenscerulus @POLITICOEurope

Excited to share our latest work AvatarPointillist! 🚀 We propose an AutoRegressive framework for 4D Gaussian Avatarization, enabling high-quality, animatable digital humans from sparse data. #CVPR project page: https://t.co/rnxj0Dj5u8 code: https://t.co/3gjI5EOk44 https://t.co/XCxa6zrR5H

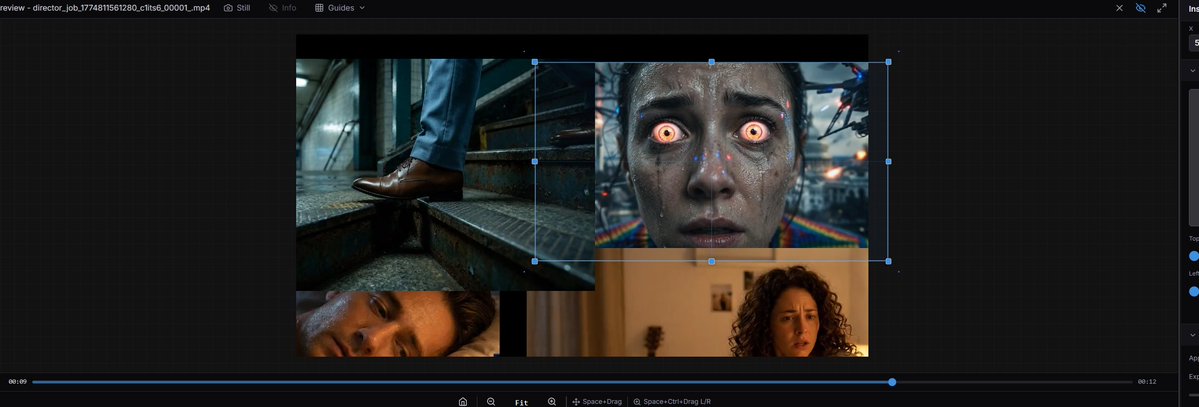

Just shipped ComfyStudio v0.1.4. @ComfyUI @yoland_yan A lot of this release went into making the editor feel better in real day-to-day use. I added more precise clip editing, stronger sequence management in Assets, customizable hotkeys and keymap presets, better autosave and recovery, and a long list of timeline improvements around selection, splitting, linking, ripple cleanup, text, and preview. I’m also releasing the first beta of a new MoGraph workspace inside ComfyStudio. It’s still super early, but I wanted to start getting it into people’s hands while I keep improving the editing core. If you want to try it, the desktop release is live now. There’s also an optional workflow starter pack for people who want to inspect and prepare workflows directly in ComfyUI. https://t.co/qMg1w5yhSt With the editing foundation now in a much better place, the next phase for ComfyStudio is going much harder on stronger ComfyUI integration, more intuitive and user-friendly AI workflows, and an overall experience that feels closer to a professional editor while still being built for generative video creation.

@ZechenZhang5 You might like this report I did on Hermes: https://t.co/qeOiuNkiG0

Soo.... basically https://t.co/dR1adPT2ON

Google CEO Sundar Pichai on Elon Musk: "Elon Musk's ability to will future technologies into existence is 'unparalleled'" https://t.co/7xN5slGakr

Experienced workers are turning to AI out of necessity, not curiosity. Many with years of expertise are struggling to find jobs, and see AI training as a way to stay relevant in a changing market. It is less about opportunity and more about survival. AI is not just reshaping careers at the top. It is redefining security across the workforce. https://t.co/lbAuL9EkQg

History will be divided into two eras: Before Starship and After. We are about to witness a 100x increase in civilizational power. https://t.co/476i4QS5uB

The headline literally said: Sell $TSLA. Check the date. 8 years ago this month. Why? “The competition is coming.” 👀 https://t.co/ZmrEaL48Wk

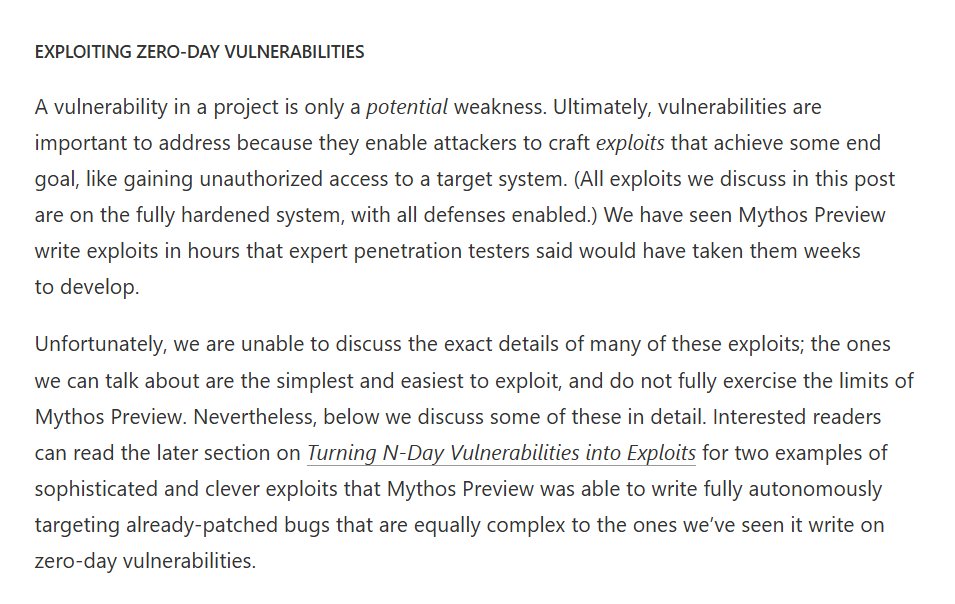

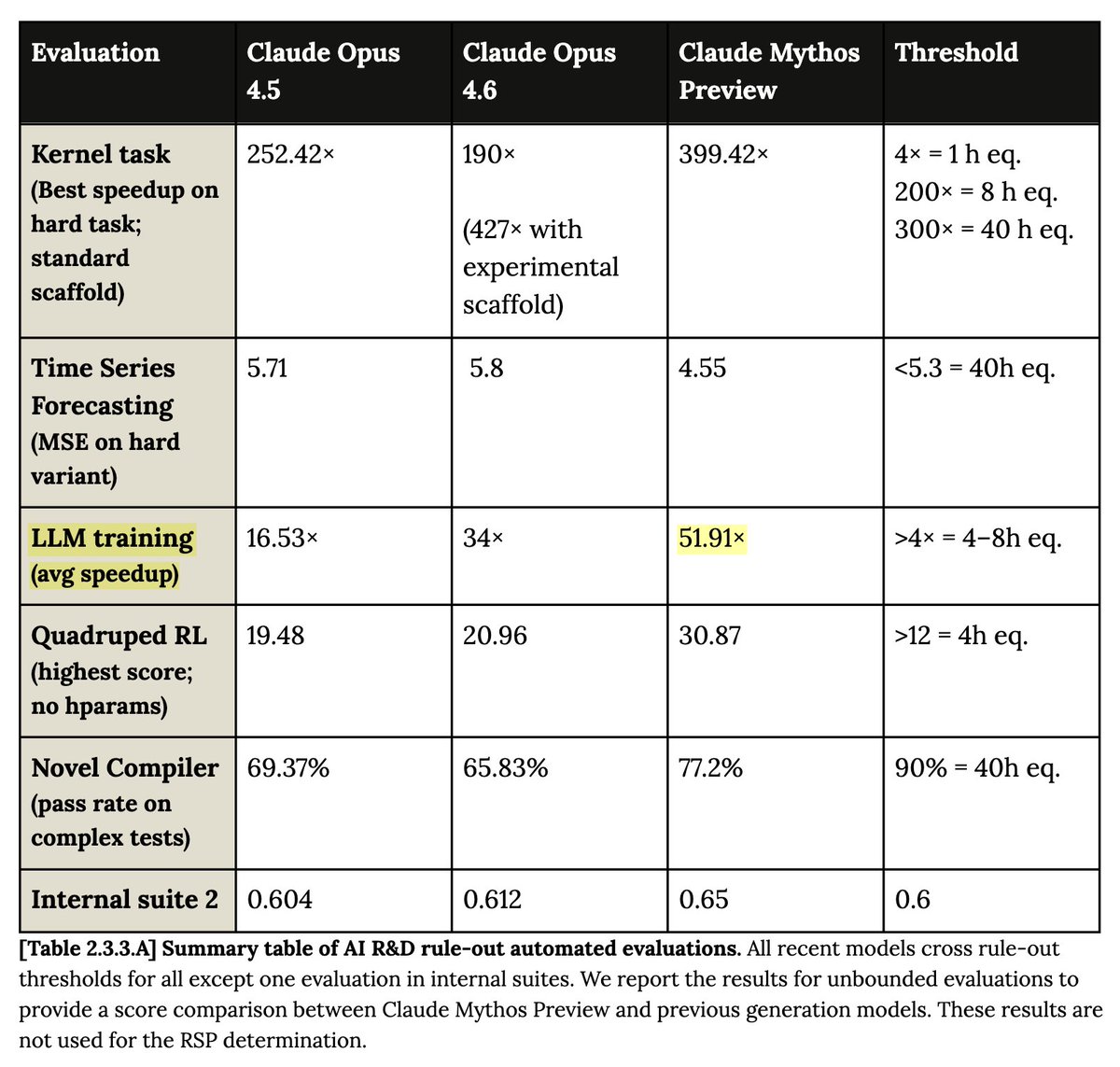

In different hands, Mythos would be an unprecedented cyberweapon. I am not sure how we deal with this, except to note a narrow window where we know only 3 companies could be at this level of capability. But it may be that Chinese models (maybe open-weight ones?) get there in 9 months. https://t.co/I7vrMDDyug

The Kardashev Scale is one of the coolest ways to think about how advanced a civilization really is. Here is the cool visualization from https://t.co/yZ4k5GuhEb The Kardashev Scale: - Type 1: Harness all the energy on a planet - Type 2: Harness all the energy from a star - Type 3: Harness all the energy in a galaxy. The Kardashev Scale is not just sci-fi. It shows us the long-term destiny of intelligent life…if we don’t destroy ourselves first.

I think the issue with Anthropic's report is that if you reflect on this for a second, you realize their baselines must be dumpster fire. "avg LLM training speedup" of 52x? What does this mean? If it's MFU, you *have* to start at <1%. Just Autoresearch overfit? https://t.co/CRsZlXJn33
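
The arithmetic behind the "<1%" remark in the post above can be sketched as follows. If the 52x figure is read as a ratio of MFU (model FLOPs utilization), the starting MFU can be at most the final MFU divided by 52. The helper name and the 50% ceiling used in the second call are illustrative assumptions, not figures from the report.

```python
def max_baseline_mfu(speedup, final_mfu_ceiling=1.0):
    """Upper bound on the starting MFU if `speedup` is a pure MFU ratio.

    final_mfu_ceiling is the highest MFU the optimized run could have reached
    (1.0 is the hard limit; real training runs sit well below it).
    """
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    return final_mfu_ceiling / speedup


if __name__ == "__main__":
    # Hard limit: even a perfect 100% final MFU implies a baseline under 2%.
    print(f"{max_baseline_mfu(52):.2%}")
    # Assumed 50% ceiling (hypothetical): the baseline falls below 1%.
    print(f"{max_baseline_mfu(52, final_mfu_ceiling=0.5):.2%}")
```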

ALS is a disease that takes everything. Our goal is to give something back. Kenneth, who has been losing his ability to move and speak due to ALS, is exploring how Neuralink’s brain-computer interface technology can help translate neural signals into life-changing impact. https://t.co/FAe9ufYcOu