@teortaxesTex

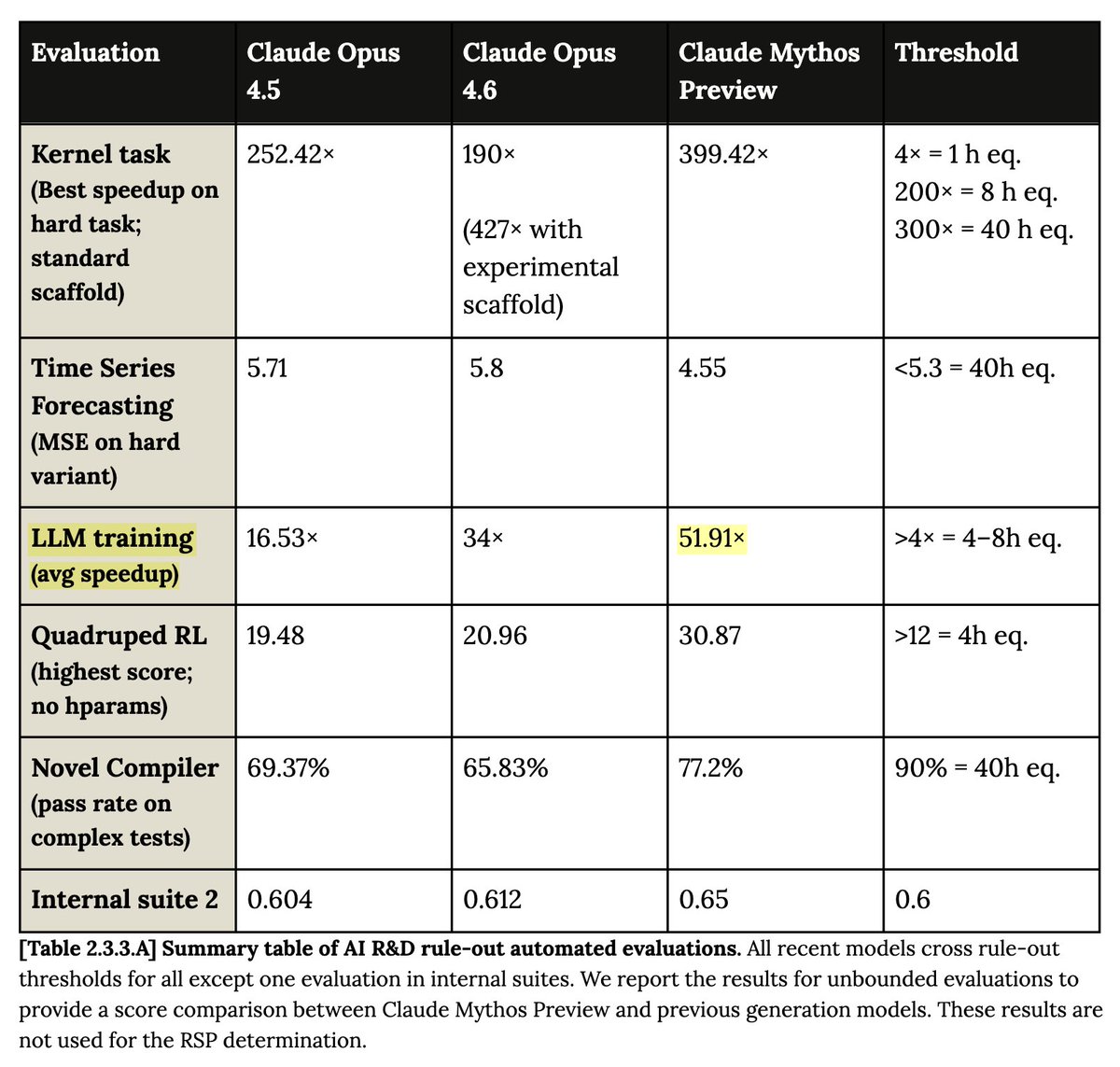

I think the issue with Anthropic's report is that if you reflect on this for a second, you realize their baselines must be a dumpster fire. "avg LLM training speedup" of 52x? What does this mean? If it's MFU, you *have* to start at <1%. Or is it just Autoresearch overfitting? https://t.co/CRsZlXJn33
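To spell out the MFU arithmetic, here is a quick sketch. It assumes the "52x" is a pure ratio of final MFU to baseline MFU, which is my reading and not something the report confirms. The final-MFU values (100%, 50%, 40%) are illustrative guesses, and `max_baseline_mfu` is a made-up helper name.

```python
def max_baseline_mfu(speedup: float, final_mfu: float) -> float:
    """Upper bound on baseline MFU, assuming speedup == final_mfu / baseline_mfu.

    MFU (model FLOPs utilization) is a fraction in (0, 1], so the final value
    caps how high the baseline could have been.
    """
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    if not 0 < final_mfu <= 1:
        raise ValueError("final_mfu must be a fraction in (0, 1]")
    return final_mfu / speedup


if __name__ == "__main__":
    SPEEDUP = 52  # the figure quoted above
    # Hypothetical final MFU values: the physical ceiling and two guesses
    # at what a well-tuned run might reach.
    for final in (1.0, 0.5, 0.4):
        bound = max_baseline_mfu(SPEEDUP, final)
        print(f"final MFU {final:.0%} -> baseline MFU <= {bound:.2%}")
```

Even at the physical ceiling of 100% final MFU the baseline can be no higher than about 1.9%, and with a final MFU of 50% or less it drops below 1%.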