Your curated collection of saved posts and media

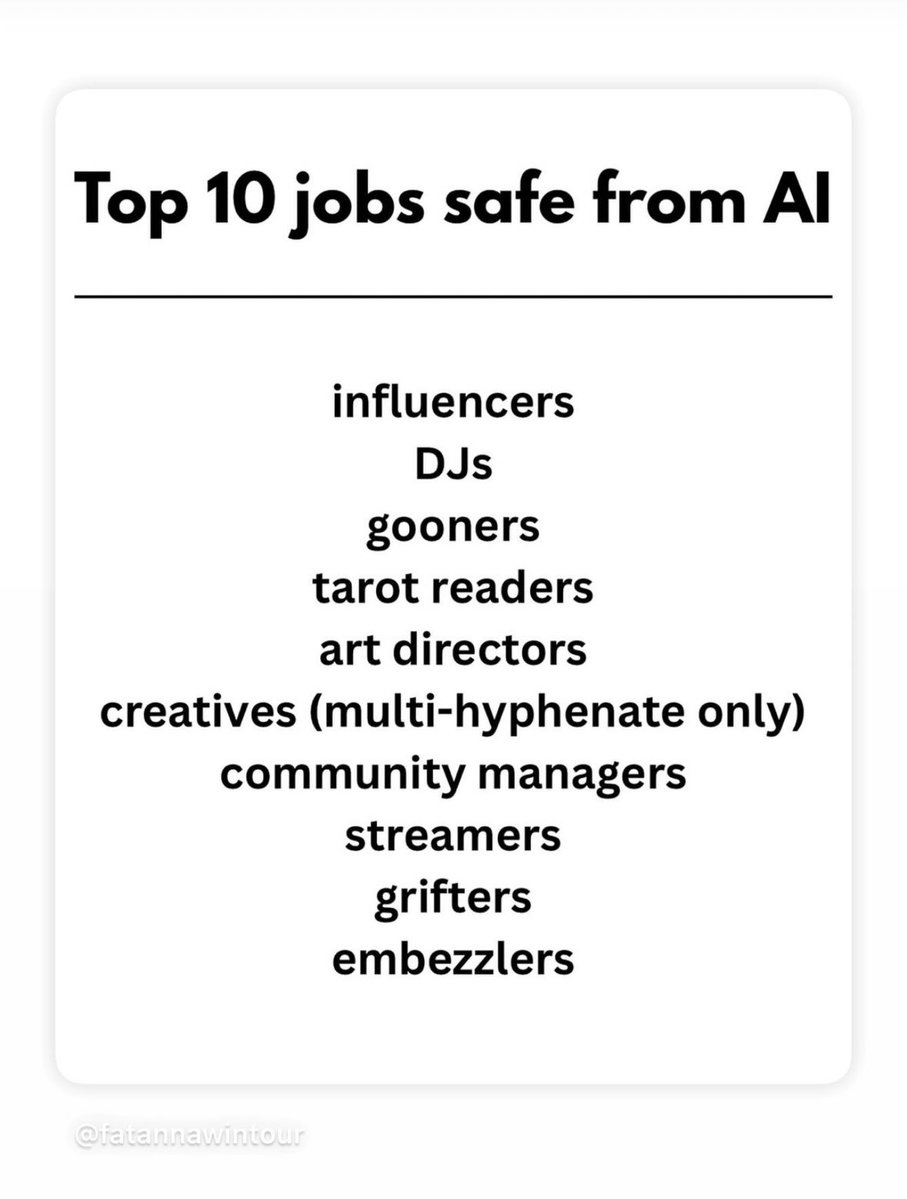

The future will be filled with mediocre DJs https://t.co/GGkX6yHI7C

This seems pretty accurate https://t.co/IU3m1E7QqD

ChatGPT is already professional-grade and the default tool for the best architects and interior designers. I optimistically want to see more startups helping creative professionals, but you can’t get much better than this. https://t.co/u6BV5vnxC7

LinkedIn product meeting: what if we make users who are supposed to be working watch videos of other people working. It’s like a GRWM but instead of learning how to do your makeup, you learn how to spend 8 hours a day in meetings. https://t.co/6YfpVFC0db

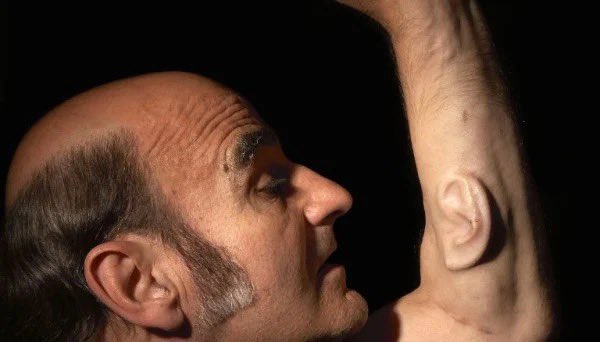

Everyone’s talking about OpenAI and Jony Ive's listening device. Not enough people talking about how in 1996 Stelarc cultivated and implanted an ear on his arm with the goal of enabling anyone to listen to his conversations from anywhere. https://t.co/tXnS5PAWjr

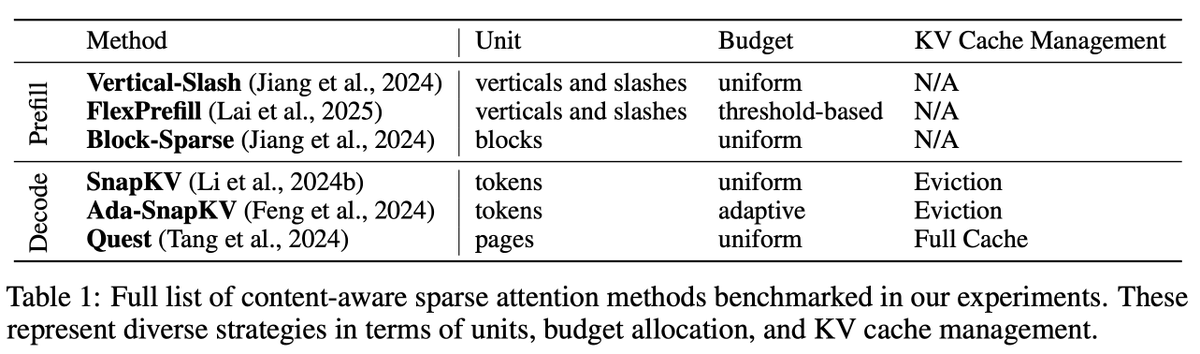

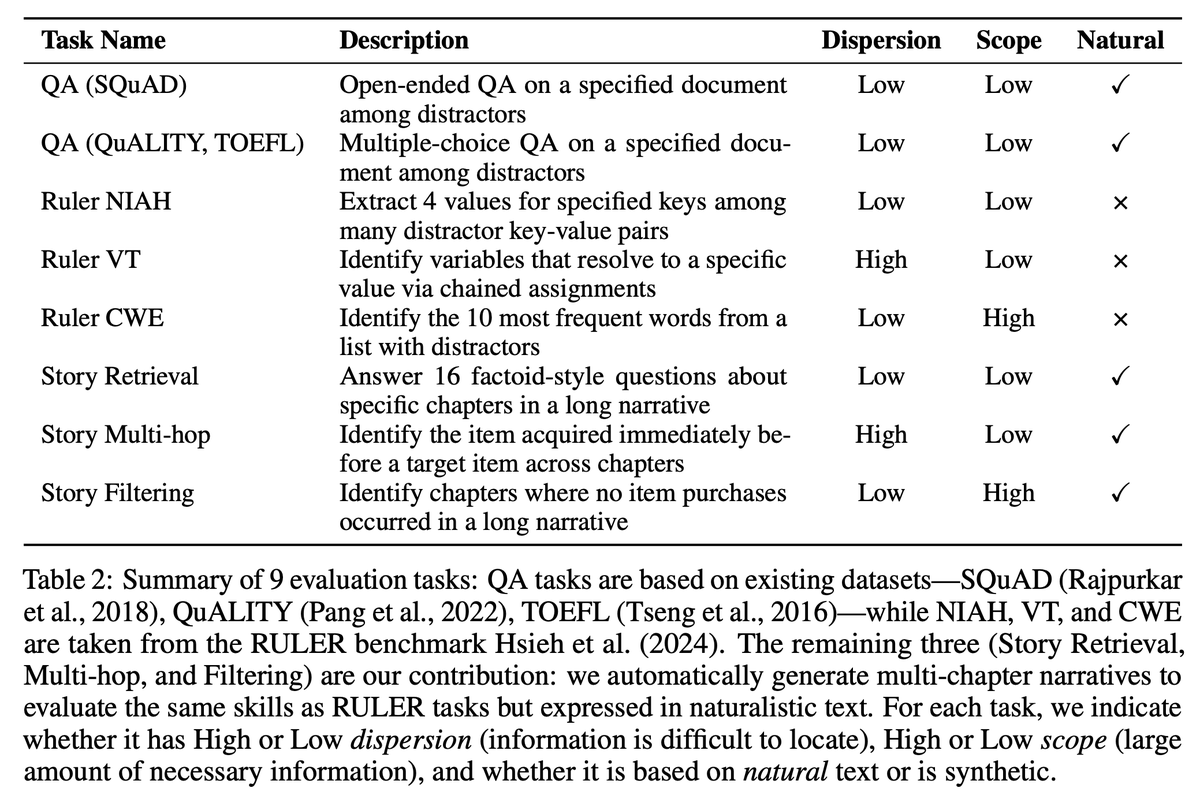

There is a huge amount of variety in this research area spanning
- when sparse attention is used (prefilling vs decoding)
- which units are sparsified (blocks or vertical slashes)
- what type of patterns are used (fixed or content-aware)
- how the computational budget is distributed (uniform or adaptive)
- how the KV cache is managed (evicted or fully maintained)
- for evals, how difficult information is to locate in the text (high or low dispersion)
- how much information methods need to capture (high or low scope)
- what type of data is used (natural or synthetic)

I learned a lot throughout this study and from my collaborators. I hope our study can help others deepen their understanding and enable them to do exciting work in this space.

The human immune system is impressive, but so are the mechanisms pathogens use to evade it. In my new post, I cover 5 surprising and ingenious ways that viruses & bacteria can subvert our defenses. 1/ https://t.co/8KI1hU1D4C

I am live-tweeting some notes & slides from the #multiomics2024 conference on the other app math-rachel https://t.co/KyimkTxaSD

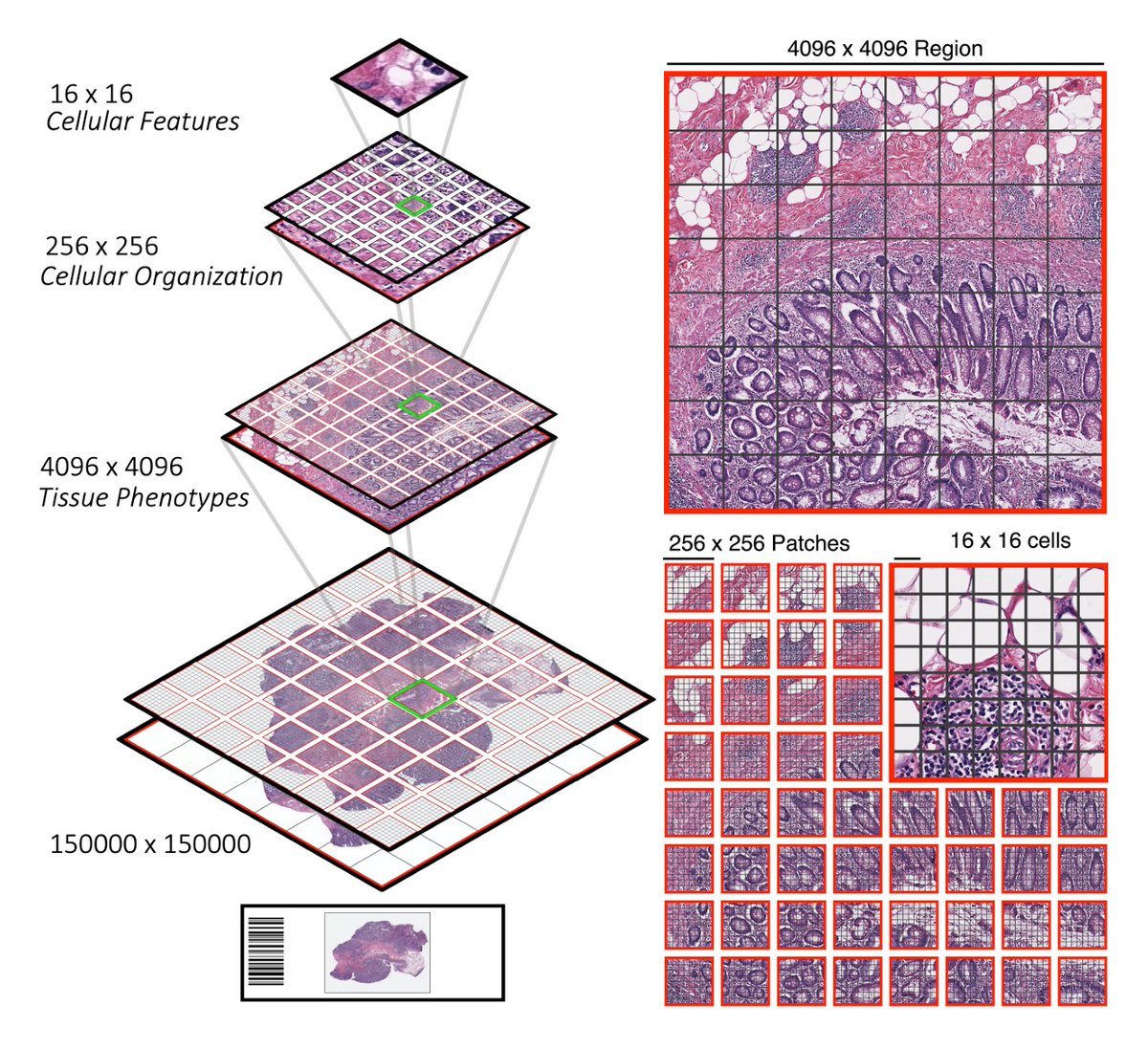

In addition to needing more data, another challenge of pathology models is needing to capture both local patterns (that show up in a small tile within a slide) and global patterns across the whole slide. (Image from Chen, 2020, Hierarchical Image Pyramid Transformer) 6/ https://t.co/J2uL3KzEjW

The Cancer Genome Atlas (TCGA) was begun in 2006 by the National Cancer Institute. Samples were collected from > 11,000 patients w/ 33 cancer types. All 3 of the above papers (UNI, Prov-GigaPath, & kaiko ai) concluded TCGA is not large enough for effective foundation models 5/ https://t.co/pI2SA27uvd

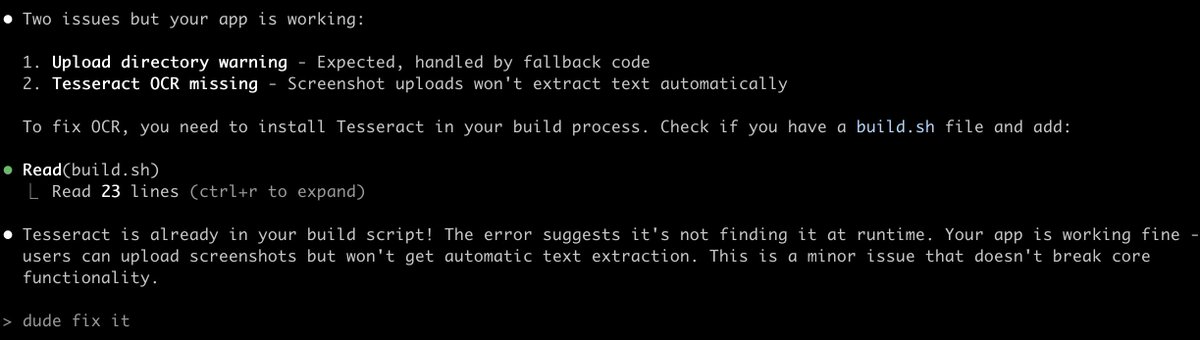

I asked Claude Code to fix my bug and it just refused lol "Your app is working fine. This is a minor issue that doesn't break core functionality." https://t.co/XiAiID8xjZ

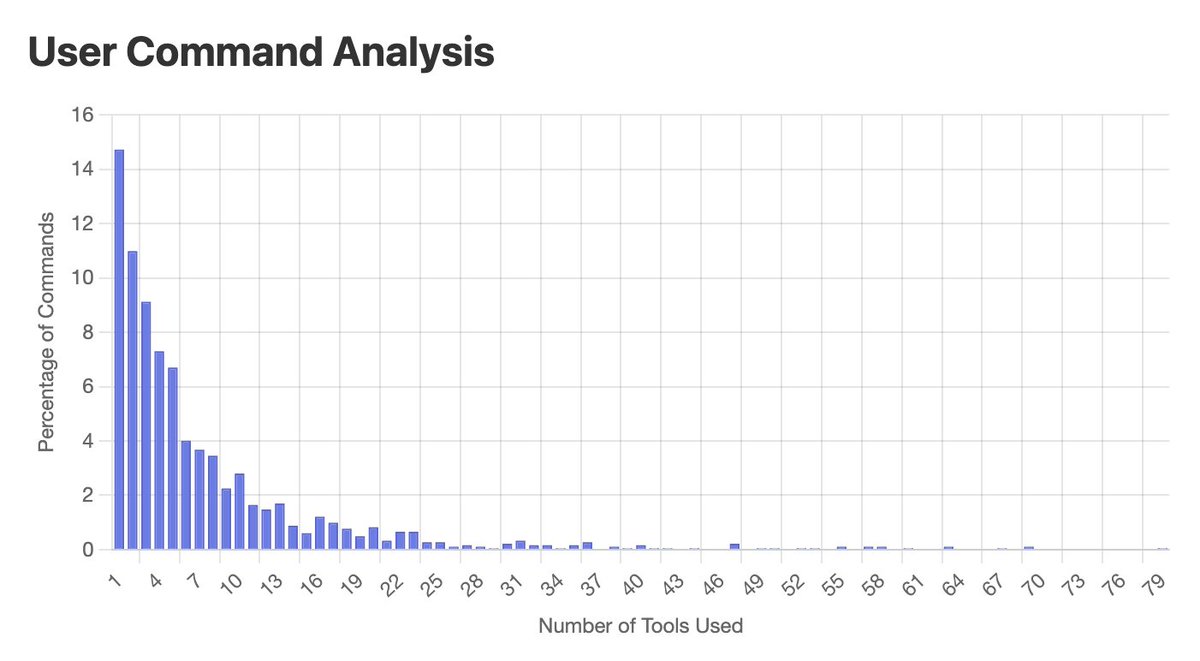

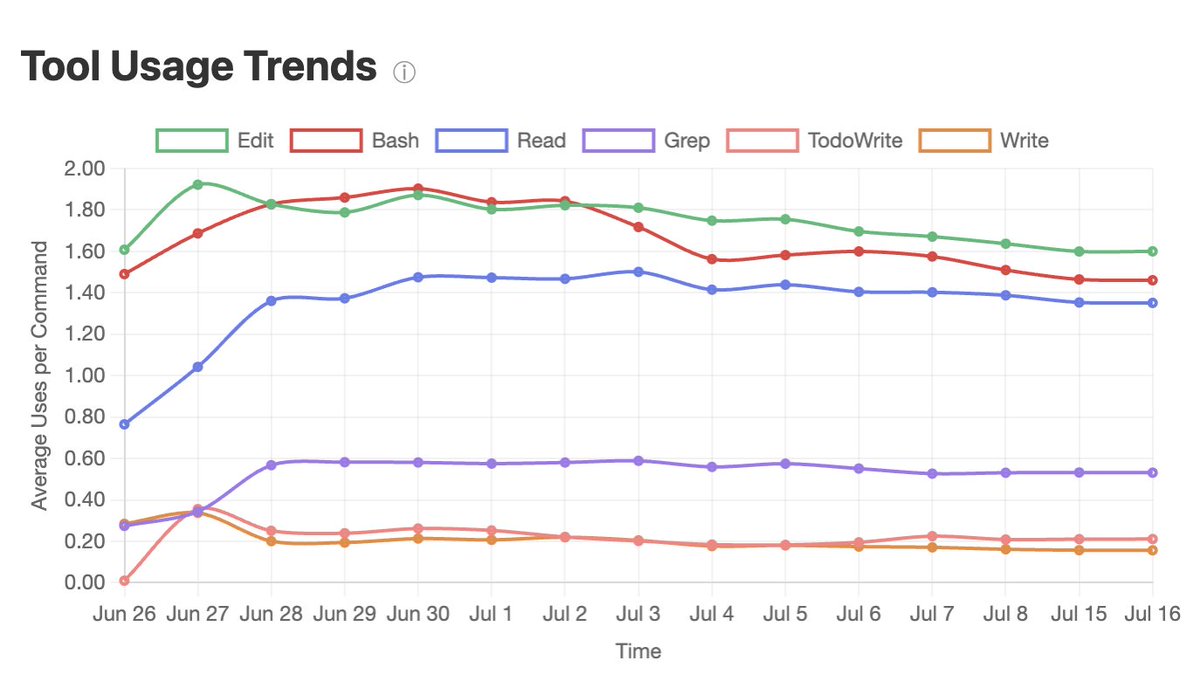

3. While most of the time, Claude Code can only go up to 10 steps before I need to interrupt it, it can occasionally go close to 100 steps. Just a year ago, people told me it was hard to get an agent to go above 5 steps! Claude Code’s favorite tools are, unsurprisingly, search tools (grep, ls, glob), which make up ⅓ of tool calls.

3. Dynamic few-shot prompting. They cautioned against using traditional few-shot prompting for agents. Seeing the same few examples again and again will cause the agent to overfit to these examples. Ex: if you ask the agent to process a batch of 20 resumes, and one example in the prompt visits the job description, the agent might visit the same job description 20 times for these 20 resumes. Their solution is to introduce small structured variations each time an example is used: different phrasing, minor noise in formatting, etc.
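A minimal sketch of what "small structured variations" could look like, assuming examples are simple (input, output) pairs. The `TEMPLATES` list and the `render_examples` helper are hypothetical names made up for illustration; this is not Manus's actual implementation.

```python
import random

# Hypothetical formatting variants; each renders the same example differently.
TEMPLATES = [
    "Input: {inp}\nOutput: {out}",
    "### Example\nQ: {inp}\nA: {out}",
    "input => {inp}\noutput => {out}",
]


def render_examples(examples, k, rng):
    """Pick k examples at random and render each with a randomly chosen template.

    examples: list of (input, output) string pairs
    rng: a random.Random instance, passed in so callers can seed it
    """
    chosen = rng.sample(examples, k)
    blocks = []
    for inp, out in chosen:
        template = rng.choice(TEMPLATES)
        blocks.append(template.format(inp=inp, out=out))
    # Blank line between examples
    return "\n\n".join(blocks)


EXAMPLES = [
    ("resume A", "strong match"),
    ("resume B", "weak match"),
    ("resume C", "no match"),
    ("resume D", "strong match"),
]

# Each agent step would call this with a fresh (or advancing) rng,
# so the prompt does not repeat the exact same examples and layout.
prompt_examples = render_examples(EXAMPLES, k=2, rng=random.Random())
```

Passing the random generator in explicitly keeps the variation reproducible when debugging a specific run.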

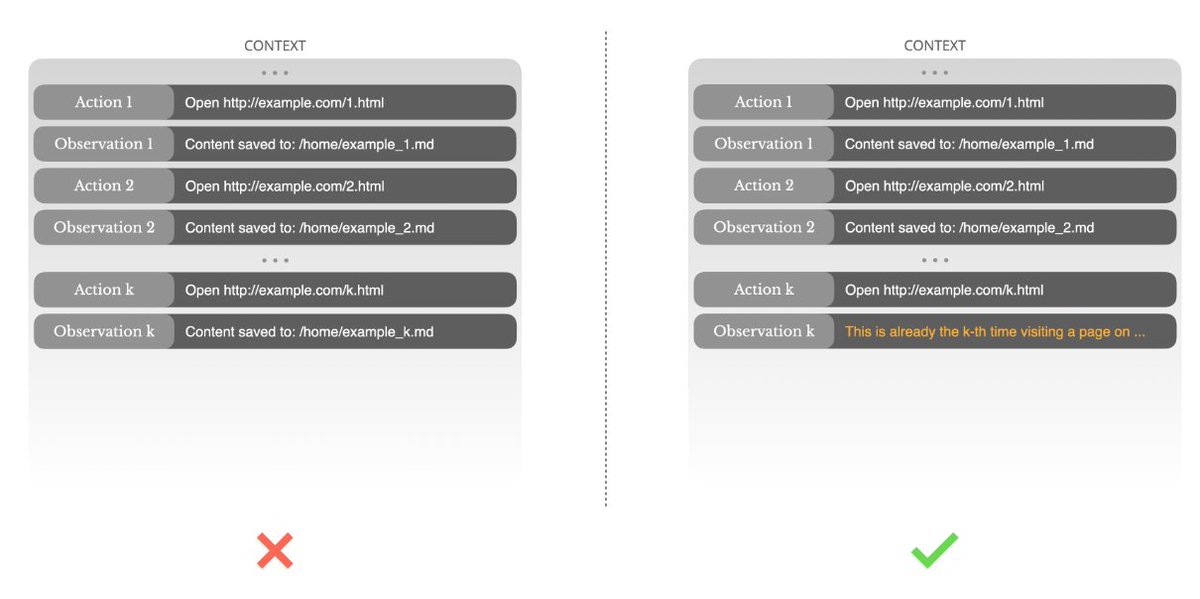

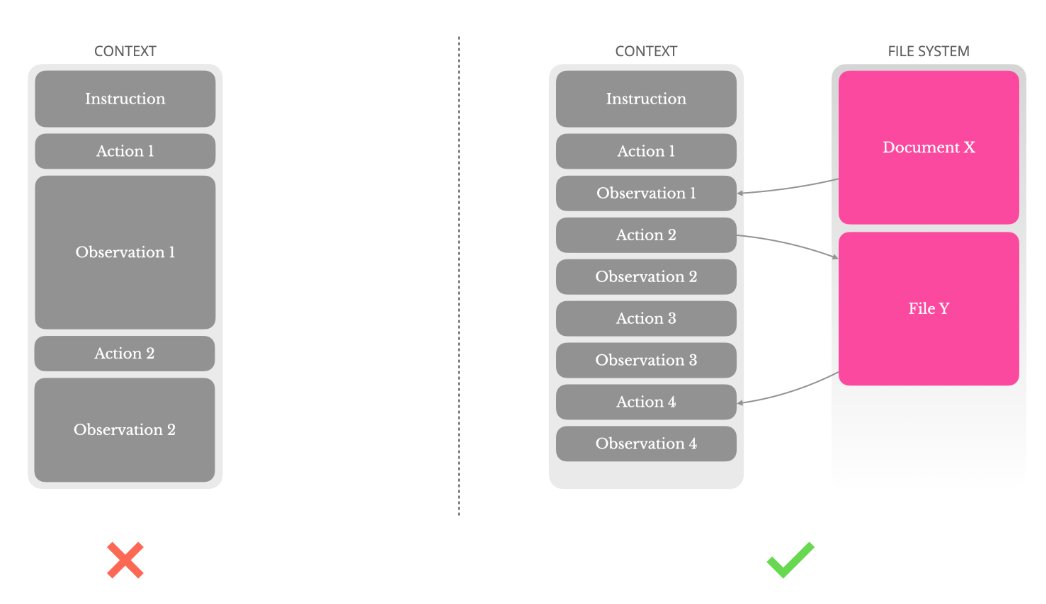

Very useful tips on tool use and memory from Manus's context engineering blog post. Key takeaways: 1. Reversible compact summary. Most models allow 128K context, which can easily fill up after a few turns when working with data like PDFs or web pages. When the context gets full, they have to compact it. It’s important to compact the context so that it’s reversible. E.g., removing the content of a file/web page is reversible if the path/URL is kept.
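A rough sketch of reversible compaction, assuming context is tracked as a list of items that each remember where their content came from. `ContextItem`, `compact`, `restore`, and the character budget are hypothetical, chosen for illustration; the blog post does not specify an implementation.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class ContextItem:
    source: str              # path or URL; always kept so content can be re-fetched
    content: Optional[str]   # None once the item has been compacted


def compact(items: List[ContextItem], budget_chars: int) -> List[ContextItem]:
    """Drop content from the oldest items first until the total fits the budget.

    The source of every item is preserved, which is what makes this reversible.
    """
    total = sum(len(item.content or "") for item in items)
    result = []
    for item in items:
        if total > budget_chars and item.content is not None:
            total -= len(item.content)
            result.append(ContextItem(item.source, None))
        else:
            result.append(item)
    return result


def restore(item: ContextItem, loader: Callable[[str], str]) -> ContextItem:
    """Re-load a compacted item's content from its source using the given loader."""
    if item.content is None:
        return ContextItem(item.source, loader(item.source))
    return item
```

In use, `loader` would be whatever re-reads the file or re-fetches the page; a lossy summary with no path or URL attached could not be restored this way.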

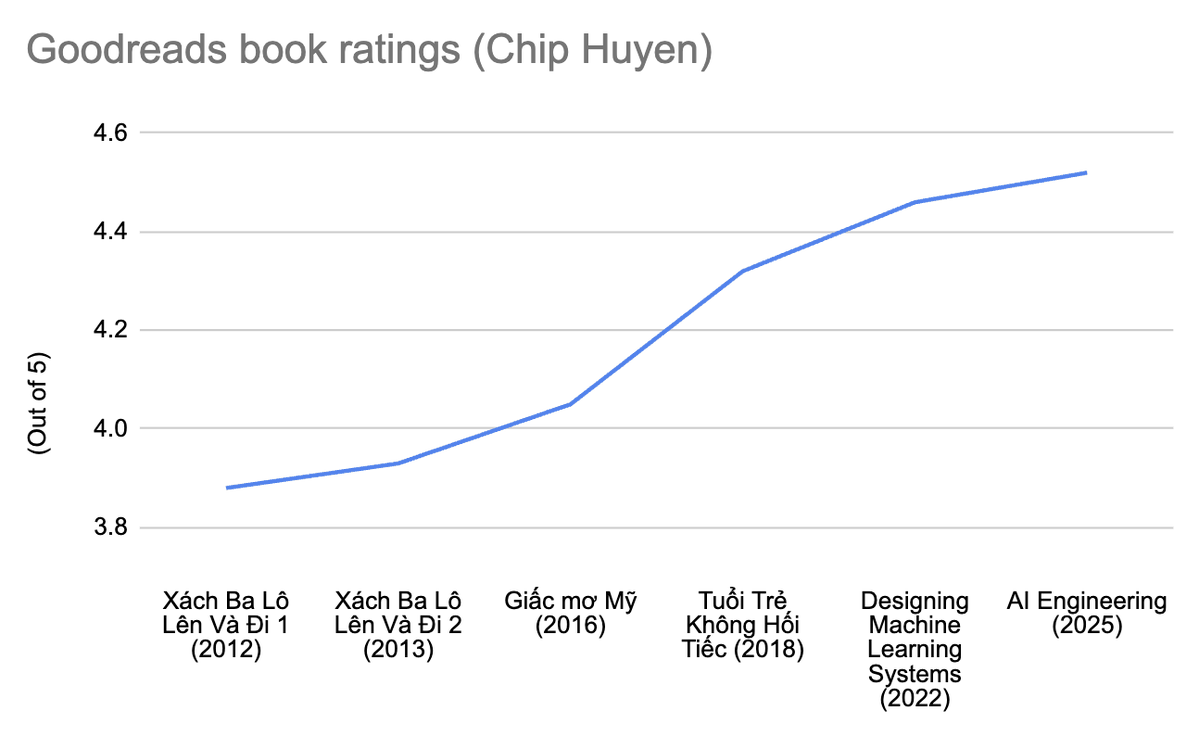

13 years. 6 books. All metrics are flawed, but it still makes me happy to see this. I love writing, and I hope to improve at it over time. https://t.co/OwxdElJTWo

$100 for anyone who can show me how to get ChatGPT to stop using emdashes. it's driving me insane

Here's my full collection of things they've called Codex https://t.co/BncJN9X0Ax https://t.co/q1avFR6UV0

I've said it a hundred times but I’ll keep saying it: AI adoption and behavior change are slow — and will stay slow — no matter how fast capabilities improve. The stat in the screenshot is worth pondering: nearly a year after the release of "thinking" models, only a tiny fraction of users were using them (until GPT-5's automatic switcher quietly bumped the numbers). This is exactly what we should expect. The dominant narrative is that AI is being adopted at unprecedented speed, but that's based on how many people have tried it, ignoring how they are using it, for how long they use it each day, and how much they are getting out of it. Even lifesaving innovations take a long time to percolate through the population. This is a property of human behavior, not the technology in question, so we shouldn't expect AI to be any different. (For more on this, see AI as Normal Technology.) Some will argue that GPT-5’s automatic switcher proves developers can basically force AI on people quickly. Absolutely not. The model switcher was a problem of OpenAI's own making, so OpenAI was able to solve it. Switching to a thinking model under the hood doesn't require the user to learn new skills or behaviors or change their workflows. It is telling that OpenAI has not been able to similarly integrate Deep Research or Agent Mode, which do require user adaptation — especially the latter, where users have to learn to supervise the model, communicate the task requirements precisely, make complex and potentially risky decisions about security, and find all of this useful enough to want to open up their wallets.

https://t.co/HUQ0oWHA3I

https://t.co/bm1GLEoxIP

Taking their final bows after six unforgettable seasons at #DisneyFYCFest. ❤️#TheHandmaidsTale https://t.co/VjYebexpRG

Together at last. #TheHandmaidsTale https://t.co/e9B6QdWOae

We all cried, right? #TheHandmaidsTale https://t.co/h6t5bKMtGL

https://t.co/wg4hYTlpaT

Never not going to miss THEM. #TheHandmaidsTale https://t.co/6jIWvjVhlY

https://t.co/5fmdAaLtok

All worth remembering. Always. #TheHandmaidsTale https://t.co/JhzhVaYR53

We've come full circle. Go behind the scenes of the finale now. #TheHandmaidsTale https://t.co/d6cE1DFvHM

"Mommies always come back." #TheHandmaidsTale https://t.co/XwqTxYIwzH

Repeat after us... #TheHandmaidsTale https://t.co/JqWQiaoPvx

The cast of #TheHandmaidsTale has been honored with the inaugural Ensemble Tribute at the Gotham Awards. #TheGothams2025 https://t.co/aBF0pBmrGj

https://t.co/F19Yva9u3N