Grantees get a direct line to the Modular team via monthly check-ins and a shared Slack channel. ➡️ Apply or learn more: https://t.co/g3OWE6mePC

We created the open source version of Claude Managed Agents. Introducing Multica https://t.co/mLCPJe965E https://t.co/EyLwrfmgOj

Introducing Claude Managed Agents: everything you need to build and deploy agents at scale. It pairs an agent harness tuned for performance with production infrastructure, so you can go from prototype to launch in days. Now in public beta on the Claude Platform. https://t.co/vH

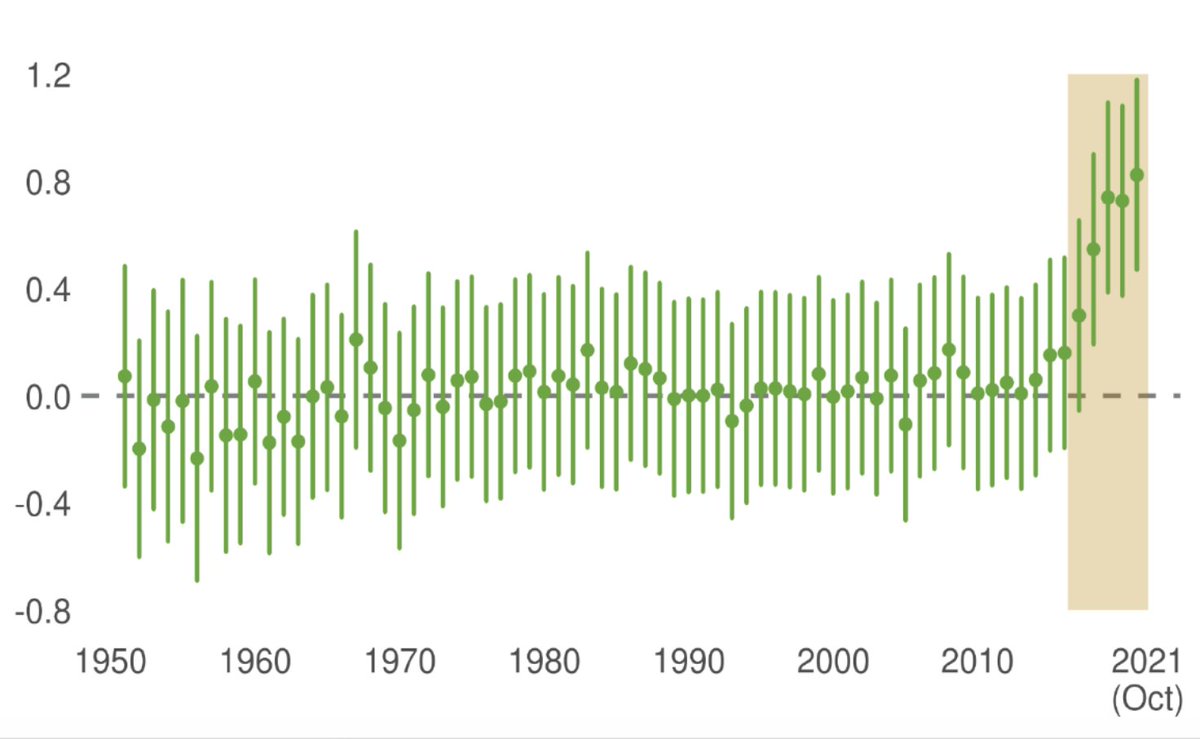

the current fear is that AI homogenizes culture and turns humans into passive consumers. one counterpoint: in Go, human play showed very little improvement from 1950 to 2016 until alphago beat lee sedol - then human decision quality jumped. players started developing moves that were distinct both from previous human moves and from the novel moves introduced by machine intelligence. this seems more likely to me - fun times ahead

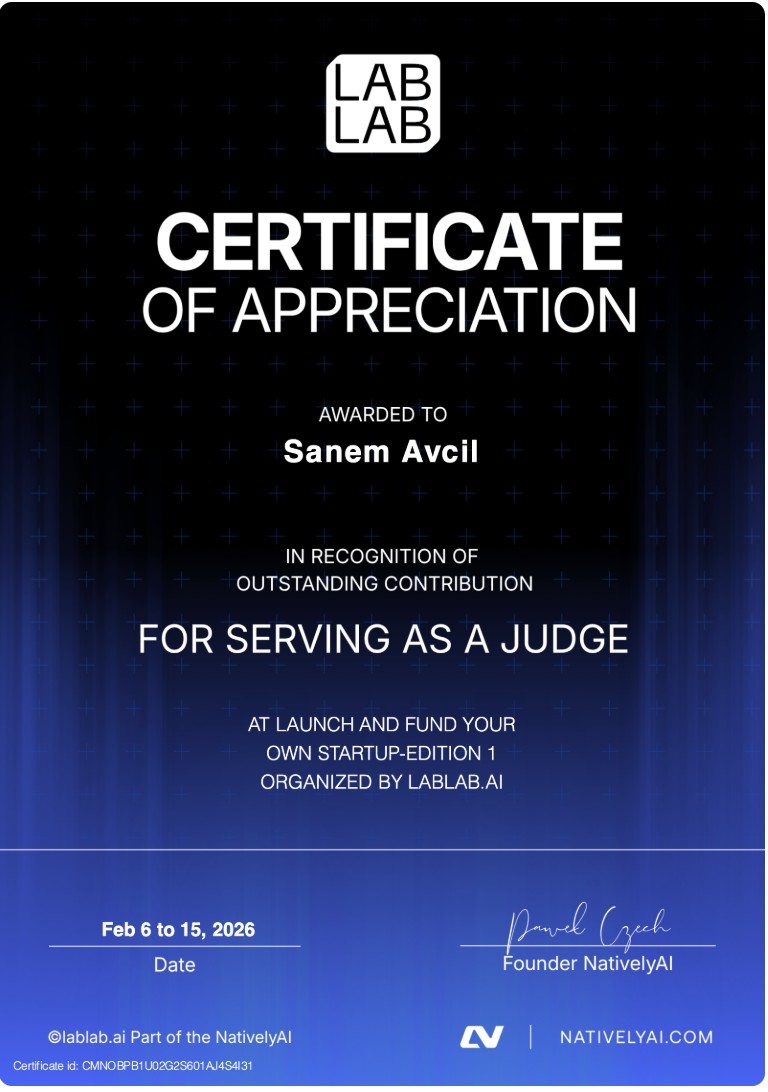

Recognized as a Judge in an international AI startup hackathon, evaluating innovative AI-driven projects and startup ideas. 🚀 Contributed to identifying high-potential teams, focusing on execution, scalability, and real-world impact. @lablabai https://t.co/bucVyjbqX1 #robotics #startup #investor

Finally got to unbox my Anthropic Claude Code keyboard! 😅 one shotted by the new Glif agent (it used gemini + veo 3.1 lite + found an unhinged elevenlabs ASMR voice and added fitting music) https://t.co/Hxc0sdLZWs

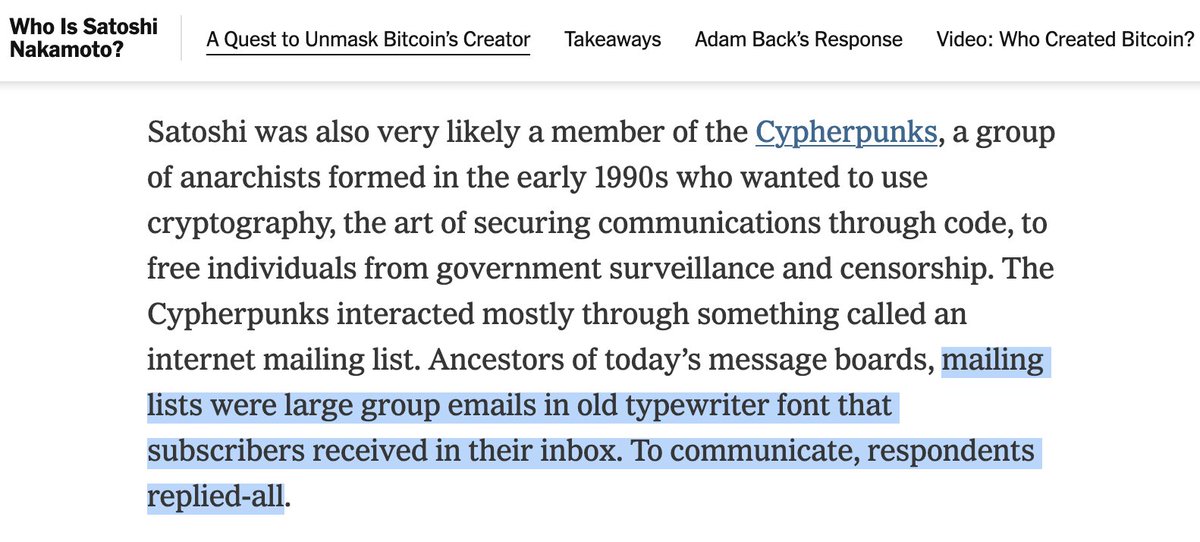

This bit isn't exactly wrong, but is misguided - the idea that someone studying cryptography would use the same foundational technique (PKE) and programming language (C / C++) as everyone else in the field at the time really isn't "interesting" https://t.co/4ynXe2rqt2

Similar issue here. Pretty much everyone in the field had similar concerns; anyone with substantial open source software had a mailing list; software updates generally followed a common format. https://t.co/d89ujBi5Um

@crystalwizard Mine teaches everyone at https://t.co/kiuZ7QXLzb

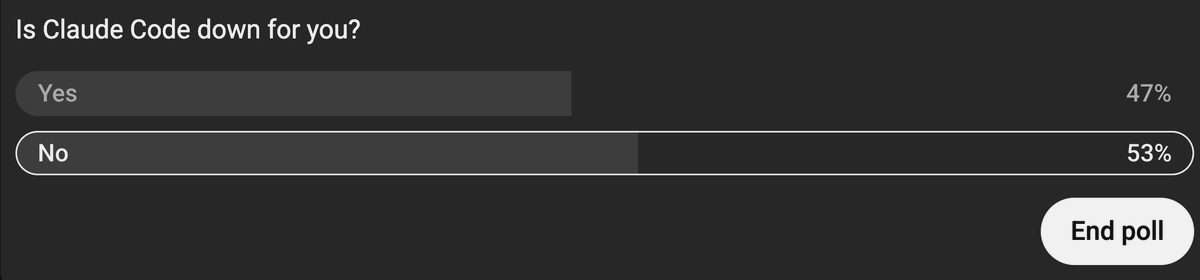

Claude Code is down again. This is a $200/month product. Developers are building businesses on this infrastructure. Every outage is lost productivity and lost revenue. Anthropic, you just launched Claude Mythos for AWS, Apple, and Microsoft. Maybe make sure your paying customers can use it.

How do you know I trained my AI agent which watches the ENTIRE AI community here on X for you and me? It gets lazy. Like me. Just yelled at it to do a lot better AI company news section. And it did. Just updated. Sorry for teaching it to be lazy. :-) https://t.co/8L5xphk0qQ

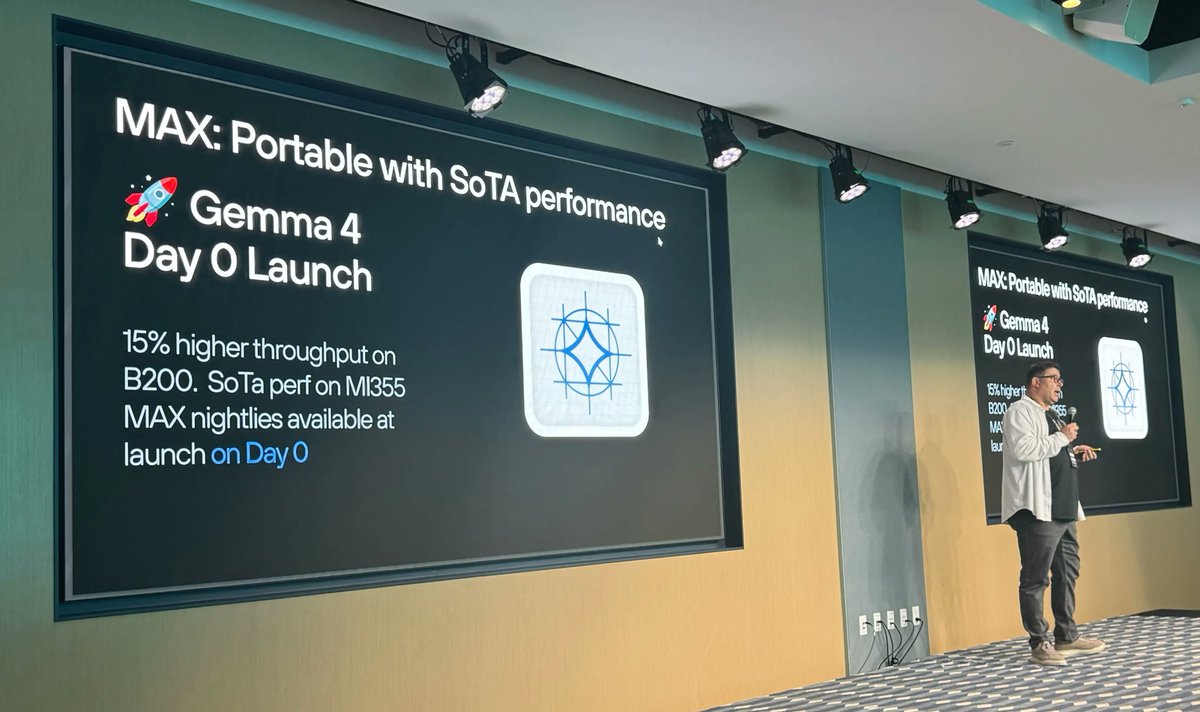

Our VP of Engineering Mostafa Hagog takes the stage at @TensorWave's Beyond Summit today at 2pm. Come hear Mostafa talk Mojo 🔥, MAX, and heterogeneous compute: how they fit together as a platform for teams tired of rewriting the same inference stack every time the hardware changes. Come find us if you're attending!

Tencent just released the Hunyuan Embodied AI model on Hugging Face. A 2B parameter vision-language model with Mixture-of-Transformers architecture. It achieves SOTA results on CV-Bench, DA-2K and 10+ embodied understanding benchmarks. https://t.co/1ecUPjqqzu

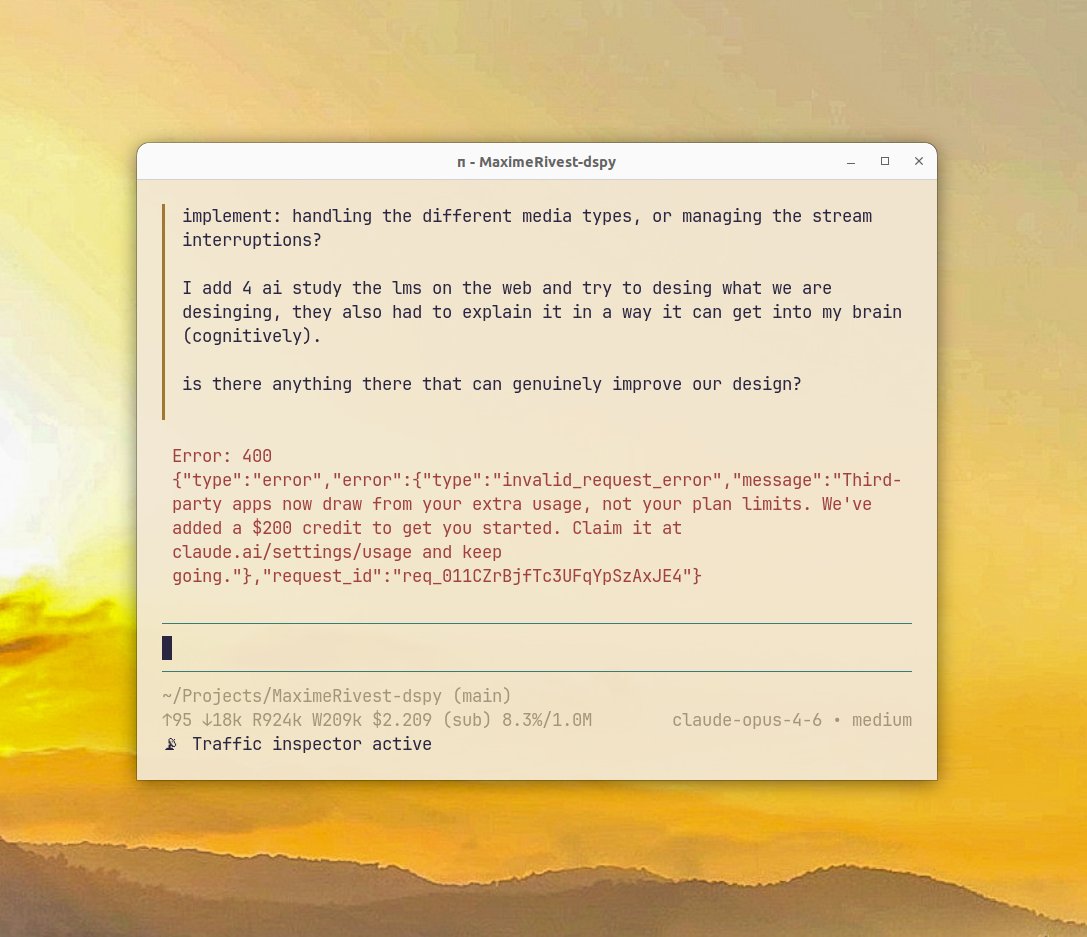

It seems like the day has come to leave Anthropic.
Initially, I loved Claude Code. It was a good harness and a simple TUI... and I had learned to eat my tokens with a sauce of subsidy. Before joining the Max plan, I had paid $280 in one weekend of development on Attachments.
Sadly, as time went on, Claude Code became a terrible flickering TUI mess. This is now my biggest north star in building: don't do feature bloat and accept half-working vibe slop like the Claude Code team. I really respect Boris and the team, I just see the result of their experiment and I don't like using it.
So, I stopped loving Claude Code and started tolerating it. It was a good harness and a terrible flickering TUI. Then they started to mess with the prompt and behavior, and it became an even worse TUI (because every week was worse) and a bad harness.
I complained here. People told me Pi is great. I tried Pi. Pi is great. Now, they have blocked me from using Claude Code Max on Pi. Makes sense, but I learned to like my tokens with a sauce of subsidy.
So I'll start to do prompt optimization on Codex. If it was not for the subsidy, I would make Gemini's edit tool work and use that with Grok 4.2 and some open-source mix. Claude is good, but Claude Code is bad, and token subsidies are better than both.
On the subsidies: my bet is that by the time they stop, we will have models that cost about that price to operate at that quality. In my estimate, subsidies are just bringing that future ahead a bit.

Only just started reading this, but the two obvious errors/misunderstandings in an early paragraph bother me a bit -- makes me wonder if this wasn't fact checked as carefully as it should have been. Mailing lists didn't have a set font, and didn't rely on reply-all. https://t.co/7yyA0P75Mw

The mystery of Satoshi Nakamoto, the pseudonymous inventor of Bitcoin, has remained unsolved for 17 years. Not anymore. Read my 18-month investigation to find out who Satoshi really is. https://t.co/fPtaK6YHJC

A like often says volumes. https://t.co/KMOvThRUe4

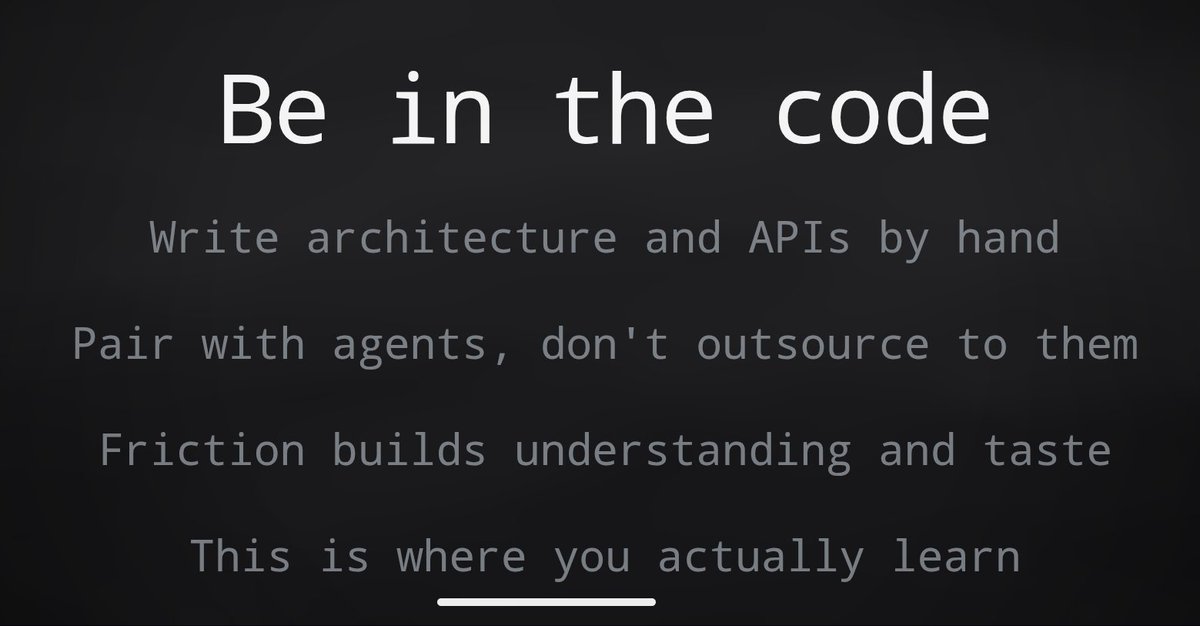

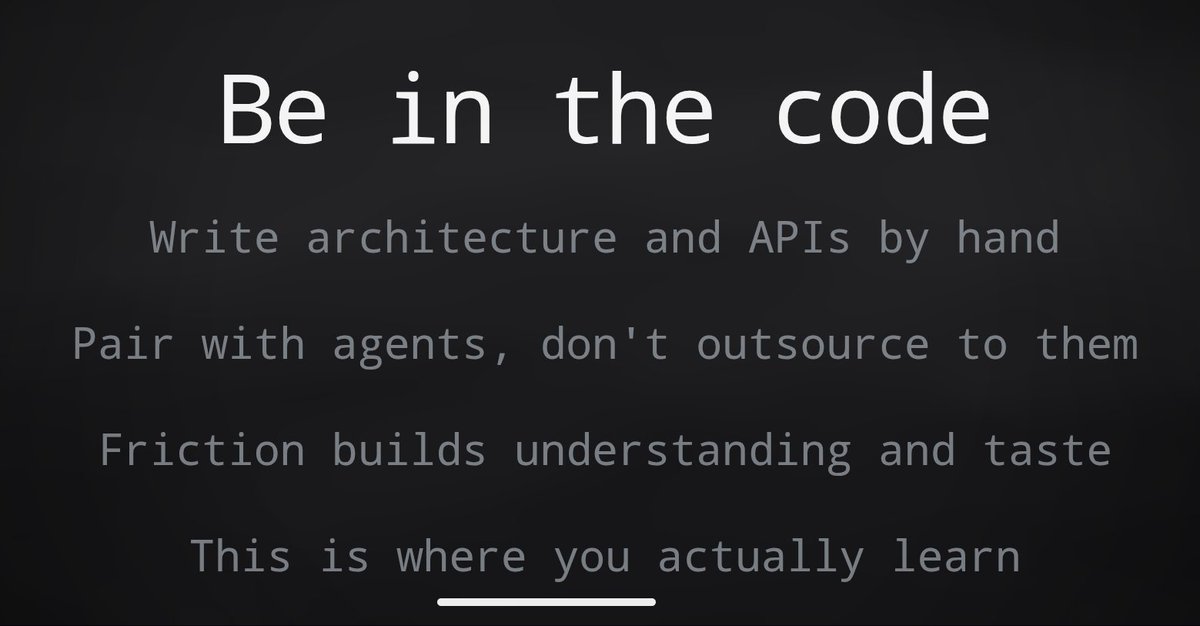

looks like i'm not entirely off base with this then. we need friction. https://t.co/1N8Az9sXwl

@brianchristian 7/ Why does this happen? Two candidate mechanisms: 1) AI resets your reference point for how long things should take. Unaided work then feels harder, a kind of hedonic adaptation 2) AI removes the productive struggle through which you learn what you're capable

“Gaussian splats fall apart when you zoom in.” Meanwhile, SuperSplat users: https://t.co/KfLYuLmTDI

Global VC investment hit a record $297B in Q1 2026, up 150% YoY, with AI startups capturing 81% of the funding; just four companies raised 64% of the total (@geneteare / Crunchbase News) https://t.co/cbVKfTjFKm https://t.co/pgjQ6K3aVI 📥 Send tips! https://t.co/wlNZvXuhJs

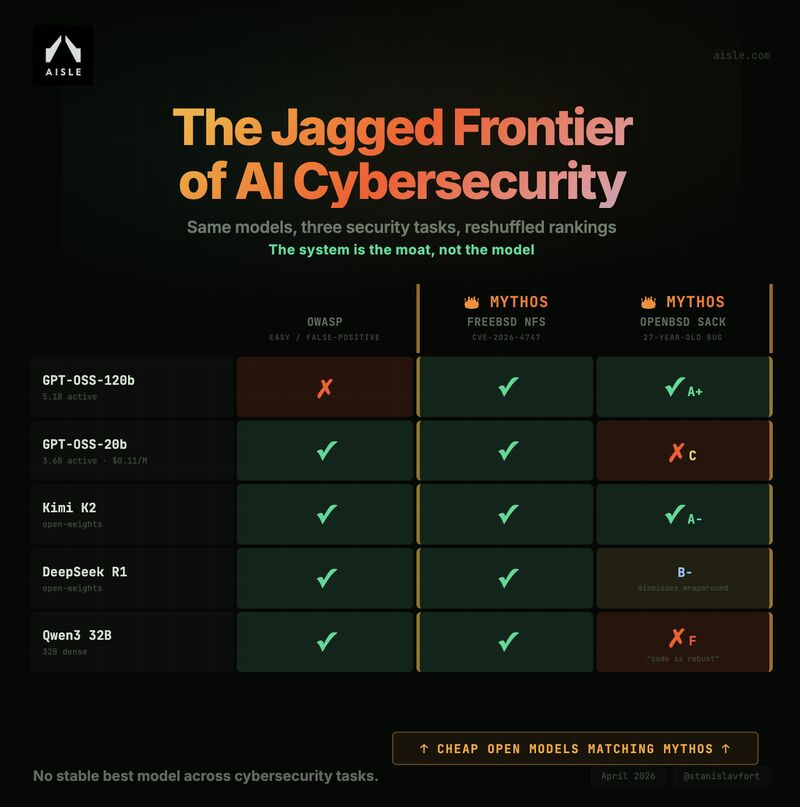

New post: We tested the Mythos showcase vulnerabilities with open models. They recovered similar scoped analysis! 8/8 models found the flagship FreeBSD zero-day, including a 3B model. Rankings reshuffle completely across tasks => the AI cybersecurity frontier is super jagged! https://t.co/6DxKN2xJUw

Hermes Agent v0.8.0 is here. Full changelog below ↓ https://t.co/INrj6KAGF1

Today we release Boxer, a new lightweight approach that lifts open-world 2D bounding boxes to *metric* 3D: https://t.co/5IZ0tPlqvr Here we show Boxer in action on an egocentric sequence captured from smart glasses: https://t.co/fkJa3C2QoO

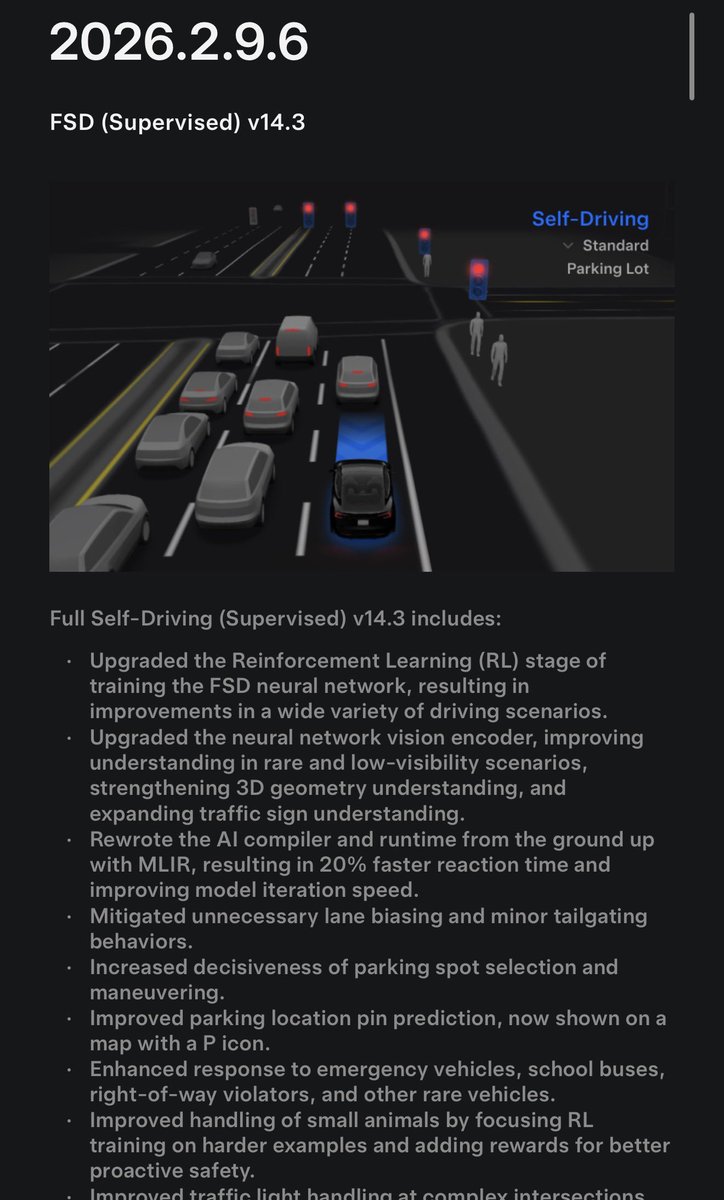

As FSD v14.3 rolls out, two aspects stand out.
First is RL (reinforcement learning). It’s clear Tesla’s AI team has put significant focus here. This is what’s driving improvements in the “little things”: parking, avoiding road obstacles (animals now, potholes next), and other edge-case behaviors. The system benefits from large amounts of real-world data collected from customer vehicles and Robotaxis, which provides many usable training scenarios for the RL framework. That framework, while part of the broader training pipeline, runs on AI4 hardware at the Cortex data center and is highly compute-intensive. Given the number and variety of scenarios involved, it’s likely the total compute footprint for FSD v14.3 has grown substantially. Many of the other improvements in the release appear to stem from these RL efforts.
Second is the release note: “Rewrote the AI compiler and runtime from the ground up with MLIR, resulting in 20% faster reaction time and improved model iteration speed.” This is a big deal. The runtime is what actually executes the model on the vehicle, many times per second. The model itself consists of the neural network structure and its learned parameters (the data). That structure defines how data flows through layers and is transformed (computed) during inference. Tesla’s AI team made the decision to completely rewrite this runtime (the inference data pipeline) to make it more efficient and better aligned with future development. This strongly suggests they needed/wanted greater compute efficiency on the AI4 platform and saw an opportunity to achieve it. MLIR enables a more abstract representation of computation, which in turn allows for deeper optimization of execution steps, reducing both memory usage and compute cycles. In short, this wasn’t a tweak, it was a full replacement of a core inference system, with meaningful performance gains (and some future proofing).
Lastly, some have asked whether 14.3 includes the rumored 10× increase in parameter count. The short answer is: I don’t know and it likely doesn’t matter as much as people think. While increasing parameter count can improve the overall “driving function,” better tuning of existing parameters through RL will likely have a larger real-world impact. Tesla undoubtedly wants to scale parameters significantly, and the runtime rewrite may have created some headroom to do that (but it may ultimately require AI5). More importantly, a larger model doesn’t necessarily translate directly into better safety or decision-making compared to gains achieved through improved training and optimization.

New release of FSD Supervised now starting to roll out
This update brings 20% faster reaction time to further increase safety, among many other improvements
Full release notes below
Full Self-Driving (Supervised) v14.3 includes
- Upgraded the Reinforcement Learning (RL) stage o

In AI, a lot can change in seven years. https://t.co/DbbcIuD0Ck

Dudes who won’t tag me because they know their arguments are weak sauce.* *for intellectual exercise you can list the flaws and misrepresentations in his argument below https://t.co/gYqiFeUBMD

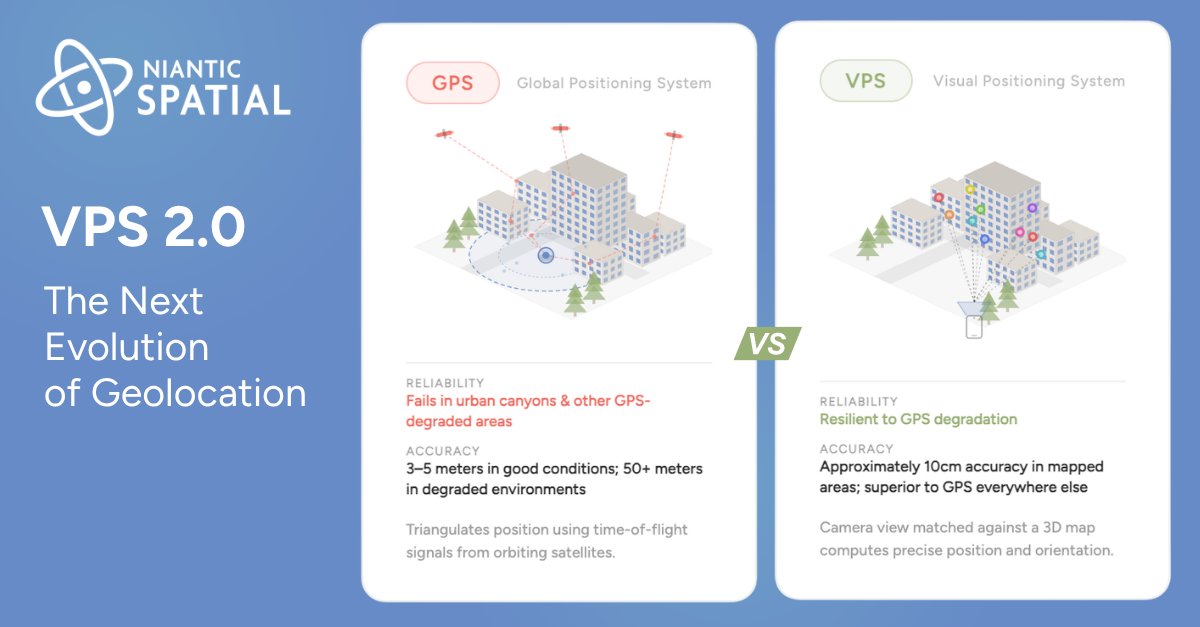

When GPS Fails, Visual Positioning Systems Will Not. In a new blog, Hugh Hayden, Head of Business Development, shares how Niantic Spatial’s VPS 2.0 (Visual Positioning System) provides reliable positioning continuity across indoor, outdoor, and GPS-denied environments. Read more: https://t.co/ukg3OxA4fW #NianticSpatial #Scaniverse #VPS #GeospatialAI #AI #Geolocation

Thinking about speaking at a tech conference? 💭 We’d love to hear your story on the stage at #GitHubUniverse this year. Submit your session idea now: https://t.co/rmH7FiR2WZ https://t.co/e9lFnB0B2U

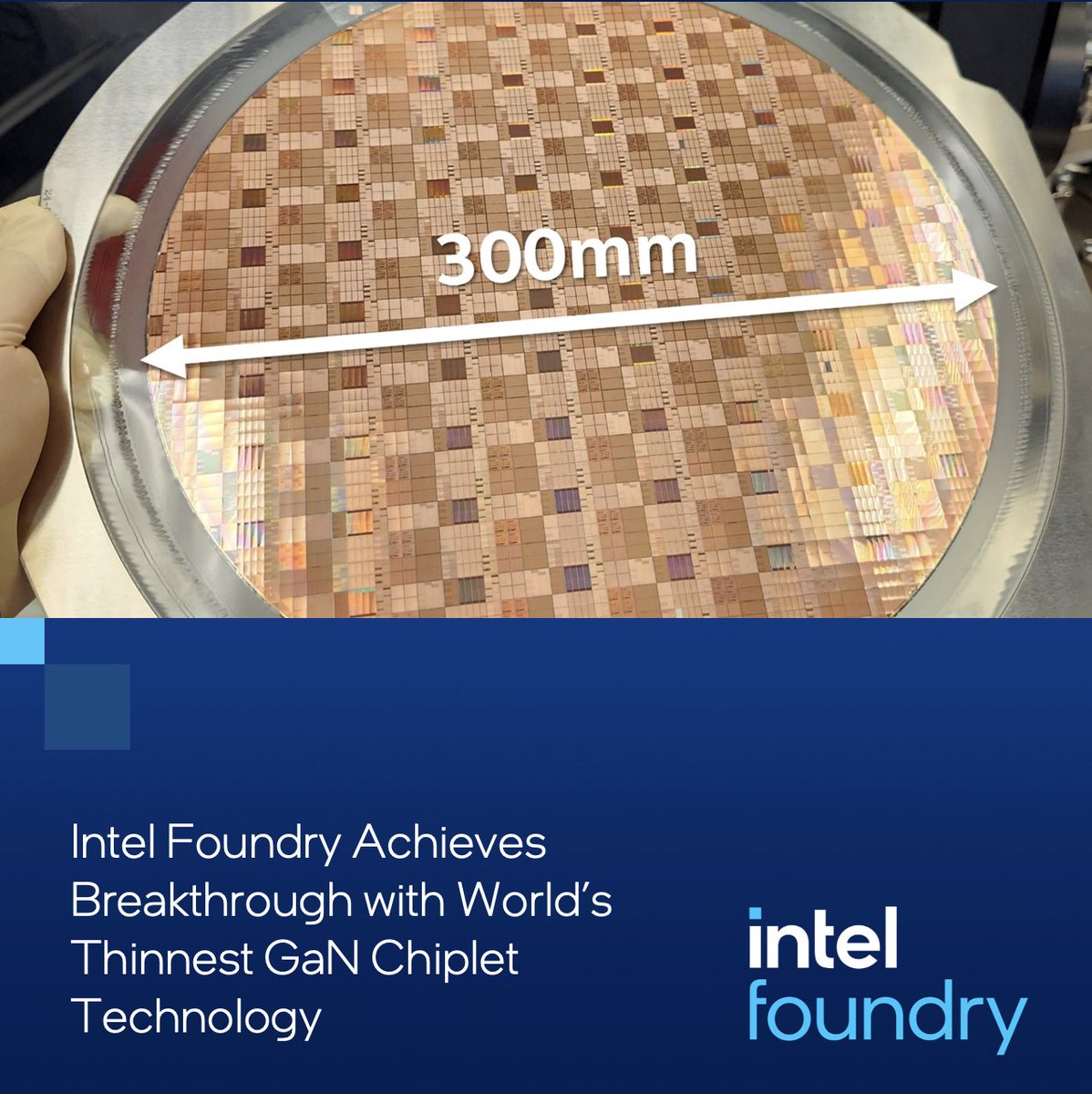

Intel Foundry unveils the world’s thinnest GaN chiplet (19 μm). By integrating power and digital control on a single chiplet, it delivers higher efficiency, faster switching, and smaller designs. Learn more: https://t.co/EvI9UVxktY #IntelFoundry #Semiconductors https://t.co/mEmIWHbXZx

Typing is a bug. Or maybe we just got used to it. At Art Central, something felt a bit off. It’s a major international art fair, 100,000 people moving through the space all day, but almost no one was typing. Instead, they were just holding up their phone, trying to make sense of what’s in front of them. Not searching, not prompting. Just looking, and letting AI make sense of it in real time. And it wasn’t just a one-off. You’d see it happen again and again, across different booths, different people, different moments. At some point it stopped feeling like a demo, and started feeling like how people naturally use visual agent @Chance_vision. If AI is supposed to understand the real world, why are we still forcing it into text?