Your curated collection of saved posts and media

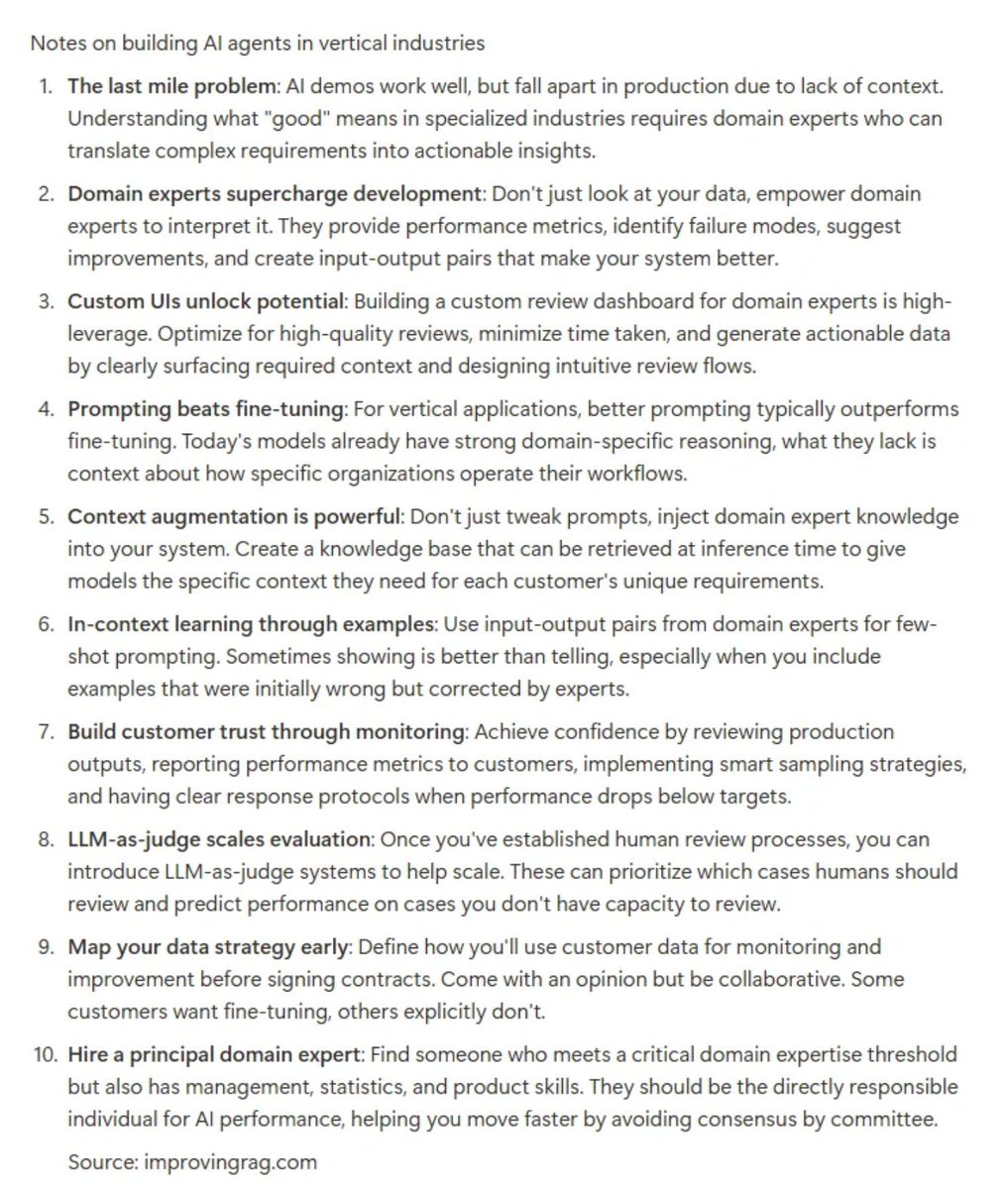

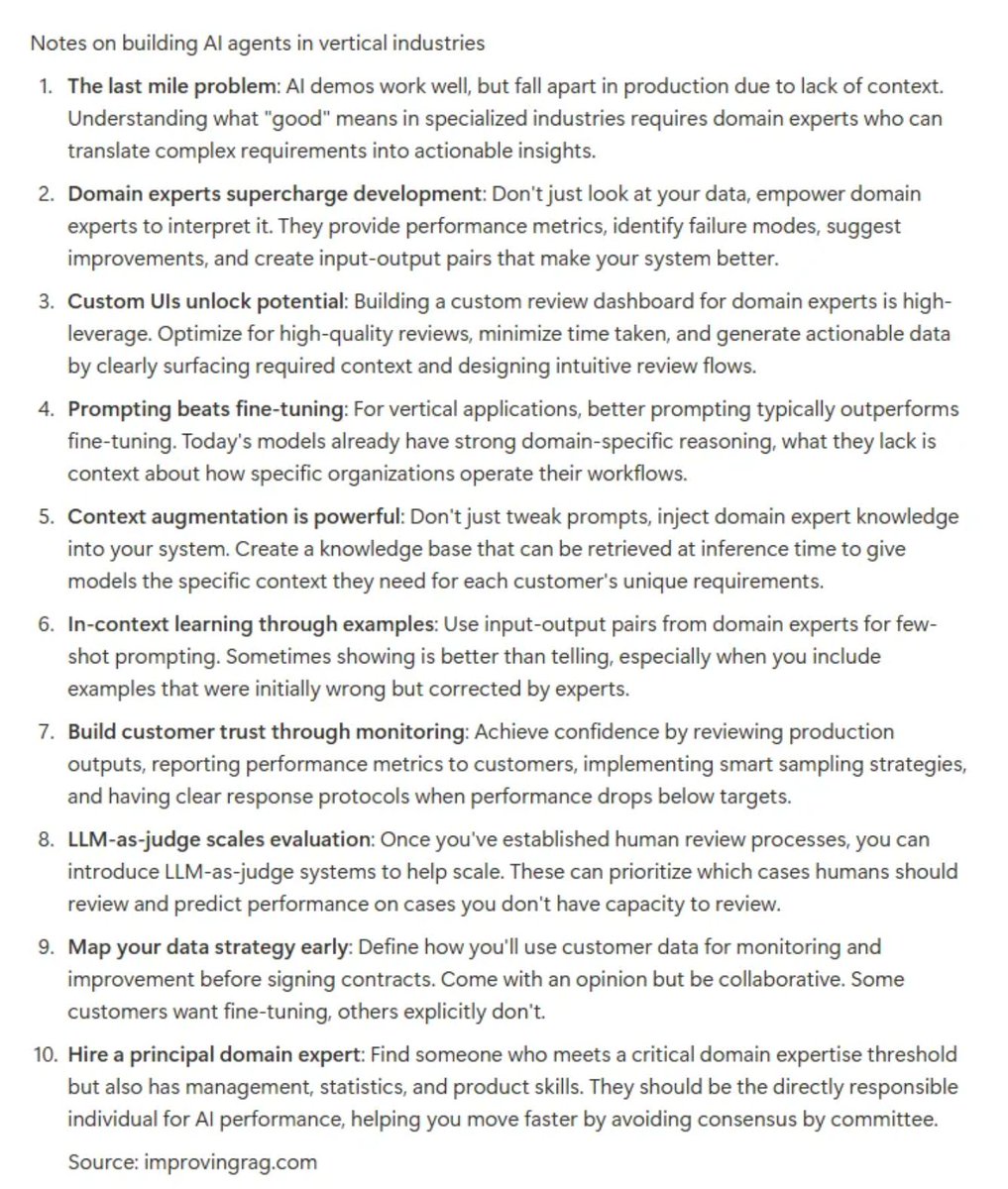

notes from dr chris lovejoy's talk on building for verticalized ai applications https://t.co/k4b9rDtn6a

@daniel_mac8 @dylan522p Let me Imin the office. https://t.co/dUCPTgtFPA

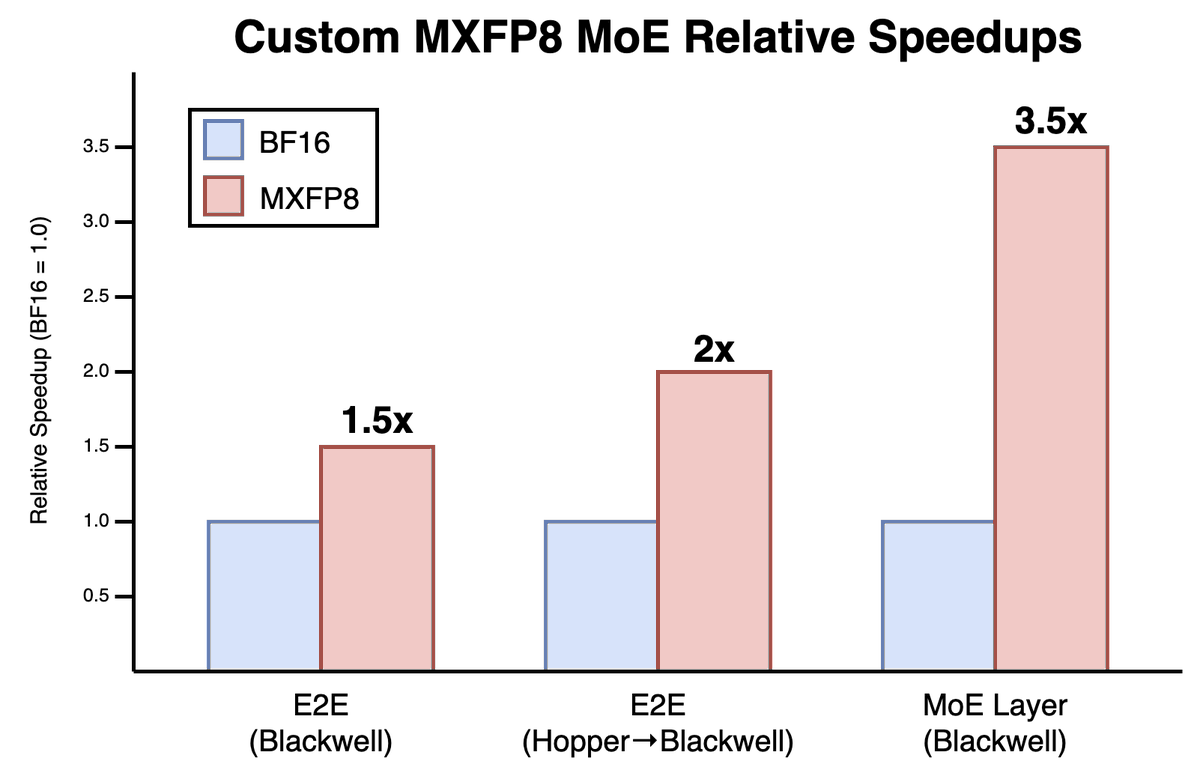

MoE layers can be really slow. When training our coding models @cursor_ai, they ate up 27–53% of training time. So we completely rebuilt it at the kernel level and transitioned to MXFP8. The result: 3.5x faster MoE layer and 1.5x end-to-end training speedup. We believe our MXFP8 MoE training stack is faster than any open-source alternative available today. Read more here: https://t.co/fVv7kpSUQj
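As a rough sanity check on those figures, the sketch below applies Amdahl's law. It assumes the MoE layers are the only part of a training step that got faster and that everything else is unchanged. The function name `end_to_end_speedup` is hypothetical and this is not the authors' own calculation.

```python
def end_to_end_speedup(fraction: float, component_speedup: float) -> float:
    """Amdahl's law: overall speedup when only `fraction` of the runtime
    is accelerated by `component_speedup` and the rest is unchanged."""
    if not 0.0 <= fraction <= 1.0:
        raise ValueError("fraction must be between 0 and 1")
    if component_speedup <= 0.0:
        raise ValueError("component_speedup must be positive")
    return 1.0 / ((1.0 - fraction) + fraction / component_speedup)


# Figures quoted in the post: MoE layers took 27-53% of training time
# and became 3.5x faster.
for share in (0.27, 0.53):
    speedup = end_to_end_speedup(share, 3.5)
    print(f"MoE share {share:.0%}: {speedup:.2f}x end to end")
```

Under these assumptions the two ends of the range come out at roughly 1.24x and 1.61x, so the reported 1.5x end-to-end speedup is plausible for runs where MoE layers sat toward the upper end of that share.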

https://t.co/mRkKhDC53t

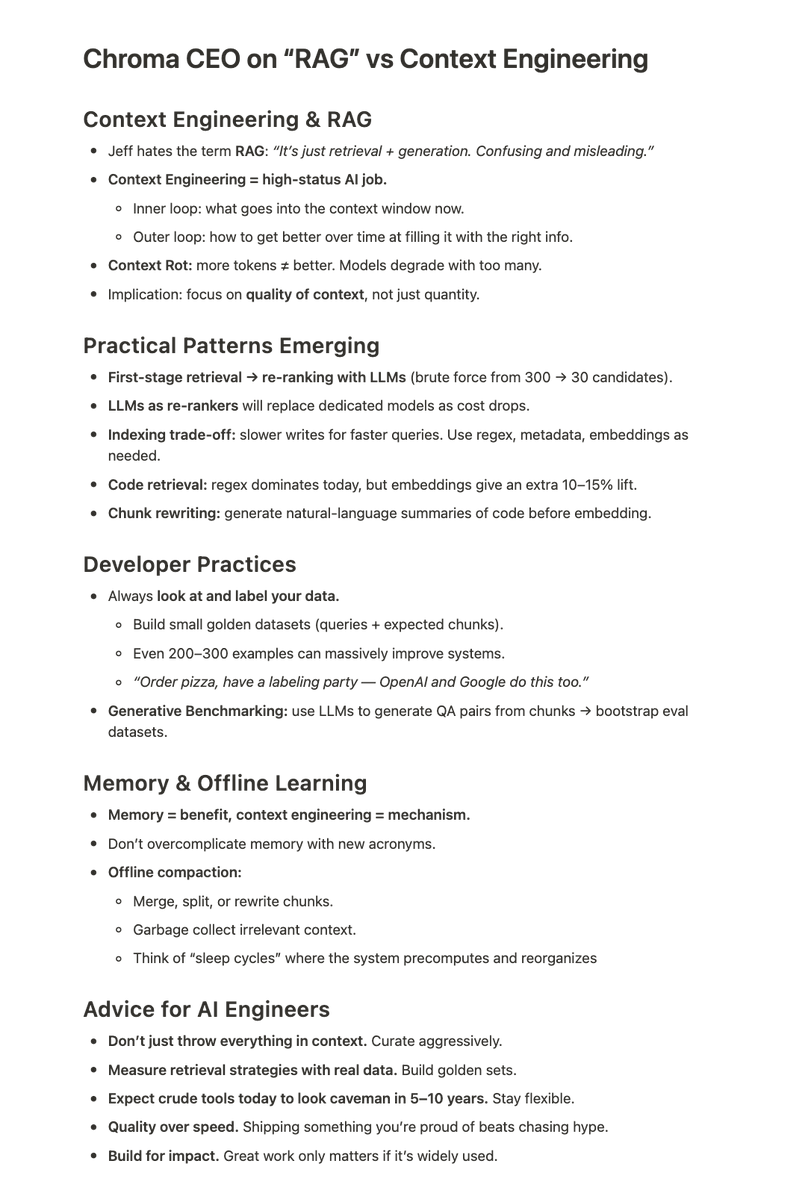

🆕 "RAG is Dead, Context Engineering is King" https://t.co/CUxBNvAuqi We asked @jeffreyhuber about the big @trychroma Cloud launch today, how Context Engineering is evolving, and why you should Look At Your Data (h/t @HamelHusain @jxnlco) full link to youtube pod below https://t.co/iV2ZlSoUdP

Introducing Chroma Cloud: an open-source serverless search database that is fast, cost-effective, scalable, and reliable. https://t.co/V47pMCkHAE

twink death https://t.co/ylnefyV1Nn

@creebeauvoir https://t.co/95MoEPnNdP

@jasonth0 https://t.co/lZNCExb7Dm

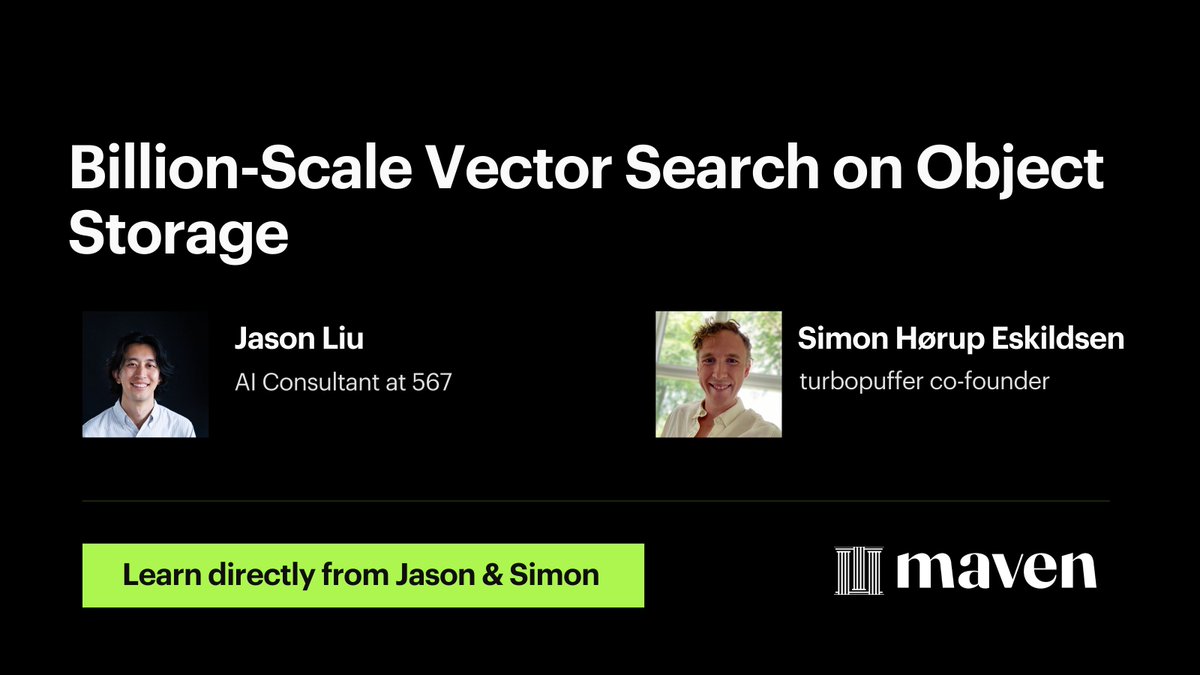

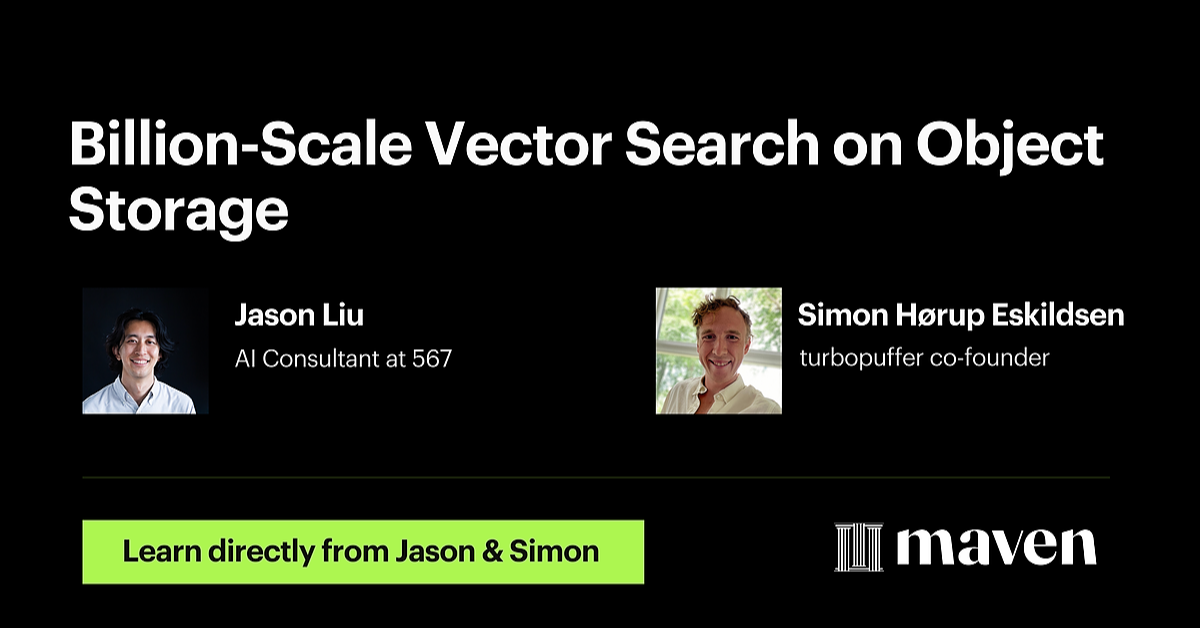

billion-scale vector search on object storage, powering companies like linear, notion, and cursor ai. turbopuffer co-founder Simon Hørup Eskildsen tells us how.

On Wednesday, August 27 at 1 PM EDT, Simon will go over:

- how to build vector search on object storage vs traditional disk
- scaling economics for petabyte-level data (without breaking the bank)
- real implementations from Cursor, Notion, and Linear

register for this free session to get notes and recordings

check it out here, https://t.co/WreuL93G4u

Source: https://t.co/gY5RMHw4V4

Source: https://t.co/buTXPxx6rx

First full demo of the latest product from @crestalnetwork (alpha version) you can test it today (link below) and SEND ME THOSE BUG REPORTS 🙏 https://t.co/VC2YxDkXpH

Wow. This has historically been a really hard one for image editing models to get right. > Add a photo of a woman standing in front of "Qwen" text but behind "Image Edit" text, in a stylish design https://t.co/NoCkRr34z2

> Make a fashion magazine cover with a woman posing in a red dress, the title of the magazine is Qwen Image Edit, no other text https://t.co/LE1gbtsnUd

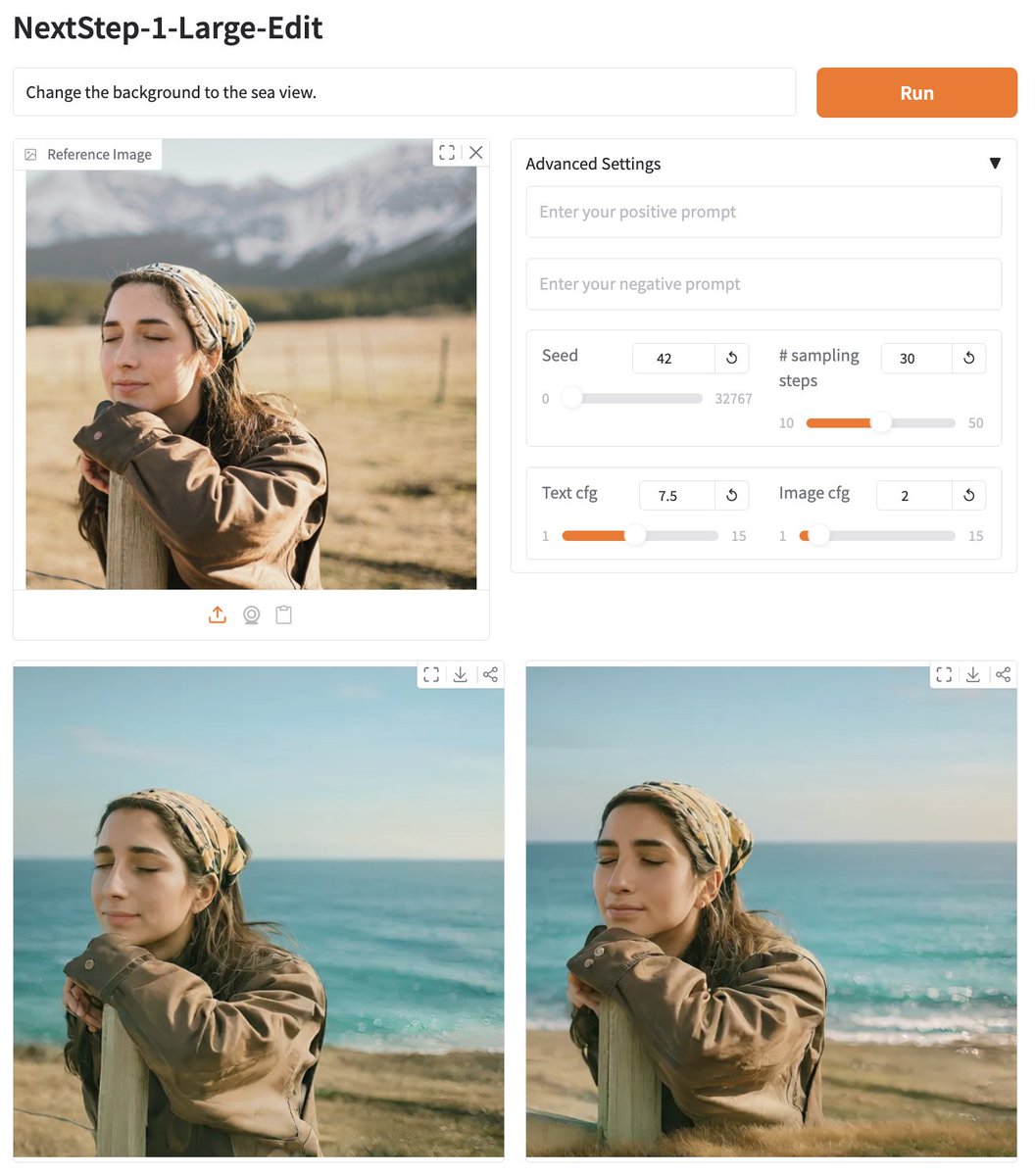

nano-banana, qwen-image-edit, what else? Try @StepFun_ai NextStep-1-Large-Edit

- 14B AR model
- Apache 2 license
- Demo available on @huggingface
- Pretrain model also made available

Link below https://t.co/9jvmobD5Yo

GLMs: https://t.co/5j8QqRFtHp https://t.co/U2xGLT7jOZ https://t.co/UocP8SesDS #intel #autoround @thukeg

"CEOs have assistants, chiefs of staff, and coaches. AI will give that to everyone—co-workers, assistants, and coaches powered by agents. It’s your own dream team, helping you do 90% of your work." – @jainarvind, CEO & Founder, @Glean https://t.co/G5ekFqY01G

Full episode here: https://t.co/xabau1ubJ7

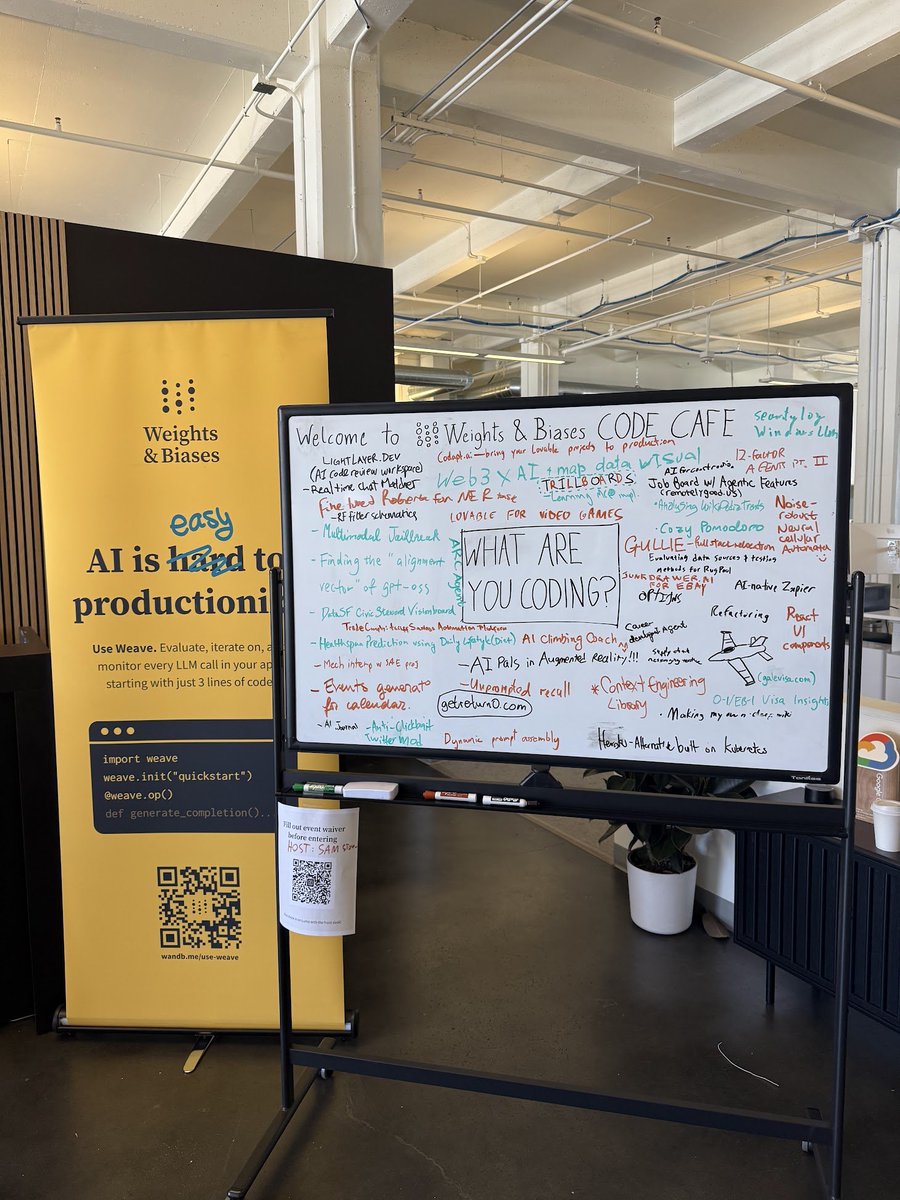

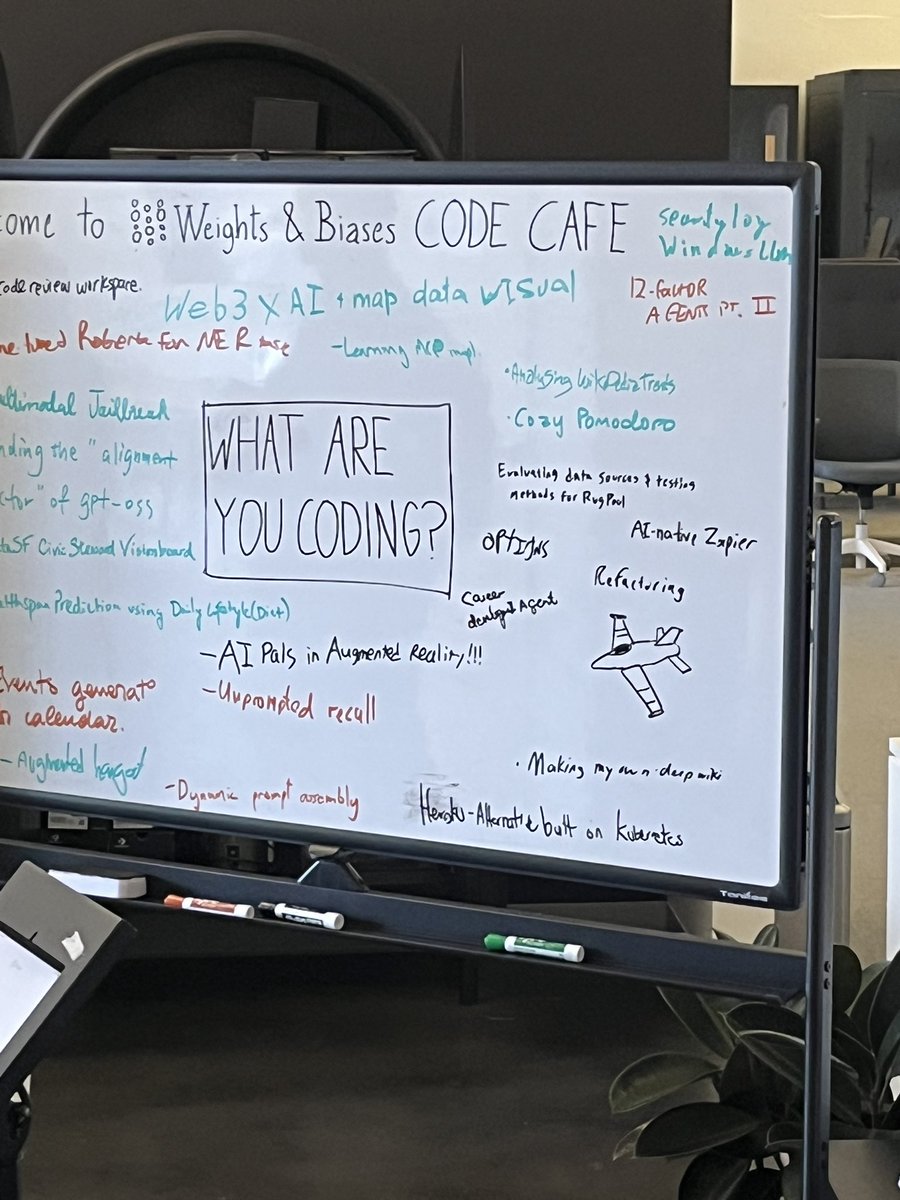

Yesterday, we hosted Code Cafe 4 with @humanlayer_dev at our HQ in SF. Builders arrived with a goal, put it on the board, grabbed coffee + snacks, and didn’t leave until they shipped. Recap below, with builder interviews dropping later this week. https://t.co/3qrYHPSSZY

Another @weights_biases Code Cafe in the books! Much code was written, much coffee was consumed. Check out the whiteboard to see what people were working on. Big thanks to @dexhorthy for cohosting https://t.co/13WlFGJXEH

Ty @weights_biases @sammakesthings https://t.co/vAhEUkCgaj

Code Cafe 4 @ @weights_biases in full swing! Catching up with the greats like @sammakesthings and @ndrsrkl If you’re here, come say hey https://t.co/nJhYJwEOqA

Git 2.51 has arrived with new changes like cruft-less multi-pack indexes, more efficient pack generation, a brand-new stash interchange format, and much more. ✨ Check out our coverage of the latest release here. ⬇️ https://t.co/PqvwBIVapl

Grok Imagine, every day new improvements, and this is still 0.1 Beta 😱😱😱 { "title": "Monochrome Fractal Breath", "intent": "single-shot, meditative, surreal photoreal-CGI", "subject": { "type": "adult human face", "pose": "eyes closed, neutral expression, perfectly still", "framing": "locked to exact centre; head occupies ~35% of height", "skin": "porcelain, clean microtexture, no makeup, no identity match" }, "environment": { "space": "void black", "set_piece": "recursive cube fractal (Menger-sponge style) built from alternating matte charcoal cubes and mirror-chrome cubes", "behaviour": "fractal subdivides and recombines in a slow breathing rhythm; all motion smooth and loopable" }, "look": { "style": "ultra-realistic CGI / clean ray-traced look", "surface_detail": "soft-beveled edges to prevent aliasing; no etched seams; no moiré", "palette": "strict grayscale only (black/charcoal/white); zero colour saturation" }, "lighting": { "key": "soft top-down key, large source, gentle falloff", "fill": "very low, just enough to keep cheeks readable", "rim": "faint halo rim outlining face from above/back", "atmosphere": "thin volumetric haze for subtle light shafts; keep minimal to avoid banding" }, "camera": { "lens": "virtual 50mm equivalent", "focus": "locked on face; deep enough DOF that fractal stays readable", "motion": "gentle push-in with slight FOV ease to simulate dolly-zoom; no cuts" }, "timeline": [ { "t": 0.0, "action": "face already centred and still; fractal at neutral size" }, { "t": 0.0, "action": "begin slow push-in; fractal subtly contracts (inhale visual)" }, { "t": 2.0, "action": "fractal expands (exhale visual), keeping face size stable in frame" }, { "t": 4.0, "action": "second inhale visual; maintain temporal smoothness" }, { "t": 5.6, "action": "prepare seamless loop back to neutral state by 6.0s" } ], "micro_performance": { "cheek_pulse": "very subtle 2-frame micro-pulse synced to the inhale/exhale beats; amplitude minimal to avoid facial deformation" }, "negatives": [ "colour, saturation, hue", "text, logos, watermarks, HUD/overlays", "noise, heavy grain, compression artifacts, banding", "lens dirt, chromatic aberration, glitch, smear", "cartoon, anime, low-poly", "aliasing/stairsteps, moiré patterns", "flicker, temporal shimmer, focus hunting", "multiple faces, face morphing" ], "audio": { "mood": "minimalist ambient; meditative and dark", "tempo_bpm": 60, "elements": [ "soft sub-bass bed (no melody), sidechained gently to the breathing rhythm", "airy high-frequency grains that shimmer on fractal recombine events", "long-tail room reverb; no percussion; no vocals" ], "mix_rules": "quiet, wide, no harsh highs; target loudness ≈ -16 LUFS integrated; limiter gently catching peaks" }, "output": { "duration_seconds": 6, "aspect_ratio": "9:16", "resolution": "1080x1920", "fps": 24, "loop_hint": true } }

Grok4 Imagine 💫 https://t.co/D6bDMnSGlx

We just launched VS Code Insiders Podcast - the official podcast from the @code team! Join us as we sit down with the developers, product managers, and community contributors shaping the open source AI code editor. First episode on GPT-5 out now! https://t.co/BM1fuTlTdD https://t.co/KKv16YtdoH

Yeah I can see the hype for the nano-banana model. We just need this + perfect text https://t.co/Sxmlq7uK92

@Teknium1 I’ve got the rare bald Tek edition https://t.co/GJAGdwdomT

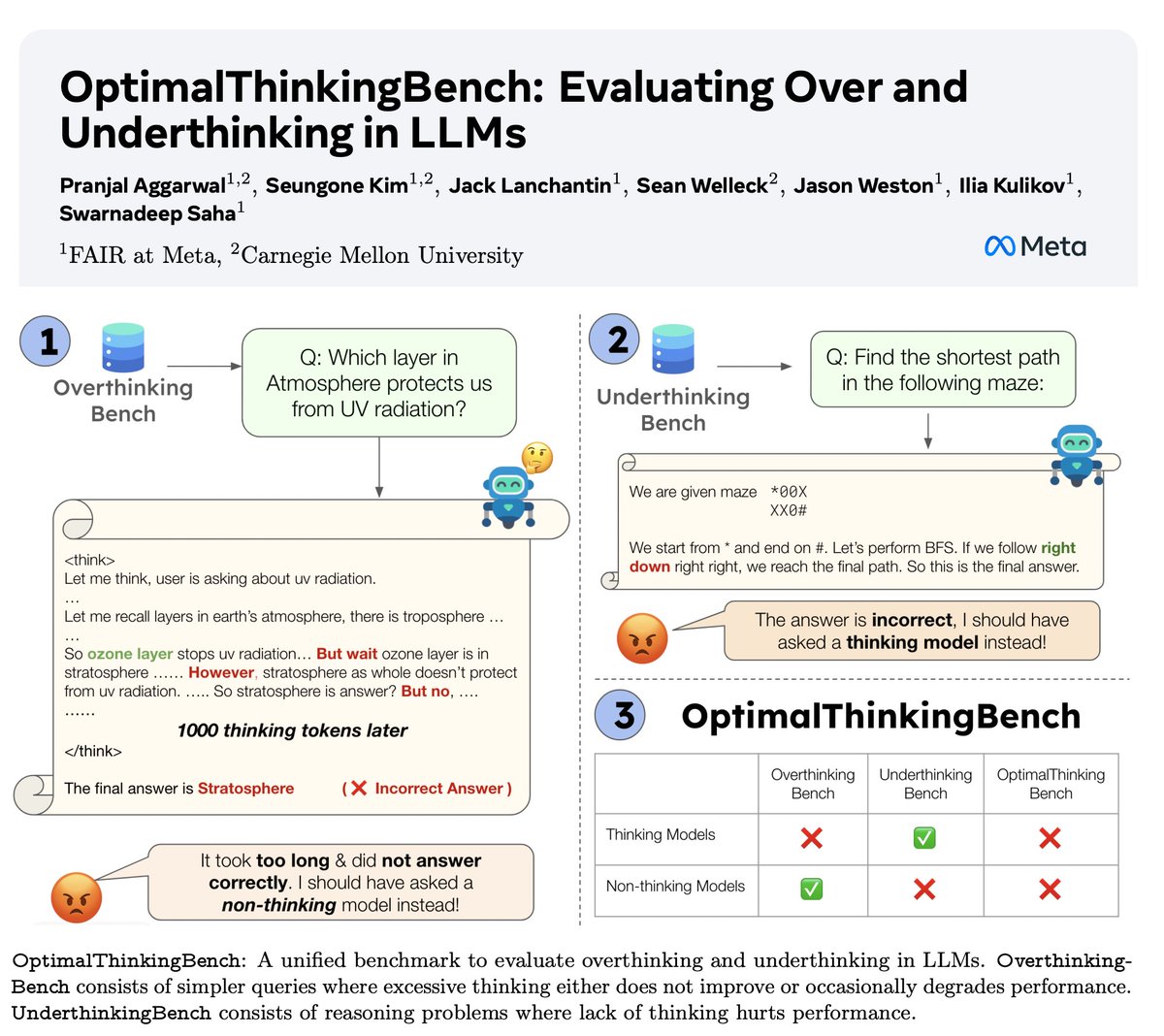

🤖Introducing OptimalThinkingBench 🤖 📝: https://t.co/cffhCY4eQw

- Thinking LLMs use a lot of tokens & overthink; non-thinking LLMs underthink & underperform.
- We introduce a benchmark which scores models in the quest to find the best mix.
- OptimalThinkingBench reports the F1 score mixing OverThinkingBench (simple queries in 72 domains) & UnderThinkingBench (11 challenging reasoning tasks).
- We evaluate 33 different SOTA models & find improvements are needed!

🧵1/5
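To illustrate why an F1-style combination rewards balance, here is a minimal sketch. It assumes each sub-benchmark yields a single score in [0, 1] and that "F1" means the harmonic mean of the two. The per-benchmark metrics themselves are defined in the linked paper and are not reproduced here, and the name `harmonic_f1` is hypothetical.

```python
def harmonic_f1(over_score: float, under_score: float) -> float:
    """Harmonic mean of two scores in [0, 1].

    A model only scores well if it does well on BOTH inputs: a high
    score on one cannot make up for a low score on the other.
    """
    for score in (over_score, under_score):
        if not 0.0 <= score <= 1.0:
            raise ValueError("scores must be between 0 and 1")
    total = over_score + under_score
    if total == 0.0:
        return 0.0  # both scores are zero; avoid dividing by zero
    return 2.0 * over_score * under_score / total


# Hypothetical scores, for illustration only (not results from the paper).
balanced = harmonic_f1(0.6, 0.6)
lopsided = harmonic_f1(0.9, 0.3)
print(f"balanced: {balanced:.2f}, lopsided: {lopsided:.2f}")
```

Both hypothetical models have the same arithmetic mean (0.6), but the harmonic mean ranks the lopsided one lower, which is the behaviour a benchmark targeting both overthinking and underthinking would want.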