@jaseweston

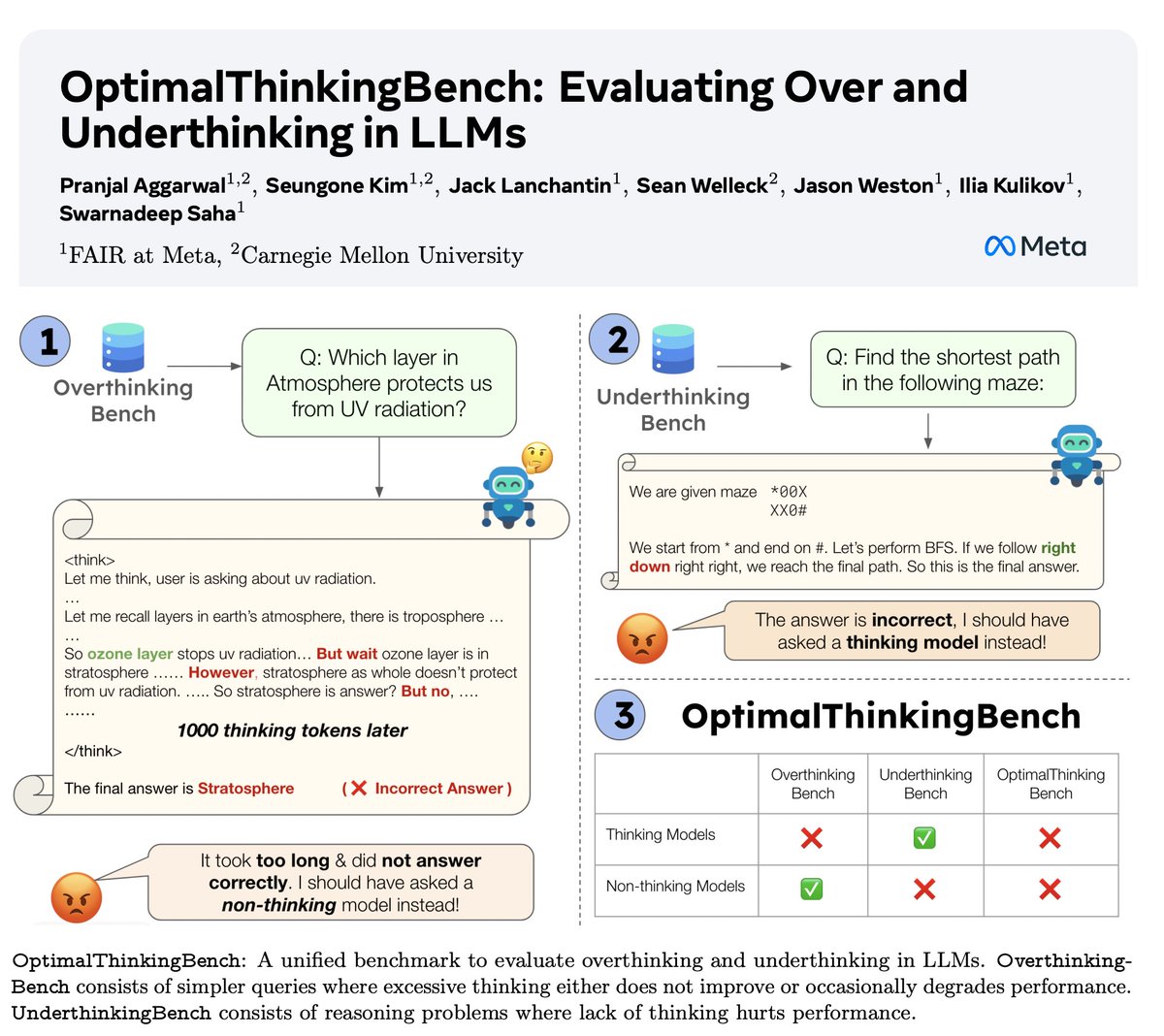

🤖Introducing OptimalThinkingBench 🤖 📝: https://t.co/cffhCY4eQw - Thinking LLMs use a lot of tokens & overthink; non-thinking LLMs underthink & underperform. - We introduce a benchmark which scores models in the quest to find the best mix. - OptimalThinkingBench reports the F1 score mixing OverThinkingBench (simple queries in 72 domains) & UnderThinkingBench (11 challenging reasoning tasks). - We evaluate 33 different SOTA models & find improvements are needed! 🧵1/5