Your curated collection of saved posts and media

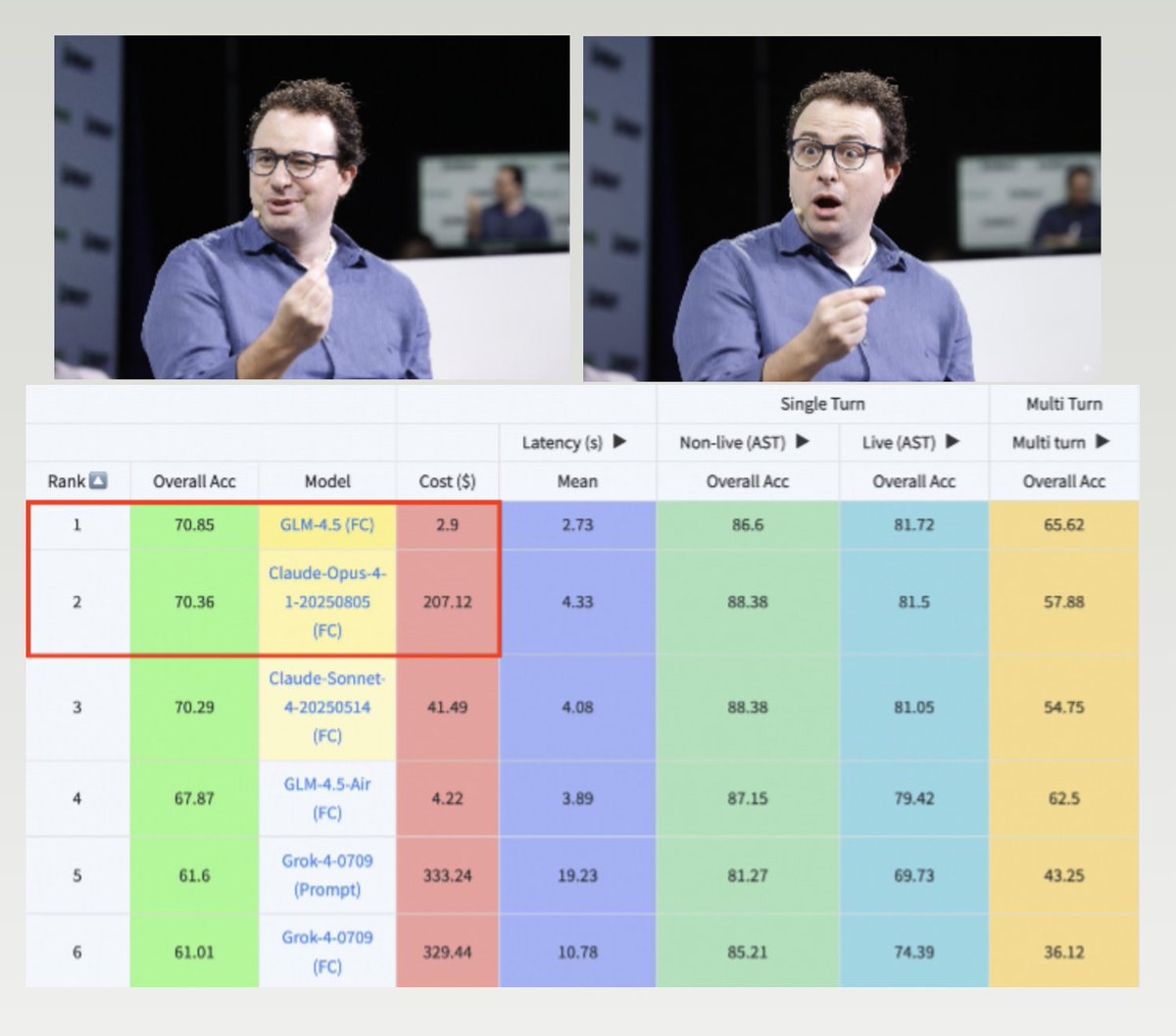

GLM-4.5 is beating Claude-4 Opus on the Berkeley Function Calling benchmark while costing 70x less https://t.co/eG8QZ3l9sE


ByteDance Seed and Stanford introduce Mixture of Contexts (MoC) for long video generation, tackling the memory bottleneck with a novel sparse attention routing module. It enables minute-long consistent videos with short-video cost. https://t.co/JHCSQ81FWJ

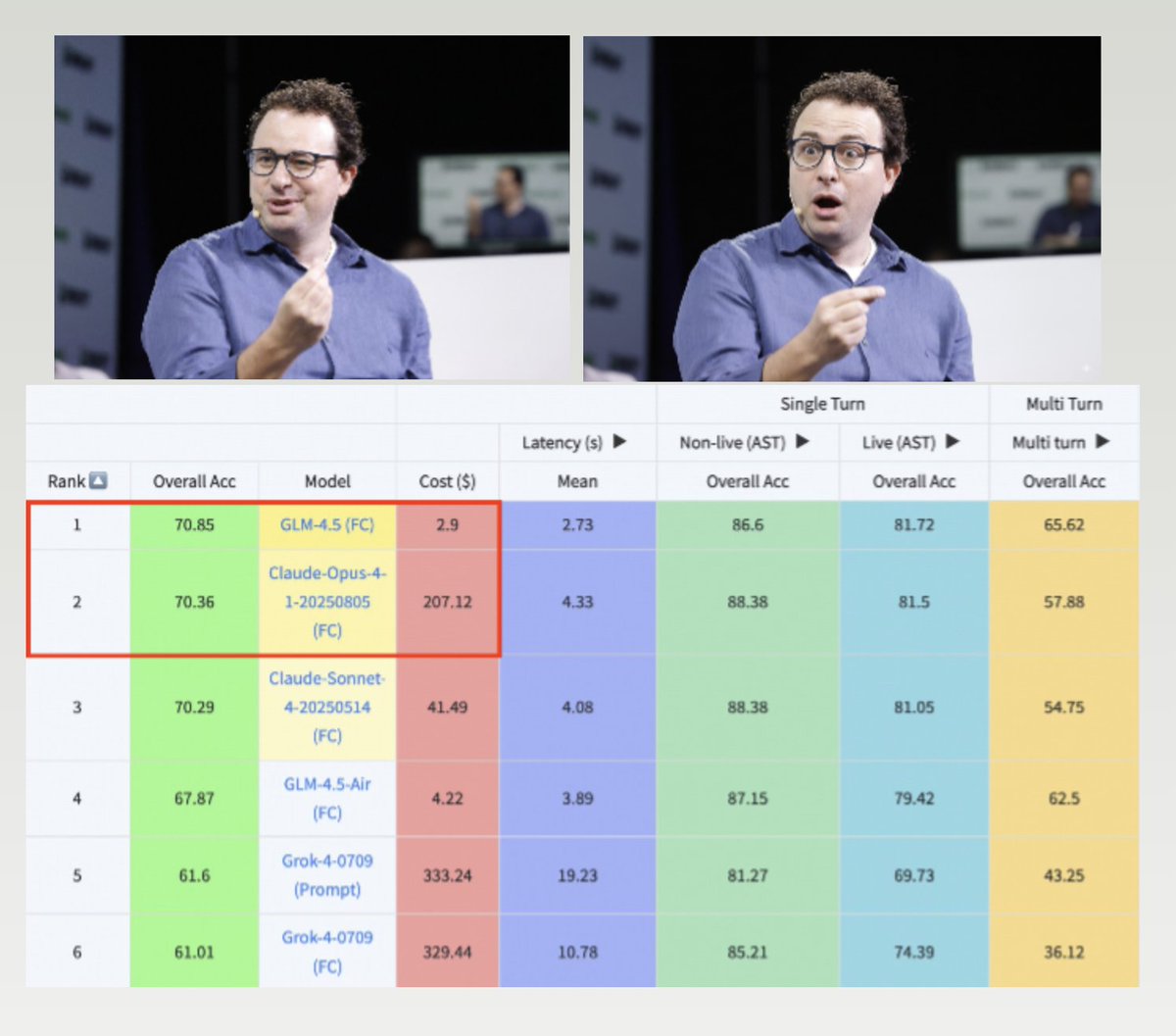

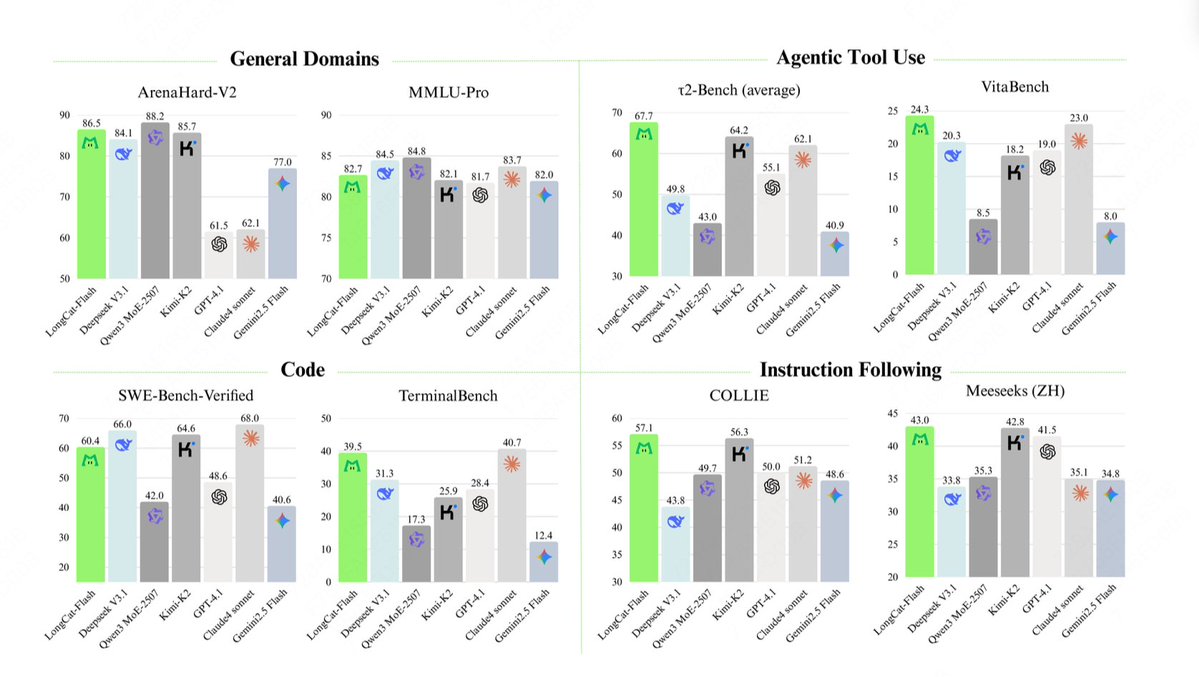

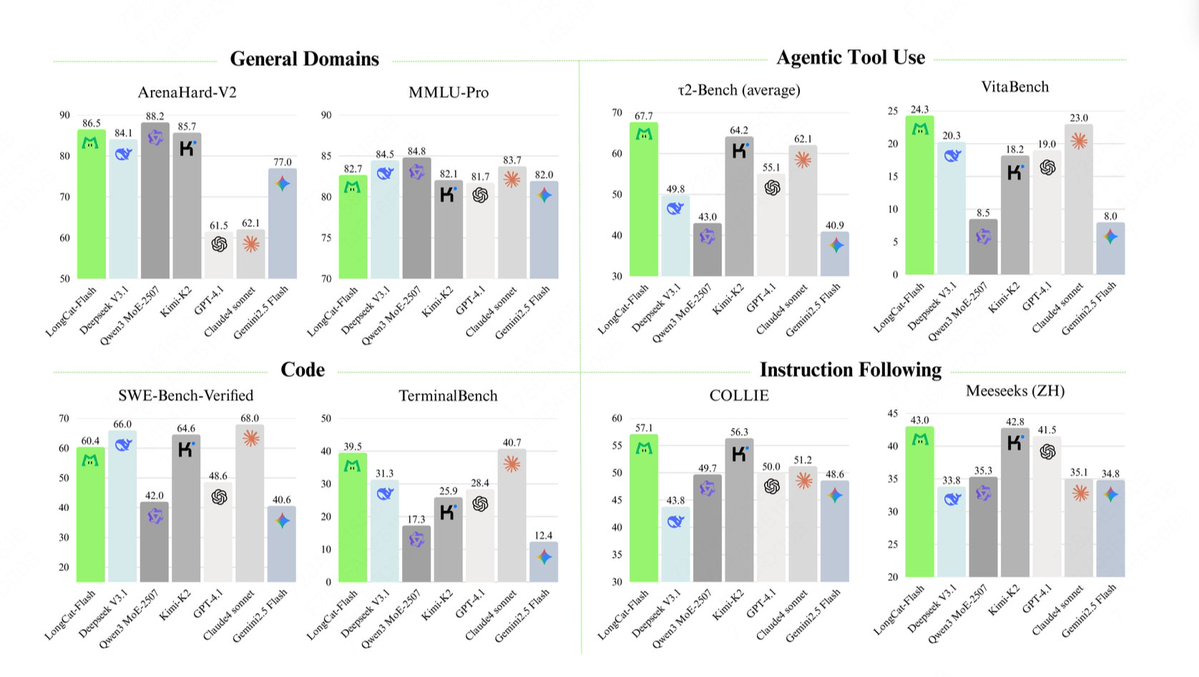

The technical report of @Meituan_LongCat LongCat-Flash is crazy good and full of novelty. The model is a 560B total, ~27B active MoE with an adaptive number of active parameters depending on the context, thanks to the zero-computation expert.

1) New architecture
- Layers have 2 attention blocks and both an FFN and an MoE, so you can overlap the 2 all-to-all comms. (Also, it's only 28 layers, but you have to take into account the 2 attention blocks.)
- They add the zero-computation expert, which tokens can choose in order to do nothing, kind of like a "sink" for easy tokens.
- For load balancing, they use a dsv3-like aux-loss-free bias to set the average number of real/fake experts per token. They apply a decay schedule to this bias update. They also do loss balance control.

2) Scaling
- They made changes to MLA/MoE to have variance alignment at init. The gains are pretty impressive in Figure 5, but I don't know to what extent this has an impact later on.
- Model growth init is pretty cool: they first train a 2x smaller model and then, "when it's trained enough" (a bit unclear here how many B tokens), they init the final model by just stacking the layers of the smaller model.
- They used the @_katieeverett, @Locchiu et al. paper to get hyperparameter transfer with SP instead of muP for the 2x smaller model, I guess.

3) Stability
- They track Gradient Norm Ratio and cosine similarity between experts to adjust the weight of the load balancing loss (they recommend Gradient Norm Ratio <0.1).
- To avoid large activations, they apply a z-loss to the hidden state with a pretty small coef (another alternative to qk-clip/norm).
- They set Adam epsilon to 1e-16 and show that you want it to be lower than the gradient RMS range.

4) Others
- They train on 20T tokens for phase 1, "multiple T of tokens" for mid-training on STEM/code data (70% of the mixture), and 100B for long-context extension without YaRN (80B for 32k, 20B for 128k). The long-context documents represent 25% of the mixture (not sure if it's % of documents or tokens, which changes a lot here).
- Pre-training data pipeline is context extraction, quality filtering, dedup.
- Nice appendix where they compare the top_k needed for different benchmarks (higher for MMLU with 8.32, lower for GSM8K with 7.46). They also compare token allocation in deep/shallow layers.
- They release two new benchmarks: Meeseeks (multi-turn IF) and VitaBench (real-world business scenarios).
- Lots of details on infra/inference, with info on speculative decoding acceptance, quantization, deployment, kernel optimization, comms overlapping, etc.
- List of the different relevant papers in thread 🧵
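To make the zero-computation expert idea from the thread above concrete, here is a minimal sketch of per-token routing where some router slots are "free" experts. It is not LongCat-Flash's actual implementation: the function names (`route_token`, `double`, `negate`), the softmax gating, and the assumption that a zero-computation expert simply returns its input unchanged are all illustrative choices, and the bias-based load balancing mentioned in the thread is left out.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def route_token(hidden, router_logits, ffn_experts, num_zero_experts, top_k):
    """Route one token to its top_k experts.

    Router slots [0, len(ffn_experts)) are real FFN experts; the remaining
    num_zero_experts slots are zero-computation experts, assumed here to
    act as the identity. Returns (output_vector, number_of_ffn_calls).
    """
    num_ffn = len(ffn_experts)
    assert len(router_logits) == num_ffn + num_zero_experts
    probs = softmax(router_logits)
    # Pick the top_k slots by gate probability (ties keep the lower index).
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]

    output = [0.0] * len(hidden)
    ffn_calls = 0
    for idx in chosen:
        if idx < num_ffn:
            expert_out = ffn_experts[idx](hidden)  # real compute
            ffn_calls += 1
        else:
            expert_out = hidden  # zero-computation slot: pass-through
        for d in range(len(hidden)):
            output[d] += probs[idx] * expert_out[d]
    return output, ffn_calls


# Hypothetical toy "experts" standing in for real FFNs.
def double(vec):
    return [2.0 * v for v in vec]


def negate(vec):
    return [-v for v in vec]


token = [1.0, -2.0]
# An "easy" token whose router prefers both zero-computation slots.
_, easy_calls = route_token(token, [0.0, 0.0, 5.0, 5.0], [double, negate], 2, top_k=2)
# A "harder" token that picks one real expert and one zero-computation slot.
_, hard_calls = route_token(token, [5.0, 0.0, 5.0, 0.0], [double, negate], 2, top_k=2)
print(easy_calls, hard_calls)  # prints "0 1"
```

The count of real expert calls varies per token, which is the sense in which the number of active parameters adapts to the input in this sketch.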

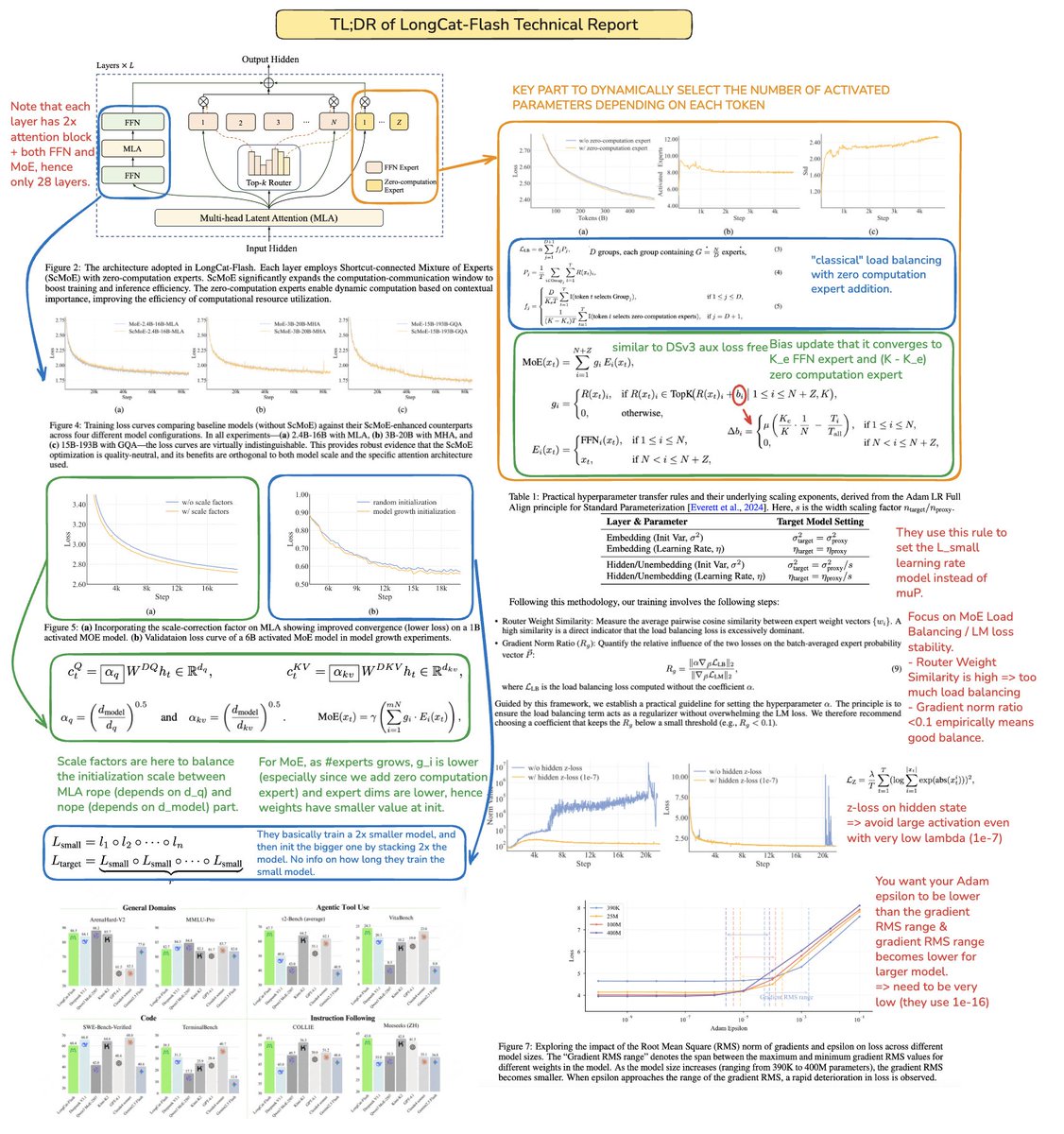

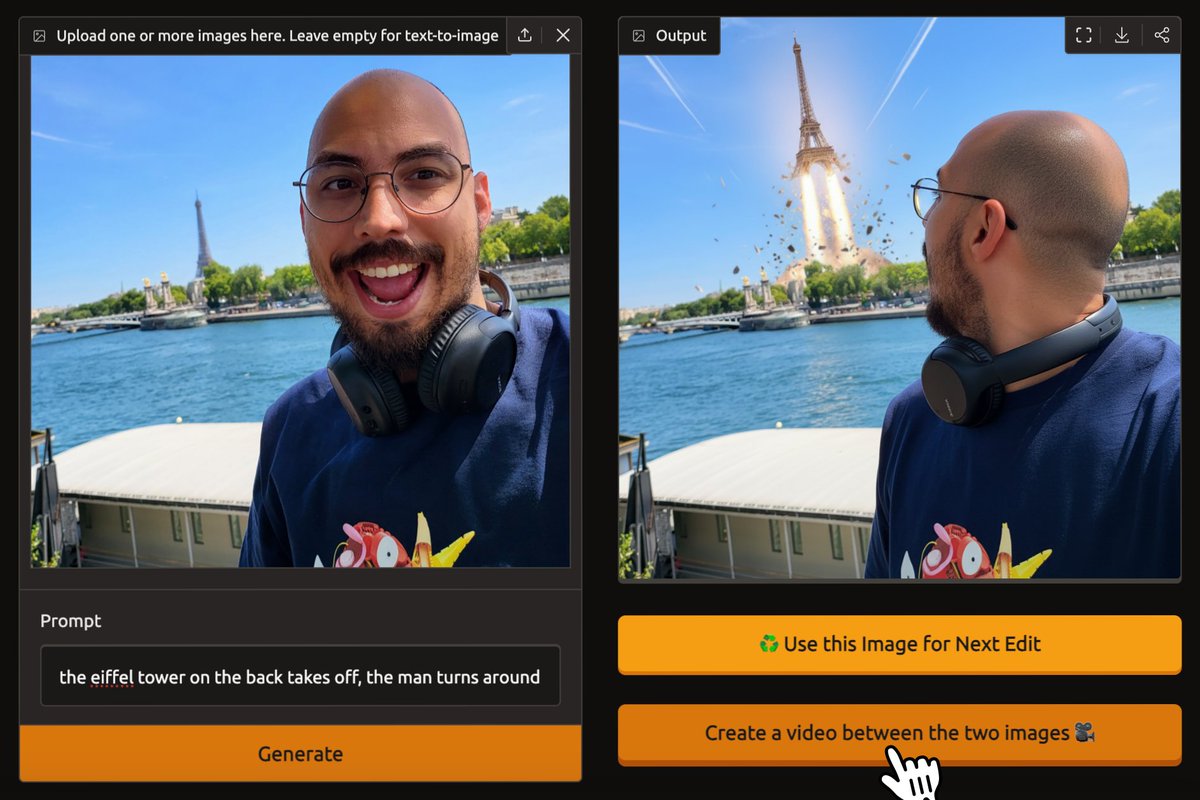

a mysterious new button appeared on the @huggingface Spaces Nano Banana app 👀 https://t.co/cY0nLznV8t


Hunyuan-MT-7B 🔥 open translation model released by @TencentHunyuan https://t.co/dTs5yBmkIi ✨ Supports 33 languages, including 5 ethnic minority languages in China 👀 ✨ Including a translation ensemble model: Chimera-7B ✨ Full pipeline: pretrain > CPT > SFT > enhancement > ensemble refinement > SOTA performance at similar scale


1/ shipping two synthetic med qa sets from @OpenMed_AI community, made by @mkurman88 (core contributor): • med-synth qwen3-235b-a22b (2507) • med-synth gemma 3 (27b-it) datasets on @huggingface 👇 https://t.co/1BP4hnABe2

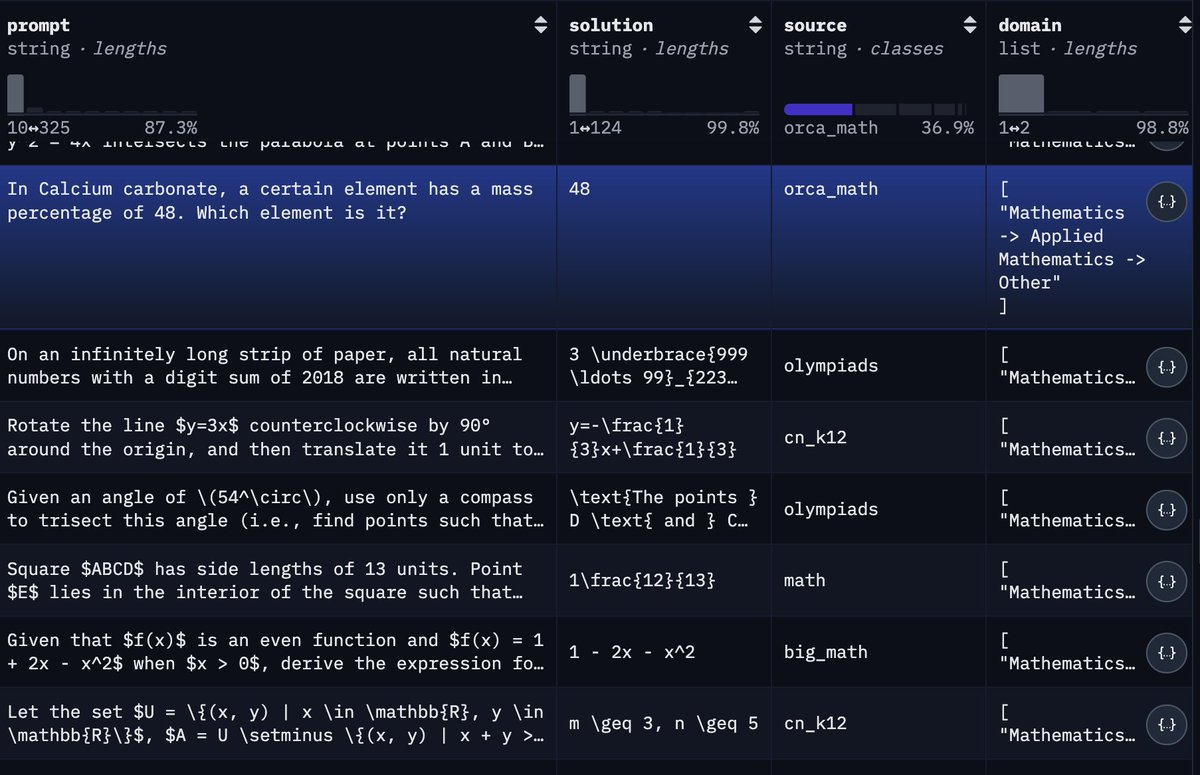

need your help! list your top 5 datasets on @huggingface for rl training with verified answers. - math - code - everyday stuff https://t.co/O54HdefTP4

1/ It's now easier than ever to use @huggingface 🤗 Datasets at scale! Introducing Daft's native support for reading from and writing to Hugging Face. https://t.co/JNJbrgiWNI

Now also published on Hugging Face. https://t.co/HEdMxsH2Hi

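We have released JGPQA, a Japanese translation of the GPQA dataset. The questions and answer choices were machine-translated and then corrected by hand. For various reasons it is currently published on GitHub, but we plan to release it on Hugging Face eventually. https://t.co/TbiKwv2y2b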


FYI HealthBench now conveniently available on HuggingFace. We hope it helps model developers and the healthcare community understand model performance and safety. https://t.co/BqFoLE6Sv5

that's a Chinese food delivery company absolutely mogging the competition https://t.co/r7aMojpUo1

chat, this is getting out of hand: "We introduce LongCat-Flash, a powerful and efficient language model with 560 billion total parameters, the model incorporates a dynamic computation mechanism that activates 18.6B∼31.3B parameters (averaging∼27B) based on contextual demands" S


@poetengineer__ You should make it a demo on https://t.co/qkxl7AQLv7 for the community

This was the comment. Had to delete it since I don't want my comments to serve as an outlet for this discourse. https://t.co/ydnzxPNvlA

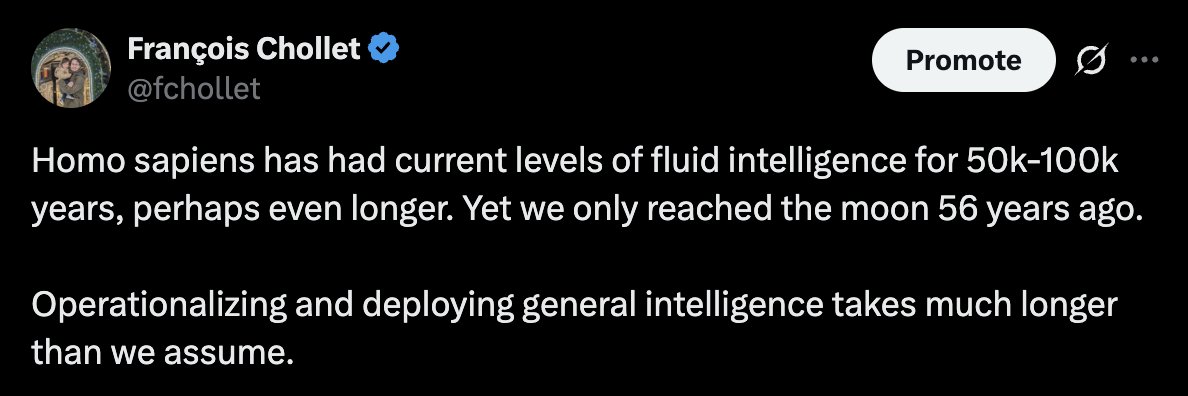

Do consider: some humans 40,000 years ago could paint better than you do (see Altamira bison below). And the basics of civilization -- agriculture, domestication, writing, complex stone architecture, metallurgy (gold) -- were independently reinvented among populations that were completely isolated from each other for >20,000 years.

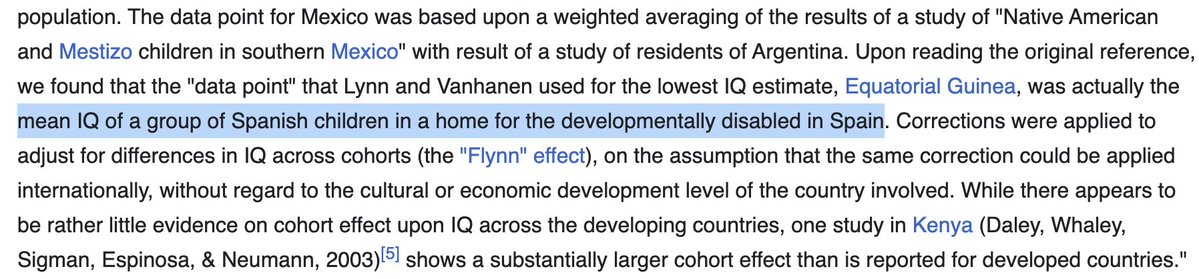

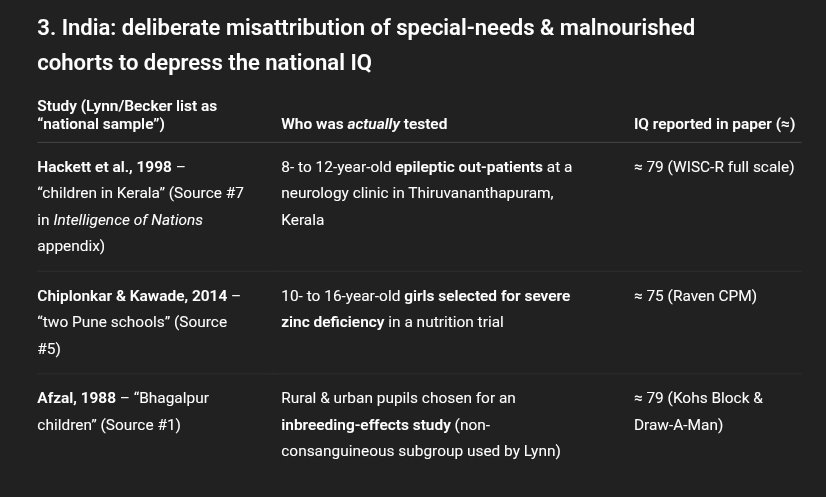

There's a specific world map image that's been shared about 20 times in the comments and QTs of my original tweet, that claims that the average IQ of entire continents is below 65 (a level at which you couldn't learn to read or even live independently). The data is from Lynn and Vanhanen. It has been widely debunked, and sharing it is just telling on yourself. Most country averages are collected from tiny sample sizes, often from children, and in many cases from children in mental institutions or refugee camps. The numbers were also arbitrarily massaged when they didn't agree with the message. It's not science, it's "political discourse" (you know the kind).

This is what we're dealing with. If you're sharing this data as "proof" that intelligence is a recent and exclusively European development, you are showing yourself to be incapable of the most basic level of critical thinking https://t.co/QxBlXTyW1I

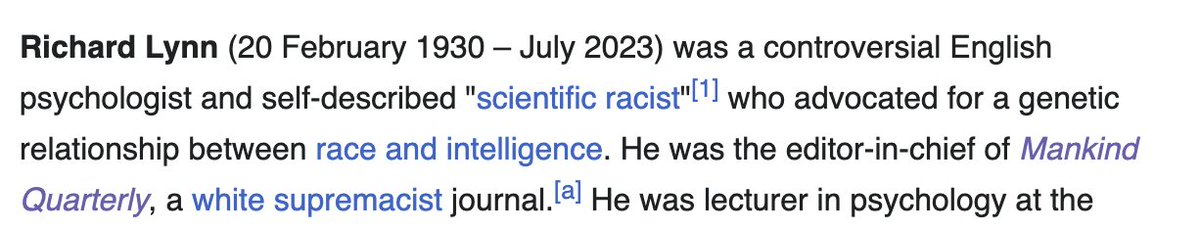

For those who don't know who Richard Lynn is -- he was a self-avowed racist who argued for eugenics, and who said about brown/black people, "we need to think realistically in terms of the 'phasing out' of such peoples." So it's not like his "work" was unbiased and guided by a love of truth-seeking. He went and made up some total bunk data points to support his racist views. Didn't even try to make it believable or auditable. And now drooling morons on Twitter are sharing his world map as if it were unquestionable "science". Incredible stuff.

#Otd in 1955, the term “artificial intelligence” was coined in a conference proposal: https://t.co/BbzXHKRdns https://t.co/fRNik3DsLz

@Dhirajsoude Not a math book, but my Machine Learning with PyTorch and Scikit-Learn book introduces/covers more of the math alongside the ML/DL topics. I also started writing about these on a more basic level, but that was years ago and I haven't had time to finish: https://t.co/R3B1AH65o6

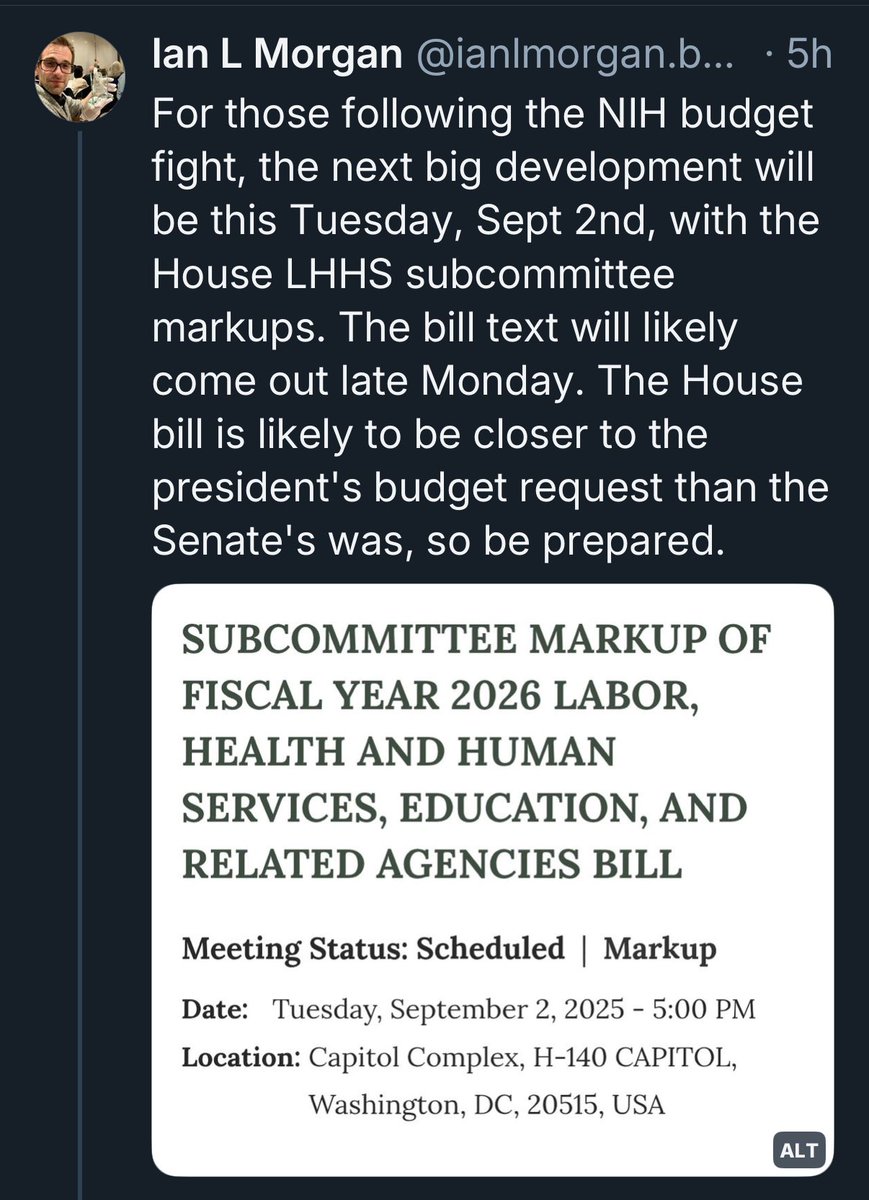

Trump proposed 40% cut to NIH 🤬💔😱 Senate committee (16 GOP, 10 DEM) voted 23-3 to INCREASE NIH budget by $400M 🎉 🎊 🙌 Next step is House 😶😶😶 https://t.co/QCJcVuYCo5

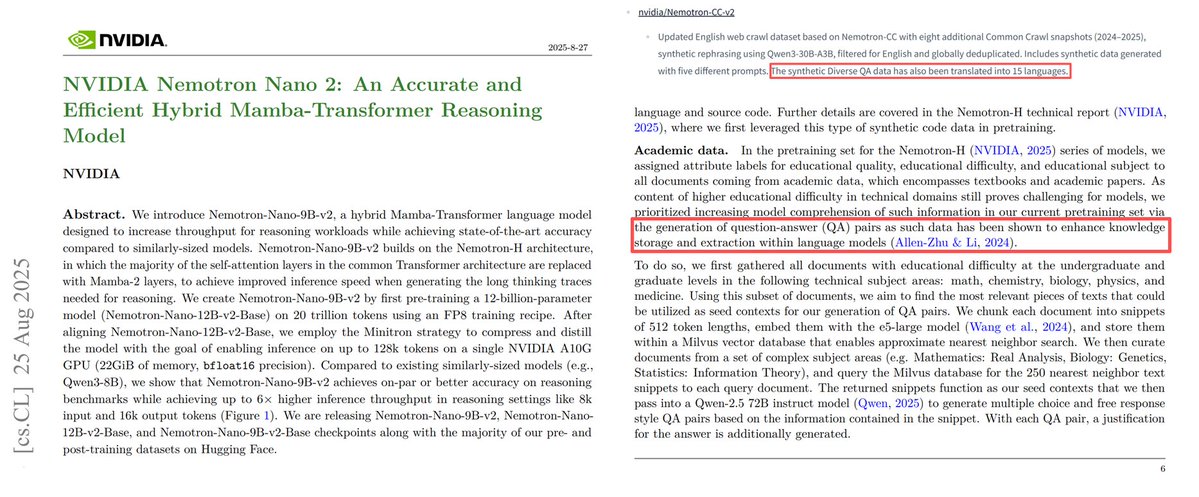

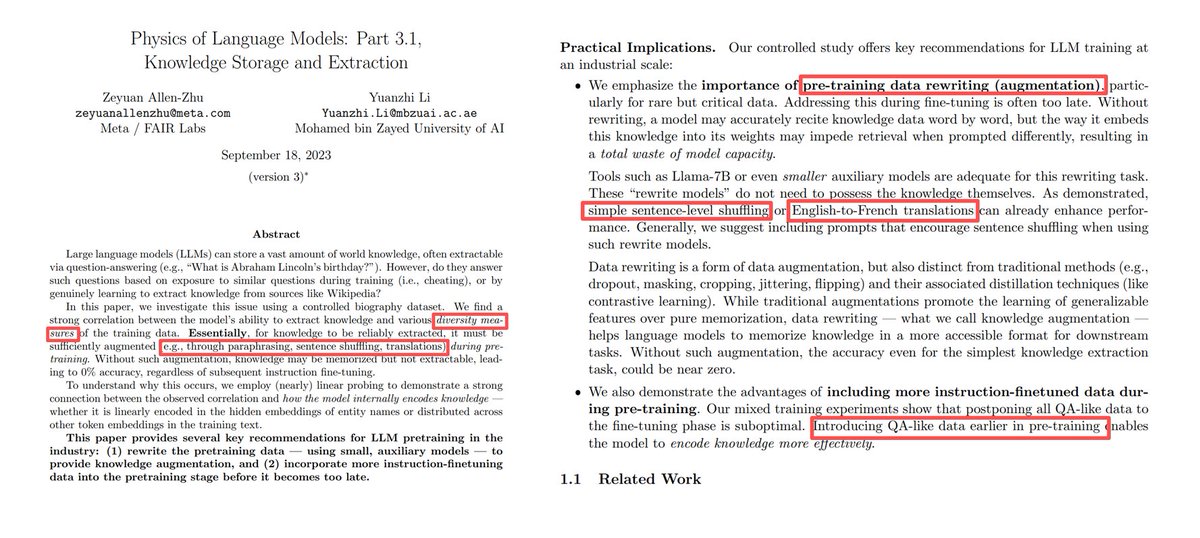

🚀 NVIDIA continues to lead on open-sourcing pretraining data — Nemotron-CC-v2 has dropped! 👏 Congrats to @KarimiRabeeh @issanjeev @PavloMolchanov @KezhiKong @SimonXinDong @ctnzr @YejinChoinka + many others! 🙏 A very loud thank you for citing our Physics of LMs, Part 3.1. You’re perhaps the first leading lab to publicly acknowledge its usefulness (knowledge augmentation: add QA at pretrain-level, add diversity + translation). When I ran this code 2 years ago, it was using V100s + 8 A100s so many didn’t believe in it --- I wasn’t approved to test on real-life data, couldn’t secure GPUs for larger experiments. That’s why this recognition really matters: it validates the value of foundational projects like ours, and helps me keep pushing to deliver more insights for the AI community. Truly grateful. https://t.co/c5g1VMUhCr

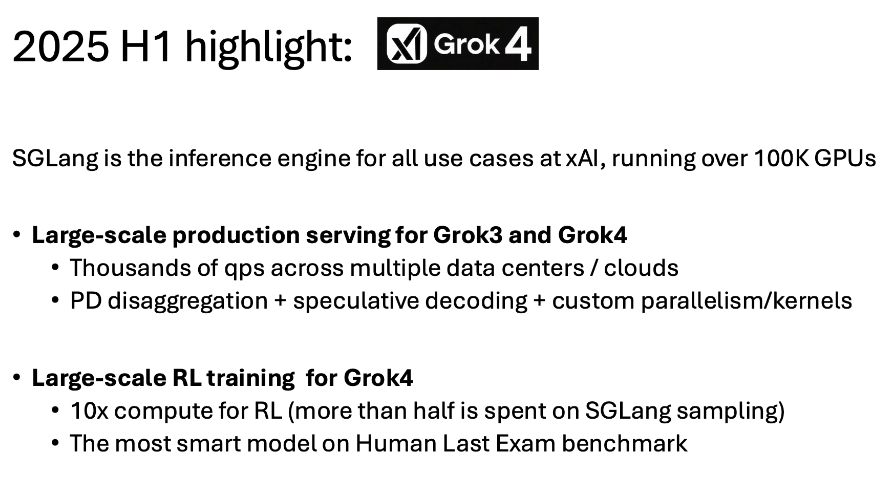

xAI may be one of the single biggest contributors to open-source inference just by serving everything with SGLang https://t.co/zPwZvfvjYP

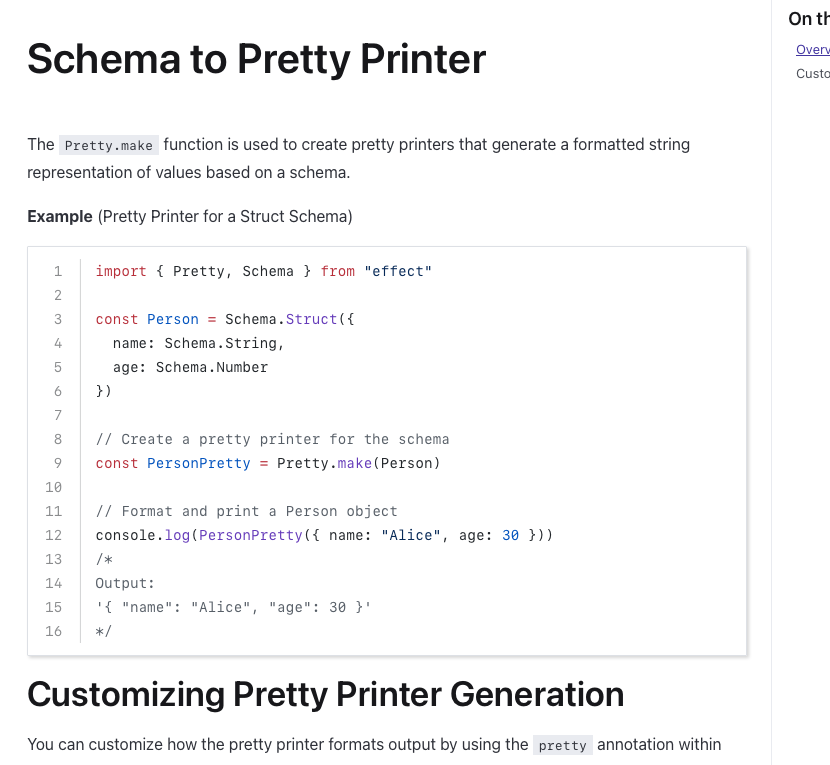

Is this rich for typescript with @EffectTS_ ? https://t.co/UYguHYH6hN

Things at manus move fast! Plenty of problems to solve and great people to work with. We're hiring aggressively at https://t.co/Q65afg9ebq

Shout out to our engineers! Amazing 🤩

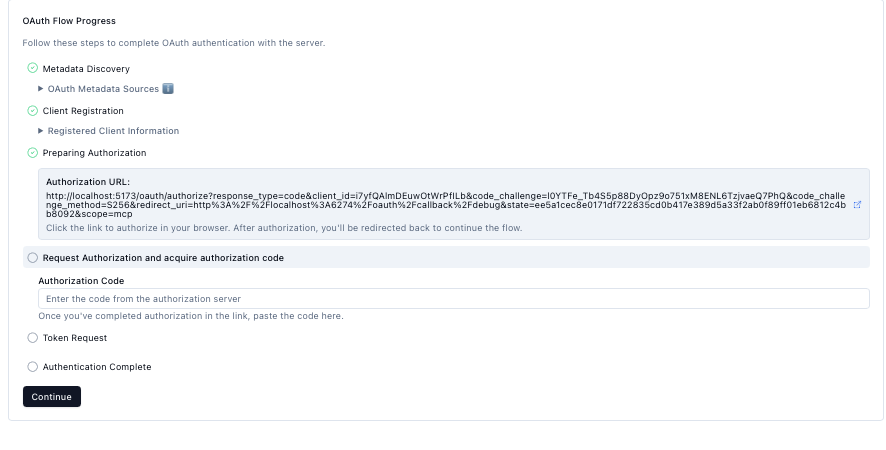

@backpinelabs @AmpCode Man this OAuth got teeth :( Should be done with it soon fingers crossed. This should make for a much more portable package https://t.co/0JtAL2n8os

The Fort Bragg Cartel would have never become a reality without @RollingStone. No other US magazine would have given me free rein to investigate the nexus between organized crime and special operations, or to come at the story from such an aggressively adversarial angle. https://t.co/7NyQCc3PaH

https://t.co/h8sWy9qOTS

me visiting the club chalamet wall in italy https://t.co/S2Dz6fedpM